CS 160 Lecture 16 Professor John Canny Fall


CS 160: Lecture 16 Professor John Canny Fall 2001 Oct 25, 2001 based on notes by James Landay 10/17/2021 1

Outline
- Review
- Why do user testing?
- Choosing participants
- Designing the test
- Administrivia
- Collecting data
- Analyzing the data

Review
- Personalization: How?
- E-commerce: shopping carts, checkout
- Web site usability survey
  * Readability of start page?
  * Graphic design?
  * Short vs. long anchor text in links?

Why do User Testing?
- Can’t tell how good or bad a UI is until?
  * people use it!
- Other methods are based on evaluators who?
  * may know too much
  * may not know enough (about tasks, etc.)
- Summary: hard to predict what real users will do (Jakob Nielsen)

Choosing Participants
- Representative of eventual users in terms of
  * job-specific vocabulary / knowledge
  * tasks
- If you can’t get real users, get an approximation
  * system intended for doctors
    + get medical students
  * system intended for electrical engineers
    + get engineering students
- Use incentives to get participants

Ethical Considerations
- Sometimes tests can be distressing
  * users have left in tears (embarrassed by mistakes)
- You have a responsibility to alleviate this
  * make participation voluntary, with informed consent
  * avoid pressure to participate
  * make sure participation will not affect their job status either way
  * let them know they can stop at any time [Gomoll]
  * stress that you are testing the system, not them
  * make collected data as anonymous as possible
- Often must get human subjects approval

User Test Proposal
- A report that contains
  * objective
  * description of the system being tested
  * task environment & materials
  * participants
  * methodology
  * tasks
  * test measures
- Get it approved, then reuse it for the final report

Selecting Tasks
- Should reflect what real tasks will be like
- Tasks from analysis & design can be used
  * may need to shorten them if
    + they take too long
    + they require background that the test user won’t have
- Avoid bending tasks in the direction of what your design best supports
- Don’t choose tasks that are too fragmented

Data Types
- Independent variables: the ones you control
  * aspects of the interface design
  * characteristics of the testers
  * can be discrete (e.g., design A, B, or C) or continuous (e.g., time between clicks for a double-click)
- Dependent variables: the ones you measure
  * time to complete tasks
  * number of errors
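
One way to keep the two kinds of variable apart is to store each trial as a single record. The record holds the independent variable you controlled and the dependent variables you measured, and the dependent variables can then be summarized per condition. This is a minimal sketch: the `Trial` fields and the numbers are hypothetical, not data from the lecture.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    # Independent variable (controlled): which interface design was used.
    design: str  # discrete: "A", "B" or "C"
    # Dependent variables (measured).
    time_s: float
    errors: int


def mean_time_by_design(trials):
    """Group trials by design and average the task time for each design."""
    times = defaultdict(list)
    for t in trials:
        times[t.design].append(t.time_s)
    return {design: mean(ts) for design, ts in times.items()}


trials = [
    Trial("A", 42.0, 1),
    Trial("A", 38.0, 0),
    Trial("B", 55.0, 3),
    Trial("B", 61.0, 2),
]
print(mean_time_by_design(trials))  # {'A': 40.0, 'B': 58.0}
```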

Deciding on Data to Collect
- Two types of data
  * process data
    + observations of what users are doing & thinking
  * bottom-line data
    + summary of what happened (time, errors, success…)
    + i.e., the dependent variables

Process Data vs. Bottom-Line Data
- Focus on process data first
  * gives a good overview of where the problems are
- Bottom-line data doesn’t tell you where to fix
  * just says: “too slow”, “too many errors”, etc.
- Hard to get reliable bottom-line results
  * need many users for statistical significance

The “Thinking Aloud” Method
- Need to know what users are thinking, not just what they are doing
- Ask users to talk while performing tasks
  * tell us what they are thinking
  * tell us what they are trying to do
  * tell us questions that arise as they work
  * tell us things they read
- Make a recording or take good notes
  * make sure you can tell what they were doing

Thinking Aloud (cont.)
- Prompt the user to keep talking
  * “tell me what you are thinking”
- Only help on things you have pre-decided
  * keep track of anything you do give help on
- Recording
  * use a digital watch/clock
  * take notes, plus if possible
    + record audio and video (or event logs)
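
When notes are taken on a laptop rather than against a watch, a small timestamped log can serve as the "event log". This is a minimal sketch. `SessionLog` and its entry kinds ("said", "did", "help") are hypothetical names for this illustration. Logging a "help" kind follows the slide's advice to keep track of any help you give.

```python
import time


class SessionLog:
    """Timestamped notes for a think-aloud session (hypothetical helper)."""

    def __init__(self):
        self.start = time.monotonic()
        self.entries = []  # list of (elapsed_seconds, kind, text)

    def note(self, kind, text):
        # kind is e.g. "said", "did", or "help" (record any help you give).
        elapsed = time.monotonic() - self.start
        self.entries.append((elapsed, kind, text))

    def dump(self):
        for elapsed, kind, text in self.entries:
            print(f"{elapsed:7.1f}s  {kind:<5} {text}")


log = SessionLog()
log.note("said", "I'm looking for the checkout button")
log.note("did", "scrolled to bottom of page")
log.note("help", "pointed out the cart icon (pre-decided hint)")
log.dump()
```

`time.monotonic()` is used instead of wall-clock time so that elapsed times cannot jump if the system clock is adjusted mid-session.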

Administrivia
- Yep, we know the server is down
- Could be a hard or easy fix
- Check www.cs.berkeley.edu/~jfc for a temporary replacement
- Please hand in projects tomorrow.
- Use zip and email if no server.

Using the Test Results
- Summarize the data
  * make a list of all critical incidents (CIs)
    + positive & negative
  * include references back to the original data
  * try to judge why each difficulty occurred
- What does the data tell you?
  * Did the UI work the way you thought it would?
    + Was it consistent with your cognitive walkthrough?
    + Did users take the approaches you expected?
  * Is something missing?

Using the Results (cont.)
- Update task analysis and rethink design
  * rate severity & ease of fixing CIs
  * fix both severe problems & make the easy fixes
- Will thinking aloud give the right answers?
  * not always
  * if you ask a question, people will always give an answer, even if it has nothing to do with the facts
  * try to avoid specific questions
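
The rule "fix both severe problems & make the easy fixes" can be written as a filter over the rated critical incidents. This is a minimal sketch under stated assumptions: the 1 to 4 rating scales, the threshold of 3, and the `CriticalIncident` fields are all hypothetical choices, not part of the lecture.

```python
from dataclasses import dataclass


@dataclass
class CriticalIncident:
    description: str
    positive: bool  # critical incidents can be positive or negative
    severity: int   # assumed scale: 1 (cosmetic) .. 4 (blocks the task)
    ease: int       # assumed scale: 1 (hard to fix) .. 4 (trivial fix)


def fix_list(incidents, severe_at=3, easy_at=3):
    """Pick negative incidents that are severe OR easy to fix, worst first."""
    chosen = [
        ci for ci in incidents
        if not ci.positive and (ci.severity >= severe_at or ci.ease >= easy_at)
    ]
    # Most severe first; among equal severity, easiest first.
    return sorted(chosen, key=lambda ci: (-ci.severity, -ci.ease))


incidents = [
    CriticalIncident("could not find checkout", False, 4, 2),
    CriticalIncident("label typo on cart page", False, 1, 4),
    CriticalIncident("confusing date format", False, 2, 1),
    CriticalIncident("liked one-click reorder", True, 1, 1),
]
for ci in fix_list(incidents):
    print(ci.severity, ci.ease, ci.description)
```

The moderate, hard-to-fix incident ("confusing date format") is left for a later design iteration rather than dropped from the CI list.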

Measuring Bottom-Line Usability
- Situations in which numbers are useful
  * time requirements for task completion
  * successful task completion
  * comparing two designs on speed or # of errors
- Do not combine with thinking aloud. Why?
  * talking can affect speed & accuracy (negatively & positively)
- Time is easy to record
- Errors or successful completion are harder
  * define in advance what these mean

Some statistics
- Variables X & Y
- A relation (hypothesis), e.g., X > Y
- We would often like to know if a relation is true
  * e.g., X = time taken by novice users
  * Y = time taken by users with some training
- To find out if the relation is true, we do experiments to get lots of x’s and y’s (observations)
- Suppose avg(x) > avg(y), or that most of the x’s are larger than all of the y’s. What does that prove?

Significance
- The significance or p-value of an outcome is the probability that an outcome at least that extreme happens by chance if the relation does not hold.
- E.g., p = 0.05 means that there is a 1/20 chance of seeing such an observation if the hypothesis is false.
- So the smaller the p-value, the greater the significance.

Significance
- And p = 0.001 means there is a 1/1000 chance of seeing such an observation if the hypothesis is false, so the observation is strong evidence that the hypothesis is true (strong evidence, not proof).
- For a real effect, significance increases with the number of trials.
- CAVEAT: You have to make assumptions about the probability distributions to get good p-values.
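
One way to estimate a p-value with few distributional assumptions is a permutation test. This is a standard technique, not one the lecture itself describes. If the relation does not hold and X and Y come from the same distribution, the X/Y labels are arbitrary. So we shuffle the pooled observations many times and count how often a difference in means at least as large as the observed one appears by chance. The task times below are hypothetical.

```python
import random


def mean_diff(values, n):
    """Mean of the first n values minus the mean of the rest."""
    return sum(values[:n]) / n - sum(values[n:]) / (len(values) - n)


def permutation_p_value(xs, ys, trials=10_000, seed=0):
    """One-sided p-value for the hypothesis mean(X) > mean(Y)."""
    rng = random.Random(seed)
    pooled = list(xs) + list(ys)
    n = len(xs)
    observed = mean_diff(pooled, n)  # pooled is still in X-then-Y order here
    hits = 0
    for _ in range(trials):
        # If the relation does not hold, the X/Y labels are arbitrary.
        rng.shuffle(pooled)
        if mean_diff(pooled, n) >= observed:
            hits += 1
    # Add-one correction keeps the estimate from being exactly zero.
    return (hits + 1) / (trials + 1)


novice = [48, 55, 61, 52, 70, 58]   # X: hypothetical task times (s)
trained = [41, 39, 50, 44, 47, 36]  # Y: hypothetical task times (s)
print(permutation_p_value(novice, trained))
```

For these made-up numbers, only 2 of the 924 possible splits into two groups of six give a difference as large as the observed one. The estimate should therefore land near 0.002.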

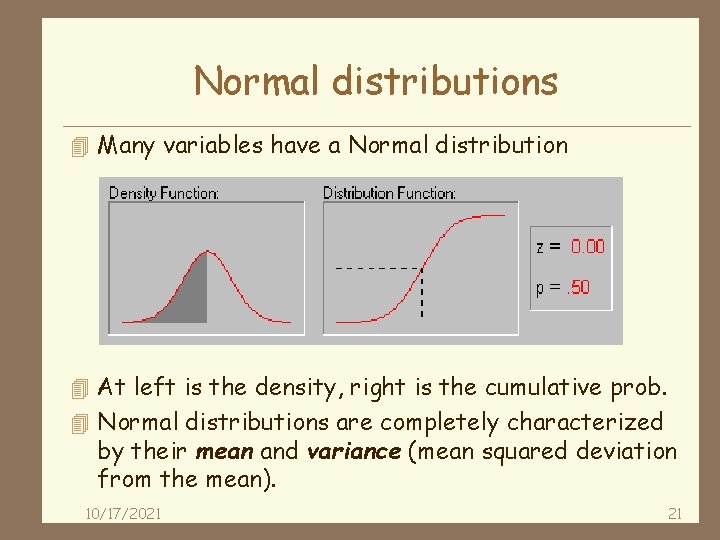

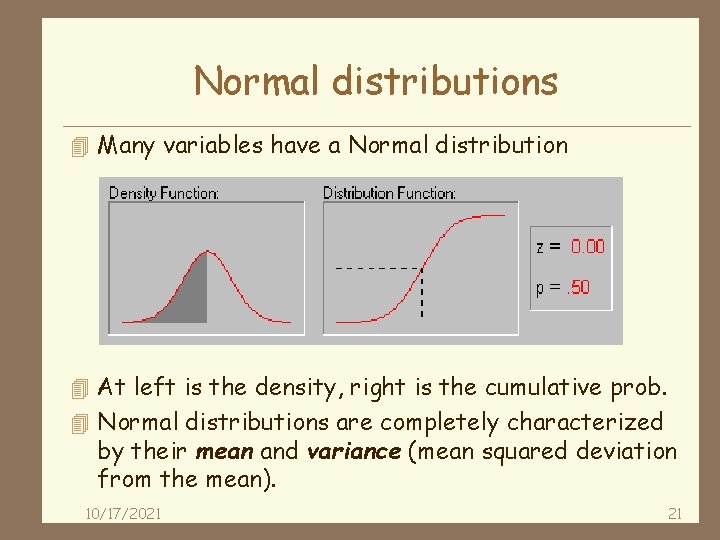

Normal distributions
- Many variables have a Normal distribution
- At left is the density, at right is the cumulative probability.
- Normal distributions are completely characterized by their mean and variance (mean squared deviation from the mean).
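
Python's standard library (3.8+) has `statistics.NormalDist`, which is built from exactly these two parameters. It takes the standard deviation, the square root of the variance. The sketch below uses hypothetical task-time numbers to evaluate both the density and the cumulative probability.

```python
from statistics import NormalDist

# Hypothetical: task times with mean 30 s and standard deviation 10 s.
times = NormalDist(mu=30, sigma=10)

print(times.pdf(30))   # density at the mean (peak of the left-hand curve)
print(times.cdf(30))   # 0.5: half the probability lies below the mean
print(times.cdf(40))   # P(time <= 40 s), about 0.841 (right-hand curve)
print(times.variance)  # 100.0: mean squared deviation from the mean
```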

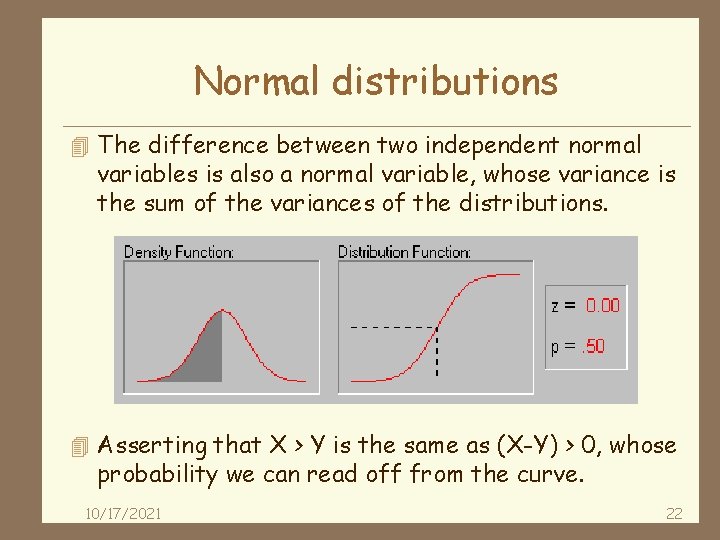

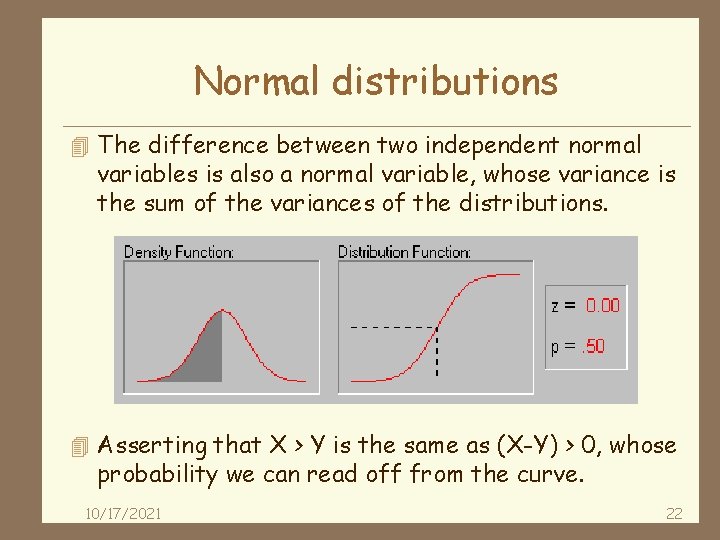

Normal distributions
- The difference between two independent normal variables is also a normal variable, whose variance is the sum of the variances of the two distributions.
- Asserting that X > Y is the same as asserting (X - Y) > 0, whose probability we can read off from the curve.
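
`statistics.NormalDist` supports subtraction of two independent normal variables. It returns a normal with the difference of the means and the summed variances, so P(X > Y) can be read off as one minus the CDF of X - Y at 0. The means and standard deviations below are hypothetical.

```python
from statistics import NormalDist

# Hypothetical task times in seconds (not data from the lecture).
X = NormalDist(mu=30, sigma=10)  # novice users
Y = NormalDist(mu=20, sigma=8)   # users with some training

# X - Y is normal with mean 30 - 20 and variance 10**2 + 8**2 = 164.
D = X - Y
print(D.mean, round(D.variance, 6))  # 10.0 164.0

# P(X > Y) = P(X - Y > 0) = 1 - CDF of (X - Y) at 0.
print(1 - D.cdf(0))  # about 0.78
```

The subtraction assumes X and Y are independent, which holds for a between-groups comparison but not for the same people measured twice.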

Analyzing the Numbers
- Example: trying to get task time <= 30 min.
  * test gives: 20, 15, 40, 90, 10, 5
  * mean (average) = 30
  * median (middle) = 17.5
  * looks good! Wrong answer: we are not certain of anything
- Factors contributing to our uncertainty
  * small number of test users (n = 6)
  * results are very variable (standard deviation = 32)
    + std. dev. measures dispersal from the mean
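
The summary numbers on this slide can be reproduced with Python's `statistics` module. The 32 is the sample standard deviation, which divides by n - 1. The variable name `times` is just for illustration.

```python
from statistics import mean, median, stdev

times = [20, 15, 40, 90, 10, 5]  # task times (minutes) from the slide

print(mean(times))             # 30
print(median(times))           # 17.5 (average of the middle two: 15 and 20)
print(round(stdev(times), 1))  # 31.8 (sample standard deviation, ~32)
```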

Analyzing the Numbers (cont.)
- Crank through the procedures and you find
  * 95% certain that the typical value is between 5 & 55
- Usability test data is quite variable
  * need lots of it to get good estimates of typical values
  * 4 times as many tests will only narrow the range by a factor of 2
    + the breadth of the range shrinks with the square root of the number of test users
  * this is when online methods become useful
    + easy to test with large numbers of users (e.g., NetRaker)
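
The slide's range of 5 to 55 matches the normal-approximation interval mean +/- 1.96 * s / sqrt(n), which is what this sketch computes. The function names are hypothetical. For n as small as 6, a t-based interval (critical value about 2.57 for 5 degrees of freedom) is usually preferred. That interval would be wider, roughly -3 to 63. The second part shows the square-root effect: with the same spread, 4 times the users halves the width.

```python
import math
from statistics import mean, stdev

times = [20, 15, 40, 90, 10, 5]  # task times (minutes) from the slide


def half_width(s, n, z=1.96):
    """Half-width of an approximate 95% interval for the mean."""
    return z * s / math.sqrt(n)


def normal_ci(data, z=1.96):
    """Approximate 95% interval: mean +/- z * s / sqrt(n)."""
    h = half_width(stdev(data), len(data), z)
    m = mean(data)
    return m - h, m + h


low, high = normal_ci(times)
print(round(low), round(high))  # 5 55

# Same spread, 4x the users: the range only narrows by a factor of 2.
s = stdev(times)
print(half_width(s, 6) / half_width(s, 24))  # about 2
```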

Measuring User Preference
- How much users like or dislike the system
  * can ask them to rate on a scale of 1 to 10
  * or have them choose among statements
    + “best UI I’ve ever…”, “better than average”…
  * hard to be sure what data will mean
    + novelty of UI, feelings, not realistic setting, etc.
- If many give you low ratings -> trouble
- Can get some useful data by asking
  * what they liked, disliked, where they had trouble, best part, worst part, etc. (redundant questions)

Comparing Two Alternatives
- Between-groups experiment
  * two groups of test users
  * each group uses only 1 of the systems
- Within-groups experiment
  * one group of test users
    + each person uses both systems (A and B)
    + can’t use the same tasks or order (learning)
  * best for low-level interaction techniques
- Between-groups will require many more participants than a within-groups experiment
- See if differences are statistically significant
  * assumes normal distribution & same std. dev.
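
"Assumes normal distribution & same std. dev." describes the classic pooled two-sample t-test for a between-groups comparison. This is a minimal sketch of the t statistic with made-up data. For 8 degrees of freedom, the two-sided 5% critical value from a t table is about 2.31. A |t| of 4 would therefore count as significant at that level.

```python
import math
from statistics import mean, variance


def pooled_t(a, b):
    """Two-sample t statistic assuming normal data with equal std. dev.

    Returns (t, degrees_of_freedom) for a between-groups comparison.
    """
    na, nb = len(a), len(b)
    # Pooled variance: weighted average of the two sample variances.
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    se = math.sqrt(sp2 * (1 / na + 1 / nb))
    return (mean(a) - mean(b)) / se, na + nb - 2


# Hypothetical task times (s): each group used only one system.
group_a = [12, 15, 11, 14, 13]
group_b = [16, 18, 15, 17, 19]
t, df = pooled_t(group_a, group_b)
print(round(t, 3), df)  # -4.0 8
```

A within-groups design would instead use a paired test on each person's A-minus-B difference.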

Experimental Details
- Order of tasks
  * choose one simple order (simple -> complex)
    + unless doing a within-groups experiment
- Training
  * depends on how the real system will be used
- What if someone doesn’t finish?
  * assign a very large time & a large # of errors
- Pilot study
  * helps you fix problems with the study
  * do 2: first with colleagues, then with real users
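
In a within-groups experiment, everyone cannot use the systems in the same order, because learning from the first system carries over to the second. A common remedy is counterbalancing: half the participants use A then B, the other half B then A. This technique is named here, not taken from the slides. This minimal sketch alternates orders. The participant IDs and the `counterbalance` helper are hypothetical. You would still pair it with different but comparable task sets for each system.

```python
from itertools import cycle


def counterbalance(participants, conditions=("A", "B")):
    """Alternate system order across participants: A->B, B->A, A->B, ..."""
    orders = cycle([tuple(conditions), tuple(reversed(conditions))])
    # zip stops when participants run out, so the infinite cycle is safe.
    return {p: order for p, order in zip(participants, orders)}


plan = counterbalance(["P1", "P2", "P3", "P4"])
for person, order in plan.items():
    print(person, " -> ".join(order))
```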

Reporting the Results
- Report what you did & what happened
- Images & graphs help people get it!

Summary
- User testing is important, but takes time/effort
- Early testing can be done on mock-ups (low-fi)
- Use real tasks & representative participants
- Be ethical & treat your participants well
- Want to know what people are doing & why
  * i.e., collect process data
- Using bottom-line data requires more users to get statistically reliable results