
COMP 7330/7336 Advanced Parallel and Distributed Computing
Parallel Computer Architecture
Dr. Xiao Qin
Auburn University
http://www.eng.auburn.edu/~xqin@auburn.edu
Some slides are adopted from Dr. David E. Culler, U.C. Berkeley

What is Parallel Architecture?
• A parallel computer is a collection of processing elements that cooperate to solve large problems fast
• Some broad issues:
  – Resource allocation
  – Data access, communication and synchronization
  – Performance and scalability

Broad Issues in Parallel Architecture
• Some broad issues:
  – Resource allocation
    • how large a collection?
    • how powerful are the elements?
    • how much memory?
  – Data access, communication and synchronization
    • how do the elements cooperate and communicate?
    • how are data transmitted between processors?
    • what are the abstractions and primitives for cooperation?
  – Performance and scalability
    • how does it all translate into performance?
    • how does it scale?

What are the major differences among the following three supercomputers?

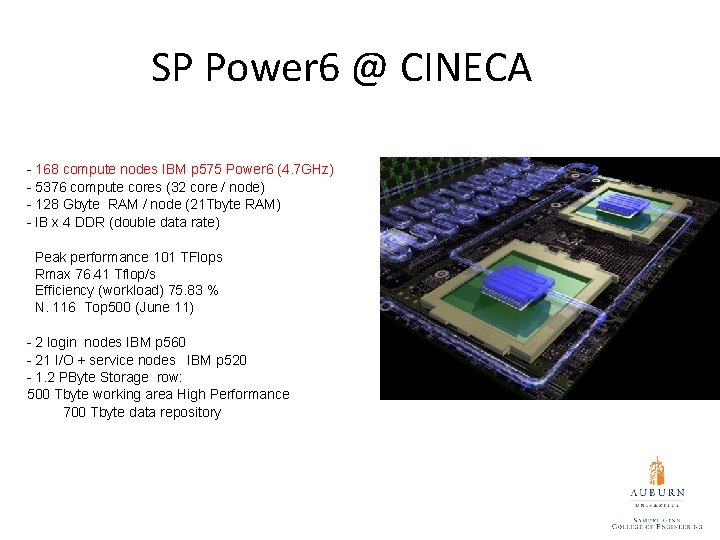

SP Power 6 @ CINECA
- 168 compute nodes IBM p575 Power6 (4.7 GHz)
- 5376 compute cores (32 cores/node)
- 128 GByte RAM/node (21 TByte RAM)
- IB x4 DDR (double data rate)
- Peak performance: 101 TFlops
- Rmax: 76.41 TFlop/s
- Efficiency (workload): 75.83%
- No. 116 in the Top500 (June 2011)
- 2 login nodes IBM p560
- 21 I/O + service nodes IBM p520
- 1.2 PByte storage (raw): 500 TByte high-performance working area, 700 TByte data repository

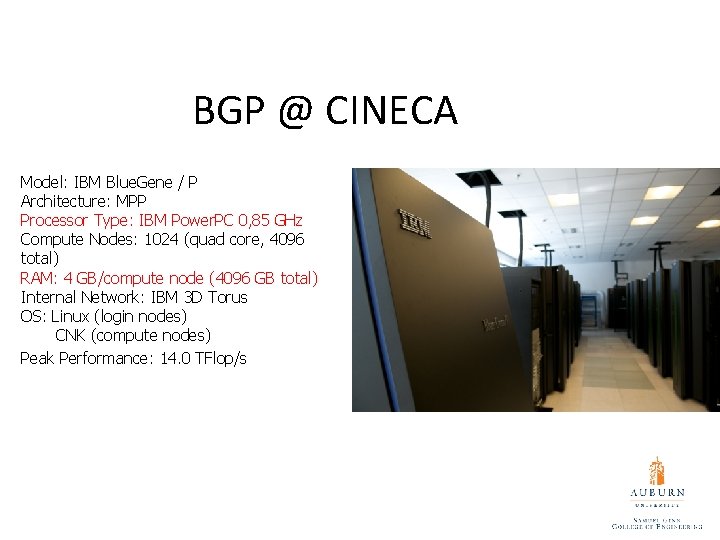

BGP @ CINECA
- Model: IBM BlueGene/P
- Architecture: MPP
- Processor type: IBM PowerPC 0.85 GHz
- Compute nodes: 1024 (quad core, 4096 cores total)
- RAM: 4 GB/compute node (4096 GB total)
- Internal network: IBM 3D torus
- OS: Linux (login nodes), CNK (compute nodes)
- Peak performance: 14.0 TFlop/s

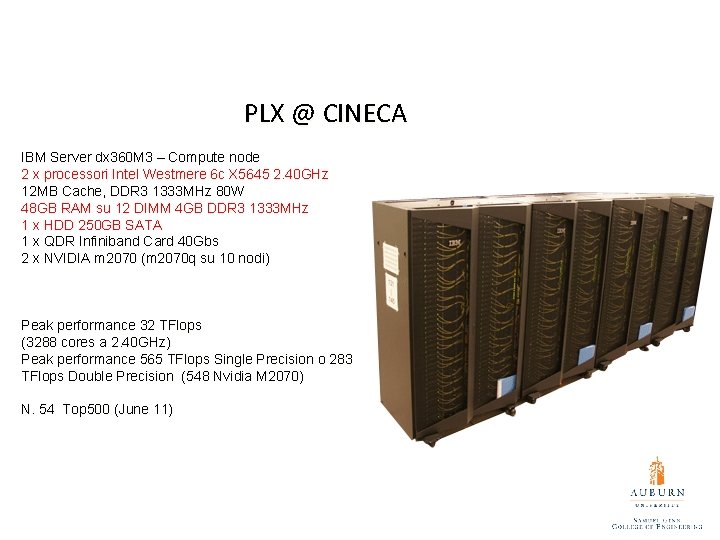

PLX @ CINECA
IBM Server dx360 M3 – Compute node
- 2 x Intel Westmere 6-core X5645 processors, 2.40 GHz, 12 MB cache, DDR3 1333 MHz, 80 W
- 48 GB RAM on 12 x 4 GB DDR3 1333 MHz DIMMs
- 1 x 250 GB SATA HDD
- 1 x QDR InfiniBand card, 40 Gb/s
- 2 x NVIDIA M2070 (M2070Q on 10 nodes)
- Peak performance: 32 TFlops (3288 cores at 2.40 GHz)
- Peak performance: 565 TFlops single precision or 283 TFlops double precision (548 NVIDIA M2070)
- No. 54 in the Top500 (June 2011)
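One way to compare the three systems is to recompute their quoted CPU peak figures from core count and clock rate. The sketch below assumes every core completes 4 double-precision floating-point operations per cycle; that factor does not appear on the slides and is an assumption, as are the function and variable names.

```python
# Theoretical CPU peak = cores x clock rate (GHz) x flops per cycle per core.
# ASSUMPTION: 4 double-precision flops per cycle per core on all three
# systems; this factor is not given on the slides.
FLOPS_PER_CYCLE = 4


def peak_tflops(cores, clock_ghz, flops_per_cycle=FLOPS_PER_CYCLE):
    """Return the theoretical peak performance in TFlop/s."""
    # cores * GHz * flops/cycle gives GFlop/s; divide by 1000 for TFlop/s.
    return cores * clock_ghz * flops_per_cycle / 1000.0


# (total cores, clock in GHz) as listed on the three CINECA slides.
systems = {
    "SP Power6": (5376, 4.7),
    "BlueGene/P": (4096, 0.85),
    "PLX (CPUs only)": (3288, 2.4),
}

for name, (cores, clock_ghz) in systems.items():
    print(f"{name}: {peak_tflops(cores, clock_ghz):.1f} TFlop/s")
```

Under this assumption the results land close to the 101, 14.0, and 32 TFlops quoted on the slides. The GPU peak of PLX is not covered here, since it depends on per-GPU figures the slides do not list.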

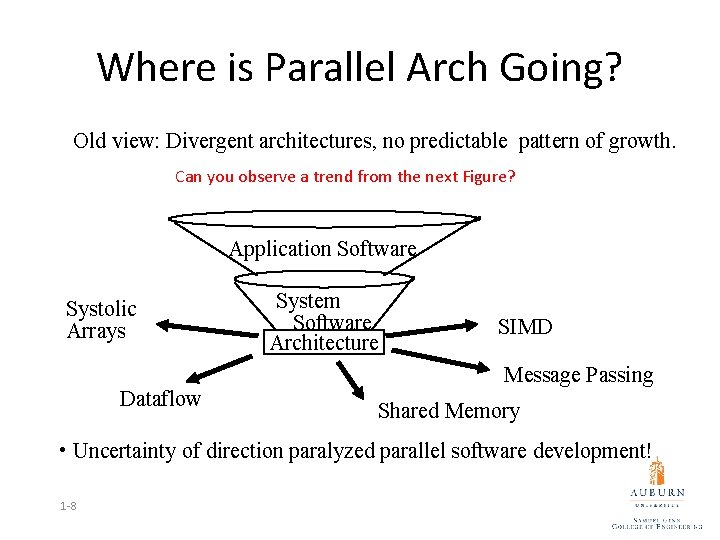

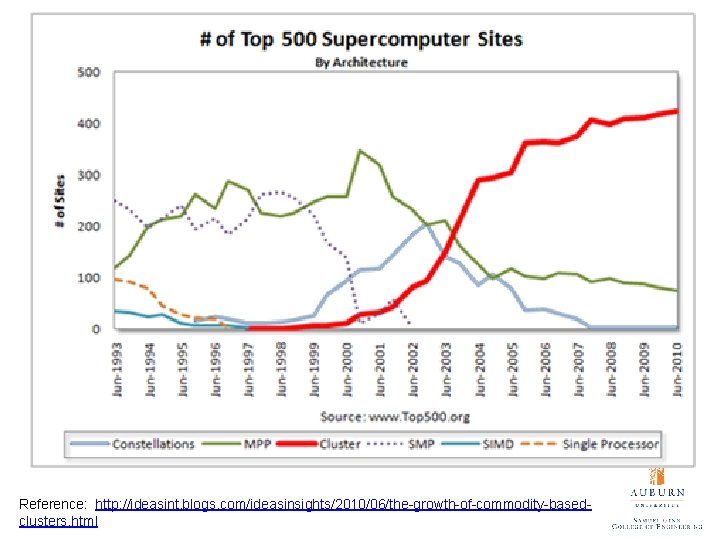

Where is Parallel Arch Going?
Old view: divergent architectures, no predictable pattern of growth. Can you observe a trend from the next figure?
[Figure: Application Software, System Software, and Architecture, with the divergent architectures Systolic Arrays, Dataflow, SIMD, Message Passing, and Shared Memory]
• Uncertainty of direction paralyzed parallel software development!

Reference: http://ideasint.blogs.com/ideasinsights/2010/06/the-growth-of-commodity-basedclusters.html

Is Parallel Computing Inevitable?
• Application demands: our insatiable need for computing cycles
• Technology trends
• Architecture trends
• Economics
• Current trends:
  – Today’s microprocessors have multiprocessor support
  – Servers and workstations becoming MP: Sun, SGI, DEC, COMPAQ!...
  – Tomorrow’s microprocessors are multiprocessors

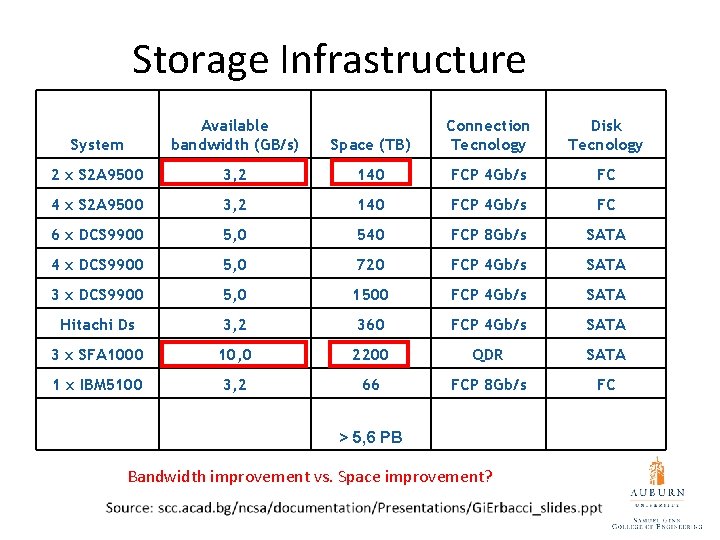

Storage Infrastructure

| System | Available bandwidth (GB/s) | Space (TB) | Connection technology | Disk technology |
|---|---|---|---|---|
| 2 x S2A9500 | 3.2 | 140 | FCP 4 Gb/s | FC |
| 4 x S2A9500 | 3.2 | 140 | FCP 4 Gb/s | FC |
| 6 x DCS9900 | 5.0 | 540 | FCP 8 Gb/s | SATA |
| 4 x DCS9900 | 5.0 | 720 | FCP 4 Gb/s | SATA |
| 3 x DCS9900 | 5.0 | 1500 | FCP 4 Gb/s | SATA |
| Hitachi Ds | 3.2 | 360 | FCP 4 Gb/s | SATA |
| 3 x SFA1000 | 10.0 | 2200 | QDR | SATA |
| 1 x IBM 5100 | 3.2 | 66 | FCP 8 Gb/s | FC |

Total space: > 5.6 PB

Bandwidth improvement vs. space improvement?
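A rough way to approach the bandwidth-versus-space question is to ask how long one full pass over each system would take at its available bandwidth. The sketch below uses three rows from the storage figures above; the function name and the choice of 1 TB = 1000 GB are assumptions made for illustration.

```python
# Time to read an entire storage system once at its available bandwidth.
# ASSUMPTION: 1 TB = 1000 GB (decimal units).


def full_scan_hours(space_tb, bandwidth_gb_s):
    """Hours needed to stream space_tb terabytes at bandwidth_gb_s GB/s."""
    seconds = space_tb * 1000.0 / bandwidth_gb_s
    return seconds / 3600.0


# (system, available bandwidth in GB/s, space in TB) from the storage slide.
systems = [
    ("2 x S2A9500", 3.2, 140),
    ("3 x DCS9900", 5.0, 1500),
    ("3 x SFA1000", 10.0, 2200),
]

for name, bandwidth, space in systems:
    print(f"{name}: {full_scan_hours(space, bandwidth):.1f} h per full scan")
```

With these figures the scan time grows from roughly half a day to several days, which suggests that space has grown faster than bandwidth across these systems.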

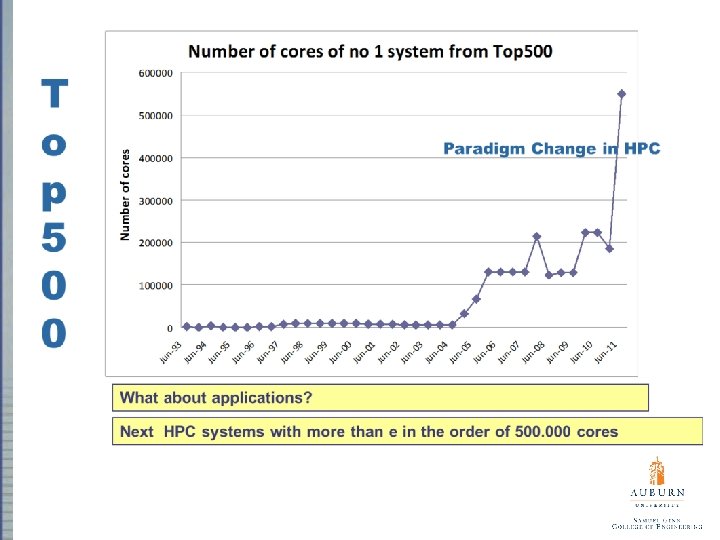

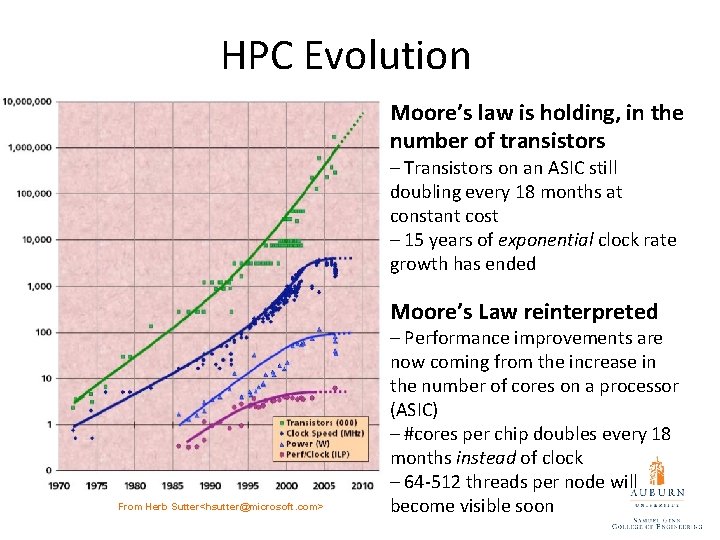

HPC Evolution
Moore’s law is holding, in the number of transistors
– Transistors on an ASIC are still doubling every 18 months at constant cost
– 15 years of exponential clock rate growth has ended
Moore’s Law reinterpreted (from Herb Sutter <hsutter@microsoft.com>)
– Performance improvements now come from the increase in the number of cores on a processor (ASIC)
– #cores per chip doubles every 18 months instead of the clock rate
– 64-512 threads per node will become visible soon
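The doubling rule above can be turned into a small projection. This is a minimal sketch that assumes a hypothetical starting point of 4 cores per chip; the starting value and the function name are illustrative and not taken from the slides.

```python
# Projected cores per chip if the count doubles every 18 months (1.5 years).
# ASSUMPTION: the 4-core starting point is hypothetical.


def projected_cores(initial_cores, years, doubling_period_years=1.5):
    """Return the projected core count after the given number of years."""
    return initial_cores * 2 ** (years / doubling_period_years)


for years in (0, 3, 6, 9):
    print(f"after {years} years: {projected_cores(4, years):.0f} cores")
```

Starting from 4 cores, the rule reaches 64 cores after 6 years and 256 after 9, which falls inside the 64-512 range mentioned on the slide.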

Real HPC Crisis is with ____?
Supercomputer applications and software are usually much longer-lived than hardware
- Hardware life is typically four to five years at most.
- Fortran and C are still the main programming models
Programming is stuck
- Arguably hasn’t changed much since the ’70s
Software is a major cost component of modern technologies.
- The tradition in HPC system procurement is to assume that the software is free.

It’s time for a change
• Complexity is rising dramatically
• Challenges for the applications on Petaflop systems
• The use of O(100K) cores implies dramatic optimization effort
• New paradigm: supporting a hundred threads in one node implies new parallelization strategies

It’s time for a change (cont.)
• Improvement of existing codes will become complex and partly impossible
• Implementing new parallel programming methods in existing large applications does not always look promising
• There is a need for new community codes

Speedup
• $\text{Speedup}(p \text{ processors}) = \dfrac{\text{Performance}(p \text{ processors})}{\text{Performance}(1 \text{ processor})}$
• For a fixed problem size (input data set), performance = 1/time
• $\text{Speedup}_{\text{fixed problem}}(p \text{ processors}) = \dfrac{\text{Time}(1 \text{ processor})}{\text{Time}(p \text{ processors})}$
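The fixed-problem definition can be checked with a few lines of code. This is a minimal sketch; the timings are hypothetical, and the parallel efficiency helper (speedup divided by the processor count) is a standard derived quantity that the slide itself does not define.

```python
# Fixed-problem speedup: Time(1 processor) / Time(p processors).


def speedup(time_1, time_p):
    """Speedup on p processors for a fixed problem size."""
    if time_1 <= 0 or time_p <= 0:
        raise ValueError("run times must be positive")
    return time_1 / time_p


def efficiency(time_1, time_p, p):
    """Parallel efficiency: speedup divided by processor count (not on the slide)."""
    if p < 1:
        raise ValueError("processor count must be at least 1")
    return speedup(time_1, time_p) / p


# Hypothetical timings: 120 s on 1 processor, 20 s on 8 processors.
print(speedup(120.0, 20.0))        # 6.0
print(efficiency(120.0, 20.0, 8))  # 0.75
```

A speedup of 6 on 8 processors means the run uses the machine at 75% efficiency; a speedup equal to p would be the ideal linear case.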

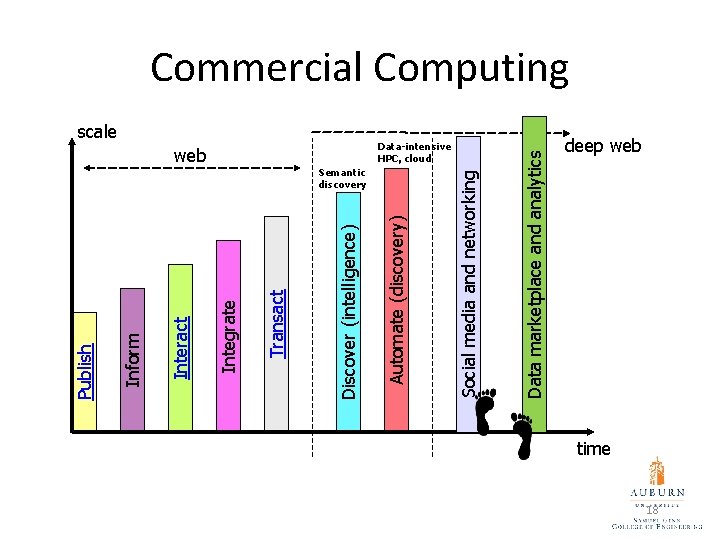

Commercial Computing
[Figure: growth in scale over time (axes: time, scale). Stages: Publish, Inform, Interact, Integrate, Transact, Discover (intelligence), Automate (discovery). Labels: web, deep web, social media and networking, data marketplace and analytics, data-intensive HPC and cloud, semantic discovery.]

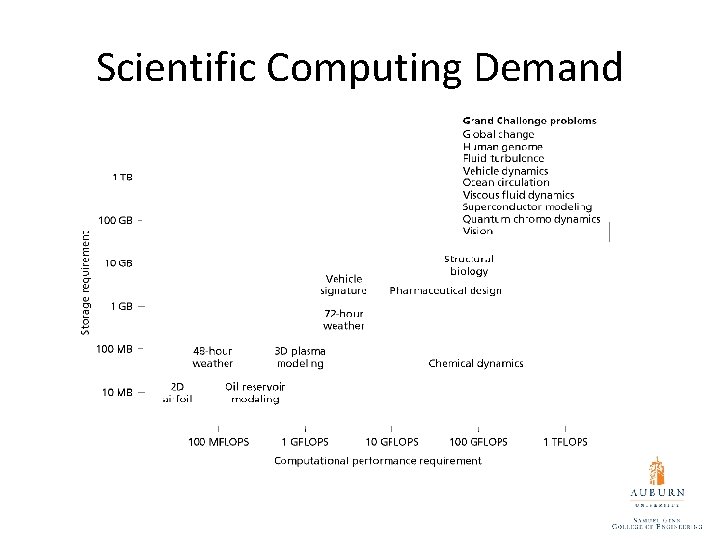

Scientific Computing Demand

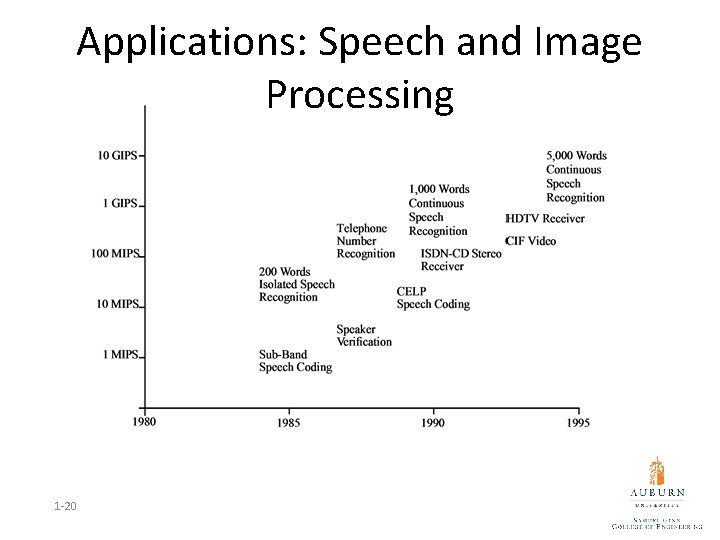

Applications: Speech and Image Processing

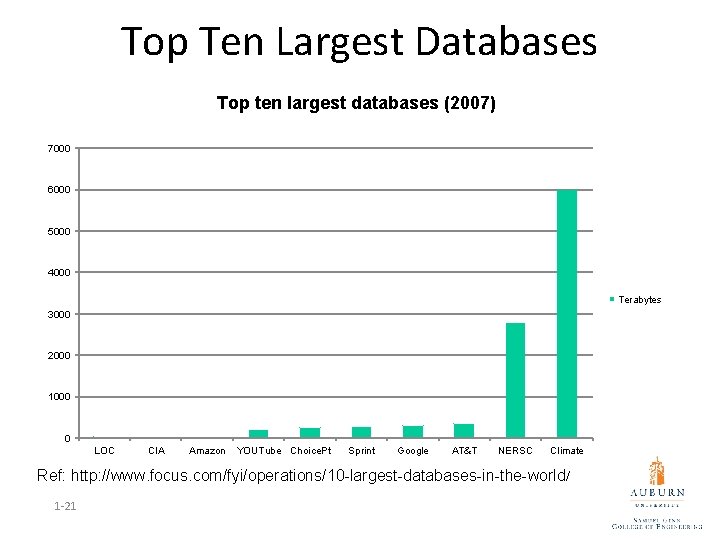

Top Ten Largest Databases
[Figure: bar chart of the top ten largest databases (2007), size in terabytes (axis 0 to 7000): LOC, CIA, Amazon, YouTube, ChoicePt, Sprint, Google, AT&T, NERSC, Climate]
Ref: http://www.focus.com/fyi/operations/10-largest-databases-in-the-world/

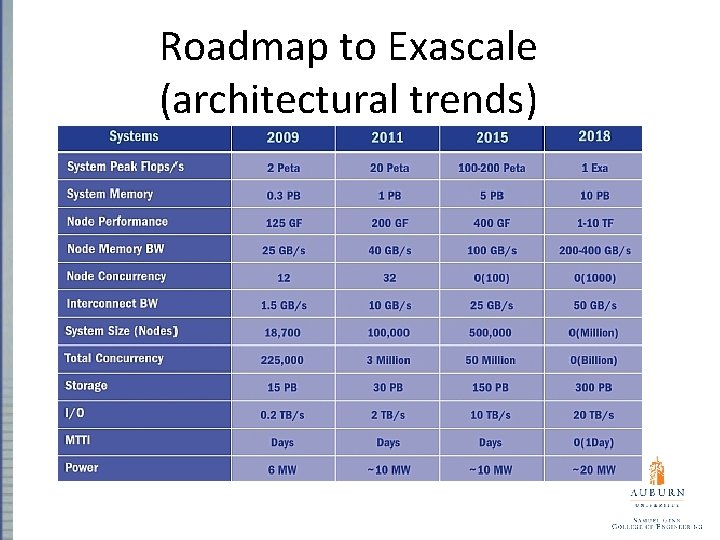

Roadmap to Exascale (architectural trends)
