
Audio Fingerprinting
Jyh-Shing Roger Jang (張智星)
http://mirlab.org/jang
CSIE Dept., National Taiwan University

Intro to Audio Fingerprinting (AFP)
- Goal
  - Identify a noisy version of a given audio clip
- Also known as…
  - “Query by exact example” (no “cover versions”)
- Can also be used to…
  - Align two different-speed audio clips of the same source

AFP Challenges
- Music variations
  - Encoding/compression (MP3 encoding, etc.)
  - Channel variations
    - Speakers & microphones, room acoustics
  - Environmental noise
- Efficiency (6M tags/day for Shazam)
- Database collection (15M tracks for Shazam)

AFP Applications
- Commercial applications of AFP
  - Music identification & purchase
  - Royalty assignment (over radio)
  - TV show or commercial ID (over TV)
  - Copyright violation detection (over web)
- Major players
  - Shazam, SoundHound, IntoNow, Viggle

Company: Shazam
- Facts
  - First commercial product of audio fingerprinting
  - Since 2002, UK
- Technology
  - Audio fingerprinting
- Founder
  - Avery Wang (Ph.D. at Stanford, 1994)

Company: SoundHound
- Facts
  - First product with multi-modal music search
  - AKA: midomi
- Technologies
  - Audio fingerprinting
  - Query by singing/humming
  - Speech recognition
- Founder
  - Keyvan Mohajer (Ph.D. at Stanford, 2007)

Two Stages in AFP
- Offline
  - Robust feature extraction (audio fingerprinting)
  - Hash table construction
  - Inverted indexing
- Online
  - Robust feature extraction
  - Hash table search
  - Ranked list of the retrieved songs/music
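The two stages can be illustrated with a minimal sketch, assuming each track has already been reduced to a list of (hash key, offset) pairs by some feature extractor. The names `build_index` and `rank_tracks` and the toy data are hypothetical; ranking here is by raw match count only, which is a simplification.

```python
from collections import defaultdict


def build_index(tracks):
    """Offline stage: map each hash key to the (track_id, offset) pairs where it occurs."""
    index = defaultdict(list)
    for track_id, hashes in tracks.items():
        for key, offset in hashes:
            index[key].append((track_id, offset))
    return index


def rank_tracks(index, query_hashes):
    """Online stage: look up each query key and rank tracks by number of matching keys."""
    counts = defaultdict(int)
    for key, _query_offset in query_hashes:
        for track_id, _track_offset in index.get(key, []):
            counts[track_id] += 1
    # Sort by descending count, breaking ties by track id for a stable result.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Toy database: hash keys and offsets are made-up values.
tracks = {
    "song_a": [(101, 0), (202, 3), (303, 7)],
    "song_b": [(101, 1), (404, 2)],
}
index = build_index(tracks)
ranking = rank_tracks(index, [(202, 0), (303, 4), (101, 9)])
print(ranking)
```

The inverted index is what keeps the online stage fast: only tracks that share at least one hash key with the query are ever touched.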

Robust Feature Extraction
- Various kinds of features for AFP
  - Invariance along time and frequency
  - Landmark of a pair of local maxima
  - Wavelets
  - …
- Extensive tests are required to choose the best features

Representative Approaches to AFP
- Philips
  - J. Haitsma and T. Kalker, “A highly robust audio fingerprinting system”, ISMIR 2002.
- Shazam
  - A. Wang, “An industrial-strength audio search algorithm”, ISMIR 2003.
- Google
  - S. Baluja and M. Covell, “Content fingerprinting using wavelets”, Euro. Conf. on Visual Media Production, 2006.
  - V. Chandrasekhar, M. Sharifi, and D. A. Ross, “Survey and evaluation of audio fingerprinting schemes for mobile query-by-example applications”, ISMIR 2011.

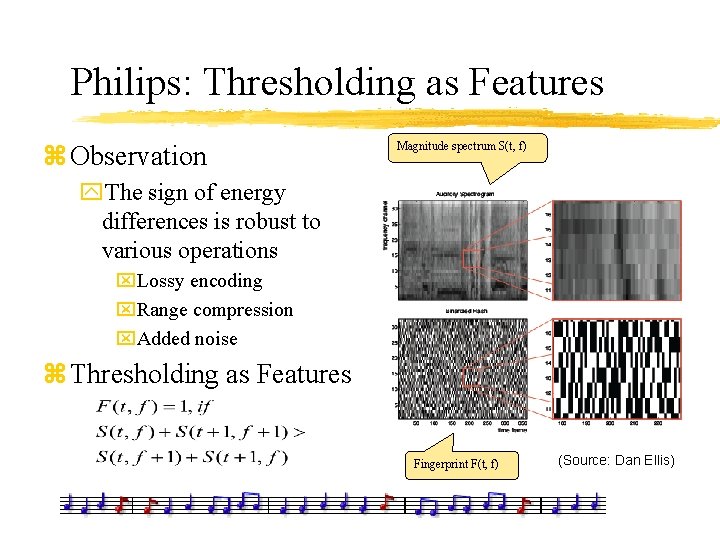

Philips: Thresholding as Features
- Observation
  - The sign of energy differences is robust to various operations
    - Lossy encoding
    - Range compression
    - Added noise
- Thresholding as features
  - Figure panels: magnitude spectrum S(t, f) and fingerprint F(t, f) (Source: Dan Ellis)
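A minimal sketch of thresholding energy differences into fingerprint bits. The slide only states that the sign of energy differences is used; the specific difference taken here (across adjacent bands, then across adjacent frames) follows the scheme described in the Haitsma and Kalker paper, and the function name `fingerprint_bits` and the toy band energies are assumptions for illustration.

```python
def fingerprint_bits(energy):
    """energy[t][b] is the energy of band b in frame t.

    Returns one row of bits per frame (starting from the second frame):
    bit = 1 if (E[t][b] - E[t][b+1]) - (E[t-1][b] - E[t-1][b+1]) > 0, else 0.
    """
    bits = []
    for t in range(1, len(energy)):
        prev, cur = energy[t - 1], energy[t]
        row = []
        for b in range(len(cur) - 1):
            diff = (cur[b] - cur[b + 1]) - (prev[b] - prev[b + 1])
            row.append(1 if diff > 0 else 0)
        bits.append(row)
    return bits


# Toy band energies: 3 frames x 3 bands (made-up values).
energy = [
    [4.0, 2.0, 1.0],
    [5.0, 1.0, 3.0],
    [2.0, 2.0, 2.0],
]
bits = fingerprint_bits(energy)
print(bits)

# A constant gain change scales every difference by the same positive factor,
# so the signs, and therefore the bits, are unchanged.
louder = [[2.0 * value for value in frame] for frame in energy]
print(fingerprint_bits(louder) == bits)
```

Because only the sign is kept, moderate distortions that shrink or stretch the differences without flipping them leave the fingerprint intact.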

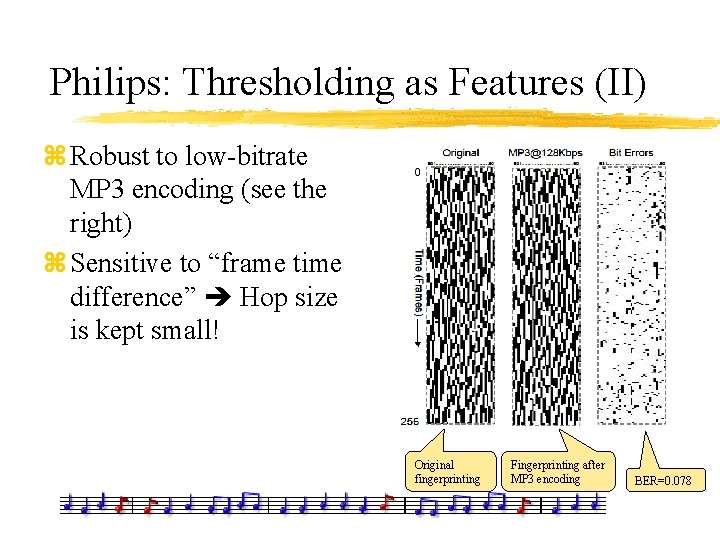

Philips: Thresholding as Features (II)
- Robust to low-bitrate MP3 encoding (see the figure at right)
- Sensitive to “frame time difference”, so the hop size is kept small
- Figure panels: original fingerprint; fingerprint after MP3 encoding (BER = 0.078)

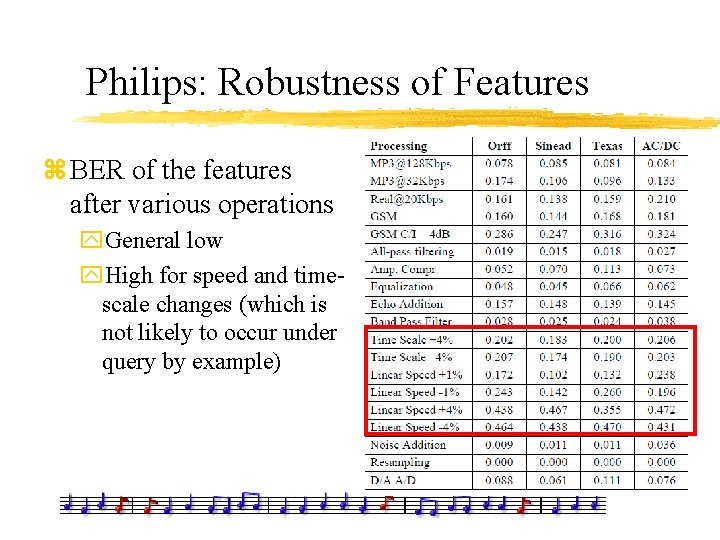

Philips: Robustness of Features
- BER of the features after various operations
  - Generally low
  - High for speed and timescale changes (which are not likely to occur under query by example)
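The bit error rate (BER) used to compare fingerprints is simply the fraction of bits that differ. A minimal sketch, assuming both fingerprints are equally sized lists of bit rows; the name `bit_error_rate` and the example bits are hypothetical.

```python
def bit_error_rate(fp_a, fp_b):
    """Fraction of differing bits between two fingerprints of the same shape."""
    total = 0
    errors = 0
    for row_a, row_b in zip(fp_a, fp_b):
        for bit_a, bit_b in zip(row_a, row_b):
            total += 1
            if bit_a != bit_b:
                errors += 1
    return errors / total


# Made-up fingerprints: one bit out of eight flips after a distortion.
original = [[1, 0, 1, 1], [0, 0, 1, 0]]
distorted = [[1, 0, 0, 1], [0, 0, 1, 0]]
ber = bit_error_rate(original, distorted)
print(ber)
```

A BER near 0 means the two clips very likely share a source, while unrelated clips are expected to land near 0.5.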

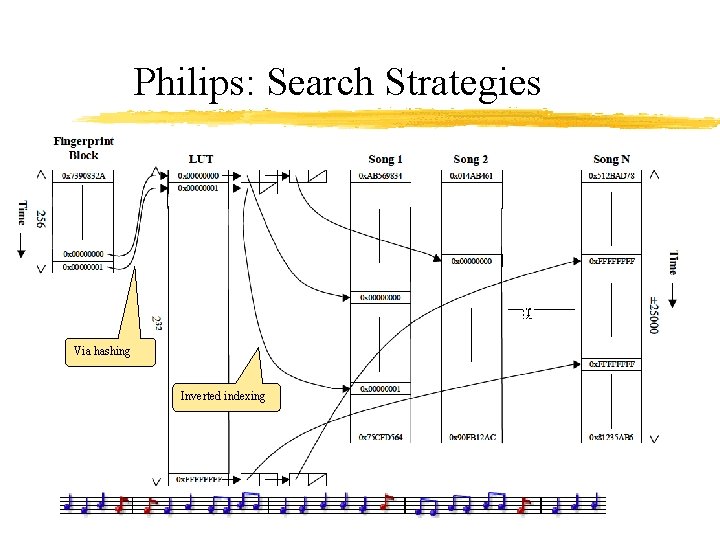

Philips: Search Strategies
- Via hashing
- Inverted indexing

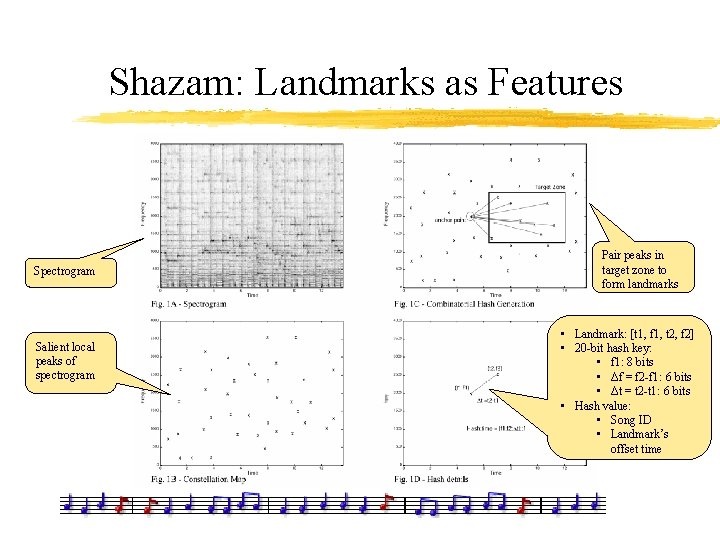

Shazam: Landmarks as Features
- Spectrogram
- Salient local peaks of the spectrogram
- Pair peaks within a target zone to form landmarks
- Landmark: [t1, f1, t2, f2]
- 20-bit hash key:
  - f1: 8 bits
  - Δf = f2 - f1: 6 bits
  - Δt = t2 - t1: 6 bits
- Hash value:
  - Song ID
  - Landmark’s offset time
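The 20-bit key layout can be sketched as simple bit packing. The slide gives the field widths (8 + 6 + 6 bits) but not the field order or how a negative Δf is stored; placing f1 in the high bits and wrapping Δf modulo 64 are assumptions made here, as are the names `pack_landmark` and `unpack_key`.

```python
F1_BITS, DF_BITS, DT_BITS = 8, 6, 6


def pack_landmark(t1, f1, t2, f2):
    """Pack a landmark [t1, f1, t2, f2] into a 20-bit integer key."""
    df = (f2 - f1) & ((1 << DF_BITS) - 1)  # assumption: wrap negative df modulo 64
    dt = (t2 - t1) & ((1 << DT_BITS) - 1)
    f1 = f1 & ((1 << F1_BITS) - 1)
    return (f1 << (DF_BITS + DT_BITS)) | (df << DT_BITS) | dt


def unpack_key(key):
    """Recover (f1, df, dt) from a key; df is returned in its wrapped 6-bit form."""
    dt = key & ((1 << DT_BITS) - 1)
    df = (key >> DT_BITS) & ((1 << DF_BITS) - 1)
    f1 = (key >> (DF_BITS + DT_BITS)) & ((1 << F1_BITS) - 1)
    return f1, df, dt


# Made-up landmark: peak at (t=10, f=100) paired with peak at (t=25, f=110).
key = pack_landmark(10, 100, 25, 110)
print(key, unpack_key(key))
```

Note that the absolute time t1 is not part of the key; it is stored alongside the song ID as the hash value, so the same key matches wherever the landmark occurs in a track.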

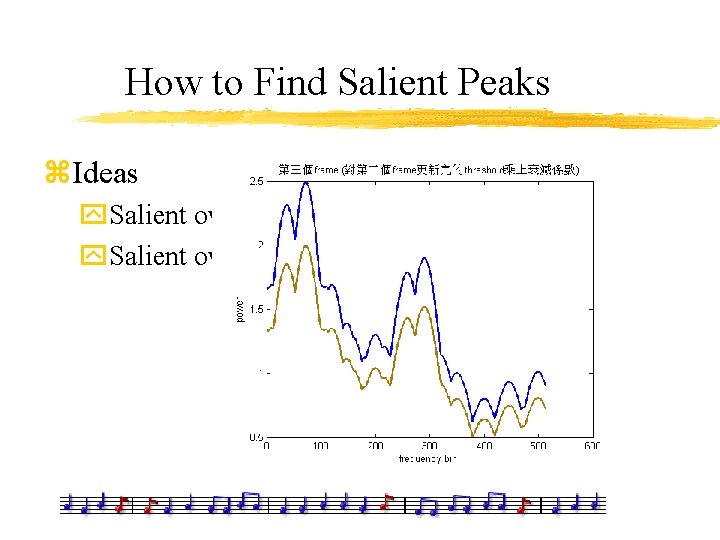

How to Find Salient Peaks
- Ideas
  - Salient over frequency
  - Salient over time
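One simple way to realize "salient over frequency and over time" is to keep only points that exceed every neighbor in a small time-frequency window. This is a minimal sketch of that idea, not necessarily the peak picker used in the slides; `find_peaks`, the window radii, and the toy spectrogram are assumptions.

```python
def find_peaks(spec, radius_t=1, radius_f=1):
    """spec[t][f] is a magnitude. Return (t, f) points strictly greater than
    all neighbors within +/- radius_t frames and +/- radius_f bins."""
    n_t = len(spec)
    n_f = len(spec[0])
    peaks = []
    for t in range(n_t):
        for f in range(n_f):
            value = spec[t][f]
            is_peak = True
            for tt in range(max(0, t - radius_t), min(n_t, t + radius_t + 1)):
                for ff in range(max(0, f - radius_f), min(n_f, f + radius_f + 1)):
                    if (tt, ff) != (t, f) and spec[tt][ff] >= value:
                        is_peak = False
            if is_peak:
                peaks.append((t, f))
    return peaks


# Toy 4x4 spectrogram (rows are frames, columns are frequency bins).
spec = [
    [1, 2, 1, 0],
    [2, 9, 2, 1],
    [1, 2, 1, 7],
    [0, 1, 3, 2],
]
peaks = find_peaks(spec)
print(peaks)
```

Larger radii yield fewer, more salient peaks, which trades recall for a smaller hash table.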

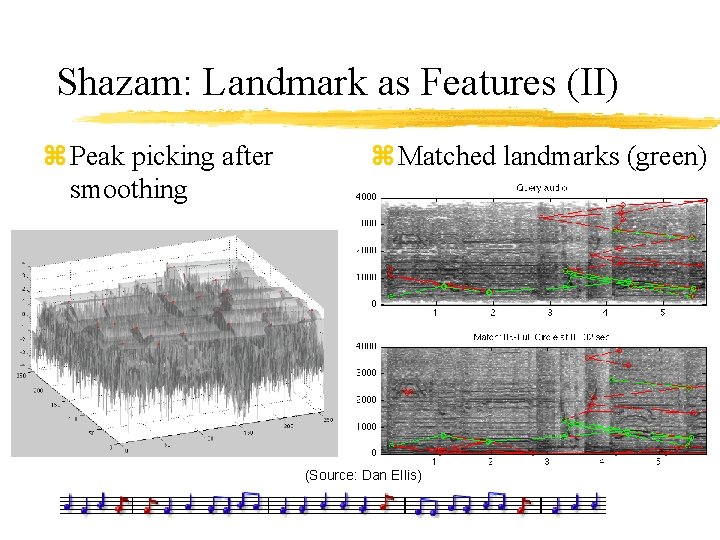

Shazam: Landmarks as Features (II)
- Peak picking after smoothing
- Matched landmarks (green) (Source: Dan Ellis)

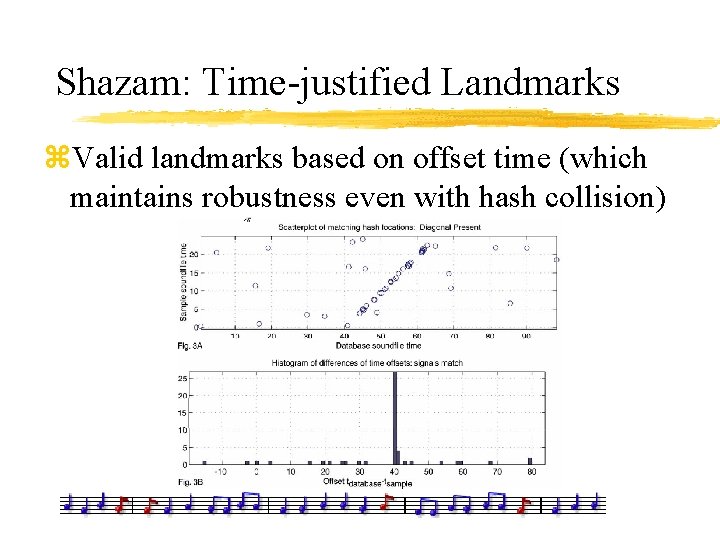

Shazam: Time-justified Landmarks
- Valid landmarks are selected based on offset time (which maintains robustness even with hash collisions)
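Offset-time validation can be sketched as voting: every matched landmark votes for the pair (song, database time minus query time), and only landmarks that agree on one offset count toward the match. This is a minimal sketch under the assumption that the index maps hash keys to (song ID, offset time) pairs; `best_match` and the toy data are hypothetical.

```python
from collections import Counter


def best_match(index, query):
    """index: {hash_key: [(song_id, song_time), ...]}
    query: [(hash_key, query_time), ...]
    Returns (song_id, offset, votes) for the most consistent offset, or None."""
    votes = Counter()
    for key, query_time in query:
        for song_id, song_time in index.get(key, []):
            votes[(song_id, song_time - query_time)] += 1
    if not votes:
        return None
    (song_id, offset), count = votes.most_common(1)[0]
    return song_id, offset, count


# Toy index: song_b shares two keys with the query (collisions),
# but its offsets disagree, so it never accumulates votes.
index = {
    11: [("song_a", 50), ("song_b", 7)],
    22: [("song_a", 53)],
    33: [("song_a", 58), ("song_b", 90)],
}
query = [(11, 0), (22, 3), (33, 8)]
result = best_match(index, query)
print(result)
```

Colliding keys from unrelated songs scatter across many offsets, while a true match piles its votes onto a single offset, which is why collisions do little harm.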

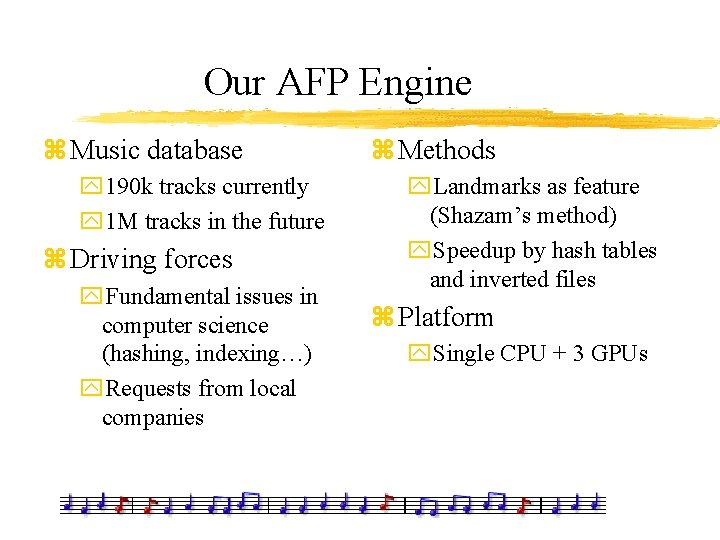

Our AFP Engine
- Music database
  - 190K tracks currently
  - 1M tracks in the future
- Driving forces
  - Fundamental issues in computer science (hashing, indexing…)
  - Requests from local companies
- Methods
  - Landmarks as features (Shazam’s method)
  - Speedup by hash tables and inverted files
- Platform
  - Single CPU + 3 GPUs

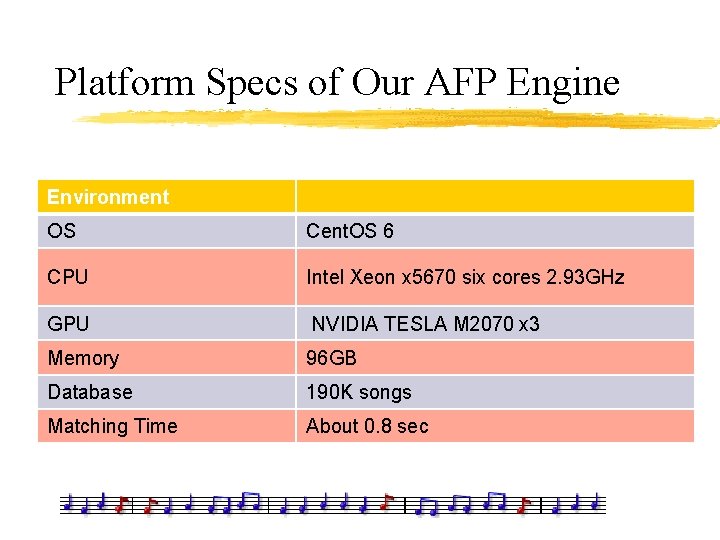

Platform Specs of Our AFP Engine
- Environment
  - OS: CentOS 6
  - CPU: Intel Xeon X5670, six cores, 2.93 GHz
  - GPU: NVIDIA Tesla M2070 × 3
  - Memory: 96 GB
- Database: 190K songs
- Matching time: about 0.8 sec

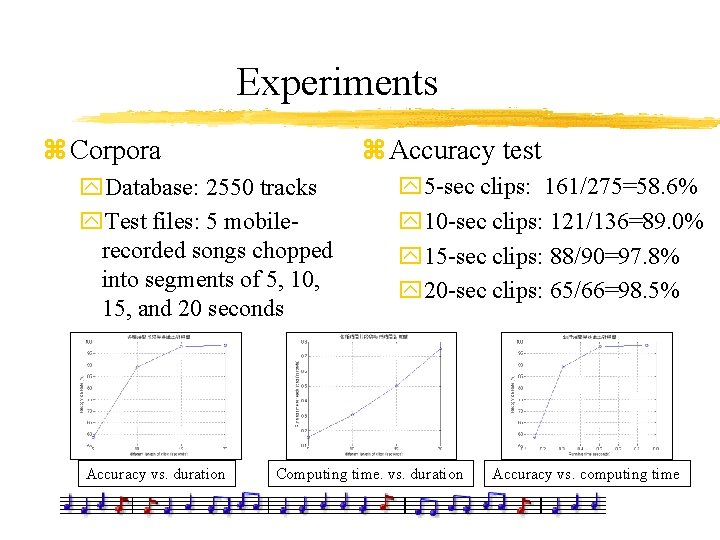

Experiments
- Accuracy test
- Corpora
  - Database: 2550 tracks
  - Test files: 5 mobile-recorded songs chopped into segments of 5, 10, 15, and 20 seconds
- Accuracy vs. duration
  - 5-sec clips: 161/275 = 58.5%
  - 10-sec clips: 121/136 = 89.0%
  - 15-sec clips: 88/90 = 97.8%
  - 20-sec clips: 65/66 = 98.5%
- Figure panels: computing time vs. duration; accuracy vs. computing time

Demos of Audio Fingerprinting
- Commercial apps
  - Shazam
  - SoundHound
- Our demo
  - http://mirlab.org/demo/audioFingerprinting (190K songs)

Conclusions for AFP
- Conclusions
  - Landmark-based methods are effective
  - Machine learning is indispensable for further improvement
- Future work: scale up
  - Shazam: 15M tracks in database, 6M tags/day
  - Our goal:
    - 50K tracks with a single PC and GPU
    - 1M tracks with cloud computing of 10 PCs

References
- A. Wang, “An Industrial-Strength Audio Search Algorithm”, ISMIR, 2003.
- D. Ellis, “Robust Landmark-Based Audio Fingerprinting”, http://labrosa.ee.columbia.edu/matlab/fingerprint/
- Y. Ke, D. Hoiem, and R. Sukthankar, “Computer Vision for Music Identification”, CVPR, 2005.
- S. Baluja and M. Covell, “Content Fingerprinting Using Wavelets”, Proc. CVMP, 2006.
- V. Chandrasekhar, M. Sharifi, and D. A. Ross, “Survey and Evaluation of Audio Fingerprinting Schemes for Mobile Query-by-Example Applications”, ISMIR, 2011.
