
Approximate Inference Methods

• Loopy belief propagation
• Forward sampling
• Likelihood weighting
• Gibbs sampling
• Other MCMC sampling methods
• Variational methods

Many slides by Daniel Rembiszewski and Avishay Livne. Based on Chapter 12 of the book “Probabilistic Graphical Models: Principles and Techniques” by Koller and Friedman.

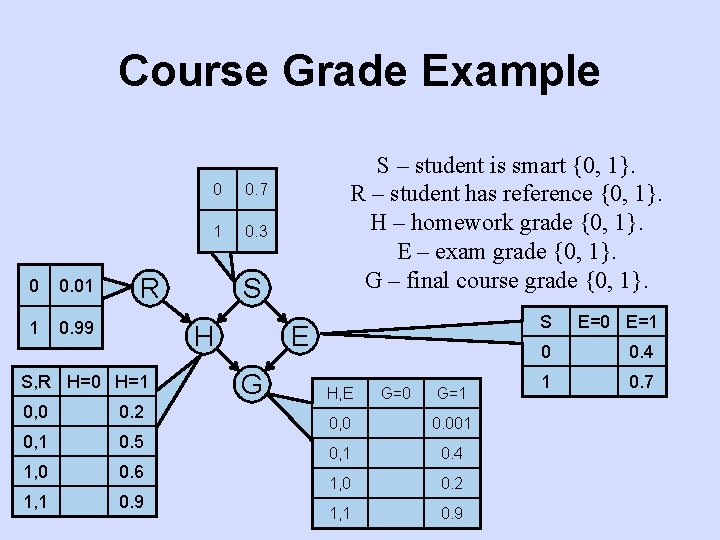

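Course Grade Example

Variables:

• S – student is smart {0, 1}.
• R – student has reference {0, 1}.
• H – homework grade {0, 1}.
• E – exam grade {0, 1}.
• G – final course grade {0, 1}.

Network structure: S and R are the parents of H, S is the parent of E, and H and E are the parents of G.

| R | P(R) |
|---|------|
| 0 | 0.01 |
| 1 | 0.99 |

| S | P(S) |
|---|------|
| 0 | 0.7 |
| 1 | 0.3 |

| S, R | P(H=1 \| S, R) |
|------|----------------|
| 0, 0 | 0.2 |
| 0, 1 | 0.5 |
| 1, 0 | 0.6 |
| 1, 1 | 0.9 |

| S | P(E=1 \| S) |
|---|-------------|
| 0 | 0.4 |
| 1 | 0.7 |

| H, E | P(G=1 \| H, E) |
|------|----------------|
| 0, 0 | 0.001 |
| 0, 1 | 0.4 |
| 1, 0 | 0.2 |
| 1, 1 | 0.9 |

Each conditional table lists the probability that the child takes the value 1; the probability of the value 0 is one minus the listed entry.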

![Forward sampling, query P(G=1)](http://slidetodoc.com/presentation_image_h2/815af8996459d421fce66e60aa6cfe03/image-3.jpg)

Forward sampling

Query: P(G=1)

    counter = 0
    for i = [1, M]:
        s = sample from P(S)
        r = sample from P(R)
        h = sample from P(H|S=s, R=r)
        e = sample from P(E|S=s)
        g = sample from P(G|H=h, E=e)
        if (condition holds - g=1) counter++

| Sample | S | R | H | E | G | Counter |
|--------|---|---|---|---|---|---------|
| 1 | 0 | 1 | 1 | 0 | 0 | 0 |
| 2 | 1 | 0 | 1 | 1 | 1 | 1 |
| 3 | 0 | 1 | 0 | 0 | 1 | 2 |
| 4 | 0 | 1 | 0 | 1 | 0 | 2 |
| 5 | 1 | 1 | 1 | 0 | 0 | 2 |
| 6 | 0 | 1 | 1 | 1 | 0 | 2 |
| 7 | 0 | 0 | 0 | 0 | 1 | 3 |
| … | | | | | | |
| M | | | | | | k |
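The forward sampling loop above can be sketched in Python as follows. This is a minimal illustration, not the slides' own code. It assumes the flattened tables of the Course Grade Example give P(X=1 | parents), with priors P(R=1)=0.99 and P(S=1)=0.3. The names `bernoulli`, `forward_sample` and `estimate_p_g1` are hypothetical.

```python
import random

# Assumed reading of the example's tables: each entry is P(X=1 | parents).
P_S1 = 0.3
P_R1 = 0.99
P_H1 = {(0, 0): 0.2, (0, 1): 0.5, (1, 0): 0.6, (1, 1): 0.9}    # keyed by (s, r)
P_E1 = {0: 0.4, 1: 0.7}                                          # keyed by s
P_G1 = {(0, 0): 0.001, (0, 1): 0.4, (1, 0): 0.2, (1, 1): 0.9}  # keyed by (h, e)


def bernoulli(p_one, rng):
    """Return 1 with probability p_one, else 0."""
    return 1 if rng.random() < p_one else 0


def forward_sample(rng):
    """Sample every variable in topological order: S, R, H, E, G."""
    s = bernoulli(P_S1, rng)
    r = bernoulli(P_R1, rng)
    h = bernoulli(P_H1[(s, r)], rng)
    e = bernoulli(P_E1[s], rng)
    g = bernoulli(P_G1[(h, e)], rng)
    return s, r, h, e, g


def estimate_p_g1(num_samples, seed=0):
    """Estimate P(G=1) as the fraction of samples in which g == 1."""
    rng = random.Random(seed)
    counter = sum(forward_sample(rng)[4] for _ in range(num_samples))
    return counter / num_samples


if __name__ == "__main__":
    print(estimate_p_g1(100_000))
```

Under these assumed tables, exact enumeration gives P(G=1) of roughly 0.418, so the printed estimate should land close to that value for large M.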

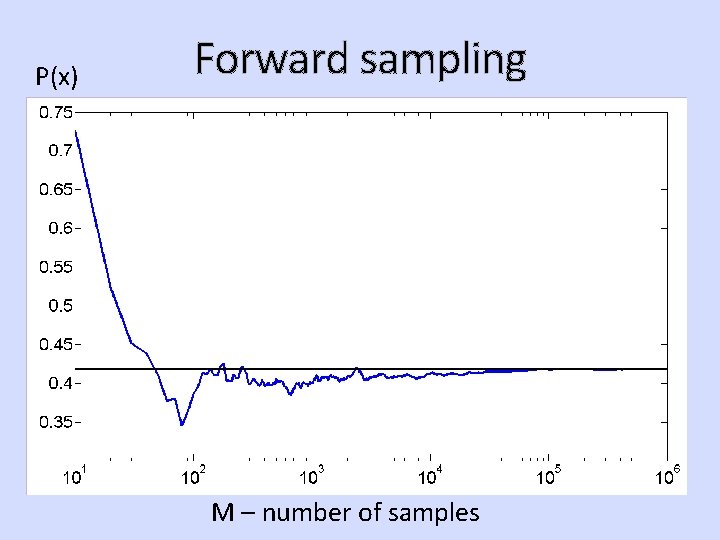

Forward sampling: plot of the estimate of P(x) against M, the number of samples.

![Forward sampling estimator](http://slidetodoc.com/presentation_image_h2/815af8996459d421fce66e60aa6cfe03/image-5.jpg)

Forward sampling

$$\hat{P}(x) = \frac{1}{M} \sum_{m=1}^{M} I(x[m], x)$$

• x[m] – sample m.
• x – the query.
• I(x[m], x) – 1 if the condition is satisfied in sample m (e.g. G=1), else 0.
• M – number of samples.

Count the number of samples that satisfy the condition and divide by the number of samples.

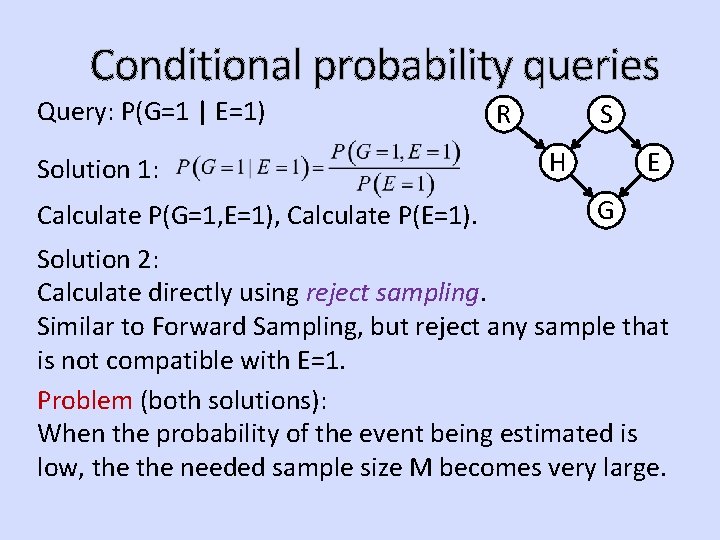

Conditional probability queries

Query: P(G=1 | E=1)

Solution 1: Estimate P(G=1, E=1) and P(E=1) separately, then take their ratio.

Solution 2: Estimate the query directly using rejection sampling. This is similar to forward sampling, but rejects any sample that is not compatible with E=1.

Problem (both solutions): When the probability of the event being estimated is low, the needed sample size M becomes very large.
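Solution 2 can be sketched as below. This is an illustrative sketch only. It assumes the example's tables give P(X=1 | parents), with P(R=1)=0.99 and P(S=1)=0.3, and the function name `rejection_estimate` is hypothetical.

```python
import random

# Assumed reading of the example's tables: each entry is P(X=1 | parents).
P_S1 = 0.3
P_R1 = 0.99
P_H1 = {(0, 0): 0.2, (0, 1): 0.5, (1, 0): 0.6, (1, 1): 0.9}    # keyed by (s, r)
P_E1 = {0: 0.4, 1: 0.7}                                          # keyed by s
P_G1 = {(0, 0): 0.001, (0, 1): 0.4, (1, 0): 0.2, (1, 1): 0.9}  # keyed by (h, e)


def rejection_estimate(num_samples, seed=0):
    """Estimate P(G=1 | E=1); return (estimate, number of accepted samples)."""
    rng = random.Random(seed)
    accepted = 0
    hits = 0
    for _ in range(num_samples):
        s = 1 if rng.random() < P_S1 else 0
        r = 1 if rng.random() < P_R1 else 0
        h = 1 if rng.random() < P_H1[(s, r)] else 0
        e = 1 if rng.random() < P_E1[s] else 0
        g = 1 if rng.random() < P_G1[(h, e)] else 0
        if e != 1:
            continue  # reject: the sample contradicts the evidence E=1
        accepted += 1
        hits += g
    if accepted == 0:
        raise ValueError("no sample was compatible with the evidence")
    return hits / accepted, accepted


if __name__ == "__main__":
    estimate, accepted = rejection_estimate(100_000)
    print(estimate, accepted)
```

Only the accepted samples contribute to the estimate, which is why rare evidence makes the method expensive: under these tables P(E=1) is 0.49, so about half of the samples are discarded.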

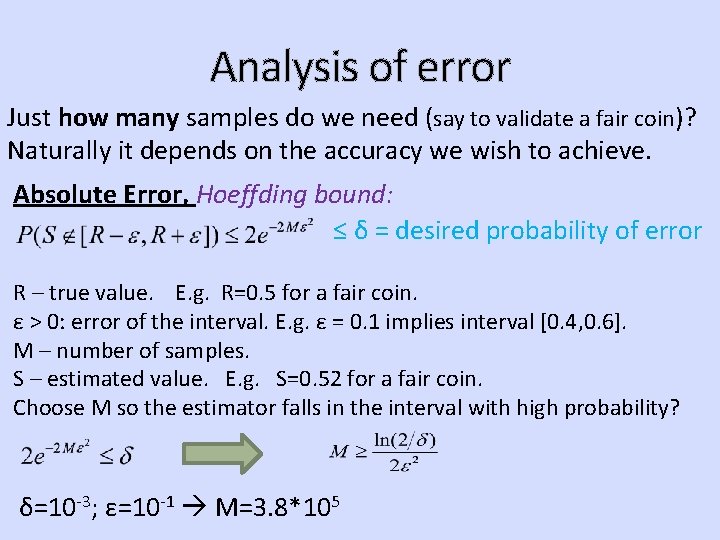

Analysis of error

Just how many samples do we need (say, to validate a fair coin)? Naturally, it depends on the accuracy we wish to achieve.

Absolute error, Hoeffding bound:

$$P\big(S \notin [R - \varepsilon, R + \varepsilon]\big) \le 2e^{-2M\varepsilon^2} \le \delta$$

• δ – desired probability of error.
• R – true value. E.g. R=0.5 for a fair coin.
• ε > 0 – error of the interval. E.g. ε = 0.1 implies the interval [0.4, 0.6].
• M – number of samples.
• S – estimated value. E.g. S=0.52 for a fair coin.

Choose M so that the estimator falls in the interval with probability at least 1 − δ: $M \ge \ln(2/\delta) / (2\varepsilon^2)$.

Example: δ = 10^-3 and ε = 10^-1 give M ≈ 3.8·10^2.
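As a quick check of the absolute-error sample size, the sketch below solves the standard two-sided Hoeffding bound 2·exp(−2Mε²) ≤ δ for M. The function name `hoeffding_samples` is hypothetical.

```python
import math


def hoeffding_samples(epsilon, delta):
    """Smallest integer M with 2 * exp(-2 * M * epsilon**2) <= delta."""
    return math.ceil(math.log(2 / delta) / (2 * epsilon ** 2))


if __name__ == "__main__":
    # Fair-coin example: interval [0.4, 0.6], error probability 0.001.
    print(hoeffding_samples(0.1, 1e-3))  # 381
```

Note that the true value R does not appear in the calculation: for an absolute error bound the sample size depends only on ε and δ.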

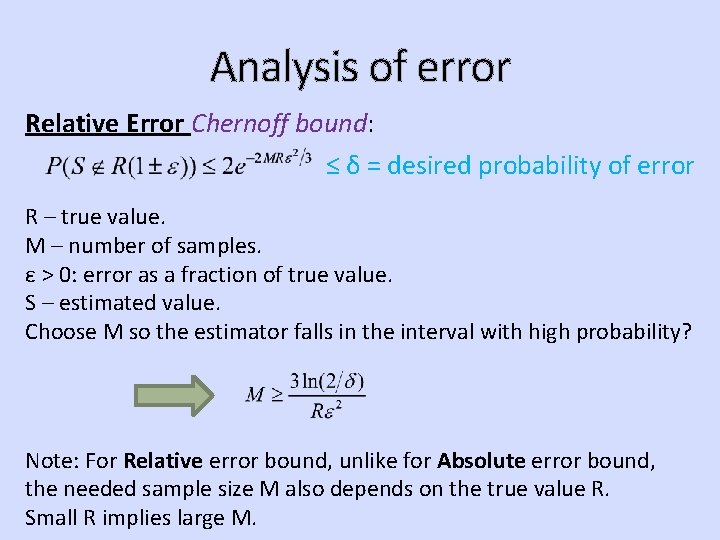

Analysis of error

Relative error, Chernoff bound:

$$P\big(S \notin [R(1 - \varepsilon), R(1 + \varepsilon)]\big) \le 2e^{-MR\varepsilon^2/3} \le \delta$$

• δ – desired probability of error.
• R – true value.
• M – number of samples.
• ε > 0 – error as a fraction of the true value.
• S – estimated value.

Choose M so that the estimator falls in the interval with probability at least 1 − δ: $M \ge 3\ln(2/\delta) / (R\varepsilon^2)$.

Note: For the relative error bound, unlike for the absolute error bound, the needed sample size M also depends on the true value R. Small R implies large M.
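The dependence on R can be made concrete with a small sketch that solves the Chernoff bound 2·exp(−MRε²/3) ≤ δ for M. The function name `chernoff_samples` is hypothetical, and in practice R is unknown, so this only illustrates how the requirement scales.

```python
import math


def chernoff_samples(epsilon, delta, true_value):
    """Smallest integer M with 2 * exp(-M * R * epsilon**2 / 3) <= delta."""
    return math.ceil(3 * math.log(2 / delta) / (true_value * epsilon ** 2))


if __name__ == "__main__":
    # Same epsilon and delta, decreasing true value R.
    for r in (0.5, 0.05, 0.005):
        print(r, chernoff_samples(0.1, 1e-3, r))
```

Each tenfold decrease in R raises the required M roughly tenfold, which is the "small R implies large M" effect.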

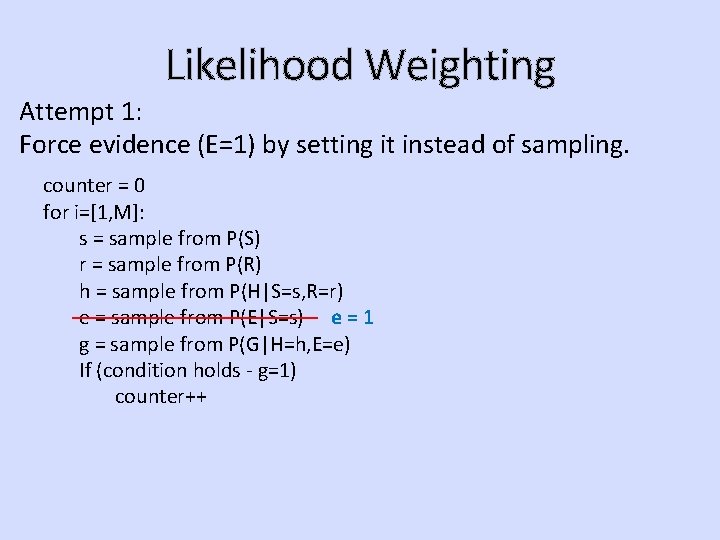

Likelihood Weighting

Attempt 1: Force the evidence (E=1) by setting it instead of sampling it.

    counter = 0
    for i = [1, M]:
        s = sample from P(S)
        r = sample from P(R)
        h = sample from P(H|S=s, R=r)
        e = 1 (replaces: e = sample from P(E|S=s))
        g = sample from P(G|H=h, E=e)
        if (condition holds - g=1) counter++

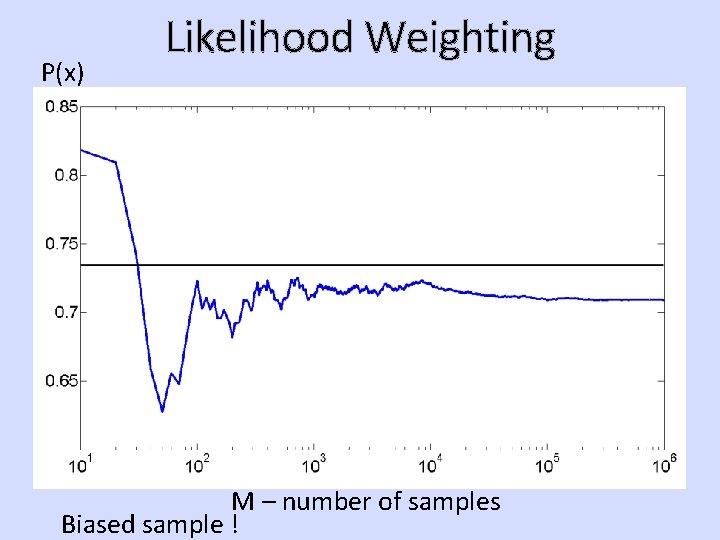

Likelihood Weighting (attempt 1): plot of the estimate of P(x) against M, the number of samples. The samples are biased!

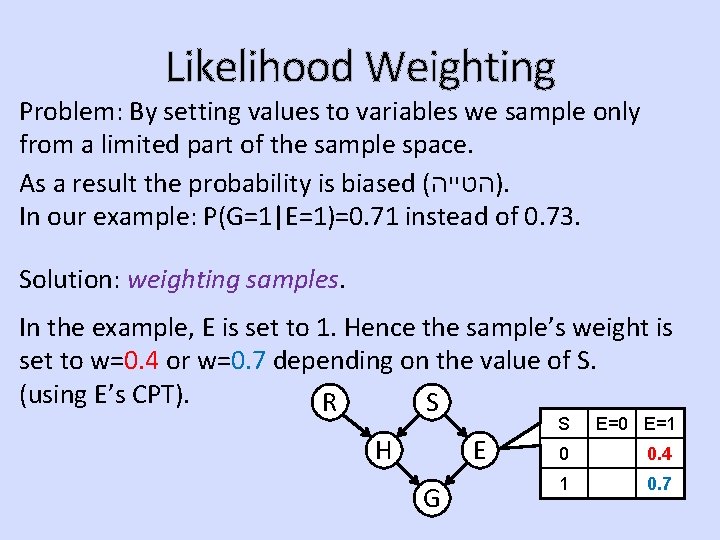

Likelihood Weighting

Problem: By setting values to variables we sample only from a limited part of the sample space. As a result, the estimated probability is biased. In our example: P(G=1|E=1)=0.71 instead of 0.73.

Solution: Weight the samples. In the example, E is set to 1. Hence the sample's weight is set to w=0.4 or w=0.7, depending on the value of S (using E's CPT).

| S | P(E=1 \| S) |
|---|-------------|
| 0 | 0.4 |
| 1 | 0.7 |
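The two numbers quoted above can be reproduced by exact enumeration. The sketch below is illustrative only. It assumes the example's tables give P(X=1 | parents), with P(R=1)=0.99 and P(S=1)=0.3, and the helper names `prob`, `joint`, `exact_posterior` and `clamped_estimate` are hypothetical.

```python
from itertools import product

# Assumed reading of the example's tables: each entry is P(X=1 | parents).
P_S1 = 0.3
P_R1 = 0.99
P_H1 = {(0, 0): 0.2, (0, 1): 0.5, (1, 0): 0.6, (1, 1): 0.9}    # keyed by (s, r)
P_E1 = {0: 0.4, 1: 0.7}                                          # keyed by s
P_G1 = {(0, 0): 0.001, (0, 1): 0.4, (1, 0): 0.2, (1, 1): 0.9}  # keyed by (h, e)


def prob(p_one, value):
    """Probability of a binary value given P(value = 1)."""
    return p_one if value == 1 else 1.0 - p_one


def joint(s, r, h, e, g):
    """Joint probability from the chain rule of the network."""
    return (prob(P_S1, s) * prob(P_R1, r) * prob(P_H1[(s, r)], h)
            * prob(P_E1[s], e) * prob(P_G1[(h, e)], g))


def exact_posterior():
    """P(G=1 | E=1) = P(G=1, E=1) / P(E=1)."""
    num = sum(joint(s, r, h, 1, 1) for s, r, h in product((0, 1), repeat=3))
    den = sum(joint(s, r, h, 1, g) for s, r, h, g in product((0, 1), repeat=4))
    return num / den


def clamped_estimate():
    """Limit of attempt 1: S, R, H come from the prior and E is forced to 1."""
    return sum(prob(P_S1, s) * prob(P_R1, r) * prob(P_H1[(s, r)], h) * P_G1[(h, 1)]
               for s, r, h in product((0, 1), repeat=3))


if __name__ == "__main__":
    print(round(exact_posterior(), 2), round(clamped_estimate(), 2))  # 0.73 0.71
```

The gap arises because forcing E=1 leaves S distributed according to its prior, whereas observing E=1 actually makes S=1 more likely.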

![Likelihood Weighting, our algorithm](http://slidetodoc.com/presentation_image_h2/815af8996459d421fce66e60aa6cfe03/image-12.jpg)

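Likelihood Weighting

Our algorithm:

    counter = 0
    total_weight = 0
    for i = [1, M]:
        w = 1
        s = sample from P(S)
        r = sample from P(R)
        h = sample from P(H|S=s, R=r)
        e = 1 (set to the evidence value)
        w *= P(E=1|S=s)
        g = sample from P(G|H=h, E=e)
        total_weight += w
        if (condition holds - g=1) counter += w
    Output = counter / total_weight

Dividing by the total weight makes the output an estimate of the conditional P(G=1|E=1). Dividing by M instead would estimate the joint P(G=1, E=1).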

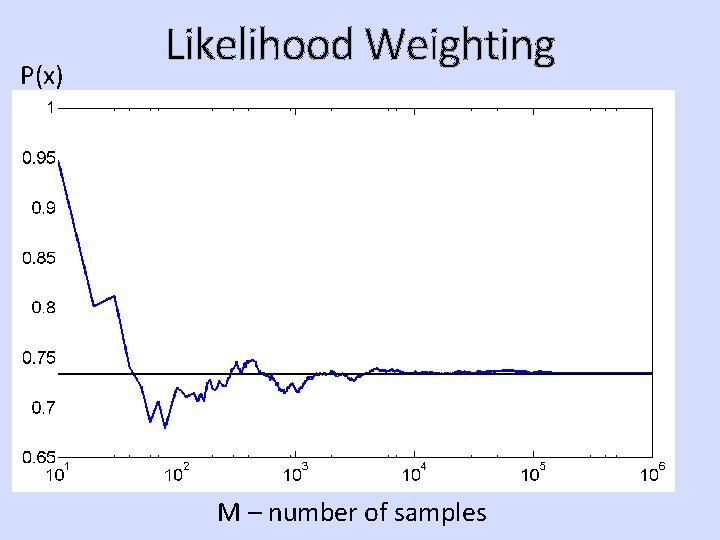

Likelihood Weighting: plot of the estimate of P(x) against M, the number of samples.

![Likelihood Weighting, general case algorithm](http://slidetodoc.com/presentation_image_h2/815af8996459d421fce66e60aa6cfe03/image-14.jpg)

Likelihood Weighting

General case algorithm (the course grade algorithm is the special case with evidence E=1 and condition G=1):

    counter = 0
    total_weight = 0
    for i = [1, M]:
        w = 1
        foreach x (in topological order):
            if (x in evidence)
                x = value in evidence
                w *= P(X=x|u)
            else
                x = sample from P(X|u)
        total_weight += w
        if (condition holds) counter += w
    Output = counter / total_weight

u = the values already assigned to the parents of X. P(X|u) = probability of X given its parents in the BN. Dividing by the total weight gives an estimate of the conditional probability of the condition given the evidence; dividing by M instead would estimate their joint probability.
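A minimal Python sketch of the general case follows, using the normalized form of the estimator (weighted hits divided by total weight), which targets the conditional probability. It is an illustration, not the slides' own code. The `NETWORK` layout and the function name `likelihood_weighting` are hypothetical, and the CPT values assume the example's tables give P(X=1 | parents), with P(R=1)=0.99 and P(S=1)=0.3.

```python
import random

# Hypothetical encoding: (name, parents, P(X=1 | parent values)),
# listed in topological order.
NETWORK = [
    ("S", (), {(): 0.3}),
    ("R", (), {(): 0.99}),
    ("H", ("S", "R"), {(0, 0): 0.2, (0, 1): 0.5, (1, 0): 0.6, (1, 1): 0.9}),
    ("E", ("S",), {(0,): 0.4, (1,): 0.7}),
    ("G", ("H", "E"), {(0, 0): 0.001, (0, 1): 0.4, (1, 0): 0.2, (1, 1): 0.9}),
]


def likelihood_weighting(query, evidence, num_samples, seed=0):
    """Estimate P(query | evidence); both arguments map variable names to 0/1."""
    rng = random.Random(seed)
    weighted_hits = 0.0
    total_weight = 0.0
    for _ in range(num_samples):
        x = {}
        w = 1.0
        for name, parents, cpt in NETWORK:
            p_one = cpt[tuple(x[p] for p in parents)]
            if name in evidence:
                # Clamp the evidence and multiply in its likelihood.
                x[name] = evidence[name]
                w *= p_one if x[name] == 1 else 1.0 - p_one
            else:
                x[name] = 1 if rng.random() < p_one else 0
        total_weight += w
        if all(x[name] == value for name, value in query.items()):
            weighted_hits += w
    return weighted_hits / total_weight


if __name__ == "__main__":
    print(likelihood_weighting({"G": 1}, {"E": 1}, 100_000))
```

Unlike rejection sampling, every sample is used, so no work is wasted on samples that contradict the evidence.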

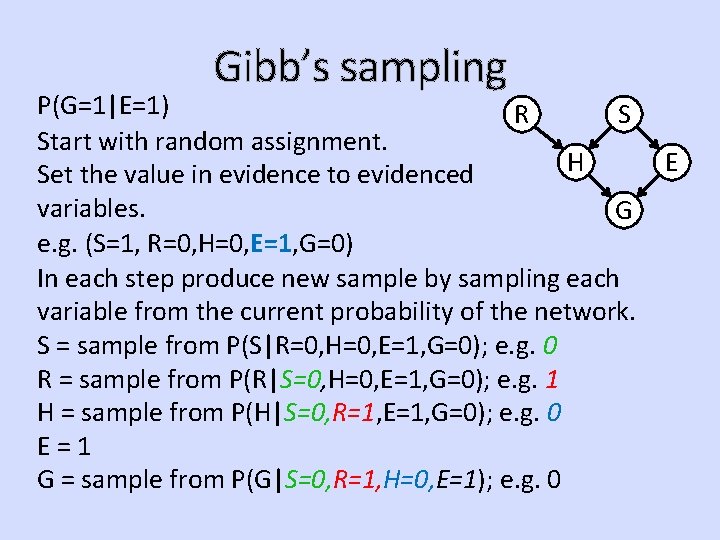

Gibbs sampling

Query: P(G=1|E=1)

Start with a random assignment, then set each evidence variable to its observed value, e.g. (S=1, R=0, H=0, E=1, G=0).

In each step, produce a new sample by resampling each non-evidence variable in turn from its conditional distribution given the current values of all the other variables:

    S = sample from P(S|R=0, H=0, E=1, G=0); e.g. 0
    R = sample from P(R|S=0, H=0, E=1, G=0); e.g. 1
    H = sample from P(H|S=0, R=1, E=1, G=0); e.g. 0
    E = 1 (evidence, never resampled)
    G = sample from P(G|S=0, R=1, H=0, E=1); e.g. 0

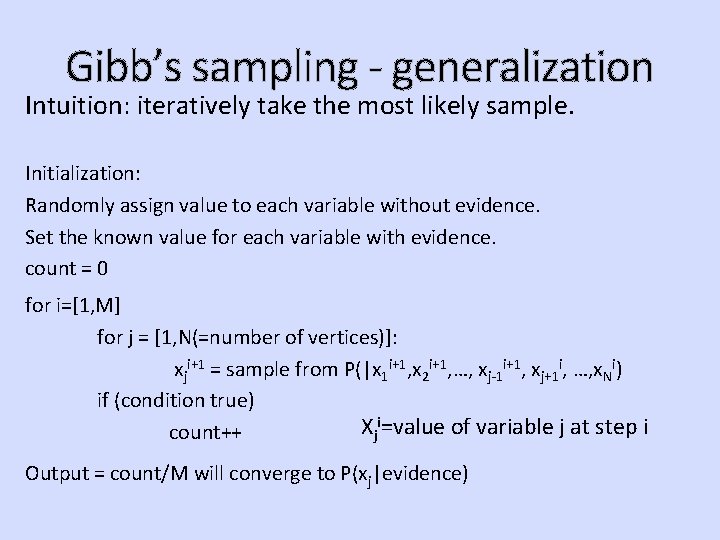

Gibbs sampling - generalization

Intuition: repeatedly resample each variable given the current values of all the other variables.

Initialization: Randomly assign a value to each variable without evidence. Set the known value for each variable with evidence.

    count = 0
    for i = [1, M]:
        for j = [1, N] (N = number of vertices; evidence variables keep their values):
            x_j^(i+1) = sample from P(x_j | x_1^(i+1), x_2^(i+1), …, x_(j-1)^(i+1), x_(j+1)^(i), …, x_N^(i))
        if (condition true) count++
    Output = count / M

x_j^(i) is the value of variable j at step i. The output will converge to P(x_j|evidence).
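The loop above can be sketched in Python for the course grade network. This is an illustration under stated assumptions, not the slides' own code. The CPT values assume the example's tables give P(X=1 | parents), with P(R=1)=0.99 and P(S=1)=0.3. The names `joint` and `gibbs_estimate` are hypothetical, and the `burn_in` parameter (discarding early sweeps) is a common practice that the slides do not mention. Each conditional is obtained by normalizing the joint over the two values of the resampled variable.

```python
import random

VARIABLES = ("S", "R", "H", "E", "G")

# Assumed reading of the example's tables: each entry is P(X=1 | parents).
P_S1 = 0.3
P_R1 = 0.99
P_H1 = {(0, 0): 0.2, (0, 1): 0.5, (1, 0): 0.6, (1, 1): 0.9}    # keyed by (s, r)
P_E1 = {0: 0.4, 1: 0.7}                                          # keyed by s
P_G1 = {(0, 0): 0.001, (0, 1): 0.4, (1, 0): 0.2, (1, 1): 0.9}  # keyed by (h, e)


def joint(x):
    """Joint probability of a full assignment x (dict: name -> 0/1)."""
    def prob(p_one, value):
        return p_one if value == 1 else 1.0 - p_one
    return (prob(P_S1, x["S"]) * prob(P_R1, x["R"])
            * prob(P_H1[(x["S"], x["R"])], x["H"])
            * prob(P_E1[x["S"]], x["E"])
            * prob(P_G1[(x["H"], x["E"])], x["G"]))


def gibbs_estimate(query_var, evidence, num_samples, burn_in=1000, seed=0):
    """Estimate P(query_var = 1 | evidence) with Gibbs sampling."""
    rng = random.Random(seed)
    x = {name: rng.randint(0, 1) for name in VARIABLES}  # random start
    x.update(evidence)                                   # clamp evidence
    free = [name for name in VARIABLES if name not in evidence]
    count = 0
    for sweep in range(burn_in + num_samples):
        for name in free:
            # P(x_j = 1 | all others) is the joint with x_j = 1,
            # normalized over both values of x_j.
            x[name] = 1
            p_one = joint(x)
            x[name] = 0
            p_zero = joint(x)
            x[name] = 1 if rng.random() < p_one / (p_one + p_zero) else 0
        if sweep >= burn_in and x[query_var] == 1:
            count += 1
    return count / num_samples


if __name__ == "__main__":
    print(gibbs_estimate("G", {"E": 1}, 50_000))
```

Normalizing the full joint is wasteful for large networks, since most factors cancel; it is used here only to keep the sketch short.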

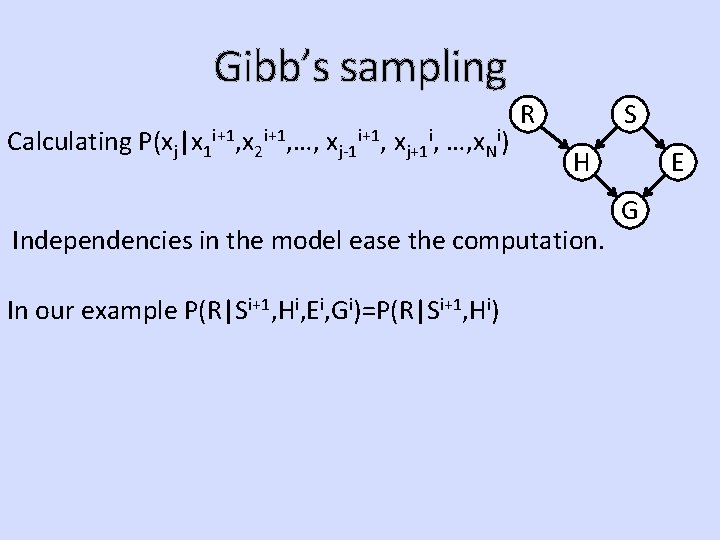

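Gibbs sampling

Calculating $P(x_j \mid x_1^{i+1}, x_2^{i+1}, \dots, x_{j-1}^{i+1}, x_{j+1}^{i}, \dots, x_N^{i})$

Independencies in the model ease the computation: given its parents, its children and its children's other parents, a variable is independent of the rest of the network. In our example, $P(R \mid S^{i+1}, H^{i}, E^{i}, G^{i}) = P(R \mid S^{i+1}, H^{i})$.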
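To see why E and G drop out, the conditional for R can be written out from the factorization of the joint. The derivation below is a sketch, and the numeric values assume the example's tables give P(X=1 | parents), with P(R=1)=0.99. Every factor that does not mention R appears in both the numerator and the denominator and cancels:

$$P(R \mid S, H, E, G) = \frac{P(S)\,P(R)\,P(H \mid S, R)\,P(E \mid S)\,P(G \mid H, E)}{\sum_{r'} P(S)\,P(r')\,P(H \mid S, r')\,P(E \mid S)\,P(G \mid H, E)} = \frac{P(R)\,P(H \mid S, R)}{\sum_{r'} P(r')\,P(H \mid S, r')}$$

For instance, with S=0 and H=0:

$$P(R=1 \mid S=0, H=0) = \frac{0.99 \cdot 0.5}{0.99 \cdot 0.5 + 0.01 \cdot 0.8} = \frac{0.495}{0.503} \approx 0.984$$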
