Advanced Condor mechanisms
CERN, Feb 14 2011
Condor Project, Computer Sciences Department, University of Wisconsin-Madison

A better title… “Condor Potpourri”
› Igor feedback
› “Could be useful to people, but not Monday”
› If not of interest, new topic in 1 minute
www.condorproject.org

Central Manager Failover
› Condor Central Manager has two services
› condor_collector
  • Now a list of collectors is supported
› condor_negotiator (matchmaker)
  • If it fails, an election process runs and another takes over
  • Contributed technology from Technion
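
A central-manager pair along these lines might be configured roughly as follows. This is a minimal sketch, not a complete template: the host names are hypothetical, the port is only an example value, and the full high-availability setup in the Condor manual sets further knobs (passing the port to the daemon, replicating negotiator state) that are left out here.

```
# Hypothetical host names; substitute your own central managers.
CENTRAL_MANAGER1 = cm1.example.org
CENTRAL_MANAGER2 = cm2.example.org

# All daemons in the pool report to, and query, both collectors.
COLLECTOR_HOST = $(CENTRAL_MANAGER1), $(CENTRAL_MANAGER2)

# On the two central managers only: run the HAD daemon and let it
# decide which machine runs the single active condor_negotiator.
HAD_PORT = 51450
HAD_LIST = $(CENTRAL_MANAGER1):$(HAD_PORT), $(CENTRAL_MANAGER2):$(HAD_PORT)
MASTER_NEGOTIATOR_CONTROLLER = HAD
DAEMON_LIST = MASTER, COLLECTOR, NEGOTIATOR, HAD
```

HAD_LIST should be identical on both machines, since the election runs among exactly the daemons listed there.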

Submit node robustness: Job progress continues if connection is interrupted
› Condor supports reestablishment of the connection between the submitting and executing machines.
  • If network outage between execute and submit machine
  • If submit machine restarts
› To take advantage of this feature, put the following line into your job’s submit description file:
  job_lease_duration = <N seconds>
  For example:
  job_lease_duration = 1200
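
In context, the lease setting is one line in an otherwise ordinary submit description file. The sketch below assumes a vanilla-universe job; the executable and file names are placeholders borrowed for illustration, not part of the original slides.

```
# Hypothetical vanilla-universe job; "cosmos" is a placeholder executable.
universe   = vanilla
executable = cosmos
output     = cosmos.out
error      = cosmos.err
log        = cosmos.log

# Let the job keep running for up to 20 minutes without contact
# from the submit machine before the execute side gives up.
job_lease_duration = 1200

queue
```

A longer lease tolerates longer outages, at the cost of keeping the execute slot busy for longer if the submit machine never comes back.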

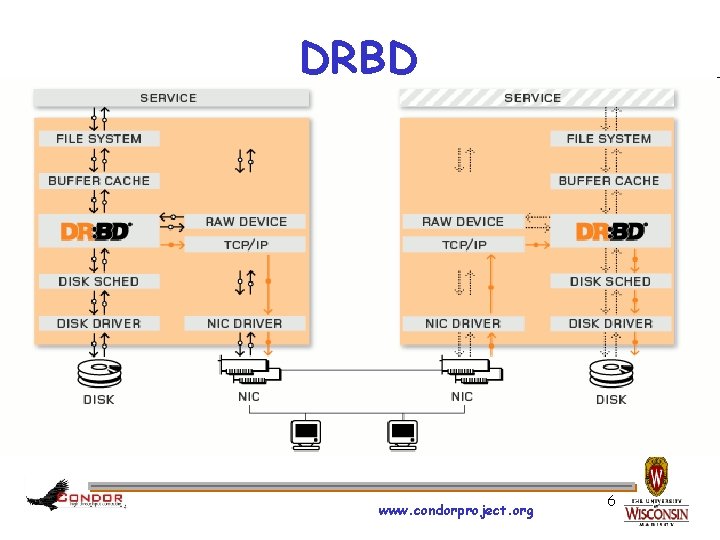

Submit node robustness: Job progress continues if submit machine fails
Automatic Schedd Failover
› Condor can support a submit machine “hot spare”
  • If your submit machine A is down for longer than N minutes, a second machine B can take over
  • Requires shared filesystem (or just DRBD*?) between machines A and B
*Distributed Replicated Block Device – www.drbd.org
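
A hot-spare schedd pair could be configured roughly as below. This is a sketch under assumptions: the shared path /share/spool and the timing values are illustrative, and the same settings are assumed to be applied on both machine A and machine B.

```
# Let condor_master start the schedd only while it holds the lock.
MASTER_HA_LIST = SCHEDD

# Job queue and lock live on the filesystem shared by A and B
# (hypothetical path).
SPOOL             = /share/spool
HA_LOCK_URL       = file:/share/spool
VALID_SPOOL_FILES = $(VALID_SPOOL_FILES) SCHEDD.lock

# Lock lifetime and polling period, in seconds (illustrative values).
HA_LOCK_HOLD_TIME = 300
HA_POLL_PERIOD    = 60

# Both machines present the same schedd name to users and tools.
SCHEDD_NAME = had-schedd@
```

The lock timing presumably plays the role of the "N minutes" above: machine B can only take over once A's lock has gone stale.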

DRBD

Interactive Debugging
› Why is my job still running?
› Is it stuck accessing a file? Is it in an infinite loop?
› condor_ssh_to_job
  • Interactive debugging in UNIX
  • Use ps, top, gdb, strace, lsof, …
  • Forward ports, X, transfer files, etc.

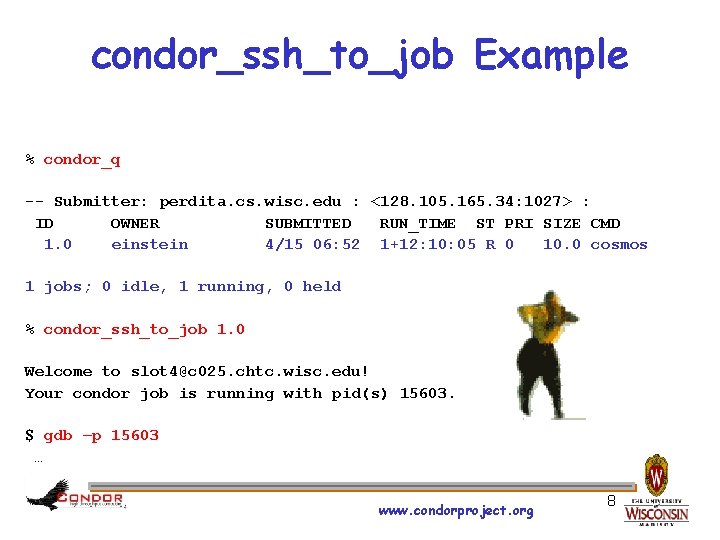

condor_ssh_to_job Example

    % condor_q
    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER       SUBMITTED     RUN_TIME ST PRI SIZE CMD
       1.0   einstein   4/15 06:52   1+12:10:05 R  0   10.0 cosmos
    1 jobs; 0 idle, 1 running, 0 held

    % condor_ssh_to_job 1.0
    Welcome to slot4@c025.chtc.wisc.edu!
    Your condor job is running with pid(s) 15603.
    $ gdb -p 15603
    …

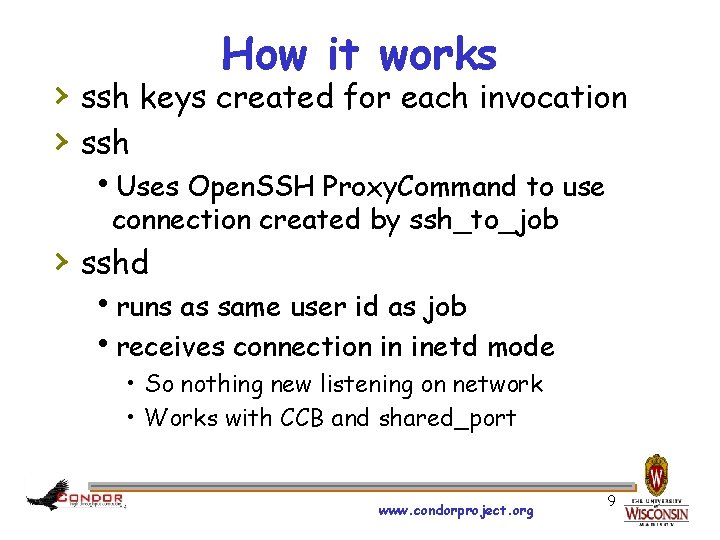

How it works
› ssh keys created for each invocation
› ssh
  • Uses OpenSSH ProxyCommand to use connection created by ssh_to_job
› sshd
  • runs as same user id as job
  • receives connection in inetd mode
    • So nothing new listening on network
    • Works with CCB and shared_port

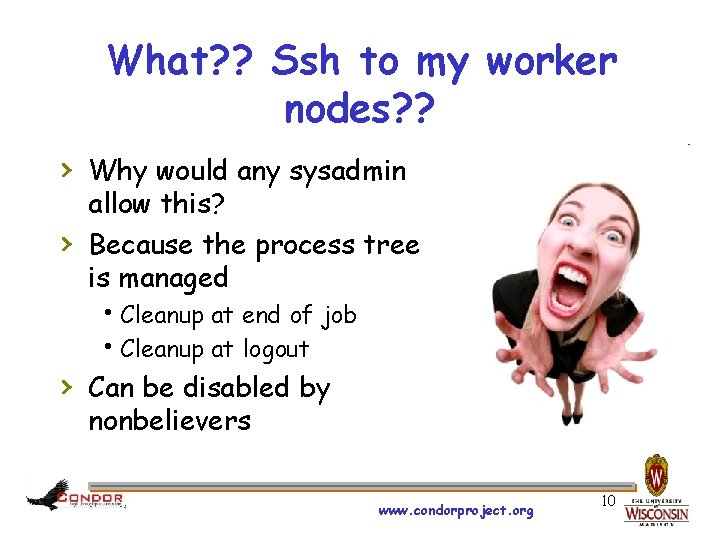

What?? Ssh to my worker nodes??
› Why would any sysadmin allow this?
› Because the process tree is managed
  • Cleanup at end of job
  • Cleanup at logout
› Can be disabled by nonbelievers
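
For sites that do want it disabled, one configuration line on the execute nodes should be enough. A minimal sketch, assuming the setting is placed in the execute node's local configuration:

```
# Execute-node configuration: refuse condor_ssh_to_job connections.
ENABLE_SSH_TO_JOB = False
```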

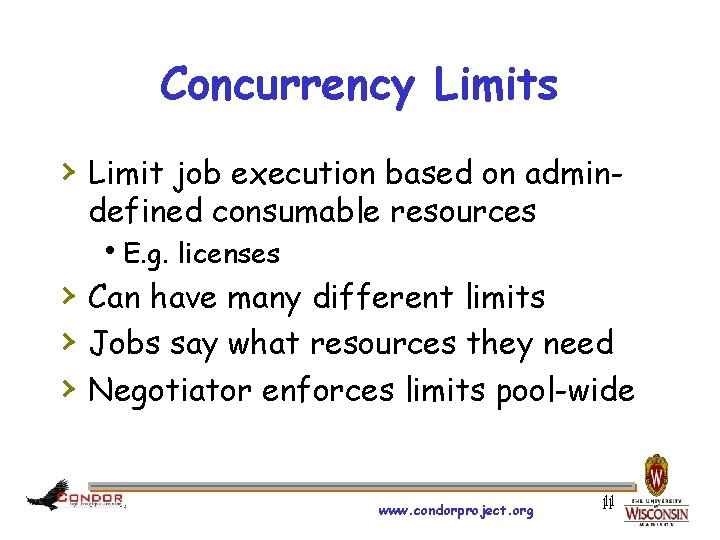

Concurrency Limits
› Limit job execution based on admin-defined consumable resources
  • E.g. licenses
› Can have many different limits
› Jobs say what resources they need
› Negotiator enforces limits pool-wide

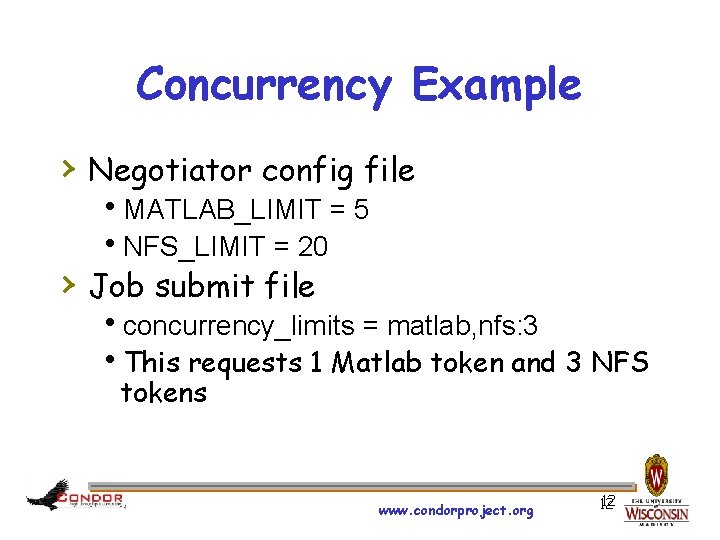

Concurrency Example
› Negotiator config file
  • MATLAB_LIMIT = 5
  • NFS_LIMIT = 20
› Job submit file
  • concurrency_limits = matlab, nfs:3
  • This requests 1 Matlab token and 3 NFS tokens
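
To see which limit binds, it helps to work the numbers through. The fragment below reuses the values from the slide and assumes every job in the pool declares the same concurrency_limits line; the arithmetic is in the comments.

```
# Negotiator configuration (values from the slide)
MATLAB_LIMIT = 5
NFS_LIMIT    = 20

# Job submit file: each running job holds 1 matlab token and 3 nfs tokens
concurrency_limits = matlab, nfs:3

# matlab tokens allow  5 / 1         = 5 jobs
# nfs tokens allow     floor(20 / 3) = 6 jobs
# So at most min(5, 6) = 5 such jobs run at once,
# holding 5 matlab tokens and 15 of the 20 nfs tokens.
```

Here the Matlab limit is the bottleneck; raising NFS_LIMIT would not let any more of these jobs start.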

Green Computing
› The startd has the ability to place a machine into a low power state (Standby, Hibernate, Soft-Off, etc.)
  • HIBERNATE, HIBERNATE_CHECK_INTERVAL
  • If all slots return non-zero, then the machine can be powered down via the condor_power hook
  • A final acked ClassAd is sent to the collector that contains wake-up information
› Machine ads in “Offline State”
  • Stored persistently to disk
  • Ad updated with “demand” information: if this machine was around, would it be matched?
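
A hibernation policy built from the two knobs named above might look like the sketch below. The idle threshold and the choice of suspend-to-RAM are illustrative assumptions, not recommendations from the talk.

```
# Every 5 minutes, evaluate HIBERNATE for each slot.
HIBERNATE_CHECK_INTERVAL = 300

# "NONE" keeps the machine awake; "RAM" asks for suspend-to-RAM.
# The two-hour keyboard-idle threshold is an arbitrary example.
HIBERNATE = ifThenElse( (State == "Unclaimed") && (KeyboardIdle > 2 * 3600), "RAM", "NONE" )
```

The machine only changes power state when every slot's expression asks for it, so a single busy slot keeps the whole machine up.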

Now what?

condor_rooster
› Periodically wake up machines based on ClassAd expression (Rooster_UnHibernate)
› Throttling controls
› Hook callouts make for interesting possibilities…
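
A condor_rooster setup could be sketched as follows. The interval and the throttle value are illustrative, and running the daemon on the central manager is an assumption about deployment rather than something the slide states.

```
# Run condor_rooster alongside the other daemons on this machine.
DAEMON_LIST = $(DAEMON_LIST) ROOSTER

# How often, in seconds, to look for offline machines worth waking.
ROOSTER_INTERVAL = 300

# Wake a machine when its offline ad says it is wanted.
ROOSTER_UNHIBERNATE = Offline && Unhibernate

# Throttle: wake at most 10 machines per cycle (0 means no limit).
ROOSTER_MAX_UNHIBERNATE = 10
```

The wake-up action itself is a configurable command (ROOSTER_WAKEUP_CMD), which is presumably where the hook callouts mentioned above come in.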

Dynamic Slot Partitioning
› Divide slots into chunks sized for matched jobs
› Readvertise remaining resources
› Partitionable resources are cpus, memory, and disk
› See Matt Farrellee’s talk
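
The two halves of this mechanism, a partitionable slot on the execute node and resource requests in the job, can be sketched as below. The request sizes are illustrative; memory is assumed to be in megabytes and disk in kilobytes.

```
# Execute-node configuration: one partitionable slot owning the whole machine.
NUM_SLOTS                 = 1
NUM_SLOTS_TYPE_1          = 1
SLOT_TYPE_1               = cpus=100%, memory=100%, disk=100%
SLOT_TYPE_1_PARTITIONABLE = True

# Job submit file: the chunk carved out for this job.
request_cpus   = 2
request_memory = 2048
request_disk   = 1000000
```

After a match, the carved-out chunk becomes a dynamic slot for the job, and the partitionable slot readvertises whatever cpus, memory, and disk remain.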

Dynamic Partitioning Caveats
› Cannot preempt original slot or group of sub-slots
  • Potential starvation of jobs with large resource requirements
› Partitioning happens once per slot each negotiation cycle
  • Scheduling of large slots may be slow

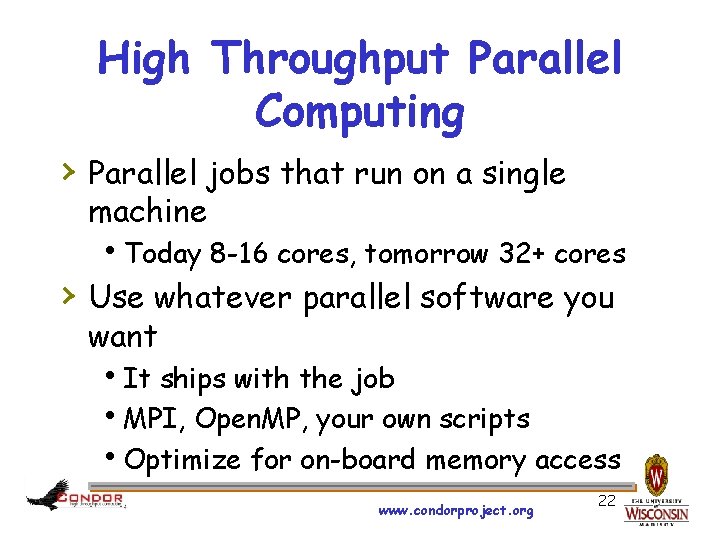

High Throughput Parallel Computing
› Parallel jobs that run on a single machine
  • Today 8-16 cores, tomorrow 32+ cores
› Use whatever parallel software you want
  • It ships with the job
  • MPI, OpenMP, your own scripts
  • Optimize for on-board memory access

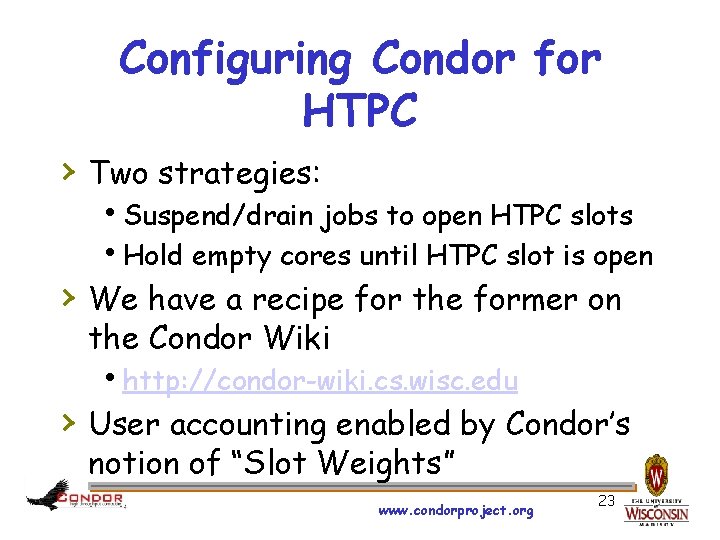

Configuring Condor for HTPC
› Two strategies:
  • Suspend/drain jobs to open HTPC slots
  • Hold empty cores until HTPC slot is open
› We have a recipe for the former on the Condor Wiki
  • http://condor-wiki.cs.wisc.edu
› User accounting enabled by Condor’s notion of “Slot Weights”

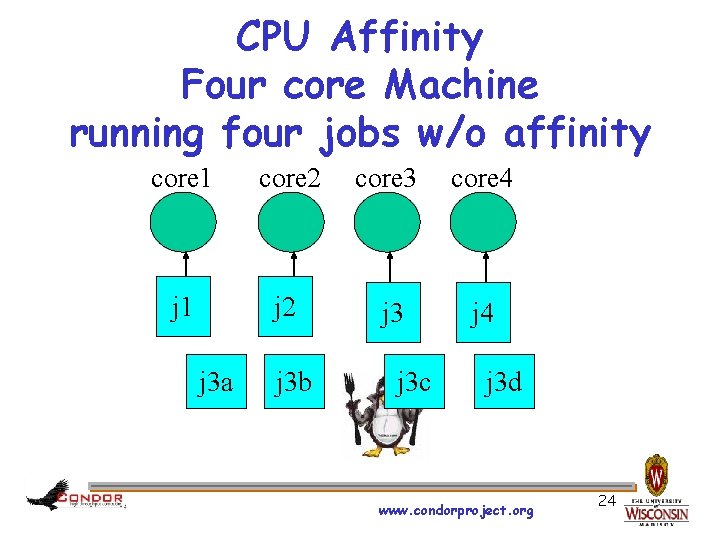

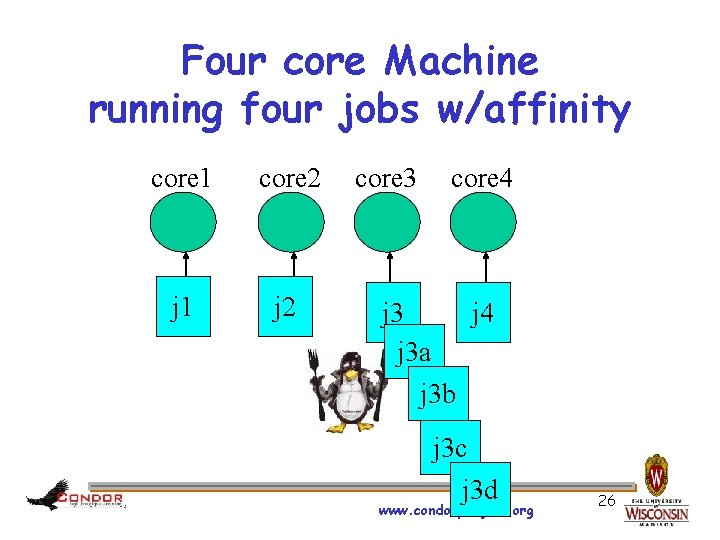

CPU Affinity
Four core machine running four jobs w/o affinity
[Diagram: core 1, core 2, core 3, core 4; jobs j1, j2, j3, j4; j3a, j3b, j3c, j3d]

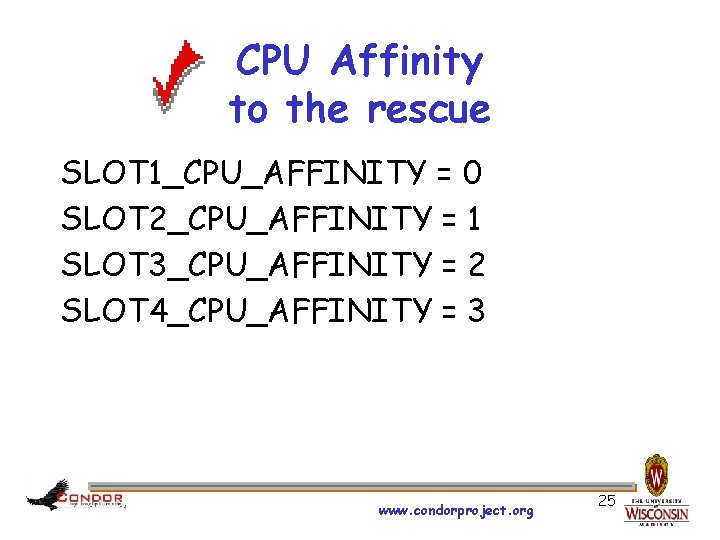

CPU Affinity to the rescue
SLOT1_CPU_AFFINITY = 0
SLOT2_CPU_AFFINITY = 1
SLOT3_CPU_AFFINITY = 2
SLOT4_CPU_AFFINITY = 3
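
The per-slot settings are only honored when affinity enforcement is switched on. The variant below is a sketch for a hypothetical two-slot configuration of the same four-core machine, giving each slot a pair of cores.

```
# Per-slot affinity is ignored unless enforcement is enabled.
ENFORCE_CPU_AFFINITY = True

# Hypothetical layout: two slots, two cores each.
SLOT1_CPU_AFFINITY = 0,1
SLOT2_CPU_AFFINITY = 2,3
```

A job that spawns several processes or threads then stays within its own slot's cores instead of competing with the neighbouring slot.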

Four core machine running four jobs w/ affinity
[Diagram: core 1, core 2, core 3, core 4; jobs j1, j2, j3, j4; j3a, j3b, j3c, j3d]

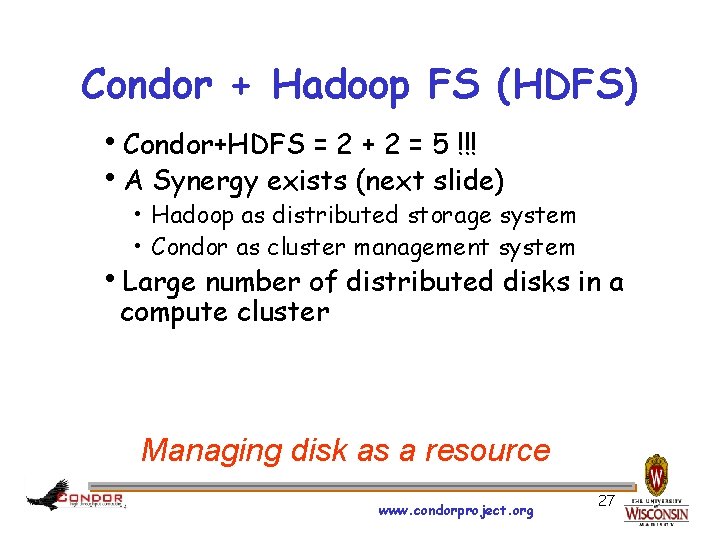

Condor + Hadoop FS (HDFS)
› Condor+HDFS = 2 + 2 = 5 !!!
› A synergy exists (next slide)
  • Hadoop as distributed storage system
  • Condor as cluster management system
› Large number of distributed disks in a compute cluster
› Managing disk as a resource

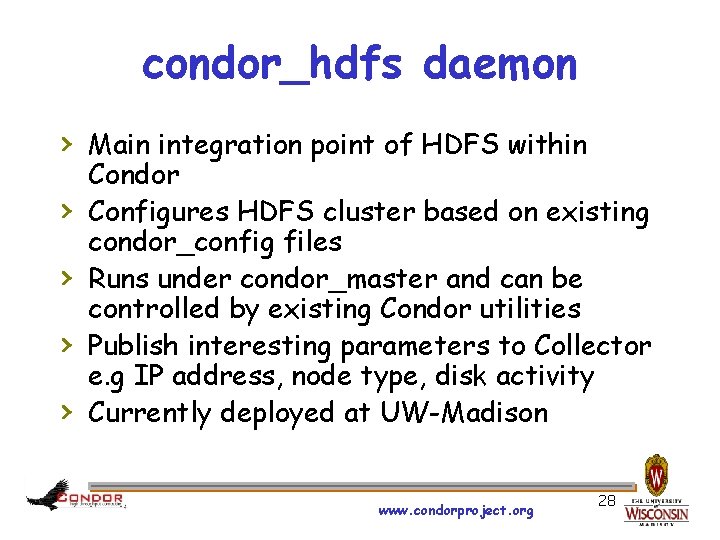

condor_hdfs daemon
› Main integration point of HDFS within Condor
› Configures HDFS cluster based on existing condor_config files
› Runs under condor_master and can be controlled by existing Condor utilities
› Publish interesting parameters to Collector, e.g. IP address, node type, disk activity
› Currently deployed at UW-Madison

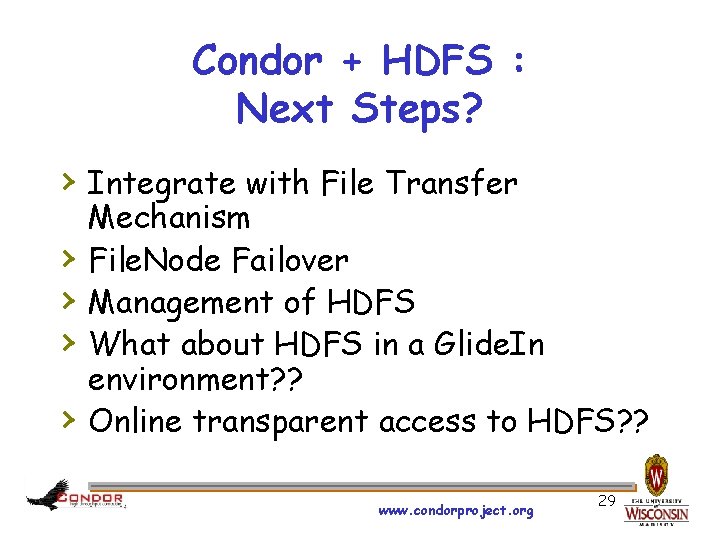

Condor + HDFS: Next Steps?
› Integrate with File Transfer Mechanism
› FileNode Failover
› Management of HDFS
› What about HDFS in a GlideIn environment??
› Online transparent access to HDFS??

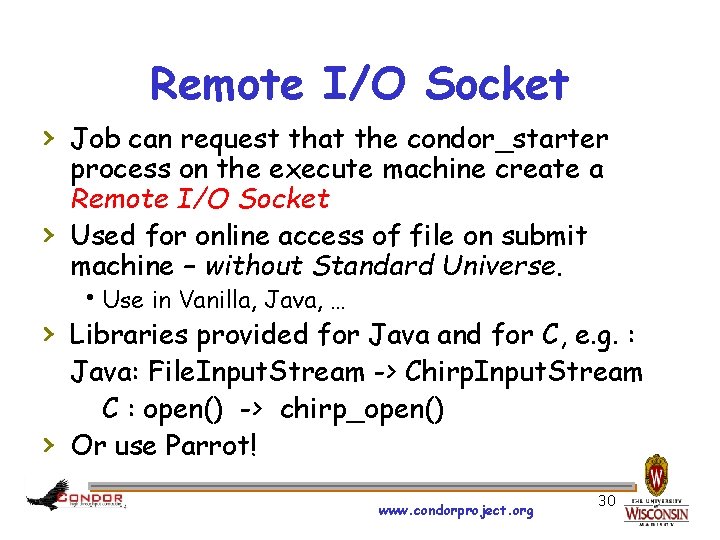

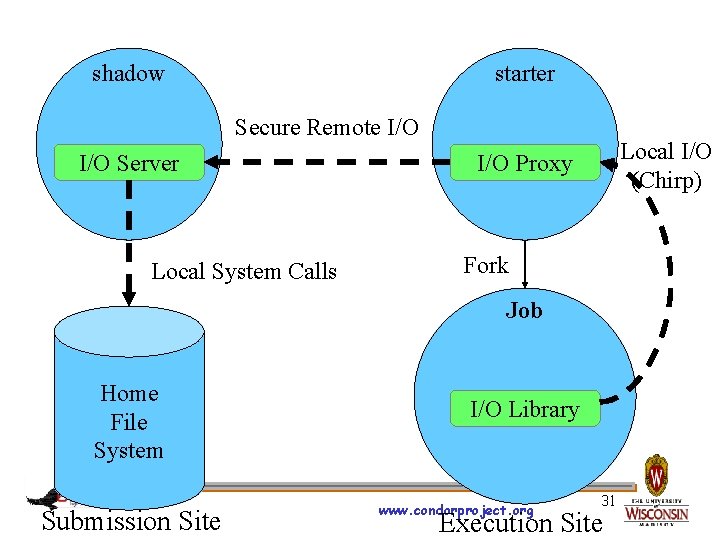

Remote I/O Socket
› Job can request that the condor_starter process on the execute machine create a Remote I/O Socket
› Used for online access of file on submit machine – without Standard Universe.
  • Use in Vanilla, Java, …
› Libraries provided for Java and for C, e.g.:
  Java: FileInputStream -> ChirpInputStream
  C: open() -> chirp_open()
› Or use Parrot!
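
A job asks for the Remote I/O Socket through an attribute in its submit description file. The sketch below assumes a vanilla-universe job; the executable name is a placeholder.

```
# Hypothetical vanilla job that asks the starter for an I/O proxy (Chirp).
universe     = vanilla
executable   = analyze.sh
+WantIOProxy = True
queue
```

Inside the running job, the condor_chirp command-line tool (for example its fetch and put subcommands) offers a way to read and write files on the submit machine without linking against the C or Java libraries.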

[Diagram: Submission Site (shadow with I/O Server, Home File System, Local System Calls) linked by Secure Remote I/O to Execution Site (starter with I/O Proxy, which forks the Job; Job with I/O Library using Local I/O (Chirp))]

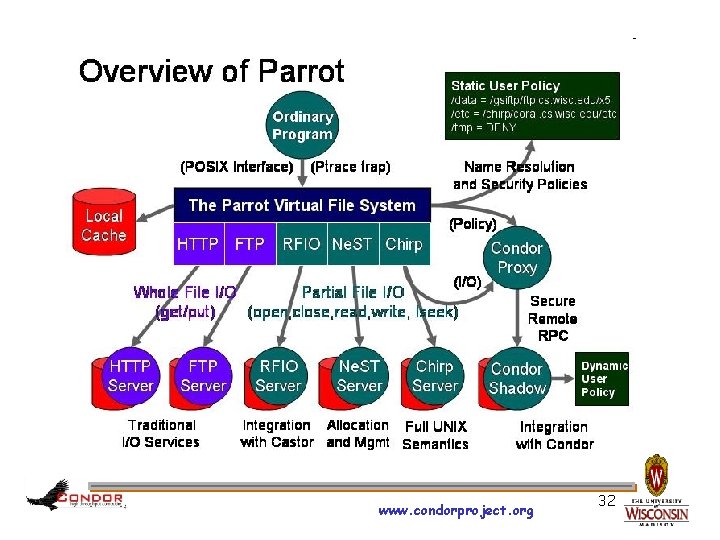

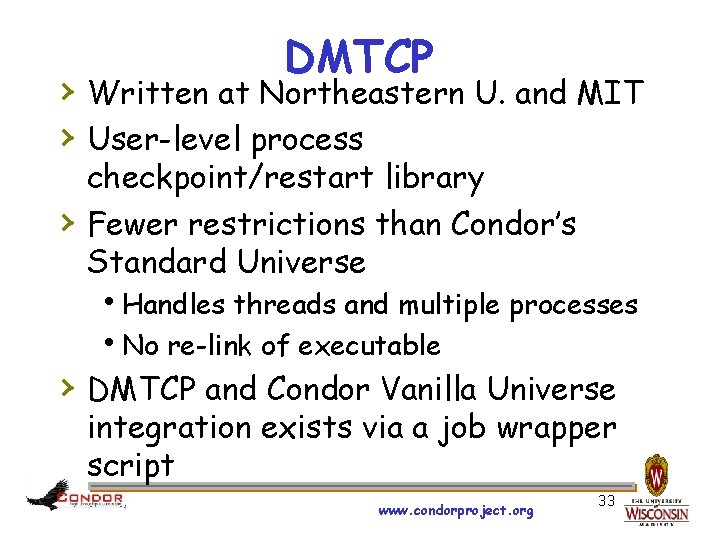

DMTCP
› Written at Northeastern U. and MIT
› User-level process checkpoint/restart library
› Fewer restrictions than Condor’s Standard Universe
  • Handles threads and multiple processes
  • No re-link of executable
› DMTCP and Condor Vanilla Universe integration exists via a job wrapper script

Questions? Thank You!
