A Statistical Learning Approach to Diagnosing eBay’s Site

Mike Chen, Alice Zheng, Jim Lloyd, Michael Jordan, Eric Brewer
mikechen@cs.berkeley.edu
Jan 12, 2004, Path-based Diagnosis

Motivation
§ Fast failure detection and diagnosis are critical to high availability
  – But the exact root cause may not be required for many recovery techniques
§ Many potential causes of failures
  – Software bugs, hardware, configuration, network, database, etc.
  – Manual diagnosis is slow and inconsistent
§ Statistical approaches are ideal
  – They examine many possible causes of failures simultaneously
  – They are robust to noise

Challenges
§ Lots of (noisy) data
§ Near real-time detection and diagnosis
§ Multiple independent failures
§ Root cause might not be captured in logs

Talk Outline
§ Introduction
§ eBay’s infrastructure
§ 3 statistical approaches
§ Early results

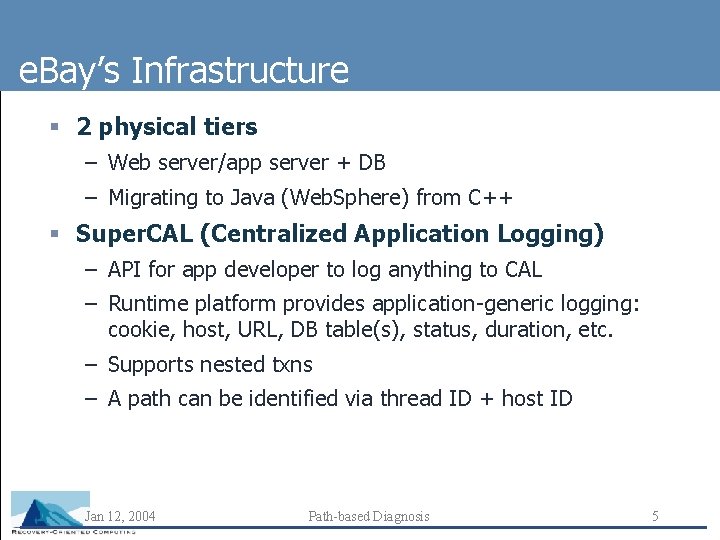

eBay’s Infrastructure
§ 2 physical tiers
  – Web server/app server + DB
  – Migrating to Java (WebSphere) from C++
§ SuperCAL (Centralized Application Logging)
  – API for app developers to log anything to CAL
  – Runtime platform provides application-generic logging: cookie, host, URL, DB table(s), status, duration, etc.
  – Supports nested txns
  – A path can be identified via thread ID + host ID
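As a rough illustration of the last point, the sketch below groups log records into request paths keyed by (host ID, thread ID). The `CalRecord` fields and the `group_into_paths` helper are hypothetical, since the actual CAL record format is not described here. It also assumes each thread serves a single request within the window being grouped; a reused thread would otherwise merge two requests into one path.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CalRecord:
    # Hypothetical record layout; the real CAL schema is not given here.
    host_id: str
    thread_id: int
    timestamp: float
    txn_type: str
    txn_name: str
    status: str


def group_into_paths(records):
    """Group log records into request paths keyed by (host ID, thread ID).

    Assumes a thread handles one request within the grouped window.
    """
    paths = defaultdict(list)
    for rec in records:
        paths[(rec.host_id, rec.thread_id)].append(rec)
    # Sort each path by time so nested txns appear in execution order.
    for recs in paths.values():
        recs.sort(key=lambda r: r.timestamp)
    return dict(paths)
```

Keying on the pair rather than the thread ID alone matters because thread IDs are only unique within one host.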

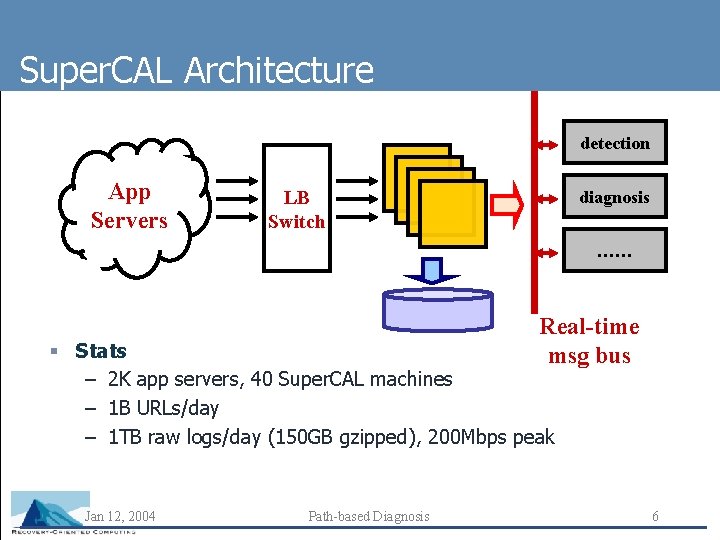

SuperCAL Architecture
[Figure: architecture diagram with App Servers, LB Switch, real-time msg bus, detection, and diagnosis components]
§ Stats
  – 2K app servers, 40 SuperCAL machines
  – 1B URLs/day
  – 1 TB raw logs/day (150 GB gzipped), 200 Mbps peak
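A quick back-of-the-envelope check of these volumes, assuming decimal units (1 TB = 10^12 bytes, 1 GB = 10^9 bytes) and a uniform rate over the day:

```python
SECONDS_PER_DAY = 24 * 60 * 60

urls_per_day = 1e9             # "1 B URLs/day"
raw_bytes_per_day = 1e12       # "1 TB raw logs/day" (decimal TB assumed)
gzipped_bytes_per_day = 150e9  # "150 GB gzipped" (decimal GB assumed)

avg_urls_per_sec = urls_per_day / SECONDS_PER_DAY
avg_raw_mbps = raw_bytes_per_day * 8 / SECONDS_PER_DAY / 1e6
raw_bytes_per_url = raw_bytes_per_day / urls_per_day
compression_ratio = raw_bytes_per_day / gzipped_bytes_per_day

print(f"{avg_urls_per_sec:,.0f} URLs/s on average")
print(f"{avg_raw_mbps:.1f} Mbps average raw log rate (200 Mbps peak)")
print(f"{raw_bytes_per_url:.0f} bytes of raw log per URL")
print(f"{compression_ratio:.1f}x gzip compression")
```

This works out to roughly 11.6K URLs/s and about 93 Mbps of raw log on average, so the 200 Mbps peak is a bit over twice the mean; it is also about 1 KB of raw log per URL and roughly 6.7× gzip compression.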

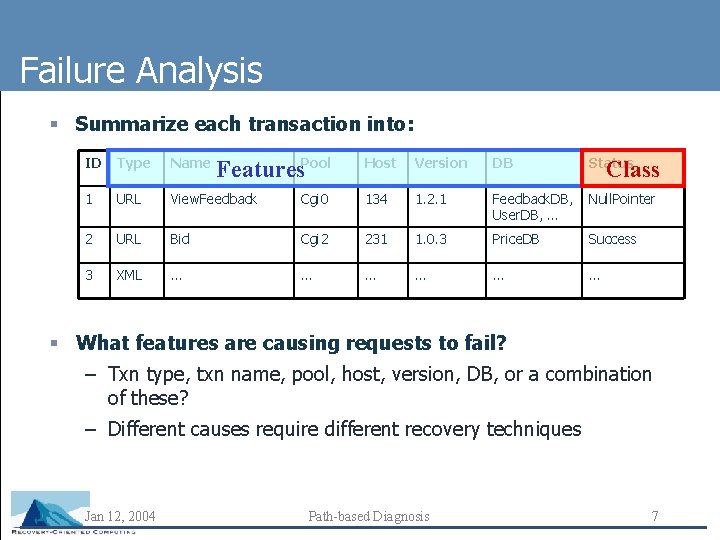

Failure Analysis
§ Summarize each transaction into features (Type through DB) and a class (Status):

| ID | Type | Name | Pool | Host | Version | DB | Status (class) |
|----|------|------|------|------|---------|----|----------------|
| 1 | URL | ViewFeedback | Cgi0 | 134 | 1.2.1 | FeedbackDB, UserDB, … | NullPointer |
| 2 | URL | Bid | Cgi2 | 231 | 1.0.3 | PriceDB | Success |
| 3 | XML | … | … | … | … | … | … |

§ What features are causing requests to fail?
  – Txn type, txn name, pool, host, version, DB, or a combination of these?
  – Different causes require different recovery techniques
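To make the summarization step concrete, here is a minimal sketch of one summarized transaction as a record with a feature view and a binary class label. The `TxnSummary` class, its field types, and the collapse of every non-`Success` status into `Failed` are assumptions for illustration, not CAL’s schema.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class TxnSummary:
    # Columns from the slide; the field types are assumptions.
    txn_id: int
    txn_type: str          # e.g. "URL", "XML"
    name: str              # e.g. "ViewFeedback"
    pool: str              # e.g. "Cgi0"
    host: str              # e.g. "134"
    version: str           # e.g. "1.2.1"
    dbs: FrozenSet[str]    # one txn may touch several DBs
    status: str            # "Success" or an error class such as "NullPointer"

    def features(self):
        """Candidate-cause features: every column except the ID and the class."""
        return {
            "Type": self.txn_type,
            "Name": self.name,
            "Pool": self.pool,
            "Host": self.host,
            "Version": self.version,
            "DB": tuple(sorted(self.dbs)),
        }

    def label(self):
        """Binary class label (assumed): any non-Success status counts as a failure."""
        return "Success" if self.status == "Success" else "Failed"
```

Keeping the ID out of `features()` matters: a unique ID would let any learner “explain” every failure perfectly and uselessly.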

3 Approaches
§ Machine learning
  – Decision trees
  – MinEntropy: eBay’s greedy variant of decision trees
§ Data mining
  – Association rules

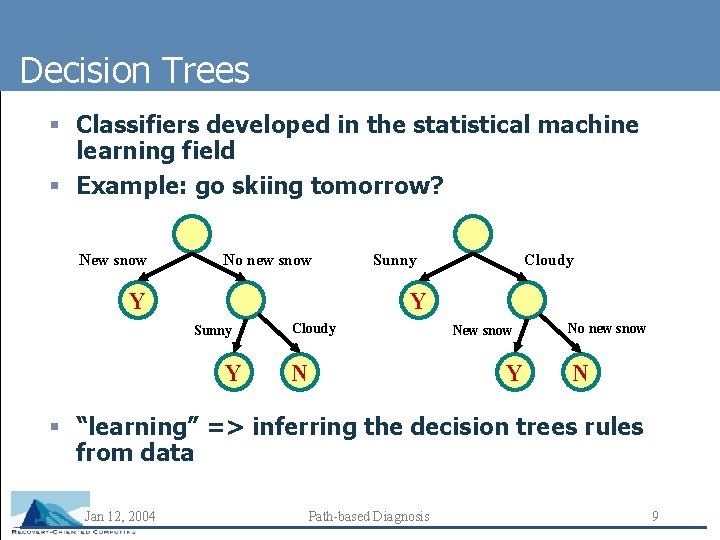

Decision Trees
§ Classifiers developed in the statistical machine learning field
§ Example: go skiing tomorrow?
    New snow → Y
    No new snow
      Sunny → Y
      Cloudy → N
§ “Learning” means inferring the decision tree’s rules from data
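A minimal sketch of how such a tree classifies a new example, encoding the skiing tree as read from the slide (new snow: go; otherwise go only if sunny). The nested-dict layout, the attribute names `snow` and `sky`, and `classify` are hypothetical.

```python
# Hypothetical encoding of the "go skiing tomorrow?" tree: internal nodes test
# one attribute, leaves hold the predicted class ("Y" = go skiing, "N" = don't).
SKI_TREE = {
    "attribute": "snow",
    "branches": {
        "new snow": "Y",
        "no new snow": {
            "attribute": "sky",
            "branches": {"sunny": "Y", "cloudy": "N"},
        },
    },
}


def classify(tree, example):
    """Walk from the root to a leaf, taking the branch that matches `example`."""
    node = tree
    while isinstance(node, dict):
        node = node["branches"][example[node["attribute"]]]
    return node


print(classify(SKI_TREE, {"snow": "no new snow", "sky": "cloudy"}))  # N
```

Learning reverses this: given labeled examples, pick which attribute to test at each node.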

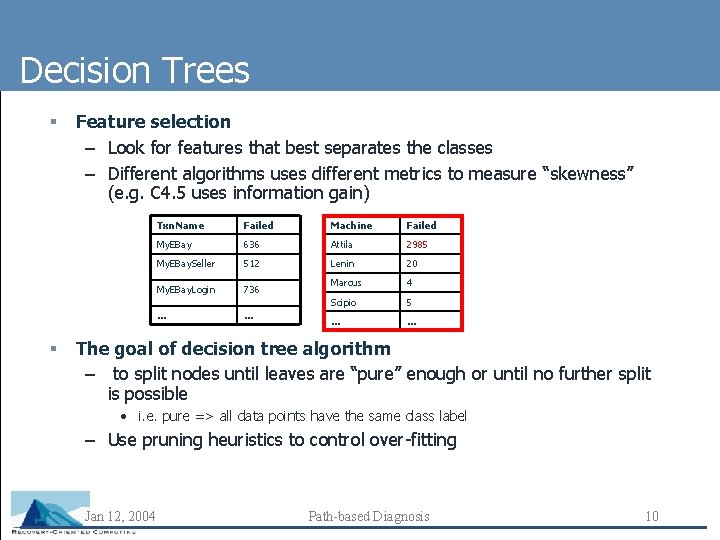

Decision Trees
§ Feature selection
  – Look for features that best separate the classes
  – Different algorithms use different metrics to measure “skewness” (e.g., C4.5 uses information gain)

| TxnName | Failed |
|---------|--------|
| MyEBay | 636 |
| MyEBaySeller | 512 |
| MyEBayLogin | 736 |
| … | … |

| Machine | Failed |
|---------|--------|
| Attila | 2985 |
| Lenin | 20 |
| Marcus | 4 |
| Scipio | 5 |
| … | … |

§ The goal of a decision tree algorithm
  – Split nodes until leaves are “pure” enough or until no further split is possible
    • Pure means all data points have the same class label
  – Use pruning heuristics to control over-fitting
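The sketch below shows plain information gain (ID3-style) used to pick a split, plus the purity test that ends splitting. It is not C4.5 itself, which normalizes information gain into a gain ratio and adds pruning; the helper names are made up for illustration.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy, in bits, of a list of class labels."""
    n = len(labels)
    return sum(-(c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(rows, labels, feature):
    """Entropy reduction from splitting `rows` (dicts) on `feature`."""
    n = len(rows)
    remainder = 0.0
    for value in {r[feature] for r in rows}:
        subset = [lab for r, lab in zip(rows, labels) if r[feature] == value]
        remainder += len(subset) / n * entropy(subset)
    return entropy(labels) - remainder


def best_split(rows, labels, features):
    """Feature with the highest information gain (ID3-style choice)."""
    return max(features, key=lambda f: information_gain(rows, labels, f))


def is_pure(labels):
    """Stop splitting when every data point in the node has the same label."""
    return len(set(labels)) <= 1
```

For four toy txns where both `icgi2` txns fail and both `icgi1` txns succeed, with each name appearing once in each pool, splitting on `pool` gains 1 bit and splitting on `name` gains 0, so `best_split` picks `pool`.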

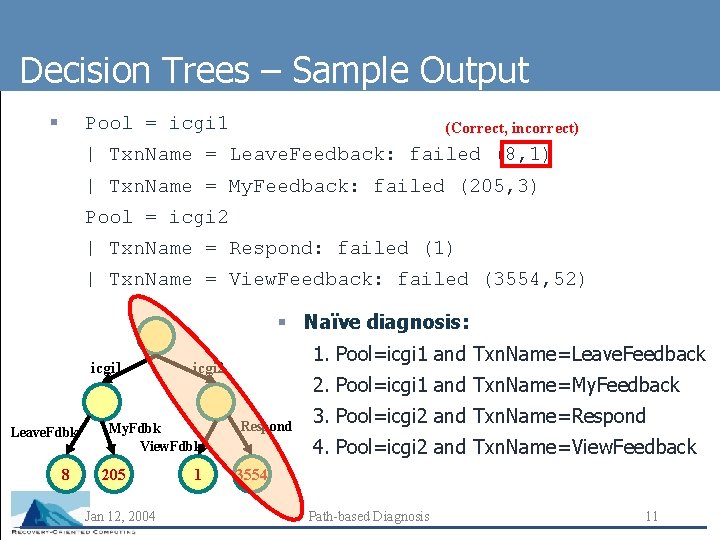

Decision Trees – Sample Output
§ Sample output, with (correct, incorrect) counts at each leaf:
    Pool = icgi1
    | TxnName = LeaveFeedback: failed (8, 1)
    | TxnName = MyFeedback: failed (205, 3)
    Pool = icgi2
    | TxnName = Respond: failed (1)
    | TxnName = ViewFeedback: failed (3554, 52)
§ Naïve diagnosis:
    1. Pool=icgi1 and TxnName=LeaveFeedback
    2. Pool=icgi1 and TxnName=MyFeedback
    3. Pool=icgi2 and TxnName=Respond
    4. Pool=icgi2 and TxnName=ViewFeedback
[Figure: the same tree drawn graphically: icgi1 → LeaveFdbk (8), MyFdbk (205); icgi2 → Respond (1), ViewFdbk (3554)]
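The naïve diagnosis can be read mechanically off the tree: every root-to-leaf path ending in a `failed` leaf becomes one candidate cause. A minimal sketch, assuming the hypothetical nested-list encoding below and reading `failed (1)` as (1, 0):

```python
# Hypothetical encoding of the sample tree as nested (condition, child) pairs.
# A child is either a list of further branches or a leaf tuple
# (class, correct, incorrect).
SAMPLE_TREE = [
    ("Pool=icgi1", [
        ("TxnName=LeaveFeedback", ("failed", 8, 1)),
        ("TxnName=MyFeedback", ("failed", 205, 3)),
    ]),
    ("Pool=icgi2", [
        ("TxnName=Respond", ("failed", 1, 0)),
        ("TxnName=ViewFeedback", ("failed", 3554, 52)),
    ]),
]


def failing_paths(branches, prefix=()):
    """Naive diagnosis: one candidate per root-to-leaf path ending in 'failed'.

    Returns (conditions, failed_count) pairs, where failed_count is the
    number of failed txns the leaf classifies correctly.
    """
    causes = []
    for condition, child in branches:
        path = prefix + (condition,)
        if isinstance(child, list):       # internal node: keep descending
            causes.extend(failing_paths(child, path))
        elif child[0] == "failed":        # leaf that predicts failure
            causes.append((" and ".join(path), child[1]))
    return causes


for i, (cause, count) in enumerate(failing_paths(SAMPLE_TREE), start=1):
    print(f"{i}. {cause} ({count} failed txns)")
```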

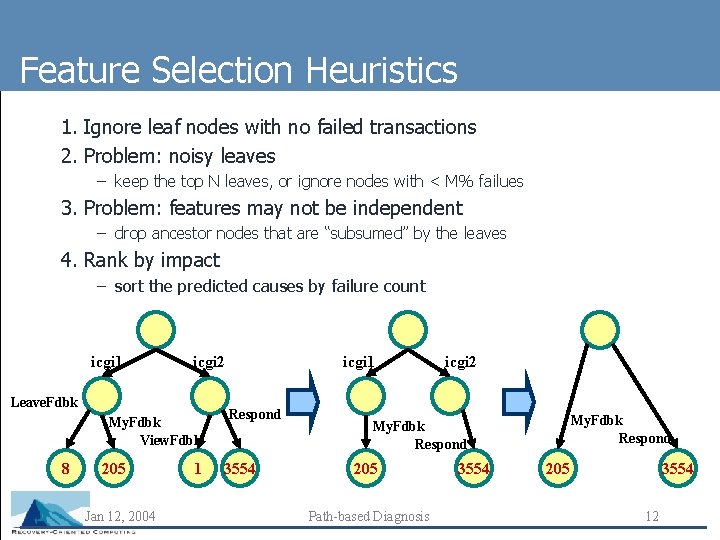

Feature Selection Heuristics
1. Ignore leaf nodes with no failed transactions
2. Problem: noisy leaves
   – Keep the top N leaves, or ignore nodes with < M% failures
3. Problem: features may not be independent
   – Drop ancestor nodes that are “subsumed” by the leaves
4. Rank by impact
   – Sort the predicted causes by failure count
[Figure: the sample tree (icgi1: LeaveFdbk 8, MyFdbk 205; icgi2: Respond 1, ViewFdbk 3554) reduced step by step by these heuristics]
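A minimal sketch of the four heuristics applied to candidate causes, each a set of `feature=value` conditions plus its failure count. The `select_causes` helper is hypothetical. It implements only the top-N variant of the noise filter, since the base of the M% threshold is not stated, and it reads heuristic 3 as “drop any node whose conditions are a strict subset of another surviving node’s.” It applies the steps in the order 1, 3, 4, 2 so that the top-N cut happens after ranking.

```python
def select_causes(candidates, top_n=None):
    """Apply the feature selection heuristics to candidate causes.

    candidates: (conditions, failed_count) pairs, where conditions is a
    frozenset of "feature=value" strings for one tree node (leaf or ancestor).
    """
    # 1. Ignore nodes with no failed transactions.
    kept = [(c, n) for c, n in candidates if n > 0]
    # 3. Drop ancestor nodes: their conditions are a strict subset of
    #    another surviving node's conditions.
    kept = [(c, n) for c, n in kept if not any(c < other for other, _ in kept)]
    # 4. Rank by impact: most failures first.
    kept.sort(key=lambda cn: cn[1], reverse=True)
    # 2. Noise filter (top-N variant only): keep the N largest causes.
    return kept if top_n is None else kept[:top_n]
```

Fed the four leaves of the sample tree plus their two `Pool` ancestors, this drops the ancestors and, with `top_n=2`, keeps the ViewFeedback (3554) and MyFeedback (205) leaves.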

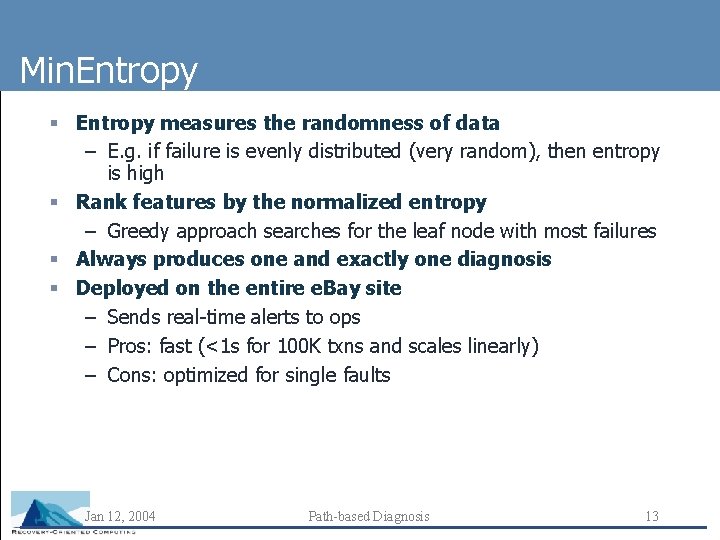

MinEntropy
§ Entropy measures the randomness of data
  – E.g., if failures are evenly distributed (very random), entropy is high
§ Rank features by their normalized entropy
  – A greedy search looks for the leaf node with the most failures
§ Always produces exactly one diagnosis
§ Deployed on the entire eBay site
  – Sends real-time alerts to ops
  – Pros: fast (<1 s for 100K txns, and scales linearly)
  – Cons: optimized for single faults
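One way the greedy search could look, as a sketch under stated assumptions: normalized entropy is taken as the entropy of the failure distribution over a feature’s values divided by log2 of the number of values in scope; at each step the lowest-entropy feature is fixed to its most-failing value; and refinement stops once the best remaining feature exceeds a hypothetical `max_entropy` threshold. The names and the stopping rule are assumptions, not eBay’s deployed code.

```python
from collections import Counter
from math import log2


def normalized_entropy(counts, num_values):
    """Entropy of a failure distribution, divided by log2(num_values) to lie in [0, 1]."""
    total = sum(counts.values())
    if total == 0 or num_values <= 1:
        return 0.0
    h = sum(-(c / total) * log2(c / total) for c in counts.values() if c > 0)
    return h / log2(num_values)


def min_entropy_diagnosis(txns, features, max_entropy=0.5):
    """Greedy MinEntropy-style search over txns (dicts with a boolean 'failed').

    Returns a single diagnosis as a list of (feature, value) conditions.
    max_entropy is a hypothetical stopping threshold.
    """
    diagnosis, remaining, scope = [], list(features), list(txns)
    while remaining:
        failed = [t for t in scope if t["failed"]]
        if not failed:
            break
        scores = {}
        for f in remaining:
            values_in_scope = {t[f] for t in scope}
            if len(values_in_scope) > 1:  # a constant feature cannot discriminate
                counts = Counter(t[f] for t in failed)
                scores[f] = normalized_entropy(counts, len(values_in_scope))
        if not scores:
            break
        best = min(scores, key=scores.get)
        if diagnosis and scores[best] > max_entropy:
            break  # failures are spread out: stop refining
        value, _ = Counter(t[best] for t in failed).most_common(1)[0]
        diagnosis.append((best, value))
        remaining.remove(best)
        scope = [t for t in scope if t[best] == value]
    return diagnosis
```

Because each step narrows the scope to one value, the search follows a single root-to-leaf path, which is why it yields one diagnosis and struggles with concurrent faults.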

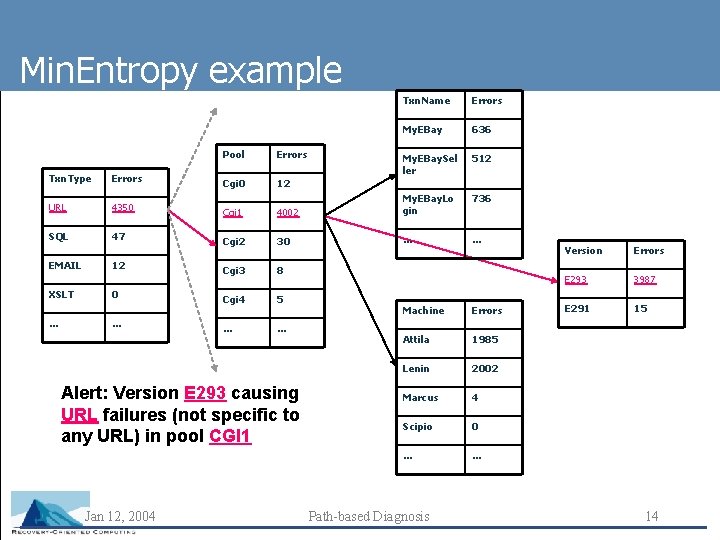

MinEntropy Example
§ Errors by feature value:
  – Pool: Cgi0 12, Cgi1 4002, Cgi2 30, Cgi3 8, Cgi4 5, …
  – TxnType: URL 4350, SQL 47, EMAIL 12, XSLT 0, …
  – TxnName: MyEBay 636, MyEBaySeller 512, MyEBayLogin 736, …
  – Machine: Attila 1985, Lenin 2002, Marcus 4, Scipio 0, …
  – Version: E293 3987, E291 15
§ Alert: Version E293 causing URL failures (not specific to any URL) in pool CGI1
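To see how a ranking plays out on these numbers, the sketch below computes a normalized entropy for each feature: the entropy of the listed error counts divided by log2 of the number of listed values. That normalization is an assumption, and rows elided with “…” are omitted, so these are partial distributions.

```python
from math import log2

# Error counts as listed on the slide; rows elided with "…" are left out.
ERRORS = {
    "Pool": {"Cgi0": 12, "Cgi1": 4002, "Cgi2": 30, "Cgi3": 8, "Cgi4": 5},
    "TxnType": {"URL": 4350, "SQL": 47, "EMAIL": 12, "XSLT": 0},
    "TxnName": {"MyEBay": 636, "MyEBaySeller": 512, "MyEBayLogin": 736},
    "Machine": {"Attila": 1985, "Lenin": 2002, "Marcus": 4, "Scipio": 0},
    "Version": {"E293": 3987, "E291": 15},
}


def normalized_entropy(counts):
    """Entropy (bits) of the error distribution / log2(#listed values); assumed normalization."""
    if len(counts) <= 1:
        return 0.0
    total = sum(counts.values())
    h = sum(-(c / total) * log2(c / total) for c in counts.values() if c > 0)
    return h / log2(len(counts))


ranking = sorted(ERRORS, key=lambda f: normalized_entropy(ERRORS[f]))
for feature in ranking:
    print(f"{feature:8s} {normalized_entropy(ERRORS[feature]):.3f}")
```

Under this normalization Version ranks first, followed by Pool and TxnType, while Machine and TxnName are far more spread out; the three lowest-entropy features are exactly the ones named in the alert.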

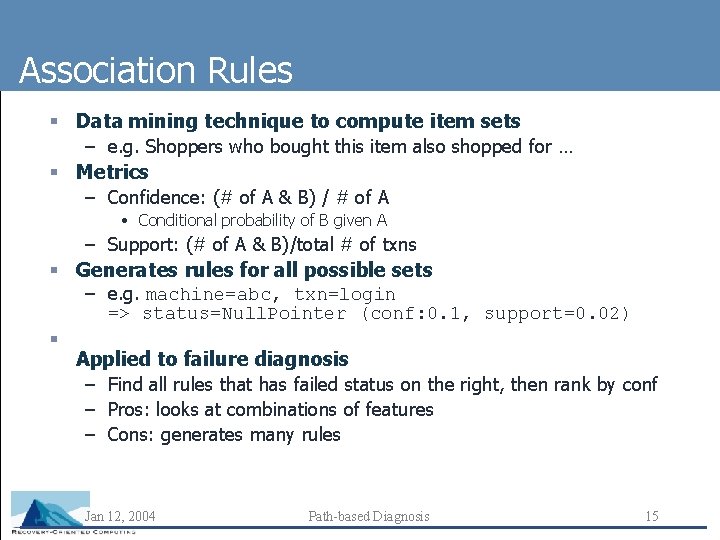

Association Rules
§ Data mining technique for finding item sets that frequently occur together
  – E.g., “Shoppers who bought this item also shopped for …”
§ Metrics
  – Confidence: (# of A & B) / (# of A)
    • Conditional probability of B given A
  – Support: (# of A & B) / (total # of txns)
§ Generates rules for all possible item sets
  – E.g., machine=abc, txn=login => status=NullPointer (conf: 0.1, support: 0.02)
§ Applied to failure diagnosis
  – Find all rules that have a failed status on the right-hand side, then rank them by confidence
  – Pros: looks at combinations of features
  – Cons: generates many rules
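A brute-force sketch of the two metrics, restricted to rules whose right-hand side is a failed status. It enumerates every antecedent up to `max_len` items instead of using a real frequent-itemset algorithm such as Apriori, so it only suits small feature sets; `failure_rules` and its input format are hypothetical.

```python
from collections import Counter
from itertools import combinations


def failure_rules(txns, max_len=2, min_support=0.0):
    """Rules A => Status=Failed, where A is a set of "feature=value" items.

    txns: list of (items, failed) pairs, items being a frozenset of strings.
    Returns (antecedent, confidence, support) triples, highest confidence first.
    """
    n = len(txns)
    count_a = Counter()    # txns containing antecedent A
    count_ab = Counter()   # txns containing A that also failed
    for items, failed in txns:
        for k in range(1, max_len + 1):
            for combo in combinations(sorted(items), k):
                a = frozenset(combo)
                count_a[a] += 1
                if failed:
                    count_ab[a] += 1
    rules = []
    for a, ab in count_ab.items():
        support = ab / n                 # (# of A & B) / total # of txns
        if support >= min_support:
            rules.append((a, ab / count_a[a], support))  # confidence = P(B | A)
    rules.sort(key=lambda r: r[1], reverse=True)
    return rules
```

Ranking by confidence puts the most failure-predictive feature combinations first; raising `min_support` prunes rules that cover only a handful of txns.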

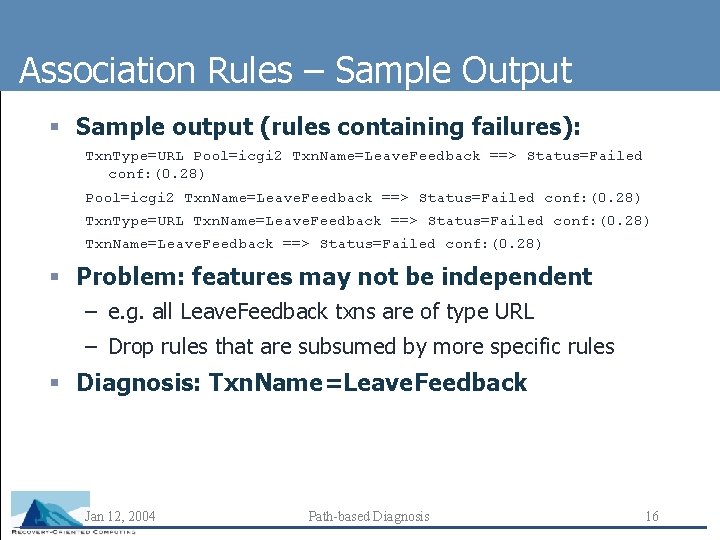

Association Rules – Sample Output
§ Sample output (rules containing failures):
    TxnType=URL Pool=icgi2 TxnName=LeaveFeedback ==> Status=Failed conf:(0.28)
    TxnType=URL TxnName=LeaveFeedback ==> Status=Failed conf:(0.28)
§ Problem: features may not be independent
  – E.g., all LeaveFeedback txns are of type URL
  – Drop rules that are subsumed by more specific rules
§ Diagnosis: TxnName=LeaveFeedback
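One plausible reading of the pruning that reproduces this slide’s diagnosis, sketched below: first drop antecedent items implied by another item in the same rule (LeaveFeedback implies URL), then drop any rule for which a more general rule is at least as confident, since the extra conditions add nothing. The `implied_by` map is assumed to be precomputed from the data, and `prune_rules` is hypothetical.

```python
def prune_rules(rules, implied_by, tol=1e-9):
    """Collapse redundant "A => Status=Failed" rules.

    rules: (antecedent frozenset of "feature=value" items, confidence) pairs.
    implied_by: maps an item to the items it always co-occurs with, e.g.
        {"TxnName=LeaveFeedback": {"TxnType=URL"}}; assumed precomputed from data.
    """
    # Step 1: remove antecedent items implied by another kept item in the rule.
    cleaned = {}
    for ante, conf in rules:
        items = set(ante)
        for item in sorted(ante):
            if item in items:
                items -= implied_by.get(item, set()) - {item}
        key = frozenset(items)
        cleaned[key] = max(conf, cleaned.get(key, 0.0))
    # Step 2: drop a rule when a more general rule (strict-subset antecedent)
    # is at least as confident, because the extra conditions add nothing.
    kept = [
        (ante, conf)
        for ante, conf in cleaned.items()
        if not any(other < ante and oconf >= conf - tol
                   for other, oconf in cleaned.items())
    ]
    return sorted(kept, key=lambda r: r[1], reverse=True)


rules = [
    (frozenset({"TxnType=URL", "Pool=icgi2", "TxnName=LeaveFeedback"}), 0.28),
    (frozenset({"TxnType=URL", "TxnName=LeaveFeedback"}), 0.28),
]
print(prune_rules(rules, {"TxnName=LeaveFeedback": {"TxnType=URL"}}))
```

On the two rules above, step 1 reduces both to rules over LeaveFeedback (one with Pool=icgi2 as well); the pool-specific rule has no higher confidence, so only TxnName=LeaveFeedback remains.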

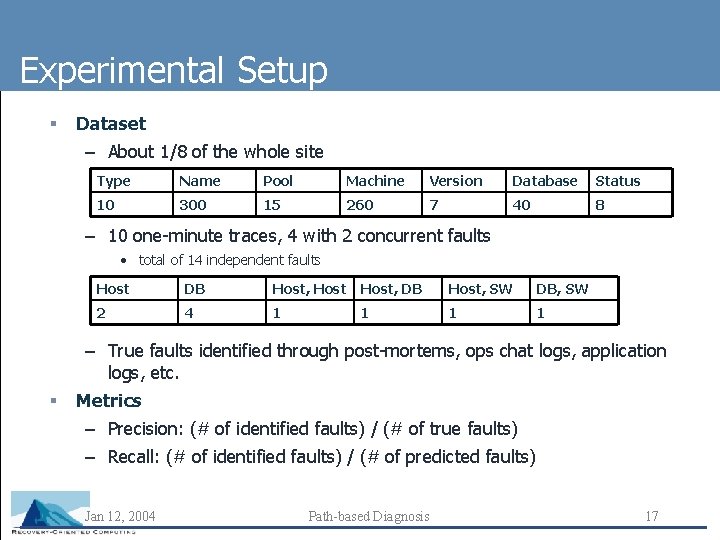

Experimental Setup
§ Dataset
  – About 1/8 of the whole site
  – Number of distinct values per feature:

| Type | Name | Pool | Machine | Version | Database | Status |
|------|------|------|---------|---------|----------|--------|
| 10 | 300 | 15 | 260 | 7 | 40 | 8 |

  – 10 one-minute traces, 4 with 2 concurrent faults
    • Total of 14 independent faults
  – Fault categories: Host; DB; Host, DB; Host, SW; DB, SW (counts: 2, 4, 1, 1)
  – True faults identified through post-mortems, ops chat logs, application logs, etc.
§ Metrics
  – Precision: (# of correctly identified faults) / (# of predicted faults)
  – Recall: (# of correctly identified faults) / (# of true faults)
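Precision and recall computed with their standard definitions over sets of fault identifiers; the function name and the set representation are illustrative assumptions.

```python
def precision_recall(predicted, true_faults):
    """Standard precision and recall of predicted fault causes (as sets)."""
    predicted, true_faults = set(predicted), set(true_faults)
    correct = len(predicted & true_faults)
    # Precision: what fraction of the predictions were real faults.
    precision = correct / len(predicted) if predicted else 0.0
    # Recall: what fraction of the real faults were found.
    recall = correct / len(true_faults) if true_faults else 0.0
    return precision, recall
```

A method that emits many candidate causes (association rules, for instance) tends to gain recall at the expense of precision, while one that always emits a single diagnosis (MinEntropy) caps its recall on traces with two concurrent faults at 1/2.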

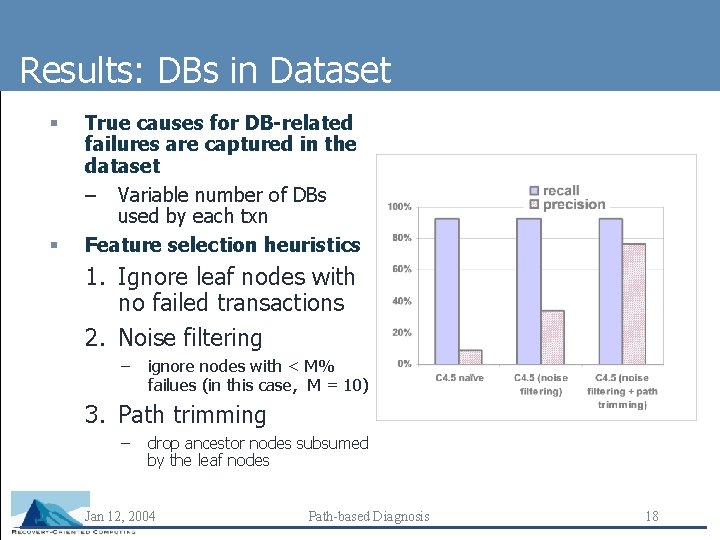

Results: DBs in Dataset
§ True causes for DB-related failures are captured in the dataset
  – Each txn uses a variable number of DBs
§ Feature selection heuristics
  1. Ignore leaf nodes with no failed transactions
  2. Noise filtering: ignore nodes with < M% failures (here, M = 10)
  3. Path trimming: drop ancestor nodes subsumed by the leaf nodes
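Because each txn touches a variable number of DBs, a learner that expects fixed columns needs the DB set flattened. A common approach, assumed here rather than taken from the talk, is one boolean column per database; `encode_txn` is hypothetical.

```python
def encode_txn(features, dbs, all_dbs):
    """Flatten one txn into fixed columns for a learner.

    features: dict of single-valued features (e.g. {"Pool": "cgi1"}).
    dbs: the set of DBs this txn touched (its size varies per txn).
    all_dbs: every DB seen in the dataset, giving one boolean column each.
    """
    row = dict(features)
    for db in sorted(all_dbs):
        row[f"DB={db}"] = db in dbs
    return row
```

With 40 databases in the dataset this adds 40 boolean columns per txn, and a tree can then split on any single DB regardless of how many others the txn used.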

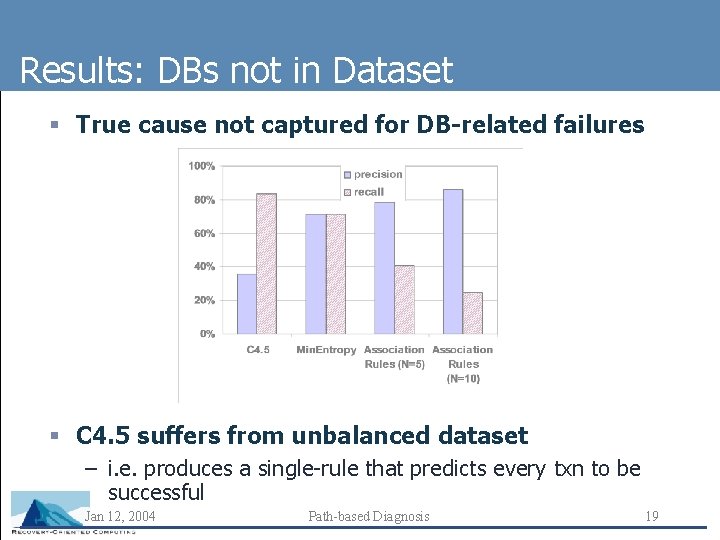

Results: DBs not in Dataset
§ True cause not captured for DB-related failures
§ C4.5 suffers from the unbalanced dataset
  – I.e., it produces a single rule that predicts every txn to be successful
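A short calculation shows why a single all-success rule is attractive to a learner that minimizes overall error on unbalanced data. If a fraction $f$ of the txns fail, predicting success for every txn gives

$$
\text{accuracy} = \frac{N_{\text{success}}}{N_{\text{success}} + N_{\text{failed}}} = 1 - f,
\qquad
\text{recall}_{\text{failed}} = \frac{0}{N_{\text{failed}}} = 0
$$

so with, for example, $f = 0.01$ the rule is 99% accurate while catching none of the failures.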

What’s Next?
§ ROC curves
  – Show the tradeoff between detection rate (recall) and false-positive rate
§ Transient failures
  – Up-sample to balance the dataset, or use a cost matrix
§ Some measure of the “confidence” of each prediction
§ More data points
  – We have 20 hrs of logs that contain failures
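A minimal sketch of the up-sampling option: duplicate randomly chosen failed txns until both classes are the same size. The helper name and the fixed seed are assumptions. The cost-matrix alternative would instead keep the data as-is and charge more for misclassifying a failure.

```python
import random


def upsample_failures(txns, is_failed, seed=0):
    """Randomly duplicate failed txns until both classes have equal size.

    Returns a new list; the input is not modified. The seed is arbitrary.
    """
    rng = random.Random(seed)
    failed = [t for t in txns if is_failed(t)]
    ok = [t for t in txns if not is_failed(t)]
    if not failed or len(failed) >= len(ok):
        return list(txns)  # nothing to up-sample
    extra = rng.choices(failed, k=len(ok) - len(failed))
    return ok + failed + extra
```

Duplicating rare failures makes an all-success rule costly again, at the risk of over-fitting to the few failed txns that get copied.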

Open Questions
§ How to deal with multiple symptoms?
  – E.g., a DB outage causing multiple types of requests to fail
  – Treat it as multiple failures?
§ Failure importance (count vs. rate)
  – Two failures may have similar failure counts
  – Low volume and higher failure rate vs. high volume and lower failure rate
