Week 4 Presentation Tamique de Brito

What I’ve done this week
● Implemented a model prototype
  ○ Used C3D as the encoder and an LSTM as the autoregressive function
  ○ Tried both an MSE loss and a contrastive loss
● Implemented code utilities for debugging, recording training statistics, and other uses
  ○ These utilities will make it easier to prototype new ideas quickly
● Ran tests with small versions of the models for quick prototyping
  ○ The model appears to be learning distinguishable representations of clips

Model prototype
● Architecture:
  ○ Currently testing on a small version of the architecture
  ○ Encoder is C3D, currently with 6 conv layers
  ○ Latent representation size is 2^9 (512)
  ○ LSTM has hidden size 128
● Contrastive loss:
  ○ Used the negative log of the softmax of cosine similarities
    ■ Computed similarities between predicted representations and true representations within a batch
  ○ There were some numerical instabilities with the original algorithm that I had to work out
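
The contrastive loss described above can be sketched as follows. This is a minimal pure-Python illustration, assuming the predicted and true representations for one batch are lists of vectors and that prediction i should match target i, with every other target in the batch acting as a negative. The `temperature` parameter is an assumption (the slides do not mention one), and subtracting the row maximum before exponentiating (the log-sum-exp trick) is one common way to avoid overflow/underflow; the slides do not say whether this is the fix that was actually used.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float], eps: float = 1e-8) -> float:
    """Cosine similarity; eps guards against division by zero for all-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / max(norm_a * norm_b, eps)


def log_softmax(row: Sequence[float]) -> List[float]:
    """Numerically stable log-softmax: subtract the max before exponentiating."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_sum for x in row]


def contrastive_loss(preds, targets, temperature: float = 1.0) -> float:
    """Mean negative log-softmax of the matching (diagonal) cosine similarity.

    preds[i] is the predicted representation for clip i and targets[i] its true
    representation; the other targets in the batch serve as negatives.
    temperature is a hypothetical knob, not taken from the slides.
    """
    n = len(preds)
    total = 0.0
    for i in range(n):
        sims = [cosine_similarity(preds[i], targets[j]) / temperature for j in range(n)]
        total += -log_softmax(sims)[i]
    return total / n
```

As a sanity check, two orthogonal clips predicted perfectly give a loss of log(1 + e^-1) ≈ 0.313 at temperature 1, not zero, because cosine similarities are bounded in [-1, 1]; this is one reason a temperature below 1 is often used with this kind of loss.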

Code utilities
● Spent time organizing and optimizing the code now to make prototyping ideas and running many experiments easier later on
  ○ E.g. submitting array batch scripts to test the effect of many hyperparameters, quickly switching between architecture sizes for large- or small-scale testing, and recording arbitrary performance metrics (e.g. similarity matrices for representations)
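
The array-batch-script idea above can be sketched as a mapping from an array task index to one point in a hyperparameter grid. This assumes a Slurm cluster, where each array task receives its index in the `SLURM_ARRAY_TASK_ID` environment variable. The "small" preset reuses the sizes from the model prototype slide; the "large" preset and the grid values are hypothetical placeholders, not the settings used in these experiments.

```python
import itertools
import os
from typing import Dict, List

# Architecture presets. "small" matches the prototype (6 conv layers, 2^9 latent,
# LSTM hidden size 128); "large" is a hypothetical placeholder.
ARCH_PRESETS: Dict[str, Dict[str, int]] = {
    "small": {"conv_layers": 6, "latent_size": 2 ** 9, "lstm_hidden": 128},
    "large": {"conv_layers": 8, "latent_size": 2 ** 10, "lstm_hidden": 512},
}

# Hypothetical hyperparameter grid; each array task runs one combination.
GRID: Dict[str, List] = {
    "arch": ["small", "large"],
    "lr": [1e-3, 1e-4],
    "loss": ["contrastive", "mse"],
}


def config_for_task(task_id: int, grid: Dict[str, List] = GRID) -> Dict:
    """Return the task_id-th combination of the grid (first key varies slowest)."""
    keys = list(grid)
    combos = list(itertools.product(*(grid[k] for k in keys)))
    if not 0 <= task_id < len(combos):
        raise IndexError(f"task_id {task_id} out of range for {len(combos)} combinations")
    config = dict(zip(keys, combos[task_id]))
    config.update(ARCH_PRESETS[config["arch"]])  # expand the architecture preset
    return config


if __name__ == "__main__":
    # Under Slurm, e.g. `sbatch --array=0-7 train.sh`, each task gets its own index.
    task_id = int(os.environ.get("SLURM_ARRAY_TASK_ID", "0"))
    print(config_for_task(task_id))
```

Keeping the grid in one place means a single array submission covers all 2 × 2 × 2 = 8 combinations, and switching between small- and large-scale runs only changes the `arch` entry.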

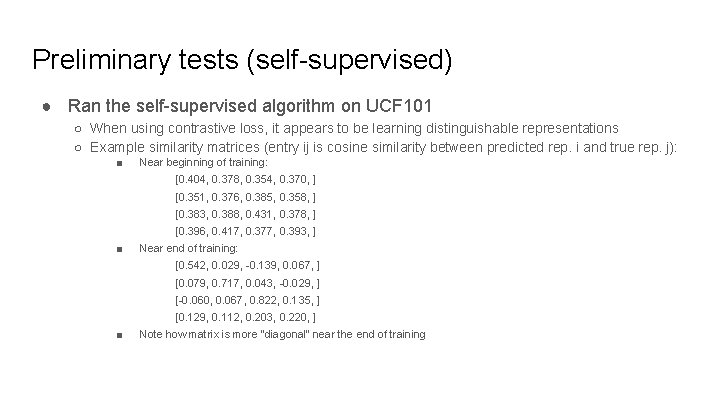

Preliminary tests (self-supervised)
● Ran the self-supervised algorithm on UCF101
  ○ When using the contrastive loss, the model appears to be learning distinguishable representations
  ○ Example similarity matrices (entry ij is the cosine similarity between predicted rep. i and true rep. j):
    ■ Near the beginning of training:
      [0.404, 0.378, 0.354, 0.370, ]
      [0.351, 0.376, 0.385, 0.358, ]
      [0.383, 0.388, 0.431, 0.378, ]
      [0.396, 0.417, 0.377, 0.393, ]
    ■ Near the end of training:
      [0.542, 0.029, -0.139, 0.067, ]
      [0.079, 0.717, 0.043, -0.029, ]
      [-0.060, 0.067, 0.822, 0.135, ]
      [0.129, 0.112, 0.203, 0.220, ]
    ■ Note how the matrix is more “diagonal” near the end of training: early on all entries are similar (about 0.35 to 0.43), while later each row's largest entry is on the diagonal
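
The "more diagonal" observation above can be turned into numbers that are easy to log during training. The sketch below uses two hypothetical metrics: the fraction of rows whose largest entry is on the diagonal (prediction i retrieves true rep. i), and the mean diagonal minus the mean off-diagonal similarity. The matrices are the 4 × 4 entries shown on the slide.

```python
from typing import Sequence


def top1_match_rate(sim: Sequence[Sequence[float]]) -> float:
    """Fraction of rows whose largest entry sits on the diagonal."""
    hits = sum(1 for i, row in enumerate(sim)
               if max(range(len(row)), key=lambda j: row[j]) == i)
    return hits / len(sim)


def diagonal_margin(sim: Sequence[Sequence[float]]) -> float:
    """Mean diagonal similarity minus mean off-diagonal similarity."""
    n = len(sim)
    diag = [sim[i][i] for i in range(n)]
    off = [sim[i][j] for i in range(n) for j in range(n) if i != j]
    return sum(diag) / n - sum(off) / len(off)


early = [[0.404, 0.378, 0.354, 0.370],
         [0.351, 0.376, 0.385, 0.358],
         [0.383, 0.388, 0.431, 0.378],
         [0.396, 0.417, 0.377, 0.393]]
late = [[0.542, 0.029, -0.139, 0.067],
        [0.079, 0.717, 0.043, -0.029],
        [-0.060, 0.067, 0.822, 0.135],
        [0.129, 0.112, 0.203, 0.220]]

print(top1_match_rate(early), top1_match_rate(late))  # 0.5 1.0
print(round(diagonal_margin(early), 3), round(diagonal_margin(late), 3))  # 0.023 0.522
```

On these examples the margin grows from about 0.02 to about 0.52, which quantifies the visual impression that matching pairs separate from non-matching ones as training proceeds.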

Preliminary tests (classification baselines)
● Ran tests on a tiny subset of UCF101 on my local machine as an initial validation
  ○ Features learned with CPC (contrastive predictive coding) gave better classification results than randomly initialized features
● Ran a shorter classification baseline to get quicker preliminary results
● Now running the baseline for different architecture scales of the C3D network
  ○ Baselines for training on both 100% and 20% of the dataset
● Also running the supervised algorithm for a larger scale of the network
● Currently looking at the effects of different hyperparameters
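
For the 20%-of-the-dataset baselines, one reasonable way to build the subset is to sample the same fraction from every class with a fixed seed, so that the subset keeps the class balance and is identical across runs. The slides do not say how the 20% subset is drawn; the per-class (stratified) sampling, the `seed` parameter, and the `stratified_subset` name below are assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def stratified_subset(labels: Sequence[int], fraction: float = 0.2, seed: int = 0) -> List[int]:
    """Return sorted indices of a reproducible per-class subset of a dataset.

    Takes round(fraction * class_size) examples from each class (at least one),
    so the subset keeps roughly the same class balance as the full dataset.
    """
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)  # fixed seed -> same subset on every run
    chosen: List[int] = []
    for label in sorted(by_class):
        idxs = by_class[label]
        k = max(1, round(fraction * len(idxs)))
        chosen.extend(rng.sample(idxs, k))
    return sorted(chosen)
```

Applied to the training split only, this keeps the test split unchanged, so the 100% and 20% baselines are evaluated on the same data.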

Next steps
● Evaluation:
  ○ Features learned by the model prototype with both the contrastive and MSE losses will need to be evaluated on a downstream classification task and compared to the baseline
  ○ Will need to do the same for a prototype trained on only 20% of the data
● Contrastive loss:
  ○ Currently, the contrastive examples come from other clips in the batch
    ■ This means that batch size is a hyperparameter of the loss, which may be undesirable
  ○ Furthermore, the contrastive loss does not use clips from the same video
  ○ Both of these should be fixed; however, the dependency on batch size is not a significant issue
● Further in the future:
  ○ Try variations on the architecture (e.g. a different decoder, aggregator, or final prediction network)
    ■ Specific idea: instead of only predicting the latent representation conditioned on the temporal embedding, add a component that predicts the temporal embedding conditioned on the latent representation
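
One possible way to address both contrastive-loss issues is to draw a fixed number of negatives per prediction from a pool of encoded clips, instead of using whatever else happens to be in the batch, and to include other time steps of the same video among them. The sketch below assumes a hypothetical pool of `(video_id, time_step)` keys (for example, a memory bank of recently encoded clips, similar in spirit to MoCo's queue). Reading "clips from the same video" as extra negatives is itself an assumption about the intended fix.

```python
import random
from typing import List, Sequence, Tuple

ClipKey = Tuple[str, int]  # hypothetical (video_id, time_step) key


def sample_negatives(
    positive: ClipKey,
    pool: Sequence[ClipKey],
    num_negatives: int,
    num_same_video: int,
    rng: random.Random,
) -> List[ClipKey]:
    """Draw a fixed number of negatives for one positive, independent of batch size.

    Up to num_same_video negatives come from other time steps of the positive's
    video; the rest come from clips of other videos in the pool.
    """
    video, step = positive
    same = [k for k in pool if k[0] == video and k[1] != step]
    other = [k for k in pool if k[0] != video]
    n_same = min(num_same_video, len(same), num_negatives)
    n_other = num_negatives - n_same
    if n_other > len(other):
        raise ValueError("pool too small for the requested number of negatives")
    return rng.sample(same, n_same) + rng.sample(other, n_other)
```

With a fixed `num_negatives`, the softmax in the loss always has the same number of entries, so changing the batch size no longer changes the difficulty of the contrastive task.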

- Slides: 7