Tirgul 4 Dast 2011 Lower bounds on Sorting

Tirgul 4 - Dast 2011.
- Lower bounds on sorting
- Quicksort
- Order statistics

School of Computer Science and Engineering, The Hebrew University of Jerusalem. 2/24/2021

Lower bound on sorting

$\Omega(n \log n)$ bound on comparison sorting
- In a comparison sort, we use only comparisons between elements to gain order information about the input.
- Comparison sorts can be viewed abstractly in terms of decision trees.
- A decision tree is a full binary tree that represents the answers to the comparisons. Each internal node compares two elements, and its two children correspond to the two possible outcomes.
*For simplicity we assume that the elements are distinct.

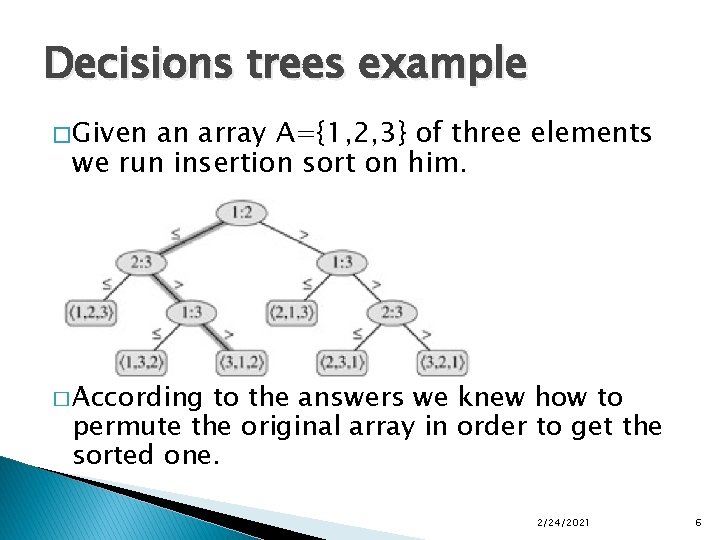

Decision tree example
- Given an array A = {1, 2, 3} of three elements, we run insertion sort on it.
- From the answers to the comparisons, we know how to permute the original array in order to get the sorted one.

Decision trees
- A run of the algorithm corresponds to a path from the root to a leaf.
- Each leaf holds the permutation that the algorithm applied to the input.
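To make the decision-tree view concrete, here is a small Python sketch; the function names are hypothetical and not from the slides. It runs insertion sort on every permutation of {1, ..., n} and records the outcome of each comparison. Each recorded sequence of answers is one root-to-leaf path. The number of distinct paths is therefore the number of reachable leaves, and the longest path is the height of the tree.

```python
from itertools import permutations

def insertion_sort_path(a):
    """Run insertion sort on a copy of `a`, recording every comparison outcome.

    Returns (path, result): `path` is the tuple of True/False answers to the
    comparisons "a[k] > key?", i.e. one root-to-leaf path in the decision tree.
    """
    a = list(a)
    path = []
    for j in range(1, len(a)):
        key = a[j]
        k = j - 1
        while k >= 0:
            bigger = a[k] > key          # one comparison = one internal node
            path.append(bigger)
            if not bigger:
                break
            a[k + 1] = a[k]              # shift the larger element right
            k -= 1
        a[k + 1] = key
    return tuple(path), a

def decision_tree_leaves(n):
    """Map each root-to-leaf path to the input permutation that reaches it."""
    leaves = {}
    for perm in permutations(range(1, n + 1)):
        path, result = insertion_sort_path(perm)
        assert result == sorted(perm)    # every leaf yields a sorted output
        leaves[path] = perm
    return leaves

leaves = decision_tree_leaves(3)
for path, perm in sorted(leaves.items()):
    print(perm, "->", path)
print("leaves:", len(leaves), "height:", max(len(p) for p in leaves))
```

For n = 3 this gives 6 distinct leaves (one per permutation, as required) and height 3. The reverse-sorted input is the one that follows the longest path.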

Decision trees
- Because any correct sorting algorithm must be able to produce each permutation of its input, the decision tree must have at least $n!$ leaves.
- The worst-case number of comparisons performed by the algorithm equals the length of the longest path from the root to a leaf of its decision tree, that is, the height of the tree.

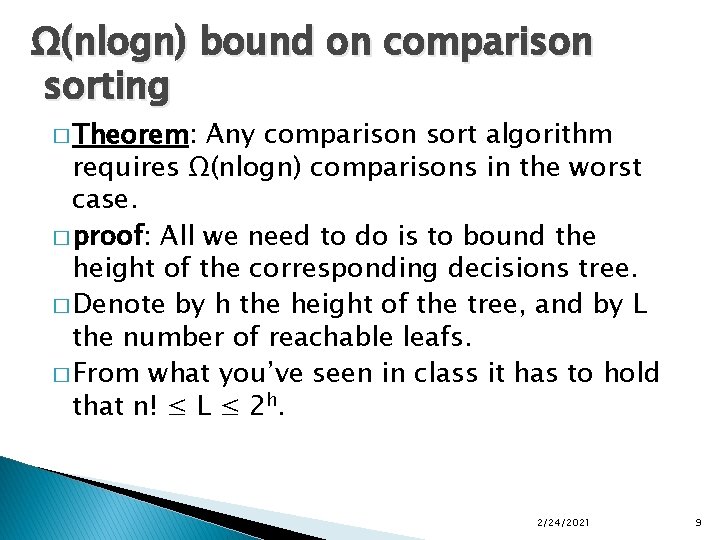

$\Omega(n \log n)$ bound on comparison sorting
- Theorem: Any comparison sort algorithm requires $\Omega(n \log n)$ comparisons in the worst case.
- Proof: It suffices to bound the height of the corresponding decision tree from below.
- Denote by $h$ the height of the tree and by $L$ the number of reachable leaves.
- As you've seen in class, every permutation must appear at a reachable leaf, and a binary tree of height $h$ has at most $2^h$ leaves. Hence $n! \le L \le 2^h$.
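To see what the bound $n! \le 2^h$ means numerically, the short Python sketch below computes the smallest height $h$ that satisfies it, namely $\lceil \log_2 n! \rceil$. It works exactly with integers and prints the result next to $n \log_2 n$ for comparison. The helper name is hypothetical.

```python
import math

def min_worst_case_comparisons(n):
    """Smallest integer h with 2**h >= n!, i.e. ceil(log2(n!)).

    For any integer N >= 1, (N - 1).bit_length() equals ceil(log2 N),
    so the result is exact (no floating-point rounding).
    """
    return (math.factorial(n) - 1).bit_length()

for n in (3, 4, 5, 10, 20):
    h = min_worst_case_comparisons(n)
    print(f"n={n:2d}  ceil(log2 n!)={h:3d}  n*log2(n)={n * math.log2(n):7.1f}")
```

No comparison sort can guarantee fewer comparisons than this on n distinct elements. For n = 3 the value is 3, which is exactly the height of insertion sort's tree in the example.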

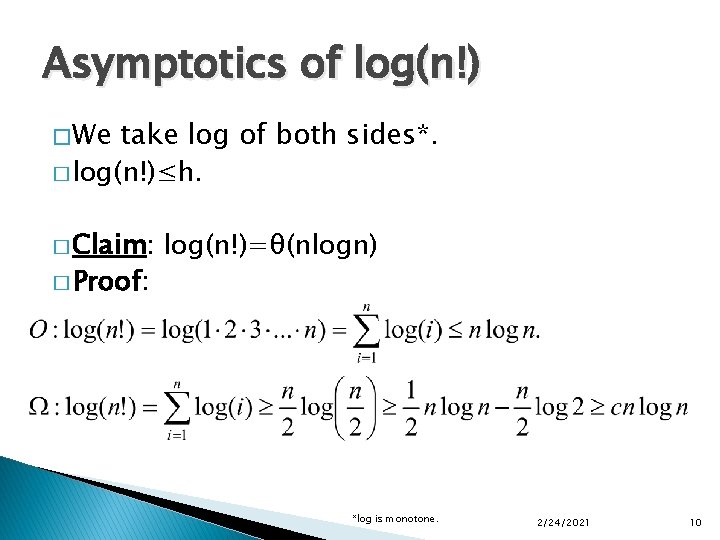

Asymptotics of $\log(n!)$
- We take the log of both sides*: $\log(n!) \le h$.
- Claim: $\log(n!) = \Theta(n \log n)$.
*log is monotonically increasing, so taking logs preserves the inequality.
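One standard way to prove the claim uses logarithms in base 2. The lower bound keeps only the largest half of the factors of $n!$.

```latex
\begin{align*}
\log(n!) &= \sum_{k=1}^{n} \log k \le n \log n, \\
\log(n!) &\ge \sum_{k=\lceil n/2 \rceil}^{n} \log k
          \ge \frac{n}{2} \log \frac{n}{2}
          = \frac{n}{2} (\log n - 1)
          \ge \frac{n}{4} \log n \qquad (n \ge 4).
\end{align*}
% The second sum has \lfloor n/2 \rfloor + 1 \ge n/2 terms, each at least \log(n/2).
% The last step uses \log n - 1 \ge \tfrac{1}{2}\log n, which holds for n \ge 4.
Hence $\tfrac{n}{4}\log n \le \log(n!) \le n \log n$, i.e. $\log(n!) = \Theta(n \log n)$.
```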

$\Omega(n \log n)$ bound on comparison sorting
- From the claim we get $h = \Omega(n \log n)$.
- Hence every comparison sort needs $\Omega(n \log n)$ comparisons, and therefore $\Omega(n \log n)$ time, in the worst case.

Quicksort

Quicksort example


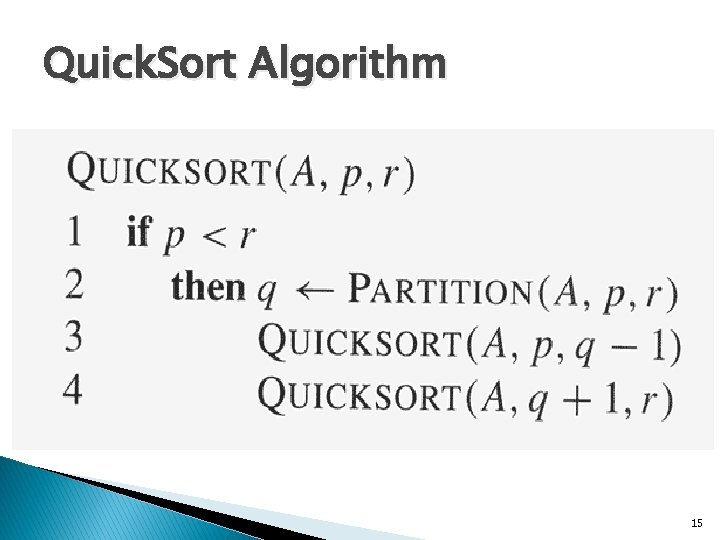

Description of Quicksort
- A three-step divide-and-conquer process for sorting a subarray A[p..r]:
  - Divide: partition (rearrange) the array A[p..r] into two (possibly empty) subarrays A[p..q-1] and A[q+1..r] such that each element of A[p..q-1] is less than or equal to A[q], which is, in turn, less than or equal to each element of A[q+1..r]. Compute the index q (the final position of the pivot) as part of this partitioning procedure.
  - Conquer: sort the two subarrays A[p..q-1] and A[q+1..r] by recursive calls to quicksort.
  - Combine: since the subarrays are sorted in place, no work is needed to combine them. The entire array A[p..r] is now sorted.
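A minimal Python sketch of these three steps follows. It assumes a Lomuto-style PARTITION that uses the last element A[r] as the pivot, consistent with the "k = r, A[k] = x" case of the Partition example. It uses Python's 0-based indices with inclusive bounds, and the function names are illustrative.

```python
def partition(a, p, r):
    """Lomuto partition of a[p..r] (inclusive) around the pivot x = a[r].

    Rearranges a[p..r] in place and returns q such that
    every element of a[p..q-1] is <= a[q] and every element of a[q+1..r] is > a[q].
    """
    x = a[r]
    i = p - 1                        # end of the "<= x" region
    for j in range(p, r):
        if a[j] <= x:
            i += 1
            a[i], a[j] = a[j], a[i]
    a[i + 1], a[r] = a[r], a[i + 1]  # put the pivot between the two regions
    return i + 1

def quicksort(a, p=0, r=None):
    """Sort a[p..r] in place (0-based, inclusive bounds)."""
    if r is None:
        r = len(a) - 1
    if p < r:
        q = partition(a, p, r)       # Divide
        quicksort(a, p, q - 1)       # Conquer: left subarray
        quicksort(a, q + 1, r)       # Conquer: right subarray
    # Combine: nothing to do, both subarrays were sorted in place
```

Usage: `a = [5, 2, 9, 1, 5, 6]; quicksort(a)` leaves `a == [1, 2, 5, 5, 6, 9]`. The recursion depth can reach n on sorted input, so very large inputs may hit Python's default recursion limit.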

Quicksort algorithm

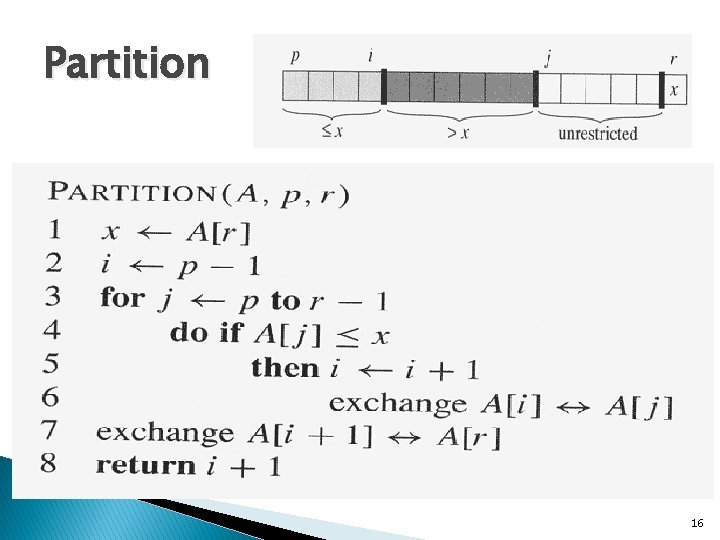

Partition

![Example of Partition](http://slidetodoc.com/presentation_image_h/0ffbeafe761cb034daaf0912e689bec9/image-17.jpg)

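Example of Partition: the loop invariant. With pivot $x = A[r]$, at the start of each iteration of the loop over $j$, for every index $k$:
- If $p \le k \le i$, then $A[k] \le x$.
- If $i+1 \le k \le j-1$, then $A[k] > x$.
- If $k = r$, then $A[k] = x$.
- If $j \le k \le r-1$, then $A[k]$ is unrestricted.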
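The invariant above can be checked mechanically. This sketch uses the Lomuto scheme with pivot x = A[r] and 0-based indices, and `partition_checked` is a hypothetical name. It asserts the three restricted regions at the start of every iteration of the loop.

```python
def partition_checked(a, p, r):
    """Lomuto partition of a[p..r] around x = a[r], asserting the loop invariant."""
    x = a[r]
    i = p - 1
    for j in range(p, r):
        # Invariant at the start of each iteration:
        assert all(a[k] <= x for k in range(p, i + 1))   # p <= k <= i
        assert all(a[k] > x for k in range(i + 1, j))    # i+1 <= k <= j-1
        assert a[r] == x                                 # k = r
        # a[j..r-1] is unrestricted (not examined yet)
        if a[j] <= x:
            i += 1
            a[i], a[j] = a[j], a[i]
    # At termination j = r, so every element of a[p..r-1] lies in one of
    # the first two regions; swapping the pivot to i+1 separates them.
    a[i + 1], a[r] = a[r], a[i + 1]
    return i + 1

a = [2, 8, 7, 1, 3, 5, 6, 4]
q = partition_checked(a, 0, len(a) - 1)
print(q, a)
```

On `[2, 8, 7, 1, 3, 5, 6, 4]` it returns 3 and leaves the array as `[2, 1, 3, 4, 7, 5, 6, 8]`.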

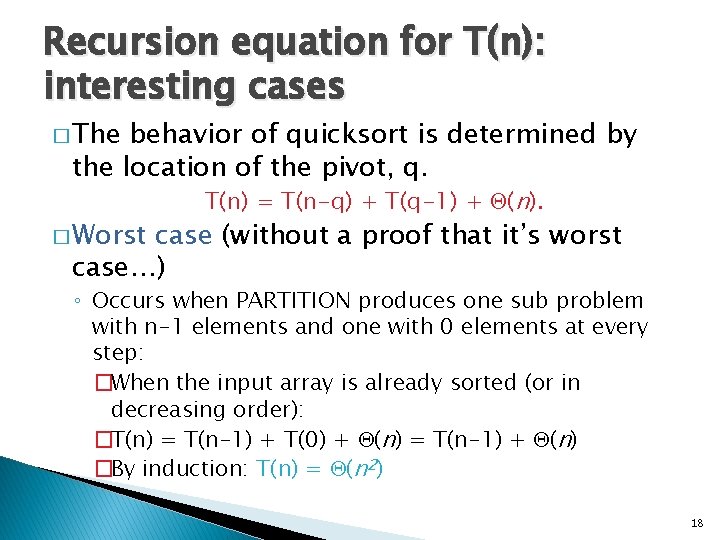

Recursion equation for T(n): interesting cases
- The behavior of quicksort is determined by the location of the pivot, q. If the pivot ends up at position q of the n-element subarray, then $T(n) = T(n-q) + T(q-1) + \Theta(n)$.
- Worst case (without a proof that it's the worst case...):
  - Occurs when PARTITION produces one subproblem with n-1 elements and one with 0 elements at every step.
  - This happens when the input array is already sorted (or in decreasing order).
  - $T(n) = T(n-1) + T(0) + \Theta(n) = T(n-1) + \Theta(n)$.
  - By induction: $T(n) = \Theta(n^2)$.
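The worst case can be observed by counting comparisons. The sketch below (hypothetical helper name) runs last-element-pivot quicksort on a copy of the input and counts PARTITION's `a[j] <= x` tests. On an already sorted array of size n, every partition of an m-element subarray costs m - 1 comparisons and leaves m - 1 elements on one side, so the total is $\sum_{m=2}^{n}(m-1) = n(n-1)/2$.

```python
def quicksort_comparisons(a):
    """Sort a copy of `a` with last-element-pivot quicksort.

    Returns (sorted_list, number of 'a[j] <= x' comparisons in PARTITION).
    """
    a = list(a)
    count = 0

    def partition(p, r):
        nonlocal count
        x = a[r]
        i = p - 1
        for j in range(p, r):
            count += 1
            if a[j] <= x:
                i += 1
                a[i], a[j] = a[j], a[i]
        a[i + 1], a[r] = a[r], a[i + 1]
        return i + 1

    # An explicit stack replaces recursion: on sorted input the recursion
    # depth would be n, which exceeds Python's default limit for large n.
    stack = [(0, len(a) - 1)]
    while stack:
        p, r = stack.pop()
        if p < r:
            q = partition(p, r)
            stack.append((p, q - 1))
            stack.append((q + 1, r))
    return a, count
```

For example, `quicksort_comparisons(range(1000))` reports 499500 comparisons, which is exactly 1000 * 999 / 2.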

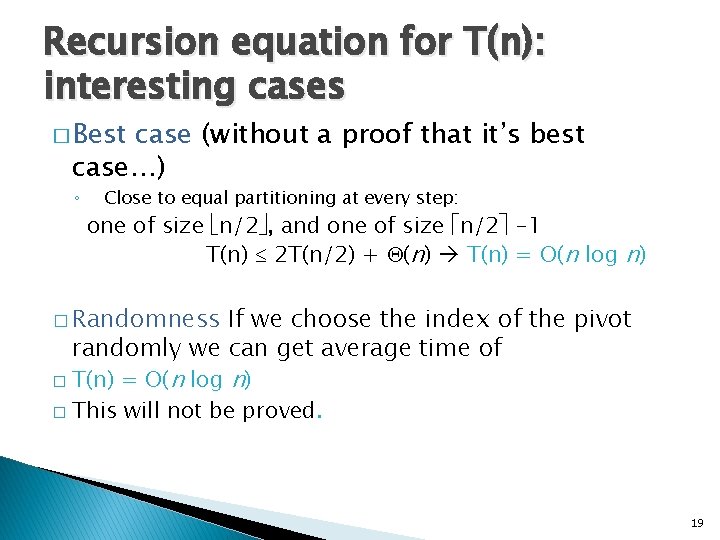

Recursion equation for T(n): interesting cases
- Best case (without a proof that it's the best case...):
  - Close to equal partitioning at every step: one subproblem of size $\lfloor n/2 \rfloor$ and one of size $\lceil n/2 \rceil - 1$.
  - $T(n) \le 2T(n/2) + \Theta(n)$, so $T(n) = O(n \log n)$.
- Randomness: if we choose the index of the pivot uniformly at random, the expected (average) running time is $T(n) = O(n \log n)$ on every input.
- This will not be proved.
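Below is a sketch of the randomized variant. It assumes one common way to "choose the index of the pivot randomly": swap a uniformly random element of A[p..r] into position r, then run the usual last-element partition. The slides do not specify this detail. The function also returns a comparison count, so a sorted input can be compared against the $n(n-1)/2$ of the deterministic version.

```python
import random

def randomized_quicksort(a, rng=None):
    """Sort list `a` in place using a uniformly random pivot in each subarray.

    Returns the number of element comparisons performed by the partitions.
    """
    if rng is None:
        rng = random.Random()
    comparisons = 0
    stack = [(0, len(a) - 1)]           # explicit stack instead of recursion
    while stack:
        p, r = stack.pop()
        if p >= r:
            continue
        s = rng.randint(p, r)           # random pivot index in [p, r]
        a[s], a[r] = a[r], a[s]         # move the pivot to position r
        x, i = a[r], p - 1
        for j in range(p, r):           # Lomuto partition around x
            comparisons += 1
            if a[j] <= x:
                i += 1
                a[i], a[j] = a[j], a[i]
        a[i + 1], a[r] = a[r], a[i + 1]
        q = i + 1
        stack.append((p, q - 1))
        stack.append((q + 1, r))
    return comparisons
```

Passing a seeded `random.Random` makes runs reproducible. On a sorted array of 2000 elements, the count is typically a few tens of thousands, far below the 1999000 of the deterministic worst case.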

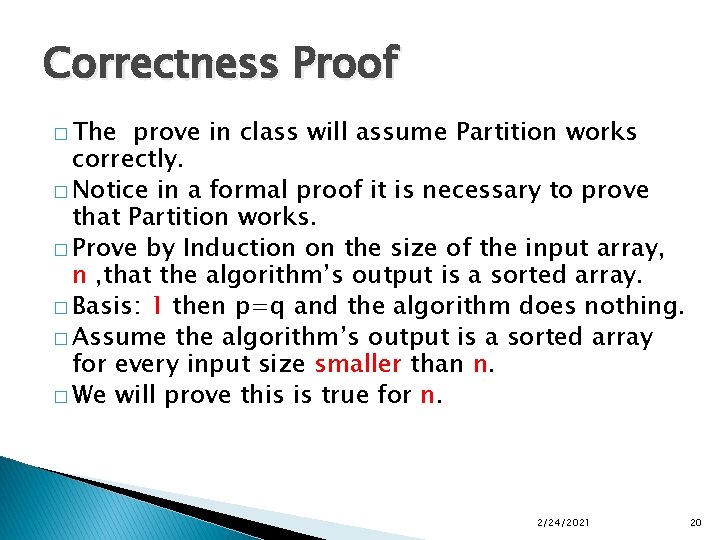

Correctness Proof
- The proof in class will assume that Partition works correctly.
- Note that a formal proof must also prove that Partition works.
- We prove by induction on the size n of the input array that the algorithm's output is a sorted array.
- Basis: $n \le 1$ (i.e. $p \ge r$). The algorithm does nothing, and an array with at most one element is already sorted.
- Induction hypothesis: assume the algorithm's output is a sorted array for every input size smaller than n.
- We will prove that this holds for n.

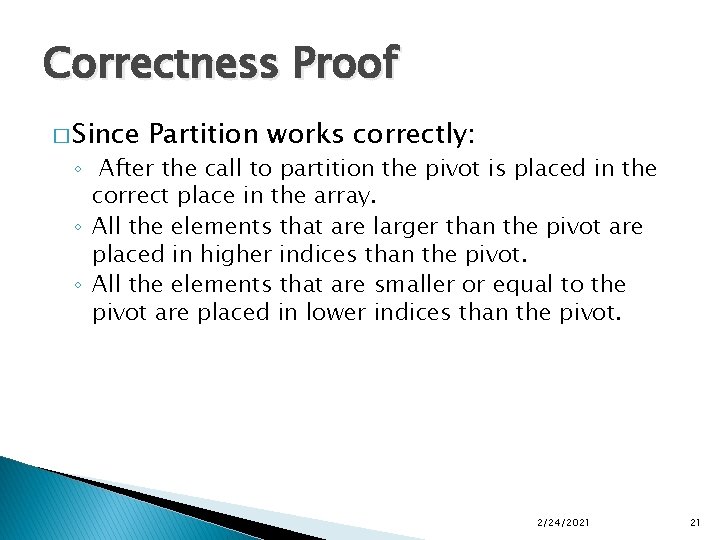

Correctness Proof
- Since Partition works correctly, after the call to Partition:
  - The pivot is placed in its correct position in the array.
  - All the elements that are larger than the pivot are placed at higher indices than the pivot.
  - All the elements that are smaller than or equal to the pivot are placed at lower indices than the pivot.

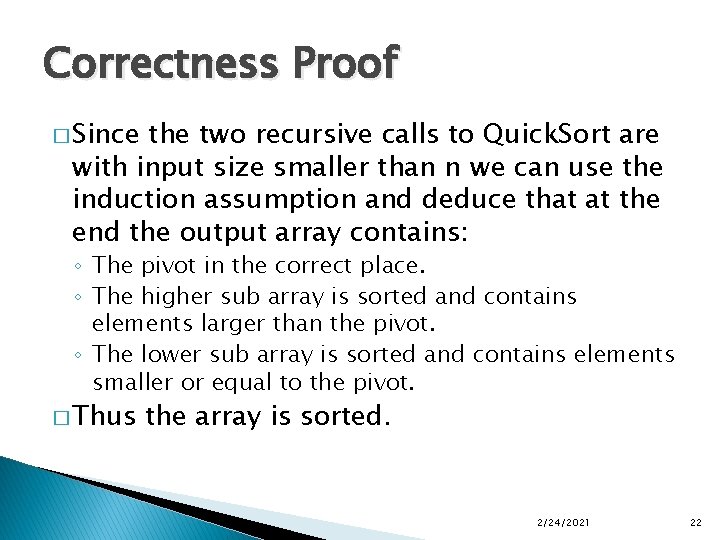

Correctness Proof
- The two recursive calls to Quicksort are on inputs of size smaller than n. We can therefore apply the induction hypothesis and deduce that, at the end, the output array contains:
  - The pivot in its correct position.
  - The higher subarray, sorted, containing the elements larger than the pivot.
  - The lower subarray, sorted, containing the elements smaller than or equal to the pivot.
- Thus the whole array is sorted.

Order Statistics

Order statistics
- The i-th order statistic of a set of n elements is the i-th smallest element.
- The Order-Statistic Problem (OSP):
  - Input: A set A of n (distinct*) numbers and a number i, with $1 \le i \le n$.
  - Output: The element of A that is larger than exactly i-1 other elements of A.
*For simplicity we assume that the elements are distinct.

Solving the OSP in $O(n \log n)$
- Trivial solution:
  1. Sort A using a "fast" $O(n \log n)$ sorting algorithm, e.g. merge sort or heapsort (quicksort achieves this bound only on average).
  2. Return A[i].
- Question: Can this be done in less time?
- Answer: Yes! We can do it in $\Theta(n)$.
- Intuition: Quicksort sorts both sides of the pivot, but the OSP needs only one...
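The trivial solution fits in a few lines of Python. This sketch uses the built-in `sorted()` (Timsort, an $O(n \log n)$ worst-case comparison sort) in place of "quicksort or some other fast sort". The helper name is hypothetical, and `i` is 1-based as on the slide.

```python
def select_by_sorting(a, i):
    """Return the i-th smallest element of `a` (1-based i) in O(n log n) time."""
    if not 1 <= i <= len(a):
        raise ValueError("i must satisfy 1 <= i <= len(a)")
    return sorted(a)[i - 1]             # A[i] in the slide's 1-based indexing
```

For example, `select_by_sorting([7, 3, 9, 1], 2)` returns 3.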


OSP using Partition: intuition
- Partition (rearrange) the array A[p..r] into two (possibly empty) subarrays A[p..q-1] and A[q+1..r] such that each element of A[p..q-1] is less than or equal to A[q], which is, in turn, less than or equal to each element of A[q+1..r]. Compute the index q of the pivot as part of this partitioning procedure, and let k = q - p + 1 be the rank of the pivot within A[p..r].
- If i equals k, return A[q].
- If i is smaller than k, find the i-th smallest element of the left subarray.
- If i is larger than k, find the (i-k)-th smallest element of the right subarray.
- What is the running time for OSP?
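A minimal sketch of this procedure, often called quickselect, follows. It assumes the same last-element-pivot (Lomuto) PARTITION as quicksort and is written as a loop rather than recursion, since only one side is ever followed. The names are illustrative and `i` is 1-based.

```python
def partition(a, p, r):
    """Lomuto partition of a[p..r] around x = a[r]; returns the pivot's index."""
    x = a[r]
    i = p - 1
    for j in range(p, r):
        if a[j] <= x:
            i += 1
            a[i], a[j] = a[j], a[i]
    a[i + 1], a[r] = a[r], a[i + 1]
    return i + 1

def select(a, i):
    """Return the i-th smallest element (1-based) of list `a`; reorders `a`."""
    if not 1 <= i <= len(a):
        raise ValueError("i must satisfy 1 <= i <= len(a)")
    p, r = 0, len(a) - 1
    while True:
        if p == r:
            return a[p]
        q = partition(a, p, r)
        k = q - p + 1                   # rank of the pivot inside a[p..r]
        if i == k:
            return a[q]
        elif i < k:
            r = q - 1                   # continue in the left part, same i
        else:
            p = q + 1                   # continue in the right part,
            i -= k                      # skipping the k smaller elements
```

The rank bookkeeping (`i -= k`) is what lets the recursion continue on a subarray that no longer starts at index 0.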

Worst case $\Omega(n^2)$
- On the worst-case input, one of the subarrays is always empty, and the search continues in the other one, of size n-1.
- Thus the worst-case running time is $T(n) = T(n-1) + \Theta(n)$.
- Solving the recursion gives a $\Theta(n^2)$ running time.
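This worst case is easy to observe by instrumenting a last-element-pivot quickselect (a hypothetical helper, not from the slides). On an already sorted array with i = 1, every PARTITION leaves the whole remaining problem on the left side. The comparison count then comes out to exactly $n(n-1)/2$.

```python
def select_comparisons(a, i):
    """Quickselect with last-element pivot on a copy of `a` (assumes 1 <= i <= len(a)).

    Returns (i-th smallest element, number of 'a[j] <= x' comparisons).
    """
    a = list(a)
    count = 0
    p, r = 0, len(a) - 1
    while p < r:
        x, t = a[r], p - 1              # Lomuto partition around x = a[r]
        for j in range(p, r):
            count += 1
            if a[j] <= x:
                t += 1
                a[t], a[j] = a[j], a[t]
        a[t + 1], a[r] = a[r], a[t + 1]
        q = t + 1
        k = q - p + 1                   # rank of the pivot inside a[p..r]
        if i == k:
            return a[q], count
        if i < k:
            r = q - 1
        else:
            p, i = q + 1, i - k
    return a[p], count
```

For example, `select_comparisons(range(200), 1)` returns `(0, 19900)`, and 19900 = 200 * 199 / 2.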

How can we improve?
- If we could somehow ensure that PARTITION splits A into two equal-size subarrays, the running time would improve to $T(n) = T(n/2) + \Theta(n)$.
- Then by the master theorem (with $a = 1$, $b = 2$, $k = 1$), since $a/b^k = 1/2 < 1$, we'd get $T(n) = \Theta(n^k) = \Theta(n)$.
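The same conclusion can also be reached without the master theorem, by unrolling the recurrence. This assumes n is a power of 2 and writes the $\Theta(n)$ term as $cn$.

```latex
\begin{align*}
T(n) &= cn + T(n/2) = cn + \frac{cn}{2} + T(n/4) = \cdots \\
     &= cn \sum_{k=0}^{\log_2 n - 1} 2^{-k} + T(1) \\
     &< 2cn + T(1) = O(n).
\end{align*}
Since also $T(n) \ge cn$, we get $T(n) = \Theta(n)$.
```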

Average case
- Replacing PARTITION with RANDOMIZED-PARTITION (the pivot is chosen uniformly at random from the subarray) makes the split reasonably balanced on average, whatever the input order. This gives a $\Theta(n)$ expected running time.
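Below is a sketch of the randomized selection procedure. It assumes RANDOMIZED-PARTITION is implemented by swapping a uniformly random element of A[p..r] into position r before the usual last-element partition. The function name is illustrative and `i` is 1-based.

```python
import random

def randomized_select(a, i, rng=None):
    """Return the i-th smallest element (1-based) of list `a`, reordering `a`.

    Each partition uses a uniformly random pivot, giving expected O(n) time.
    """
    if not 1 <= i <= len(a):
        raise ValueError("i must satisfy 1 <= i <= len(a)")
    if rng is None:
        rng = random.Random()
    p, r = 0, len(a) - 1
    while p < r:
        s = rng.randint(p, r)           # RANDOMIZED-PARTITION: random pivot...
        a[s], a[r] = a[r], a[s]         # ...moved to position r
        x, t = a[r], p - 1
        for j in range(p, r):
            if a[j] <= x:
                t += 1
                a[t], a[j] = a[j], a[t]
        a[t + 1], a[r] = a[r], a[t + 1]
        q = t + 1
        k = q - p + 1                   # rank of the pivot within a[p..r]
        if i == k:
            return a[q]
        if i < k:
            r = q - 1
        else:
            p, i = q + 1, i - k
    return a[p]
```

Passing a seeded `random.Random` as `rng` makes a run reproducible. The answer itself does not depend on the seed, only the running time does.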
