Stream Analytics with SQL on Apache Flink Fabian

Stream Analytics with SQL on Apache Flink® Fabian Hueske @fhueske Strata Data Conference, New York September, 27 th 2017 1

About me § Apache Flink PMC member • Contributing since day 1 at TU Berlin • Focusing on Flink’s relational APIs since 2 years § Co-author of “Stream Processing with Apache Flink” • Work in progress… § Co-founder & Software Engineer at data Artisans 2

Original creators of Apache Flink® d. A Platform 2 Open Source Apache Flink + d. A Application Manager 3

Productionizing and operating stream processing made easy 4

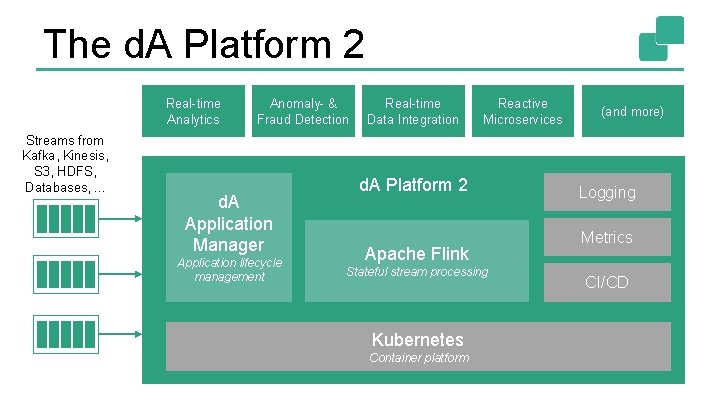

The d. A Platform 2 Real-time Analytics Streams from Kafka, Kinesis, S 3, HDFS, Databases, . . . Anomaly- & Fraud Detection d. A Application Manager Application lifecycle management Real-time Data Integration Reactive Microservices d. A Platform 2 Apache Flink Stateful stream processing Kubernetes Container platform (and more) Logging Metrics CI/CD

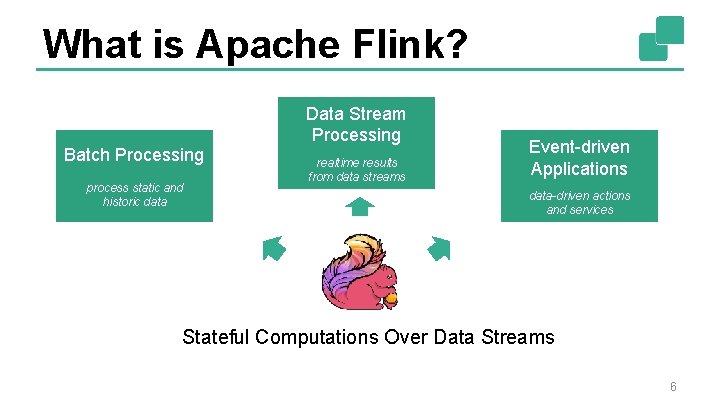

What is Apache Flink? Data Stream Processing Batch Processing process static and historic data realtime results from data streams Event-driven Applications data-driven actions and services Stateful Computations Over Data Streams 6

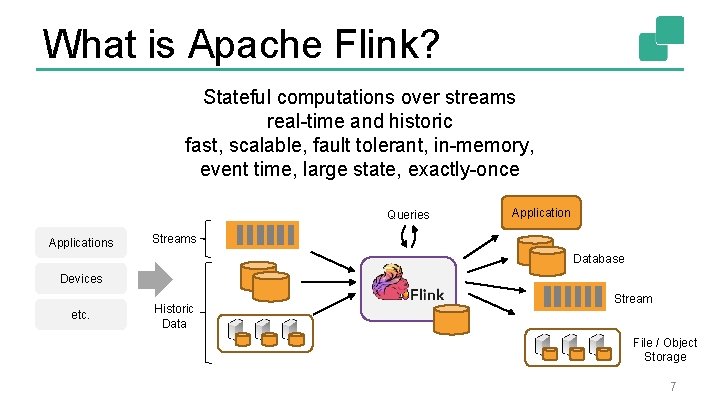

What is Apache Flink? Stateful computations over streams real-time and historic fast, scalable, fault tolerant, in-memory, event time, large state, exactly-once Queries Application Streams Database Devices etc. Historic Data Stream File / Object Storage 7

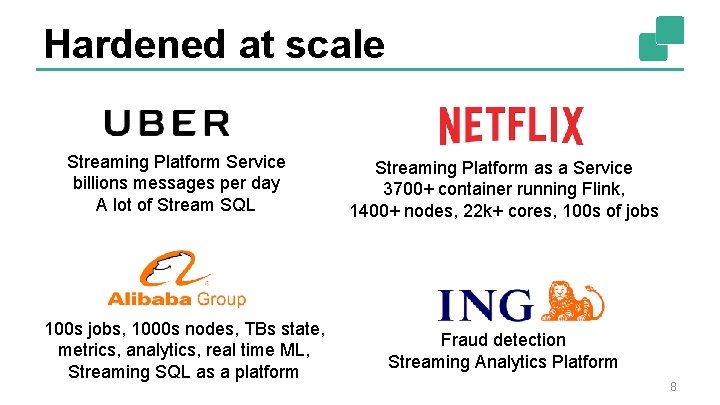

Hardened at scale Streaming Platform Service billions messages per day A lot of Stream SQL 100 s jobs, 1000 s nodes, TBs state, metrics, analytics, real time ML, Streaming SQL as a platform Streaming Platform as a Service 3700+ container running Flink, 1400+ nodes, 22 k+ cores, 100 s of jobs Fraud detection Streaming Analytics Platform 8

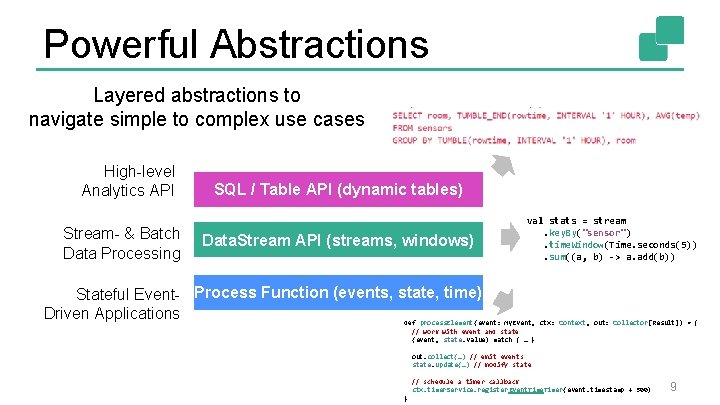

Powerful Abstractions Layered abstractions to navigate simple to complex use cases High-level Analytics API Stream- & Batch Data Processing SQL / Table API (dynamic tables) Data. Stream API (streams, windows) val stats = stream. key. By("sensor"). time. Window(Time. seconds(5)). sum((a, b) -> a. add(b)) Stateful Event- Process Function (events, state, time) Driven Applications def process. Element(event: My. Event, ctx: Context, out: Collector[Result]) = { // work with event and state (event, state. value) match { … } out. collect(…) // emit events state. update(…) // modify state // schedule a timer callback ctx. timer. Service. register Event. Timer(event. timestamp + 500) } 9

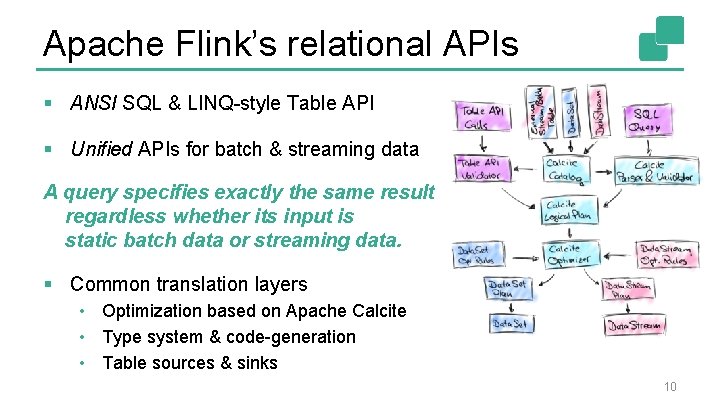

Apache Flink’s relational APIs § ANSI SQL & LINQ-style Table API § Unified APIs for batch & streaming data A query specifies exactly the same result regardless whether its input is static batch data or streaming data. § Common translation layers • Optimization based on Apache Calcite • Type system & code-generation • Table sources & sinks 10

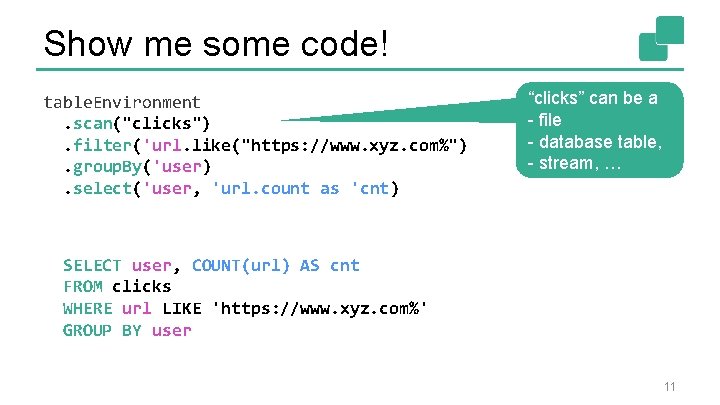

Show me some code! table. Environment. scan("clicks"). filter('url. like("https: //www. xyz. com%"). group. By('user). select('user, 'url. count as 'cnt) “clicks” can be a - file - database table, - stream, … SELECT user, COUNT(url) AS cnt FROM clicks WHERE url LIKE 'https: //www. xyz. com%' GROUP BY user 11

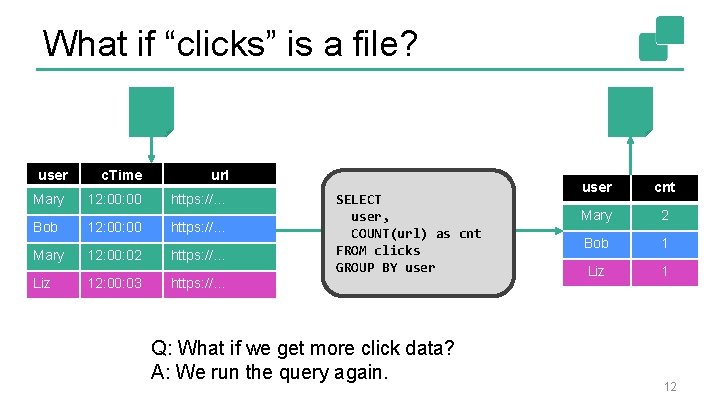

What if “clicks” is a file? user c. Time url Mary 12: 00 https: //… Bob 12: 00 https: //… Mary 12: 00: 02 https: //… Liz 12: 00: 03 https: //… SELECT user, COUNT(url) as cnt FROM clicks GROUP BY user Q: What if we get more click data? A: We run the query again. user cnt Mary 2 Bob 1 Liz 1 12

What if “clicks” is a stream? § We want the same results as for batch input! § Does SQL work on streams as well? 13

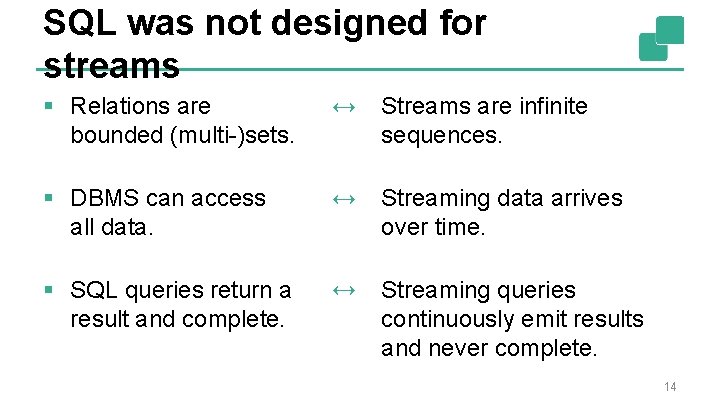

SQL was not designed for streams § Relations are bounded (multi-)sets. ↔ Streams are infinite sequences. § DBMS can access all data. ↔ Streaming data arrives over time. § SQL queries return a result and complete. ↔ Streaming queries continuously emit results and never complete. 14

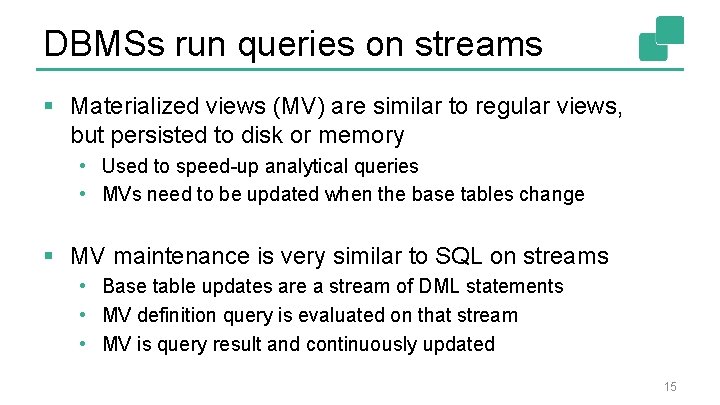

DBMSs run queries on streams § Materialized views (MV) are similar to regular views, but persisted to disk or memory • Used to speed-up analytical queries • MVs need to be updated when the base tables change § MV maintenance is very similar to SQL on streams • Base table updates are a stream of DML statements • MV definition query is evaluated on that stream • MV is query result and continuously updated 15

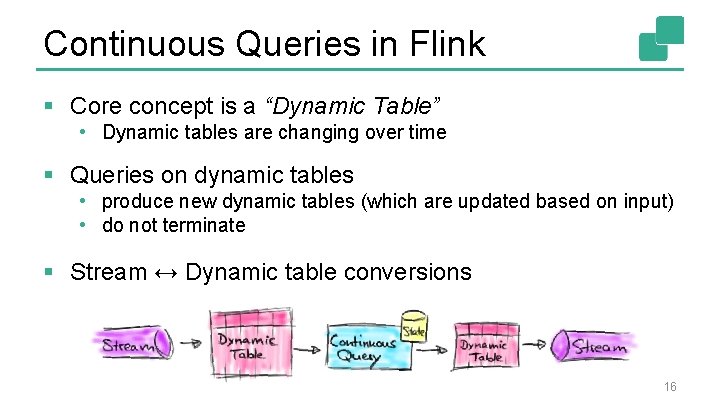

Continuous Queries in Flink § Core concept is a “Dynamic Table” • Dynamic tables are changing over time § Queries on dynamic tables • produce new dynamic tables (which are updated based on input) • do not terminate § Stream ↔ Dynamic table conversions 16

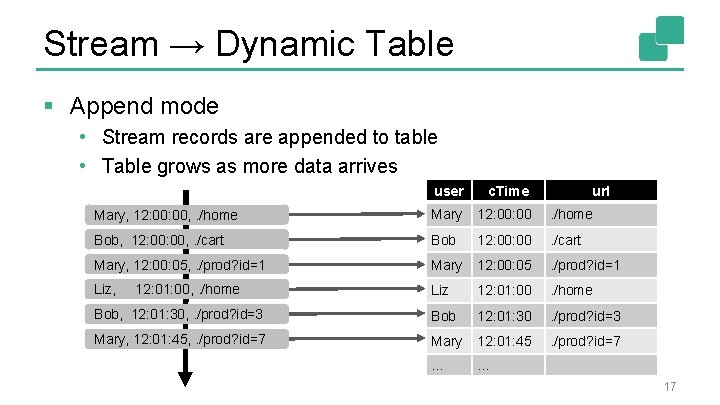

Stream → Dynamic Table § Append mode • Stream records are appended to table • Table grows as more data arrives user c. Time Mary, 12: 00, . /home Mary 12: 00 . /home Bob, 12: 00, . /cart Bob 12: 00 . /cart Mary, 12: 00: 05, . /prod? id=1 Mary 12: 00: 05 . /prod? id=1 Liz, Liz 12: 01: 00 . /home Bob, 12: 01: 30, . /prod? id=3 Bob 12: 01: 30 . /prod? id=3 Mary, 12: 01: 45, . /prod? id=7 Mary 12: 01: 45 . /prod? id=7 … … 12: 01: 00, . /home url 17

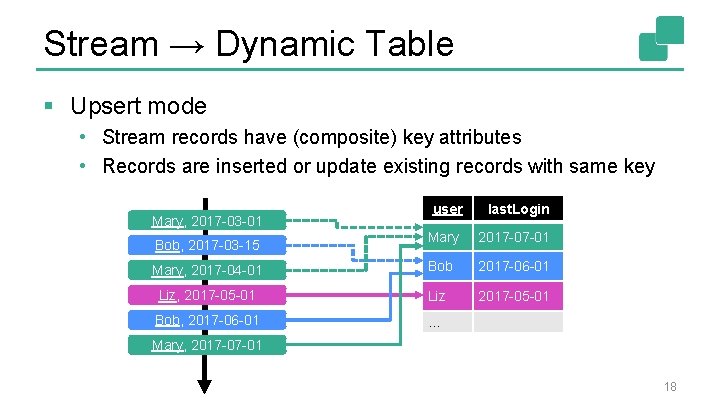

Stream → Dynamic Table § Upsert mode • Stream records have (composite) key attributes • Records are inserted or update existing records with same key user last. Login Mary 2017 -07 -01 Mary, 2017 -04 -01 Bob 2017 -06 -01 Liz, 2017 -05 -01 Liz 2017 -05 -01 Bob, 2017 -06 -01 … Mary, 2017 -03 -01 Bob, 2017 -03 -15 Mary, 2017 -07 -01 18

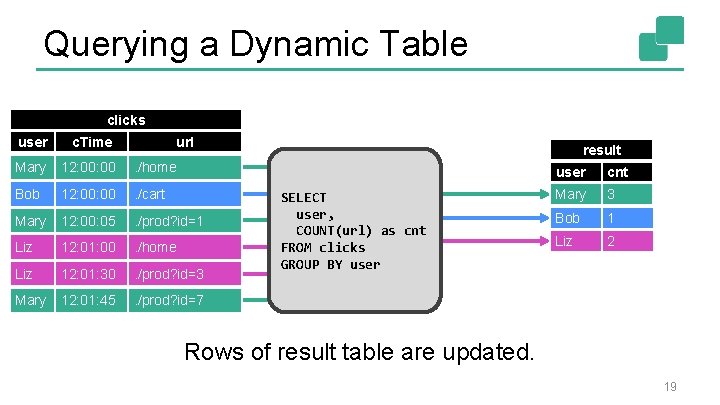

Querying a Dynamic Table clicks user c. Time url Mary 12: 00 . /home Bob 12: 00 . /cart Mary 12: 00: 05 . /prod? id=1 Liz 12: 01: 00 . /home Liz 12: 01: 30 . /prod? id=3 Mary 12: 01: 45 . /prod? id=7 result SELECT user, COUNT(url) as cnt FROM clicks GROUP BY user cnt Mary 2 3 1 Bob 1 Liz 1 2 Rows of result table are updated. 19

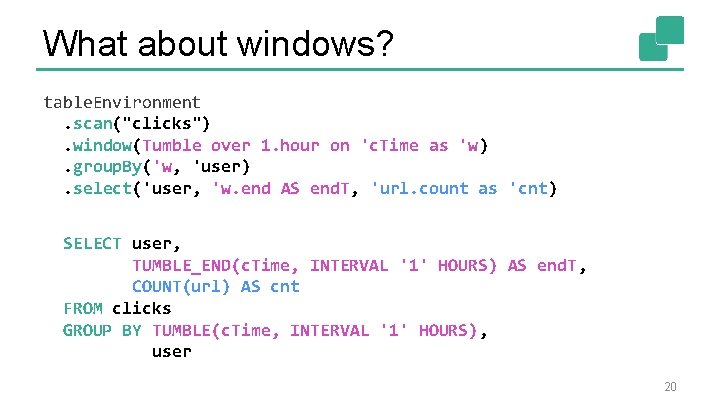

What about windows? table. Environment. scan("clicks"). window(Tumble over 1. hour on 'c. Time as 'w). group. By('w, 'user). select('user, 'w. end AS end. T, 'url. count as 'cnt) SELECT user, TUMBLE_END(c. Time, INTERVAL '1' HOURS) AS end. T, COUNT(url) AS cnt FROM clicks GROUP BY TUMBLE(c. Time, INTERVAL '1' HOURS), user 20

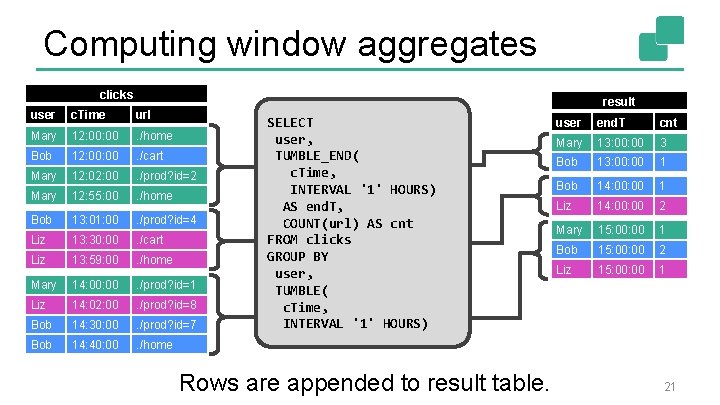

Computing window aggregates clicks result user c. Time url Mary 12: 00 . /home Bob 12: 00 . /cart Mary 12: 00 . /prod? id=2 Mary 12: 55: 00 . /home Bob 13: 01: 00 . /prod? id=4 Liz 13: 30: 00 . /cart Liz 13: 59: 00 . /home Mary 14: 00 . /prod? id=1 Liz 14: 02: 00 . /prod? id=8 Bob 14: 30: 00 . /prod? id=7 Bob 14: 40: 00 . /home SELECT user, TUMBLE_END( c. Time, INTERVAL '1' HOURS) AS end. T, COUNT(url) AS cnt FROM clicks GROUP BY user, TUMBLE( c. Time, INTERVAL '1' HOURS) Rows are appended to result table. user end. T cnt Mary 13: 00 3 Bob 13: 00 1 Bob 14: 00 1 Liz 14: 00 2 Mary 15: 00 1 Bob 15: 00 2 Liz 15: 00 1 21

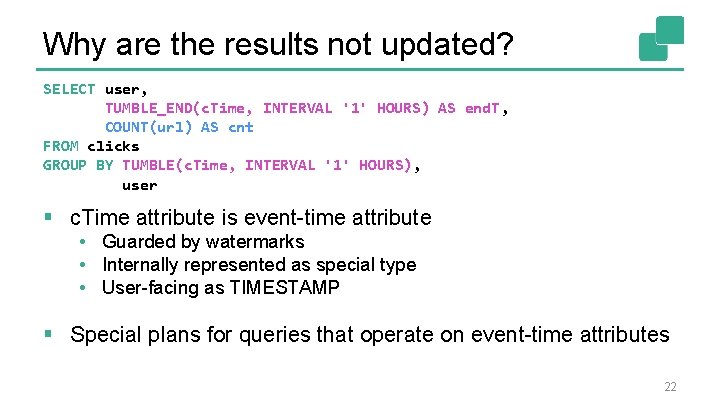

Why are the results not updated? SELECT user, TUMBLE_END(c. Time, INTERVAL '1' HOURS) AS end. T, COUNT(url) AS cnt FROM clicks GROUP BY TUMBLE(c. Time, INTERVAL '1' HOURS), user § c. Time attribute is event-time attribute • Guarded by watermarks • Internally represented as special type • User-facing as TIMESTAMP § Special plans for queries that operate on event-time attributes 22

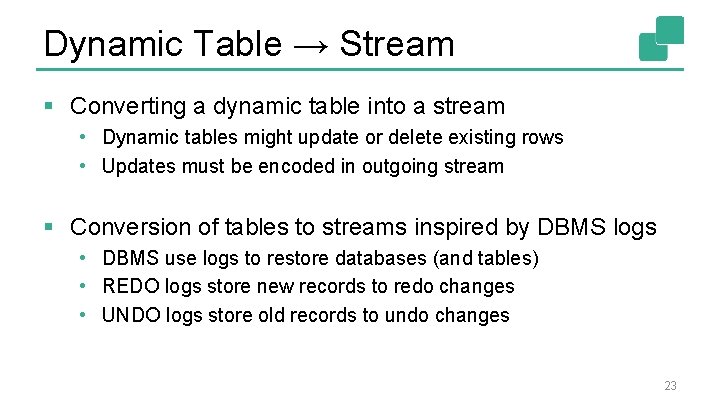

Dynamic Table → Stream § Converting a dynamic table into a stream • Dynamic tables might update or delete existing rows • Updates must be encoded in outgoing stream § Conversion of tables to streams inspired by DBMS logs • DBMS use logs to restore databases (and tables) • REDO logs store new records to redo changes • UNDO logs store old records to undo changes 23

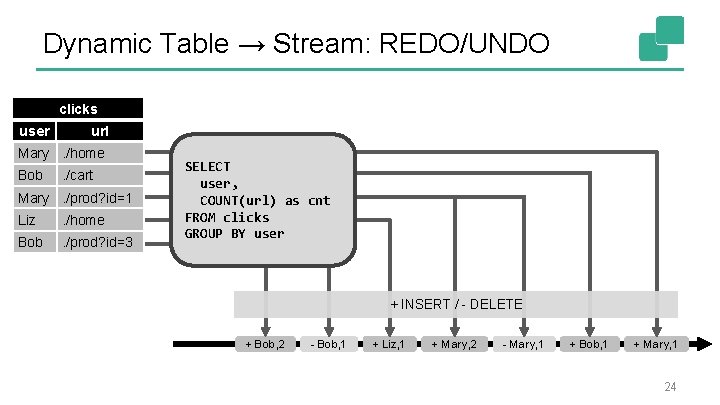

Dynamic Table → Stream: REDO/UNDO clicks user url Mary. /home Bob . /cart Mary. /prod? id=1 Liz . /home Bob . /prod? id=3 SELECT user, COUNT(url) as cnt FROM clicks GROUP BY user + INSERT / - DELETE + Bob, 2 - Bob, 1 + Liz, 1 + Mary, 2 - Mary, 1 + Bob, 1 + Mary, 1 24

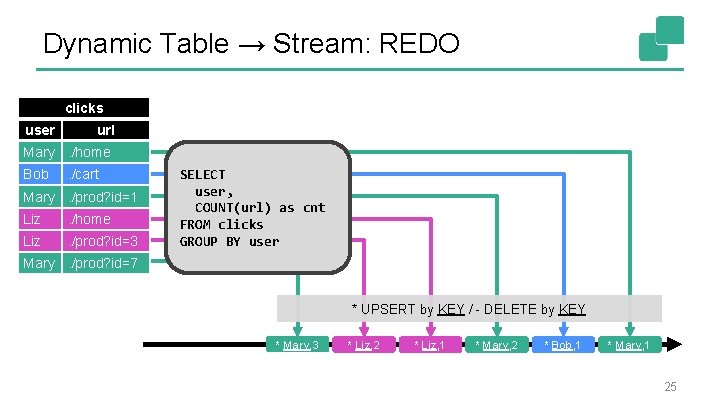

Dynamic Table → Stream: REDO clicks user url Mary. /home Bob . /cart Mary. /prod? id=1 Liz . /home Liz . /prod? id=3 SELECT user, COUNT(url) as cnt FROM clicks GROUP BY user Mary. /prod? id=7 * UPSERT by KEY / - DELETE by KEY * Mary, 3 * Liz, 2 * Liz, 1 * Mary, 2 * Bob, 1 * Mary, 1 25

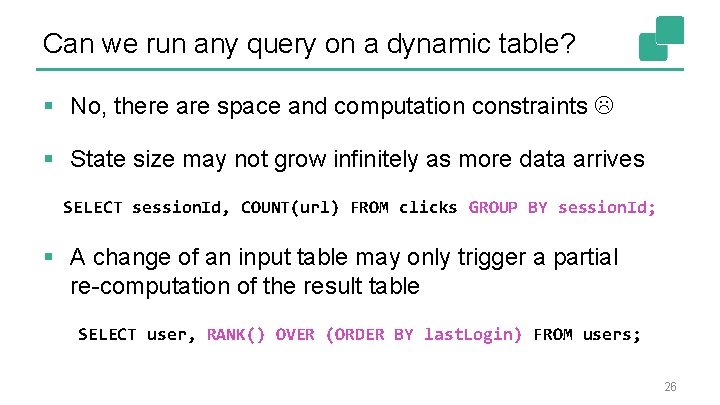

Can we run any query on a dynamic table? § No, there are space and computation constraints § State size may not grow infinitely as more data arrives SELECT session. Id, COUNT(url) FROM clicks GROUP BY session. Id; § A change of an input table may only trigger a partial re-computation of the result table SELECT user, RANK() OVER (ORDER BY last. Login) FROM users; 26

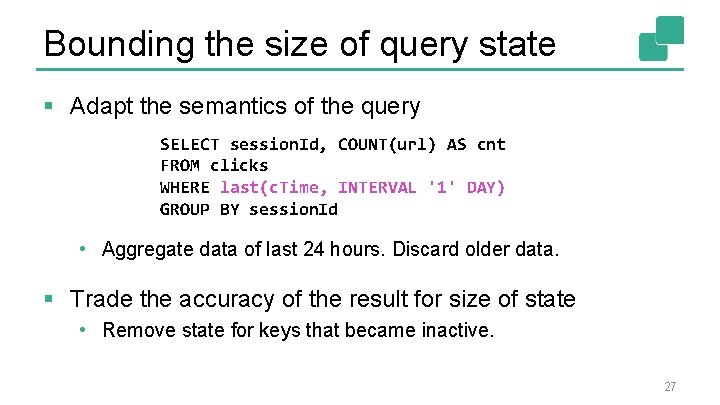

Bounding the size of query state § Adapt the semantics of the query SELECT session. Id, COUNT(url) AS cnt FROM clicks WHERE last(c. Time, INTERVAL '1' DAY) GROUP BY session. Id • Aggregate data of last 24 hours. Discard older data. § Trade the accuracy of the result for size of state • Remove state for keys that became inactive. 27

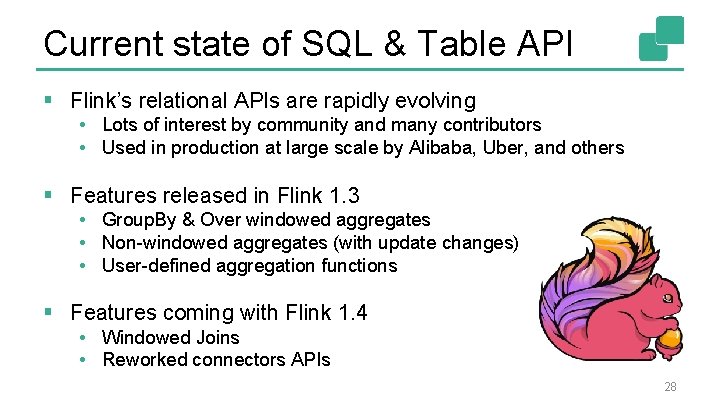

Current state of SQL & Table API § Flink’s relational APIs are rapidly evolving • Lots of interest by community and many contributors • Used in production at large scale by Alibaba, Uber, and others § Features released in Flink 1. 3 • Group. By & Over windowed aggregates • Non-windowed aggregates (with update changes) • User-defined aggregation functions § Features coming with Flink 1. 4 • Windowed Joins • Reworked connectors APIs 28

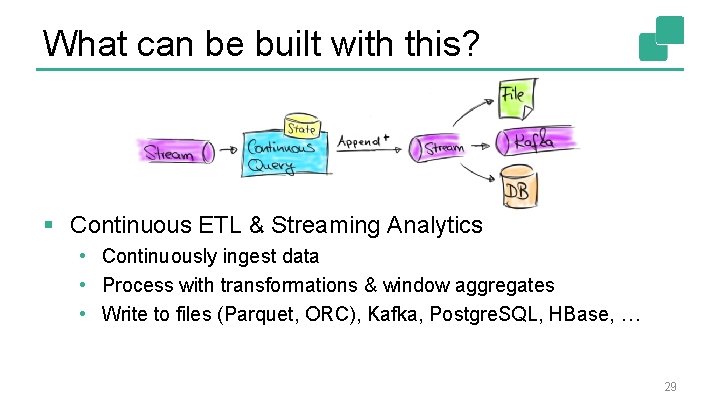

What can be built with this? § Continuous ETL & Streaming Analytics • Continuously ingest data • Process with transformations & window aggregates • Write to files (Parquet, ORC), Kafka, Postgre. SQL, HBase, … 29

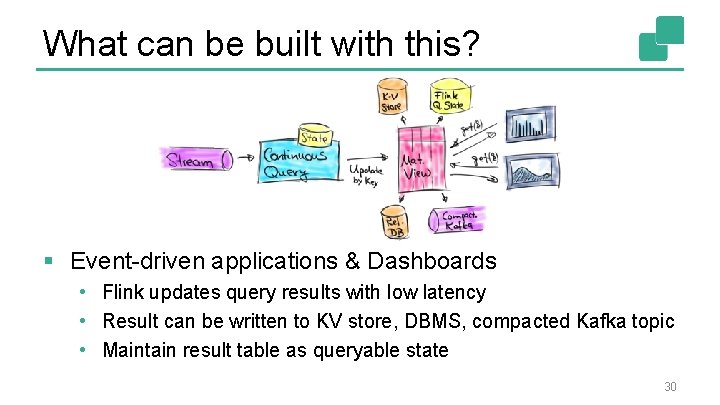

What can be built with this? § Event-driven applications & Dashboards • Flink updates query results with low latency • Result can be written to KV store, DBMS, compacted Kafka topic • Maintain result table as queryable state 30

Wrap up! § Used in production heavily at Alibaba, Uber, and others § Unified Batch and Stream Processing § Lots of great features • Continuously updating results like Materialized Views • Sophisticated event-time model with retractions • User-defined scalar, table & aggregation functions § Check it out! 31

Thank you! @fhueske @Apache. Flink @data. Artisans Available on O’Reilly Early Release!

We are hiring! data-artisans. com/careers

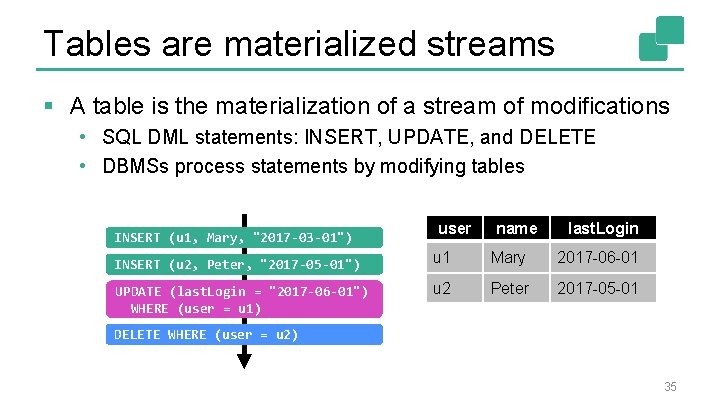

Tables are materialized streams § A table is the materialization of a stream of modifications • SQL DML statements: INSERT, UPDATE, and DELETE • DBMSs process statements by modifying tables INSERT (u 1, Mary, "2017 -03 -01") INSERT (u 2, Peter, "2017 -05 -01") UPDATE (last. Login = "2017 -06 -01") WHERE (user = u 1) user name last. Login u 1 Mary 2017 -03 -01 2017 -06 -01 u 2 Peter 2017 -05 -01 DELETE WHERE (user = u 2) 35

Flink’s Data. Stream API § The Data. Stream API is very expressive • Application logic implemented as user-defined functions • Windows, triggers, evictors, state, timers, async calls, … § Many applications follow similar patterns • Do not require the expressiveness of the Data. Stream API • Can be specified more concisely and easily with a DSL Q: What’s the most popular DSL for data processing? A: SQL! 36

- Slides: 36