
SHARCNET 2 Moving Forward…

Partner Institutions
• Academic
  – Brock University
  – McMaster University
  – University of Guelph
  – University of Ontario Institute of Technology
  – University of Waterloo
  – University of Western Ontario
  – University of Windsor
  – Wilfrid Laurier University
  – York University
• Research Institutes
  – Robarts Institute
  – Fields Institute
  – Perimeter Institute
• Private Sector
  – Hewlett Packard
  – SGI
  – Quadrics Supercomputers World
  – Platform Computing
  – Nortel Networks
  – Bell Canada
• Government
  – Canada Foundation for Innovation
  – Ontario Innovation Trust
  – Ontario R&D Challenge Fund
  – Optical Regional Advanced Network of Ontario (ORANO)

Philosophy
• “A multi-university and college, interdisciplinary institute with academic-industry-government partnerships, enabling computational research in critical areas of science, engineering and business.”
• SHARCNET provides access to, and support for, high performance computing resources for the research community
  – Goals:
    • reduce time to science
    • provide otherwise unattainable compute resources
    • enable remote collaboration

SHARCNET Resources: Three Perspectives
• People
  – User support: System Administrator, HPC Analyst
  – Administrative support: Site Leader
• Hardware: machines, processors, networking
• Software: design, compilers, libraries, development tools

High Performance Computing Analyst
• A point of contact for development support and education
  – central resource
  – each analyst has particular areas of expertise; direct your question to the analyst best equipped to help with it
  – http://www.sharcnet.ca
• Analyst’s role:
  – development support
  – analysis of requirements
  – development/delivery of educational resources
  – research computing consultations

System Administrator
• Administration and maintenance of installations
  – responsible for specific cluster(s)
  – typically focus on particular clusters or packages
• Administrator’s role:
  – user accounts
  – system software and middleware
  – hardware and software maintenance
  – research computing consultations

Site Leader
• Liaison between SHARCNET and the user community at a specific site
  – primary point of contact for the research community at that site
• Site Leader’s role:
  – site coordination
  – representative for local researchers
  – user comments and questions
  – event organization
  – political intrigue

Hardware Resources: Networking
• Sites are interconnected by dedicated high-bandwidth fiber links
  – fast access to all hardware regardless of physical location
  – common file access (distributed file systems)
  – shared resources
  – dedicated channel for Access Grid
• 10 Gbps/1 Gbps dedicated connection between all sites
Installation: All Sites
ETA: Q4 2005
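For a sense of scale, an idealized estimate of how long a bulk copy between sites takes on these links is simply size × 8 / link rate. The sketch below assumes the full line rate is achieved and ignores protocol and disk overhead; the `efficiency` parameter is a hypothetical knob for that overhead, so real transfers will be slower.

```python
def transfer_seconds(size_bytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Idealized time to move size_bytes over a link rated at link_gbps.

    efficiency (0 < efficiency <= 1) is a hypothetical factor for protocol and
    storage overhead; 1.0 means the full line rate is achieved.
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("need link_gbps > 0 and 0 < efficiency <= 1")
    bits = size_bytes * 8                          # bytes -> bits
    return bits / (link_gbps * 1e9 * efficiency)   # Gbps -> bits per second


if __name__ == "__main__":
    one_tb = 1e12  # decimal terabyte (10**12 bytes)
    for gbps in (10, 1):
        secs = transfer_seconds(one_tb, gbps)
        print(f"1 TB over {gbps} Gbps: {secs:.0f} s (~{secs / 60:.0f} min)")
```

At full line rate, 1 TB (10¹² bytes) takes about 800 s (roughly 13 minutes) over a 10 Gbps link and about 8000 s (roughly 2.2 hours) over a 1 Gbps link.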

Hardware: Capability Cluster
• Architecture:
  – substantial number of 64-bit CPUs, emphasizing large, fast memory and a high-bandwidth/low-latency interconnect
  – dual-processor systems (2-way nodes, ~1500 compute cores)
  – Opteron processors, 4 GB RAM per CPU, 70 TB onsite disk storage
• Interconnect:
  – fast, extremely low latency, high bandwidth (Quadrics)
• Intended use:
  – large-scale, fine-grained parallel, memory-intensive MPI jobs
Installation: McMaster University
ETA: Q3 2005

Hardware: Utility Parallel Cluster
• Architecture:
  – reasonable number of 64-bit CPUs with mid-range performance across the board, for general-purpose parallel applications
  – dual-processor, dual-core systems (4-way nodes, ~1000 compute cores)
  – Opteron processors, 2 GB RAM per CPU, 70 TB onsite disk storage
• Interconnect:
  – low latency, good bandwidth (InfiniBand/Myrinet/Quadrics)
• Intended use:
  – small- to medium-scale MPI and arbitrary parallel jobs; small-scale SMP
Installation: University of Guelph
ETA: Q4 2005

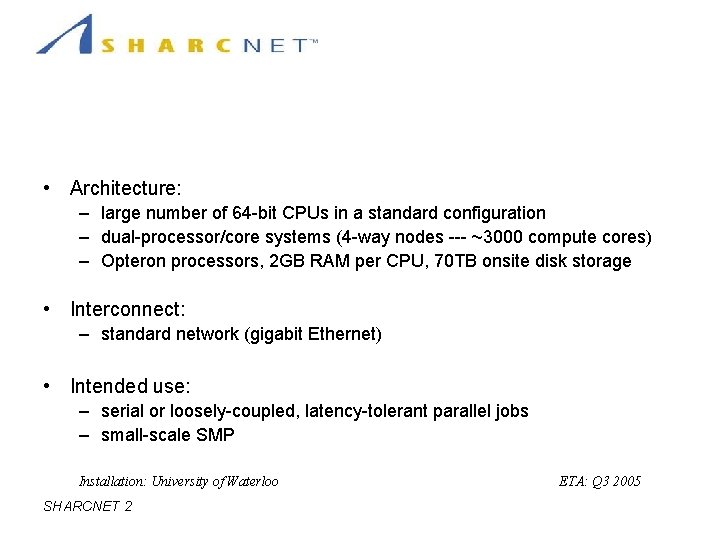

Hardware: Throughput Cluster
• Architecture:
  – large number of 64-bit CPUs in a standard configuration
  – dual-processor, dual-core systems (4-way nodes, ~3000 compute cores)
  – Opteron processors, 2 GB RAM per CPU, 70 TB onsite disk storage
• Interconnect:
  – standard network (gigabit Ethernet)
• Intended use:
  – serial or loosely coupled, latency-tolerant parallel jobs
  – small-scale SMP
Installation: University of Waterloo
ETA: Q3 2005

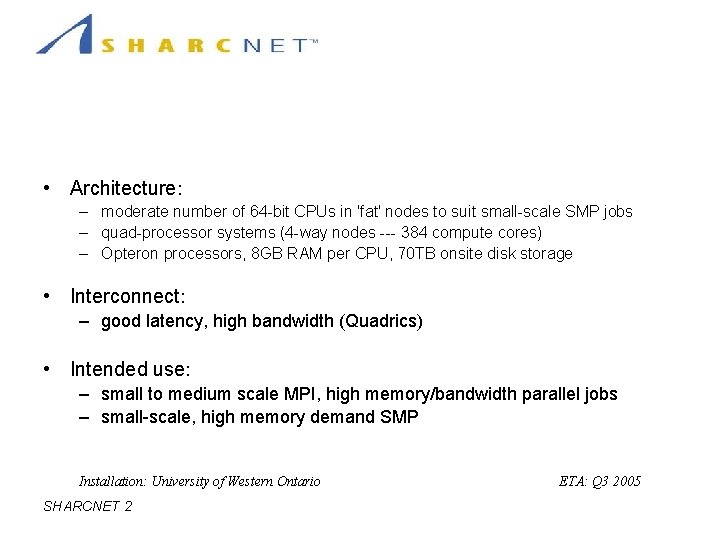

Hardware: SMP-Friendly Cluster
• Architecture:
  – moderate number of 64-bit CPUs in 'fat' nodes to suit small-scale SMP jobs
  – quad-processor systems (4-way nodes, 384 compute cores)
  – Opteron processors, 8 GB RAM per CPU, 70 TB onsite disk storage
• Interconnect:
  – good latency, high bandwidth (Quadrics)
• Intended use:
  – small- to medium-scale MPI, high memory/bandwidth parallel jobs
  – small-scale SMP with high memory demand
Installation: University of Western Ontario
ETA: Q3 2005

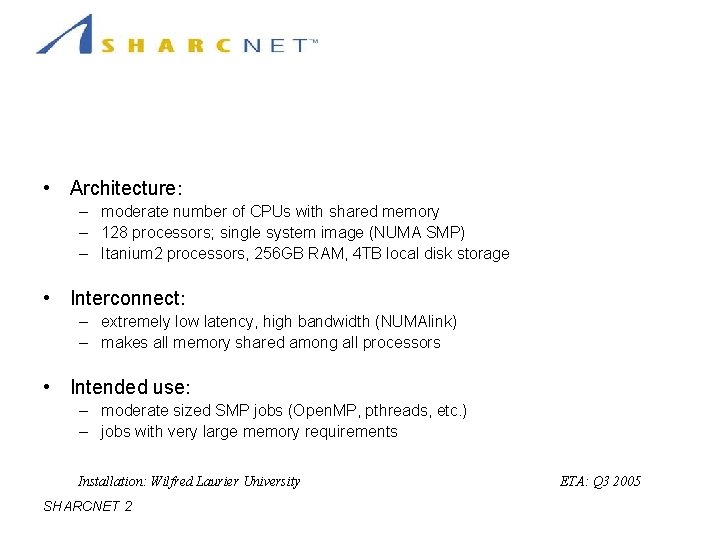

Hardware: Mid-range SMP System
• Architecture:
  – moderate number of CPUs with shared memory
  – 128 processors; single system image (NUMA SMP)
  – Itanium 2 processors, 256 GB RAM, 4 TB local disk storage
• Interconnect:
  – extremely low latency, high bandwidth (NUMAlink)
  – makes all memory shared among all processors
• Intended use:
  – moderate-sized SMP jobs (OpenMP, pthreads, etc.)
  – jobs with very large memory requirements
Installation: Wilfrid Laurier University
ETA: Q3 2005

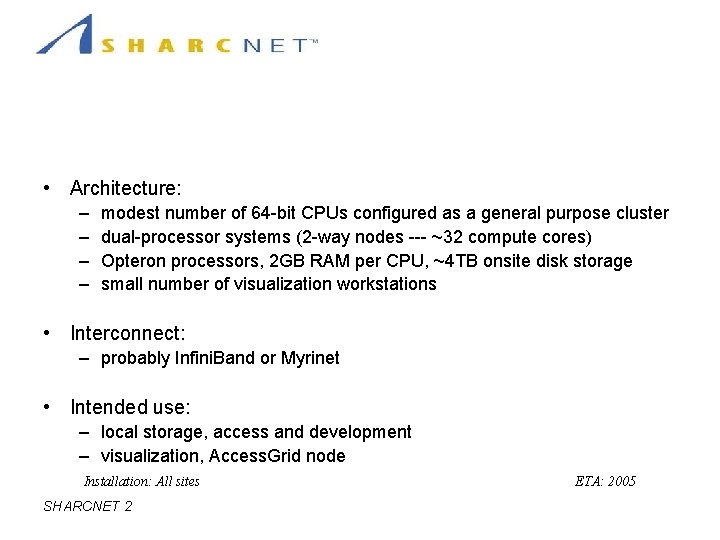

Hardware: Point of Presence Clusters
• Architecture:
  – modest number of 64-bit CPUs configured as a general-purpose cluster
  – dual-processor systems (2-way nodes, ~32 compute cores)
  – Opteron processors, 2 GB RAM per CPU, ~4 TB onsite disk storage
  – small number of visualization workstations
• Interconnect:
  – probably InfiniBand or Myrinet
• Intended use:
  – local storage, access and development
  – visualization, Access Grid node
Installation: All sites
ETA: 2005
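Node width and per-CPU memory together bound what a single job can use on each system. A small sketch deriving memory per node and aggregate memory from the figures on the hardware slides, assuming "GB RAM per CPU" means per compute core and using the approximate core counts given; the Mid-range SMP system is modelled as one 128-way node holding 256 GB.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    name: str
    cores: int             # approximate compute-core count from the slides
    cores_per_node: int    # the "n-way" node width
    gb_per_core: float     # assumption: "GB RAM per CPU" means per core

    @property
    def nodes(self) -> int:
        return self.cores // self.cores_per_node

    @property
    def gb_per_node(self) -> float:
        # Upper bound for one shared-memory (OpenMP/pthreads) job.
        return self.cores_per_node * self.gb_per_core

    @property
    def aggregate_gb(self) -> float:
        return self.cores * self.gb_per_core


CLUSTERS = [
    Cluster("Capability", 1500, 2, 4),
    Cluster("Utility parallel", 1000, 4, 2),
    Cluster("Throughput", 3000, 4, 2),
    Cluster("SMP-friendly", 384, 4, 8),
    Cluster("Mid-range SMP", 128, 128, 2),   # 256 GB shared by 128 CPUs
    Cluster("Point of presence", 32, 2, 2),  # per site
]

if __name__ == "__main__":
    for c in CLUSTERS:
        print(f"{c.name:18} {c.nodes:5d} nodes {c.gb_per_node:6.0f} GB/node "
              f"{c.aggregate_gb:7.0f} GB total")
```

Under these assumptions a shared-memory job on the Capability Cluster is limited to 8 GB (one 2-way node), while the SMP-Friendly Cluster offers 32 GB per node and the Mid-range SMP System 256 GB.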

Software Resources
• Compilers
  – C, C++, Fortran
• Key parallel development support
  – MPI (Message Passing Interface)
  – Multi-threading (pthreads, OpenMP)
• Libraries and Tools
  – BLAS, LAPACK, FFTW, PETSc, …
  – debugging, profiling, performance tools
• Common across clusters
  – plus some cluster-specific tools
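Running real MPI code needs an MPI library and launcher, so as a stand-in, here is a plain-Python sketch of the block decomposition many MPI programs use to split N work items across P ranks. In an actual MPI code each rank would obtain its own rank and P from `MPI_Comm_rank` and `MPI_Comm_size`, then combine partial results with a collective such as `MPI_Reduce`; here the ranks are simulated in a serial loop, and `block_range` and `simulated_parallel_sum` are hypothetical names.

```python
def block_range(n_items: int, n_ranks: int, rank: int) -> tuple[int, int]:
    """Half-open [start, end) slice of n_items owned by `rank` out of n_ranks.

    The first (n_items % n_ranks) ranks get one extra item, so chunk sizes
    differ by at most one.
    """
    if not 0 <= rank < n_ranks:
        raise ValueError("rank must be in [0, n_ranks)")
    base, extra = divmod(n_items, n_ranks)
    start = rank * base + min(rank, extra)
    end = start + base + (1 if rank < extra else 0)
    return start, end


def simulated_parallel_sum(data: list[float], n_ranks: int) -> float:
    """Serially mimic n_ranks ranks each summing their block, then a reduction."""
    partials = []
    for rank in range(n_ranks):
        start, end = block_range(len(data), n_ranks, rank)
        partials.append(sum(data[start:end]))  # what each rank computes locally
    return sum(partials)  # stands in for a sum reduction such as MPI_Reduce


if __name__ == "__main__":
    print([block_range(10, 4, r) for r in range(4)])
    print(simulated_parallel_sum(list(range(10)), 4))
```

Giving the remainder to the lowest ranks keeps the load imbalance to at most one item per rank, which matters most on the fine-grained MPI clusters where every rank waits for the slowest one.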

Unified Account System
• User accounts unified across all clusters and the web
  – your files are available no matter which cluster you log into
• PI accounts are disabled if no research is reported
  – SHARCNET must report results to maintain our funding
• Sponsors must re-enable subsidiary accounts annually
  – prevents old student accounts from building up
  – files will be archived
• One account per person (no sharing!)
  – accounts are free and easy to obtain

Filesystems
• Single home directory visible on every machine
• Per-cluster /work and /scratch; per-node /tmp
• /home quota is 200 MB (tentative)
  – source code only
  – on a RAID file system
  – will be backed up and replicated
• put/get interface for archiving other files to long-term storage
• Environment variables to help users organize their work
  – $ARCH, $CLUSTER, $SCRATCH, $WORK
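A minimal sketch of two habits this slide encourages: checking a source tree against the tentative 200 MB /home quota before keeping it there, and deriving a per-cluster output directory from $WORK, $CLUSTER and $ARCH. The function names, the $WORK/<job>/<cluster>/<arch> layout, and reading "200 MB" as 200 × 1024² bytes are assumptions for illustration, not SHARCNET conventions.

```python
import os
from typing import Mapping, Optional

HOME_QUOTA_BYTES = 200 * 1024 * 1024  # tentative 200 MB quota (MiB assumed)


def tree_size_bytes(root: str) -> int:
    """Total size of regular files under root (symlinks are not followed)."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if not os.path.islink(path):
                total += os.path.getsize(path)
    return total


def fits_home_quota(root: str, quota: int = HOME_QUOTA_BYTES) -> bool:
    return tree_size_bytes(root) <= quota


def run_dir(job_name: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Hypothetical per-cluster, per-architecture output directory under $WORK.

    Falls back to the current directory and placeholder names when the
    variables are unset (e.g. when testing off-cluster).
    """
    env = os.environ if env is None else env
    work = env.get("WORK", os.getcwd())
    cluster = env.get("CLUSTER", "unknown-cluster")
    arch = env.get("ARCH", "unknown-arch")
    return os.path.join(work, job_name, cluster, arch)


if __name__ == "__main__":
    src = "."  # e.g. a source tree you intend to keep in /home
    size_mb = tree_size_bytes(src) / (1024 * 1024)
    print(f"{src}: {size_mb:.1f} MB, fits /home quota: {fits_home_quota(src)}")
    print("job output directory:", run_dir("my_simulation"))
```

Keying the output path on $CLUSTER and $ARCH keeps results and binaries from different clusters apart, while the single shared home directory holds only the source.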

Running Jobs
• Unified user commands: submit, show, kill, …
  – same interface to the scheduler on every cluster
• Fairshare based on usage across all clusters
  – ensures all users get fair access to all resources
• Large projects can apply for “cycle grants”
  – increased priority for a period of time
• Priority bias for particular jobs, depending on the specialty of the cluster
  – jobs should be directed to the cluster best suited to their requirements
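Matching a job to "the cluster best suited to its requirements" can be sketched as a preference list per job type, filtered by what each machine can physically hold. The rules below loosely follow each hardware slide's "Intended use"; the preference orders, the 256-core boundary for "large-scale" MPI, and the reading of "GB RAM per CPU" as per core are all hypothetical. This is not a SHARCNET tool, and it does not model the scheduler's fairshare or priority bias. The Point of Presence clusters are omitted, since they serve local development.

```python
# Figures from the hardware slides: (approx. total cores, cores per node, GB per core).
# Assumption: "GB RAM per CPU" is read as GB per compute core.
HARDWARE = {
    "Capability": (1500, 2, 4.0),
    "Utility parallel": (1000, 4, 2.0),
    "Throughput": (3000, 4, 2.0),
    "SMP-friendly": (384, 4, 8.0),
    "Mid-range SMP": (128, 128, 2.0),  # one 128-way NUMA system, 256 GB
}

# Candidate clusters per job kind, in order of preference, loosely following
# each slide's "Intended use". Illustrative only, not a SHARCNET policy.
PREFERENCE = {
    "serial": ["Throughput", "SMP-friendly", "Mid-range SMP"],
    "smp": ["Throughput", "SMP-friendly", "Mid-range SMP"],
    "loose": ["Throughput", "Utility parallel"],  # latency-tolerant parallel
    "mpi_small": ["Utility parallel", "SMP-friendly", "Capability"],
    "mpi_large": ["Capability", "Utility parallel"],
}

LARGE_MPI_CORES = 256  # hypothetical boundary between medium- and large-scale MPI


def _fits(name: str, kind: str, cores: int, mem_gb: float) -> bool:
    total_cores, per_node, gb_per_core = HARDWARE[name]
    if kind in ("serial", "smp"):
        # Serial and shared-memory jobs must fit inside a single node.
        return cores <= per_node and mem_gb <= per_node * gb_per_core
    # Distributed jobs: enough cores overall and enough memory per core.
    return cores <= total_cores and mem_gb / cores <= gb_per_core


def suggest_cluster(kind: str, cores: int, mem_gb: float) -> str:
    """Suggest a cluster for a job.

    kind: "serial", "smp" (OpenMP/pthreads), "loose" (loosely coupled,
    latency tolerant) or "mpi"; mem_gb is the job's total memory.
    """
    if cores < 1:
        raise ValueError("cores must be at least 1")
    if kind == "mpi":
        kind = "mpi_large" if cores >= LARGE_MPI_CORES else "mpi_small"
    if kind not in PREFERENCE:
        raise ValueError(f"unknown job kind: {kind!r}")
    for name in PREFERENCE[kind]:
        if _fits(name, kind, cores, mem_gb):
            return name
    raise ValueError("no cluster on the slides fits this job")


if __name__ == "__main__":
    for job in [("serial", 1, 1), ("mpi", 512, 1024), ("smp", 64, 200)]:
        print(job, "->", suggest_cluster(*job))
```

For example, a 64-rank MPI job needing 192 GB in total (3 GB per rank) is steered to the SMP-Friendly Cluster, because 2 GB per CPU on the Utility Parallel Cluster is too little.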

Conclusion
• Huge increase in resources
• Common tools and interface on all clusters
• Efficient access to all resources regardless of actual location

- Slides: 19