
Sequential Ensemble Learning for Outlier Detection: A Bias-Variance Perspective

Shebuti Rayana, Wen Zhong, Leman Akoglu

ICDM 2016, Barcelona, Spain

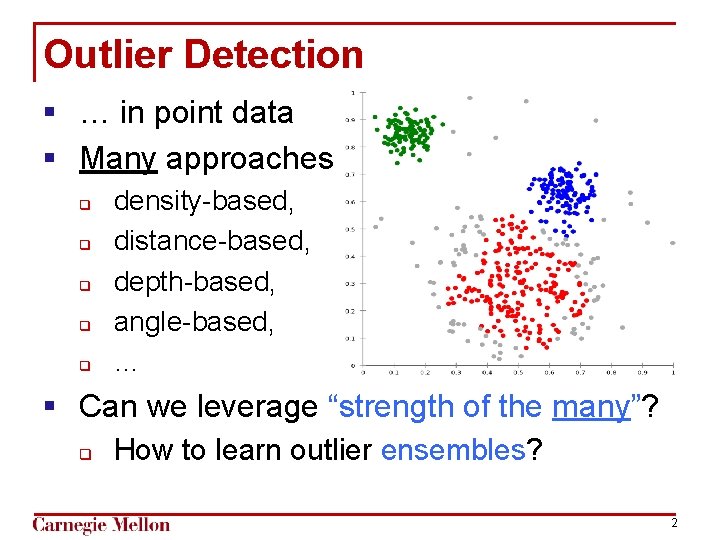

Outlier Detection
- … in point data
- Many approaches
  - density-based, distance-based, depth-based, angle-based, …
- Can we leverage “strength of the many”?
  - How to learn outlier ensembles?

![Ensemble Learning: overview](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-3.jpg)

Ensemble Learning
- Bootstrap Aggregating [Breiman, 1996]
- Adaptive Boosting [Freund & Schapire, 1997]
- Random Forests [Breiman, 2001]

![Ensemble Learning: Bootstrap Aggregating [Breiman, 1996]](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-4.jpg)

Ensemble Learning
- Bootstrap Aggregating [Breiman, 1996]

![Ensemble Learning: Random Forests [Breiman, 2001]](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-5.jpg)

Ensemble Learning
- Random Forests [Breiman, 2001]

![Ensemble Learning: Adaptive Boosting [Freund & Schapire, 1997]](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-6.jpg)

Ensemble Learning
- Adaptive Boosting [Freund & Schapire, 1997]

Outlier Ensembles
1) What detectors? How to assemble?
2) Parallel? Sequential?

Outlier Ensembles
1) What detectors? How to assemble?
   - Feature Bagging [Lazarevic & Kumar, 2005]: avg, max
   - Isolation Forest [Liu, Ting, Zhou; 2008]
   - ULARA [Klementiev, Roth, Small; 2007]: weighted voting
2) Parallel? Sequential?
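The "avg" and "max" combination rules named on this slide can be illustrated with a small sketch. It is not taken from any of the cited papers: the z-score standardization step and the function names `standardize` and `combine` are assumptions made here so that detectors with different score scales can be compared.

```python
import numpy as np

def standardize(scores):
    """Z-score each detector's column so scores are comparable (an assumed preprocessing step)."""
    mu = scores.mean(axis=0)
    sigma = scores.std(axis=0)
    sigma[sigma == 0] = 1.0  # avoid division by zero for constant columns
    return (scores - mu) / sigma

def combine(scores, how="avg"):
    """Combine an (n_points, n_detectors) score matrix into one score per point."""
    z = standardize(np.asarray(scores, dtype=float))
    if how == "avg":
        return z.mean(axis=1)
    if how == "max":
        return z.max(axis=1)
    raise ValueError("how must be 'avg' or 'max'")

# Toy score matrix: 4 points, 2 detectors on very different scales.
scores = np.array([[0.10, 10.0],
                   [0.20, 12.0],
                   [0.90, 11.0],
                   [0.15, 30.0]])
avg_scores = combine(scores, "avg")
max_scores = combine(scores, "max")
```

Averaging tends to smooth out disagreement between detectors, while the maximum lets a single confident detector flag a point.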

![Agreement rates to error rates [Platanios, Blum, Mitchell; 2014]](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-9.jpg)

Agreement rates → error rates [Platanios, Blum, Mitchell; 2014]
- pairwise agreement rates (computed from outputs)
- error rates (unknown)
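To make the idea concrete, here is a minimal sketch of how unknown error rates can be recovered from observable agreement rates in the simplest case. It assumes exactly three detectors with binary outputs, mutually independent errors, and error rates below 0.5; under those assumptions the agreement rate satisfies a_ij = 1 - e_i - e_j + 2 e_i e_j, so 2 a_ij - 1 = (1 - 2 e_i)(1 - 2 e_j). The cited work handles more general settings, and this is not claimed to be the estimator used in the talk; the function names are hypothetical.

```python
import numpy as np

def agreement_rates(labels):
    """Pairwise agreement matrix from an (n_points, m) matrix of binary detector outputs."""
    labels = np.asarray(labels)
    m = labels.shape[1]
    a = np.ones((m, m))
    for i in range(m):
        for j in range(i + 1, m):
            a[i, j] = a[j, i] = np.mean(labels[:, i] == labels[:, j])
    return a

def error_rates_from_agreement(a):
    """Solve for e_1..e_3 assuming independent errors, each below 0.5 (assumption)."""
    c = 2 * np.asarray(a, dtype=float) - 1  # c_ij = (1 - 2 e_i)(1 - 2 e_j)
    e = np.empty(3)
    for i, (j, k) in enumerate([(1, 2), (0, 2), (0, 1)]):
        # (1 - 2 e_i)^2 = c_ij * c_ik / c_jk
        e[i] = 0.5 * (1 - np.sqrt(c[i, j] * c[i, k] / c[j, k]))
    return e

# Round trip: build exact agreement rates from known error rates, then recover them.
e_true = np.array([0.1, 0.2, 0.3])
a = 1 - e_true[:, None] - e_true[None, :] + 2 * np.outer(e_true, e_true)
recovered = error_rates_from_agreement(a)  # only off-diagonal entries of a are used

# Agreement rates themselves need only the detectors' outputs, no ground truth.
toy_agreement = agreement_rates([[1, 1], [0, 1], [0, 0], [1, 1]])
```

The point of the round trip is that no ground-truth labels enter `error_rates_from_agreement`; only agreement between detectors does.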

Outlier Ensembles
1) What detectors? How to assemble?
2) Parallel? Sequential? BOTH!

![Bias-Variance decomposition of Error [Aggarwal & Sathe, 2015]](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-11.jpg)

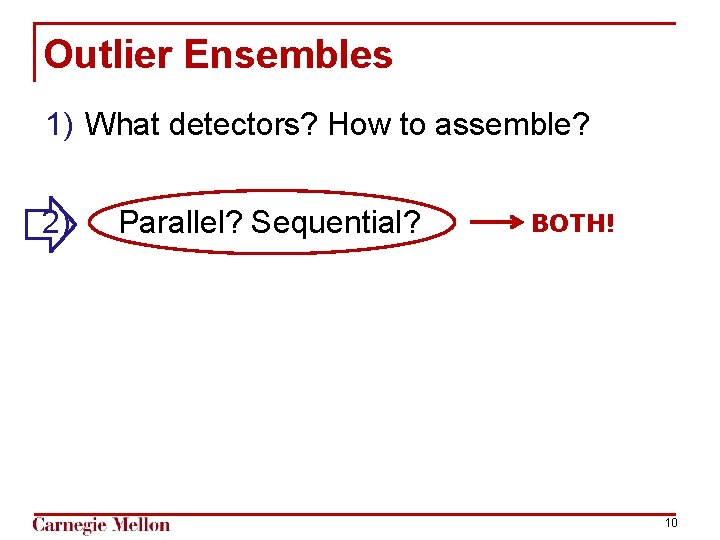

Bias-Variance decomposition of Error [Aggarwal & Sathe, 2015]

expected error = Bias² + Variance
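Written out, the decomposition takes the following form. The notation is an assumption introduced here, not copied from the slide: f(X_i) is the unknown ideal outlier score of point X_i, g(X_i, D) is the detector's score when built on data D, and the expectation is over D. There is no separate noise term because f is treated as a fixed (if unobservable) function.

```latex
\mathbb{E}[\mathrm{MSE}]
  = \frac{1}{n}\sum_{i=1}^{n} \mathbb{E}\!\left[\bigl(f(X_i) - g(X_i, D)\bigr)^2\right]
  = \underbrace{\frac{1}{n}\sum_{i=1}^{n} \bigl(f(X_i) - \mathbb{E}[g(X_i, D)]\bigr)^2}_{\text{Bias}^2}
  + \underbrace{\frac{1}{n}\sum_{i=1}^{n} \mathbb{E}\!\left[\bigl(\mathbb{E}[g(X_i, D)] - g(X_i, D)\bigr)^2\right]}_{\text{Variance}}
```

The cross term vanishes because \mathbb{E}\bigl[\mathbb{E}[g(X_i, D)] - g(X_i, D)\bigr] = 0 for every i.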

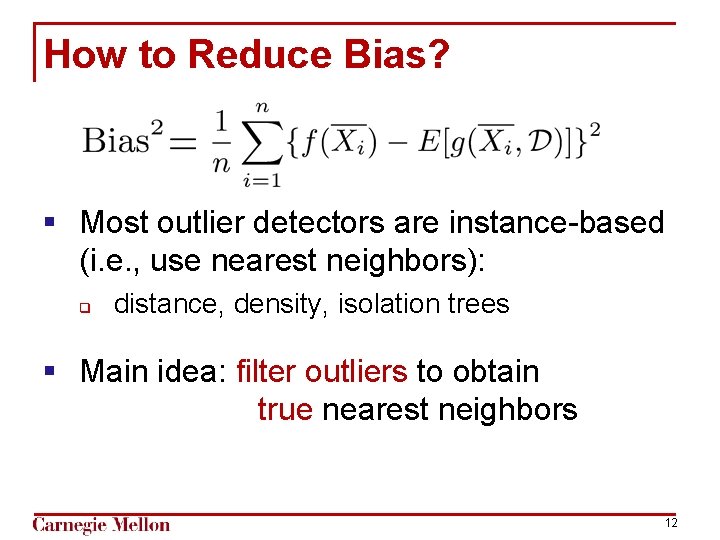

How to Reduce Bias?
- Most outlier detectors are instance-based (i.e., use nearest neighbors):
  - distance, density, isolation trees
- Main idea: filter outliers to obtain true nearest neighbors

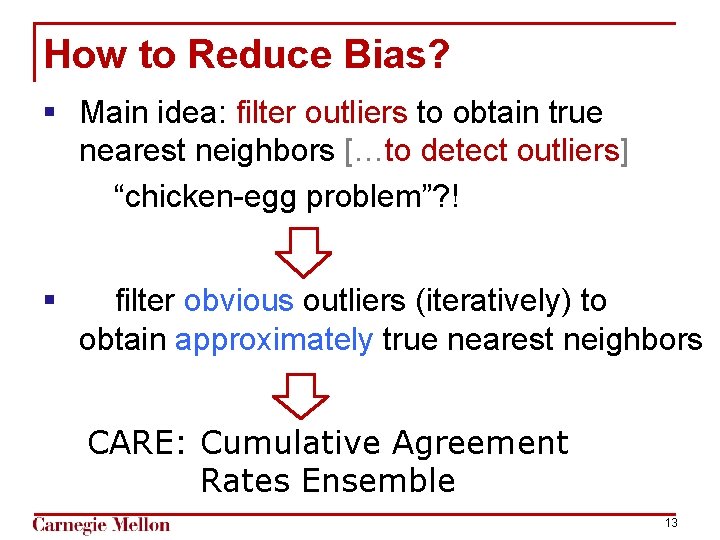

How to Reduce Bias?
- Main idea: filter outliers to obtain true nearest neighbors […to detect outliers]: a “chicken-egg problem”?!
- Filter obvious outliers (iteratively) to obtain approximately true nearest neighbors

CARE: Cumulative Agreement Rates Ensemble
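The filtering idea can be sketched with a plain kNN-distance detector. This is a simplification for illustration, not CARE itself: it removes the top-scoring points outright from the neighbor pool, and the names `knn_scores`, `filtered_knn_scores`, and the parameters `k`, `drop_frac`, `n_iter` are all assumptions. The toy data has a small clump of outliers that partly mask each other, because each clump member finds its clump-mates as nearest neighbors.

```python
import numpy as np

def knn_scores(X, ref_idx, k):
    """Mean distance from every point in X to its k nearest points among X[ref_idx]."""
    ref = X[ref_idx]
    d = np.linalg.norm(X[:, None, :] - ref[None, :, :], axis=2)
    d[ref_idx, np.arange(len(ref_idx))] = np.inf  # a point is not its own neighbor
    return np.sort(d, axis=1)[:, :k].mean(axis=1)

def filtered_knn_scores(X, k=3, drop_frac=0.1, n_iter=3):
    """Repeatedly drop the current top scorers from the neighbor pool, then rescore all points."""
    n = len(X)
    scores = knn_scores(X, np.arange(n), k)
    n_drop = max(1, int(drop_frac * n))
    for _ in range(n_iter):
        keep = np.sort(np.argsort(scores)[: n - n_drop])  # likely inliers only
        scores = knn_scores(X, keep, k)
    return scores

# 5x5 grid of inliers (indices 0..24) plus a tight clump of 3 outliers (indices 25..27).
grid = np.array([[i, j] for i in range(5) for j in range(5)], dtype=float)
clump = np.array([[10.0, 10.0], [10.1, 10.0], [10.0, 10.1]])
X = np.vstack([grid, clump])

baseline = knn_scores(X, np.arange(len(X)), k=3)
filtered = filtered_knn_scores(X, k=3, drop_frac=0.12, n_iter=3)
```

In the unfiltered run, two of each clump member's three neighbors are other outliers, which pulls its score down; once the clump is filtered out of the neighbor pool, its members are scored against inliers only and the gap to the inlier scores widens.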

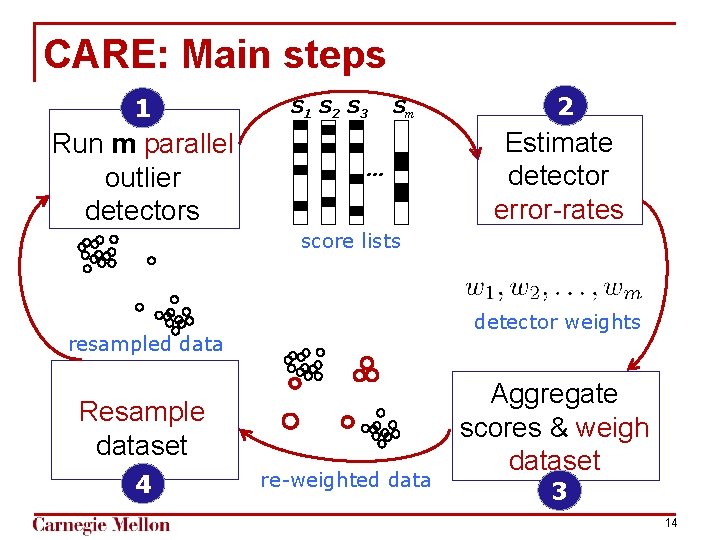

CARE: Main steps
1. Run m parallel outlier detectors (S1, S2, S3, …, Sm) → score lists
2. Estimate detector error-rates → detector weights
3. Aggregate scores & weigh dataset → re-weighted data
4. Resample dataset → resampled data

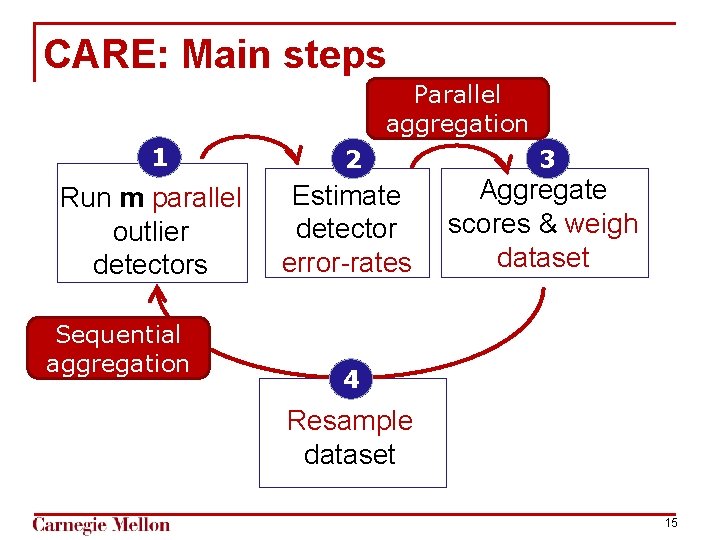

CARE: Main steps (diagram labels: parallel aggregation, sequential aggregation)
1. Run m parallel outlier detectors
2. Estimate detector error-rates
3. Aggregate scores & weigh dataset
4. Resample dataset
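The four steps can be strung together in a rough sketch of the loop. Everything concrete below is an assumption chosen for illustration and should not be read as the authors' implementation: the base detectors are kNN-distance detectors with different k, the detector weights use mean pairwise agreement on top-scored points as a stand-in for the error-rate estimation step, the dataset weights are rank-based, and resampling is done without replacement. The function and parameter names are hypothetical.

```python
import numpy as np

def knn_scores(X, ref_idx, k):
    """Mean distance from every point to its k nearest points among X[ref_idx]."""
    ref = X[ref_idx]
    d = np.linalg.norm(X[:, None, :] - ref[None, :, :], axis=2)
    d[ref_idx, np.arange(len(ref_idx))] = np.inf  # exclude self-matches
    return np.sort(d, axis=1)[:, :k].mean(axis=1)

def zscore(s):
    sd = s.std()
    return (s - s.mean()) / (sd if sd > 0 else 1.0)

def agreement_weights(S, top_frac=0.1):
    """Stand-in for step 2: weight each detector by its mean agreement with the others."""
    n, m = S.shape
    n_top = max(1, int(top_frac * n))
    labels = np.zeros((n, m), dtype=bool)
    for j in range(m):
        labels[np.argsort(S[:, j])[-n_top:], j] = True  # top scorers flagged as outliers
    agree = np.array([[np.mean(labels[:, i] == labels[:, j]) for j in range(m)]
                      for i in range(m)])
    w = (agree.sum(axis=1) - 1.0) / (m - 1)  # drop the self-agreement of 1
    return w / w.sum()

def care_sketch(X, ks=(2, 3, 5), n_iter=5, sample_frac=0.8, seed=0):
    rng = np.random.default_rng(seed)
    n = len(X)
    ref_idx = np.arange(n)
    cumulative = np.zeros(n)
    for t in range(1, n_iter + 1):
        # 1) run m detectors against the current sample -> score lists
        S = np.column_stack([zscore(knn_scores(X, ref_idx, k)) for k in ks])
        # 2) estimate detector weights (agreement-based stand-in)
        w = agreement_weights(S)
        # 3) aggregate scores cumulatively, then weigh the dataset by rank
        cumulative += S @ w
        final = cumulative / t
        rank = np.argsort(np.argsort(final))  # 0 = most inlier-like
        p = (n - rank).astype(float)
        p /= p.sum()                          # outlier-like points are sampled less often
        # 4) resample the dataset used as the neighbor pool in the next round
        ref_idx = np.sort(rng.choice(n, size=int(sample_frac * n), replace=False, p=p))
    return final

# 5x5 grid of inliers (indices 0..24) plus one isolated outlier (index 25).
grid = np.array([[i, j] for i in range(5) for j in range(5)], dtype=float)
X = np.vstack([grid, [[10.0, 10.0]]])
final = care_sketch(X)
top = int(np.argmax(final))
```

Step 1 is the parallel part of each round; the cumulative averaging and resampling carry information from one round to the next, which is the sequential part.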

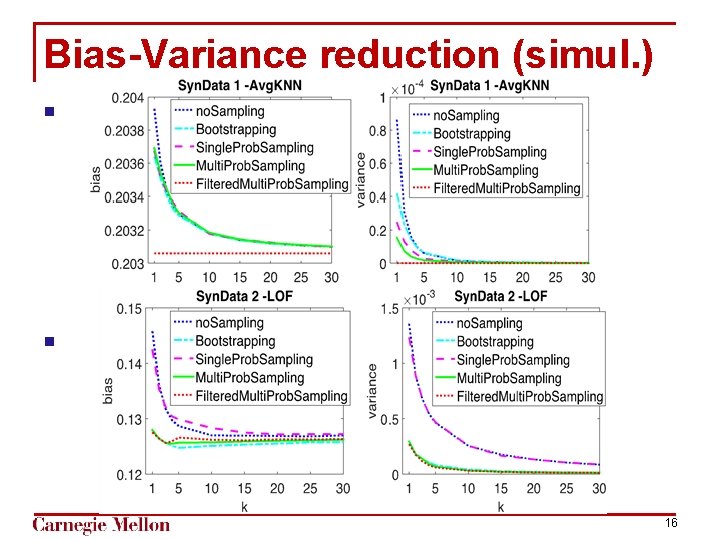

Bias-Variance reduction (simultaneous)

Datasets used in the experiments: http://odds.cs.stonybrook.edu/

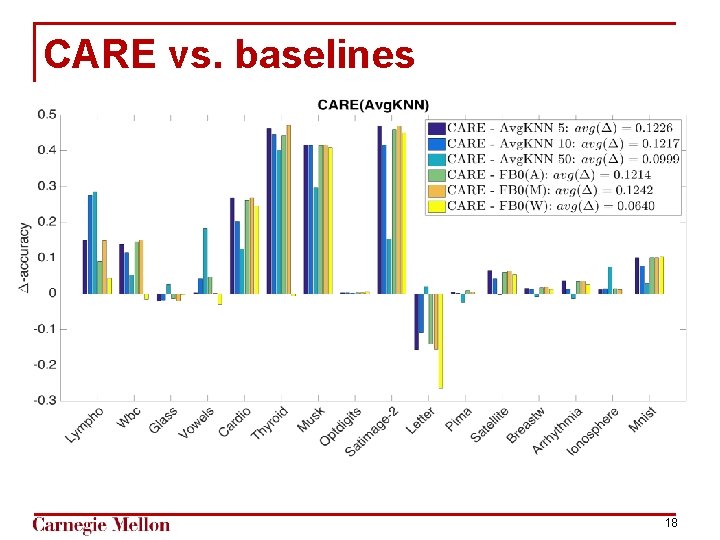

CARE vs. baselines

![Existing Outlier Ensembles](http://slidetodoc.com/presentation_image/77c341eb8fa3aa7e7c8cba059a5de7bc/image-19.jpg)

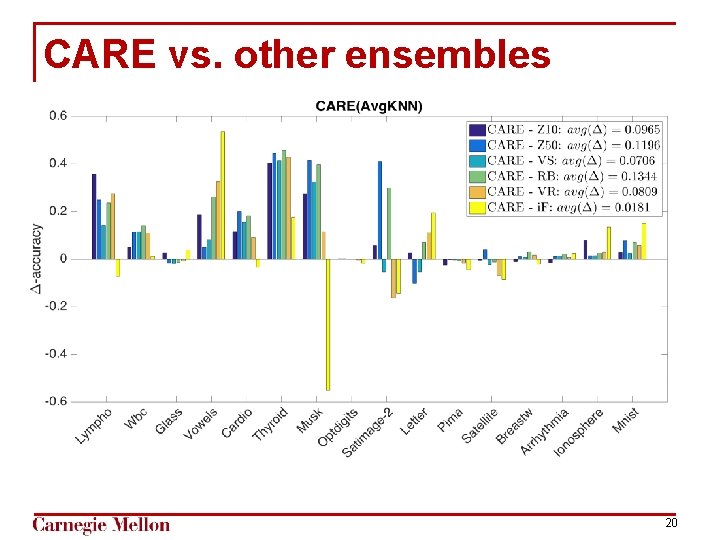

Existing Outlier Ensembles
- Liu et al.’s Isolation Forest (iF) [ICDM 2008]
- Zimek et al.’s Subsampling [KDD 2013]
  - Sample size: 10% (Z10), 50% (Z50)
- Aggarwal & Sathe’s [KDD 2015]
  - Variable Sampling (VS)
  - Rotated Bagging (RB)
  - Variable sampling with Rotated bagging (VR)

CARE vs. other ensembles

CARE for code & data? http://shebuti.com

Outlier Detection Data Sets: http://odds.cs.stonybrook.edu/

Thanks!
