SEEDING CLOUD-BASED SERVICES: DISTRIBUTED RATE LIMITING (DRL) Kevin Webb, Barath Raghavan, Kashi Vishwanath, Sriram Ramabhadran, Kenneth Yocum, and Alex C. Snoeren

Seeding the Cloud
Technologies to deliver on the promise of cloud computing
Previously: Process data in the cloud (Mortar)
- Produced/stored across providers
- Find Ken Yocum or Dennis Logothetis for more info
Today: Control resource usage: “cloud control” with DRL
- Use resources at multiple sites (e.g., CDN)
- Complicates resource accounting and control
- Provide cost control

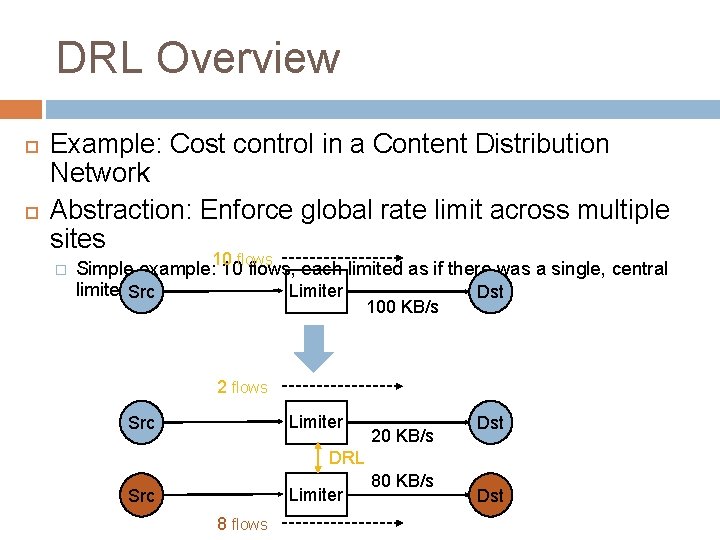

DRL Overview
Example: Cost control in a Content Distribution Network
Abstraction: Enforce global rate limit across multiple sites
Simple example: 10 flows, each limited as if there were a single, central limiter
[Figure: a single central limiter carries 10 flows at 100 KB/s; with DRL, one limiter carries 2 flows at 20 KB/s and another carries 8 flows at 80 KB/s]
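The arithmetic of the simple example can be sketched in a few lines. This is a minimal illustration, assuming the global limit is divided in proportion to each site's flow count; the function name `split_limit` and the site names are hypothetical and not part of DRL.

```python
def split_limit(global_limit, flows_per_site):
    """Divide a global rate limit among sites in proportion to flow count.

    Assumption for this sketch: every flow deserves an equal share, as if
    all flows crossed a single central limiter.
    """
    total = sum(flows_per_site.values())
    if total == 0:
        # No demand anywhere: split evenly (a choice made for this sketch).
        n = len(flows_per_site)
        return {site: global_limit / n for site in flows_per_site}
    return {site: global_limit * count / total
            for site, count in flows_per_site.items()}


# The slide's numbers: a 100 KB/s limit shared by 2 + 8 flows.
shares = split_limit(100, {"site_a": 2, "site_b": 8})
print(shares)  # {'site_a': 20.0, 'site_b': 80.0}
```

Each of the 10 flows ends up with 10 KB/s, the same rate it would get behind one central limiter.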

Goals & Challenges
Up to now
- Develop architecture and protocols for distributed rate limiting (SIGCOMM 07)
- Particular approach (FPS) is practical in the wide area
Current goals:
- Move DRL out of the lab and impact real services
- Validate SIGCOMM results in real-world conditions
- Provide Internet testbed with ability to manage bandwidth in a distributed fashion
- Improve usability of PlanetLab
Challenges
- Run-time overheads: CPU, memory, communication
- Environment: link/node failures, software quirks

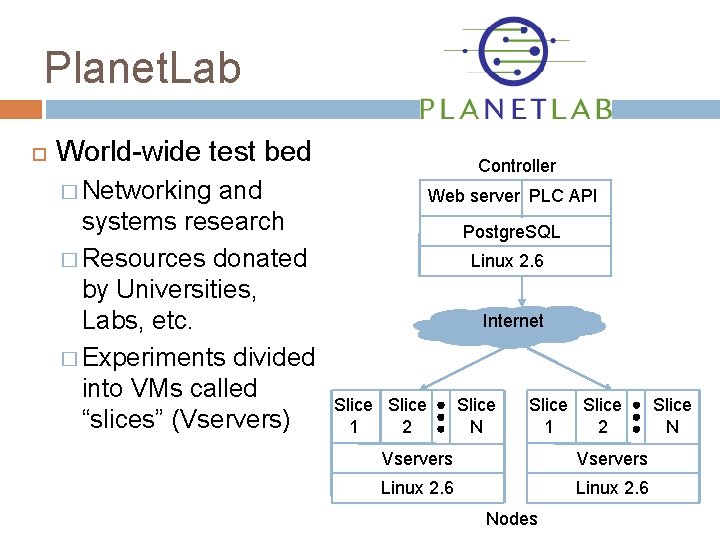

PlanetLab
World-wide test bed for networking and systems research
- Resources donated by universities, labs, etc.
- Experiments divided into VMs called “slices” (Vservers)
[Figure: the controller (web server, PLC API, PostgreSQL, Linux 2.6) connects over the Internet to nodes, each running Linux 2.6 with Vservers hosting slices 1 through N]

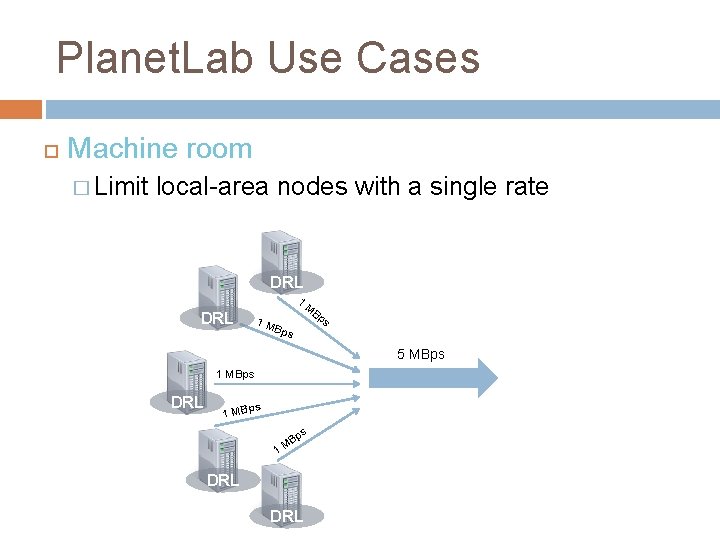

PlanetLab Use Cases
PlanetLab needs DRL!
- Donated bandwidth
- Ease of administration
Machine room
- Limit local-area nodes to a single rate
Per slice
- Limit experiments in the wide area
Per organization
- Limit all slices belonging to an organization

PlanetLab Use Cases
Machine room
- Limit local-area nodes with a single rate
[Figure: DRL limiters on the nodes of one machine room; labels show 1 MBps per node and 5 MBps overall]

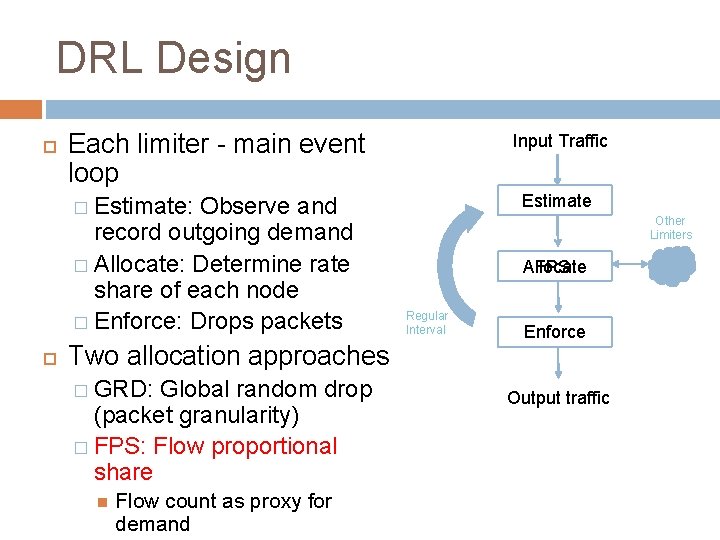

DRL Design
Each limiter: main event loop, run at a regular interval
- Estimate: Observe and record outgoing demand
- Allocate: Determine rate share of each node
- Enforce: Drop packets
Two allocation approaches
- GRD: Global random drop (packet granularity)
- FPS: Flow proportional share; flow count as proxy for demand
[Figure: input traffic → Estimate → FPS Allocate (exchanging state with other limiters at a regular interval) → Enforce → output traffic]
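The Estimate, Allocate, Enforce loop can be sketched as below. This is a simplified model, not the DRL implementation: it assumes the local share is the global limit scaled by the local fraction of all flows, and that peer flow counts arrive as a plain list. All names (`Limiter`, `run_interval`, and so on) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Limiter:
    name: str
    local_flows: int = 0      # result of Estimate for this interval
    local_limit: float = 0.0  # result of Allocate for this interval


def estimate(limiter, observed_flows):
    """Estimate: record the outgoing demand seen this interval."""
    limiter.local_flows = observed_flows


def allocate(limiter, peer_flows, global_limit):
    """Allocate: set this node's share of the global limit.

    Simplification: share is proportional to the local flow count.
    """
    total = limiter.local_flows + sum(peer_flows)
    if total == 0:
        limiter.local_limit = global_limit / (len(peer_flows) + 1)
    else:
        limiter.local_limit = global_limit * limiter.local_flows / total


def enforce(limiter, offered_rate):
    """Enforce: return (forwarded, dropped) rates for this interval."""
    forwarded = min(offered_rate, limiter.local_limit)
    return forwarded, offered_rate - forwarded


def run_interval(limiter, observed_flows, peer_flows, global_limit, offered_rate):
    """One pass of the loop; a real limiter repeats this at a regular interval."""
    estimate(limiter, observed_flows)
    allocate(limiter, peer_flows, global_limit)
    return enforce(limiter, offered_rate)


node = Limiter("node1")
result = run_interval(node, observed_flows=2, peer_flows=[8],
                      global_limit=100.0, offered_rate=50.0)
print(node.local_limit, result)  # 20.0 (20.0, 30.0)
```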

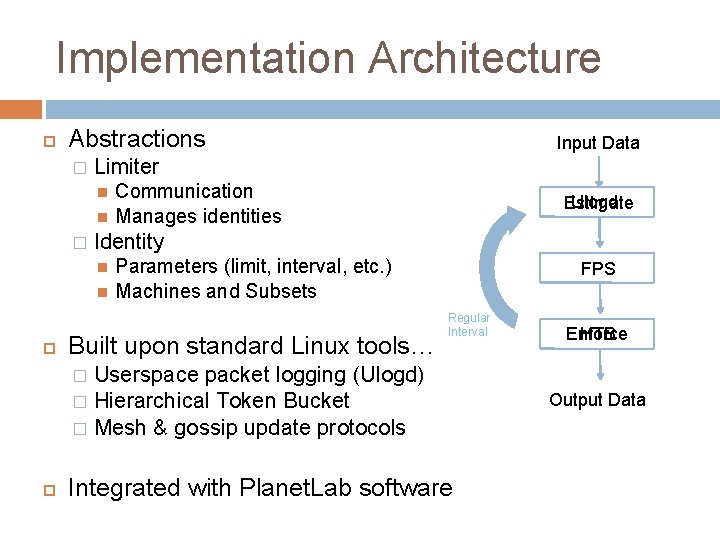

Implementation Architecture
Abstractions
- Limiter: communication, manages identities
- Identity: parameters (limit, interval, etc.), machines and subsets
Built upon standard Linux tools…
- Userspace packet logging (Ulogd)
- Hierarchical Token Bucket (HTB)
- Mesh & gossip update protocols
- Integrated with PlanetLab software
[Figure: input data → Ulogd (Estimate) → FPS (Allocate, at a regular interval) → HTB (Enforce) → output data]
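One way to picture the two abstractions is as plain data types. The sketch below is an assumption about their shape based only on the slide's wording (an identity carries a limit, an interval, and a set of machines; a limiter manages several identities). The class and field names are hypothetical and do not come from the DRL code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Identity:
    """One globally limited aggregate (hypothetical field names)."""
    name: str
    limit_kbps: float
    interval_ms: int
    machines: tuple = ()  # every limiter that shares this identity

    def peers_of(self, hostname):
        """Machines this host must exchange updates with."""
        return tuple(m for m in self.machines if m != hostname)


@dataclass
class LimiterConfig:
    """Per-node limiter state: the identities it participates in."""
    hostname: str
    identities: list = field(default_factory=list)

    def add(self, identity):
        if self.hostname not in identity.machines:
            raise ValueError(f"{self.hostname} is not part of {identity.name}")
        self.identities.append(identity)


ident = Identity("machine-room", limit_kbps=5000, interval_ms=500,
                 machines=("n1", "n2", "n3"))
cfg = LimiterConfig("n1")
cfg.add(ident)
print(ident.peers_of("n1"))  # ('n2', 'n3')
```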

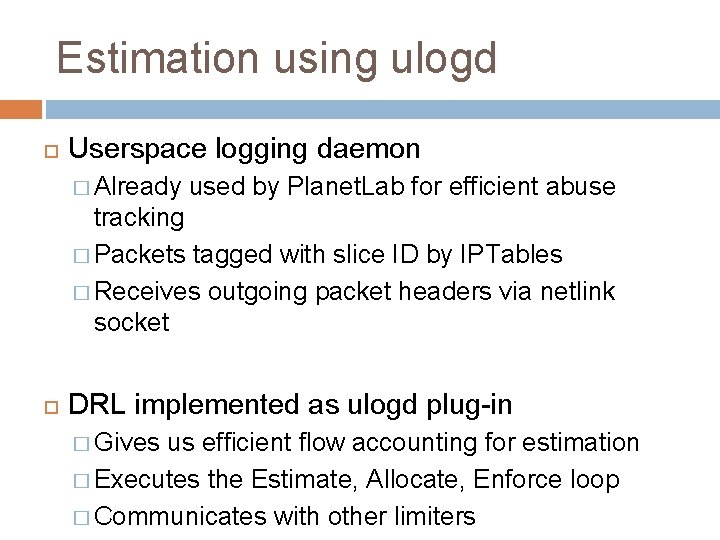

Estimation using ulogd
Userspace logging daemon
- Already used by PlanetLab for efficient abuse tracking
- Packets tagged with slice ID by iptables
- Receives outgoing packet headers via netlink socket
DRL implemented as ulogd plug-in
- Gives us efficient flow accounting for estimation
- Executes the Estimate, Allocate, Enforce loop
- Communicates with other limiters
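Flow accounting from tagged packet headers can be sketched as follows. This does not use the ulogd plug-in API; it assumes header records have already been parsed into a hypothetical `PacketHeader` tuple carrying the slice ID, and it treats a flow as a distinct 5-tuple.

```python
from collections import defaultdict, namedtuple

# Hypothetical parsed record; a real plug-in would fill this from netlink data.
PacketHeader = namedtuple(
    "PacketHeader", "slice_id src dst sport dport proto length")


def account(headers):
    """Return {slice_id: (flow_count, bytes_sent)} for one interval."""
    flows = defaultdict(set)
    bytes_out = defaultdict(int)
    for h in headers:
        # A flow is identified by its 5-tuple (an assumption of this sketch).
        flows[h.slice_id].add((h.src, h.dst, h.sport, h.dport, h.proto))
        bytes_out[h.slice_id] += h.length
    return {sid: (len(flows[sid]), bytes_out[sid]) for sid in flows}


headers = [
    PacketHeader(7, "10.0.0.1", "10.0.1.1", 4000, 80, "tcp", 1500),
    PacketHeader(7, "10.0.0.1", "10.0.1.1", 4000, 80, "tcp", 500),
    PacketHeader(7, "10.0.0.1", "10.0.1.2", 4001, 80, "tcp", 100),
    PacketHeader(9, "10.0.0.1", "10.0.2.1", 5000, 53, "udp", 40),
]
stats = account(headers)
print(stats)  # {7: (2, 2100), 9: (1, 40)}
```

The per-slice flow count is the quantity FPS would use as its proxy for demand.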

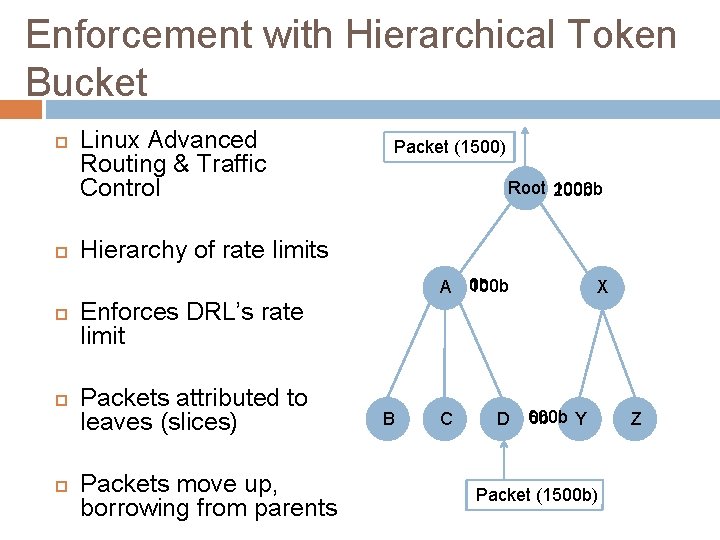

Enforcement with Hierarchical Token Bucket
Linux Advanced Routing & Traffic Control
- Hierarchy of rate limits
- Enforces DRL’s rate limit
- Packets attributed to leaves (slices)
- Packets move up, borrowing from parents
[Figure: HTB class tree with nodes Root, A, B, C, D, X, Y, Z and per-class token counts (1000 b, 600 b, 200 b, 100 b, 0 b); a 1500 b packet arrives at a leaf and borrows from its ancestors]
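The idea of a packet moving up the tree and borrowing from parents can be shown with a toy model. This is not the Linux HTB algorithm (which also tracks rates, ceilings, and quanta); it only assumes each class holds a token count and that a packet is paid for by the first class on the path to the root with enough tokens. The token values below are illustrative and are not taken from the slide's figure.

```python
class TokenClass:
    """One node in a toy token-bucket hierarchy."""

    def __init__(self, name, tokens, parent=None):
        self.name = name
        self.tokens = tokens  # bytes this class may currently send
        self.parent = parent


def send(leaf, size):
    """Try to send `size` bytes from `leaf`.

    Walk from the leaf toward the root and charge the first class that has
    enough tokens. Return that class's name, or None if no class can pay.
    """
    node = leaf
    while node is not None:
        if node.tokens >= size:
            node.tokens -= size
            return node.name
        node = node.parent
    return None


root = TokenClass("Root", 2000)
a = TokenClass("A", 1000, parent=root)
x = TokenClass("X", 0, parent=a)

first = send(x, 1500)   # X and A are short, so Root pays
second = send(x, 1500)  # Root now has 500 left, so nothing can pay
print(first, second, root.tokens)  # Root None 500
```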

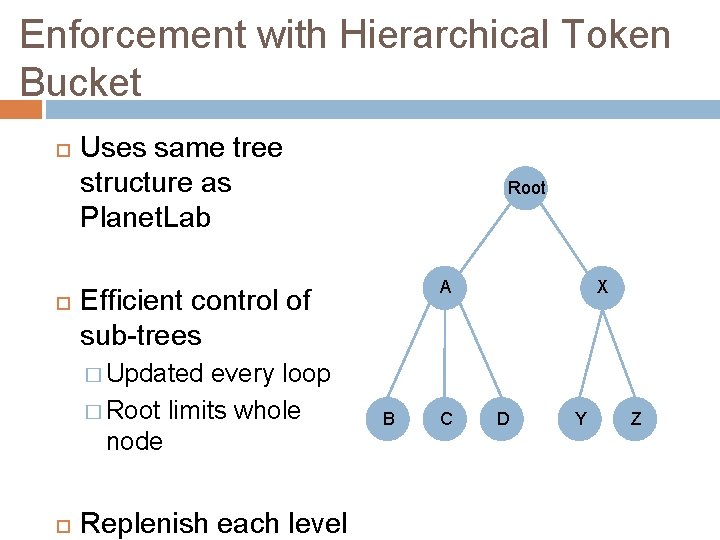

Enforcement with Hierarchical Token Bucket
Uses same tree structure as PlanetLab
- Efficient control of sub-trees
Updated every loop
- Root limits whole node
- Replenish each level
[Figure: HTB class tree with nodes Root, A, B, C, D, X, Y, Z]

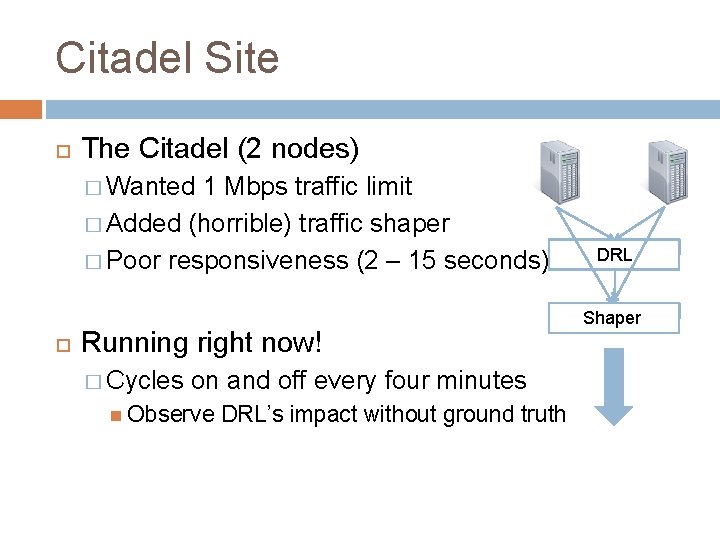

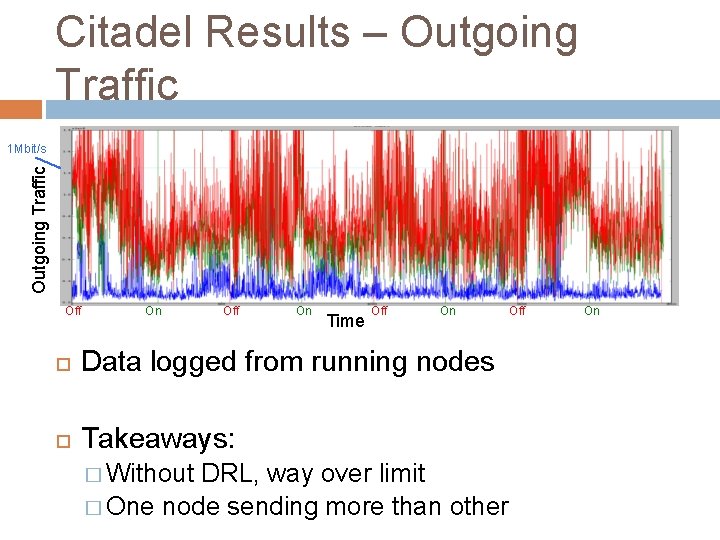

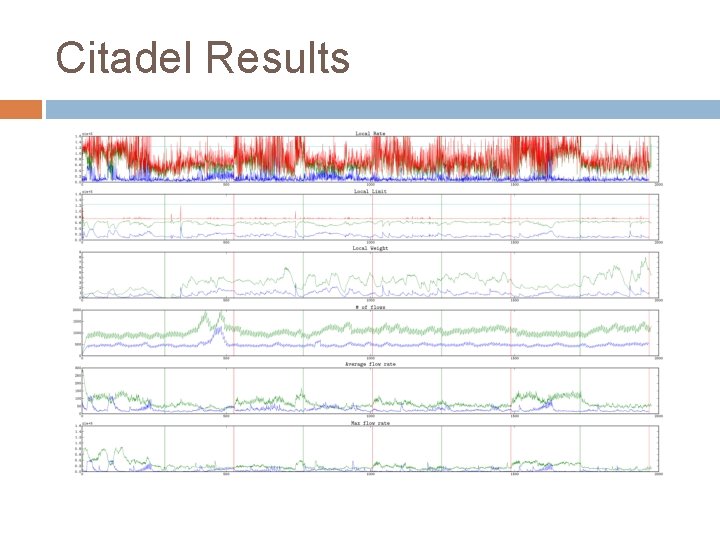

Citadel Site
The Citadel (2 nodes)
- Wanted 1 Mbps traffic limit
- Added (horrible) traffic shaper
- Poor responsiveness (2–15 seconds)
Running right now!
- Cycles on and off every four minutes
- Observe DRL’s impact without ground truth
[Figure: DRL and the site's traffic shaper]

Citadel Results – Outgoing Traffic
[Figure: outgoing traffic over time against the 1 Mbit/s limit, with DRL cycling off and on; data logged from running nodes]
Takeaways:
- Without DRL, way over limit
- One node sending more than other

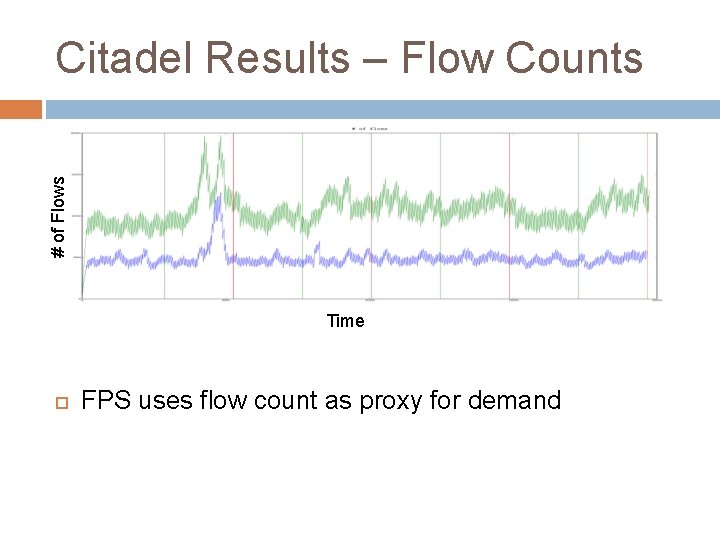

Citadel Results – Flow Counts
[Figure: number of flows over time]
FPS uses flow count as proxy for demand

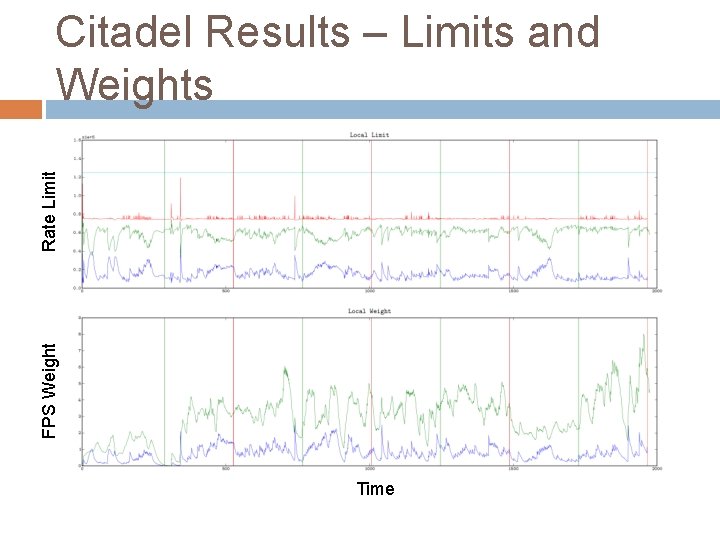

Citadel Results – Limits and Weights
[Figure: FPS weight and rate limit over time]

Lessons Learned
Flow counting is not always the best proxy for demand
- FPS state transitions were irregular
- Added checks and dampening/hysteresis in problem cases
Can estimate after enforce
- Ulogd only shows packets after HTB
- FPS is forgiving to software limitations
HTB is difficult
- HYSTERESIS variable
- TCP segmentation offloading
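Dampening irregular state transitions with hysteresis can be illustrated generically. The slides do not say how DRL's checks are implemented, so the sketch below is a standard two-threshold switch, not the authors' code: the state turns on only above `high` and turns off only below `low`, so values between the two thresholds never cause a flip. The class name and thresholds are hypothetical.

```python
class HysteresisSwitch:
    """Two-threshold switch: on above `high`, off below `low`."""

    def __init__(self, low, high, active=False):
        if low >= high:
            raise ValueError("low must be smaller than high")
        self.low = low
        self.high = high
        self.active = active

    def update(self, value):
        """Feed one sample and return the (possibly unchanged) state."""
        if self.active and value < self.low:
            self.active = False
        elif not self.active and value > self.high:
            self.active = True
        return self.active


# Samples could be, for example, demand as a fraction of the local limit.
switch = HysteresisSwitch(low=0.8, high=1.0)
states = [switch.update(v) for v in [0.5, 1.1, 0.9, 0.95, 0.7, 0.9]]
print(states)  # [False, True, True, True, False, False]
```

With a single threshold at 1.0, the same samples would flip the state at the third sample already; the gap between `low` and `high` is what suppresses the oscillation.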

Ongoing work
- Other use cases
- Larger-scale tests
- Complete PlanetLab administrative interface
- Standalone version
- Continue DRL rollout on PlanetLab: UCSD’s PlanetLab nodes soon

Questions?
Code is available from PlanetLab svn
- http://svn.planet-lab.org/svn/DistributedRateLimiting/

Citadel Results

- Slides: 21