PIC port d’informació científica: Experiences with HTTP/WebDAV

PIC port d’informació científica
Experiences with HTTP/WebDAV protocols for data access in high throughput computing
Gerard.Bernabeu@pic.es

Index
- Motivation
- The target
- Why WebDAV/HTTP
- How to access WebDAV
- dCache as WebDAV server
- Performance tests
- Conclusions

Motivation
- Non-standard protocols are currently used for data access at the WLCG Tier 1 (at PIC, mainly [gsi]dCap).
- It is hard to justify asking new projects to use non-standard protocols.
- Current protocols show data access inefficiencies. Two data access patterns are observed at the Tier 1:
  - Bulk data transfer (dccp-like): high throughput
  - Read as needed (random or stream-like, dcopen from the application): usually low throughput

The target
- An efficient data access model should provide:
  - Fast data transfer
  - Local (WorkerNode) caching support
  - Lightweight protocol (minimize protocol/client overhead)
  - Random access capabilities
  - Standard protocol
  - Easy to use (POSIX)
- NFSv4.1 might be our solution:
  - Native kernel support (caching)
  - dCache supports it!
  - POSIX-like (read OR write)

Why WebDAV/HTTP then?
- NFSv4.1 is still at an experimental stage
  - Requires an experimental kernel on the client
  - But it already works! (tested with dCache 1.9.10-1 and an SLC5 client)
- WebDAV is standard (HTTP)
  - We do not expect it to reach NFSv4.1 performance, especially for remote random data access,
  - but it is efficient for bulk data transfer (high throughput)

How to access WebDAV
A WebDAV share can be accessed in many ways.
- Read as needed
  - Mounted: davfs, fusedav. POSIX-like (read OR write) with a dCache server
  - Native support from GNOME, KDE and MS Windows. POSIX-like (read OR write) with a dCache server; see http://www.dcache.org/articles/i,article-20100114001.html by Dr. Gerd Behrmann, NDGF
  - root client direct HTTP access (not working with 1.9.10-1)
- Bulk data transfer
  - Standard HTTP/WebDAV clients: wget, curl, etc.
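The two access patterns above can be sketched with standard HTTP clients. This is a minimal illustration, not the setup used in the talk: the host name and file path are hypothetical placeholders, port 2880 is taken from the test commands later in the deck, and whether a given WebDAV door honours HTTP byte-range requests is an assumption that should be checked against the server.

```shell
#!/bin/sh
# Hypothetical door and file; substitute values for your own site.
door_host="webdav-door.example.org"
door_port=2880
file_path="/pnfs/example.org/data/sample.root"

# Build the plain-HTTP URL of a file served by the WebDAV door.
webdav_url() {
  printf 'http://%s:%s%s\n' "$1" "$2" "$3"
}

# Bulk transfer: fetch the whole file and discard it.
bulk_fetch() {
  wget -q -O /dev/null "$1"
}

# Read as needed: fetch only bytes $2..$3 (assumes the server supports Range).
range_fetch() {
  curl -s -r "$2-$3" -o /dev/null "$1"
}

# Print the URL the helpers above would be called with.
webdav_url "$door_host" "$door_port" "$file_path"
```

For example, `bulk_fetch "$(webdav_url "$door_host" "$door_port" "$file_path")"` would time like the wget runs in the performance tests, while `range_fetch` approximates a single application-level read.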

Using dCache as a server. Why?
- Already deployed at many Tier 1 and Tier 2 sites
- Well known by the WLCG community
- Single server for many data access protocols ([gsi]dcap, [grid]ftp, xrootd, NFSv4.1, WebDAV)
- Smooth protocol transition
- Storage Resource Management (SRMv2)
- Distributed and scalable
- Easy to administer (~3 PB served to 7 projects with 1.5 FTE!)

WebDAV at dCache 1.9.10-1
- WebDAV mount utilities for Linux (fusedav and davfs2) need webdav.redirect.on-read=false in dcache.conf
  - This means that all data transfers flow through the WebDAV door, which may become a bottleneck.
  - This might change in the future!
- The root client cannot access files over HTTP with the dCache 1.9.10-1 WebDAV server (error 203)
  - Tested with root client version: http://xrootd.slac.stanford.edu/download/20091028-1003/xrootd20091028-1003.x86_64_linux_26_dbg.tgz
  - dCache.org is working on it
- The following performance tests focus on bulk data transfer (wget)
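Before mounting a share with fusedav or davfs2, it can help to confirm that the redirect setting is actually in place. The helper below is a small sketch: the function name is made up for illustration, and the location of dcache.conf depends on the installation, so the path is passed in as an argument.

```shell
#!/bin/sh
# Succeeds (exit 0) if the given config file contains an uncommented
# line setting webdav.redirect.on-read to false.
redirect_disabled() {
  grep -Eq '^[[:space:]]*webdav\.redirect\.on-read[[:space:]]*=[[:space:]]*false[[:space:]]*$' "$1"
}

# Usage (path is site-specific):
#   redirect_disabled /path/to/dcache.conf && echo "reads go through the WebDAV door"
```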

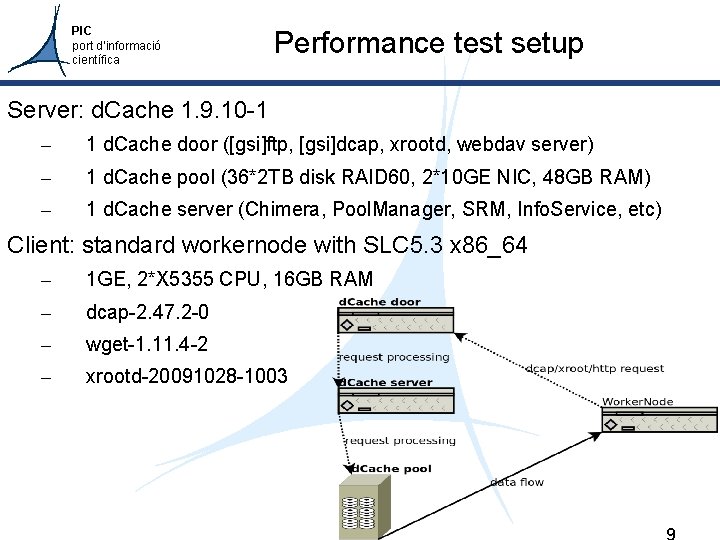

Performance test setup
Server: dCache 1.9.10-1
- 1 dCache door ([gsi]ftp, [gsi]dcap, xrootd, WebDAV server)
- 1 dCache pool (36*2 TB disk RAID 60, 2*10 GE NIC, 48 GB RAM)
- 1 dCache server (Chimera, PoolManager, SRM, InfoService, etc.)
Client: standard worker node with SLC 5.3 x86_64
- 1 GE, 2*X5355 CPU, 16 GB RAM
- dcap-2.47.2-0
- wget-1.11.4-2
- xrootd-20091028-1003

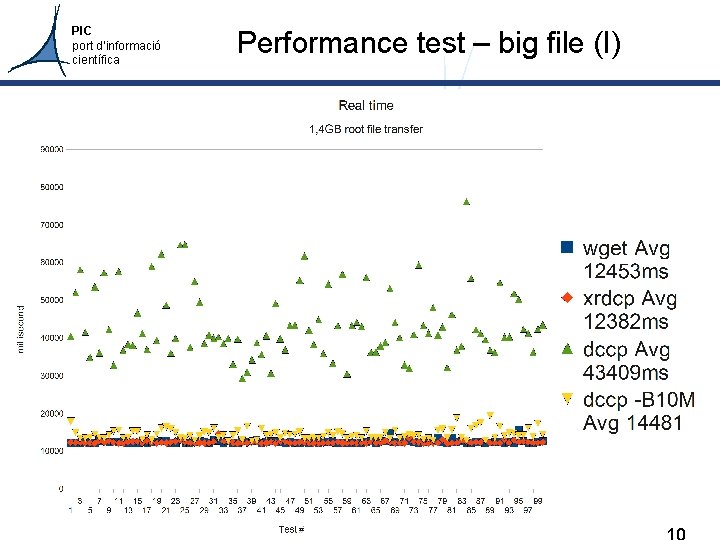

Performance test: big file (I)

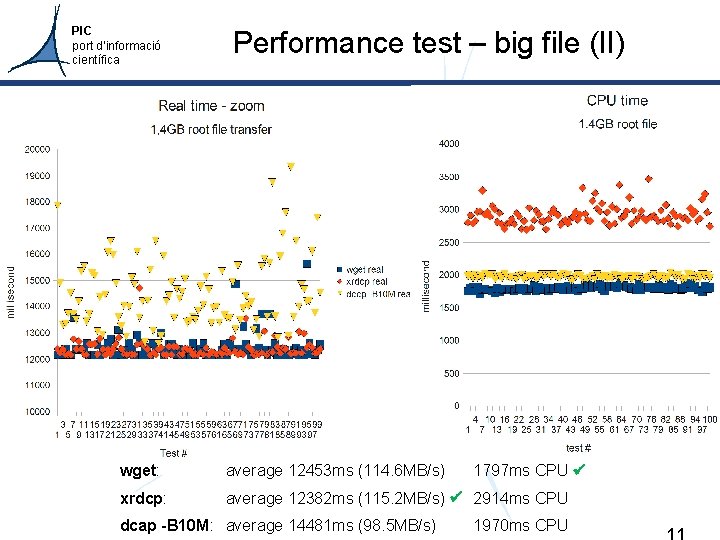

Performance test: big file (II)
- wget: average 12453 ms (114.6 MB/s)
- xrdcp: average 12382 ms (115.2 MB/s)
- dcap -B 10M: average 14481 ms (98.5 MB/s)
- CPU time labels on the chart: 2914 ms, 1797 ms, 1970 ms (tool assignment not preserved in this transcript)
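As a consistency check on the big-file figures, multiplying each average time by its reported throughput gives the file size those two numbers imply. The sketch below assumes the MB/s values were derived as size divided by wall-clock time, which the slides do not state explicitly; the function name is illustrative.

```shell
#!/bin/sh
# Implied file size in MB from an average time (ms) and a throughput (MB/s).
implied_size_mb() {
  awk -v ms="$1" -v rate="$2" 'BEGIN { printf "%.1f\n", ms / 1000 * rate }'
}

implied_size_mb 12453 114.6   # wget        -> 1427.1
implied_size_mb 12382 115.2   # xrdcp       -> 1426.4
implied_size_mb 14481 98.5    # dcap -B 10M -> 1426.4
```

All three results agree on a file of roughly 1.43 GB. With a 1 GE client link (at most 125 MB/s), the wget and xrdcp averages are close to line rate.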

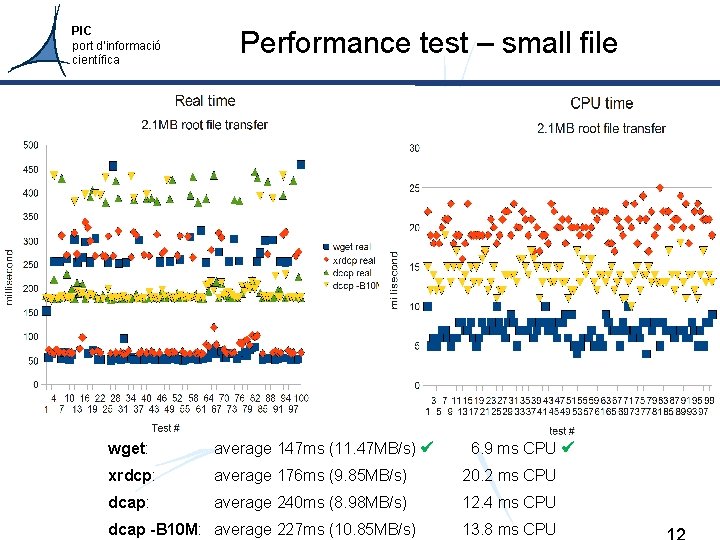

Performance test: small file
- wget: average 147 ms (11.47 MB/s)
- xrdcp: average 176 ms (9.85 MB/s)
- dcap: average 240 ms (8.98 MB/s)
- dcap -B 10M: average 227 ms (10.85 MB/s)
- CPU time labels on the chart: 20.2 ms, 12.4 ms, 6.9 ms, 13.8 ms (tool assignment not preserved in this transcript)

Conclusions
- HTTP+wget is fast as a bulk file transfer solution for both big and small files.
- HTTP+wget is the most CPU-efficient solution tested.
- HTTP+wget is standard
  - There are other clients besides wget!
  - HTTP proxies can be used
- More effort is required to use HTTP for “read as needed” data access (i.e. using it as a mounted FS).
  - Shouldn't we focus on NFSv4.1 for this use case?

Questions?
Thanks to:
- dCache.org
- Carlos Osuna from IFAE

About NFSv4.1 in dCache
- For kernel client 2.6.32 and above, at least dCache 1.9.10-1 is required.
- The dCache NFSv4.1 server is still experimental and in development.
- The dCache 1.9.10-1 NFSv4.1 server is focused on read data access.
- In dCache 1.9.10-1, NFSv3 and NFSv4.1 services cannot run on the same server.
- dCache 1.9.10-1 is the latest version available today.

Performance test methodology
Two distinct groups of transfer times were observed for each protocol, so the small-file test was run a second time; it gave the same results.
Test commands:

    (file=/pnfs/pic.es/at3/data10_7TeV.00160963_physics_Muons_20100812_00_D2AODM_TOPQCDMU.root;
    for i in `seq 1 100`; do
      echo WGET TEST number $i `date`; time wget -q -O /dev/null http://gridftpdisk.pic.es:2880$file;
      echo DCCP TEST number $i `date`; time dccp dcap://gridftp-disk.pic.es$file /dev/null;
      echo XRDCP TEST number $i `date`; time /root/20091028-1003/bin/xrdcp -s -f root://gridftpdisk.pic.es$file /dev/null;
      echo DCCPB10M TEST number $i `date`; time dccp -B 10000000 dcap://gridftpdisk.pic.es$file /dev/null;
    done) > wget.VSdccp2MB.output 2>&1
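The slides do not say how the per-tool averages were extracted from the output file. One possible way, sketched below, is to track the `<TOOL> TEST number` marker lines and average the `real` times that follow them. It assumes the default bash `time` output format (for example `real 0m12.453s`); the function name is made up for illustration.

```shell
#!/bin/sh
# Print "<TOOL> <average real time in ms>" for each tool found in the log.
summarize_times() {
  awk '/ TEST number / { tool = $1 }            # remember which tool ran last
       $1 == "real" && tool != "" {
         split($2, t, /[ms]/)                   # "0m12.453s" -> t[1]="0", t[2]="12.453"
         sum[tool] += (t[1] * 60 + t[2]) * 1000 # accumulate milliseconds
         n[tool]++
       }
       END { for (k in sum) printf "%s %.0f\n", k, sum[k] / n[k] }' "$1" | sort
}

# Usage: summarize_times wget.VSdccp2MB.output
```

The CPU figures could be collected the same way by summing the `user` and `sys` lines instead of `real`.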
