Lessons Learned from Managing a Petabyte

Jacek Becla, Stanford Linear Accelerator Center (SLAC)

Daniel Wang, now University of California, Irvine; formerly SLAC

Roadmap

- Who we are
- Simplified data processing
- Core architecture and migration
- Challenges/surprises/problems
- Summary

Don’t miss the “lessons”, just look for yellow stickers

CIDR’05, Asilomar, CA

Who We Are

- Stanford Linear Accelerator Center
  - DoE National Lab, operated by Stanford University
- BaBar
  - one of the largest High Energy Physics (HEP) experiments online
  - in production since 1999
  - over a petabyte of production data
- HEP
  - data intensive science
  - statistical studies
  - needle in a haystack searches

Simplified Data Processing

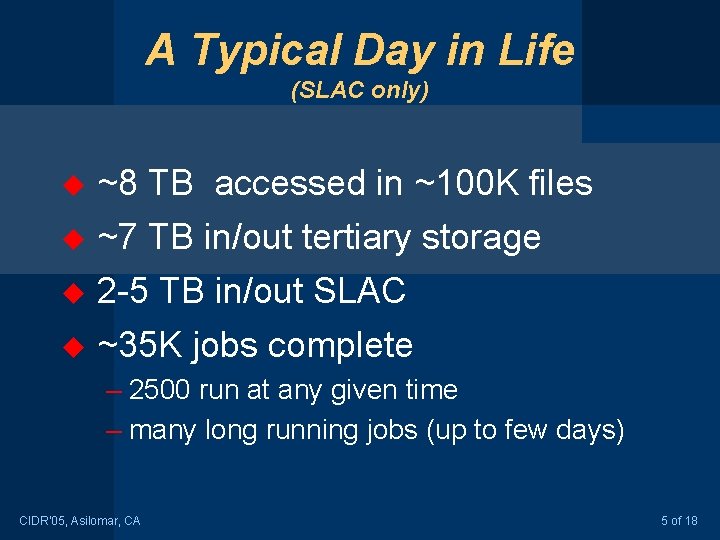

A Typical Day in Life (SLAC only)

- ~8 TB accessed in ~100K files
- ~7 TB in/out tertiary storage
- 2-5 TB in/out SLAC
- ~35K jobs complete
  - 2500 run at any given time
  - many long running jobs (up to a few days)
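
As a rough back-of-envelope check on the daily figures above, the sketch below turns them into per-file and per-second averages. It assumes decimal units (1 TB = 10^12 bytes) and an even spread over 24 hours; the slides state neither, so the results are only indicative.

```python
# Back-of-envelope arithmetic from the daily figures quoted on the slide.
# Assumptions (not from the slides): decimal units and an even 24-hour spread.
TB = 10**12
MB = 10**6
SECONDS_PER_DAY = 24 * 60 * 60

bytes_accessed = 8 * TB      # ~8 TB accessed per day
files_accessed = 100_000     # ~100K files per day
jobs_completed = 35_000      # ~35K jobs complete per day

avg_file_mb = bytes_accessed / files_accessed / MB
avg_rate_mb_s = bytes_accessed / SECONDS_PER_DAY / MB
jobs_per_minute = jobs_completed / (24 * 60)

print(f"average data accessed per file: {avg_file_mb:.0f} MB")
print(f"average sustained access rate: {avg_rate_mb_s:.1f} MB/s")
print(f"average job completion rate: {jobs_per_minute:.1f} jobs/min")
```

Under these assumptions the averages come to about 80 MB per file and roughly 93 MB/s sustained. Real load is bursty, so peaks would be higher than these means.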

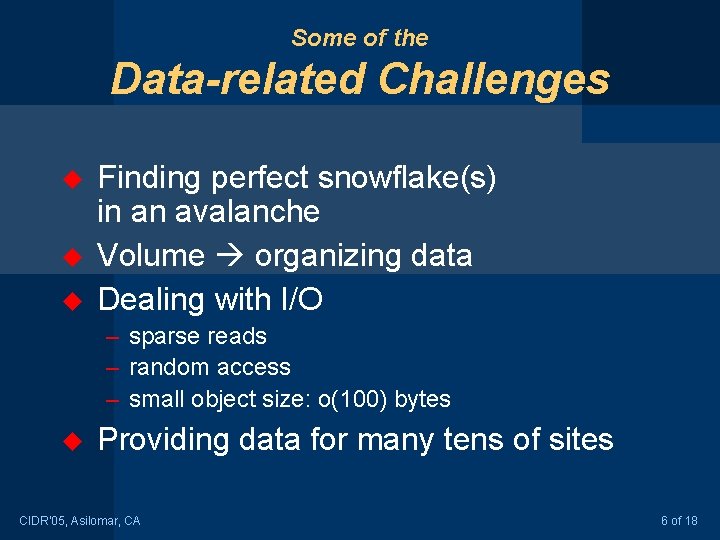

Some of the Data-related Challenges

- Finding perfect snowflake(s) in an avalanche
- Volume: organizing data
- Dealing with I/O
  - sparse reads
  - random access
  - small object size: O(100) bytes
- Providing data for many tens of sites

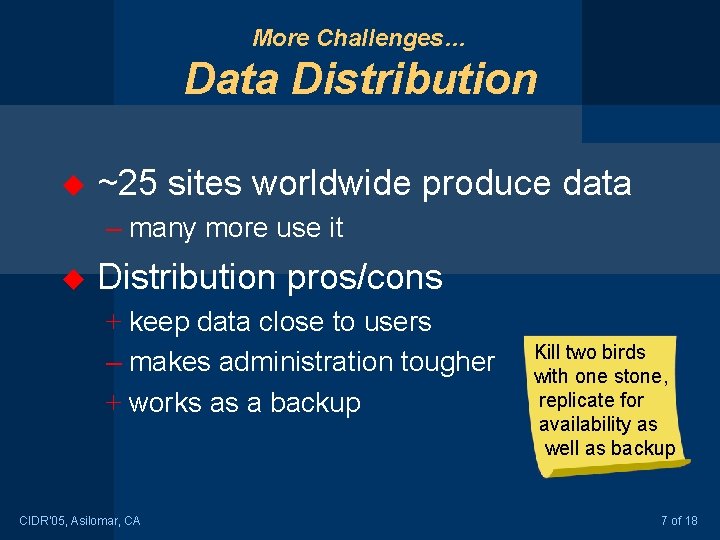

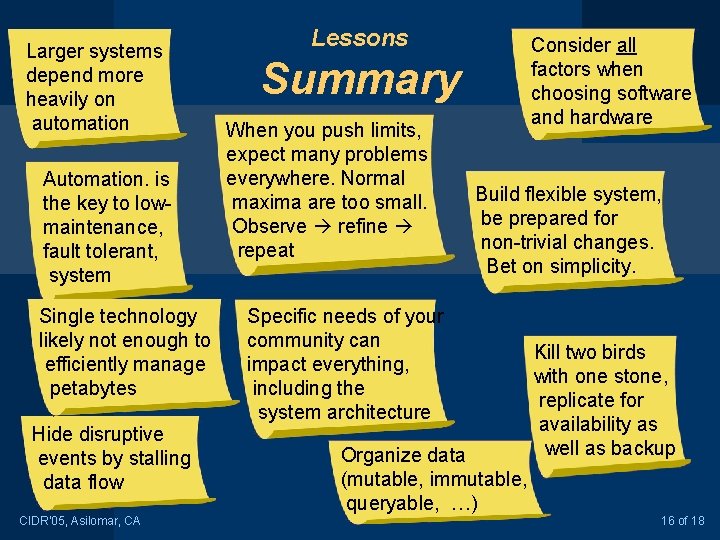

More Challenges… Data Distribution

- ~25 sites worldwide produce data
  - many more use it
- Distribution pros/cons
  - (+) keep data close to users
  - (-) makes administration tougher
  - (+) works as a backup

Lesson: Kill two birds with one stone, replicate for availability as well as backup

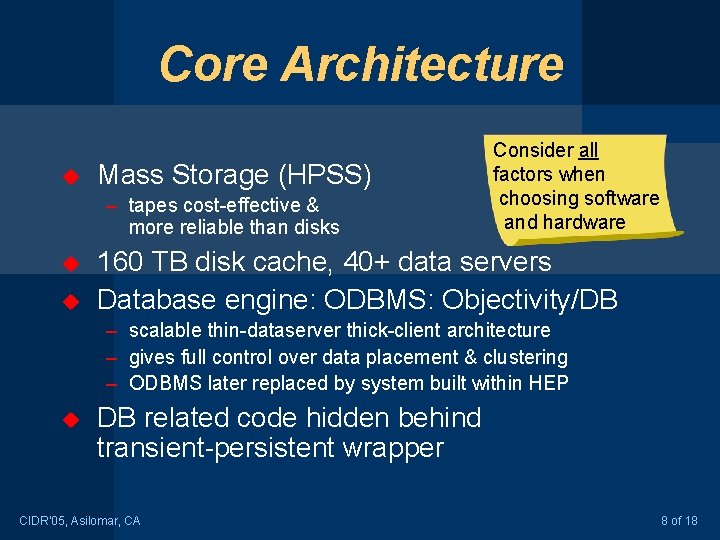

Core Architecture

- Mass Storage (HPSS)
  - tapes cost-effective & more reliable than disks
- 160 TB disk cache, 40+ data servers
- Database engine: ODBMS: Objectivity/DB
  - scalable thin-dataserver thick-client architecture
  - gives full control over data placement & clustering
  - ODBMS later replaced by system built within HEP
- DB related code hidden behind transient-persistent wrapper

Lesson: Consider all factors when choosing software and hardware
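
To illustrate the idea of hiding DB-related code behind a transient-persistent wrapper, here is a minimal sketch. It is written in Python for brevity (BaBar's code base was C++), and every name in it (`Track`, `PersistencyBackend`, `InMemoryBackend`, `TrackStore`) is hypothetical, not taken from the actual system. The point is only that application code touches transient objects and a narrow interface, so the storage engine behind it can be swapped.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class Track:
    """Transient object: what application code sees (hypothetical type)."""
    track_id: int
    momentum: float


class PersistencyBackend(ABC):
    """Hypothetical storage interface; application code depends only on this."""

    @abstractmethod
    def write(self, key, record):
        ...

    @abstractmethod
    def read(self, key):
        ...


class InMemoryBackend(PersistencyBackend):
    """Stand-in backend for the sketch; a real one would wrap a database or files."""

    def __init__(self):
        self._store = {}

    def write(self, key, record):
        self._store[key] = dict(record)

    def read(self, key):
        return dict(self._store[key])


class TrackStore:
    """Wrapper converting between transient objects and persistent records."""

    def __init__(self, backend):
        self._backend = backend

    def save(self, track):
        record = {"track_id": track.track_id, "momentum": track.momentum}
        self._backend.write(f"track/{track.track_id}", record)

    def load(self, track_id):
        record = self._backend.read(f"track/{track_id}")
        return Track(track_id=record["track_id"], momentum=record["momentum"])


store = TrackStore(InMemoryBackend())
store.save(Track(track_id=7, momentum=1.25))
loaded = store.load(7)
print(loaded)
```

Replacing `InMemoryBackend` with another `PersistencyBackend` implementation would leave `Track` and its callers unchanged, which is the kind of isolation that makes a later change of storage engine tractable.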

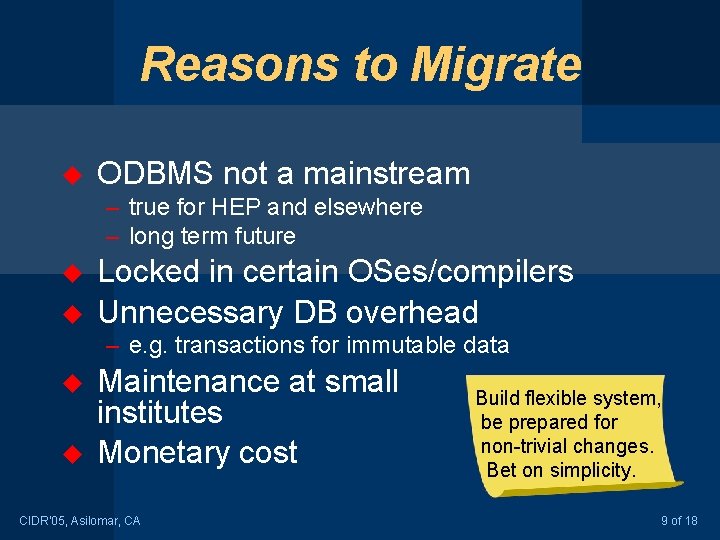

Reasons to Migrate

- ODBMS not mainstream
  - true for HEP and elsewhere
  - long term future
- Locked in certain OSes/compilers
- Unnecessary DB overhead
  - e.g. transactions for immutable data
- Maintenance at small institutes
- Monetary cost

Lesson: Build flexible system, be prepared for non-trivial changes. Bet on simplicity.

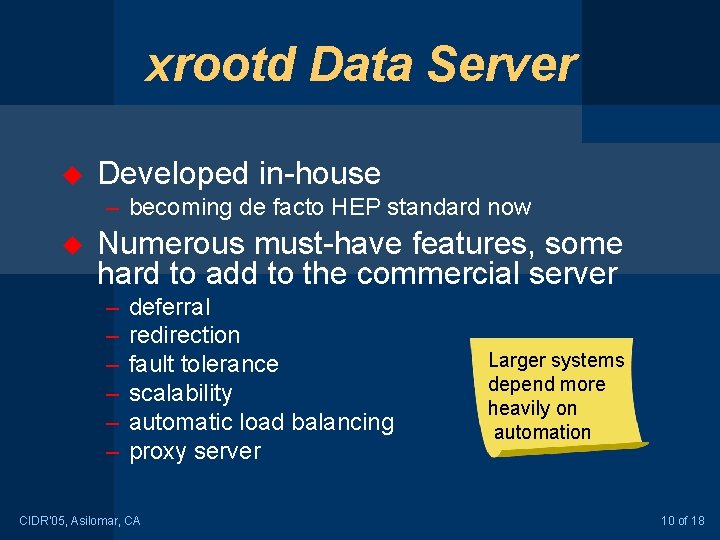

xrootd Data Server

- Developed in-house
  - becoming de facto HEP standard now
- Numerous must-have features, some hard to add to the commercial server
  - deferral
  - redirection
  - fault tolerance
  - scalability
  - automatic load balancing
  - proxy server

Lesson: Larger systems depend more heavily on automation
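
The features listed above (redirection, deferral, load balancing, fault tolerance) can be pictured with a toy model. The sketch below is not xrootd's protocol or API. The classes `DataServer` and `Redirector`, the file paths, and the 30-second retry interval are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DataServer:
    """Hypothetical data server record kept by the redirector."""
    name: str
    files: set = field(default_factory=set)
    active_clients: int = 0
    online: bool = True


class Redirector:
    """Toy redirector: points a client at a server, or asks it to wait."""

    def __init__(self, servers, retry_seconds=30):
        self.servers = list(servers)
        self.retry_seconds = retry_seconds

    def locate(self, path):
        # Fault tolerance: consider only healthy servers that hold the file.
        candidates = [s for s in self.servers if s.online and path in s.files]
        if not candidates:
            # Deferral: tell the client to wait and retry instead of failing.
            return ("wait", self.retry_seconds)
        # Load balancing: choose the candidate with the fewest active clients.
        chosen = min(candidates, key=lambda s: s.active_clients)
        chosen.active_clients += 1
        return ("redirect", chosen.name)


servers = [
    DataServer("ds01", {"/store/run1.dat"}, active_clients=12),
    DataServer("ds02", {"/store/run1.dat"}, active_clients=3),
    DataServer("ds03", {"/store/run2.dat"}, online=False),
]
redirector = Redirector(servers)

print(redirector.locate("/store/run1.dat"))  # least-loaded holder of the file
print(redirector.locate("/store/run2.dat"))  # only holder is offline: wait
```

In this model a client that receives `("wait", n)` simply sleeps and asks again, so a server outage shows up as a delay and not as a failed job.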

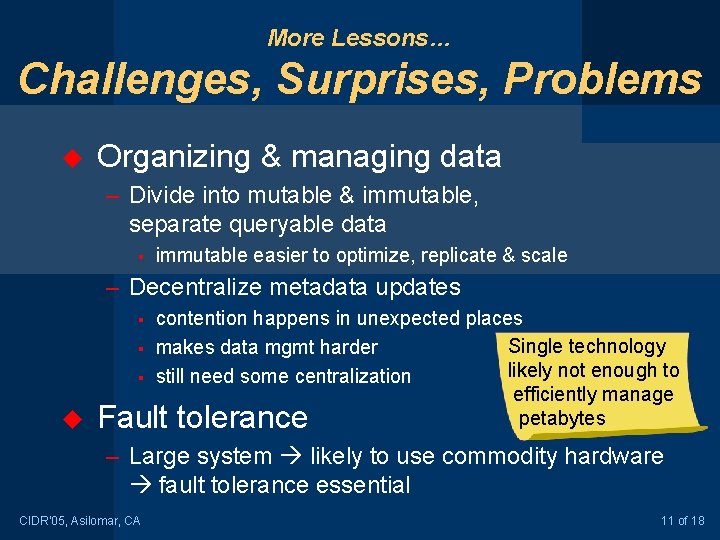

More Lessons… Challenges, Surprises, Problems

- Organizing & managing data
  - Divide into mutable & immutable, separate queryable data
    - immutable easier to optimize, replicate & scale
  - Decentralize metadata updates
    - contention happens in unexpected places
    - makes data mgmt harder
    - still need some centralization
- Fault tolerance
  - Large system likely to use commodity hardware: fault tolerance essential

Lesson: Single technology likely not enough to efficiently manage petabytes

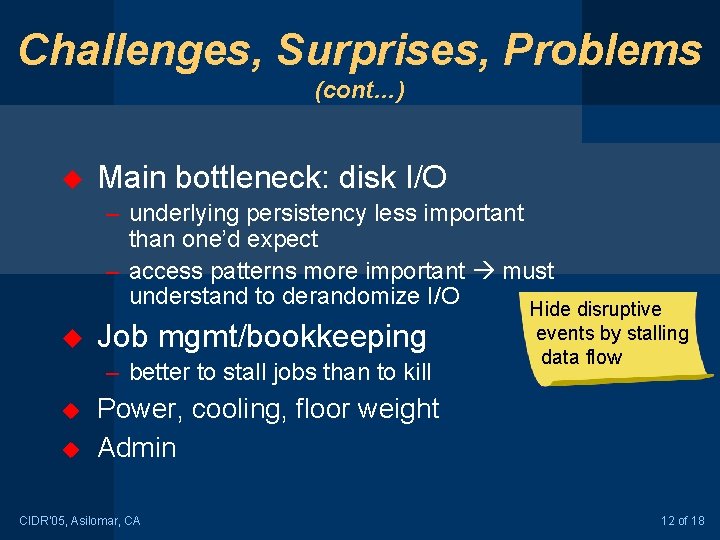

Challenges, Surprises, Problems (cont…)

- Main bottleneck: disk I/O
  - underlying persistency less important than one would expect
  - access patterns more important: must understand to derandomize I/O
- Job mgmt/bookkeeping
  - better to stall jobs than to kill
- Power, cooling, floor weight
- Admin

Lesson: Hide disruptive events by stalling data flow
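
The slides do not say how I/O was derandomized in practice. One common technique, shown here purely as an assumed illustration, is to sort pending read requests by offset and coalesce neighbours so the disk sees fewer, more sequential reads. The function name `derandomize` and the `max_gap` parameter are hypothetical.

```python
def derandomize(requests, max_gap=0):
    """Sort (offset, length) reads and merge ones that touch or nearly touch.

    max_gap is a hypothetical tuning knob: gaps of up to this many bytes are
    read through instead of being skipped with a seek.
    """
    merged = []
    for offset, length in sorted(requests):
        end = offset + length
        if merged and offset <= merged[-1][1] + max_gap:
            # Extend the previous range instead of issuing a new read.
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([offset, end])
    return [(start, end - start) for start, end in merged]


# Small, scattered object reads of ~100 bytes each, in arrival order.
random_reads = [(9000, 100), (100, 100), (4000, 100), (200, 100), (4150, 100)]

print(derandomize(random_reads))
print(derandomize(random_reads, max_gap=64))
```

With `max_gap=0` the five requests collapse to four sequential reads. Allowing a 64-byte gap merges the two reads near offset 4000 as well, trading a few wasted bytes for one fewer seek.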

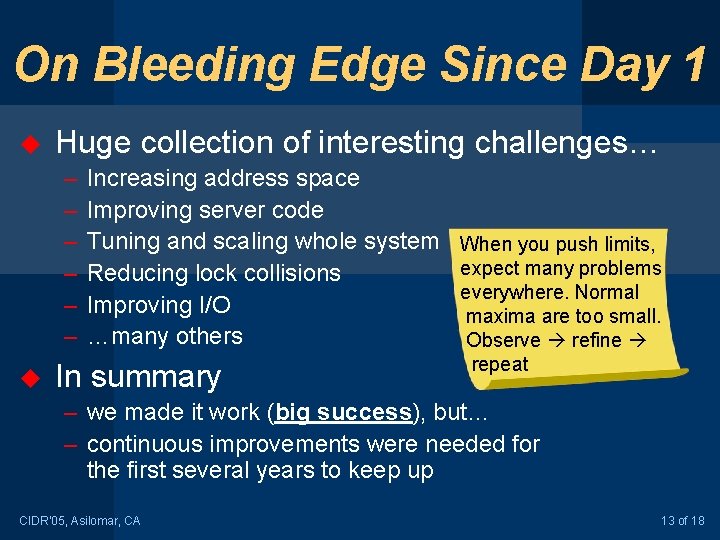

On Bleeding Edge Since Day 1

- Huge collection of interesting challenges…
  - Increasing address space
  - Improving server code
  - Tuning and scaling whole system
  - Reducing lock collisions
  - Improving I/O
  - …many others
- In summary
  - we made it work (big success), but…
  - continuous improvements were needed for the first several years to keep up

Lesson: When you push limits, expect many problems everywhere. Normal maxima are too small. Observe, refine, repeat.

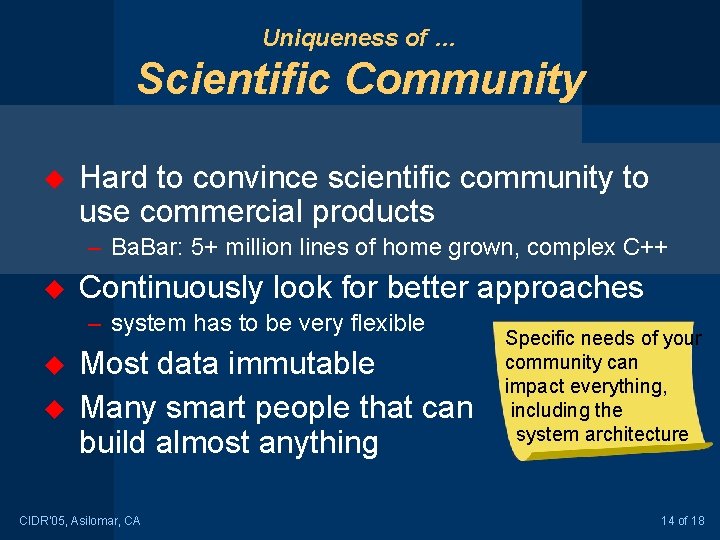

Uniqueness of … Scientific Community

- Hard to convince scientific community to use commercial products
  - BaBar: 5+ million lines of home grown, complex C++
- Continuously look for better approaches
  - system has to be very flexible
- Most data immutable
- Many smart people that can build almost anything

Lesson: Specific needs of your community can impact everything, including the system architecture

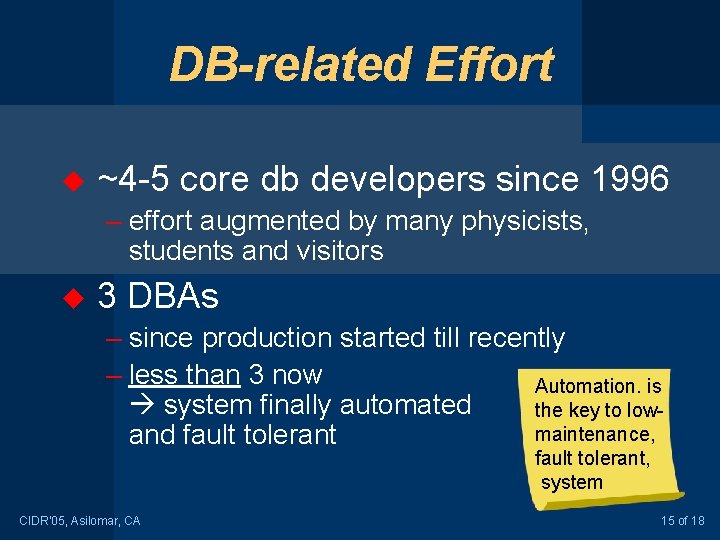

DB-related Effort

- ~4-5 core db developers since 1996
  - effort augmented by many physicists, students and visitors
- 3 DBAs
  - since production started till recently
  - fewer than 3 now: system finally automated and fault tolerant

Lesson: Automation is the key to low-maintenance, fault tolerant system

Lessons Summary

- Larger systems depend more heavily on automation
- Automation is the key to low-maintenance, fault tolerant system
- Single technology likely not enough to efficiently manage petabytes
- Hide disruptive events by stalling data flow
- Consider all factors when choosing software and hardware
- When you push limits, expect many problems everywhere. Normal maxima are too small. Observe, refine, repeat.
- Build flexible system, be prepared for non-trivial changes. Bet on simplicity.
- Specific needs of your community can impact everything, including the system architecture
- Organize data (mutable, immutable, queryable, …)
- Kill two birds with one stone, replicate for availability as well as backup

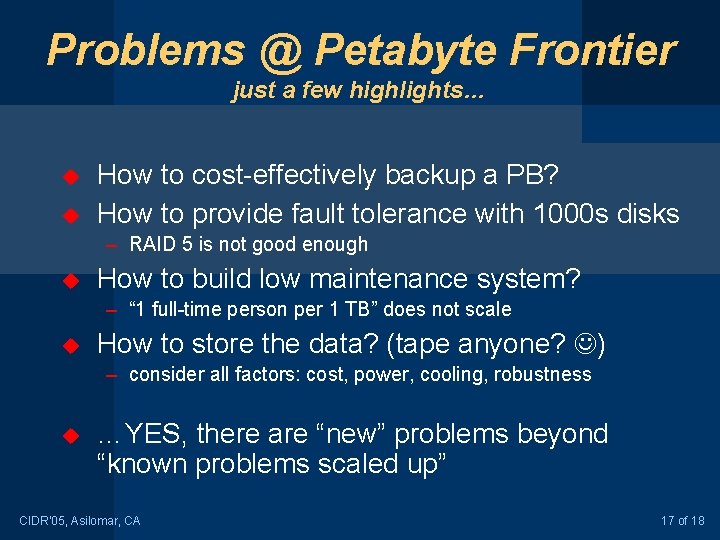

Problems @ Petabyte Frontier

just a few highlights…

- How to cost-effectively back up a PB?
- How to provide fault tolerance with 1000s of disks
  - RAID 5 is not good enough
- How to build low maintenance system?
  - “1 full-time person per 1 TB” does not scale
- How to store the data? (tape anyone?)
  - consider all factors: cost, power, cooling, robustness
- …YES, there are “new” problems beyond “known problems scaled up”
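
The staffing point can be made concrete with a line of arithmetic. The sketch below applies the quoted rule of thumb to a one-petabyte dataset, assuming 1 PB = 1000 TB (decimal units, which the slides do not specify).

```python
# Worked arithmetic for the "1 full-time person per 1 TB" rule of thumb.
# Assumption (not from the slides): 1 PB = 1000 TB (decimal units).
TB_PER_PB = 1000
PEOPLE_PER_TB = 1  # the quoted rule of thumb

dataset_pb = 1
staff_needed = dataset_pb * TB_PER_PB * PEOPLE_PER_TB
print(f"{dataset_pb} PB at 1 person/TB -> {staff_needed} full-time people")
```

For comparison, the DB-related effort reported earlier in the talk was 3 DBAs or fewer, which is why the rule cannot hold at this scale.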

The Summary

- Great success
  - ODBMS based system, migration & 2nd generation
  - Some DoD projects are being built on ODBMS
- Lots of useful experience with managing (very) large datasets
  - Would not be able to achieve all that with any RDBMS (today)
  - Thin server thick client architecture works well
  - Starting to help astronomers (LSST) to manage their petabytes
