Introduction to Parallel Architectures Dr. Laurence Boxer Niagara University CIS 270 - December '99

Parallel Computers
• Purpose - speed
• Divide a problem among processors
• Let each processor work on its portion of the problem in parallel (simultaneously) with the other processors
• Ideal - if p is the number of processors, get the solution in 1/p of the time used by a computer with 1 processor
• Actual - rarely get that much speedup, due to delays for interprocessor communications

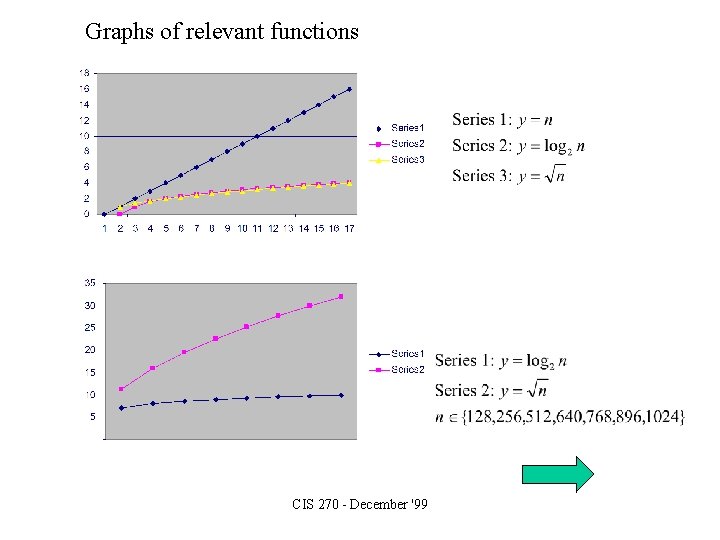
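A minimal sketch of the ideal-versus-actual comparison, assuming a simple hypothetical cost model in which the parallel time is the serial time divided by p plus a fixed communication delay. The function names and the overhead figure are illustrative assumptions, not taken from the slides.

```python
def parallel_time(t_serial, p, comm_overhead=0.0):
    """Hypothetical model: perfectly divided work plus a communication delay."""
    return t_serial / p + comm_overhead


def speedup(t_serial, t_parallel):
    """How many times faster the parallel run is than the serial run."""
    return t_serial / t_parallel


# Assumed figures: 100 time units of serial work, 4 processors.
ideal = speedup(100.0, parallel_time(100.0, 4))                       # no delays
actual = speedup(100.0, parallel_time(100.0, 4, comm_overhead=5.0))   # with delays
print(ideal, actual)
```

Under these assumed numbers the ideal speedup is 4, while 5 units of communication delay pull the actual speedup down to about 3.33.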

Graphs of relevant functions

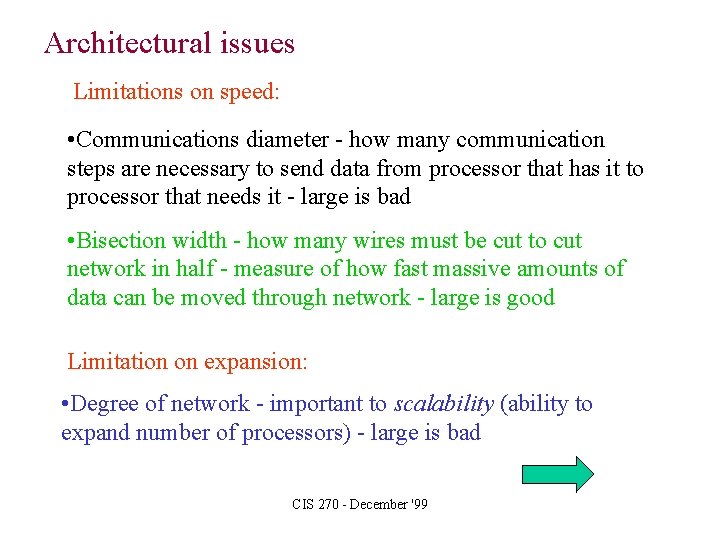

Architectural issues
Limitations on speed:
• Communication diameter - how many communication steps are necessary, in the worst case, to send data from the processor that has it to the processor that needs it - large is bad
• Bisection width - how many wires must be cut to cut the network in half - a measure of how fast massive amounts of data can be moved through the network - large is good
Limitation on expansion:
• Degree of network - the maximum number of links at any one processor - important to scalability (ability to expand the number of processors) - large is bad
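As a rough illustration of two of these measures, the sketch below computes the degree and communication diameter of a network given as an adjacency list, using breadth-first search. The helper names (`degree`, `diameter`, `linear_array`, `mesh`) are hypothetical; the two sample networks are the linear array and mesh that appear later in these slides.

```python
from collections import deque


def degree(adj):
    """Maximum number of links at any one processor."""
    return max(len(nbrs) for nbrs in adj.values())


def diameter(adj):
    """Largest shortest-path distance (in hops) between any two processors."""
    def farthest(src):
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        return max(dist.values())
    return max(farthest(s) for s in adj)


def linear_array(n):
    """n processors in a row, each linked to its left and right neighbors."""
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}


def mesh(side):
    """side x side grid, each processor linked to its N, S, E, W neighbors."""
    adj = {}
    for r in range(side):
        for c in range(side):
            nbrs = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
            adj[(r, c)] = [(a, b) for a, b in nbrs
                           if 0 <= a < side and 0 <= b < side]
    return adj


print(degree(linear_array(8)), diameter(linear_array(8)))
print(degree(mesh(4)), diameter(mesh(4)))
```

For 8 processors in a linear array this gives degree 2 and diameter 7; for a 4 x 4 mesh, degree 4 and diameter 6.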

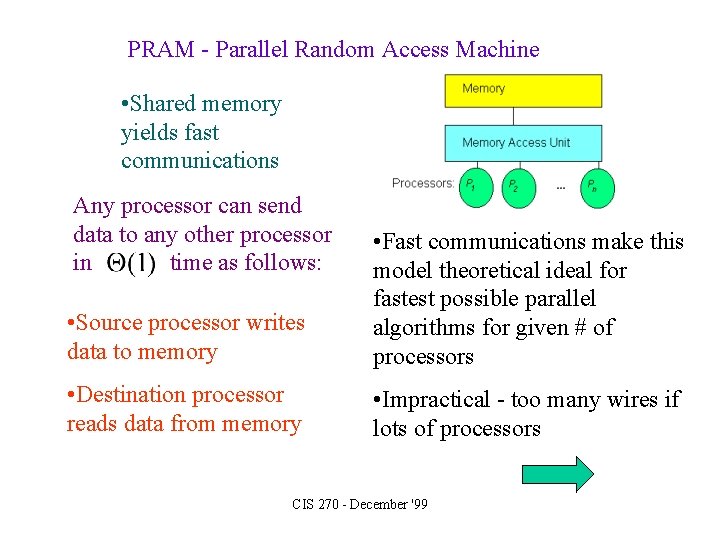

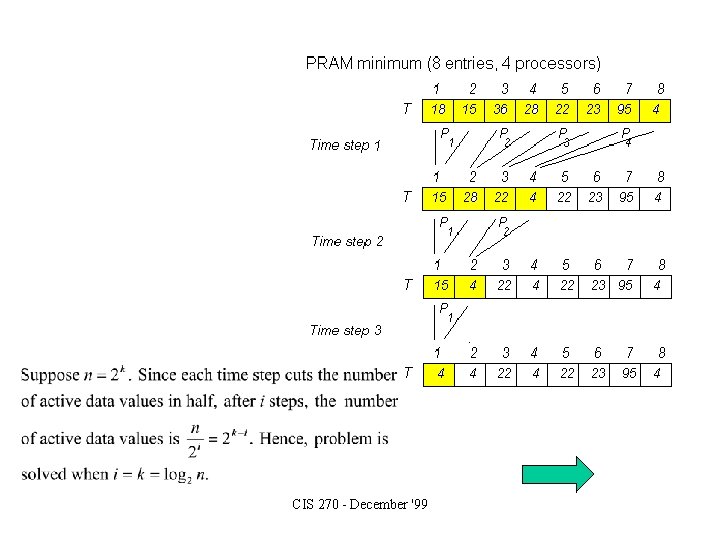

PRAM - Parallel Random Access Machine
• Shared memory yields fast communications
• Any processor can send data to any other processor in Θ(1) time, as follows:
  1. Source processor writes data to memory
  2. Destination processor reads data from memory
• Fast communications make this model the theoretical ideal for the fastest possible parallel algorithms for a given # of processors
• Impractical - too many wires if there are lots of processors

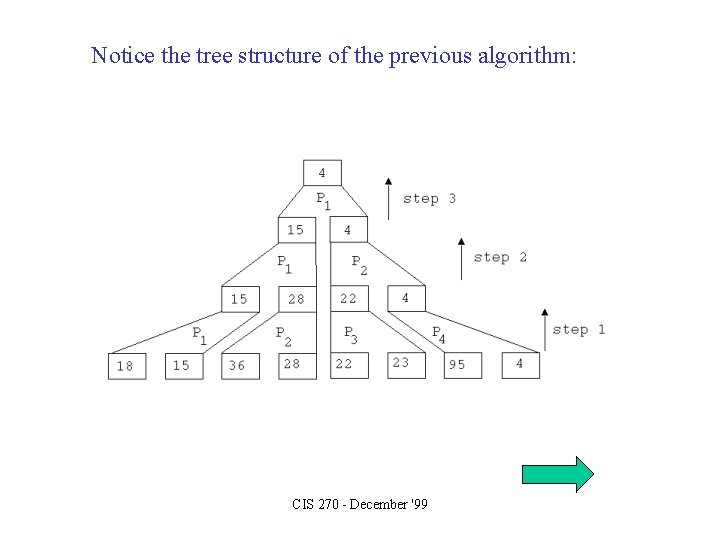

Notice the tree structure of the previous algorithm:

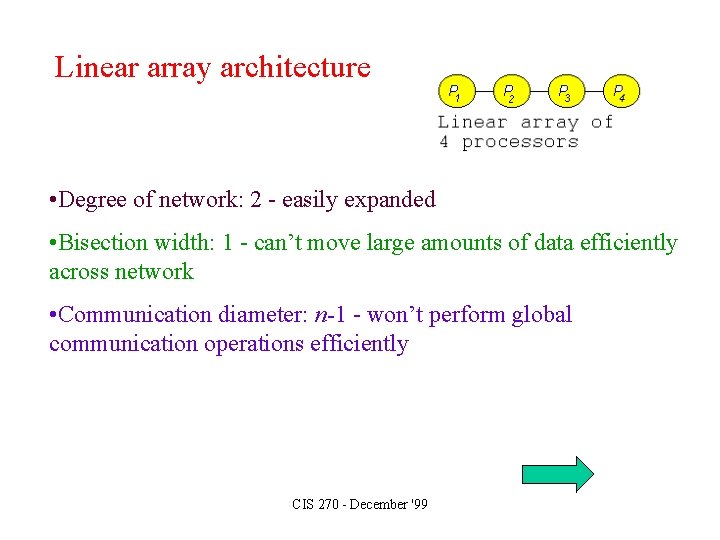
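The algorithm referred to here appears only in the figures, so the following is a sketch under an assumption: that it is the usual pairwise (tree-structured) total, in which the number of active partial sums halves at each parallel step. The function name `pram_total` is hypothetical, and the inner loop stands in for additions that a PRAM would perform simultaneously.

```python
def pram_total(values):
    """Simulate a tree-structured total of a non-empty sequence.

    Returns (total, number_of_parallel_steps).
    """
    data = list(values)
    n = len(data)
    steps = 0
    stride = 1
    while stride < n:
        # On a PRAM, every addition in this inner loop happens in the same step.
        for i in range(0, n - stride, 2 * stride):
            data[i] += data[i + stride]
        stride *= 2
        steps += 1
    return data[0], steps


print(pram_total([1, 2, 3, 4, 5, 6, 7, 8]))
```

With 8 items the simulation finishes in 3 parallel steps, one per level of the tree.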

Linear array architecture
• Degree of network: 2 - easily expanded
• Bisection width: 1 - can't move large amounts of data efficiently across the network
• Communication diameter: n-1 - won't perform global communication operations efficiently

Total on linear array:
• Assume 1 item per processor
• Communication diameter implies the time required is Ω(n)
• Since Θ(n) is also the time required to total n items on a RAM, there is no asymptotic benefit to using a linear array for this problem
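A small simulation can make the step count concrete. It assumes one straightforward scheme (not necessarily the one pictured): the running total starts at the leftmost processor and moves one link to the right per step, with each processor adding its own item. The name `linear_array_total` is hypothetical.

```python
def linear_array_total(values):
    """Total one item per processor by passing a running sum left to right.

    Returns (total, communication_steps) for a non-empty sequence.
    """
    data = list(values)
    running = data[0]
    steps = 0
    for i in range(1, len(data)):
        # Processor i receives the running sum from processor i-1
        # and adds its own item: one communication step.
        running += data[i]
        steps += 1
    return running, steps


print(linear_array_total([3, 1, 4, 1, 5]))
```

For 5 processors the total arrives at the far end after 4 steps, i.e. n-1, the communication diameter.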

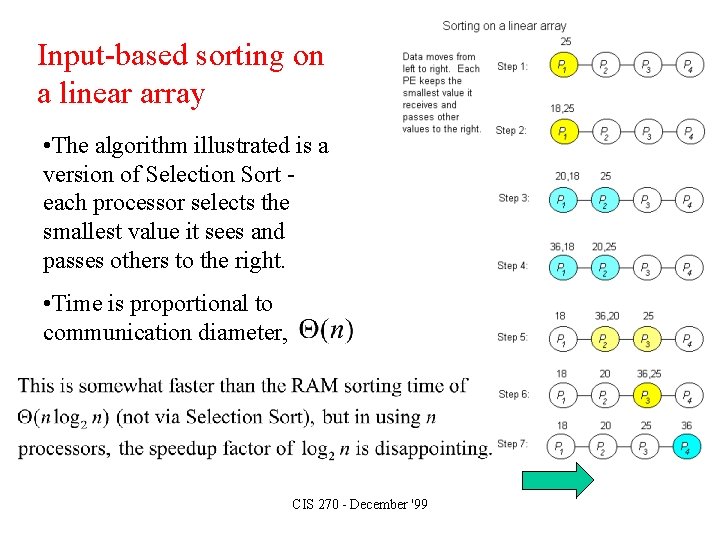

Input-based sorting on a linear array
• The algorithm illustrated is a version of Selection Sort: each processor selects the smallest value it sees and passes the others to the right.
• Time is proportional to communication diameter, Θ(n)

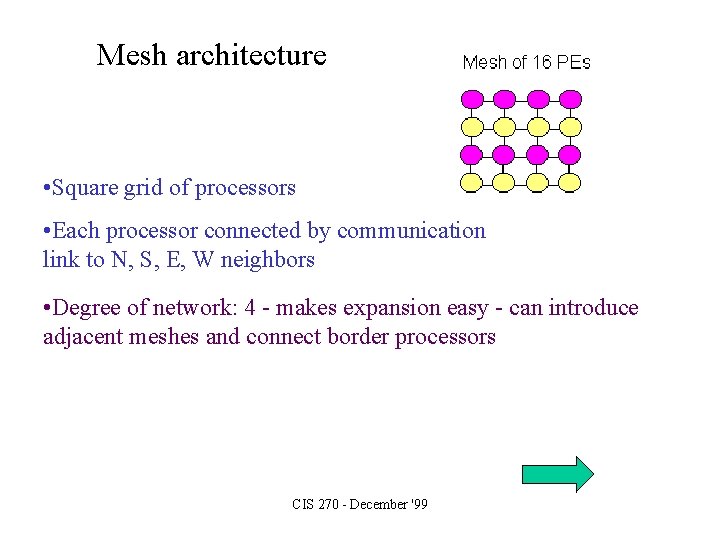

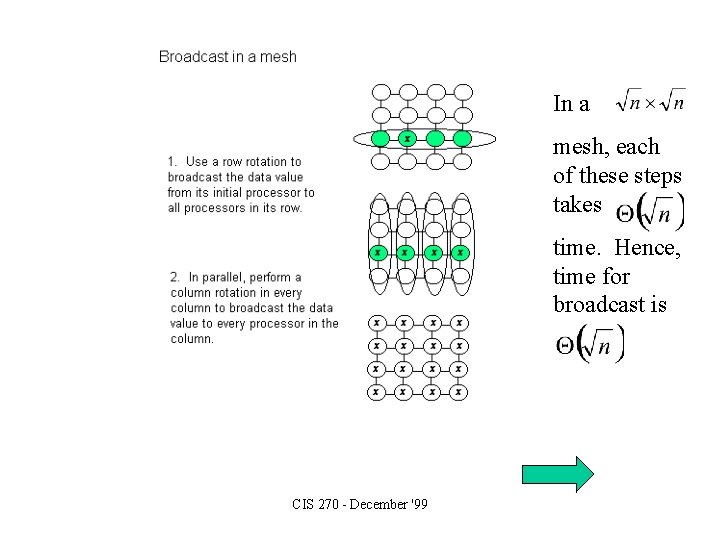
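The sorting rule described above (keep the smallest value seen, pass the rest to the right) can be sketched as a step-by-step simulation. This assumes one new item enters at the leftmost processor per step and that a passed value arrives at the next processor one step later; the function name and the timing details are assumptions, since the original illustration is not reproduced here.

```python
def linear_array_sort(items):
    """Simulate input-based sorting on a linear array of len(items) processors.

    Returns (sorted_values, steps).
    """
    n = len(items)
    held = [None] * n        # value currently kept by each processor
    incoming = [None] * n    # value arriving at each processor this step
    pending = list(items)    # items still waiting to enter at the left end
    steps = 0
    while pending or any(v is not None for v in incoming):
        if pending:
            incoming[0] = pending.pop(0)
        passed = [None] * n
        for i in range(n):
            v = incoming[i]
            if v is None:
                continue
            if held[i] is None:
                held[i] = v                      # first value seen: keep it
            else:
                if v < held[i]:
                    held[i], v = v, held[i]      # keep the smaller value
                passed[i + 1] = v                # pass the larger to the right
        incoming = passed
        steps += 1
    return held, steps


print(linear_array_sort([3, 1, 2]))
```

In this simulation three items are sorted in 5 steps (2n-1 in general), which is proportional to the communication diameter.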

Mesh architecture
• Square grid of processors (√n × √n for n processors)
• Each processor connected by communication link to N, S, E, W neighbors
• Degree of network: 4 - makes expansion easy - can introduce adjacent meshes and connect border processors

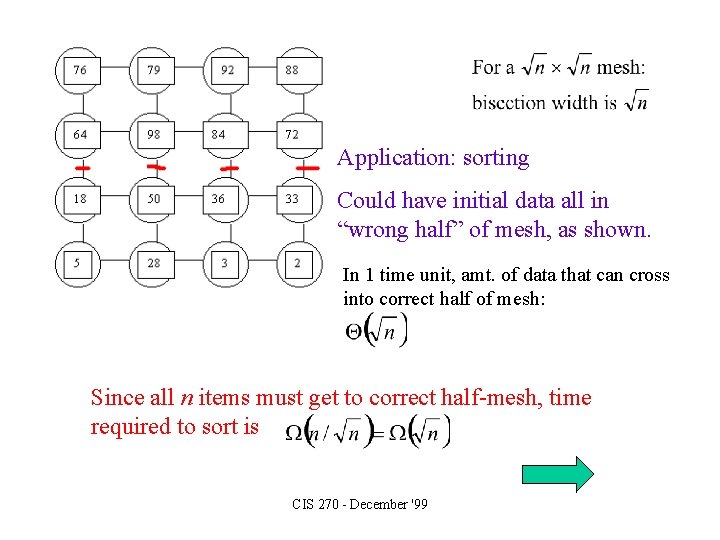

Application: sorting
Could have initial data all in the "wrong half" of the mesh, as shown. In 1 time unit, the amount of data that can cross into the correct half of the mesh is Θ(√n), the bisection width. Since all n items must get to the correct half-mesh, the time required to sort is Ω(n/√n) = Ω(√n).

In a mesh, each of these steps takes Θ(√n) time. Hence, time for broadcast is Θ(√n).

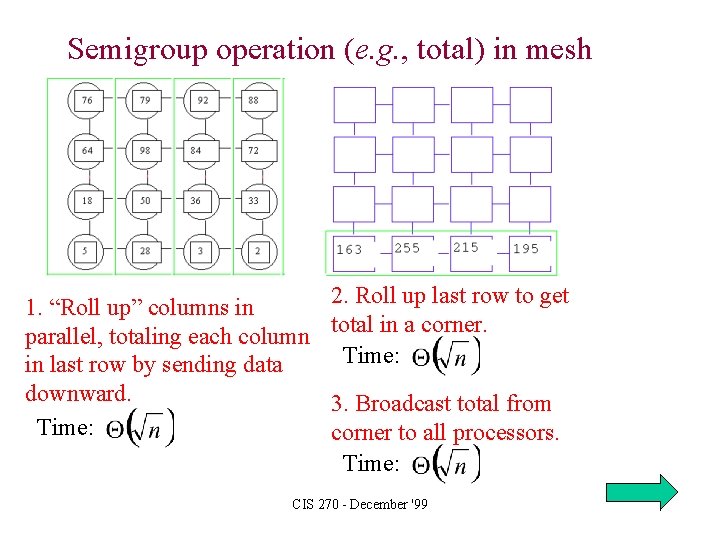

Semigroup operation (e.g., total) in mesh
1. "Roll up" columns in parallel, totaling each column in the last row by sending data downward. Time: Θ(√n)
2. Roll up the last row to get the total in a corner. Time: Θ(√n)
3. Broadcast the total from the corner to all processors. Time: Θ(√n)

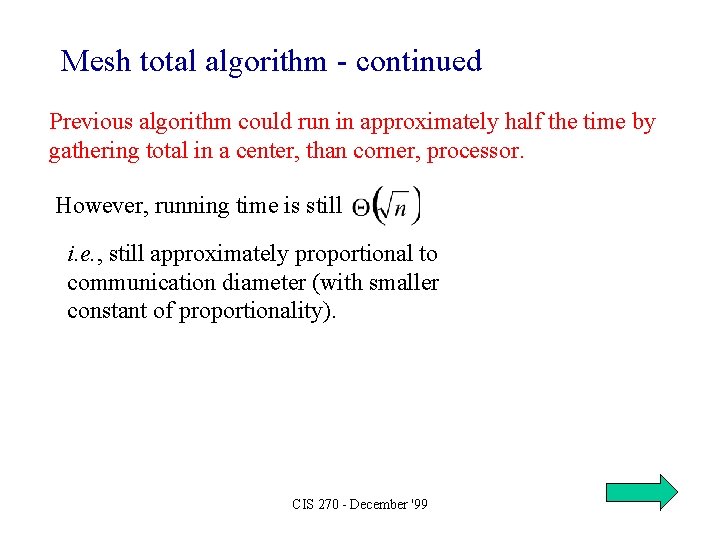
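The three steps above can be sketched as a simulation on a square grid of numbers, one item per processor. The function name `mesh_total` and the choice of the bottom-right corner are assumptions; each loop iteration that moves data one link counts as one parallel step.

```python
def mesh_total(grid):
    """Simulate the roll-up total on a side x side mesh.

    Returns (grid_with_total_everywhere, parallel_steps).
    """
    side = len(grid)
    cells = [row[:] for row in grid]
    steps = 0
    # 1. Roll up columns: all columns send partial sums downward in parallel.
    for r in range(1, side):
        for c in range(side):
            cells[r][c] += cells[r - 1][c]
        steps += 1
    # 2. Roll up the last row toward the bottom-right corner.
    for c in range(1, side):
        cells[side - 1][c] += cells[side - 1][c - 1]
        steps += 1
    # 3. Broadcast from the corner: back along the last row, then up all columns.
    for c in range(side - 2, -1, -1):
        cells[side - 1][c] = cells[side - 1][c + 1]
        steps += 1
    for r in range(side - 2, -1, -1):
        for c in range(side):
            cells[r][c] = cells[r + 1][c]
        steps += 1
    return cells, steps


result, steps = mesh_total([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(result[0][0], steps)
```

On a 3 x 3 mesh every processor ends with the total 45 after 8 steps, i.e. 4(side-1), which grows in proportion to the side length of the mesh.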

Mesh total algorithm - continued
The previous algorithm could run in approximately half the time by gathering the total in a center, rather than corner, processor. However, the running time is still Θ(√n), i.e., still approximately proportional to the communication diameter (with a smaller constant of proportionality).

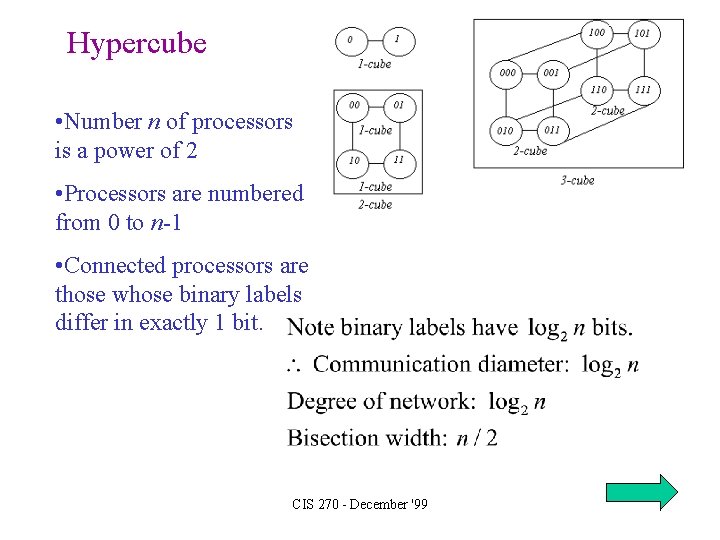

Hypercube
• Number n of processors is a power of 2
• Processors are numbered from 0 to n-1
• Connected processors are those whose binary labels differ in exactly 1 bit.

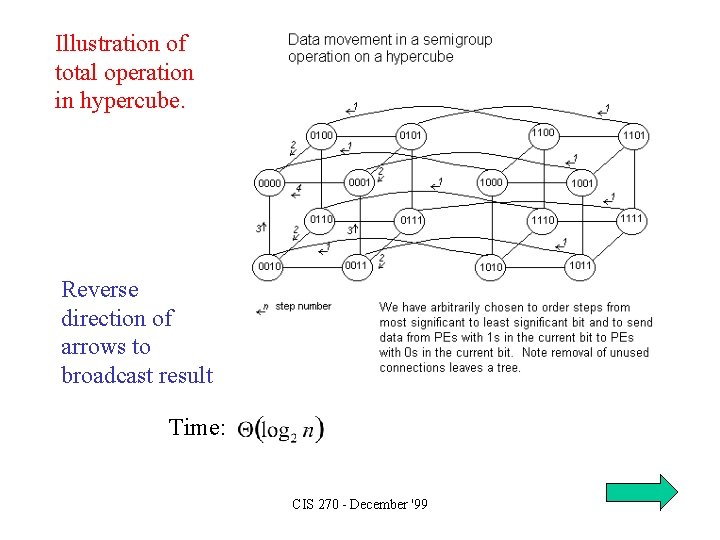

Illustration of total operation in hypercube. Reverse the direction of the arrows to broadcast the result. Time: Θ(log n)
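A sketch of how the total and broadcast might proceed, one bit position (dimension) per step. The neighbor rule follows the definition of the hypercube (labels differing in exactly 1 bit); the order in which dimensions are used and the function names are assumptions, since the illustration itself is not reproduced here.

```python
def hypercube_neighbors(label, n):
    """Labels of the processors linked to `label` in an n-processor hypercube."""
    dims = n.bit_length() - 1          # log2(n) when n is a power of 2
    return [label ^ (1 << k) for k in range(dims)]


def hypercube_total(values):
    """Total one item per processor, then broadcast the result.

    Returns (values_after_broadcast, parallel_steps).
    """
    n = len(values)
    assert n > 0 and n & (n - 1) == 0, "n must be a power of 2"
    dims = n.bit_length() - 1
    data = list(values)
    steps = 0
    # Total: in step k, partial sums cross the links of dimension k.
    for k in range(dims):
        for i in range(0, n, 1 << (k + 1)):
            data[i] += data[i | (1 << k)]
        steps += 1
    # Broadcast: the same links, used in the reverse order and direction.
    for k in reversed(range(dims)):
        for i in range(0, n, 1 << (k + 1)):
            data[i | (1 << k)] = data[i]
        steps += 1
    return data, steps


print(hypercube_neighbors(5, 8))
print(hypercube_total([0, 1, 2, 3, 4, 5, 6, 7]))
```

For 8 processors, processor 5 (binary 101) is linked to 4, 7 and 1, and the total plus broadcast takes 6 steps, i.e. 2 log2 n.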

Coarse-grained parallelism
• Most of the previous discussion was of fine-grained parallelism - # of processors comparable to # of data items
• Realistically, few budgets accommodate such expensive computers - more likely to use coarse-grained parallelism, with relatively few processors compared with # of data items.
• Coarse-grained algorithms are often based on each processor boiling its share of the data down to a single partial result, then using a fine-grained algorithm to combine these partial results

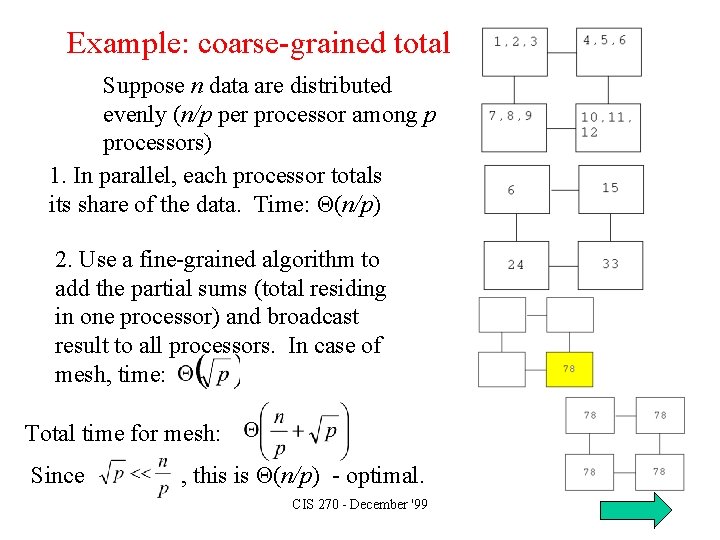

Example: coarse-grained total
Suppose n data are distributed evenly among p processors (n/p per processor).
1. In parallel, each processor totals its share of the data. Time: Θ(n/p)
2. Use a fine-grained algorithm to add the partial sums (total residing in one processor) and broadcast the result to all processors. In case of mesh, time: Θ(√p)
Total time for mesh: Θ(n/p + √p). Since √p = O(n/p) when p is small relative to n (p^(3/2) = O(n)), this is Θ(n/p) - optimal.
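A minimal sketch of the two phases, assuming the data can be split into p nearly equal blocks. The names `coarse_grained_total` and `mesh_cost` are hypothetical, the built-in `sum` over the partial results stands in for the fine-grained combining step, and the cost function drops all constant factors.

```python
import math


def coarse_grained_total(data, p):
    """Phase 1: each of p processors totals its block. Phase 2: combine.

    Returns (total, partial_sums).
    """
    n = len(data)
    block = -(-n // p)  # ceiling of n / p items per processor
    partial = [sum(data[i * block:(i + 1) * block]) for i in range(p)]
    total = sum(partial)  # stand-in for the fine-grained total and broadcast
    return total, partial


def mesh_cost(n, p):
    """Rough step count on a mesh of p processors: local work plus combining."""
    return n / p + math.sqrt(p)


print(coarse_grained_total(list(range(1, 17)), 4))
print(mesh_cost(16, 4))
```

With 16 items on 4 processors, each processor totals 4 items (partial sums 10, 26, 42, 58) before the partial sums are combined into 136.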

More info: Algorithms Sequential and Parallel, by Russ Miller and Laurence Boxer, Prentice-Hall, 2000 (available December 1999)