GPFS and Burst Buffer Integration BoF, David Paul

GPFS and Burst Buffer Integration BoF

David Paul
Computational Systems Group
Lawrence Berkeley National Lab
DPAUL@LBL.GOV
December 1, 2020

Overview:

- Cori System
- Datawarp / Burst Buffer Overview
- Burst Buffer components
- Burst Buffer processes
- GPFS / Burst Buffer Integration
- The Challenge

Note: Burst Buffer BoF @ CUG 2017, Redmond, WA, May 7-11, 2017

Cori / NERSC-8 / XC40

- System specifics:
  - 9,688 KNL nodes
  - 2,004 Haswell nodes
  - 27 PB Lustre parallel filesystem ($SCRATCH)
  - Global GPFS: $HOMEs, $PROJECTs, S/W, Modules, etc.
    - Mounted on all NERSC systems
  - 288 Datawarp servers (576 Intel SSDs, two DW servers/blade)
  - Burst Buffer of 1.5 PB @ ~1.6 TB/s, 12.5M IOPS

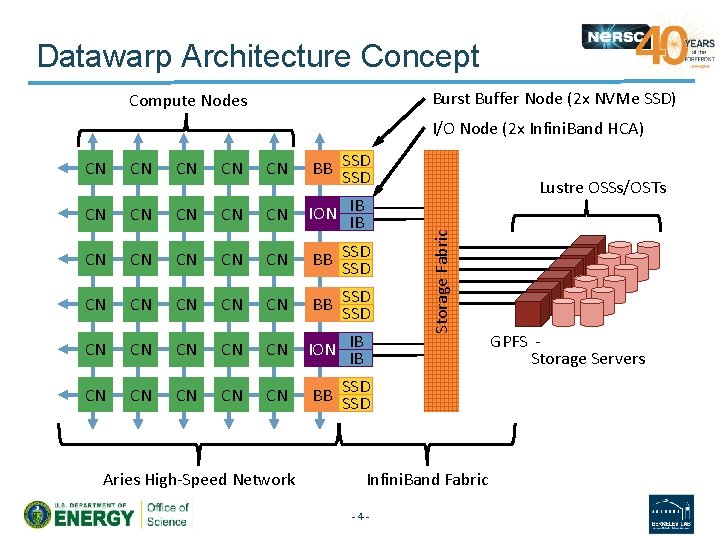
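The aggregate Burst Buffer figures above can be broken down per component. This is a back-of-envelope sketch only: it assumes decimal units (1 PB = 1000 TB, 1 TB/s = 1000 GB/s), two SSDs per DW server (576 / 288), and a perfectly even spread of capacity, bandwidth, and IOPS across all servers, which real workloads will not achieve. The function name `cori_bb_breakdown` is hypothetical.

```bash
#!/bin/bash
# Back-of-envelope per-component breakdown of the Cori Burst Buffer figures.
# Assumptions: decimal units and an even spread across all DW servers.
cori_bb_breakdown() {
  awk 'BEGIN {
    servers = 288          # Datawarp servers
    ssds_per_server = 2    # 576 SSDs / 288 servers
    capacity_tb = 1500     # 1.5 PB
    bandwidth_gbs = 1600   # ~1.6 TB/s
    iops = 12.5e6          # 12.5M IOPS
    ssds = servers * ssds_per_server
    printf "SSDs: %d\n", ssds
    printf "Capacity per SSD: %.2f TB\n", capacity_tb / ssds
    printf "Bandwidth per DW server: %.2f GB/s\n", bandwidth_gbs / servers
    printf "IOPS per DW server: %.0f\n", iops / servers
  }'
}

cori_bb_breakdown
```

Under those assumptions each SSD holds roughly 2.6 TB and each DW server contributes roughly 5.6 GB/s and about 43,000 IOPS to the aggregate.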

Datawarp Architecture Concept

Figure: the Aries high-speed network links compute nodes (CN), Burst Buffer nodes (BB, 2 x NVMe SSD) and I/O nodes (ION, 2 x InfiniBand HCA); the I/O nodes reach the Lustre OSSs/OSTs and the GPFS storage servers over the storage/InfiniBand fabric.

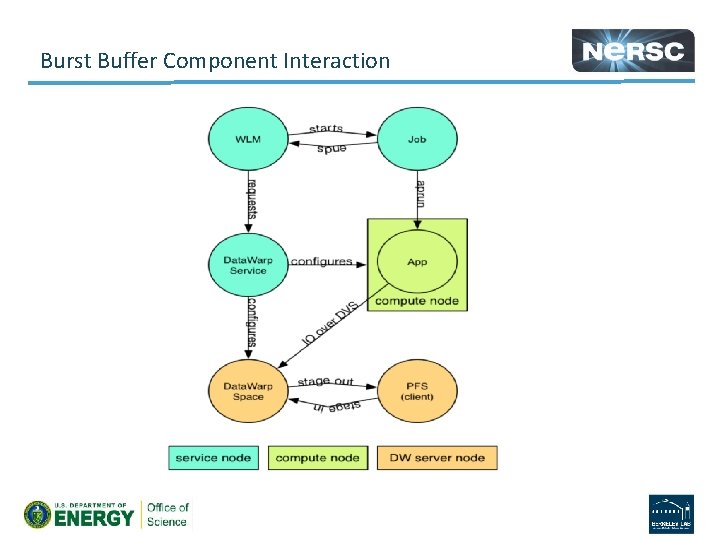

Burst Buffer Component Interaction

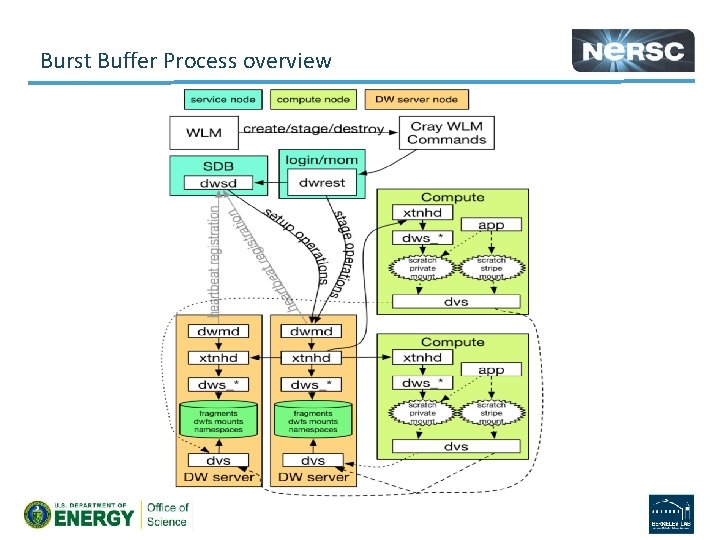

Burst Buffer Process overview

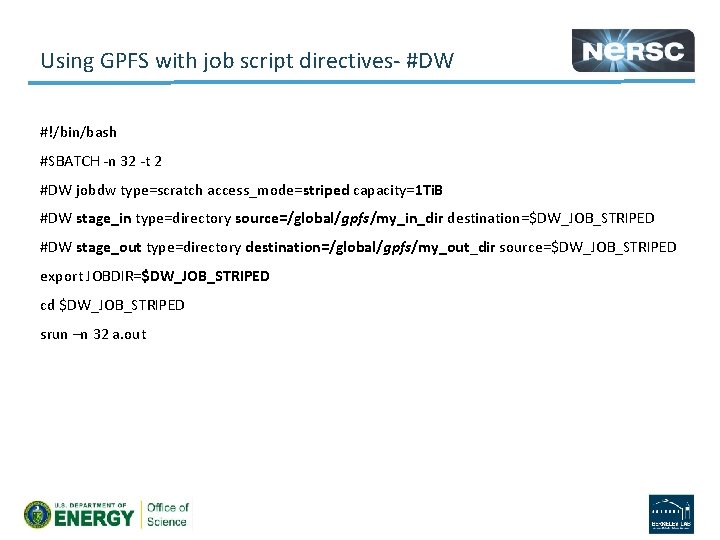

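Using GPFS with job script directives (#DW)

```bash
#!/bin/bash
#SBATCH -n 32 -t 2
# Request a 1 TiB striped scratch allocation on the Burst Buffer for this job
#DW jobdw type=scratch access_mode=striped capacity=1TiB
# Before the job starts, copy the input directory from GPFS into the allocation
#DW stage_in type=directory source=/global/gpfs/my_in_dir destination=$DW_JOB_STRIPED
# After the job ends, copy results from the allocation back to GPFS
#DW stage_out type=directory destination=/global/gpfs/my_out_dir source=$DW_JOB_STRIPED

# DW_JOB_STRIPED is set in the job environment to the striped allocation's mount point
export JOBDIR=$DW_JOB_STRIPED
cd "$DW_JOB_STRIPED"
srun -n 32 a.out
```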

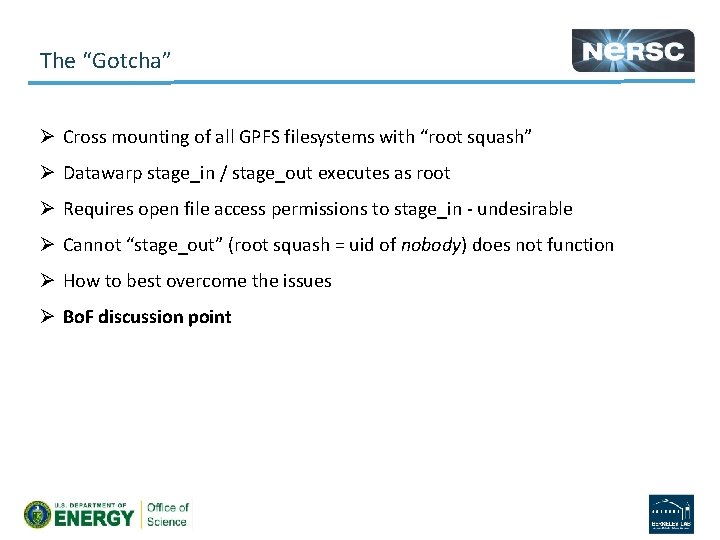
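Because stage_in reads from GPFS and stage_out writes to it, it can help to list exactly which GPFS paths a job script's #DW directives name before submitting, for example to check their permissions against the root-squash issue on the following slide. A minimal sketch, assuming one key=value pair per whitespace-separated token and no spaces or quoting inside paths; it does not validate the directives themselves. `dw_staged_paths` is a hypothetical helper name.

```bash
#!/bin/bash
# List the stage_in sources and stage_out destinations named in a job script's
# #DW directives. Assumes key=value tokens without embedded spaces.
dw_staged_paths() {
  local script="$1"
  awk '
    $1 == "#DW" && ($2 == "stage_in" || $2 == "stage_out") {
      want = ($2 == "stage_in") ? "source=" : "destination="
      for (i = 3; i <= NF; i++)
        if (index($i, want) == 1) print $2, substr($i, length(want) + 1)
    }
  ' "$script"
}

job=$(mktemp)
cat > "$job" <<'EOF'
#!/bin/bash
#DW jobdw type=scratch access_mode=striped capacity=1TiB
#DW stage_in type=directory source=/global/gpfs/my_in_dir destination=$DW_JOB_STRIPED
#DW stage_out type=directory destination=/global/gpfs/my_out_dir source=$DW_JOB_STRIPED
EOF
dw_staged_paths "$job"
# stage_in /global/gpfs/my_in_dir
# stage_out /global/gpfs/my_out_dir
rm -f "$job"
```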

The “Gotcha”

- All GPFS filesystems are cross-mounted with “root squash”.
- Datawarp stage_in / stage_out executes as root.
  - stage_in therefore requires open (world-readable) file access permissions, which is undesirable.
  - stage_out does not function: root squash maps root to the uid of nobody, which normally has no write permission on the user's destination directory.
- How best to overcome these issues?
  - BoF discussion point
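Since root squash turns the root-run staging process into nobody, a stage_in can only read files and traverse directories that are open to "other". The sketch below lists entries under a stage_in source that would likely block such a copy. It checks POSIX mode bits only: GPFS ACLs and the permissions of directories above the source are not examined, and it says nothing about stage_out, which would additionally need a destination writable by nobody. `squash_blockers` is a hypothetical helper name.

```bash
#!/bin/bash
# List entries under a stage_in source that a root-squashed reader (nobody)
# likely cannot access. Checks POSIX "other" mode bits only.
squash_blockers() {
  local src="$1"
  # Regular files need o+r to be read by nobody.
  find "$src" -type f ! -perm -o=r -print
  # Directories need o+r to be listed and o+x to be traversed.
  find "$src" -type d ! -perm -o=rx -print
}

# Example: one world-readable file and one private file.
demo=$(mktemp -d)
mkdir "$demo/in"
echo data > "$demo/in/ok.dat"
echo data > "$demo/in/private.dat"
chmod 755 "$demo/in"
chmod 644 "$demo/in/ok.dat"
chmod 600 "$demo/in/private.dat"
squash_blockers "$demo/in"   # prints only .../in/private.dat
rm -rf "$demo"
```

Running a check like this before submission does not remove the underlying problem; it only makes the "open permissions" requirement visible, which is the trade-off the slide calls undesirable.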

BoF Discussion

BoF Backup Slides

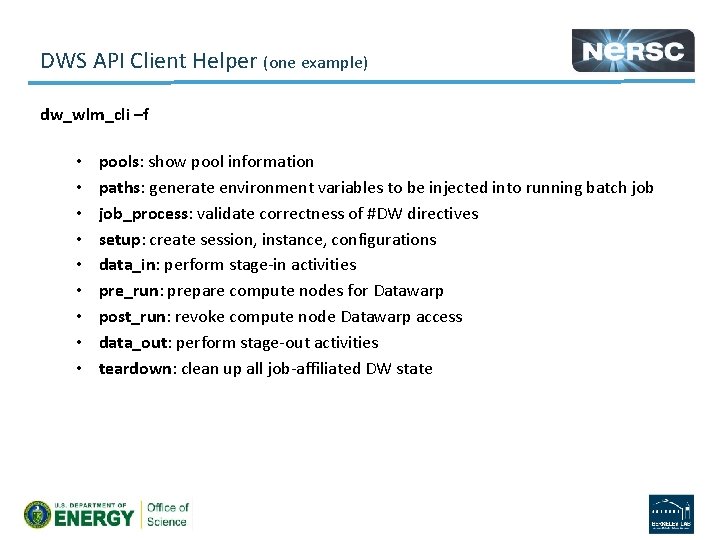

DWS API Client Helper (one example): dw_wlm_cli -f, with functions:

- pools: show pool information
- paths: generate environment variables to be injected into the running batch job
- job_process: validate correctness of #DW directives
- setup: create session, instance, configurations
- data_in: perform stage-in activities
- pre_run: prepare compute nodes for Datawarp
- post_run: revoke compute node Datawarp access
- data_out: perform stage-out activities
- teardown: clean up all job-affiliated DW state

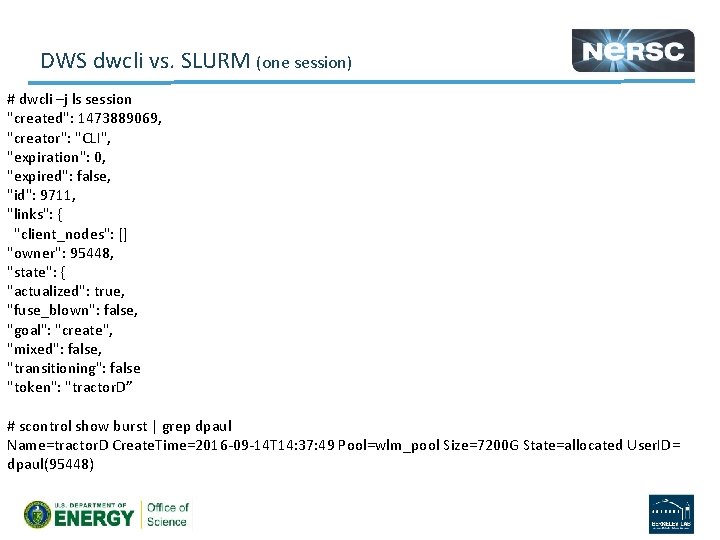
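The functions above are listed as on the slide, not in call order. As a rough sketch, assuming the usual sequence in which the workload manager drives them for one batch job (validate at submission, set up and stage in before launch, pre_run and post_run around the job, then stage out and tear down), the lifecycle can be written as a dry run. `DW_STAGES`, `dw_lifecycle`, and `echo_runner` are hypothetical names; real dw_wlm_cli calls take further arguments that are omitted here, and "pools" and "paths" are treated as queries outside the fixed sequence. The teardown-on-failure branch is an assumed policy in the spirit of the TeardownFailure flag described on a later slide.

```bash
#!/bin/bash
# Dry-run sketch of the order in which a workload manager drives the
# dw_wlm_cli functions for one batch job. "pools" and "paths" are queries
# and are left out of this fixed sequence.
DW_STAGES=(job_process setup data_in pre_run post_run data_out teardown)

# $1 is a caller-supplied command run once per stage (hypothetical; a real one
# would invoke dw_wlm_cli -f <stage> plus the arguments that stage requires).
dw_lifecycle() {
  local runner="$1" stage setup_done=0
  for stage in "${DW_STAGES[@]}"; do
    # The batch job itself runs between pre_run and post_run.
    if ! "$runner" "$stage"; then
      echo "stage $stage failed" >&2
      # Assumed policy: once setup has created DW state, tear it down on failure.
      if [ "$setup_done" -eq 1 ] && [ "$stage" != teardown ]; then
        "$runner" teardown
      fi
      return 1
    fi
    if [ "$stage" = setup ]; then setup_done=1; fi
  done
  return 0
}

# Print the calls instead of executing them.
echo_runner() { echo "dw_wlm_cli -f $1"; }
dw_lifecycle echo_runner
```

Passing the runner in as a command keeps the ordering logic testable without a DataWarp installation.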

DWS dwcli vs. SLURM (one session)

```
# dwcli -j ls session
  "created": 1473889069,
  "creator": "CLI",
  "expiration": 0,
  "expired": false,
  "id": 9711,
  "links": {
    "client_nodes": []
  "owner": 95448,
  "state": {
    "actualized": true,
    "fuse_blown": false,
    "goal": "create",
    "mixed": false,
    "transitioning": false
  "token": "tractor.D"

# scontrol show burst | grep dpaul
Name=tractor.D CreateTime=2016-09-14T14:37:49 Pool=wlm_pool Size=7200G State=allocated UserID=dpaul(95448)
```

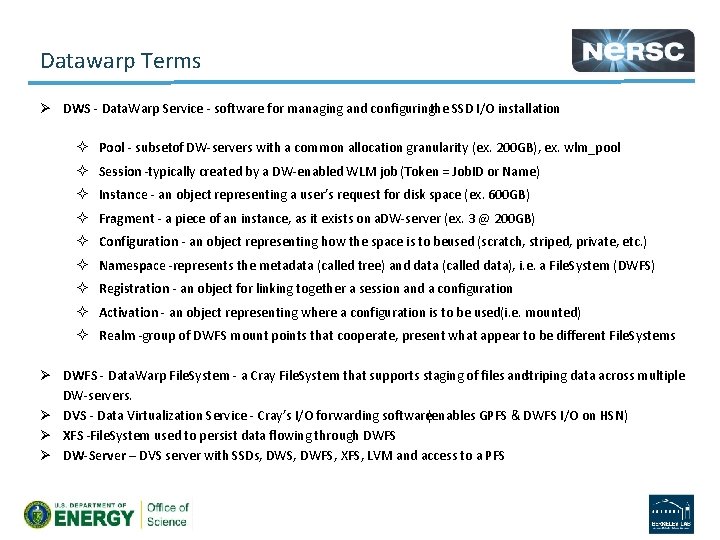
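The two views on this slide describe the same allocation: the DataWarp session token tractor.D is the Slurm buffer Name, and the session owner 95448 is the uid inside UserID=dpaul(95448). A small cross-check along those lines is sketched below. It uses plain grep/sed text matching on the output formats shown here, not a JSON parser, and `dw_session_matches` is a hypothetical helper name.

```bash
#!/bin/bash
# Check that a DataWarp session (dwcli -j ls session) and a Slurm burst buffer
# record (scontrol show burst) describe the same allocation: the DW token should
# equal the Slurm Name, and the DW owner should equal the uid in UserID=name(uid).
# Plain grep/sed text matching; a production tool should use a real JSON parser.
dw_session_matches() {
  local json="$1" bb_line="$2" token owner name uid
  token=$(printf '%s\n' "$json" | grep -o '"token": *"[^"]*"' | sed 's/.*: *"\([^"]*\)"/\1/')
  owner=$(printf '%s\n' "$json" | grep -o '"owner": *[0-9][0-9]*' | grep -o '[0-9][0-9]*$')
  name=$(printf '%s\n' "$bb_line" | grep -o 'Name=[^ ]*' | head -n 1 | cut -d= -f2)
  uid=$(printf '%s\n' "$bb_line" | grep -o 'UserID=[^ ]*' | sed 's/.*(\([0-9]*\))/\1/')
  [ -n "$token" ] && [ "$token" = "$name" ] && [ "$owner" = "$uid" ]
}

session='"owner": 95448,
"token": "tractor.D"'
record='Name=tractor.D CreateTime=2016-09-14T14:37:49 Pool=wlm_pool Size=7200G State=allocated UserID=dpaul(95448)'
dw_session_matches "$session" "$record" && echo "same allocation"
```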

Datawarp Terms

- DWS: DataWarp Service, software for managing and configuring the SSD I/O installation
  - Pool: subset of DW servers with a common allocation granularity (e.g., 200 GB), e.g., wlm_pool
  - Session: typically created by a DW-enabled WLM job (Token = JobID or Name)
  - Instance: an object representing a user's request for disk space (e.g., 600 GB)
  - Fragment: a piece of an instance as it exists on a DW server (e.g., 3 @ 200 GB)
  - Configuration: an object representing how the space is to be used (scratch, striped, private, etc.)
  - Namespace: represents the metadata (called tree) and data (called data), i.e., a filesystem (DWFS)
  - Registration: an object for linking together a session and a configuration
  - Activation: an object representing where a configuration is to be used (i.e., mounted)
  - Realm: a group of DWFS mount points that cooperate and present what appear to be different filesystems
- DWFS: DataWarp File System, a Cray filesystem that supports staging of files and striping data across multiple DW servers
- DVS: Data Virtualization Service, Cray's I/O forwarding software (enables GPFS and DWFS I/O on the HSN)
- XFS: filesystem used to persist data flowing through DWFS
- DW-Server: DVS server with SSDs, DWS, DWFS, XFS, LVM, and access to a PFS

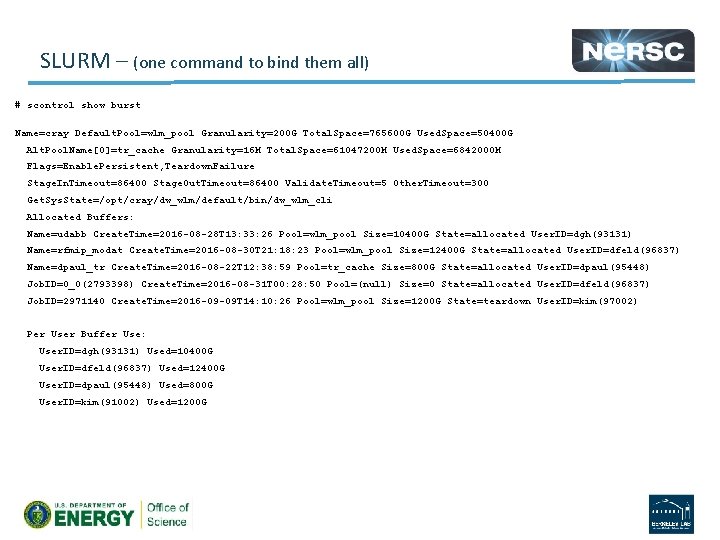
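The Pool, Instance, and Fragment terms fit together arithmetically: a capacity request is rounded up to whole granules of the pool and spread over DW servers as fragments. A minimal sketch, assuming each fragment is exactly one granule as in the 600 GB request becoming 3 fragments of 200 GB, and sizes given in whole GB. `dw_fragments` and `dw_allocated` are hypothetical helper names, and the 650 GB request is an illustrative value.

```bash
#!/bin/bash
# Round a capacity request up to whole pool granules and report the fragment
# count and the space actually allocated. Assumes one granule per fragment.
dw_fragments() {
  local request="$1" gran="$2"
  echo $(( (request + gran - 1) / gran ))   # ceiling division
}
dw_allocated() {
  local request="$1" gran="$2"
  echo $(( $(dw_fragments "$request" "$gran") * gran ))
}

dw_fragments 600 200   # 3 fragments
dw_fragments 650 200   # 4 fragments
dw_allocated 650 200   # 800 GB allocated for a 650 GB request
```

The rounding is why pool granularity matters to users: with a 200 GB granule, a request just over a multiple of 200 GB costs a whole extra fragment.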

SLURM (one command to bind them all)

```
# scontrol show burst
Name=cray DefaultPool=wlm_pool Granularity=200G TotalSpace=765600G UsedSpace=50400G
  AltPoolName[0]=tr_cache Granularity=16M TotalSpace=61047200M UsedSpace=6842000M
  Flags=EnablePersistent,TeardownFailure
  StageInTimeout=86400 StageOutTimeout=86400 ValidateTimeout=5 OtherTimeout=300
  GetSysState=/opt/cray/dw_wlm/default/bin/dw_wlm_cli
  Allocated Buffers:
    Name=udabb CreateTime=2016-08-28T13:33:26 Pool=wlm_pool Size=10400G State=allocated UserID=dgh(93131)
    Name=rfmip_modat CreateTime=2016-08-30T21:18:23 Pool=wlm_pool Size=12400G State=allocated UserID=dfeld(96837)
    Name=dpaul_tr CreateTime=2016-08-22T12:38:59 Pool=tr_cache Size=800G State=allocated UserID=dpaul(95448)
    JobID=0_0(2793398) CreateTime=2016-08-31T00:28:50 Pool=(null) Size=0 State=allocated UserID=dfeld(96837)
    JobID=2971140 CreateTime=2016-09-09T14:10:26 Pool=wlm_pool Size=1200G State=teardown UserID=kim(97002)
  Per User Buffer Use:
    UserID=dgh(93131) Used=10400G
    UserID=dfeld(96837) Used=12400G
    UserID=dpaul(95448) Used=800G
    UserID=kim(91002) Used=1200G
```

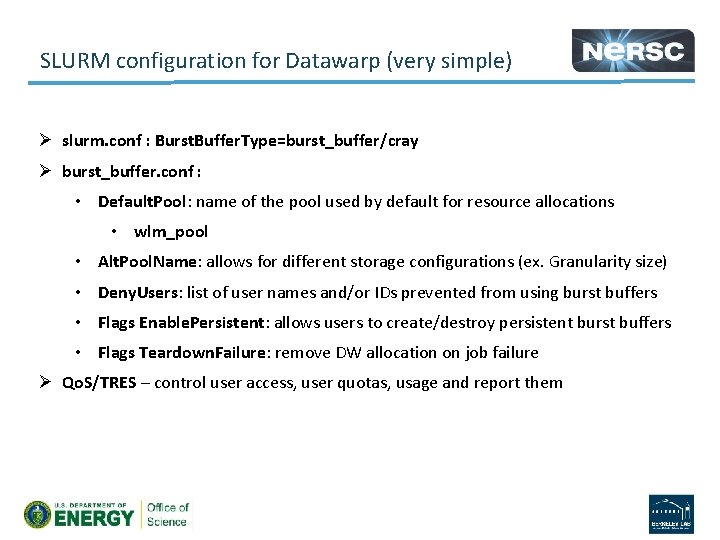

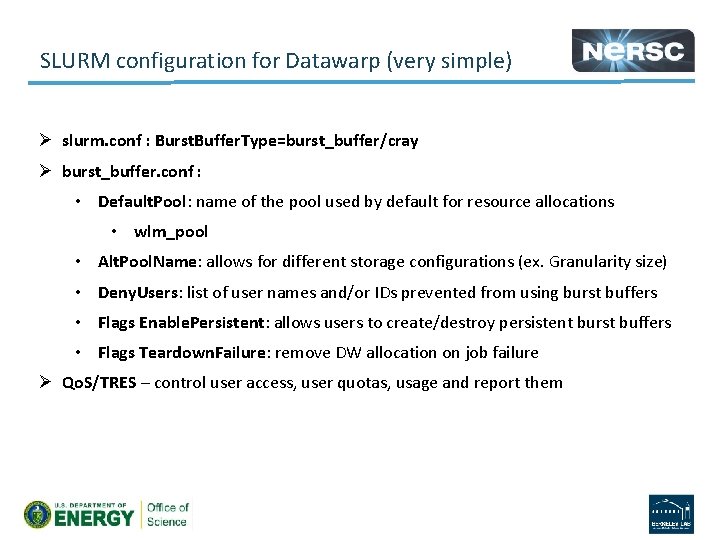
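The Per User Buffer Use section is an aggregate of the Allocated Buffers entries. The sketch below reproduces that aggregation with awk, which can be handy for ad hoc reports. It assumes Size values expressed in G (or a bare 0), as in this sample, and skips entries with other unit suffixes rather than converting them; `bb_use_per_user` is a hypothetical helper name.

```bash
#!/bin/bash
# Sum allocated burst buffer space per user from "scontrol show burst" text on stdin.
# Only Size values in G (or a bare 0) are summed; entries with other unit
# suffixes (e.g. M) are skipped, not converted.
bb_use_per_user() {
  awk '
    /Size=/ && /UserID=/ {
      size = ""; user = ""
      for (i = 1; i <= NF; i++) {
        if ($i ~ /^Size=/)   size = substr($i, 6)
        if ($i ~ /^UserID=/) user = substr($i, 8)
      }
      if (size !~ /^[0-9]+G?$/) next
      sub(/G$/, "", size)
      used[user] += size
    }
    END { for (u in used) printf "%s %dG\n", u, used[u] }
  ' | sort
}

bb_use_per_user <<'EOF'
Name=udabb CreateTime=2016-08-28T13:33:26 Pool=wlm_pool Size=10400G State=allocated UserID=dgh(93131)
Name=rfmip_modat CreateTime=2016-08-30T21:18:23 Pool=wlm_pool Size=12400G State=allocated UserID=dfeld(96837)
Name=dpaul_tr CreateTime=2016-08-22T12:38:59 Pool=tr_cache Size=800G State=allocated UserID=dpaul(95448)
JobID=0_0(2793398) CreateTime=2016-08-31T00:28:50 Pool=(null) Size=0 State=allocated UserID=dfeld(96837)
JobID=2971140 CreateTime=2016-09-09T14:10:26 Pool=wlm_pool Size=1200G State=teardown UserID=kim(97002)
EOF
```

On these sample lines it reports 10400G for dgh, 12400G for dfeld (the zero-size JobID entry adds nothing), 800G for dpaul, and 1200G for kim, the same totals Slurm prints under Per User Buffer Use.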

SLURM configuration for Datawarp (very simple)

- slurm.conf: BurstBufferType=burst_buffer/cray
- burst_buffer.conf:
  - DefaultPool: name of the pool used by default for resource allocations (e.g., wlm_pool)
  - AltPoolName: allows for different storage configurations (e.g., granularity size)
  - DenyUsers: list of user names and/or IDs prevented from using burst buffers
  - Flags=EnablePersistent: allows users to create/destroy persistent burst buffers
  - Flags=TeardownFailure: remove the DW allocation on job failure
- QoS/TRES: control user access and user quotas, and track and report usage

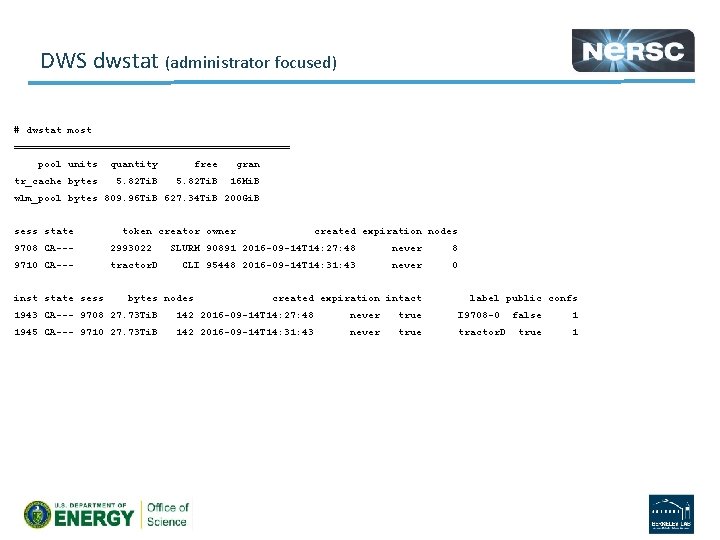
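The two files described above can be sketched as follows. Parameter names come from this slide and from the scontrol show burst output on the backup slides, and the values (flags, timeouts, GetSysState path) are copied from that output; AltPoolName is not included. `write_bb_config` and the `slurm.conf.bb` fragment file name are hypothetical, and the DenyUsers entries are placeholder names left commented out.

```bash
#!/bin/bash
# Write a minimal slurm.conf fragment and burst_buffer.conf for the Cray
# Datawarp plugin into the directory given as $1. Values mirror the
# "scontrol show burst" output on the backup slides; adjust for your site.
write_bb_config() {
  local dir="$1"
  cat > "$dir/slurm.conf.bb" <<'EOF'
BurstBufferType=burst_buffer/cray
EOF
  cat > "$dir/burst_buffer.conf" <<'EOF'
# Pool used when a #DW jobdw request does not name one
DefaultPool=wlm_pool
# Allow persistent buffers; release DW allocations when a job fails
Flags=EnablePersistent,TeardownFailure
# dw_wlm_cli is the Datawarp helper Slurm calls to query DW state
GetSysState=/opt/cray/dw_wlm/default/bin/dw_wlm_cli
# Timeouts in seconds
StageInTimeout=86400
StageOutTimeout=86400
ValidateTimeout=5
OtherTimeout=300
# Placeholder user names: uncomment to block specific users
#DenyUsers=user1,user2
EOF
}

out=$(mktemp -d)
write_bb_config "$out"
cat "$out/burst_buffer.conf"
rm -rf "$out"
```

The single slurm.conf line is meant to be merged into the site's existing slurm.conf rather than used on its own.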

DWS dwstat (administrator focused)

```
# dwstat most
=======================
pool units quantity free gran
tr_cache bytes 5.82TiB 16MiB
wlm_pool bytes 809.96TiB 627.34TiB 200GiB

sess state token creator owner created expiration nodes
9708 CA--- 2993022 SLURM 90891 2016-09-14T14:27:48 never 8
9710 CA--- tractor.D CLI 95448 2016-09-14T14:31:43 never 0

inst state sess bytes nodes created expiration intact label public confs
1943 CA--- 9708 27.73TiB 142 2016-09-14T14:27:48 never true I9708-0 false 1
1945 CA--- 9710 27.73TiB 142 2016-09-14T14:31:43 never true tractor.D true 1
```
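The dwstat numbers are internally consistent in a way that illustrates the pool and fragment terms: each instance spans 142 nodes of the 200 GiB-granularity wlm_pool, and 142 x 200 GiB is 27.73 TiB, the instance size shown. The free column likewise gives the pool's utilization. A minimal sketch, assuming binary units (1 TiB = 1024 GiB) and one 200 GiB fragment per node; `inst_tib` and `pool_used_pct` are hypothetical helper names.

```bash
#!/bin/bash
# Two consistency checks on dwstat output, using awk for floating-point math.
# Binary units assumed: 1 TiB = 1024 GiB.
# 1) Instance size = node (fragment) count x pool granularity in GiB.
inst_tib() { awk -v n="$1" -v g="$2" 'BEGIN { printf "%.2fTiB\n", n * g / 1024 }'; }
# 2) Pool utilization from the quantity and free columns (same units).
pool_used_pct() { awk -v q="$1" -v f="$2" 'BEGIN { printf "%.1f%%\n", 100 * (q - f) / q }'; }

inst_tib 142 200              # 27.73TiB, matching instances 1943 and 1945
pool_used_pct 809.96 627.34   # 22.5% of wlm_pool in use
```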
