
FermiCloud On-Demand Services: How Cloud Can Be Used for Data Preservation

Steven C. Timm, Grid and Cloud Services Dept.

Data Preservation Workshop, March 28, 2014

Outline

- Intro to the FermiCloud Project
- Intro to FermiCloud On-Demand Services
- Compute and Data Movement challenges for Fermilab
- Current Projects of Interest to Data Preservation
- Cloud Technologies that could be of help in the future

DISCLAIMER:

- These slides have not been reviewed by SCD Management or by the Data Preservation Group.
- Any mention of cloud technologies on these slides should not be construed to mean that SCD is committing to offer these technologies as production services, or that they are part of the official Fermilab DP Plan.

Steven C. Timm | FermiCloud On-Demand Services | 3/13/2014

FermiCloud Project Accomplishments to Date

- Identified OpenNebula technology for Infrastructure-as-a-Service
- Deployed a production-quality Infrastructure-as-a-Service facility
- Made technical breakthroughs in authentication, authorization, fabric deployment, accounting, and high-availability cloud infrastructure
- Continues to evolve and develop cloud computing techniques for scientific workflows
- Supports both development/integration and production user communities on a common infrastructure
- Project started in 2010
- OpenNebula 2.0 cloud available to users since fall 2010
- OpenNebula 3.2 cloud up since June 2012
- FOCUS on using these technologies to deliver On-Demand Services to scientific users

FermiCloud, OSG AHM 2013 | S. Timm | http://fclweb.fnal.gov | 14-Mar-2013

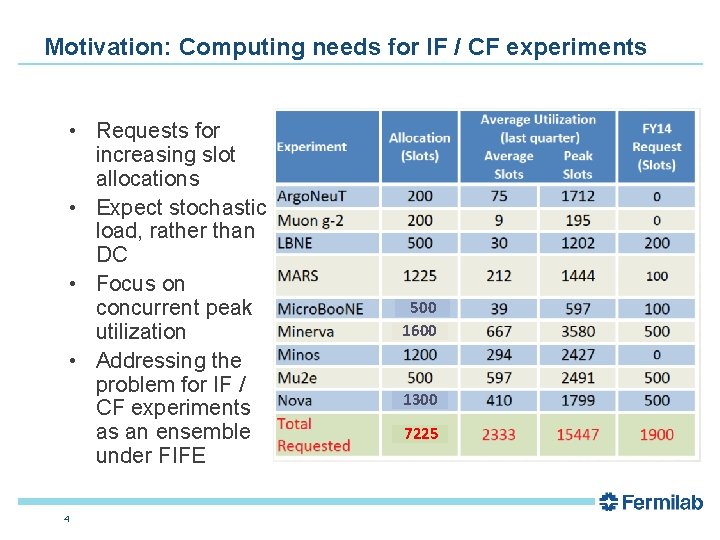

Motivation: Computing needs for IF/CF experiments

- Requests for increasing slot allocations
- Expect a stochastic load rather than a steady (DC) load
- Focus on concurrent peak utilization
- Addressing the problem for IF/CF experiments as an ensemble under FIFE
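The case for provisioning the experiments as an ensemble can be made concrete: when stochastic loads peak at different times, the peak of the summed demand is smaller than the sum of each experiment's own peak. A minimal sketch; the experiment names and hourly slot counts are made up for illustration and are not Fermilab data.

```python
# Hypothetical hourly slot demand per experiment (illustrative numbers only).
# Each experiment peaks at a different hour.
demand = {
    "expt_a": [100, 400, 150, 100],
    "expt_b": [300, 100, 100, 350],
    "expt_c": [50, 50, 400, 100],
}

def sum_of_peaks(series_by_expt):
    """Slots needed if each experiment is provisioned for its own peak."""
    return sum(max(series) for series in series_by_expt.values())

def peak_of_sum(series_by_expt):
    """Slots needed if the ensemble is provisioned for its concurrent peak."""
    hourly_totals = [sum(values) for values in zip(*series_by_expt.values())]
    return max(hourly_totals)

print(sum_of_peaks(demand))  # 400 + 350 + 400 = 1150
print(peak_of_sum(demand))   # hourly totals 450, 550, 650, 550 -> 650
```

The gap between the two numbers is the capacity a shared, dynamically reconfigured pool can avoid buying, as long as the peaks really do not coincide.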

Compute Challenges for Fermilab

- Workloads are becoming more heterogeneous:
  - Very large data sets (DES, Darkside)
  - Consecutive tasks on the same large data set (DES)
  - Large memory requirements (LBNE)
  - Large shower libraries (Neutrino)
  - Execution times on the order of minutes on O(30 GB) of data
  - Whole-node scheduling
- Need flexible ways to reconfigure compute clusters as the workload changes
- In FY16: 8 major experiments at Fermilab from 3 frontiers. Usage patterns are hard to plan over “slow” (yearly?) cycles; we need to be more dynamic.

“Data” Movement Challenges for Fermilab

- Many types of network bandwidth:
  - Large sets of input files for simulation and analysis (5-30 GB)
  - Output files produced by simulation and analysis
  - Code (2-20 GB)
  - OS image (2-16 GB)
  - Database access (Postgres, MySQL)
- Load is stochastic: different experiments run at different times
- Whether on a remote cloud or a grid, we need:
  - A web caching service
  - Database forwarding services
  - A temporary storage element
  - A coordinated launch so that compute nodes know where all of the above are
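The last requirement, a coordinated launch, amounts to starting the service VMs first and then handing every worker VM their addresses at boot. A minimal sketch of that hand-off; the key names, addresses, and `render_worker_context` helper are hypothetical and only mimic the idea of per-VM contextualization data, not any specific OpenNebula or EC2 format.

```python
from dataclasses import dataclass

@dataclass
class WorkflowServices:
    """Endpoints of the auxiliary VMs launched for one workflow (hypothetical)."""
    web_cache: str        # web caching proxy, host:port
    db_proxy: str         # database forwarding service, host:port
    storage_element: str  # temporary storage element URL

def render_worker_context(services: WorkflowServices, worker_id: int) -> str:
    """Build a KEY=value block handed to one worker VM at boot.

    The services are launched first so their addresses are known; every
    worker then receives the same endpoints plus its own identifier.
    """
    entries = {
        "WORKER_ID": str(worker_id),
        "HTTP_PROXY": services.web_cache,
        "DB_PROXY": services.db_proxy,
        "STORAGE_ELEMENT": services.storage_element,
    }
    return "\n".join(f"{key}={value}" for key, value in entries.items())

services = WorkflowServices(
    web_cache="10.0.0.5:3128",
    db_proxy="10.0.0.6:5432",
    storage_element="https://10.0.0.7/scratch",
)
print(render_worker_context(services, worker_id=17))
```

Keeping the endpoints in one object means the workers never have to discover services on their own; they only read what the launcher wrote.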

On-Demand Services: Batch Computing

- The de facto standard is the Amazon “EC2” API
  - Most open-source cloud computing packages try to emulate it (including OpenNebula and OpenStack).
- Grid Bursting
  - Launch generic worker node virtual machines on your own site to extend your own grid cluster, based on demand.
- Cloud Bursting
  - Launch experiment-specific worker node virtual machines on FermiCloud and other clouds, based on demand.
  - Launch associated auxiliary virtual machines to meet the data movement, database forwarding, and web caching needs of the workflow, both at the submission site and on the remote cloud.
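Both grid bursting and cloud bursting come down to a demand check: look at the idle jobs in the batch queue and decide how many worker VMs to start, subject to a quota on the target cloud. A minimal sketch of such a policy; the function name, its parameters, and the single round-up rule are assumptions, not the FermiCloud bursting implementation. The actual launch request (for example through an EC2-compatible API) is deliberately left out.

```python
import math

def vms_to_launch(idle_jobs: int, running_vms: int, jobs_per_vm: int,
                  max_vms: int) -> int:
    """Return how many worker VMs to start for the current idle-job backlog.

    Hypothetical policy: start enough VMs to cover every idle job, but never
    exceed the quota (max_vms) for this cloud.
    """
    if jobs_per_vm <= 0:
        raise ValueError("jobs_per_vm must be positive")
    wanted = math.ceil(idle_jobs / jobs_per_vm)
    headroom = max(max_vms - running_vms, 0)
    return min(wanted, headroom)

print(vms_to_launch(idle_jobs=37, running_vms=80, jobs_per_vm=4, max_vms=100))  # 10
print(vms_to_launch(idle_jobs=37, running_vms=95, jobs_per_vm=4, max_vms=100))  # 5
```

In the second call the quota, not the backlog, limits the burst; a real policy would also retire idle VMs and could spill the remainder to another cloud.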

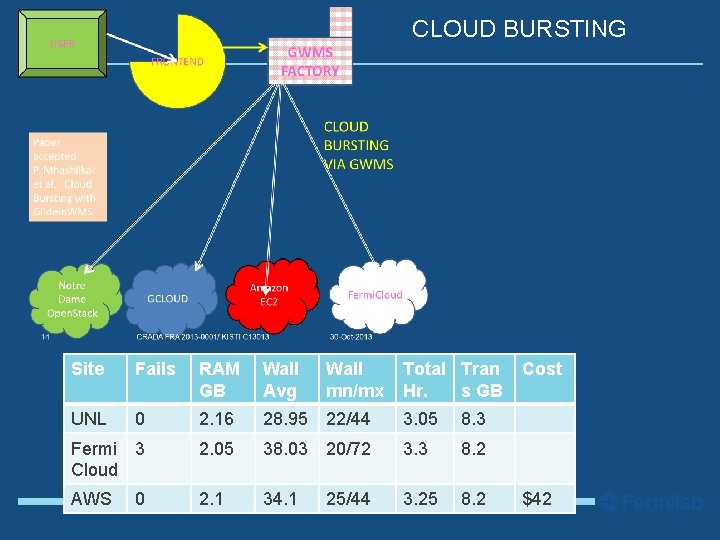

CLOUD BURSTING

| Site | Fails | RAM (GB) | Wall avg | Wall min/max | Total hrs | Transferred (GB) |
|---|---|---|---|---|---|---|
| UNL | 0 | 2.16 | 28.95 | 22/44 | 3.05 | 8.3 |
| FermiCloud | 3 | 2.05 | 38.03 | 20/72 | 3.3 | 8.2 |
| AWS | 0 | 2.1 | 34.1 | 25/44 | 3.25 | 8.2 |

Cost: $42

On-Demand Services: Data Movement

- Stochastic load of data movement
  - Different experiments run different workflows at different times
  - Need web caching and database proxies on the remote grid/cloud site
  - A temporary storage element on the remote grid/cloud site helps as well
  - Data movement servers need to scale dynamically with the expected data load
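Why a web cache on the remote site matters is mostly arithmetic. A back-of-the-envelope sketch, assuming every worker needs the same file and that after the first fetch every request is a cache hit (real hit rates will be lower); the 100 workers and 20 GB code release are taken from the ranges quoted in these slides, and the helper name is hypothetical.

```python
def wan_transfer_gb(n_workers: int, file_gb: float, site_cache: bool) -> float:
    """GB pulled across the wide-area link so every worker gets the same file.

    Simplifying assumption: with a site-local web cache the file crosses the
    WAN once and every later request is served locally; without a cache,
    each worker fetches its own copy.
    """
    copies = 1 if site_cache else n_workers
    return copies * file_gb

# 100 workers that all need a 20 GB code release (upper end of 2-20 GB)
print(wan_transfer_gb(100, 20.0, site_cache=False))  # 2000.0
print(wan_transfer_gb(100, 20.0, site_cache=True))   # 20.0
```

The same reasoning applies to database proxies: repeated identical queries are answered at the remote site instead of crossing back to Fermilab.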

Projects of Interest to Data Preservation: Complex Workflow

- Coordinated launch of a complex workflow (compute + caching + storage)
- Launch a set of virtual machines
  - Let them know where all the others are: which web caching server, which database server, etc.
  - Early work on this was done by IIT student Siyuan Ma in 2012.
- Investigate the benefits of a far-end temporary storage element:
  - Is it better to have 400 machines waiting to send back their I/O (and charging you while they run)?
  - Or should you queue it all on a few machines (incurring S3 storage charges)? How many is a few?
  - The current strategy relies on a locking mechanism: one of many processes takes out a lock and attempts to send back all data queued at that time.
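The locking strategy in the last bullet can be sketched with POSIX advisory file locks. This is a minimal Python sketch under stated assumptions: outputs are queued as files in a shared directory, `fcntl.flock` is a usable lock on that filesystem (it may not be on every network filesystem), and `send` stands in for whatever transfer tool actually copies the data back. The function and parameter names are hypothetical, not taken from the FermiCloud code.

```python
import fcntl
import os

def try_drain(queue_dir: str, lock_path: str, send) -> int:
    """Try to become the single sender for everything queued right now.

    Every process calls this; only the one that wins the non-blocking lock
    sends data, while the others return 0 immediately. Returns the number of
    files sent. The lock is released when the lock file is closed.
    """
    with open(lock_path, "a") as lock_file:
        try:
            fcntl.flock(lock_file, fcntl.LOCK_EX | fcntl.LOCK_NB)
        except BlockingIOError:
            return 0  # another process is already draining the queue
        # Snapshot the queue: files that arrive later wait for the next drain.
        pending = sorted(os.listdir(queue_dir))
        for name in pending:
            path = os.path.join(queue_dir, name)
            send(path)       # hypothetical transfer back to Fermilab storage
            os.remove(path)  # drop the local copy once it has been sent
        return len(pending)
```

Non-blocking acquisition is the design point: a loser does not wait, it simply leaves its output in the queue for the current or next winner to send.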

Projects: VM Batch Submission Facility

- Have demonstrated up to 100 simultaneous worker node VMs on FermiCloud running NOvA workflows, with Mu2e to follow soon.
- Plan to scale up to 1000 over the summer (using old worker nodes to expand the cloud).
- Problem:
  - VMs take lots of IP addresses, and we don't have that many.
  - So we simulate the private network structure of the public cloud:
    - Start with Network Address Translation
    - Consider a private (software-defined) network that is routable only on-site
    - This could be a key technology if you also need access to storage.
  - It is also a very good way to find out whether anything depends on NFS access, direct database access, etc.
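Sizing a private network for the planned 1000 VMs is a quick calculation. A minimal sketch using Python's standard `ipaddress` module, assuming a single flat RFC 1918 subnet behind NAT; the 10.0.0.0 base address and the helper name are illustrative, not FermiCloud's actual addressing plan.

```python
import ipaddress

def smallest_private_subnet(n_hosts: int,
                            base: str = "10.0.0.0") -> ipaddress.IPv4Network:
    """Smallest subnet at `base` with room for n_hosts VMs.

    A /p network holds 2**(32 - p) addresses, two of which (network and
    broadcast) cannot be assigned to hosts. `base` must be aligned to the
    chosen prefix, which 10.0.0.0 is for every prefix from /8 to /30.
    """
    for prefix in range(30, 7, -1):
        net = ipaddress.ip_network(f"{base}/{prefix}")
        if net.num_addresses - 2 >= n_hosts:
            return net
    raise ValueError("too many hosts for a single private block")

net = smallest_private_subnet(1000)
print(net, net.is_private)  # 10.0.0.0/22 True
```

A /22 leaves 1022 usable addresses, so the 1000-VM target fits in one private block while only the NAT gateway needs routable addresses.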

Unique Cloud capabilities for data preservation

- Support for legacy operating systems (within limits)
  - Need Tcl 8.0.3? Not a problem.
- Support for legacy software stack elements and complicated configurations that are not easily installed at remote grid sites
- Could save virtual machines for the batch head node, worker nodes, code repository, and storage, and bring them alive only when needed
  - Could use a software-defined network so that they can talk only to each other
- Could have a small legacy cluster that grows and adds capacity on demand
- Capacity to run on the distributed resources of the future
- European grid sites are gradually converting to cloud sites, led by CERN
- Expect the US to follow eventually
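Bringing a saved cluster back to life is an ordering problem: storage and the code repository must be up before the batch head node, and the head node before the workers. A minimal sketch using Python's standard `graphlib` (Python 3.9+); the VM names and dependency graph are hypothetical, and the actual instantiation of each saved VM image is left out.

```python
from graphlib import TopologicalSorter

# Hypothetical saved legacy cluster: each VM maps to the VMs that must be
# running before it boots.
depends_on = {
    "storage": set(),
    "code_repo": set(),
    "batch_head": {"storage", "code_repo"},
    "worker_01": {"batch_head"},
    "worker_02": {"batch_head"},
}

def boot_waves(graph):
    """Group VMs into waves; every VM in a wave can start in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # mark the wave as booted
    return waves

for wave in boot_waves(depends_on):
    print(wave)
# ['code_repo', 'storage']
# ['batch_head']
# ['worker_01', 'worker_02']
```

Growing the cluster on demand then just adds more worker nodes that depend on `batch_head`; `prepare()` also rejects a cyclic graph before anything is launched.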

Recent publications

- “Automatic Cloud Bursting Under FermiCloud”, ICPADS CSS workshop, Dec. 2013.
- “A Reference Model for Virtual Machine Launching Overhead”, CCGrid conference, May 2014.
- “Grids, Virtualization, and Clouds at Fermilab”, CHEP conference, Oct. 2013.
- “Exploring Infiniband Hardware Virtualization in OpenNebula towards Efficient High-Performance Computing”, CCGrid Scale workshop, May 2014.
