Experiences Building a Multiplatform Compiler for Coarray Fortran

Experiences Building a Multi-platform Compiler for Co-array Fortran
John Mellor-Crummey, Cristian Coarfa, Yuri Dotsenko
Department of Computer Science, Rice University
AHPCRC PGAS Workshop, September 2005

Goals for HPC Languages
• Expressiveness
• Ease of programming
• Portable performance
• Ubiquitous availability

PGAS Languages
• Global address space programming model
  – one-sided communication (GET/PUT): simpler than msg passing
• Programmer has control over performance-critical factors
  – data distribution and locality control (lacking in OpenMP)
  – computation partitioning (HPF & OpenMP compilers must get this right)
  – communication placement
• Data movement and synchronization as language primitives
  – amenable to compiler-based communication optimization

Co-array Fortran Programming Model
• SPMD process images
  – fixed number of images during execution
  – images operate asynchronously
• Both private and shared data
  – real x(20,20): a private 20 x 20 array in each image
  – real y(20,20)[*]: a shared 20 x 20 array in each image
• Simple one-sided shared-memory communication
  – x(:,j:j+2) = y(:,p:p+2)[r]: copy columns from image r into local columns
• Synchronization intrinsic functions
  – sync_all: a barrier and a memory fence
  – sync_mem: a memory fence
  – sync_team([team members to notify], [team members to wait for])
• Pointers and (perhaps asymmetric) dynamic allocation
• Parallel I/O

![One-sided Communication with Co-Arrays](http://slidetodoc.com/presentation_image_h2/7675154132ddf384ffbf8ffcefa2f5bf/image-5.jpg)

One-sided Communication with Co-Arrays
integer a(10,20)[*]
if (this_image() > 1) a(1:10,1:2) = a(1:10,19:20)[this_image()-1]
[Figure: images 1, 2, …, N, each holding its own a(10,20), shown before and after the assignment]
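The assignment on this slide can be mimicked with a small Python model. This is only a sketch of the semantics, not how cafc implements it: the helper names `make_images` and `shift_from_left_neighbor` are hypothetical, each image is a plain nested list, and the images are processed sequentially rather than in parallel.

```python
# Toy model of N CAF images, each holding its own integer a(10,20).
# Helper names are hypothetical; indices are 0-based here, 1-based on the slide.

ROWS, COLS = 10, 20


def make_images(n):
    """Create n images; image k (1-based) fills its array with the value k."""
    return [[[k] * COLS for _ in range(ROWS)] for k in range(1, n + 1)]


def shift_from_left_neighbor(images):
    """Model: if (this_image() > 1) a(1:10,1:2) = a(1:10,19:20)[this_image()-1]."""
    for me in range(2, len(images) + 1):          # only images with this_image() > 1
        neighbor = images[me - 2]                  # memory of image this_image()-1
        for r in range(ROWS):
            # One-sided GET: read the neighbor's columns 19:20, write local columns 1:2.
            # Columns 19:20 are never written, so the order of images does not matter.
            images[me - 1][r][0:2] = neighbor[r][18:20]
    return images
```

With three images, image 1 is left unchanged, while images 2 and 3 end up with their left neighbor's values in their first two columns.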

CAF Compilers
• Cray compilers for X1 & T3E architectures
• Rice Co-Array Fortran Compiler (cafc)

Rice cafc Compiler
• Source-to-source compiler
  – source-to-source yields multi-platform portability
• Implements core language features
  – core sufficient for non-trivial codes
  – preliminary support for derived types
    • support for allocatable components coming soon
• Open source
Performance comparable to that of hand-tuned MPI codes

Implementation Strategy
• Goals
  – portability
  – high performance on a wide range of platforms
• Approach
  – source-to-source compilation of CAF codes
    • use Open64/SL Fortran 90 infrastructure
    • CAF → Fortran 90 + communication operations
  – communication
    • ARMCI and GASNet one-sided comm libraries for portability
    • load/store communication on shared-memory platforms

Key Implementation Concerns
• Fast access to local co-array data
• Fast communication
• Overlap of communication and computation

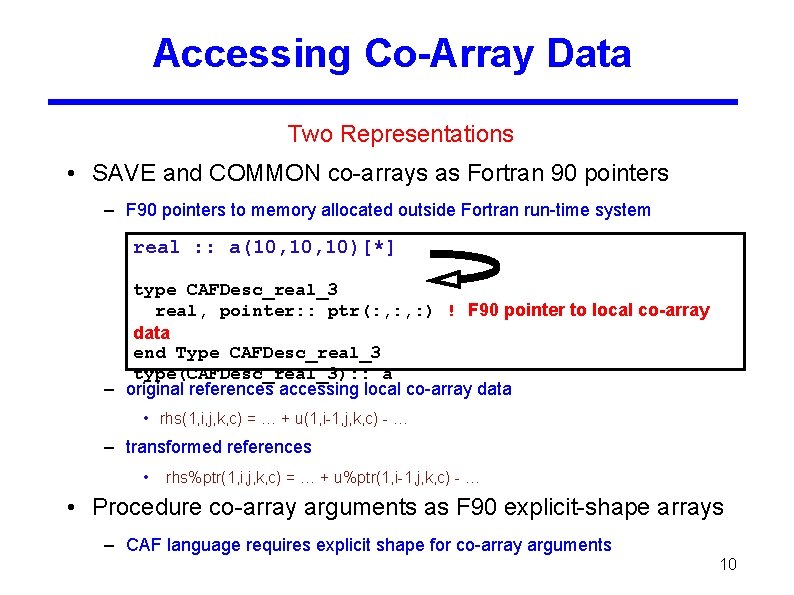

Accessing Co-Array Data
Two Representations
• SAVE and COMMON co-arrays as Fortran 90 pointers
  – F90 pointers to memory allocated outside Fortran run-time system
    real :: a(10,10)[*]
    type CAFDesc_real_3
      real, pointer :: ptr(:,:)   ! F90 pointer to local co-array data
    end type CAFDesc_real_3
    type(CAFDesc_real_3) :: a
  – original references accessing local co-array data
    • rhs(1,i,j,k,c) = … + u(1,i-1,j,k,c) - …
  – transformed references
    • rhs%ptr(1,i,j,k,c) = … + u%ptr(1,i-1,j,k,c) - …
• Procedure co-array arguments as F90 explicit-shape arrays
  – CAF language requires explicit shape for co-array arguments

Performance Challenges
• Problem
  – Fortran 90 pointer-based representation does not convey
    • the lack of co-array aliasing
    • contiguity of co-array data
    • co-array bounds information
  – lack of knowledge inhibits important code optimizations
• Approach: procedure splitting

![Procedure Splitting](http://slidetodoc.com/presentation_image_h2/7675154132ddf384ffbf8ffcefa2f5bf/image-12.jpg)

Procedure Splitting
CAF to CAF optimization

Original procedure:
subroutine f(…)
  real, save :: c(100)[*]
  ... = c(50) ...
end subroutine f

After splitting:
subroutine f(…)
  real, save :: c(100)[*]
  interface
    subroutine f_inner(…, c_arg)
      real :: c_arg[*]
    end subroutine f_inner
  end interface
  call f_inner(…, c(1))
end
subroutine f_inner(…, c_arg)
  real :: c_arg(100)[*]
  ... = c_arg(50) ...
end subroutine f_inner

Benefits
• better alias analysis
• contiguity of co-array data
• co-array bounds information
• better dependence analysis
Result: back-end compiler can generate better code

![Implementing Communication](http://slidetodoc.com/presentation_image_h2/7675154132ddf384ffbf8ffcefa2f5bf/image-13.jpg)

Implementing Communication
• x(1:n) = a(1:n)[p] + …
• General approach: use buffer to hold off-processor data
  – allocate buffer
  – perform GET to fill buffer
  – perform computation: x(1:n) = buffer(1:n) + …
  – deallocate buffer
• Optimizations
  – no buffer for co-array to co-array copies
  – unbuffered load/store on shared-memory systems
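The four buffering steps can be sketched in Python as follows. This is an illustration under stated assumptions, not the code cafc generates: `get` is a hypothetical stand-in for a one-sided GET from a communication library, and each image's co-array is modeled as a plain list.

```python
def get(remote_image, lo, hi):
    """Hypothetical stand-in for a one-sided GET: copy a(lo:hi) from another image."""
    return list(remote_image[lo - 1:hi])   # 1-based inclusive bounds, as in Fortran


def buffered_remote_add(images, p, n, local_term):
    """Model x(1:n) = a(1:n)[p] + local_term(1:n) using a temporary buffer."""
    buffer = get(images[p - 1], 1, n)                    # allocate buffer and fill it with a GET
    x = [buffer[i] + local_term[i] for i in range(n)]    # compute from the buffer, not remote memory
    del buffer                                            # deallocate buffer
    return x
```

The two optimizations listed on the slide remove the temporary: a co-array to co-array copy can transfer straight into its destination, and on shared-memory systems the remote elements can be read with ordinary loads.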

Strided vs. Contiguous Transfers
• Problem
  – CAF remote reference might induce many small data transfers
    • a(i,1:n)[p] = b(j,1:n)
• Solution
  – pack strided data on source and unpack it on destination
• Constraints
  – can't express both source-level packing and unpacking for a one-sided transfer
  – two-sided packing/unpacking is awkward for users
• Preferred approach
  – have communication layer perform packing/unpacking
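To see why a(i,1:n) is strided, recall that Fortran stores arrays in column-major order, so consecutive elements of a row are a full column apart in memory. The Python sketch below models pack, single transfer, and unpack on flat column-major lists. All function names are hypothetical, and the "transfer" is just a list copy standing in for one contiguous message.

```python
def pack_row(flat, ld, row, n):
    """Gather row `row` (1-based) of a column-major array with leading dimension ld."""
    return [flat[(row - 1) + col * ld] for col in range(n)]


def unpack_row(flat, ld, row, packed):
    """Scatter a packed contiguous buffer into row `row` of a column-major array."""
    for col, value in enumerate(packed):
        flat[(row - 1) + col * ld] = value


def strided_put(dest_flat, dest_ld, i, src_flat, src_ld, j, n):
    """Model a(i,1:n)[p] = b(j,1:n) as pack, one contiguous transfer, unpack."""
    packed = pack_row(src_flat, src_ld, j, n)    # source side: n strided reads
    message = list(packed)                       # stands in for the single contiguous transfer
    unpack_row(dest_flat, dest_ld, i, message)   # destination side: n strided writes
    return len(message)                          # number of elements carried by the one message
```

Without packing, the same assignment would issue n separate one-element transfers. Note that the unpack step runs on the destination, which is exactly what a one-sided source-level PUT cannot express.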

Pragmatics of Packing
Who should implement packing?
• CAF programmer
  – difficult to program
• CAF compiler
  – must convert PUTs into two-sided communication to unpack
    • difficult whole-program transformation
• Communication library
  – most natural place
  – ARMCI currently performs packing on Myrinet (at least)

Synchronization
• Original CAF specification: team synchronization only
  – sync_all, sync_team
• Limits performance on loosely-coupled architectures
• Point-to-point extensions
  – sync_notify(q)
  – sync_wait(p)
Point-to-point synchronization semantics: delivery of a notify to q from p implies that all communication from p to q issued before the notify has been delivered to q.
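The ordering guarantee can be illustrated with a small sequential Python model. It assumes an in-order channel from each sender to each receiver, which is one simple way to satisfy the stated semantics, not necessarily how the cafc runtime does it. The `Image` class and the dictionary-based memory are hypothetical, and `sync_wait` returns `False` where a real wait would block.

```python
from collections import deque


class Image:
    """One image: local memory plus an in-order channel from each sender."""

    def __init__(self):
        self.memory = {}
        self.inbox = {}          # sender id -> deque of pending operations

    def channel(self, sender):
        return self.inbox.setdefault(sender, deque())


def put(images, p, q, key, value):
    """Image p issues a one-sided PUT to image q (not yet visible at q)."""
    images[q].channel(p).append(("put", key, value))


def sync_notify(images, p, q):
    """Image p notifies q; the notify is queued behind p's earlier PUTs to q."""
    images[q].channel(p).append(("notify",))


def sync_wait(images, q, p):
    """Image q waits for a notify from p; returns True once one is delivered."""
    pending = images[q].channel(p)
    while pending:
        op = pending.popleft()
        if op[0] == "notify":
            return True
        _, key, value = op
        images[q].memory[key] = value   # earlier PUTs land before the notify
    return False                         # no notify pending (a real wait would block)
```

In this model a PUT issued after the notify is not visible when `sync_wait` returns, which matches the slide: only communication issued before the notify is guaranteed to have been delivered.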

Hiding Communication Latency
Goal: enable communication/computation overlap
• Impediments to generating non-blocking communication
  – use of indexed subscripts in co-dimensions
  – lack of whole program analysis
• Approach: support hints for non-blocking communication
  – overcome conservative compiler analysis
  – enable sophisticated programmers to achieve good performance today

Questions about PGAS Languages
• Performance
  – can performance match hand-tuned msg passing programs?
  – what are the obstacles to top performance?
  – what should be done to overcome them?
    • language modifications or extensions?
    • program implementation strategies?
    • compiler technology?
    • run-time system enhancements?
• Programmability
  – how easy is it to develop high performance programs?

Investigating these Issues
Evaluate CAF, UPC, and MPI versions of NAS benchmarks
• Performance
  – compare CAF and UPC performance to that of MPI versions
    • use hardware performance counters to pinpoint differences
  – determine optimization techniques common to both languages as well as language-specific optimizations
    • language features
    • program implementation strategies
    • compiler optimizations
    • runtime optimizations
• Programmability
  – assess programmability of the CAF and UPC variants

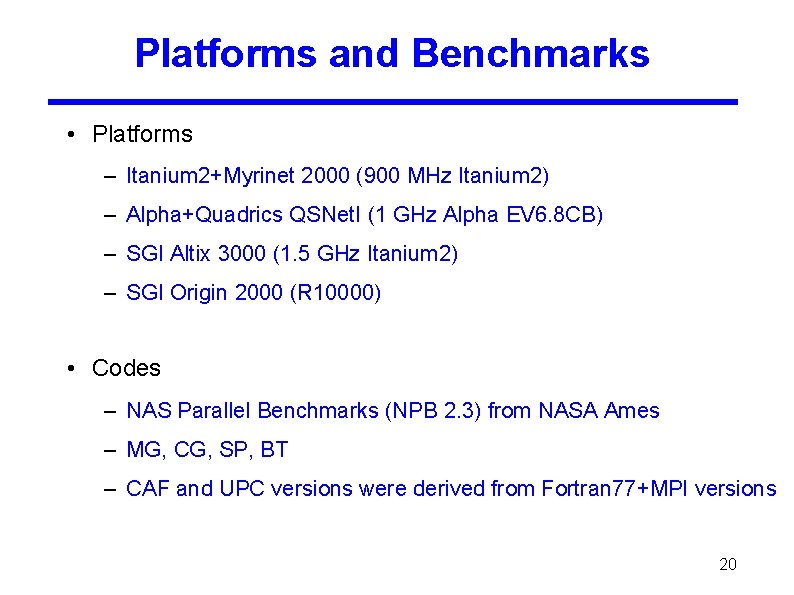

Platforms and Benchmarks
• Platforms
  – Itanium 2+Myrinet 2000 (900 MHz Itanium 2)
  – Alpha+Quadrics QSNetI (1 GHz Alpha EV6.8CB)
  – SGI Altix 3000 (1.5 GHz Itanium 2)
  – SGI Origin 2000 (R10000)
• Codes
  – NAS Parallel Benchmarks (NPB 2.3) from NASA Ames
  – MG, CG, SP, BT
  – CAF and UPC versions were derived from Fortran 77+MPI versions

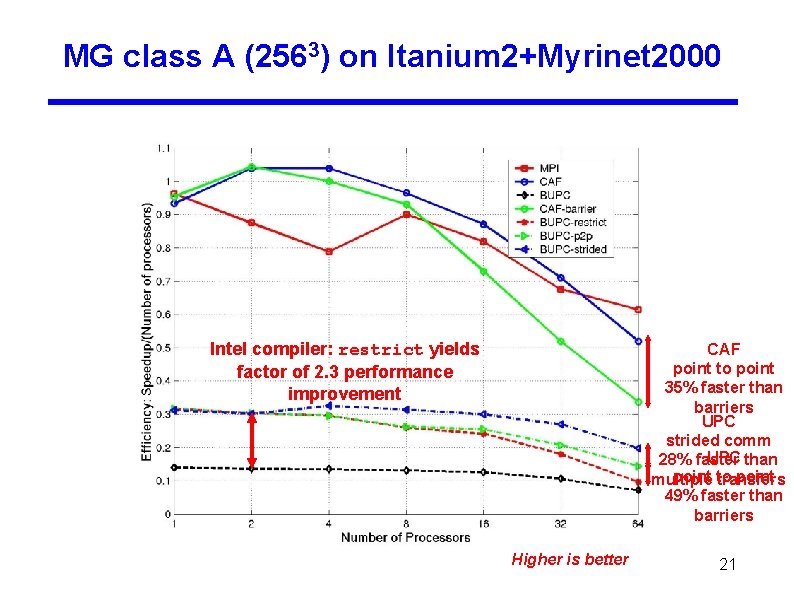

MG class A (256³) on Itanium 2+Myrinet 2000
• Intel compiler: restrict yields a factor of 2.3 performance improvement
• CAF: point-to-point 35% faster than barriers
• UPC: strided comm 28% faster than multiple transfers
• UPC: point-to-point 49% faster than barriers
(Chart: higher is better)

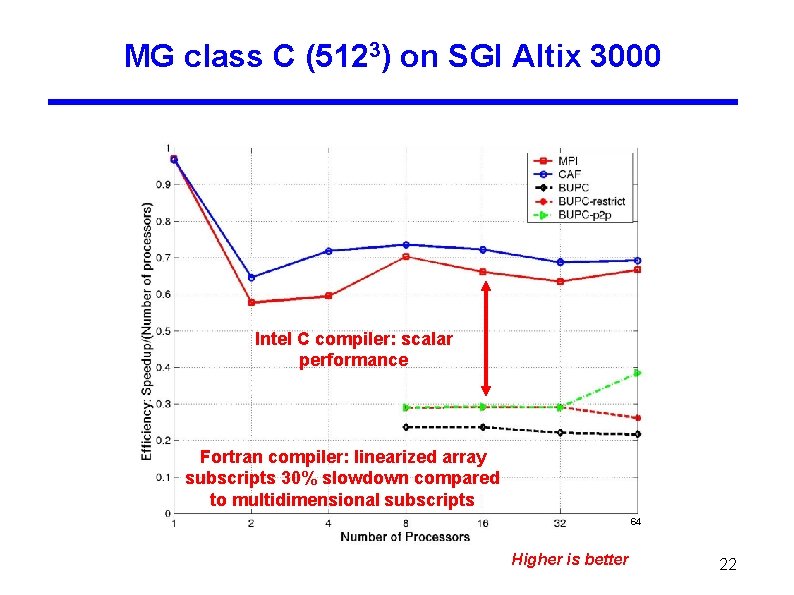

MG class C (512³) on SGI Altix 3000
• Intel C compiler: scalar performance
• Fortran compiler: linearized array subscripts cause a 30% slowdown compared to multidimensional subscripts
(Chart: higher is better)

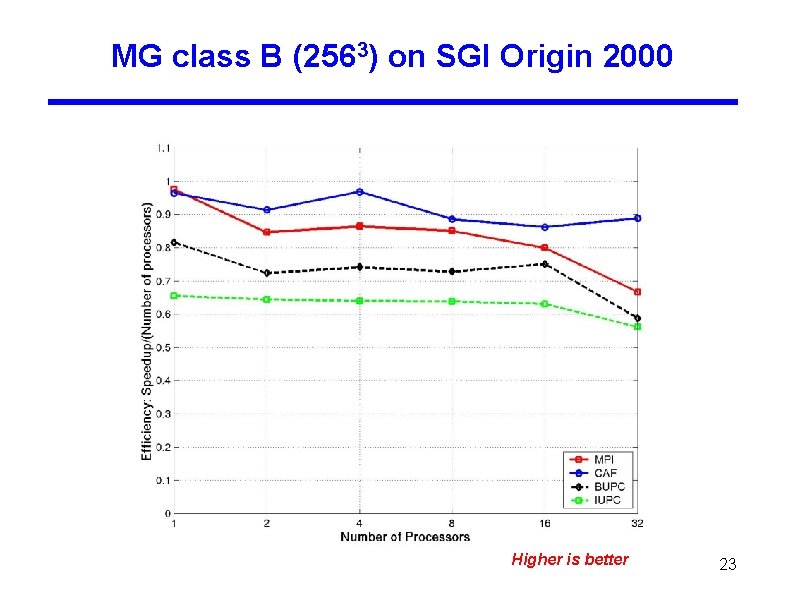

MG class B (256³) on SGI Origin 2000
(Chart: higher is better)

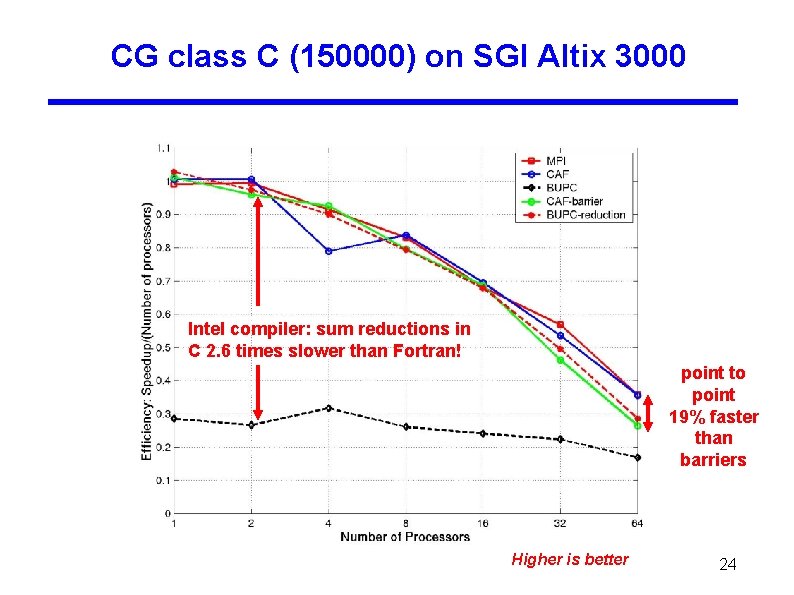

CG class C (150000) on SGI Altix 3000
• Intel compiler: sum reductions in C 2.6 times slower than Fortran!
• point-to-point 19% faster than barriers
(Chart: higher is better)

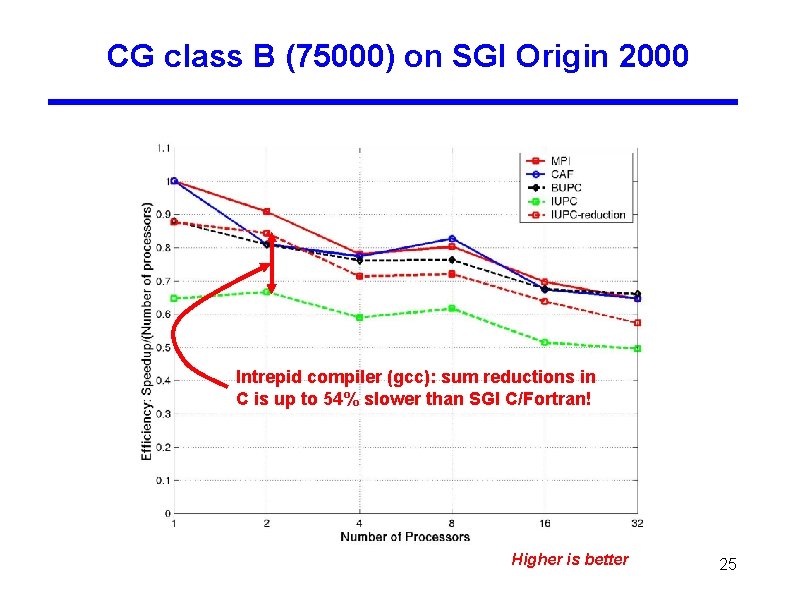

CG class B (75000) on SGI Origin 2000
• Intrepid compiler (gcc): sum reductions in C are up to 54% slower than SGI C/Fortran!
(Chart: higher is better)

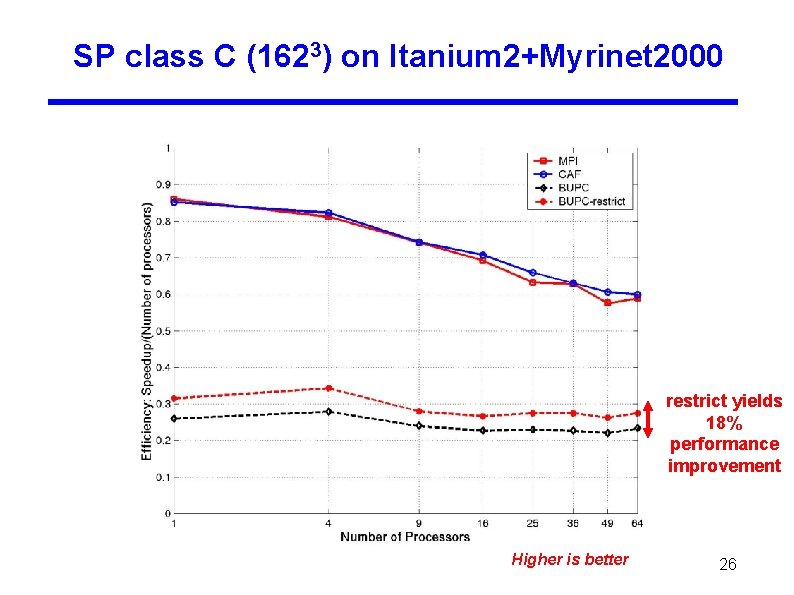

SP class C (162³) on Itanium 2+Myrinet 2000
• restrict yields 18% performance improvement
(Chart: higher is better)

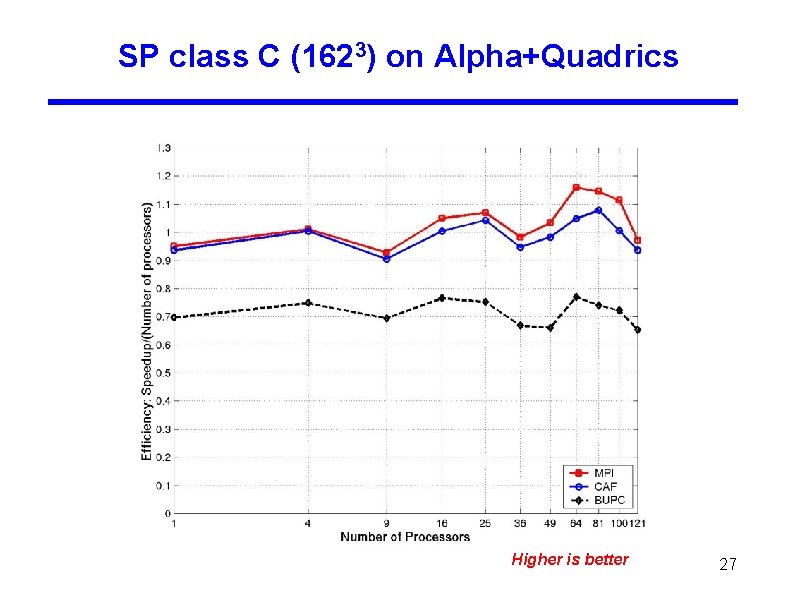

SP class C (162³) on Alpha+Quadrics
(Chart: higher is better)

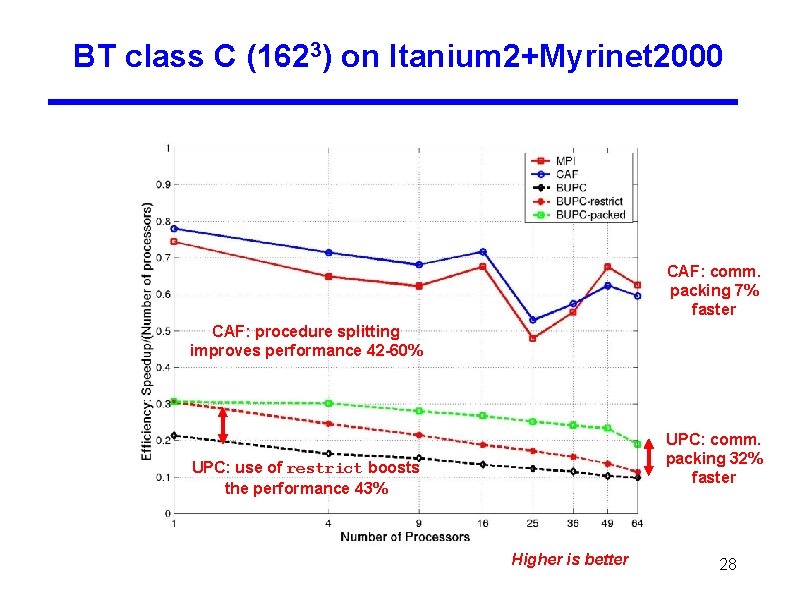

BT class C (162³) on Itanium 2+Myrinet 2000
• CAF: comm. packing 7% faster
• CAF: procedure splitting improves performance 42-60%
• UPC: comm. packing 32% faster
• UPC: use of restrict boosts performance 43%
(Chart: higher is better)

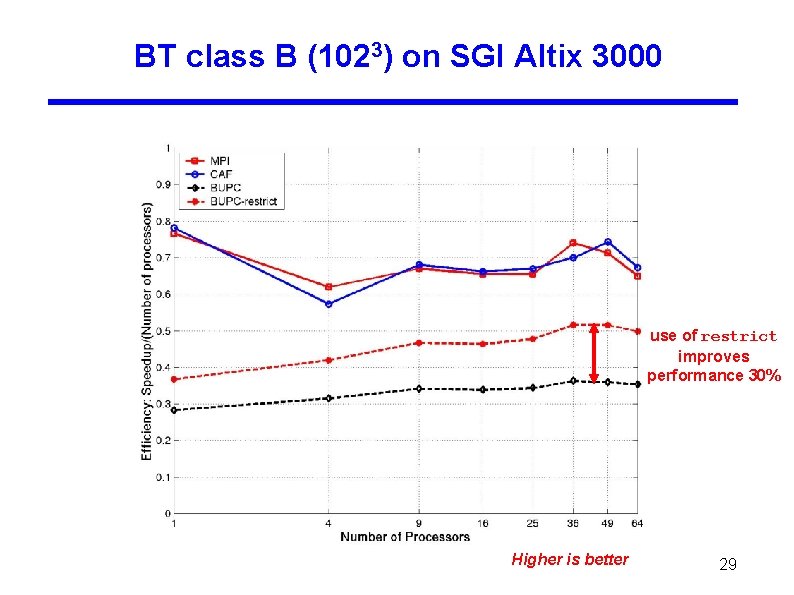

BT class B (102³) on SGI Altix 3000
• use of restrict improves performance 30%
(Chart: higher is better)

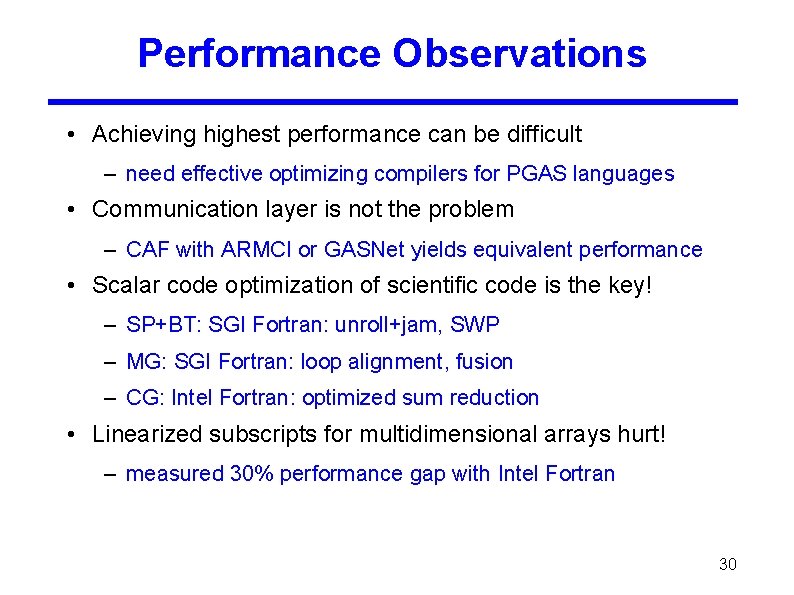

Performance Observations
• Achieving highest performance can be difficult
  – need effective optimizing compilers for PGAS languages
• Communication layer is not the problem
  – CAF with ARMCI or GASNet yields equivalent performance
• Scalar code optimization of scientific code is the key!
  – SP+BT: SGI Fortran: unroll+jam, SWP
  – MG: SGI Fortran: loop alignment, fusion
  – CG: Intel Fortran: optimized sum reduction
• Linearized subscripts for multidimensional arrays hurt!
  – measured 30% performance gap with Intel Fortran

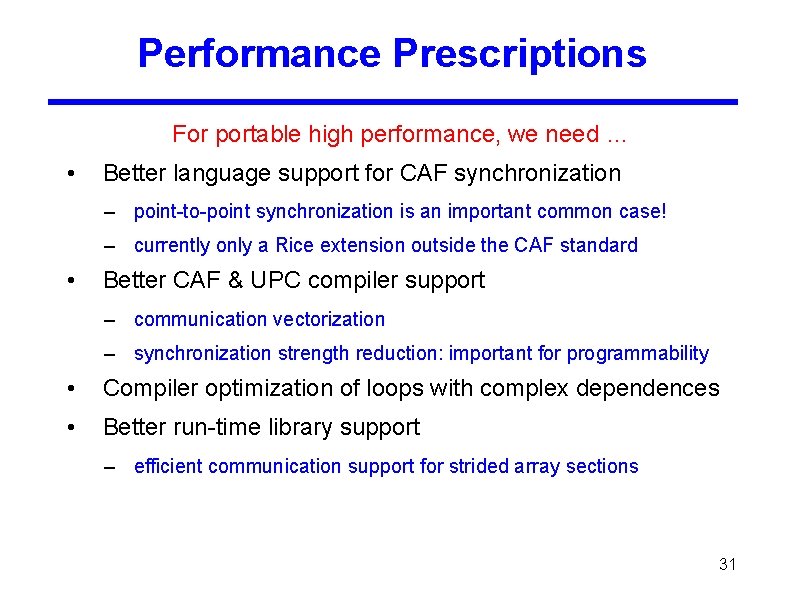

Performance Prescriptions
For portable high performance, we need …
• Better language support for CAF synchronization
  – point-to-point synchronization is an important common case!
  – currently only a Rice extension outside the CAF standard
• Better CAF & UPC compiler support
  – communication vectorization
  – synchronization strength reduction: important for programmability
• Compiler optimization of loops with complex dependences
• Better run-time library support
  – efficient communication support for strided array sections

Programmability Observations
• Matching MPI performance required using bulk communication
  – communicating multi-dimensional array sections is natural in CAF
  – library-based primitives are cumbersome in UPC
• Strided communication is problematic for performance
  – tedious programming of packing/unpacking at src level
• Wavefront computations
  – MPI buffered communication easily decouples sender/receiver
  – PGAS models: buffering explicitly managed by programmer
