End-to-End Object Detection with Transformers

DETR: DEtection TRansformer

DETR
• DEtection + TRansformer
• Streamlines the detection pipeline.
• Bipartite matching
Jaemin Jeong, Seminar

Introduction
• Modern detectors rely on anchors, window centers, or proposals, which makes the pipeline complex.
• Object detection is not end-to-end because it needs post-processing such as NMS or Soft-NMS.
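To make the post-processing step concrete, here is a minimal sketch of greedy NMS in plain Python. The box format `(x1, y1, x2, y2)`, the function names, and the 0.5 threshold are assumptions chosen for illustration, not something the slides specify.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Keep the highest-scoring box, drop boxes that overlap it too much, repeat."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # A box survives only if it does not overlap any already-kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Illustrative data: the first two boxes cover the same object.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = greedy_nms(boxes, scores)
```

The hand-tuned threshold and the separate pass over the predictions are the kind of pipeline component that DETR aims to remove.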

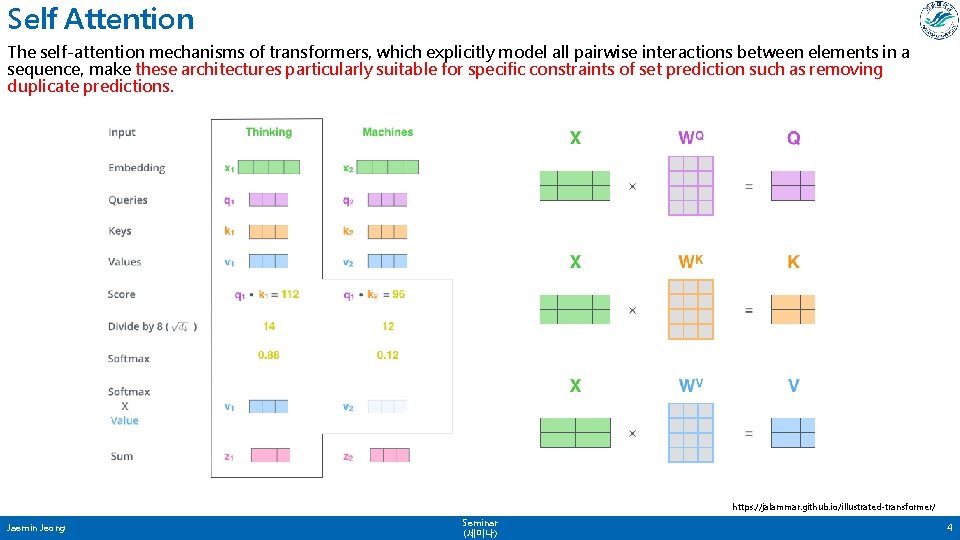

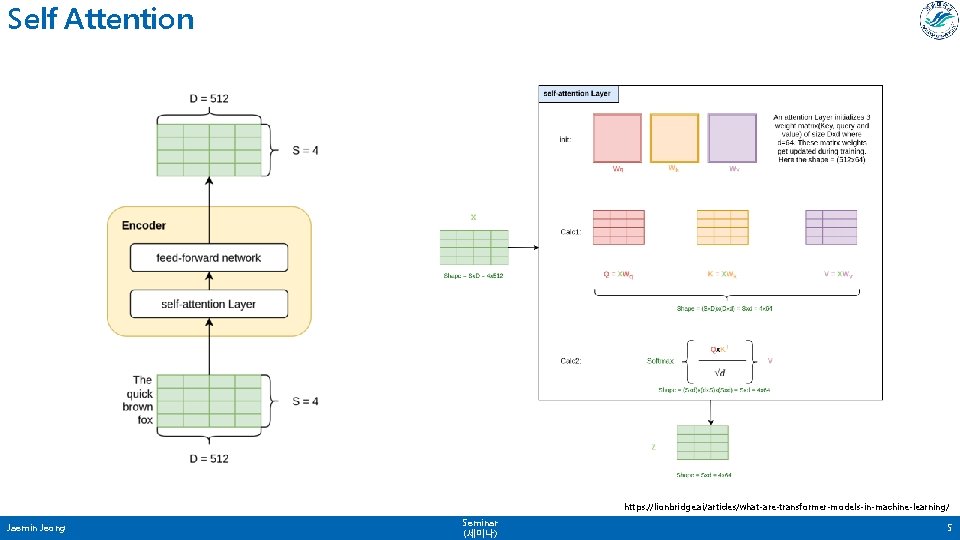

Self Attention
The self-attention mechanisms of transformers, which explicitly model all pairwise interactions between elements in a sequence, make these architectures particularly suitable for specific constraints of set prediction such as removing duplicate predictions.
https://jalammar.github.io/illustrated-transformer/
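The "all pairwise interactions" idea can be sketched as scaled dot-product self-attention in plain Python. This is a simplified illustration: the learned query, key, and value projections are omitted (queries, keys, and values are all the raw inputs), and the function names are hypothetical.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """x is a list of n vectors of dimension d; Q = K = V = x (assumption)."""
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # One score per (query, key) pair: every element attends to every element.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(w[j] * x[j][c] for j in range(len(x))) for c in range(d)])
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, attn = self_attention(tokens)
```

Each row of `attn` is a probability distribution over all elements of the sequence, which is what lets one prediction "see" the others.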

Self Attention
https://lionbridge.ai/articles/what-are-transformer-models-in-machine-learning/

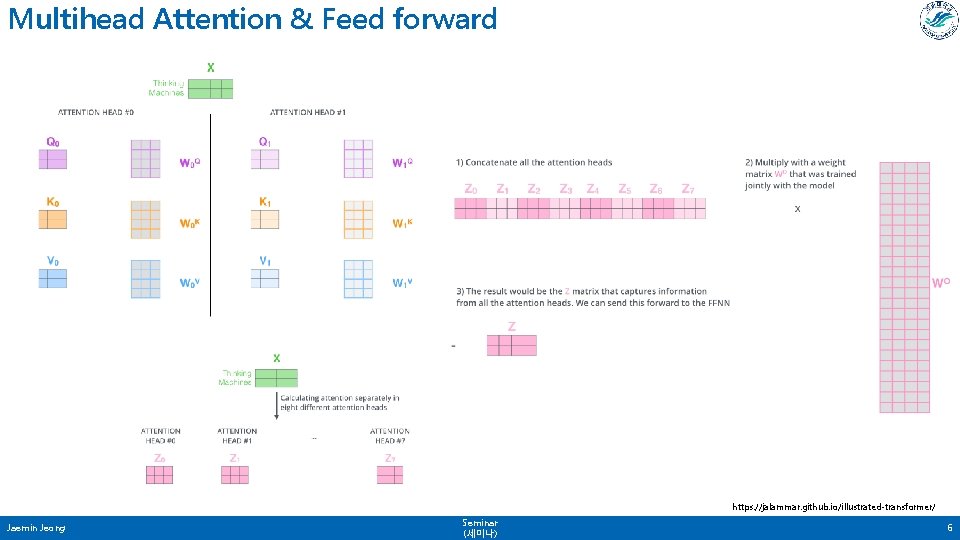

Multi-head Attention & Feed-forward
https://jalammar.github.io/illustrated-transformer/

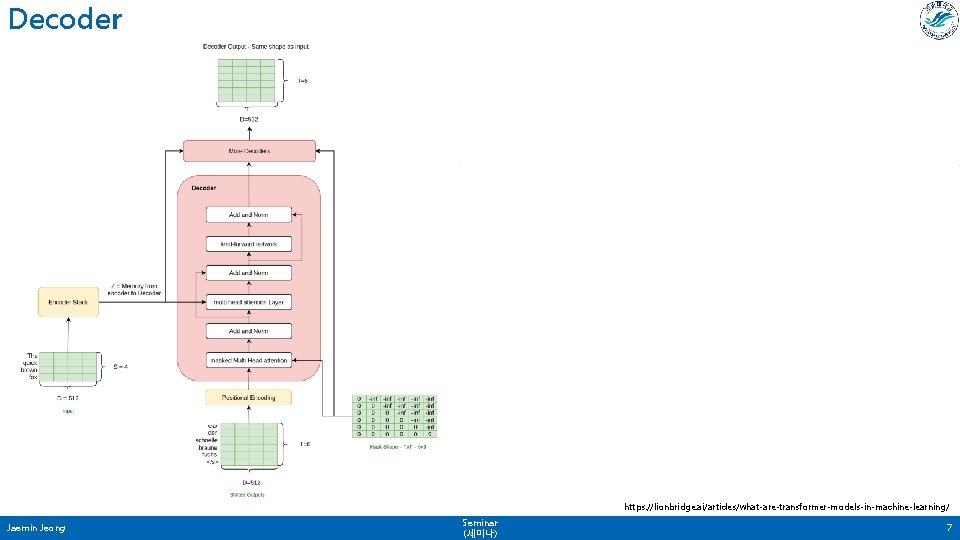

Decoder
https://lionbridge.ai/articles/what-are-transformer-models-in-machine-learning/

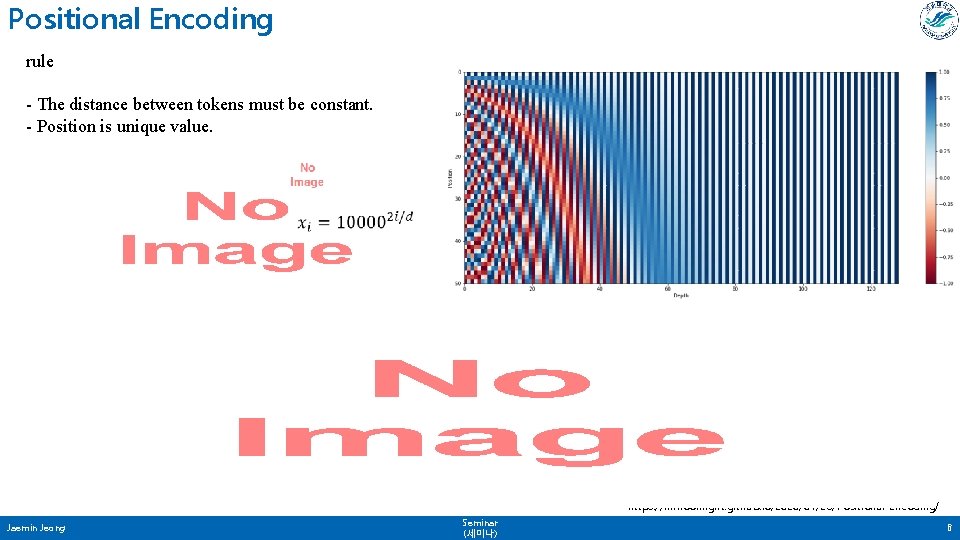

Positional Encoding rules
- The distance between neighboring positions must be constant.
- Each position must have a unique value.
https://inmoonlight.github.io/2020/01/26/Positional-Encoding/
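As an illustration of an encoding that satisfies both rules, here is a sketch of the sinusoidal positional encoding from the original Transformer. It assumes this is the scheme the slide refers to; the function name and the sizes used in the example are hypothetical.

```python
import math


def sinusoidal_encoding(num_positions, d_model):
    """Return a num_positions x d_model table of sine/cosine position codes."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(0, d_model, 2):
            # Each sine/cosine pair uses its own frequency.
            angle = pos / (10000 ** (i / d_model))
            row.append(math.sin(angle))
            row.append(math.cos(angle))
        table.append(row[:d_model])
    return table


def distance(a, b):
    """Euclidean distance between two encoding vectors."""
    return math.sqrt(sum((u - v) ** 2 for u, v in zip(a, b)))


pe = sinusoidal_encoding(50, 16)
# Distance between each pair of neighboring positions.
steps = [distance(pe[p], pe[p + 1]) for p in range(len(pe) - 1)]
```

The values in `steps` agree up to floating-point error, and no two rows of `pe` coincide, which matches the two rules listed above.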

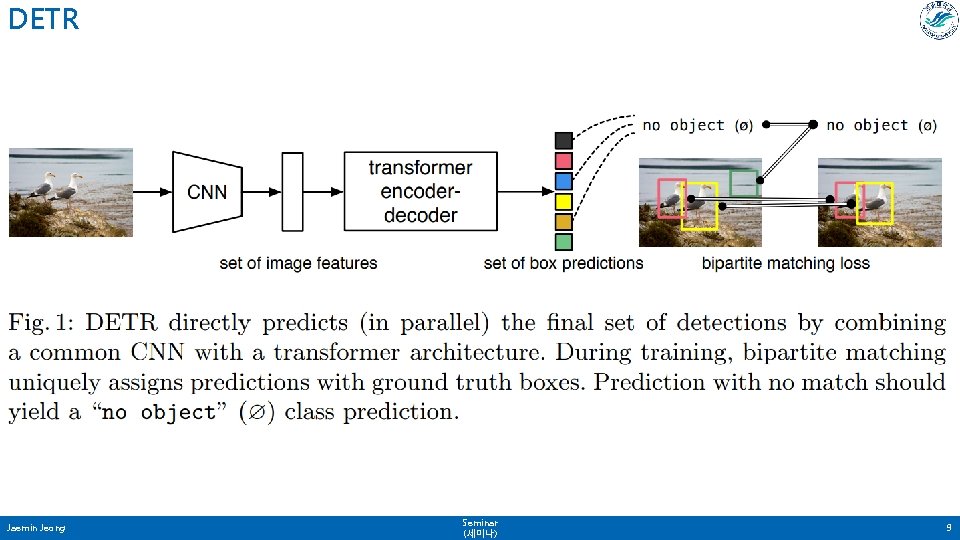

DETR

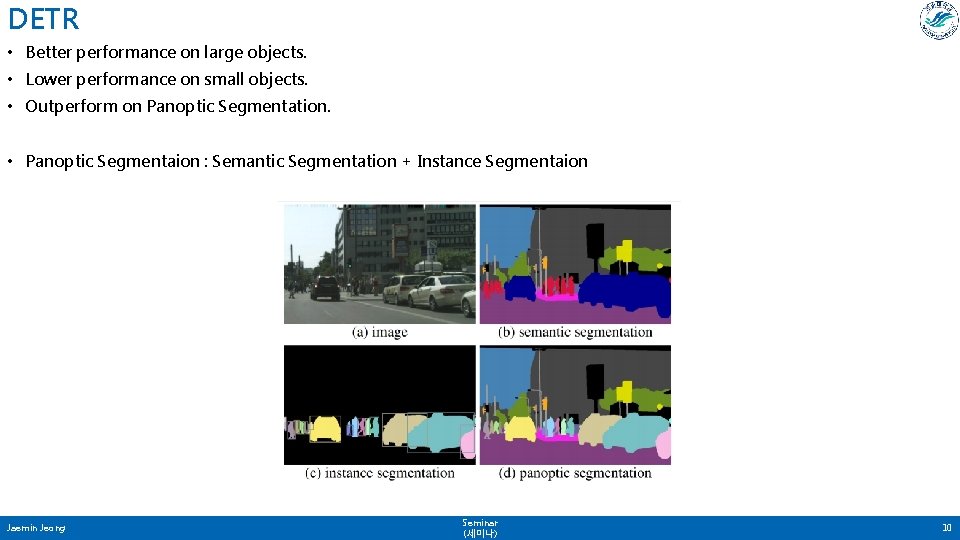

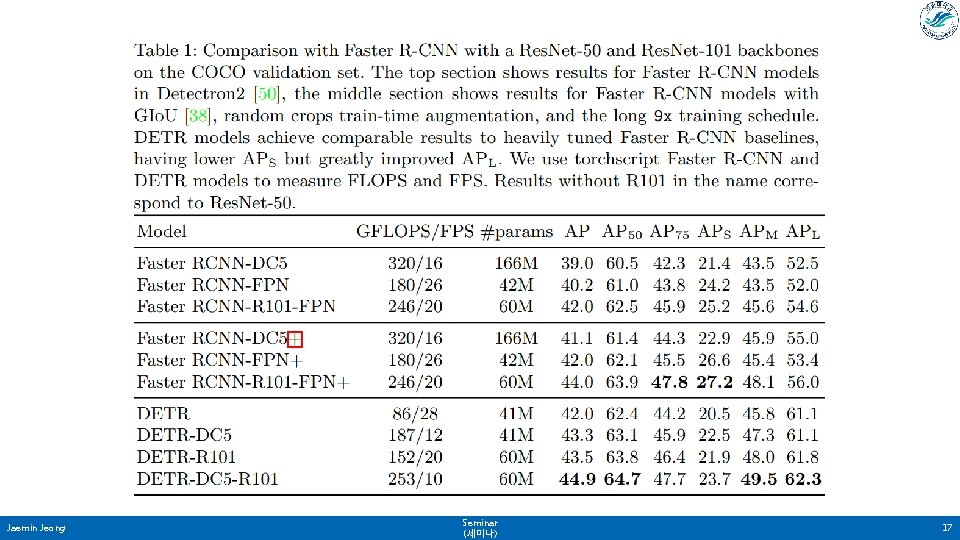

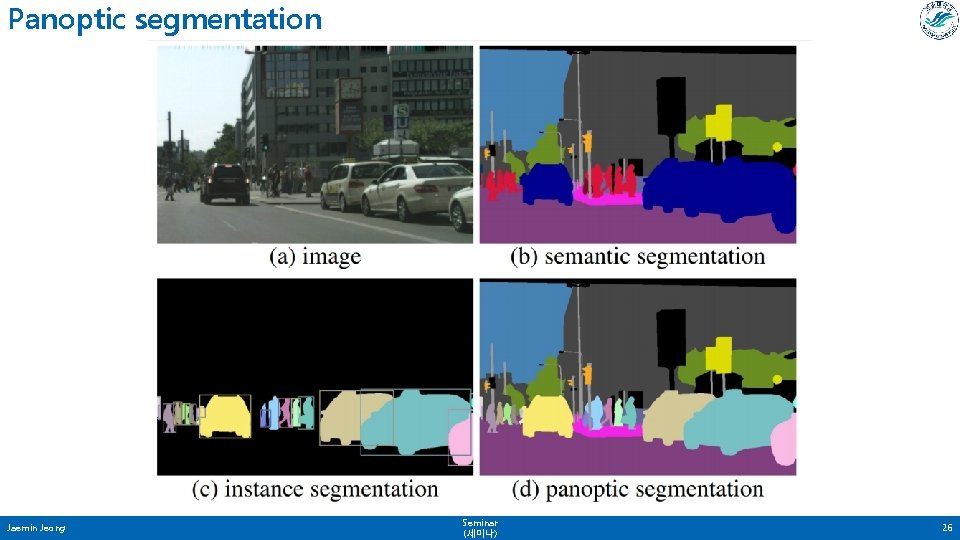

DETR
• Better performance on large objects.
• Lower performance on small objects.
• Outperforms competitive baselines on panoptic segmentation.
• Panoptic segmentation: semantic segmentation + instance segmentation

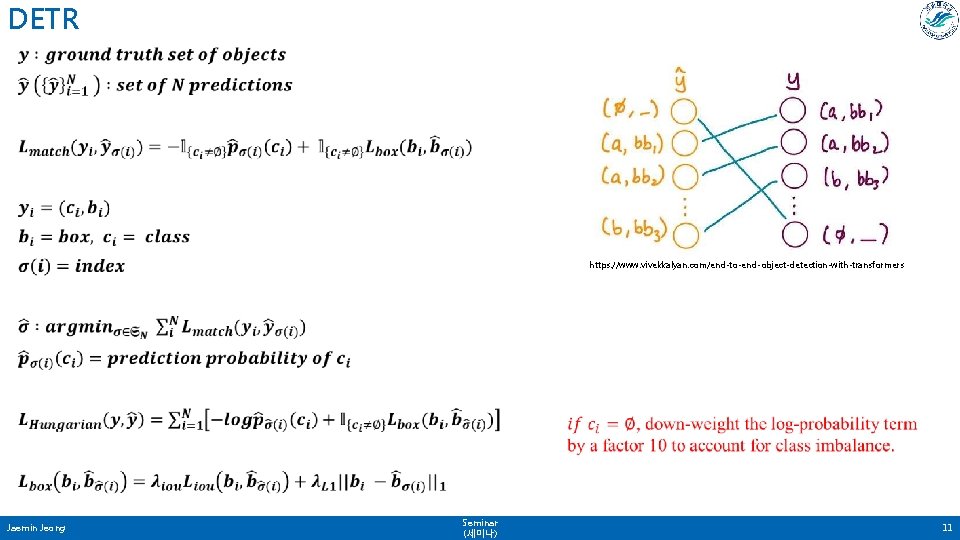

DETR
• https://www.vivekkalyan.com/end-to-end-object-detection-with-transformers

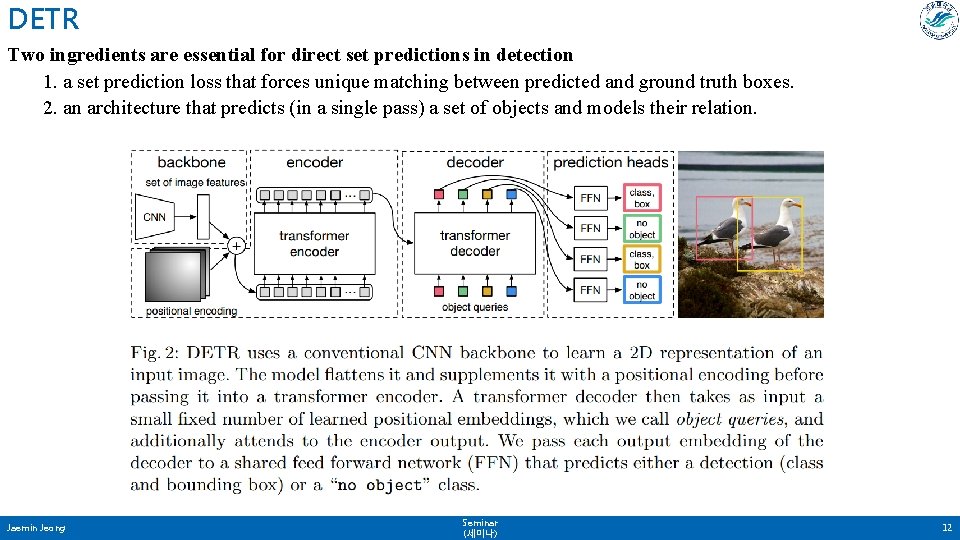

DETR
Two ingredients are essential for direct set prediction in detection:
1. A set prediction loss that forces unique matching between predicted and ground-truth boxes.
2. An architecture that predicts (in a single pass) a set of objects and models their relation.
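The unique matching in the first ingredient can be sketched as a one-to-one assignment that minimizes a total cost. The sketch below makes two simplifying assumptions: the cost is only an L1 distance between boxes (no class term), and the optimum is found by brute force over permutations, which is practical only for tiny examples. Real implementations typically use the Hungarian algorithm, for example `scipy.optimize.linear_sum_assignment`. The box format and all names are hypothetical.

```python
from itertools import permutations


def l1_cost(pred, gt):
    """L1 distance between two boxes given as (cx, cy, w, h)."""
    return sum(abs(p - g) for p, g in zip(pred, gt))


def match(preds, gts):
    """Return (assignment, cost); assignment[k] is the prediction matched to gts[k]."""
    best_cost, best = float("inf"), None
    # Each permutation assigns a distinct prediction to every ground-truth box.
    for perm in permutations(range(len(preds)), len(gts)):
        cost = sum(l1_cost(preds[p], gts[k]) for k, p in enumerate(perm))
        if cost < best_cost:
            best_cost, best = cost, perm
    return best, best_cost


# Illustrative data: three predictions, two ground-truth boxes.
preds = [(0.9, 0.9, 0.2, 0.2), (0.1, 0.1, 0.2, 0.2), (0.5, 0.5, 0.3, 0.3)]
gts = [(0.12, 0.1, 0.2, 0.2), (0.88, 0.9, 0.2, 0.2)]
assignment, total = match(preds, gts)
```

Because every ground-truth box receives exactly one prediction, the remaining prediction (index 2 here) is left unmatched and would be trained to predict "no object".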

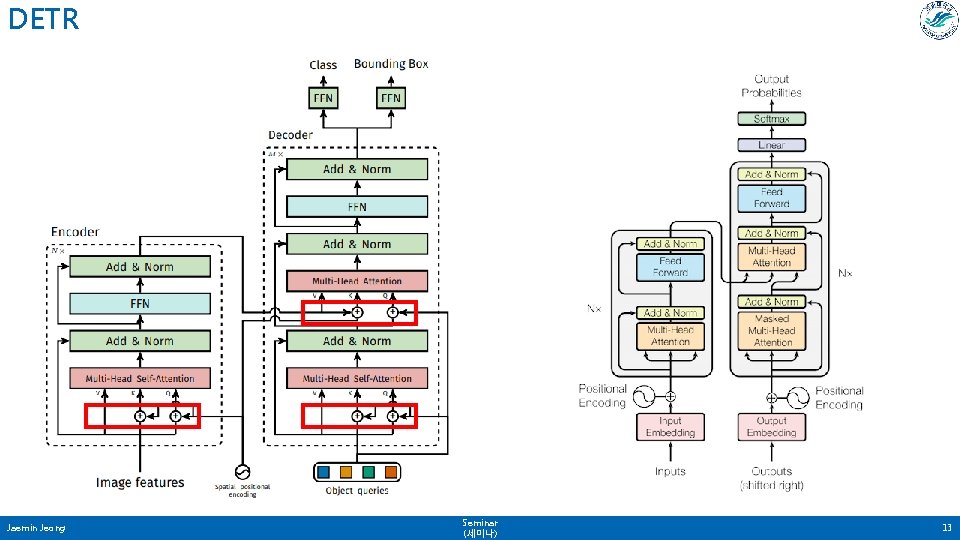

DETR

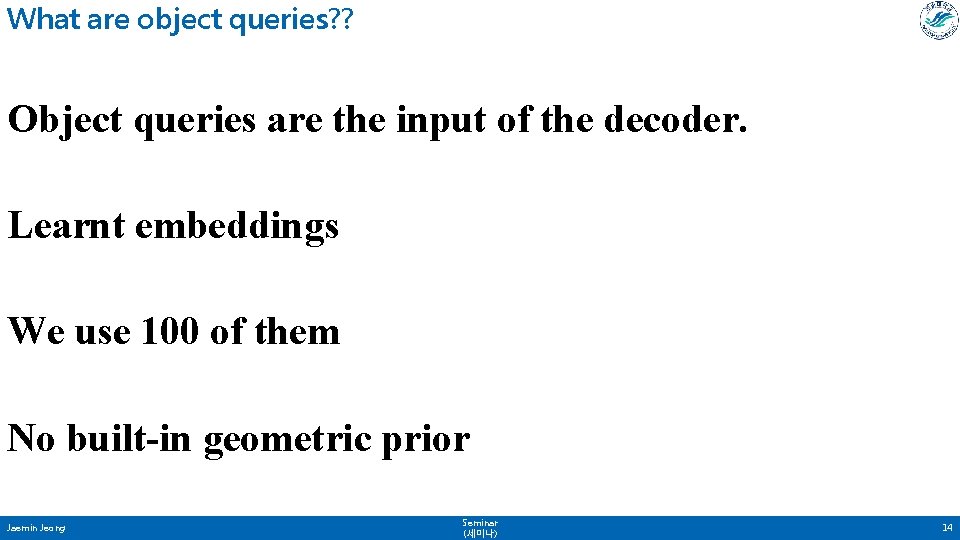

What are object queries?
• Object queries are the input of the decoder.
• They are learnt embeddings.
• We use 100 of them.
• They have no built-in geometric prior.

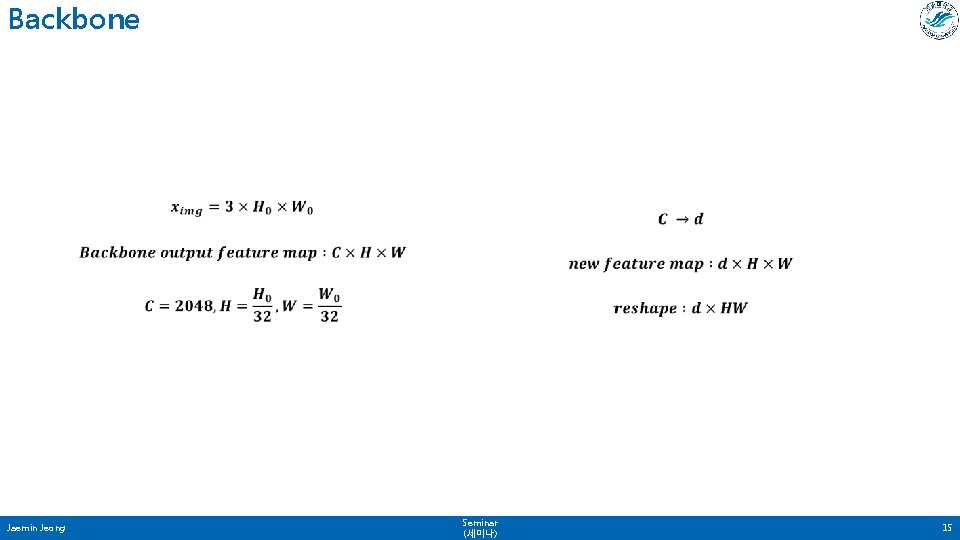

Backbone

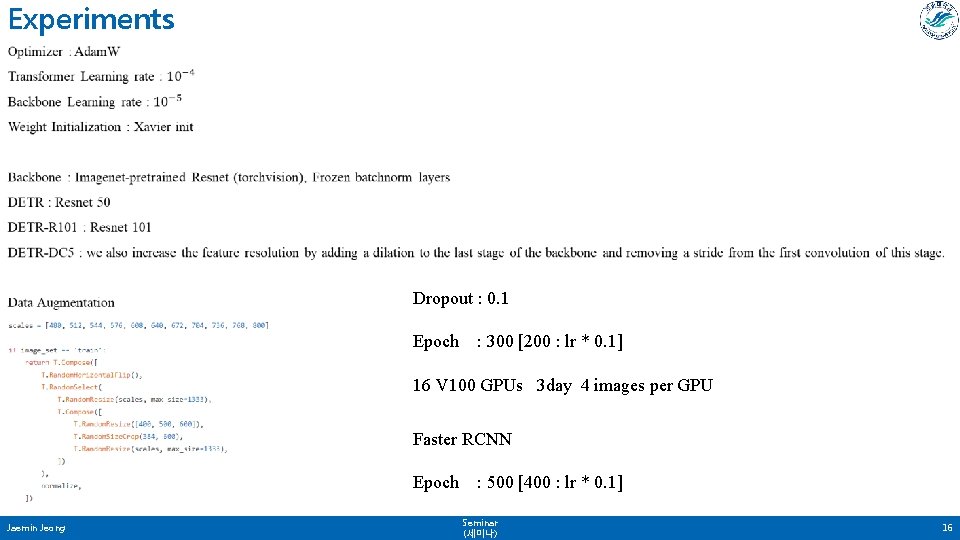

Experiments
• Dropout: 0.1
• Epochs: 300 (learning rate × 0.1 after epoch 200)
• 16 V100 GPUs, 3 days, 4 images per GPU
• Epochs for the Faster R-CNN comparison: 500 (learning rate × 0.1 after epoch 400)
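The step schedule above can be written as a small function. This is a sketch under assumptions: epochs are counted from 0, the drop applies from the drop epoch onward, and the base learning rate of `1e-4` is an illustrative value that the slide does not state.

```python
def step_lr(epoch, base_lr, drop_epoch=200, factor=0.1):
    """Learning rate for a given epoch with a single step drop."""
    return base_lr * factor if epoch >= drop_epoch else base_lr


# 300-epoch schedule with the drop at epoch 200 (base_lr is a placeholder).
short_schedule = [step_lr(e, base_lr=1e-4) for e in range(300)]

# 500-epoch schedule with the drop at epoch 400.
long_schedule = [step_lr(e, base_lr=1e-4, drop_epoch=400) for e in range(500)]
```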

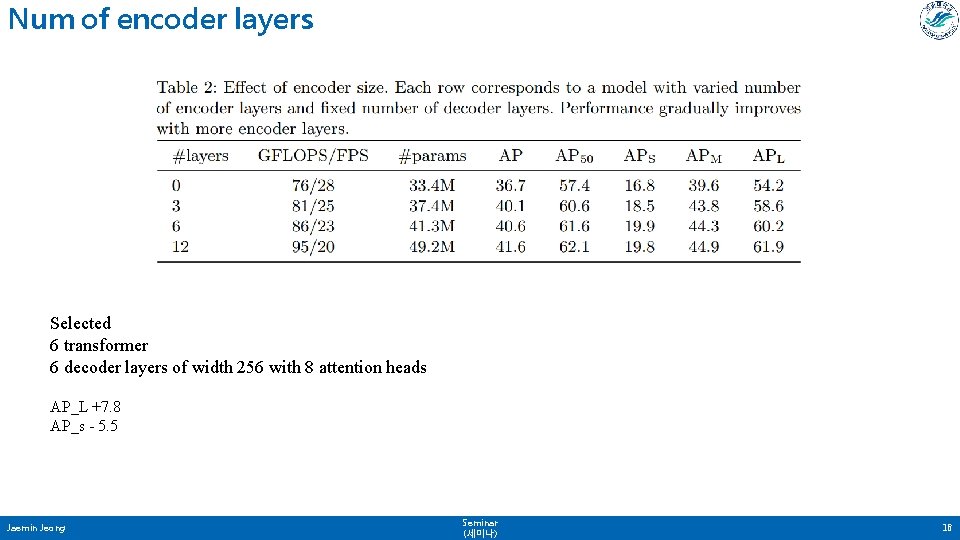

Number of encoder layers
• Selected: a transformer with 6 encoder and 6 decoder layers of width 256 with 8 attention heads.
• AP_L +7.8, AP_S -5.5

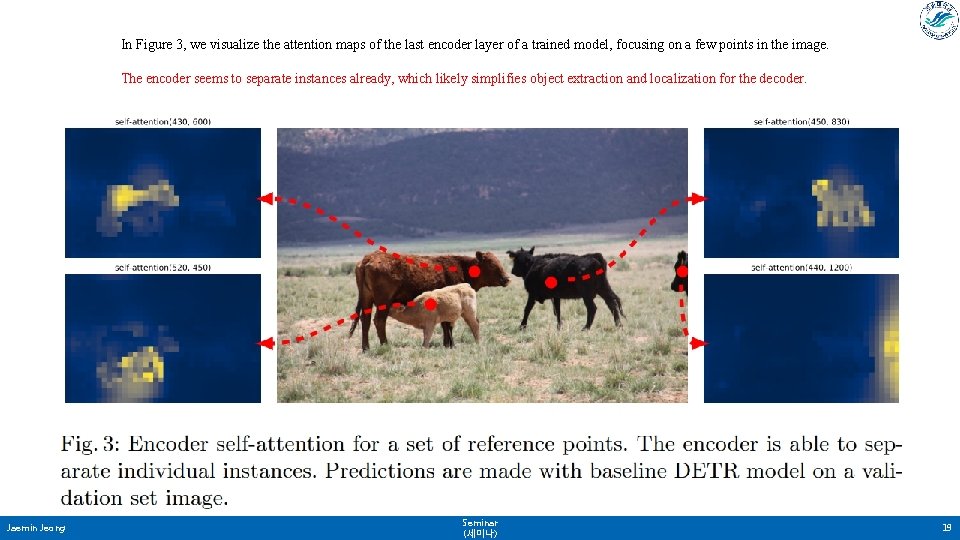

In Figure 3, we visualize the attention maps of the last encoder layer of a trained model, focusing on a few points in the image. The encoder seems to separate instances already, which likely simplifies object extraction and localization for the decoder.

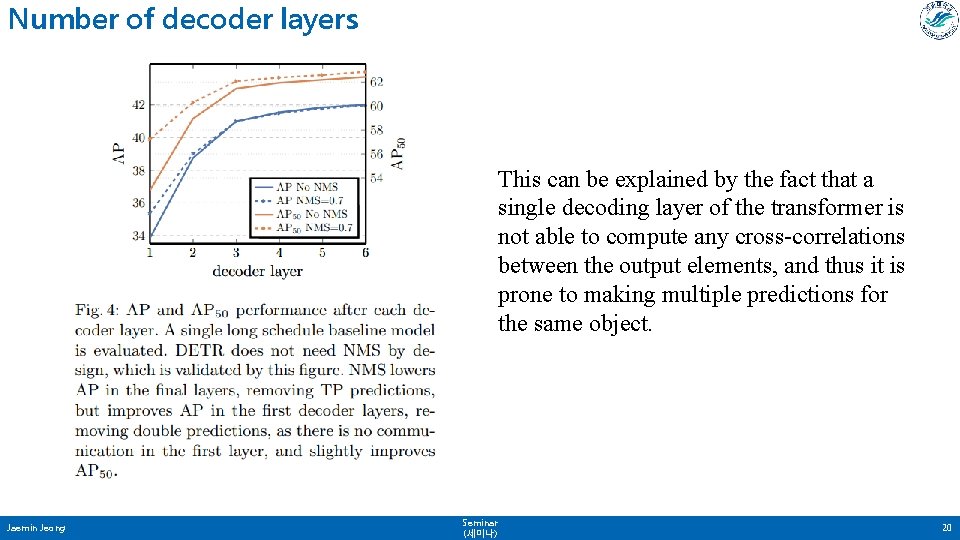

Number of decoder layers
This can be explained by the fact that a single decoding layer of the transformer is not able to compute any cross-correlations between the output elements, and thus it is prone to making multiple predictions for the same object.

Importance of FFN
Removing the FFN drops performance by 2.3 AP.

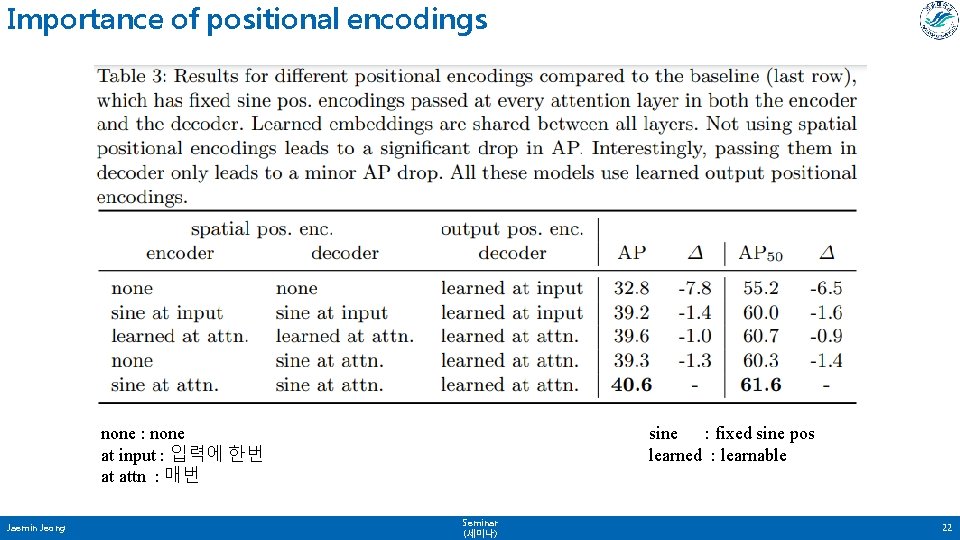

Importance of positional encodings
• none: no positional encoding
• at input: added once, at the input
• at attn.: added every time, at each attention layer
• sine: fixed sinusoidal positional encoding
• learned: learnable positional encoding

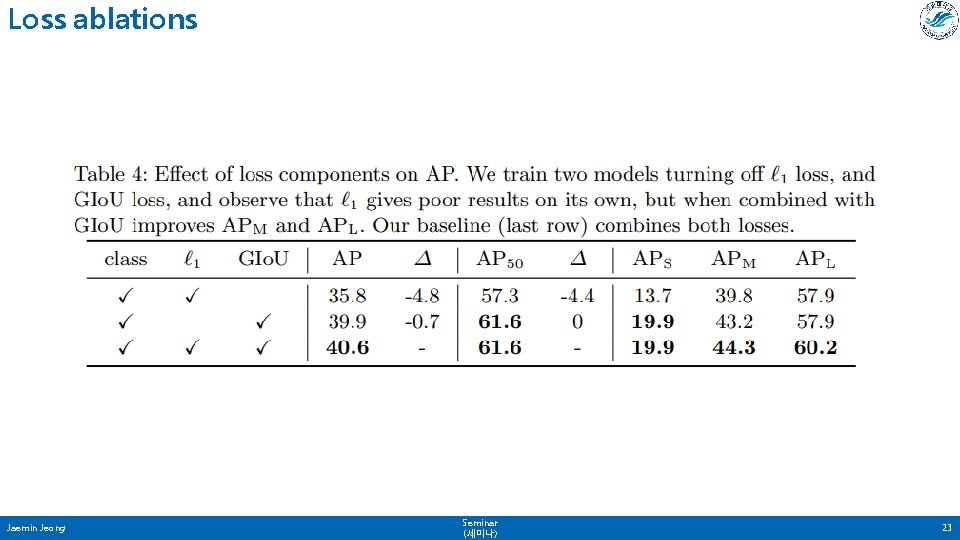

Loss ablations

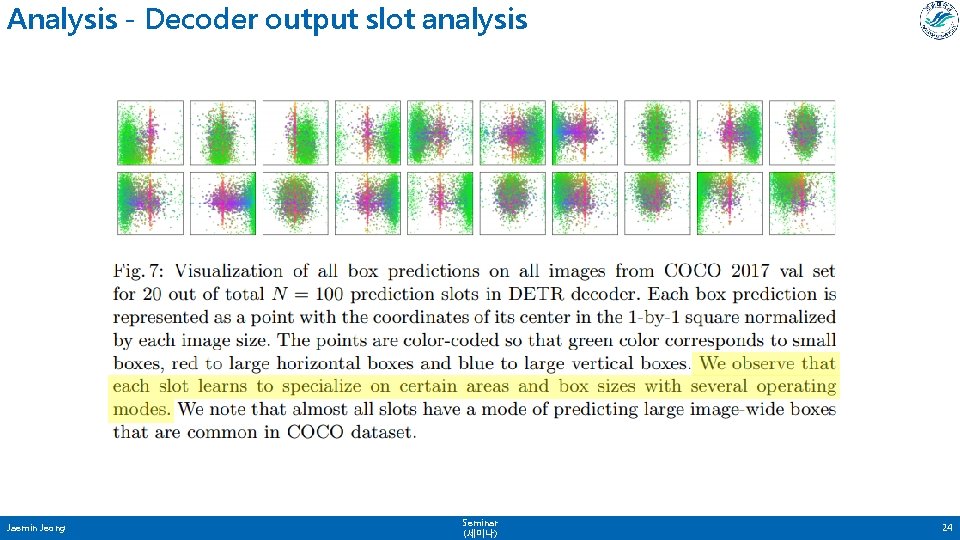

Analysis - Decoder output slot analysis

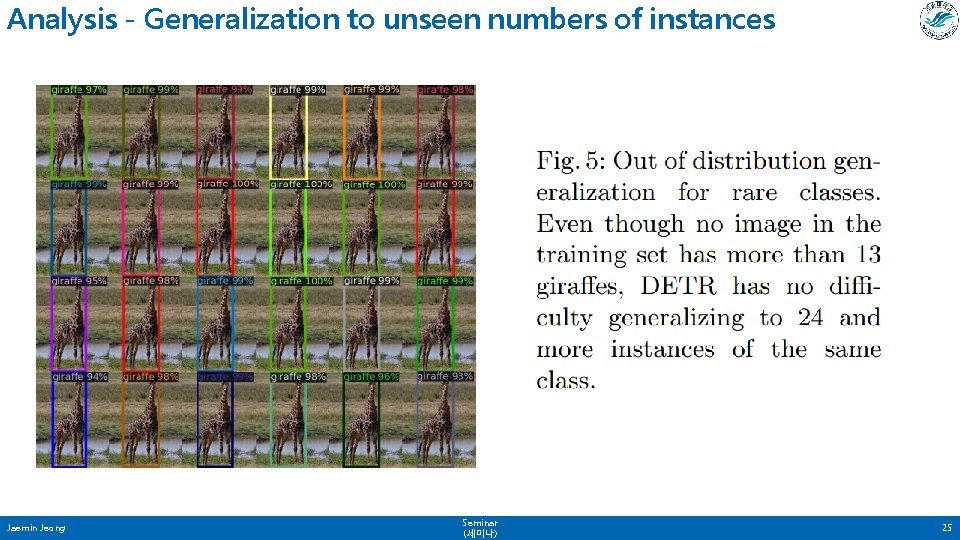

Analysis - Generalization to unseen numbers of instances

Panoptic segmentation

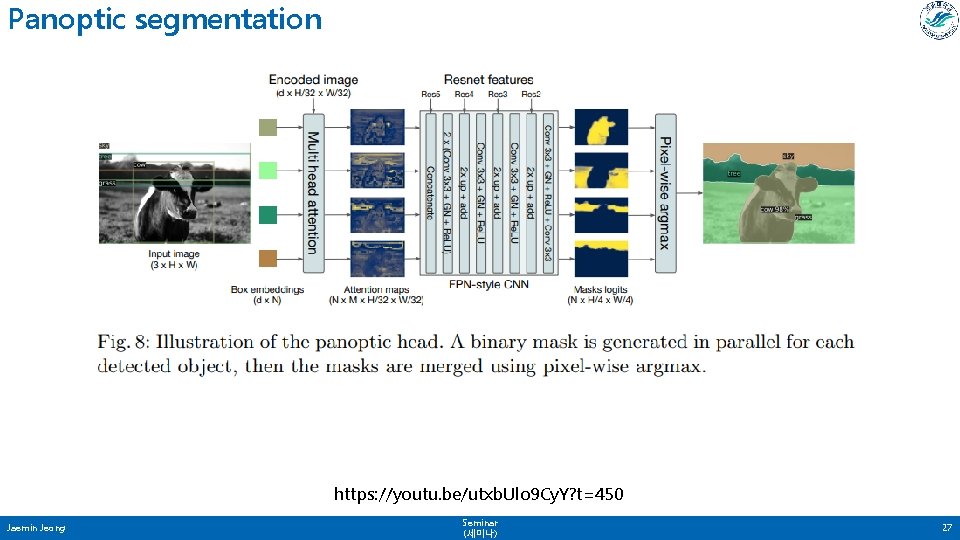

Panoptic segmentation
https://youtu.be/utxbUlo9CyY?t=450

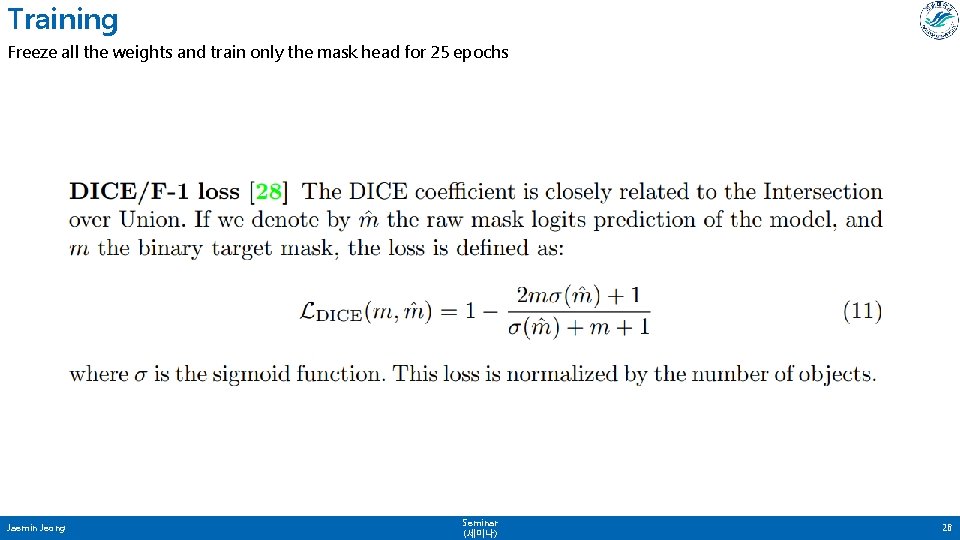

Training
Freeze all the weights and train only the mask head for 25 epochs.

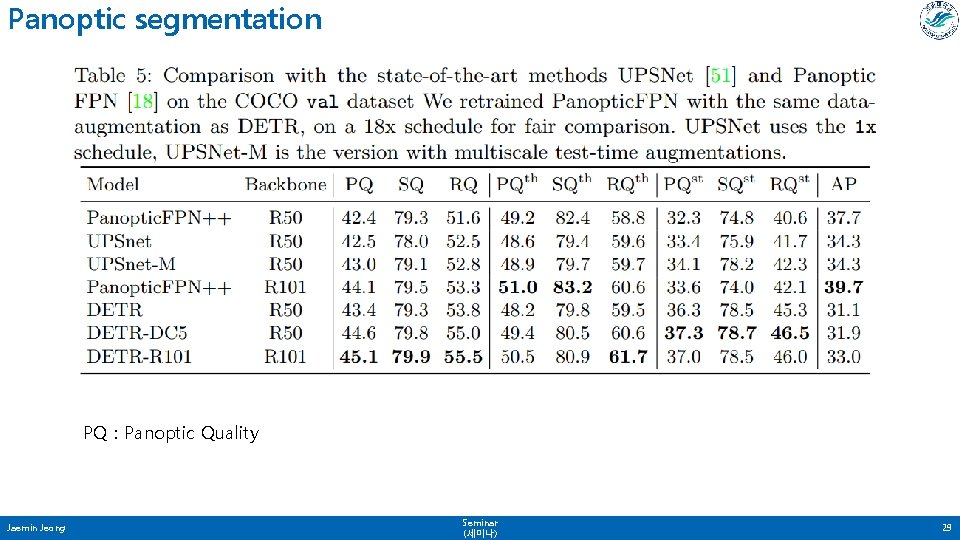

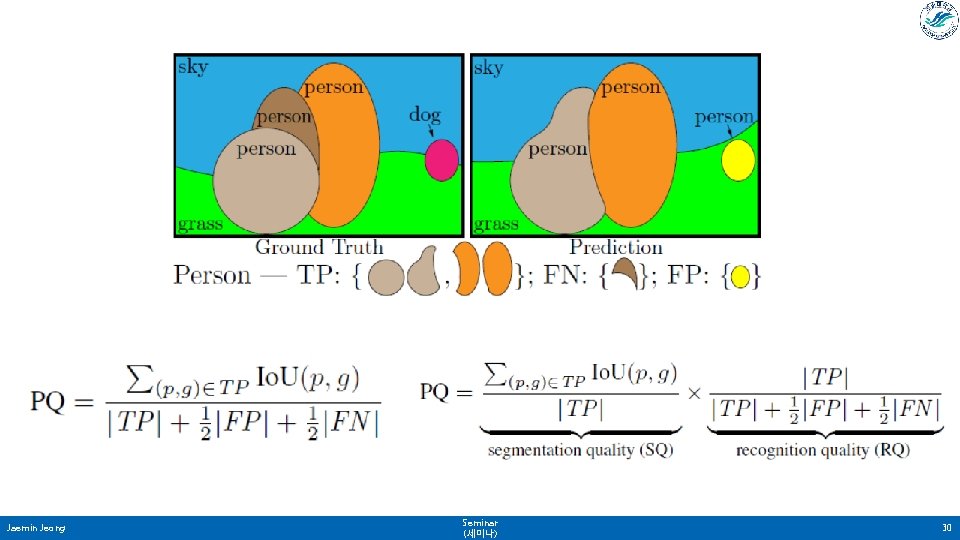

Panoptic segmentation
PQ: Panoptic Quality
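For reference, PQ is commonly defined as the sum of IoUs over matched segment pairs divided by |TP| + 0.5 |FP| + 0.5 |FN|, where a predicted and a ground-truth segment count as a match (TP) when their IoU exceeds 0.5. A minimal sketch of that formula follows; the function name and the example numbers are illustrative, not results from the slides.

```python
def panoptic_quality(matched_ious, num_fp, num_fn):
    """PQ = sum of IoUs over true positives / (TP + 0.5 * FP + 0.5 * FN)."""
    tp = len(matched_ious)
    denom = tp + 0.5 * num_fp + 0.5 * num_fn
    return sum(matched_ious) / denom if denom > 0 else 0.0


# Illustrative case: two matched segments, one false positive, one false negative.
pq = panoptic_quality([0.8, 0.6], num_fp=1, num_fn=1)
```

The numerator rewards segmentation quality on matched segments, while the denominator penalizes unmatched predictions and missed ground-truth segments.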

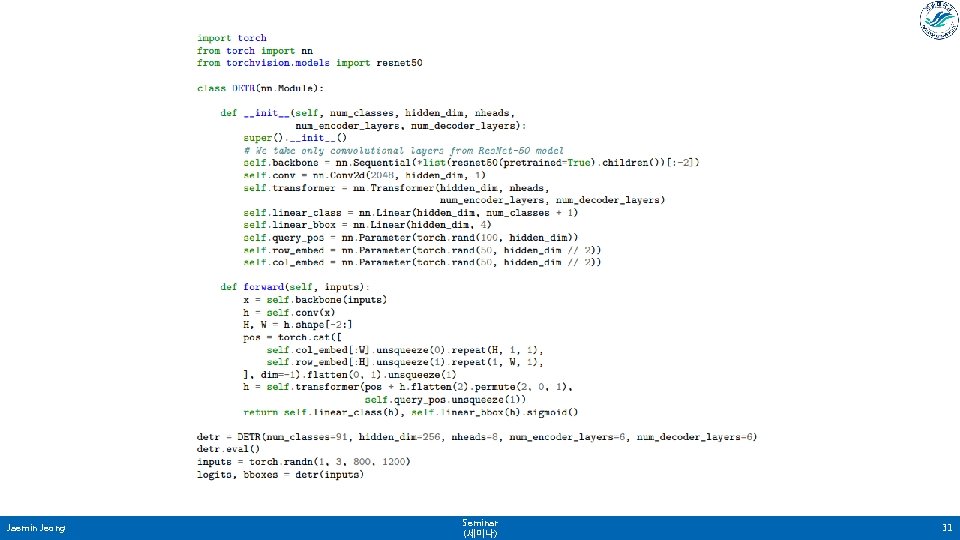

- Slides: 31