Distributed Crossbar Schedulers

Cyriel Minkenberg (1), Francois Abel (1), Enrico Schiattarella (2)

(1) IBM Research, Zurich Research Laboratory
(2) Dipartimento di Elettronica, Politecnico di Torino

HPSR 2006

OSMOSIS Outline

- OSMOSIS overview
- Challenges in the OSMOSIS scheduler design
- Basics of crossbar scheduling
- Distributed scheduler
  - Architecture
  - Problems
  - Solutions
  - Results
- Implementation

HPSR 2006 © 2006 IBM Corporation

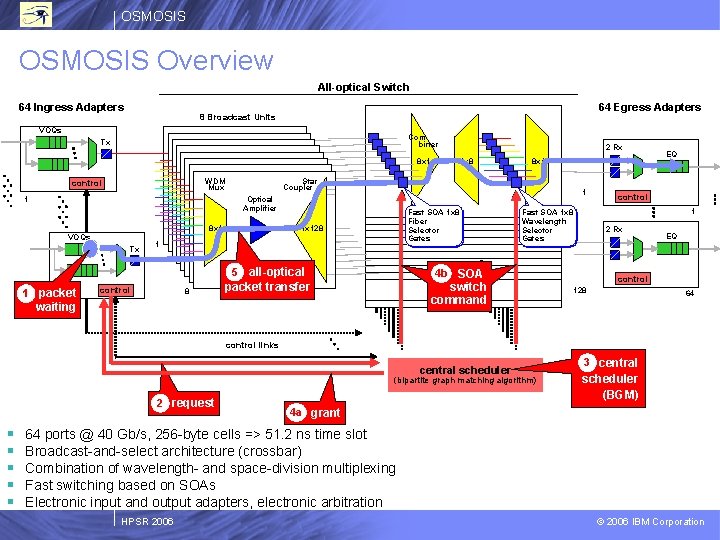

OSMOSIS Overview

[Figure: all-optical switch with 64 ingress adapters, 8 broadcast units, 128 select units, and 64 egress adapters. Labels: VOQs, Tx, 8x1 combiner, WDM mux, optical amplifier, 1x128 star coupler, fast SOA 1x8 fiber selector gates, fast SOA 1x8 wavelength selector gates, Rx, EQ, control, 64 control links, central scheduler (bipartite graph matching algorithm, BGM). Numbered flow: (1) packet waiting, (2) request, (3) central scheduler (BGM), (4a) grant, (4b) switch command, (5) all-optical packet transfer.]

- 64 ports @ 40 Gb/s, 256-byte cells => 51.2 ns time slot
- Broadcast-and-select architecture (crossbar)
- Combination of wavelength- and space-division multiplexing
- Fast switching based on SOAs
- Electronic input and output adapters, electronic arbitration
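The 51.2 ns time slot follows from the cell size and the line rate. A minimal sketch of that arithmetic; the function name `time_slot_ns` is illustrative and does not come from the slides:

```python
def time_slot_ns(cell_bytes: int, line_rate_gbps: float) -> float:
    """Duration of one cell (one time slot) in nanoseconds.

    Dividing bits by Gb/s yields nanoseconds, because 1 Gb/s equals 1 bit/ns.
    """
    return cell_bytes * 8 / line_rate_gbps


# Parameters quoted on the slide: 256-byte cells at 40 Gb/s per port.
slot = time_slot_ns(256, 40.0)
print(f"{slot:.1f} ns")  # 2048 bits / 40 bits-per-ns
```

This is also the budget the scheduler has for producing one matching, since the scheduling rate must equal the cell rate.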

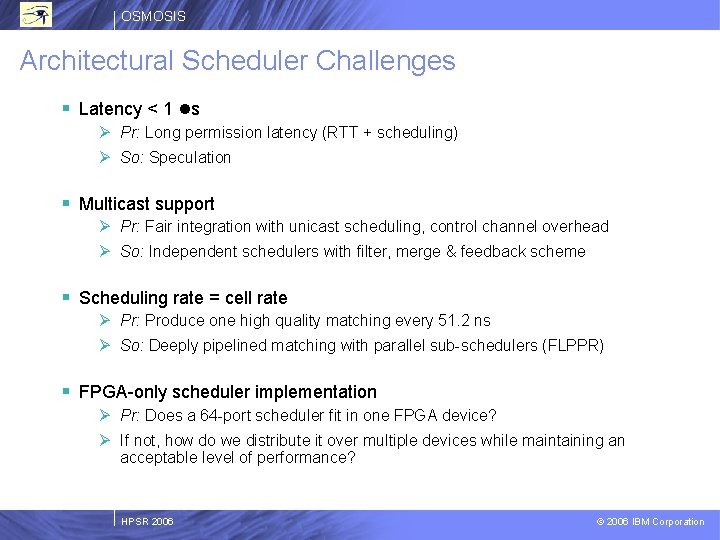

Architectural Scheduler Challenges

- Latency < 1 µs
  - Problem: Long permission latency (RTT + scheduling)
  - Solution: Speculation
- Multicast support
  - Problem: Fair integration with unicast scheduling, control channel overhead
  - Solution: Independent schedulers with filter, merge & feedback scheme
- Scheduling rate = cell rate
  - Problem: Produce one high-quality matching every 51.2 ns
  - Solution: Deeply pipelined matching with parallel sub-schedulers (FLPPR)
- FPGA-only scheduler implementation
  - Problem: Does a 64-port scheduler fit in one FPGA device?
  - If not, how do we distribute it over multiple devices while maintaining an acceptable level of performance?

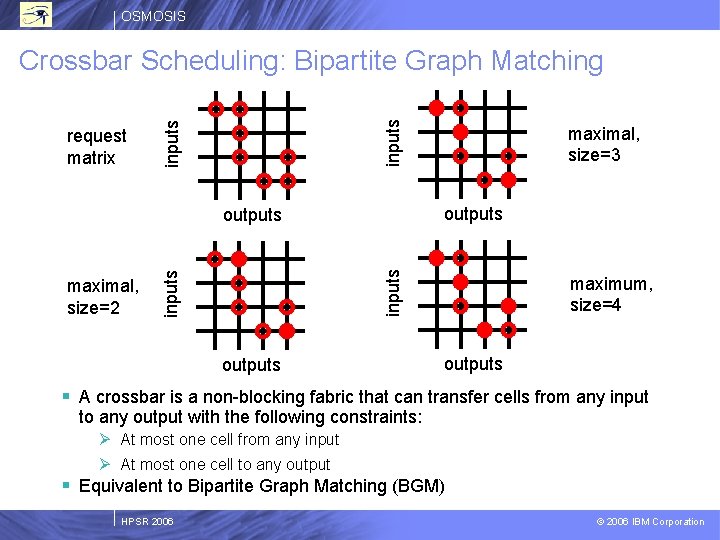

Crossbar Scheduling: Bipartite Graph Matching

[Figure: a request matrix (inputs x outputs) and three matchings of the corresponding bipartite graph: maximal, size = 2; maximal, size = 3; maximum, size = 4.]

- A crossbar is a non-blocking fabric that can transfer cells from any input to any output, subject to the following constraints:
  - At most one cell from any input
  - At most one cell to any output
- Equivalent to Bipartite Graph Matching (BGM)
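The difference between a maximal and a maximum matching can be made concrete with a small sketch. The request matrix below is hypothetical (it is not the one drawn on the slide), and the two functions are textbook techniques chosen for illustration: a greedy scan for a maximal matching and augmenting paths (Kuhn's algorithm) for a maximum matching.

```python
from typing import Dict, List, Optional, Set


def greedy_maximal(requests: List[List[int]]) -> Dict[int, int]:
    """Scan inputs in order and give each the first free output it requests.

    The result is maximal (no edge can be added) but not necessarily maximum.
    """
    match: Dict[int, int] = {}  # input -> output
    taken: Set[int] = set()
    for i, row in enumerate(requests):
        for o, wants in enumerate(row):
            if wants and o not in taken:
                match[i] = o
                taken.add(o)
                break
    return match


def maximum_matching(requests: List[List[int]]) -> Dict[int, int]:
    """Maximum-size matching via augmenting paths (Kuhn's algorithm)."""
    owner: List[Optional[int]] = [None] * len(requests[0])  # output -> input

    def try_input(i: int, seen: Set[int]) -> bool:
        for o, wants in enumerate(requests[i]):
            if wants and o not in seen:
                seen.add(o)
                # Take a free output, or re-route the input that holds it.
                if owner[o] is None or try_input(owner[o], seen):
                    owner[o] = i
                    return True
        return False

    for i in range(len(requests)):
        try_input(i, set())
    return {i: o for o, i in enumerate(owner) if i is not None}


# Hypothetical 4x4 request matrix: requests[i][o] == 1 if VOQ(i, o) is non-empty.
requests = [
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 0],
]
print(len(greedy_maximal(requests)), len(maximum_matching(requests)))
```

On this matrix the greedy scan stops at two edges while the augmenting-path search finds four. Practical crossbar schedulers usually settle for maximal matchings because they are far cheaper to compute within one time slot.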

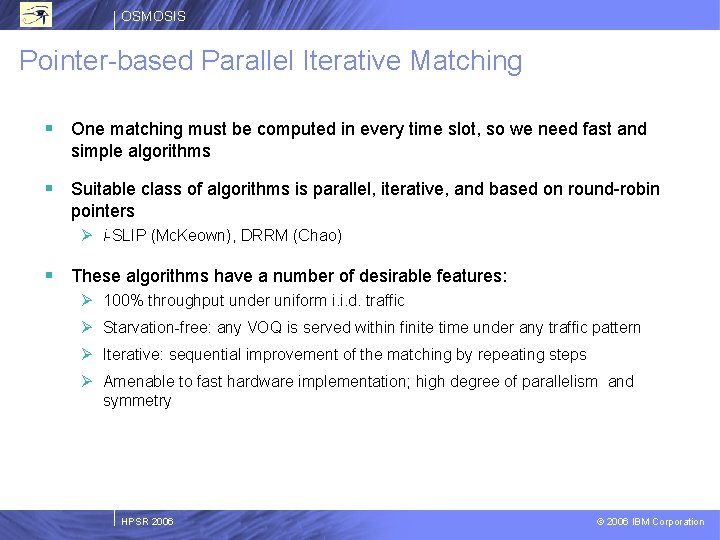

Pointer-based Parallel Iterative Matching

- One matching must be computed in every time slot, so we need fast and simple algorithms
- A suitable class of algorithms is parallel, iterative, and based on round-robin pointers
  - i-SLIP (McKeown), DRRM (Chao)
- These algorithms have a number of desirable features:
  - 100% throughput under uniform i.i.d. traffic
  - Starvation-free: any VOQ is served within finite time under any traffic pattern
  - Iterative: sequential improvement of the matching by repeating steps
  - Amenable to fast hardware implementation; high degree of parallelism and symmetry

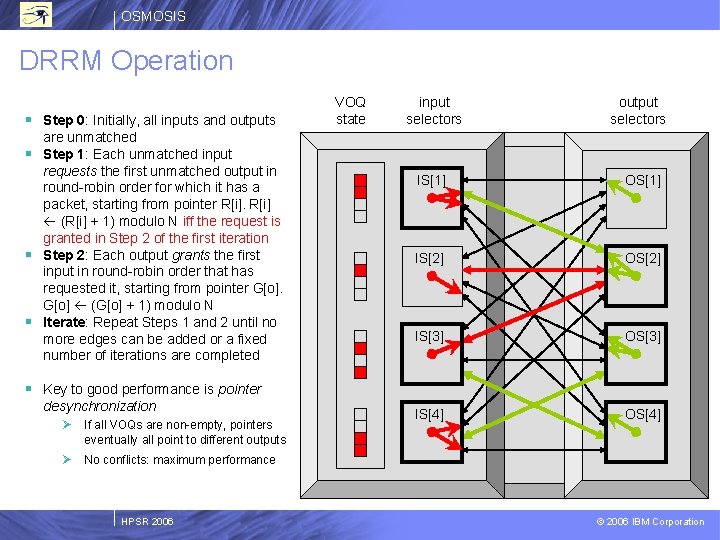

DRRM Operation

- Step 0: Initially, all inputs and outputs are unmatched
- Step 1: Each unmatched input requests the first unmatched output in round-robin order for which it has a packet, starting from pointer R[i]
  - R[i] ← (R[i] + 1) modulo N iff the request is granted in Step 2 of the first iteration
- Step 2: Each output grants the first input in round-robin order that has requested it, starting from pointer G[o]
  - G[o] ← (G[o] + 1) modulo N
- Iterate: Repeat Steps 1 and 2 until no more edges can be added or a fixed number of iterations is completed
- Key to good performance is pointer desynchronization
  - If all VOQs are non-empty, pointers eventually all point to different outputs
  - No conflicts: maximum performance

[Figure: VOQ state feeding input selectors IS[1] to IS[4], which connect to output selectors OS[1] to OS[4].]
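The request and grant steps can be sketched as a small software model. This is an illustration under stated assumptions, not the authors' hardware design: the function name `drrm_slot` and the data layout are invented here, and pointers are advanced to one position past the granted port, which is the common formulation of round-robin matching. That coincides with the slide's "+1" update whenever the request goes to the port the pointer currently designates.

```python
from typing import Dict, List


def drrm_slot(voq: List[List[int]], R: List[int], G: List[int],
              iterations: int = 1) -> Dict[int, int]:
    """Compute one time slot of request-grant matching; returns {input: output}.

    voq[i][o] is the number of cells queued at input i for output o.
    R (request pointers) and G (grant pointers) are updated in place.
    """
    n = len(voq)
    in_match: Dict[int, int] = {}
    out_match: Dict[int, int] = {}
    for it in range(iterations):
        # Step 1: each unmatched input requests one unmatched output,
        # searching round-robin from its request pointer.
        requests: Dict[int, List[int]] = {}  # output -> requesting inputs
        for i in range(n):
            if i in in_match:
                continue
            for k in range(n):
                o = (R[i] + k) % n
                if voq[i][o] > 0 and o not in out_match:
                    requests.setdefault(o, []).append(i)
                    break
        if not requests:
            break  # no more edges can be added
        # Step 2: each requested output grants the first requester,
        # searching round-robin from its grant pointer.
        for o, inputs in requests.items():
            i = min(inputs, key=lambda x: (x - G[o]) % n)
            in_match[i] = o
            out_match[o] = i
            G[o] = (i + 1) % n      # assumption: one past the granted input
            if it == 0:
                R[i] = (o + 1) % n  # assumption: one past the granted output
    return in_match


# All VOQs non-empty, all pointers initially synchronized at 0.
n = 4
voq = [[10] * n for _ in range(n)]
R, G = [0] * n, [0] * n
sizes = []
for _ in range(6):
    match = drrm_slot(voq, R, G)
    for i, o in match.items():
        voq[i][o] -= 1  # serve the matched cells
    sizes.append(len(match))
print(sizes)
```

With every VOQ backlogged, the matching size in this model grows by one per slot until the request pointers all designate different outputs, which is the desynchronization effect the slide describes.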

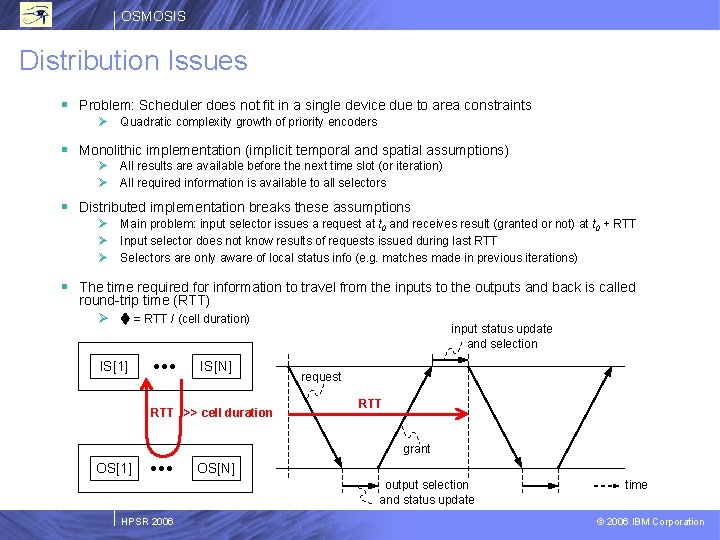

Distribution Issues

- Problem: Scheduler does not fit in a single device due to area constraints
  - Quadratic complexity growth of priority encoders
- Monolithic implementation (implicit temporal and spatial assumptions)
  - All results are available before the next time slot (or iteration)
  - All required information is available to all selectors
- Distributed implementation breaks these assumptions
  - Main problem: an input selector issues a request at t0 and receives the result (granted or not) at t0 + RTT
  - The input selector does not know the results of requests issued during the last RTT
  - Selectors are only aware of local status info (e.g. matches made in previous iterations)
- The time required for information to travel from the inputs to the outputs and back is called round-trip time (RTT)
  - RTT in time slots = RTT / (cell duration)

[Figure: timing diagram between input selectors IS[1] to IS[N] and output selectors OS[1] to OS[N], showing input status update and selection, request, output selection and status update, and grant along the time axis. RTT >> cell duration.]

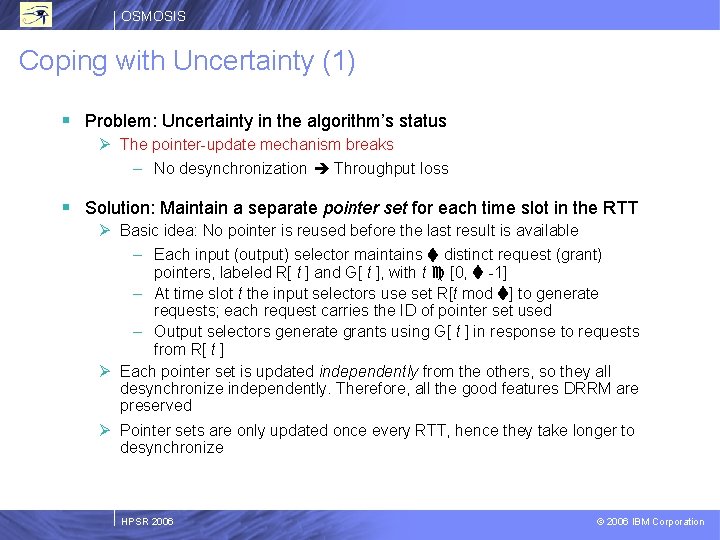

Coping with Uncertainty (1)

- Problem: Uncertainty in the algorithm's status
  - The pointer-update mechanism breaks
    - No desynchronization → throughput loss
- Solution: Maintain a separate pointer set for each time slot in the RTT
  - Basic idea: No pointer is reused before the last result is available
    - Each input (output) selector maintains RTT distinct request (grant) pointers, labeled R[t] and G[t], with t ∈ [0, RTT − 1] and RTT expressed in time slots
    - At time slot t the input selectors use set R[t mod RTT] to generate requests; each request carries the ID of the pointer set used
    - Output selectors generate grants using G[t] in response to requests from R[t]
  - Each pointer set is updated independently of the others, so they all desynchronize independently. Therefore, all the good features of DRRM are preserved
  - Pointer sets are only updated once every RTT, hence they take longer to desynchronize
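The bookkeeping for one selector's pointer sets can be sketched as follows. The class `PointerSets` and its method names are hypothetical, the RTT is assumed to be an integer number of time slots, and the pointer update (one past the granted port) is the same assumption commonly made for round-robin matching rather than something the slide specifies.

```python
from typing import List


class PointerSets:
    """One round-robin pointer per time slot in the RTT, for a single selector.

    rtt is the round-trip time in time slots (assumed to be an integer >= 1).
    """

    def __init__(self, n_ports: int, rtt: int) -> None:
        self.n = n_ports
        self.rtt = rtt
        self.ptr: List[int] = [0] * rtt

    def set_id(self, t: int) -> int:
        """ID of the pointer set used at time slot t; carried in the request."""
        return t % self.rtt

    def current(self, t: int) -> int:
        """Pointer value from which the round-robin search starts at slot t."""
        return self.ptr[self.set_id(t)]

    def on_result(self, set_id: int, granted: bool, port: int) -> None:
        """Apply the result of the request that was issued with `set_id`.

        Only the set that generated the request is touched, so each set
        evolves as if it belonged to an independent scheduler.
        """
        if granted:
            self.ptr[set_id] = (port + 1) % self.n


ps = PointerSets(n_ports=4, rtt=4)
print([ps.set_id(t) for t in range(6)])      # sets are reused every RTT slots
ps.on_result(set_id=0, granted=True, port=2)  # result of the slot-0 request
print(ps.current(4), ps.current(5))           # slot 4 reuses set 0, slot 5 reuses set 1
```

Because a set is revisited only RTT slots after it was last used, the result of its previous request has arrived by the time it is needed again.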

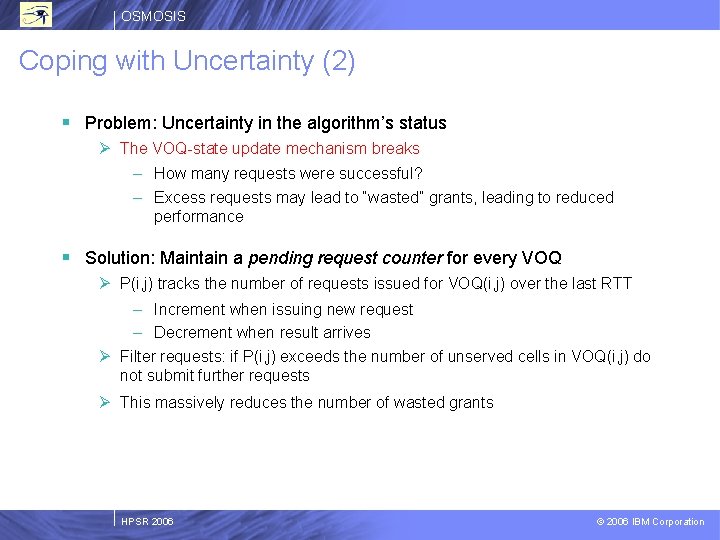

Coping with Uncertainty (2)

- Problem: Uncertainty in the algorithm's status
  - The VOQ-state update mechanism breaks
    - How many requests were successful?
    - Excess requests may lead to "wasted" grants, leading to reduced performance
- Solution: Maintain a pending request counter for every VOQ
  - P(i, j) tracks the number of requests issued for VOQ(i, j) over the last RTT
    - Increment when issuing a new request
    - Decrement when the result arrives
  - Filter requests: if P(i, j) exceeds the number of unserved cells in VOQ(i, j), do not submit further requests
  - This massively reduces the number of wasted grants
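A minimal sketch of the pending request counters follows. The class and method names are hypothetical. As an assumption, the filter here stops issuing requests as soon as P(i, j) reaches the number of unserved cells, which is a slightly stricter reading than the slide's "exceeds".

```python
from typing import List


class PendingRequestFilter:
    """Per-VOQ counters of requests whose result has not yet come back."""

    def __init__(self, n_ports: int) -> None:
        self.pending: List[List[int]] = [[0] * n_ports for _ in range(n_ports)]

    def may_request(self, i: int, j: int, unserved_cells: int) -> bool:
        """True if VOQ(i, j) still holds a cell not covered by a pending request."""
        return self.pending[i][j] < unserved_cells

    def on_request(self, i: int, j: int) -> None:
        self.pending[i][j] += 1  # increment when issuing a new request

    def on_result(self, i: int, j: int) -> None:
        self.pending[i][j] -= 1  # decrement when the result (grant or not) arrives


prc = PendingRequestFilter(n_ports=4)
cells = 2  # VOQ(0, 1) holds two unserved cells
for _ in range(3):
    if prc.may_request(0, 1, cells):
        prc.on_request(0, 1)
print(prc.pending[0][1])  # the third attempt is filtered out
```

Without the filter, the input would keep requesting output 1 during the whole RTT, and any grant beyond the second would find an empty queue.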

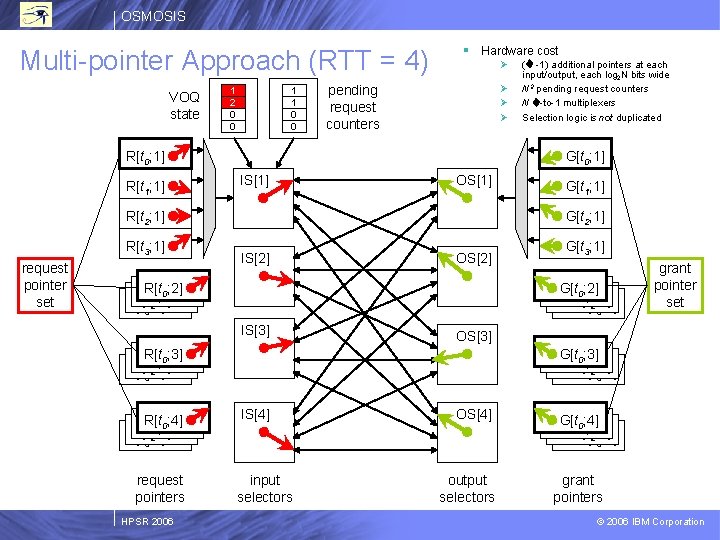

Multi-pointer Approach (RTT = 4)

- Hardware cost
  - (RTT − 1) additional pointers at each input/output, each log2 N bits wide
  - N² pending request counters
  - N -to-1 multiplexers
  - Selection logic is not duplicated

[Figure: four-port example. VOQ state and pending request counters feed input selectors IS[1] to IS[4]; each input selector i holds a request pointer set R[t0; i], R[t1; i], R[t2; i], R[t3; i], and each output selector OS[j] holds a grant pointer set G[t0; j], G[t1; j], G[t2; j], G[t3; j].]
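The storage cost of the multi-pointer scheme can be estimated with a short calculation. This is a rough sketch: it assumes the RTT is given in time slots, that "each input/output" means all 2N selectors, and it leaves out the pending request counter width, which the slide does not state. The function names are illustrative.

```python
def additional_pointer_bits(n_ports: int, rtt: int) -> int:
    """Bits needed for the extra pointers across all 2N selectors."""
    width = (n_ports - 1).bit_length()  # ceil(log2 N) bits per pointer
    return 2 * n_ports * (rtt - 1) * width


def pending_counter_count(n_ports: int) -> int:
    """One pending request counter per VOQ."""
    return n_ports ** 2


# The four-port, RTT = 4 configuration drawn on the slide.
print(additional_pointer_bits(4, 4), pending_counter_count(4))
# A hypothetical 64-port configuration with the same RTT.
print(additional_pointer_bits(64, 4), pending_counter_count(64))
```

The pointer storage grows only linearly in both N and RTT, while the number of counters grows quadratically in N, so the counters dominate the added state at large port counts.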

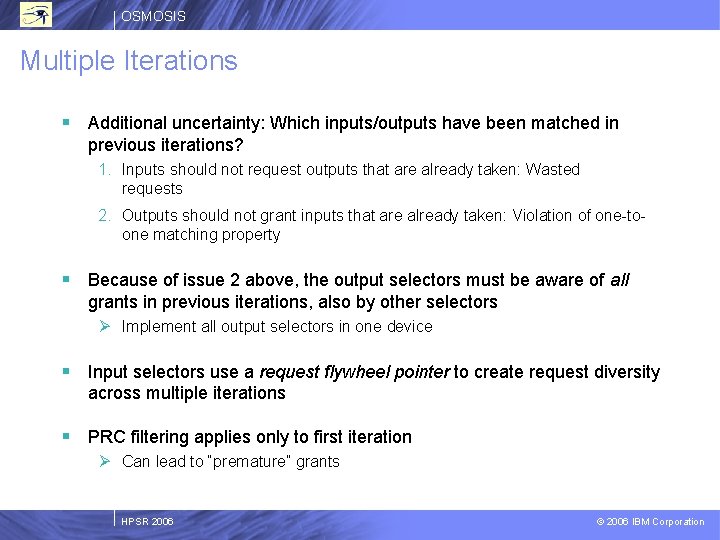

Multiple Iterations

- Additional uncertainty: Which inputs/outputs have been matched in previous iterations?
  1. Inputs should not request outputs that are already taken: wasted requests
  2. Outputs should not grant inputs that are already taken: violation of the one-to-one matching property
- Because of issue 2 above, the output selectors must be aware of all grants in previous iterations, including those made by other selectors
  - Implement all output selectors in one device
- Input selectors use a request flywheel pointer to create request diversity across multiple iterations
- PRC filtering applies only to the first iteration
  - Can lead to "premature" grants

![OSMOSIS Distributed Scheduler Architecture](http://slidetodoc.com/presentation_image_h/bf97f472748c9097a1bc6a943d1cabfa/image-13.jpg)

Distributed Scheduler Architecture

[Figure: VOQ state and input selectors IS[1] to IS[4] sit on control channel interfaces (each on a separate card) attached to the control channels; output selectors OS[1] to OS[4] form the allocators (on the midplane), which drive the switch command channels.]

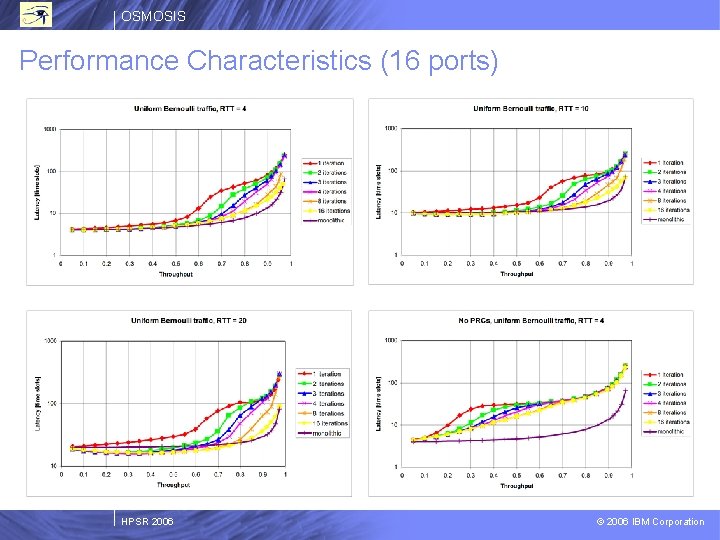

Performance Characteristics (16 ports)

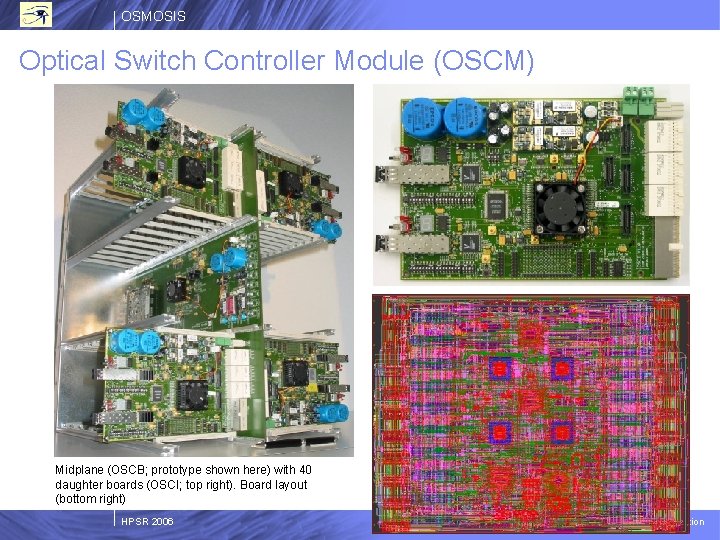

Optical Switch Controller Module (OSCM)

Midplane (OSCB; prototype shown here) with 40 daughter boards (OSCI; top right). Board layout (bottom right).

Thank You!
