Distributed Analytics (Analytics as a Service) - Use case

Requirements

• Network analytics closer to the data source
  - Bring up analytics frameworks in multiple cloud regions.
  - Bring up analytics applications in the right location based on VNF/NFVI requirements.
• Support for various kinds of analytics
  - Traditional analytics
  - ML/DL analytics: training and inferencing
• Support for various types of analytics
  - Analytics on infrastructure-level statistics: NFVI, VIM, K8S, etc.
  - Analytics on application-level (IoT) statistics
• Support for
  - Shared analytics services (analytics services shared across multiple NFVIs, multiple VNFs or multiple VNF instances)
  - Dedicated analytics services (per VNF instance)
• Support for
  - Day 0 configuration
  - Day 2 configuration
• Modularity
  - The analytics system should be as generic as possible (one should be able to use it without the rest of ONAP). That is, the ONAP glue and ONAP action processors are to be independent.
  - The analytics system and applications are considered workloads as far as ONAP SDC/SO/OOF/Multi-Cloud are concerned.

Assumptions for Dublin

• Kubernetes support in cloud regions
  - (Future) Support other environments. What is to be supported is TBD.
• Big data analytics framework (Spark, HDFS, HBASE, OpenTSDB/InfluxDB/M3DB)
• Spark framework even for inference
  - (Future) Make inference a microservice for easier deployment.
  - (Future) Make inference a set of executables that can be deployed within the application/NF workload or on the compute node (in the case of a single-node edge deployment).
• Instantiates the entire analytics framework in remote cloud regions
  - (Future) Work with a partial deployment that already exists (for example, support an existing HDFS deployment by instantiating only the other components).
• Instantiates in a new namespace (not in an existing namespace) in remote cloud regions
  - (Future) Will consider deploying in an existing namespace (we believe this is mostly a matter of testing and fixing any gaps).
• Dynamic configuration updates to analytics applications will use operators. Other mechanisms are for further study.
• Testing with third-party analytics frameworks/apps is a stretch goal, depending on the framework vendors.

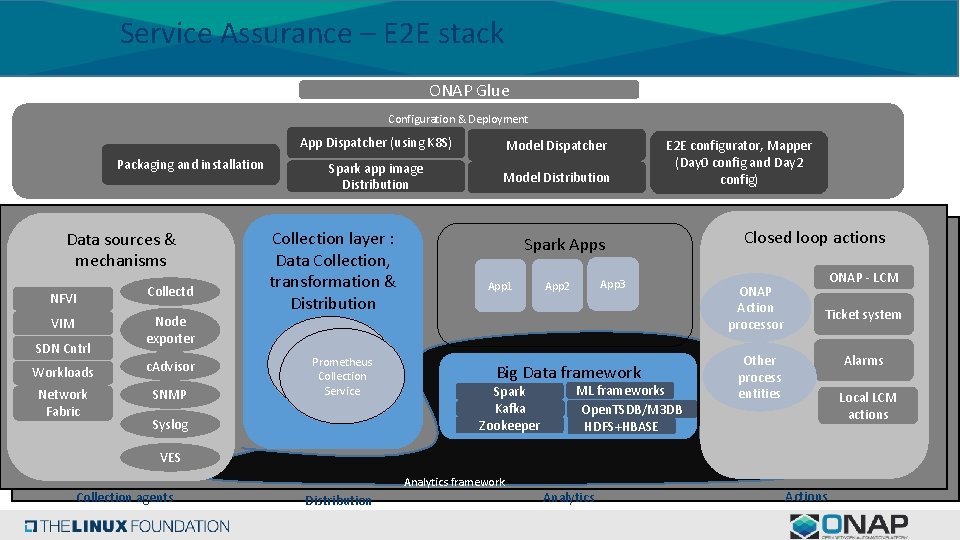

Service Assurance – E2E stack

Diagram labels:
• ONAP Glue
• Configuration & Deployment; Packaging and installation
• E2E configurator, Mapper (Day 0 config and Day 2 config)
• App Dispatcher (using K8S); Model Dispatcher; Spark app image distribution; Model distribution
• Data sources & mechanisms: NFVI, VIM, SDN Cntrl, Workloads, Network Fabric
• Collection agents: Collectd, Node exporter, cAdvisor, SNMP, Syslog
• Collection layer (data collection, transformation & distribution): Prometheus, Collection Service
• Analytics framework: Spark Apps (App 1, App 2, App 3); Big Data framework (Spark, Kafka, Zookeeper, ML frameworks, OpenTSDB/M3DB, HDFS+HBASE)
• Closed loop actions: ONAP Action processor, ONAP - LCM, Ticket system, Other process entities, Alarms, Local LCM actions
• VES
• Stages: Collection agents, Distribution, Analytics, Actions

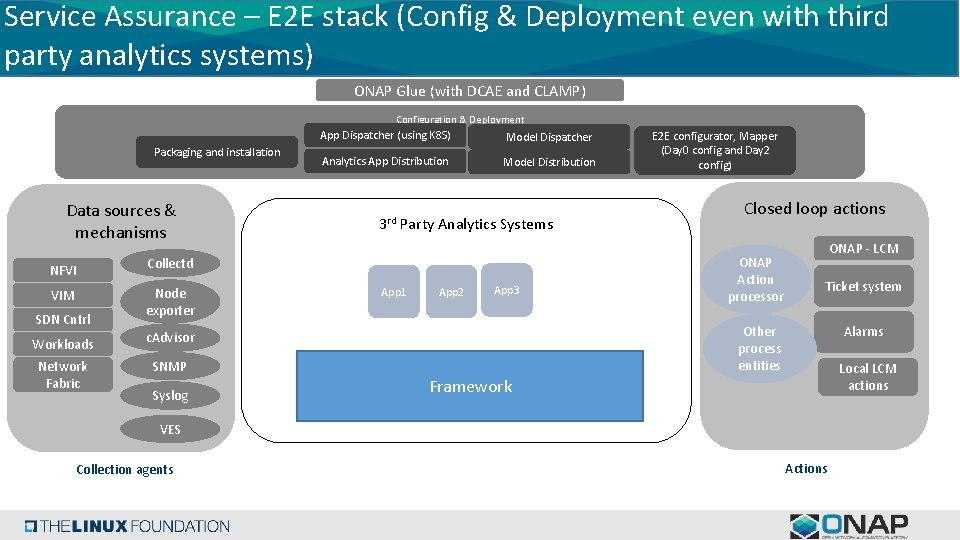

Service Assurance – E2E stack (Config & Deployment even with third-party analytics systems)

Diagram labels:
• ONAP Glue (with DCAE and CLAMP)
• Configuration & Deployment; Packaging and installation
• E2E configurator, Mapper (Day 0 config and Day 2 config)
• App Dispatcher (using K8S); Model Dispatcher; Analytics app distribution; Model distribution
• Data sources & mechanisms: NFVI, VIM, SDN Cntrl, Workloads, Network Fabric
• Collection agents: Collectd, Node exporter, cAdvisor, SNMP, Syslog
• 3rd Party Analytics Systems: App 1, App 2, App 3; Framework
• Closed loop actions: ONAP Action processor, ONAP - LCM, Ticket system, Other process entities, Alarms, Local LCM actions
• VES
• Stages: Collection agents, Actions

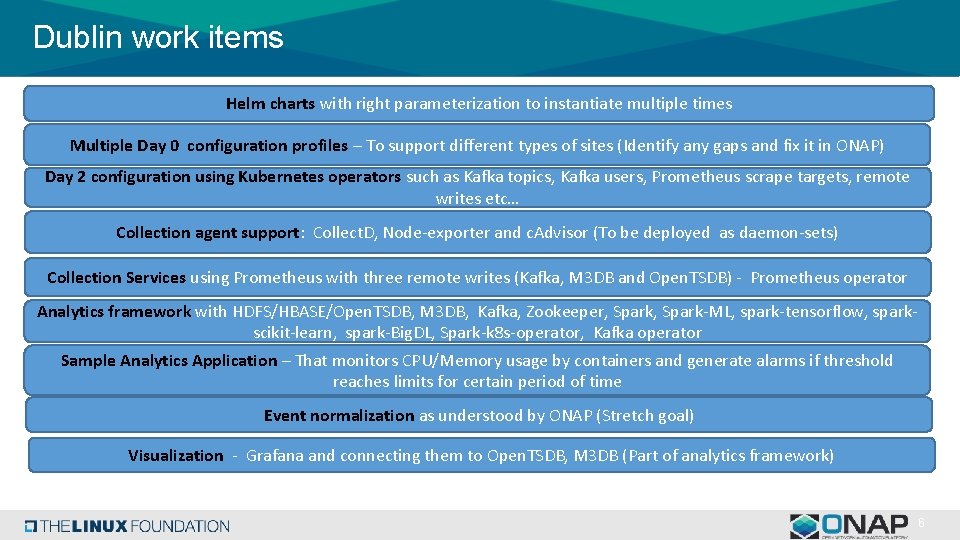

Dublin work items

• Helm charts with the right parameterization so they can be instantiated multiple times
• Multiple Day 0 configuration profiles to support different types of sites (identify any gaps and fix them in ONAP)
• Day 2 configuration using Kubernetes operators, for items such as Kafka topics, Kafka users, Prometheus scrape targets, remote writes, etc.
• Collection agent support: collectd, node-exporter and cAdvisor (to be deployed as daemon-sets)
• Collection services using Prometheus with three remote writes (Kafka, M3DB and OpenTSDB) - Prometheus operator
• Analytics framework with HDFS/HBASE/OpenTSDB, M3DB, Kafka, Zookeeper, Spark-ML, spark-tensorflow, spark-scikit-learn, spark-BigDL, Spark-k8s-operator, Kafka operator
• Sample analytics application that monitors CPU/memory usage by containers and generates alarms if usage stays at or above a threshold for a certain period of time
• Event normalization as understood by ONAP (stretch goal)
• Visualization: Grafana, connected to OpenTSDB and M3DB (part of the analytics framework)
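The alarm rule of the sample analytics application (usage at or above a threshold for a sustained period) can be sketched as follows. This is only an illustrative sketch, not the project's Spark application: the class name, the `threshold` and `hold_seconds` parameters, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass
class SustainedThresholdAlarm:
    """Signals an alarm once usage has stayed at or above `threshold`
    for at least `hold_seconds` (both values are hypothetical examples)."""
    threshold: float                       # e.g. 0.9 means 90% CPU or memory
    hold_seconds: float                    # how long the breach must persist
    _breach_start: Optional[float] = None  # timestamp when the current breach began

    def observe(self, timestamp: float, usage: float) -> bool:
        """Feed one sample; return True if the alarm condition holds now."""
        if usage < self.threshold:
            self._breach_start = None      # breach ended, reset the window
            return False
        if self._breach_start is None:
            self._breach_start = timestamp
        return timestamp - self._breach_start >= self.hold_seconds


def first_alarm(samples: Iterable[Tuple[float, float]],
                threshold: float, hold_seconds: float) -> Optional[float]:
    """Return the timestamp of the first alarm, or None if none is raised."""
    detector = SustainedThresholdAlarm(threshold, hold_seconds)
    for timestamp, usage in samples:
        if detector.observe(timestamp, usage):
            return timestamp
    return None


# Made-up (timestamp_seconds, cpu_fraction) samples for one container.
samples = [(0, 0.5), (10, 0.95), (20, 0.97), (30, 0.6),
           (40, 0.92), (50, 0.93), (60, 0.99), (70, 0.95)]
print(first_alarm(samples, threshold=0.9, hold_seconds=30))  # 70
```

The short breach at 10-20 s is discarded when usage drops at 30 s, so the alarm fires only after the second breach has lasted the full 30 seconds.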

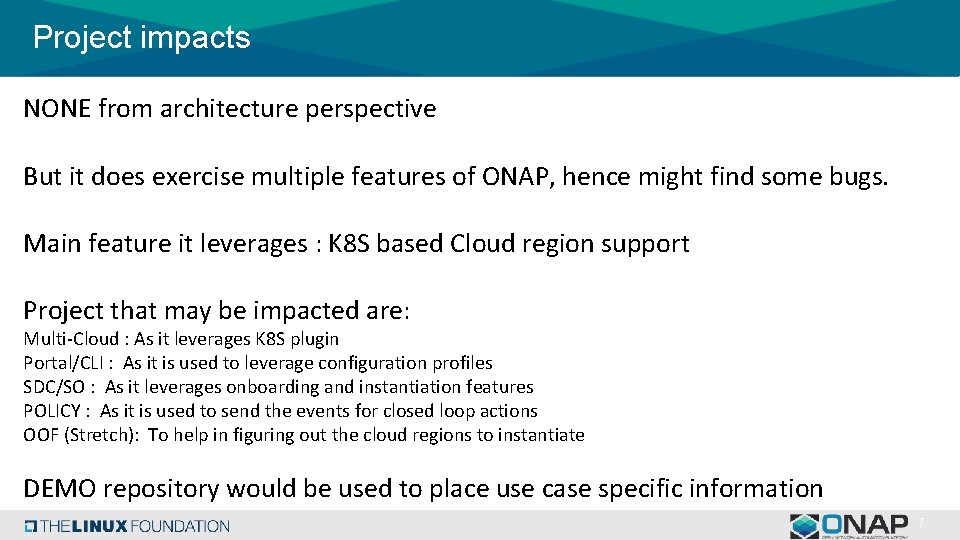

Project impacts

• NONE from an architecture perspective
• But it does exercise multiple features of ONAP, and hence might find some bugs.
• Main feature it leverages: K8S-based cloud region support
• Projects that may be impacted:
  - Multi-Cloud: as it leverages the K8S plugin
  - Portal/CLI: as it is used to leverage configuration profiles
  - SDC/SO: as it leverages onboarding and instantiation features
  - POLICY: as it is used to send the events for closed loop actions
  - OOF (stretch): to help in figuring out the cloud regions in which to instantiate
• The DEMO repository would be used to hold use-case-specific information.

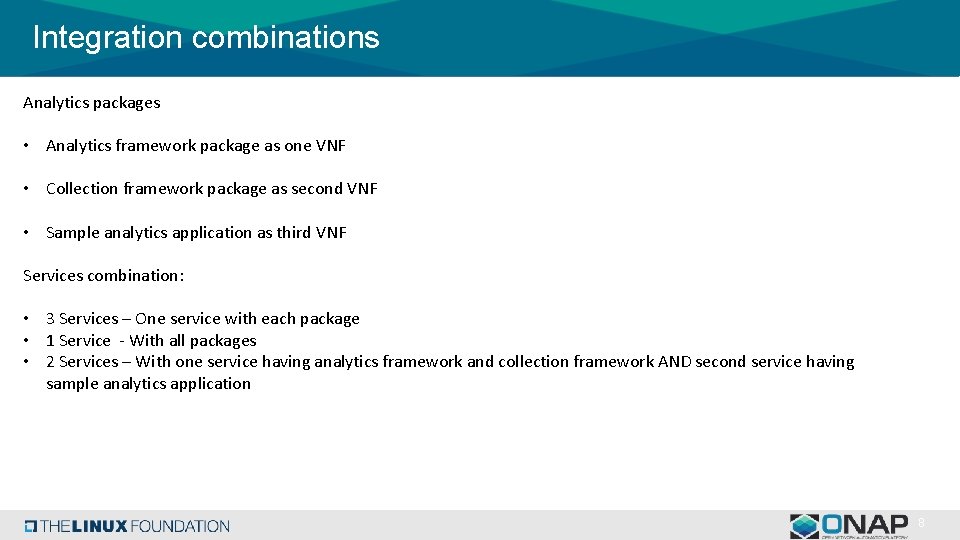

Integration combinations

Analytics packages
• Analytics framework package as one VNF
• Collection framework package as a second VNF
• Sample analytics application as a third VNF

Services combinations:
• 3 services: one service with each package
• 1 service: with all packages
• 2 services: one service with the analytics framework and collection framework, and a second service with the sample analytics application
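The three service combinations above can be written down as plain data and checked for consistency. This is a minimal sketch only; the package identifiers and combination names are hypothetical labels, not ONAP SDC names.

```python
# Hypothetical identifiers for the three analytics packages (one VNF each).
PACKAGES = {"analytics-framework", "collection-framework", "sample-analytics-app"}

# Each combination is a list of services; each service is a set of packages.
COMBINATIONS = {
    "three-services": [{"analytics-framework"},
                       {"collection-framework"},
                       {"sample-analytics-app"}],
    "one-service": [set(PACKAGES)],
    "two-services": [{"analytics-framework", "collection-framework"},
                     {"sample-analytics-app"}],
}


def is_valid_combination(services):
    """True if every package is assigned to exactly one service."""
    assigned = [pkg for service in services for pkg in service]
    return len(assigned) == len(PACKAGES) and set(assigned) == PACKAGES


for name, services in COMBINATIONS.items():
    print(name, len(services), is_valid_combination(services))
```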

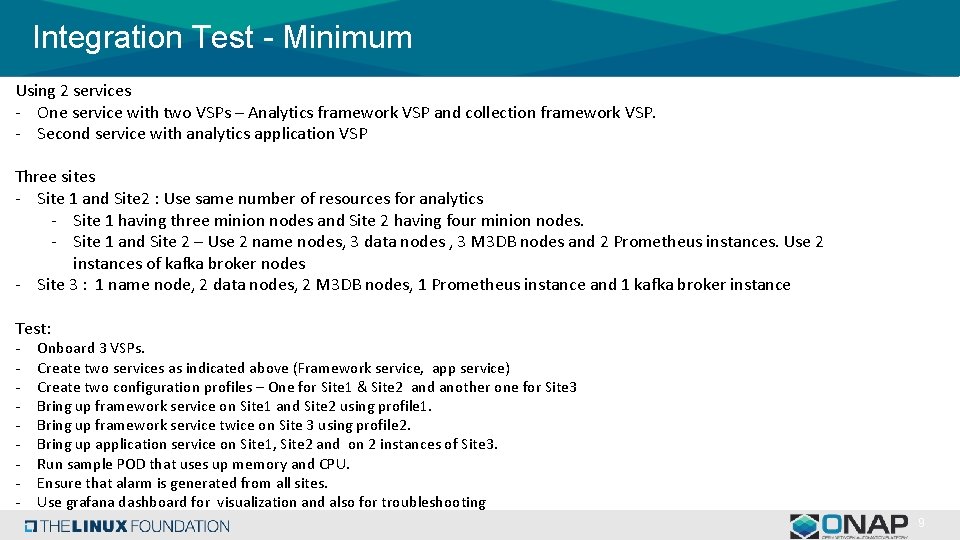

Integration Test - Minimum

Using 2 services
- One service with two VSPs: analytics framework VSP and collection framework VSP.
- Second service with the analytics application VSP.

Three sites
- Site 1 and Site 2: use the same number of resources for analytics.
- Site 1 has three minion nodes and Site 2 has four minion nodes.
- Site 1 and Site 2: use 2 name nodes, 3 data nodes, 3 M3DB nodes and 2 Prometheus instances. Use 2 instances of Kafka broker nodes.
- Site 3: 1 name node, 2 data nodes, 2 M3DB nodes, 1 Prometheus instance and 1 Kafka broker instance.

Test:
- Onboard 3 VSPs.
- Create two services as indicated above (framework service, app service).
- Create two configuration profiles: one for Site 1 & Site 2 and another for Site 3.
- Bring up the framework service on Site 1 and Site 2 using profile 1.
- Bring up the framework service twice on Site 3 using profile 2.
- Bring up the application service on Site 1, Site 2 and on the 2 instances on Site 3.
- Run a sample POD that uses up memory and CPU.
- Ensure that an alarm is generated from all sites.
- Use the Grafana dashboard for visualization and also for troubleshooting.
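The two configuration profiles and the framework deployments in this test plan can be tabulated as below. This is a sketch under stated assumptions: the profile, site and key names are hypothetical, and the 2 Kafka brokers are assumed to belong to the Site 1 / Site 2 profile.

```python
from collections import Counter

# Component counts per Day 0 configuration profile, taken from the test plan.
PROFILES = {
    "profile-1": {"name_nodes": 2, "data_nodes": 3, "m3db_nodes": 3,
                  "prometheus": 2, "kafka_brokers": 2},  # Site 1 and Site 2
    "profile-2": {"name_nodes": 1, "data_nodes": 2, "m3db_nodes": 2,
                  "prometheus": 1, "kafka_brokers": 1},  # Site 3
}

# Framework service instantiations: once on Site 1 and Site 2, twice on Site 3.
FRAMEWORK_DEPLOYMENTS = [
    ("site-1", "profile-1"),
    ("site-2", "profile-1"),
    ("site-3", "profile-2"),
    ("site-3", "profile-2"),
]


def totals_per_site(deployments, profiles):
    """Sum the component counts of every framework instance on each site."""
    totals = {}
    for site, profile in deployments:
        totals.setdefault(site, Counter()).update(profiles[profile])
    return totals


totals = totals_per_site(FRAMEWORK_DEPLOYMENTS, PROFILES)
for site in sorted(totals):
    print(site, dict(totals[site]))
```

Because the framework is brought up twice on Site 3, the site ends up hosting twice the component counts of profile 2, which is worth comparing against the resources available there.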

Contributing Engineers

• Intel
  - Dileep Ranganathan, Rajamohan and Sri Vahini
  - Srinivasa Addepalli (Architecture)
• VMware
  - Ethan Lynn or TBD
  - Ramki Krishnan (* - Architecture)

BACKUP

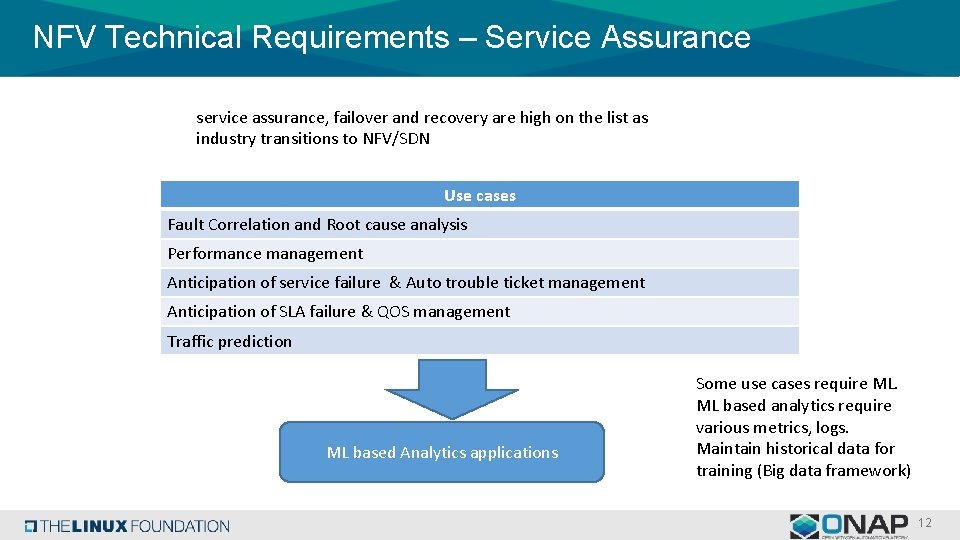

NFV Technical Requirements – Service Assurance

Service assurance, failover and recovery are high on the list as the industry transitions to NFV/SDN.

Use cases
• Fault correlation and root cause analysis
• Performance management
• Anticipation of service failure & auto trouble ticket management
• Anticipation of SLA failure & QoS management
• Traffic prediction

ML-based analytics applications
• Some use cases require ML.
• ML-based analytics require various metrics and logs.
• Maintain historical data for training (big data framework).
