CS 160 Lecture 23 Professor John Canny Spring

- Slides: 25

CS 160: Lecture 23 Professor John Canny Spring 2003 10/30/2021 1

Preamble
- Handout for next lecture.
- Quiz on Help systems.

Multimodal Interfaces
- Multi-modal refers to interfaces that support non-GUI interaction.
- Speech and pen input are two common examples, and they are complementary.

Speech+pen Interfaces
- Speech is the preferred medium for subject, verb, object expression.
- Writing or gesture provides locative information (pointing etc.).

Speech+pen Interfaces
- Speech+pen for visual-spatial tasks (compared to speech only):
  - 10% faster.
  - 36% fewer task-critical errors.
  - Shorter and simpler linguistic constructions.
  - 90-100% user preference to interact this way.

Put-That-There
- User points at an object and says “put that” (grab), then points to the destination and says “there” (drop).
  - Very good for deictic actions (speak and point), but these are only 20% of actions. For the rest, complex gestures are needed.
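
The grab/drop interaction on this slide can be sketched as a tiny state machine. This is only an illustration under stated assumptions: the `Scene` class, its method names, and the nearest-object rule for resolving "that" are hypothetical and are not taken from the original Put-That-There system.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class Scene:
    """Hypothetical scene: object names mapped to 2D positions."""
    objects: Dict[str, Point]
    held: Optional[str] = None

    def nearest_object(self, point: Point) -> str:
        # Assumption: "that" refers to the object closest to the pointing location.
        return min(
            self.objects,
            key=lambda name: (self.objects[name][0] - point[0]) ** 2
            + (self.objects[name][1] - point[1]) ** 2,
        )

    def handle(self, utterance: str, point: Point) -> None:
        if utterance == "put that":
            self.held = self.nearest_object(point)  # grab
        elif utterance == "there" and self.held is not None:
            self.objects[self.held] = point         # drop at the pointed location
            self.held = None


scene = Scene({"circle": (1.0, 1.0), "square": (5.0, 5.0)})
scene.handle("put that", (1.2, 0.9))   # user points near the circle
scene.handle("there", (8.0, 2.0))      # user points at the destination
```

Speech supplies the verb, and the pointing location supplies both the object and the destination, which is why neither mode alone is enough for this command.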

Multimodal advantages
- Advantages for error recovery:
  - Users intuitively pick the mode that is less error-prone.
  - Language is often simplified.
  - Users intuitively switch modes after an error, so the same problem is not repeated.

Multimodal advantages
- Other situations where mode choice helps:
  - Users with a disability.
  - People with a strong accent or a cold.
  - People with RSI.
  - Young children or non-literate users.

Multimodal advantages
- For collaborative work, multimodal interfaces can communicate a lot more than text:
  - Speech contains prosodic information.
  - Gesture communicates emotion.
  - Writing has several expressive dimensions.

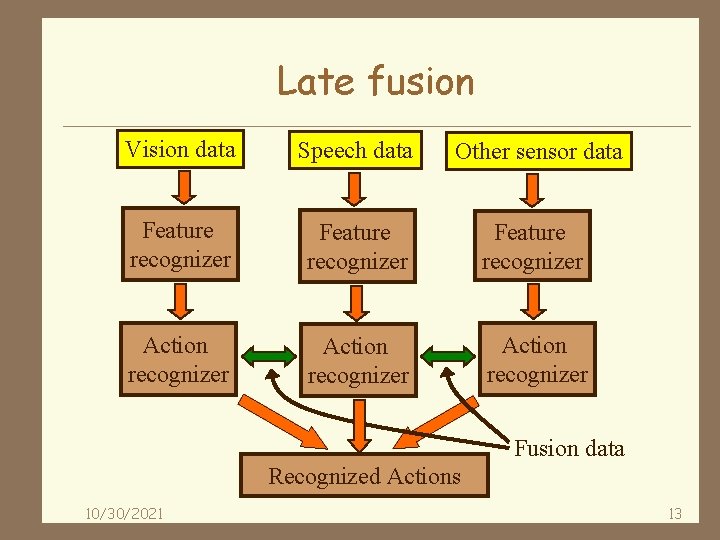

Multimodal challenges
- Using multimodal input generally requires advanced recognition methods:
  - For each mode.
  - For combining redundant information.
  - For combining non-redundant information: “open this file (pointing)”.
- Information is combined at two levels:
  - Feature level (early fusion).
  - Semantic level (late fusion).
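
Combining redundant information can be illustrated with two recognizers that score the same set of labels, for example speech and lip reading both guessing the spoken word. The sketch below multiplies the two distributions and renormalizes. It assumes the recognizers are conditionally independent and that the prior over labels is uniform. The function name and the example numbers are hypothetical.

```python
from typing import Dict


def combine_redundant(p_a: Dict[str, float], p_b: Dict[str, float]) -> Dict[str, float]:
    """Fuse two distributions over the same labels by product and renormalization."""
    joint = {label: p_a[label] * p_b[label] for label in p_a if label in p_b}
    total = sum(joint.values())
    if total == 0:
        raise ValueError("the two recognizers share no plausible label")
    return {label: value / total for label, value in joint.items()}


# Speech alone cannot decide; the second mode breaks the tie.
speech = {"delete": 0.5, "repeat": 0.5}
lips = {"delete": 0.8, "repeat": 0.2}
fused = combine_redundant(speech, lips)
```

Non-redundant information, such as the pointing target in “open this file”, cannot be combined this way because the two modes describe different parts of the command.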

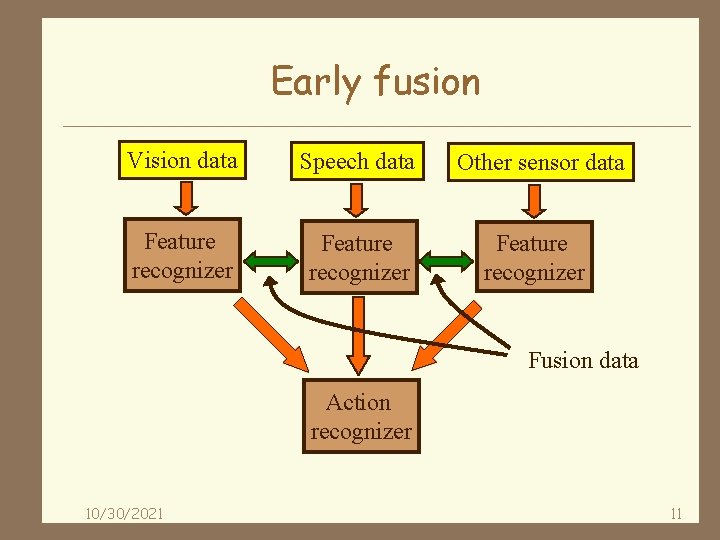

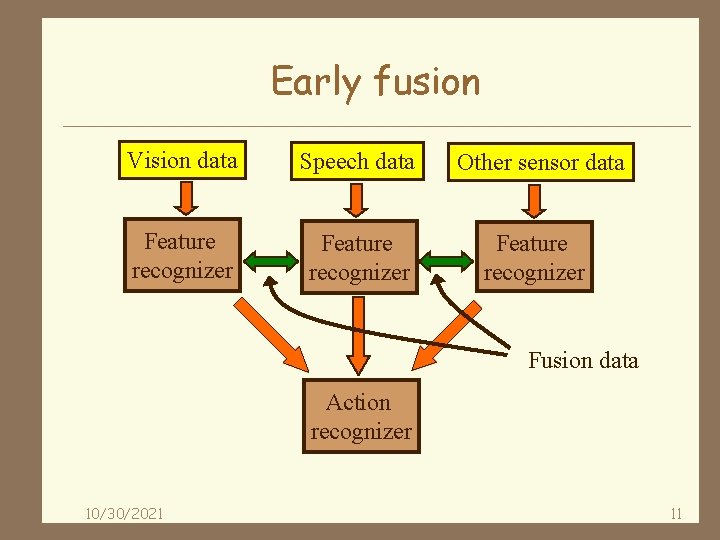

Early fusion
[Diagram labels: Vision data, Speech data, Other sensor data, Feature recognizer, Fusion data, Action recognizer]

Early fusion
- Early fusion applies to combinations like speech+lip movement. It is difficult because:
  - MM training data are needed.
  - Data need to be closely synchronized.
  - Computational and training costs are high.
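
A minimal sketch of feature-level fusion, showing why synchronization matters: each audio frame is paired with the lip frame closest in time, and the two feature vectors are concatenated into one vector for a single downstream recognizer. The function name, the frame format `(timestamp, features)`, and the `max_skew` tolerance are assumptions made for illustration.

```python
from typing import List, Tuple

Frame = Tuple[float, List[float]]  # (timestamp in seconds, feature vector)


def early_fuse(audio_frames: List[Frame], lip_frames: List[Frame],
               max_skew: float = 0.02) -> List[Frame]:
    """Concatenate audio and lip features for frames that are close enough in time."""
    fused = []
    for t_audio, feat_audio in audio_frames:
        # Find the lip frame nearest in time to this audio frame.
        t_lip, feat_lip = min(lip_frames, key=lambda frame: abs(frame[0] - t_audio))
        if abs(t_lip - t_audio) <= max_skew:
            fused.append((t_audio, feat_audio + feat_lip))
        # Frames without a close partner are dropped: poorly synchronized data is lost.
    return fused


audio = [(0.00, [0.1, 0.2]), (0.01, [0.3, 0.4]), (0.05, [0.5, 0.6])]
lips = [(0.00, [1.0]), (0.012, [2.0])]
fused_frames = early_fuse(audio, lips)
```

The fused vectors would then be fed to one recognizer trained on joint data, which is where the need for multimodal training data comes from.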

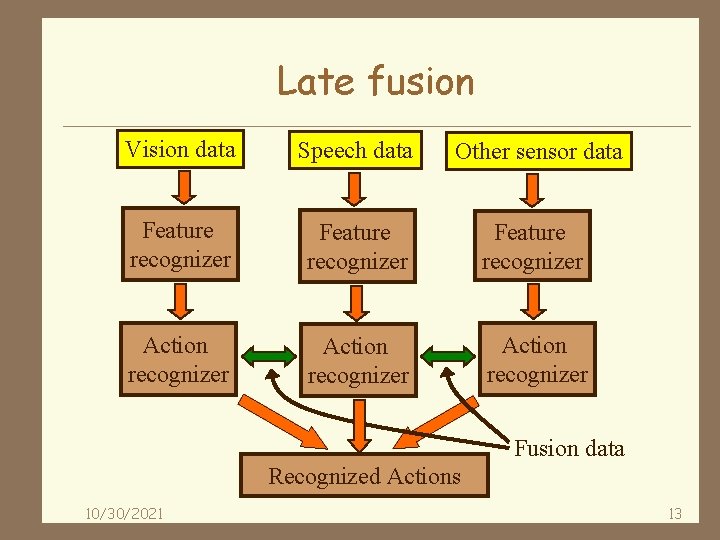

Late fusion
[Diagram labels: Vision data, Speech data, Other sensor data, Feature recognizer, Action recognizer, Fusion data, Recognized Actions]

Late fusion
- Late fusion is appropriate for combinations of complementary information, like pen+speech.
  - Recognizers are trained and used separately.
  - Unimodal recognizers are available off-the-shelf.
  - It's still important to accurately time-stamp all inputs: typical delays are known between, e.g., gesture and speech.
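
Semantic-level fusion can be sketched as follows: each unimodal recognizer returns its own scored, time-stamped hypotheses, and a separate fusion step pairs hypotheses that fall within an allowed time lag. The `Hypothesis` class, the `max_lag` value, and the choice to multiply scores (which treats the recognizers as independent) are assumptions for illustration, not a description of any particular system.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Hypothesis:
    value: str    # recognized command (speech) or target (pen)
    score: float  # recognizer confidence in [0, 1]
    time: float   # timestamp in seconds


def late_fuse(speech: List[Hypothesis], pen: List[Hypothesis],
              max_lag: float = 1.5) -> Optional[Tuple[str, str, float]]:
    """Return the best (command, target, joint score) pair within the time window."""
    best = None
    for s in speech:
        for p in pen:
            if abs(s.time - p.time) > max_lag:
                continue  # too far apart in time to belong to the same command
            joint = s.score * p.score
            if best is None or joint > best[2]:
                best = (s.value, p.value, joint)
    return best


speech_nbest = [Hypothesis("open", 0.6, 10.2), Hypothesis("close", 0.3, 10.2)]
pen_nbest = [Hypothesis("report.txt", 0.9, 10.0), Hypothesis("notes.txt", 0.95, 14.0)]
best = late_fuse(speech_nbest, pen_nbest)
```

The pen hypothesis with the highest score is rejected here because its timestamp is too far from the utterance, which shows why accurate time-stamping still matters in late fusion.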

Contrast between MM and GUIs
- GUI interfaces often restrict input to single non-overlapping events, while MM interfaces handle all inputs at once.
- GUI events are unambiguous; MM inputs are based on recognition and require a probabilistic approach.
- MM interfaces are often distributed on a network.

Agent architectures
- Allow parts of an MM system to be written separately, in the most appropriate language, and integrated easily.
- OAA: Open-Agent Architecture (Cohen et al.) supports MM interfaces.
- Blackboards and message queues are often used to simplify inter-agent communication.
  - Jini, JavaSpaces, TSpaces, JXTA, JMS, MSMQ, ...
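
The blackboard idea can be sketched in a few lines: agents never call each other directly, they only post to and subscribe on a shared board. The `Blackboard` class and the topic names below are hypothetical. This is an in-process toy and does not reproduce the API of OAA or of any of the listed middleware.

```python
from collections import defaultdict


class Blackboard:
    """Toy in-process blackboard: agents post entries and subscribe by topic."""

    def __init__(self):
        self._subscribers = defaultdict(list)
        self.entries = []

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def post(self, topic, payload):
        self.entries.append((topic, payload))
        for callback in list(self._subscribers[topic]):
            callback(payload)


board = Blackboard()
pending = {}   # latest unmatched input per mode
commands = []  # what the application agent receives


def fusion_handler(mode):
    # The fusion agent waits for one speech and one pen input, then posts a command.
    def handler(payload):
        pending[mode] = payload
        if "speech" in pending and "pen" in pending:
            board.post("command", (pending.pop("speech"), pending.pop("pen")))
    return handler


board.subscribe("speech", fusion_handler("speech"))
board.subscribe("pen", fusion_handler("pen"))
board.subscribe("command", commands.append)

board.post("speech", "open this file")  # posted by a speech agent
board.post("pen", "report.txt")         # posted by a pen agent
```

Because agents share only the board, each one could be replaced by a process on another machine or written in another language, which is the integration benefit the slide describes.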

Administrative
- Final project presentations on May 12 and 13.
- Presentations go by group number. Groups 7-12 on Monday, May 12; groups 1-6 on Tuesday, May 13.
- Final reports are due on Wednesday, May 7.

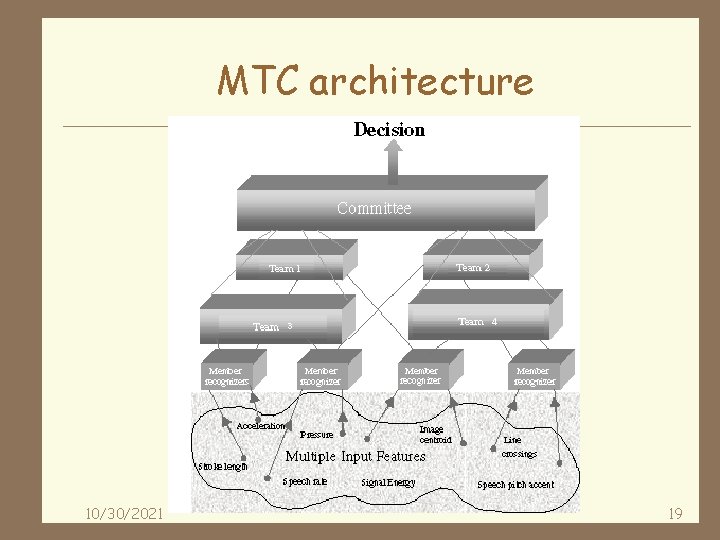

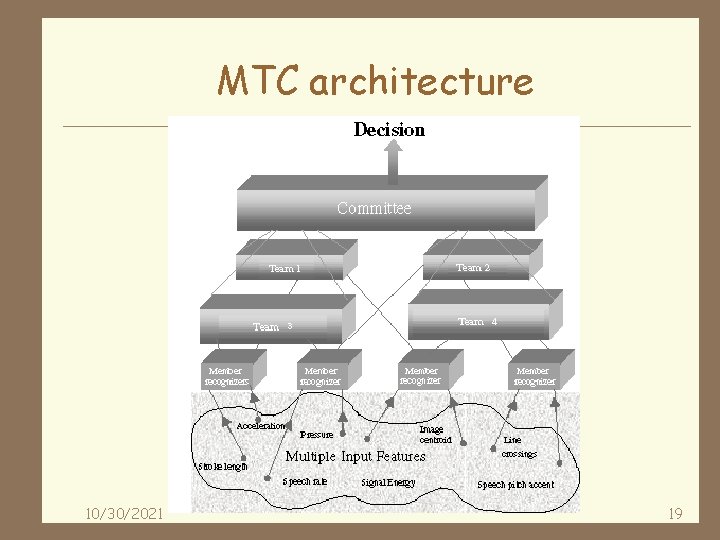

Symbolic/statistical approaches
- Allow symbolic operations like unification (binding of terms like “this”) + probabilistic reasoning (possible interpretations of “this”).
- The MTC system is an example:
  - Members are recognizers.
  - Teams cluster data from recognizers.
  - The committee weights results from various teams.
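
The three-level structure described on this slide can be sketched with weighted averages: members output distributions over interpretations, each team combines a subset of members, and the committee weights the team outputs. This is only a structural illustration. The weights, member names, and the use of simple weighted averaging are assumptions and do not reproduce the actual MTC training or combination procedure.

```python
from typing import Dict, List


def weighted_average(distributions: List[Dict[str, float]],
                     weights: List[float]) -> Dict[str, float]:
    """Weighted average of distributions over interpretation labels."""
    total_weight = sum(weights)
    labels = set().union(*distributions)
    return {
        label: sum(w * d.get(label, 0.0) for d, w in zip(distributions, weights)) / total_weight
        for label in labels
    }


# Members: individual recognizers (hypothetical outputs).
members = {
    "speech": {"pan": 0.6, "zoom": 0.4},
    "lip": {"pan": 0.5, "zoom": 0.5},
    "gesture": {"pan": 0.9, "zoom": 0.1},
}

# Teams: each combines a subset of members with its own weights.
teams = {
    "audio_visual": (["speech", "lip"], [0.7, 0.3]),
    "pointing": (["gesture"], [1.0]),
}
team_outputs = {
    name: weighted_average([members[m] for m in member_names], weights)
    for name, (member_names, weights) in teams.items()
}

# Committee: weights the team results and picks the best interpretation.
committee_weights = {"audio_visual": 0.6, "pointing": 0.4}
final = weighted_average(
    [team_outputs[name] for name in committee_weights],
    list(committee_weights.values()),
)
decision = max(final, key=final.get)
```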

MTC architecture

Probabilistic Toolkits
- The Graphical Models Toolkit (GMTK) from U. Washington (Bilmes and Zweig).
  - Good for speech and time-series data.
- MSBNx Bayes Net toolkit from Microsoft (Kadie et al.).
- UCLA MUSE: middleware for sensor fusion (also using Bayes nets).

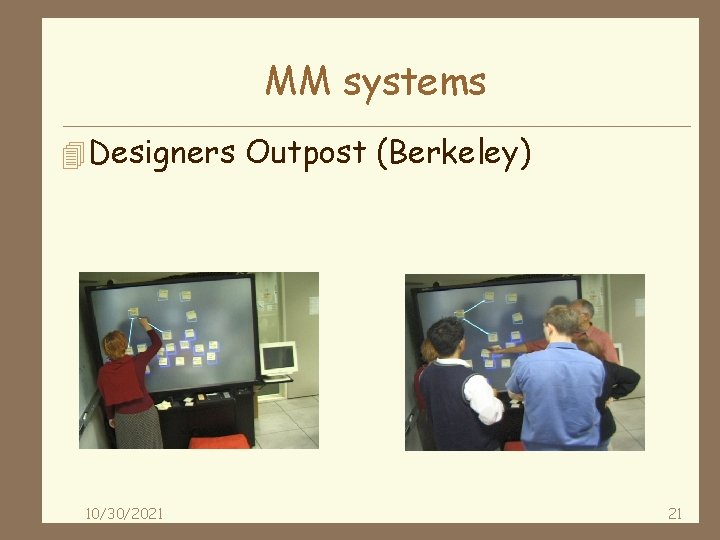

MM systems
- Designers' Outpost (Berkeley)

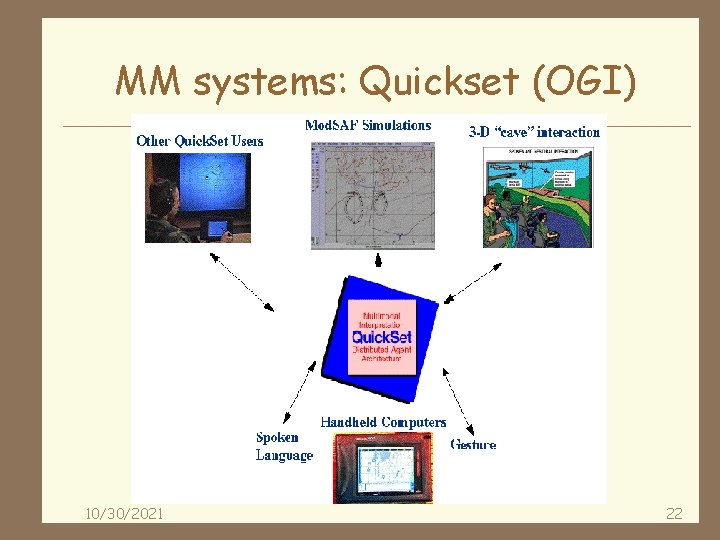

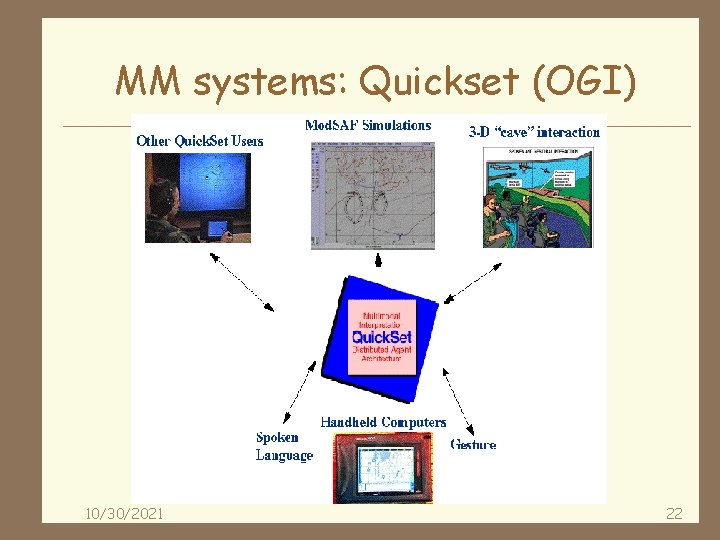

MM systems: Quickset (OGI)

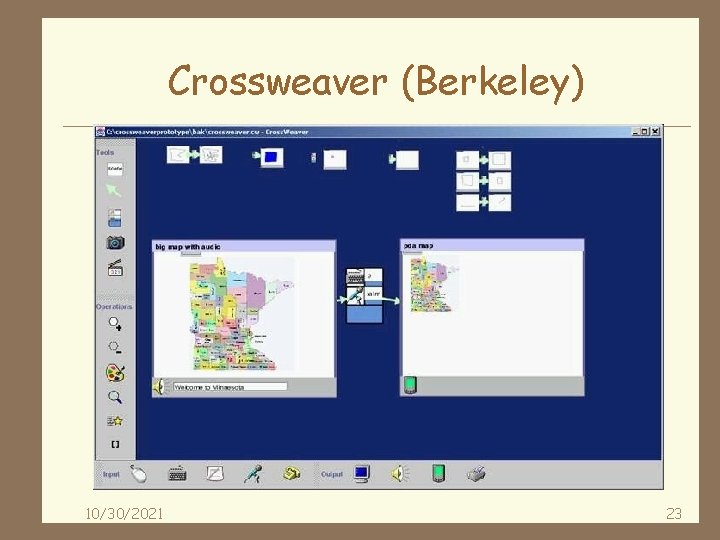

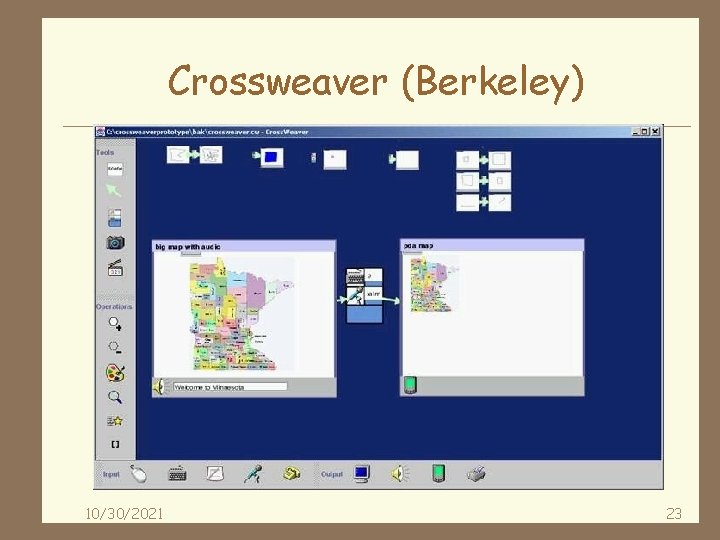

Crossweaver (Berkeley)

Crossweaver (Berkeley)
- Crossweaver is a prototyping system for multi-modal (primarily pen and speech) UIs.
- Also allows cross-platform development (for PDAs, Tablet PCs, desktops).

Summary
- Multi-modal systems provide several advantages.
- Speech and pointing are complementary.
- Challenges for multi-modal.
- Early vs. late fusion.
- MM architectures, fusion approaches.
- Examples of MM systems.