
Combining Bagging and Random Subspaces to Create Better Ensembles

Panče Panov, Sašo Džeroski

Jožef Stefan Institute

Outline
• Motivation
• Overview of randomization methods for constructing ensembles (bagging, random subspace method, random forests)
• Combining bagging and random subspaces
• Experiments and results
• Summary and further work

Motivation
• Random forests are among the best-performing ensemble methods
  – They use random subsamples of the training data
  – They use a randomized base-level algorithm
• Our proposal is to use a similar approach
  – Combine bagging and the random subspace method to achieve a similar effect
  – Advantages:
    • The method is applicable to any base-level algorithm
    • There is no need to randomize the base-level algorithm

Randomization methods for constructing ensembles
• Find a set of base-level algorithms that are diverse in their decisions and complement each other
• Different possibilities
  – bootstrap sampling
  – random subsets of features
  – randomized versions of the base-level algorithms

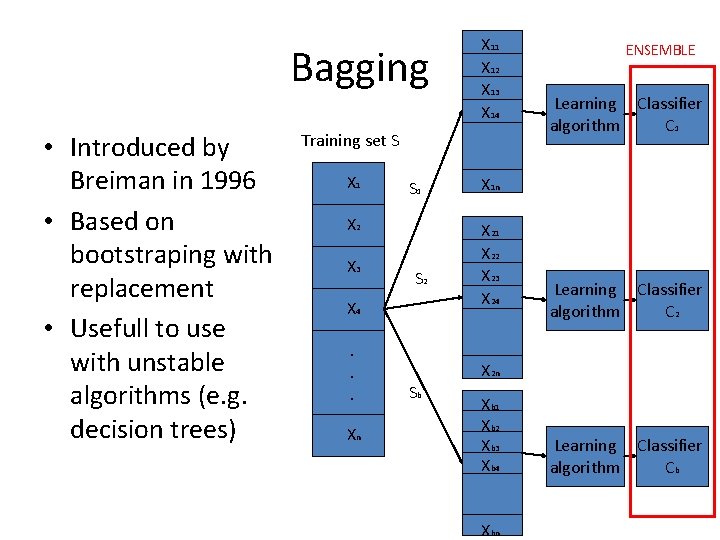

Bagging
• Introduced by Breiman in 1996
• Based on bootstrap sampling (sampling with replacement)
• Useful with unstable algorithms (e.g. decision trees)

[Diagram: bootstrap samples S1, S2, ..., Sb are drawn from the training set S (examples X1, ..., Xn); the learning algorithm is applied to each sample, giving classifiers C1, C2, ..., Cb, which form the ensemble.]
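The bagging scheme can be sketched in a few lines of Python. This is a minimal illustration, not the WEKA implementation used in the experiments: the names `bootstrap_sample`, `bagging`, `predict`, and `majority_class_learner` are hypothetical, the trivial learner is only a stand-in, and combining the classifiers by majority vote is an assumption.

```python
import random
from collections import Counter


def bootstrap_sample(data, rng):
    """Draw len(data) examples uniformly at random, with replacement."""
    n = len(data)
    return [data[rng.randrange(n)] for _ in range(n)]


def majority_vote(labels):
    """Return the most frequent label."""
    return Counter(labels).most_common(1)[0][0]


def majority_class_learner(sample):
    """Hypothetical stand-in learner: always predicts the sample's majority class."""
    label = majority_vote([y for _, y in sample])
    return lambda x: label


def bagging(data, learn, b, seed=0):
    """Train b classifiers, each on its own bootstrap replicate of data.

    `learn` takes a list of (features, label) pairs and returns a
    function that maps a feature vector to a predicted label.
    """
    rng = random.Random(seed)
    return [learn(bootstrap_sample(data, rng)) for _ in range(b)]


def predict(ensemble, x):
    """Combine the classifiers by majority vote (an assumed combination rule)."""
    return majority_vote([clf(x) for clf in ensemble])
```

Because sampling is with replacement, each replicate has the same size as the training set but typically repeats some examples and omits others, which is where the diversity between classifiers comes from.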

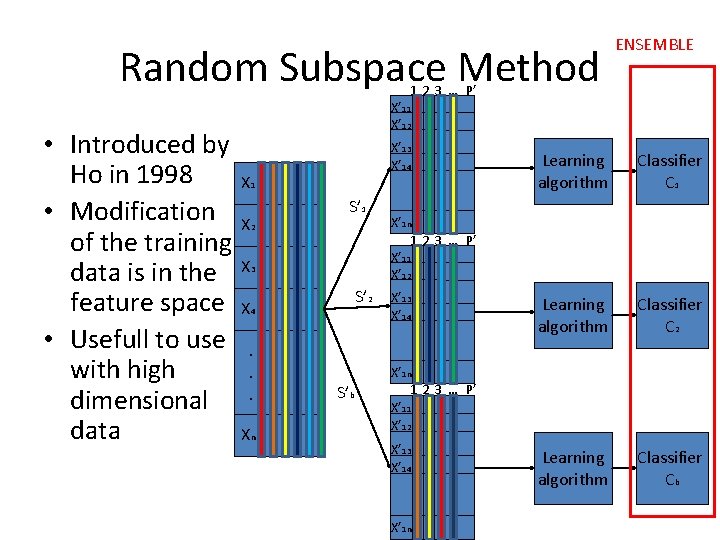

Random Subspace Method
• Introduced by Ho in 1998
• The training data is modified in the feature space
• Useful with high-dimensional data

[Diagram: from the training set S (examples X1, ..., Xn), training sets S'1, S'2, ..., S'b are formed, each keeping only P' randomly selected features; the learning algorithm is applied to each, giving classifiers C1, C2, ..., Cb, which form the ensemble.]
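A minimal Python sketch of the feature-space modification follows. The helper names `random_subspace` and `project` are hypothetical, and examples are assumed to be stored as (feature list, label) pairs.

```python
import random


def random_subspace(n_features, p_prime, rng):
    """Pick p_prime distinct feature indices out of n_features (no replacement)."""
    return sorted(rng.sample(range(n_features), p_prime))


def project(data, feature_idx):
    """Keep only the selected features of every example; labels are unchanged."""
    return [([x[j] for j in feature_idx], y) for x, y in data]
```

Each classifier in the ensemble gets its own index set, and the same indices must be applied to a test example before that classifier is asked for a prediction.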

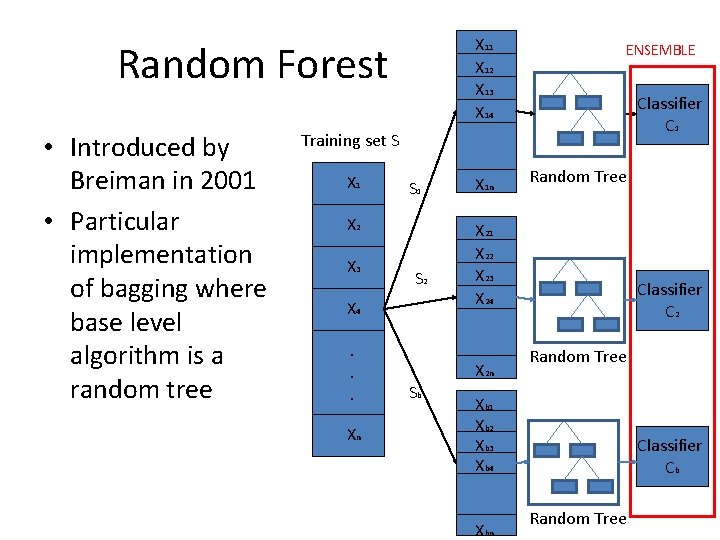

Random Forest
• Introduced by Breiman in 2001
• A particular implementation of bagging in which the base-level algorithm is a random tree

[Diagram: bootstrap samples S1, S2, ..., Sb are drawn from the training set S (examples X1, ..., Xn); a random tree is learned from each sample, giving classifiers C1, C2, ..., Cb, which form the ensemble.]
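The slides do not spell out what makes the tree "random". In Breiman's formulation, each node considers only a random subset of the features when choosing its split. The sketch below illustrates that single step; the function names, the use of Gini impurity, and the threshold-style splits on numeric features are assumptions made for illustration.

```python
import random


def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split_on_random_features(data, k, rng):
    """Choose a split at one tree node, looking at only k randomly chosen features.

    Returns the (feature_index, threshold) pair with the lowest weighted
    Gini impurity, or None if no candidate split separates the data.
    """
    n_features = len(data[0][0])
    candidates = rng.sample(range(n_features), k)
    best, best_score = None, float("inf")
    for j in candidates:
        for threshold in sorted({x[j] for x, _ in data}):
            left = [y for x, y in data if x[j] <= threshold]
            right = [y for x, y in data if x[j] > threshold]
            if not left or not right:
                continue  # a split must send examples to both sides
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(data)
            if score < best_score:
                best, best_score = (j, threshold), score
    return best
```

Here the feature subset is redrawn at every node, so the randomization lives inside the base-level algorithm itself.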

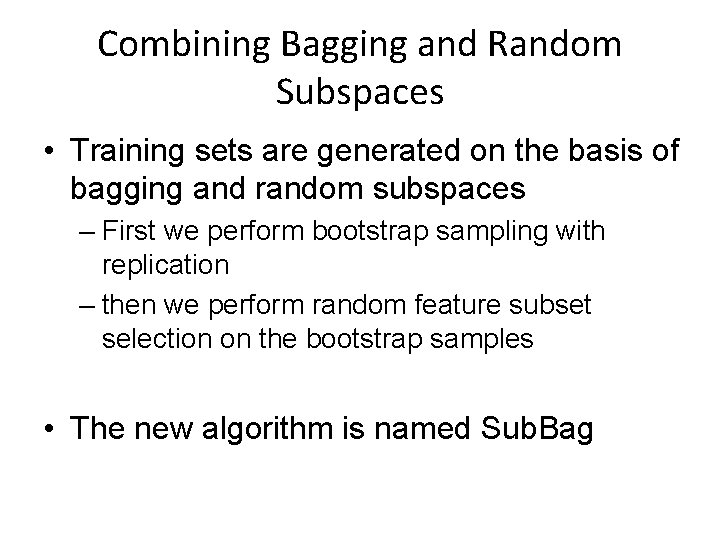

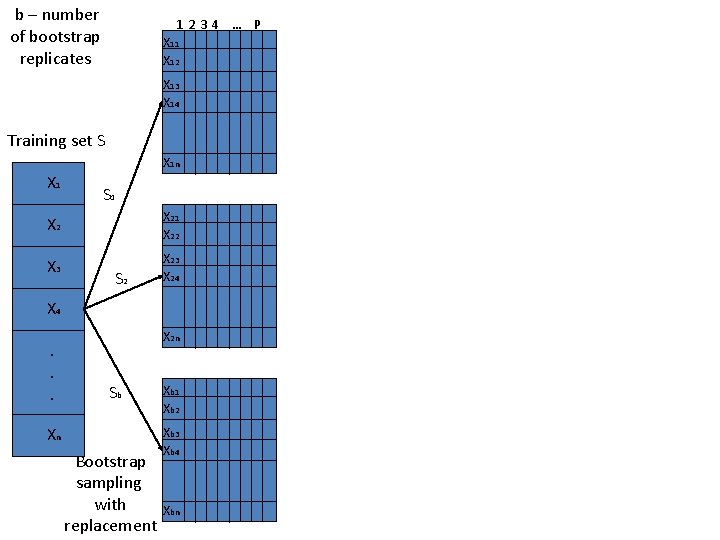

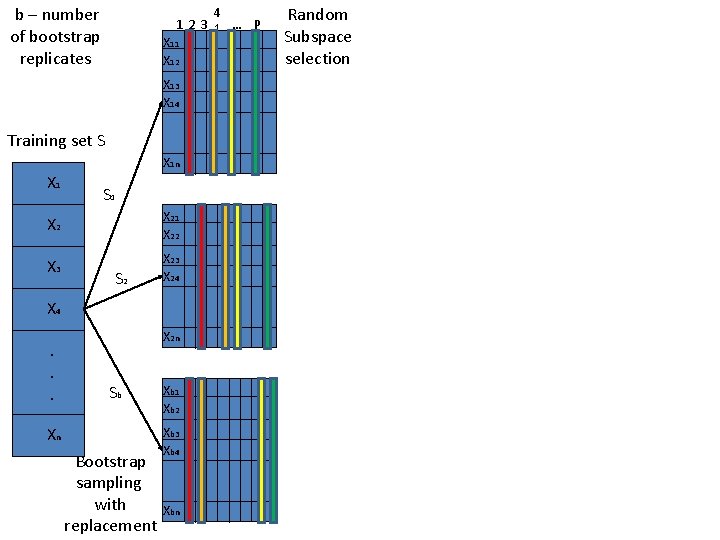

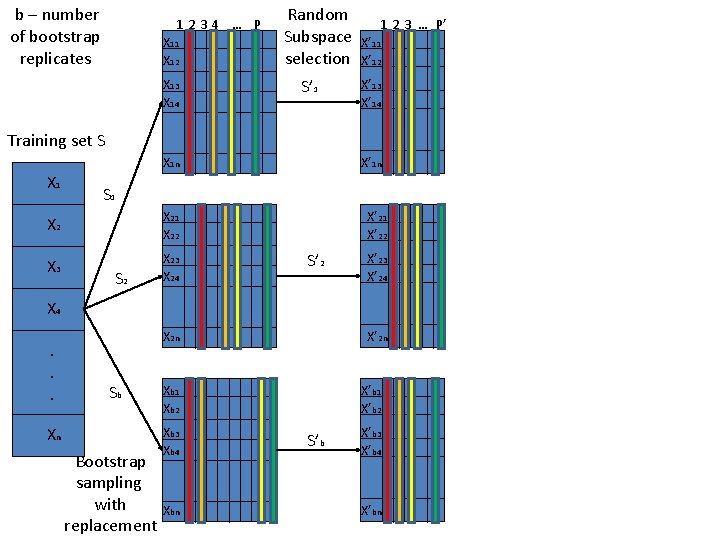

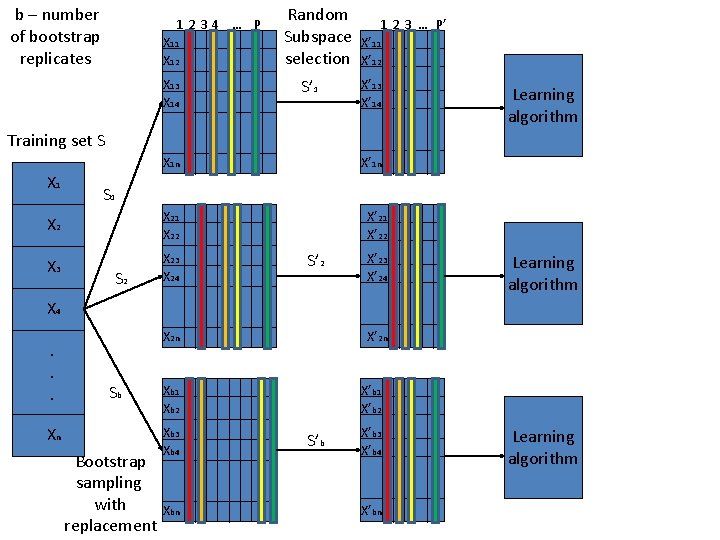

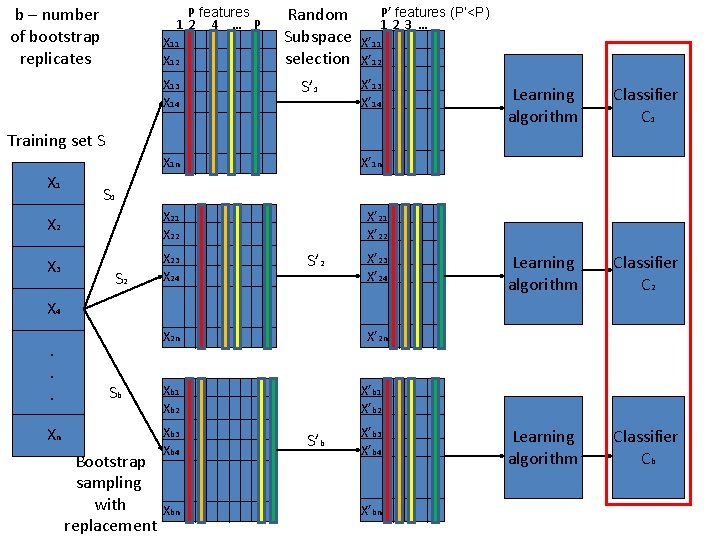

Combining Bagging and Random Subspaces
• Training sets are generated on the basis of bagging and random subspaces
  – First, we perform bootstrap sampling with replacement
  – Then, we perform random feature subset selection on the bootstrap samples
• The new algorithm is named SubBag

[Diagram, built up over several slides: from the training set S (examples X1, ..., Xn, described by P features), b bootstrap replicates S1, S2, ..., Sb are drawn by bootstrap sampling with replacement. Random subspace selection then reduces each replicate to P' features (P' < P), giving S'1, S'2, ..., S'b. The learning algorithm is applied to each S'i, producing classifiers C1, C2, ..., Cb.]
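The two-step construction (bootstrap sampling, then random feature subset selection, then learning) can be sketched as follows. This is an illustrative sketch rather than the authors' WEKA implementation: the function names are hypothetical, `one_nn` is a stand-in base-level learner, and majority voting is an assumed combination rule.

```python
import random
from collections import Counter


def one_nn(sample):
    """Hypothetical stand-in learner: 1-nearest-neighbour with squared Euclidean distance."""
    def classify(x):
        nearest = min(sample, key=lambda ex: sum((a - b) ** 2 for a, b in zip(ex[0], x)))
        return nearest[1]
    return classify


def subbag(data, learn, b, p_prime, seed=0):
    """Build b classifiers, each from a bootstrap replicate restricted to p_prime features."""
    rng = random.Random(seed)
    n = len(data)
    n_features = len(data[0][0])
    ensemble = []
    for _ in range(b):
        # Step 1: bootstrap sampling with replacement.
        replicate = [data[rng.randrange(n)] for _ in range(n)]
        # Step 2: random feature subset selection on the bootstrap sample.
        idx = sorted(rng.sample(range(n_features), p_prime))
        projected = [([x[j] for j in idx], y) for x, y in replicate]
        # Step 3: apply the unmodified base-level learner.
        ensemble.append((idx, learn(projected)))
    return ensemble


def subbag_predict(ensemble, x):
    """Project x onto each classifier's features and take a majority vote (assumed rule)."""
    votes = [clf([x[j] for j in idx]) for idx, clf in ensemble]
    return Counter(votes).most_common(1)[0][0]
```

The base-level learner is passed in as an ordinary function and is never modified, which reflects the advantage claimed for the method: all randomization happens in the construction of the training sets.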

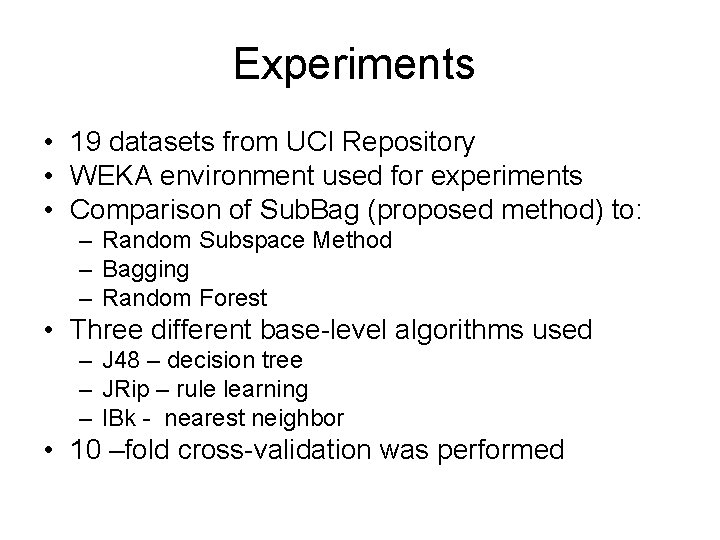

Experiments
• 19 datasets from the UCI Repository
• WEKA environment used for the experiments
• Comparison of SubBag (the proposed method) to:
  – Random Subspace Method
  – Bagging
  – Random Forest
• Three different base-level algorithms used
  – J48 (decision tree)
  – JRip (rule learning)
  – IBk (nearest neighbor)
• 10-fold cross-validation was performed
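For readers unfamiliar with the evaluation protocol, k-fold cross-validation can be sketched as below. The sketch is unstratified for brevity and is not a reproduction of the WEKA setup; the function names and the accuracy measure are assumptions.

```python
import random


def k_fold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and deal them into k folds of near-equal size."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def cross_validate(data, learn, k=10, seed=0):
    """Estimate accuracy: every example is tested once by a model that never saw it."""
    correct = 0
    for test_idx in k_fold_indices(len(data), k, seed):
        held_out = set(test_idx)
        train = [ex for i, ex in enumerate(data) if i not in held_out]
        clf = learn(train)
        correct += sum(clf(data[i][0]) == data[i][1] for i in test_idx)
    return correct / len(data)
```

Any learner with the interface "list of (features, label) pairs in, classifier function out" can be passed as `learn`, including an ensemble builder wrapped to return a single prediction function.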

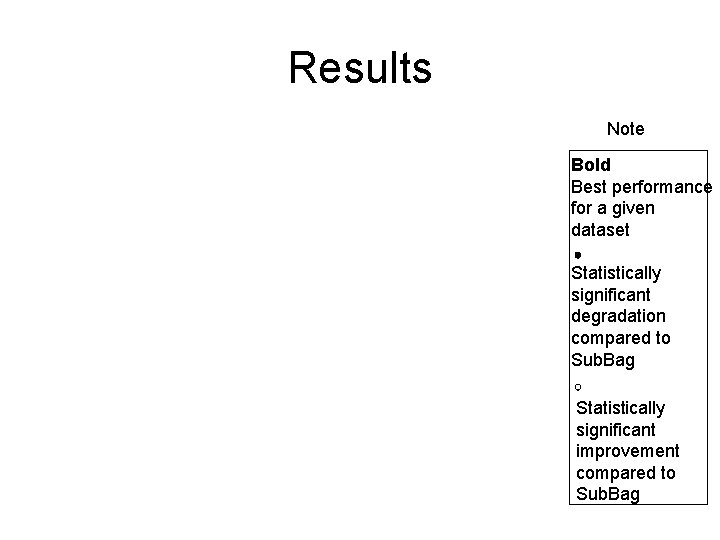

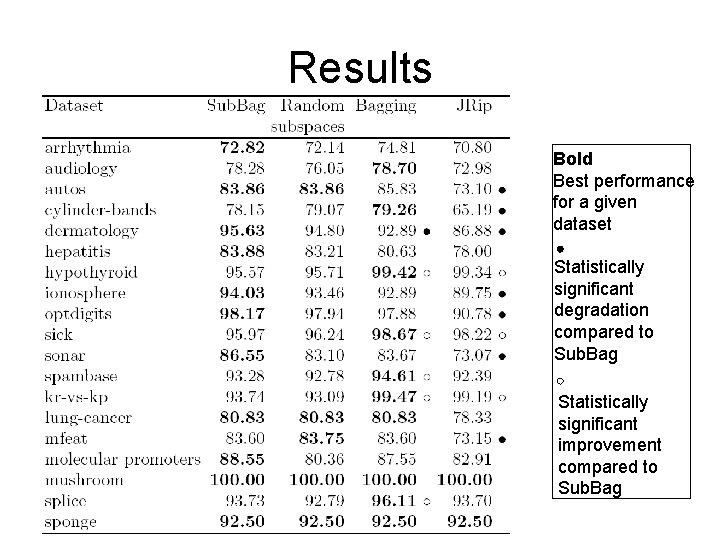

Results
Note:
• Bold: best performance for a given dataset
• Statistically significant degradation compared to SubBag
• Statistically significant improvement compared to SubBag


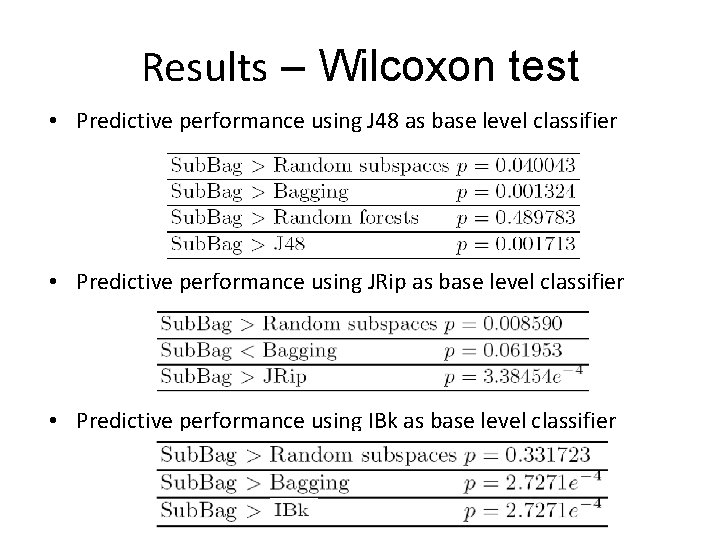

Results – Wilcoxon test
• Predictive performance using J48 as the base-level classifier
• Predictive performance using JRip as the base-level classifier
• Predictive performance using IBk as the base-level classifier
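The slides do not give the details of the test. Assuming it is the Wilcoxon signed-rank test applied to the paired per-dataset scores of two methods, the rank sums can be computed as in this sketch. The function name is hypothetical and no p-value is computed; in practice a statistics package would be used for that.

```python
def wilcoxon_signed_rank(a, b):
    """Return (W_plus, W_minus), the rank sums of positive and negative differences.

    a and b are paired scores (one pair per dataset). Zero differences are
    dropped, and tied absolute differences share their average rank.
    """
    diffs = [x - y for x, y in zip(a, b) if x != y]
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        # Extend j over the run of equal absolute differences starting at i.
        j = i
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus
```

A small value of min(W_plus, W_minus) relative to the number of non-zero differences indicates that one method is consistently ahead of the other across datasets.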

Summary
• With J48 as the base-level algorithm, SubBag is comparable to Random Forests and better than Bagging and Random Subspaces
• With JRip, SubBag is comparable to Bagging and better than Random Subspaces
• With IBk, SubBag is better than Bagging and Random Subspaces

Further work
• Investigate the diversity of the ensemble and compare it with other methods
• Use different combinations of bagging and random subspaces (e.g. bags of RSM ensembles and RSM ensembles of bags)
• Compare bagged ensembles of randomized algorithms (e.g. rules)
