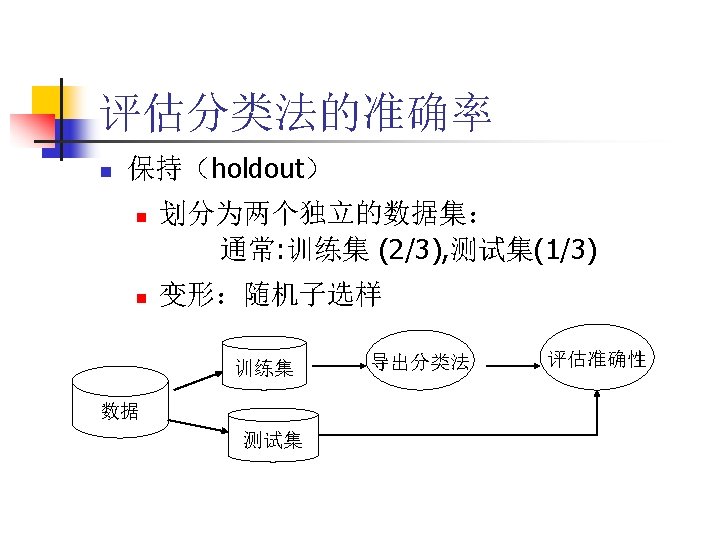


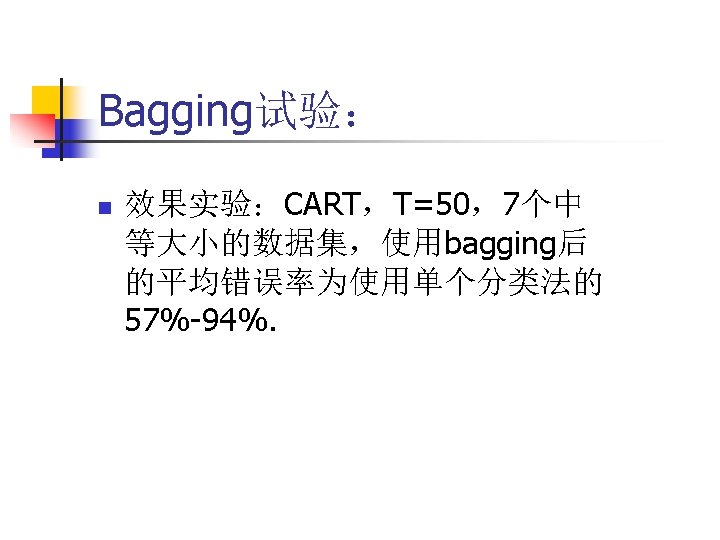

Bagging & Boosting

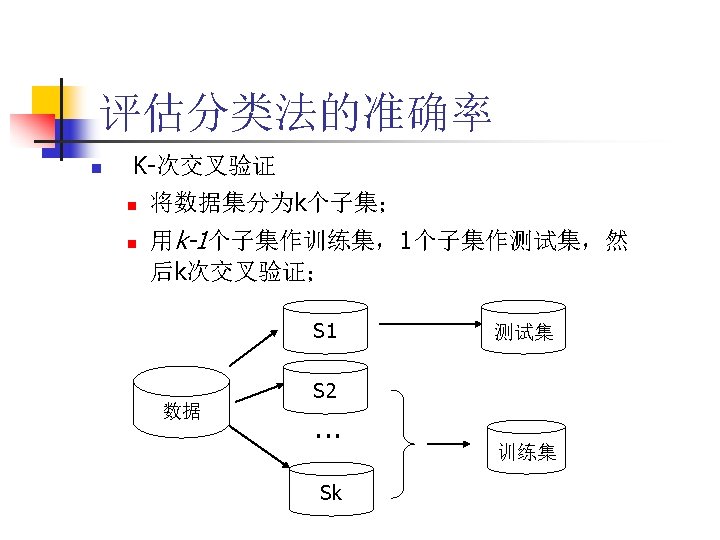

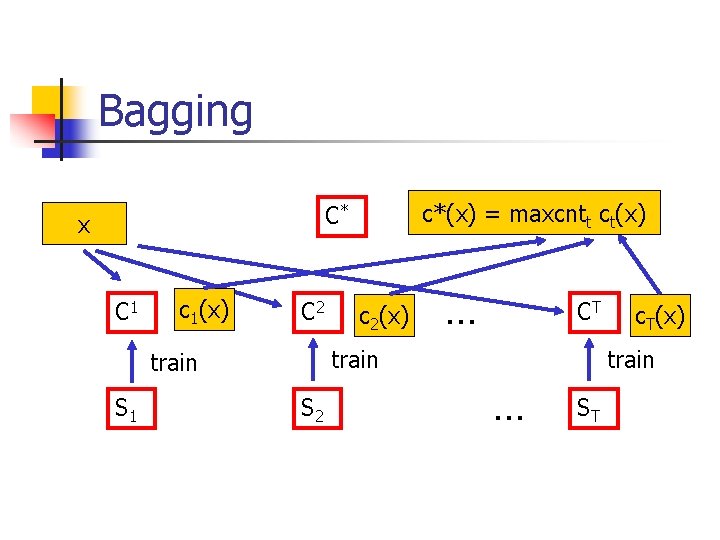

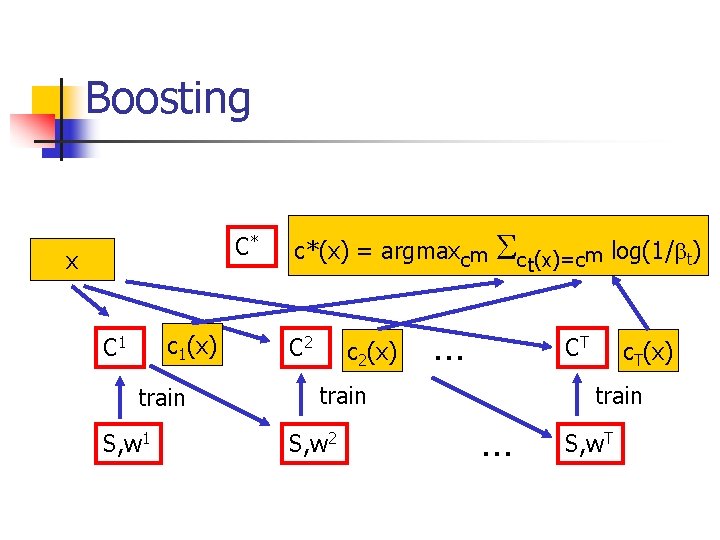

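Bagging
- From the training set S, form the samples S_1, S_2, …, S_T.
- Train a classifier C_t on each sample S_t.
- For an instance x, each C_t outputs c_t(x). The combined classifier C* returns the class predicted most often: $c^*(x) = \operatorname{maxcnt}_t\, c_t(x)$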
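The voting scheme on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the slides' own code: the names `bootstrap_sample`, `train_stump`, `bagging` and `vote` are hypothetical, the samples S_t are assumed to be bootstrap samples (drawn with replacement, as in Breiman's bagging), and the base learner is a toy one-dimensional threshold rule chosen only to keep the example short.

```python
import random
from collections import Counter

def bootstrap_sample(data, rng):
    """Draw len(data) items from data with replacement (one sample S_t)."""
    return [rng.choice(data) for _ in data]

def train_stump(sample):
    """Toy base learner (an assumption): a threshold on a 1-D feature.

    Tries every feature value as a threshold, in both orientations,
    and keeps the rule with the fewest errors on the sample.
    """
    best = None
    for thr, _ in sample:
        for left, right in ((0, 1), (1, 0)):
            errors = sum((left if x <= thr else right) != y for x, y in sample)
            if best is None or errors < best[0]:
                best = (errors, thr, left, right)
    _, thr, left, right = best
    return lambda x: left if x <= thr else right

def bagging(data, T, seed=0):
    """Train T classifiers C_1..C_T, one per bootstrap sample."""
    rng = random.Random(seed)
    return [train_stump(bootstrap_sample(data, rng)) for _ in range(T)]

def vote(classifiers, x):
    """c*(x): the label predicted by the largest number of classifiers."""
    counts = Counter(c(x) for c in classifiers)
    return counts.most_common(1)[0][0]

# Illustrative data: (feature, label) pairs.
data = [(0.1, 0), (0.2, 0), (0.3, 0), (0.7, 1), (0.8, 1), (0.9, 1)]
classifiers = bagging(data, T=25)
```

Using an odd T avoids ties in the two-class case; with more classes a tie-breaking rule would have to be chosen.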

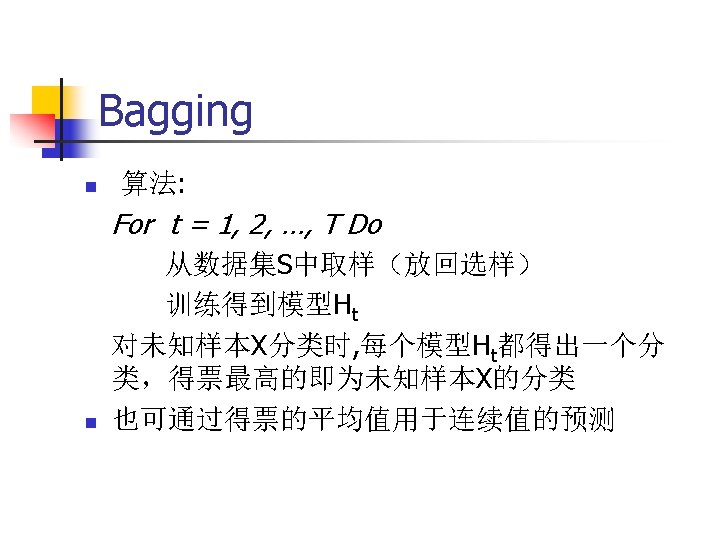

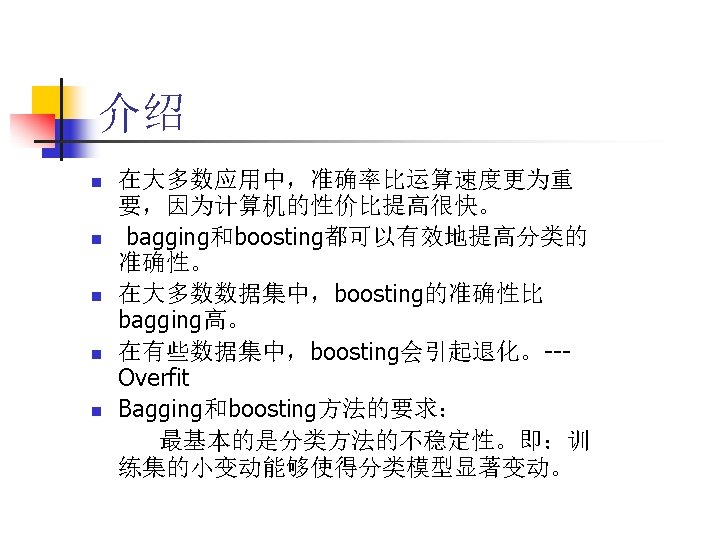

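Bagging
- Bagging requires an "unstable" classification method, for example decision trees or neural network algorithms.
- Unstable: a small change in the dataset can cause a significant change in the classification results.
- "The vital element is the instability of the prediction method. If perturbing the learning set can cause significant changes in the predictor constructed, then bagging can improve accuracy." (Breiman 1996)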
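One rough way to make "instability" concrete is to train the same learner on a dataset and on a slightly perturbed copy, then measure how often the two predictors disagree. The sketch below is only an illustration under stated assumptions: `fit_threshold` is a hypothetical toy learner, `disagreement` is a hypothetical helper, and the data are invented for the example.

```python
def fit_threshold(sample):
    """Toy 1-D learner (an assumption): predict 1 above the midpoint
    between the largest class-0 value and the smallest class-1 value."""
    hi0 = max(x for x, y in sample if y == 0)
    lo1 = min(x for x, y in sample if y == 1)
    thr = (hi0 + lo1) / 2
    return lambda x: int(x > thr)

def disagreement(c_a, c_b, queries):
    """Fraction of query points on which the two predictors differ."""
    return sum(c_a(x) != c_b(x) for x in queries) / len(queries)

# Invented data: (feature, label) pairs.
data = [(0.10, 0), (0.20, 0), (0.45, 0), (0.55, 1), (0.80, 1), (0.90, 1)]
# Perturb the learning set by dropping a single instance.
perturbed = [p for p in data if p != (0.45, 0)]

queries = [i / 100 for i in range(100)]
full = fit_threshold(data)
part = fit_threshold(perturbed)
rate = disagreement(full, part, queries)
```

A larger `rate` for the same size of perturbation indicates a less stable learner, which is the situation in which the Breiman quote expects bagging to help.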

Boosting background
- The original boosting algorithm: Schapire 1989
- The AdaBoost algorithm: Freund and Schapire 1995

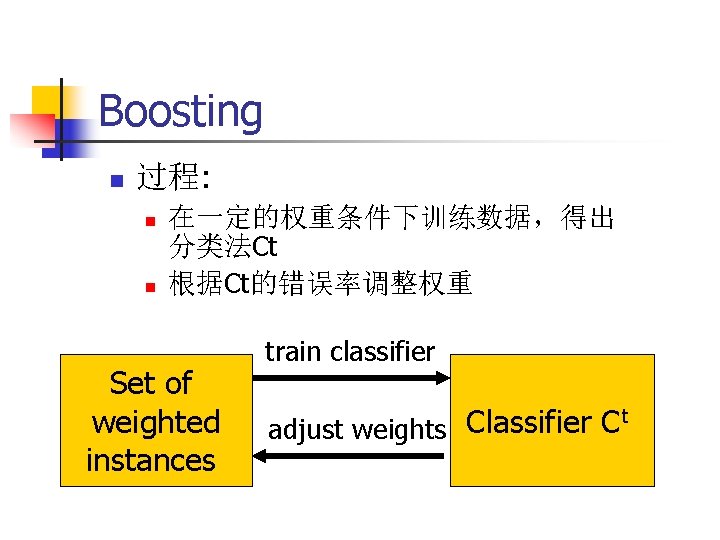

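Boosting
- Process:
  - Train on the data under a given set of weights to obtain the classifier C_t.
  - Adjust the weights according to the error rate of C_t.
- Loop: set of weighted instances → train classifier → classifier C_t → adjust weights → set of weighted instances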
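The slide only says that the weights are adjusted according to the error rate of C_t. As one concrete instance, the sketch below uses the AdaBoost.M1 rule of Freund and Schapire: with weighted error ε_t and β_t = ε_t / (1 − ε_t), the weights of correctly classified instances are multiplied by β_t and all weights are then renormalized. The function name `reweight` and its argument layout are hypothetical.

```python
def reweight(weights, correct):
    """One AdaBoost.M1-style weight adjustment (a sketch).

    weights: current instance weights, assumed to sum to 1.
    correct: correct[i] is True if C_t classified instance i correctly.
    Returns (new_weights, beta).
    """
    # Weighted error rate of C_t: total weight of misclassified instances.
    error = sum(w for w, ok in zip(weights, correct) if not ok)
    if not 0 < error < 0.5:
        # AdaBoost.M1 stops boosting in this case; here we just signal it.
        raise ValueError("weighted error must lie strictly between 0 and 0.5")
    beta = error / (1 - error)
    # Shrink the weights of correctly classified instances, keep the rest.
    scaled = [w * beta if ok else w for w, ok in zip(weights, correct)]
    total = sum(scaled)
    return [w / total for w in scaled], beta

new_weights, beta = reweight([0.25, 0.25, 0.25, 0.25], [True, True, True, False])
```

After this update the misclassified instances carry half of the total weight, so the next classifier is pushed toward the examples the current one got wrong.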

Boosting
- AdaBoost.M1
- AdaBoost.M2
- …

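Boosting
- Train C_1 on (S, w_1), C_2 on (S, w_2), …, C_T on (S, w_T): the same training set S with a different weight vector w_t in each round.
- For an instance x, each C_t outputs c_t(x). The combined classifier C* takes a weighted vote: $c^*(x) = \arg\max_{c_m} \sum_{t:\, c_t(x) = c_m} \log(1/\beta_t)$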
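The weighted vote on this slide can be written directly from the formula: each classifier adds log(1/β_t) to the score of the class it predicts, and the class with the highest score wins. In this sketch the function name `weighted_vote` and the example labels and β values are made up for illustration.

```python
import math
from collections import defaultdict

def weighted_vote(predictions, betas):
    """Return argmax over classes of the summed log(1/beta_t) votes.

    predictions: predictions[t] is c_t(x), the label chosen by C_t.
    betas: betas[t] is beta_t, with 0 < beta_t < 1 for a useful classifier.
    """
    scores = defaultdict(float)
    for label, beta in zip(predictions, betas):
        scores[label] += math.log(1 / beta)
    return max(scores, key=scores.get)

# One accurate classifier (small beta) can outvote two weak ones.
winner = weighted_vote(["a", "b", "b"], [0.05, 0.5, 0.5])
```

When all β_t are equal the rule reduces to the plain majority vote used in bagging.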

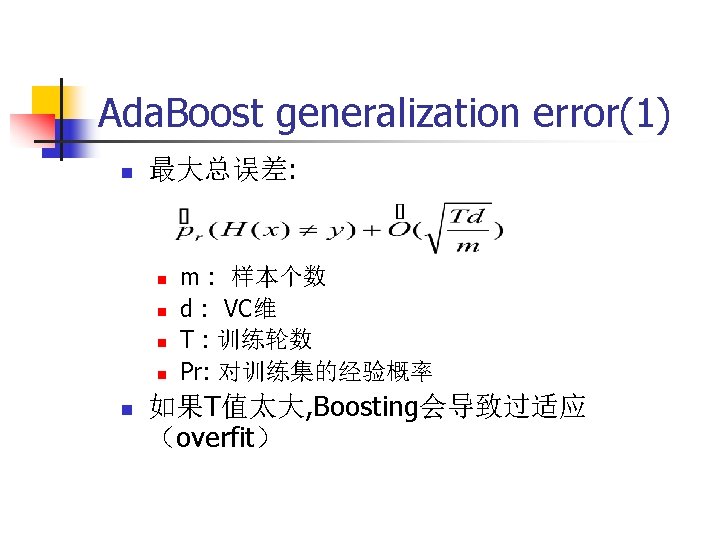

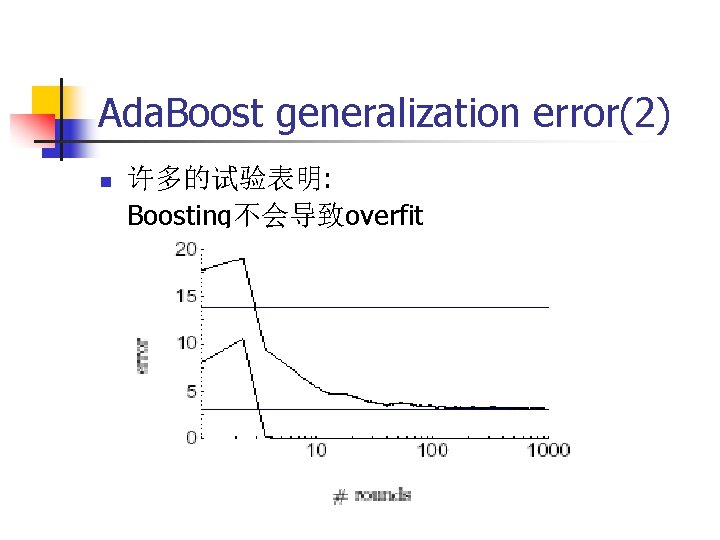

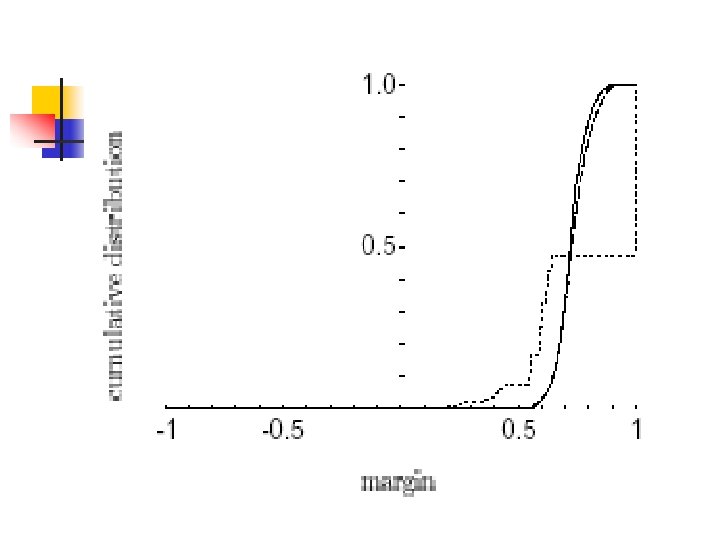

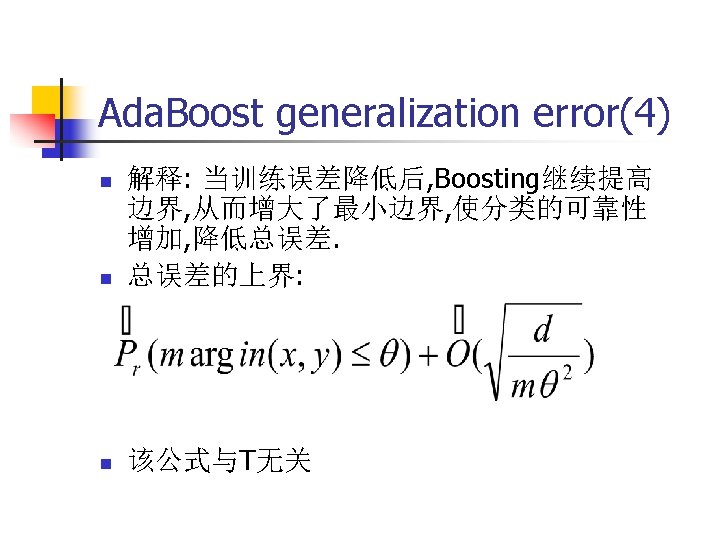

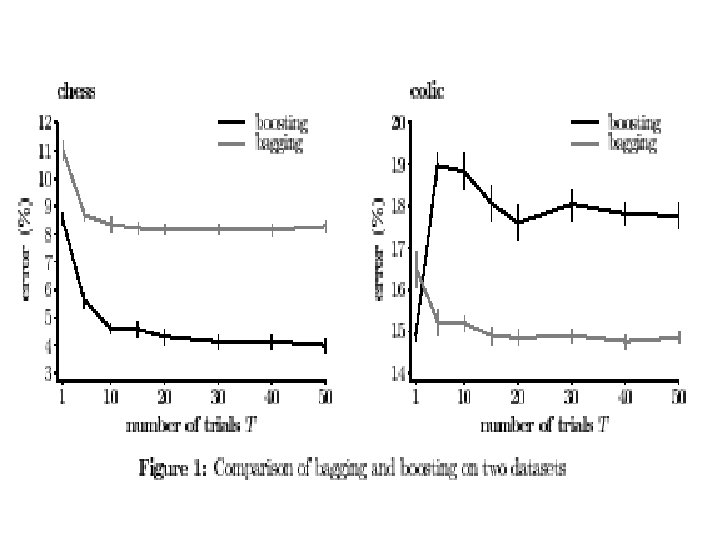

AdaBoost generalization error (2)
- Many experiments indicate that boosting does not lead to overfitting.

Bagging, Boosting, and C4.5 (J. R. Quinlan)

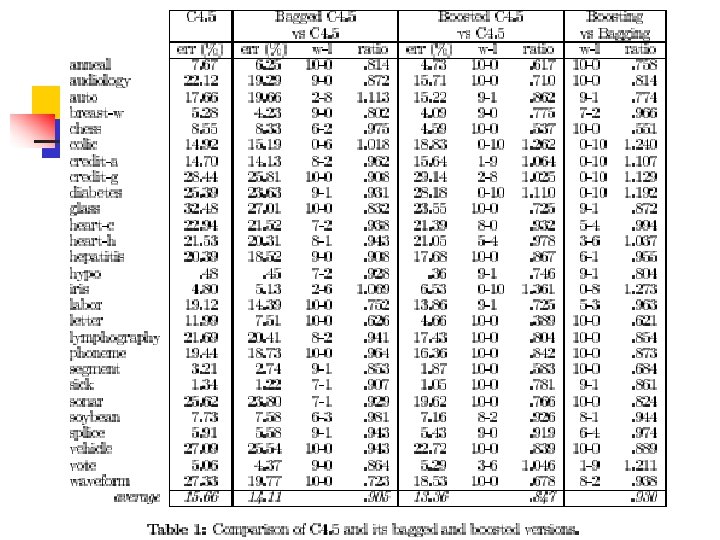

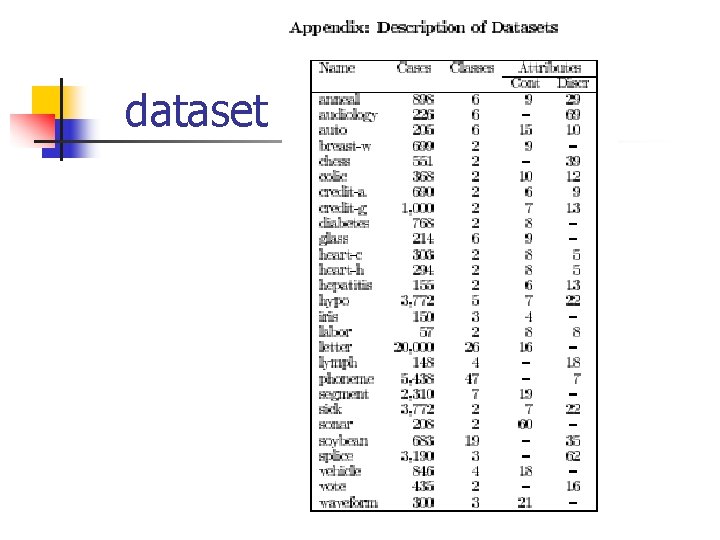

dataset

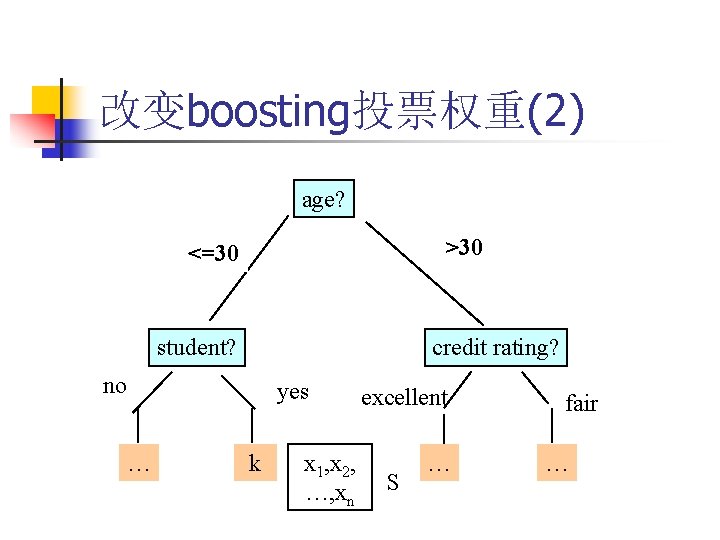

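Changing the boosting voting weights (2)

Figure: decision tree with root test age? (branches >30 and <=30) and inner tests student? (no, …, yes) and credit rating? (excellent, …, fair). The figure also carries the labels k, x_1, x_2, …, x_n and S.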
