Classifying Human Dynamics Without Contact Forces
Alessandro Bissacco, Stefano Soatto (CVPR 2006)
Alvina Goh
Reading group: 08/03/06

Motivation
• Use dynamics as a perceptual cue for human motion recognition.
• View humans as dynamical systems.
• Detection and recognition in the space of dynamical models remain unexplored.
• Deciding how far apart two models are, and learning a distribution in model space, remain open issues.

Aim of Paper
• Model the dynamics of human gaits with hybrid linear models.
• However, inference of the state and model parameters for a switching linear model is NP-complete.
• No optimal algorithm of reasonable complexity exists for the desired model orders.
  – Concentrate on autoregressive models: the optimal estimator for each mode is closed-form.
• Previous work
  – Algebraic approaches to filtering and identification do not provide probabilistic information on the estimates.
  – Distances are defined on deterministic unknown parameters rather than on a distribution over them. Extending such distances to distributions does not yield desirable results.

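Modeling human dynamics for classification (AR): Autoregressive Models

Gaussian LTI autoregressive model of order $n$:

$$y_t = \sum_{i=1}^{n} A_i\, y_{t-i} + e_t, \qquad y_t \in \mathbb{R}^p, \quad e_t \sim \mathcal{N}(0, R)$$

Rewrite this in normal form:

$$y_t = \varphi_t\, \theta + e_t$$

$$\varphi_t = \begin{bmatrix} y_{t-1}^\top \otimes I_p & y_{t-2}^\top \otimes I_p & \cdots & y_{t-n}^\top \otimes I_p \end{bmatrix}$$

$$\theta = \begin{bmatrix} \theta_1^\top & \theta_2^\top & \cdots & \theta_p^\top \end{bmatrix}^\top$$

$$\theta_i = \begin{bmatrix} A_1(i,1) & \cdots & A_1(i,p) & \cdots & A_n(i,1) & \cdots & A_n(i,p) \end{bmatrix}^\top$$

where $\otimes$ is the Kronecker product, $^\top$ is the transpose and $I_p$ is the identity matrix of dimension $p$.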
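
As a quick sanity check of the normal form, the sketch below builds the regressor from Kronecker blocks and compares the product with the direct AR sum. The helper names `regressor` and `stack_params` are hypothetical, and the ordering of the parameter vector (columns of each $A_i$ stacked in turn) is an assumption chosen here so that the product matches this block layout; the slide's own ordering of the parameter vector may differ.

```python
import numpy as np

def regressor(past, p):
    """phi_t = [y_{t-1}^T (x) I_p, ..., y_{t-n}^T (x) I_p], shape (p, n*p*p)."""
    return np.hstack([np.kron(y.reshape(1, p), np.eye(p)) for y in past])

def stack_params(A_list):
    """theta = [vec(A_1); ...; vec(A_n)], column-major vec (assumed ordering)."""
    return np.concatenate([A.flatten(order="F") for A in A_list])

rng = np.random.default_rng(0)
p, n = 2, 3
A_list = [0.1 * rng.standard_normal((p, p)) for _ in range(n)]
past = [rng.standard_normal(p) for _ in range(n)]  # y_{t-1}, ..., y_{t-n}

phi = regressor(past, p)
theta = stack_params(A_list)
direct = sum(A @ y for A, y in zip(A_list, past))  # sum_i A_i y_{t-i}

print(np.allclose(phi @ theta, direct))
```

The point of the normal form is that the model becomes linear in a single parameter vector, which is what makes the Gaussian posterior on the parameters tractable.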

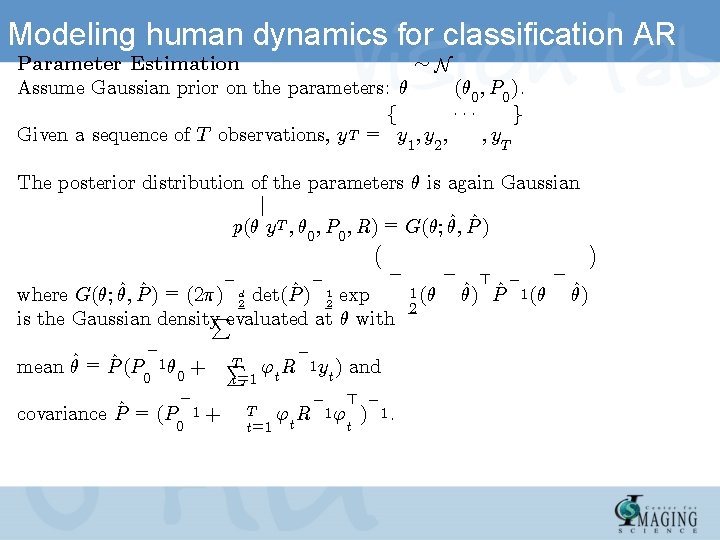

Modeling human dynamics for classification (AR): Parameter Estimation

Assume a Gaussian prior on the parameters: $\theta \sim \mathcal{N}(\theta_0, P_0)$.

Given a sequence of $T$ observations, $y^T = \{y_1, y_2, \dots, y_T\}$, the posterior distribution of the parameters $\theta$ is again Gaussian:

$$p(\theta \mid y^T, \theta_0, P_0, R) = G(\theta; \hat\theta, \hat P)$$

where

$$G(\theta; \hat\theta, \hat P) = (2\pi)^{-d/2} \det(\hat P)^{-1/2} \exp\!\left(-\tfrac{1}{2} (\theta - \hat\theta)^\top \hat P^{-1} (\theta - \hat\theta)\right)$$

is the Gaussian density evaluated at $\theta$ with mean

$$\hat\theta = \hat P \left( P_0^{-1} \theta_0 + \sum_{t=1}^{T} \varphi_t^\top R^{-1} y_t \right)$$

and covariance

$$\hat P = \left( P_0^{-1} + \sum_{t=1}^{T} \varphi_t^\top R^{-1} \varphi_t \right)^{-1}.$$
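
The posterior mean and covariance can be accumulated in information form. The following is a minimal sketch, assuming a scalar AR(1) signal for the demo; the function name `ar_posterior` and all numeric values are illustrative choices, not taken from the paper.

```python
import numpy as np

def ar_posterior(phis, ys, theta0, P0, R):
    """Return (theta_hat, P_hat) of the Gaussian posterior on theta."""
    R_inv = np.linalg.inv(R)
    info = np.linalg.inv(P0)        # starts at P0^{-1}
    vec = info @ theta0             # starts at P0^{-1} theta0
    for phi, y in zip(phis, ys):
        info = info + phi.T @ R_inv @ phi
        vec = vec + phi.T @ R_inv @ y
    P_hat = np.linalg.inv(info)
    return P_hat @ vec, P_hat

# Demo on a simulated scalar AR(1) process (assumed values).
rng = np.random.default_rng(1)
a_true, T = 0.8, 2000
R = np.array([[0.01]])
y = np.zeros(T + 1)
for t in range(1, T + 1):
    y[t] = a_true * y[t - 1] + 0.1 * rng.standard_normal()

phis = [np.array([[y[t - 1]]]) for t in range(1, T + 1)]  # phi_t = y_{t-1}
ys = [np.array([y[t]]) for t in range(1, T + 1)]
theta_hat, P_hat = ar_posterior(phis, ys, np.zeros(1), np.eye(1), R)
print(theta_hat, P_hat)
```

With enough data the prior has little influence, and the posterior covariance shrinks as observations accumulate.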

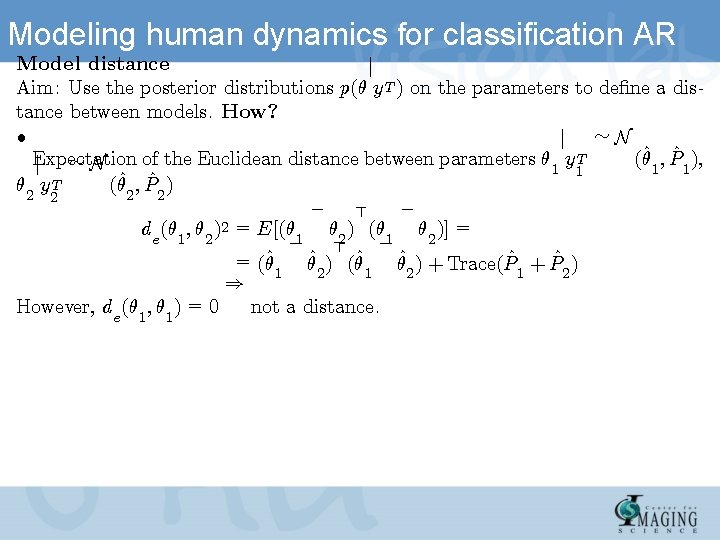

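Modeling human dynamics for classification (AR): Model distance

Aim: Use the posterior distributions $p(\theta \mid y^T)$ on the parameters to define a distance between models. How?

• Expectation of the Euclidean distance between parameters $\theta_1 \mid y_1^T \sim \mathcal{N}(\hat\theta_1, \hat P_1)$ and $\theta_2 \mid y_2^T \sim \mathcal{N}(\hat\theta_2, \hat P_2)$:

$$d_e(\theta_1, \theta_2)^2 = E\left[(\theta_1 - \theta_2)^\top (\theta_1 - \theta_2)\right] = (\hat\theta_1 - \hat\theta_2)^\top (\hat\theta_1 - \hat\theta_2) + \operatorname{Trace}(\hat P_1 + \hat P_2)$$

However, $d_e(\theta_1, \theta_1) \neq 0$, so $d_e$ is not a distance.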
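
The step from the expectation to the trace term can be spelled out as follows. This is a short derivation under the assumption that the two parameter vectors are independent given their respective data.

```latex
% Assumption: \theta_1 and \theta_2 are independent, with
% \theta_k = \hat\theta_k + \varepsilon_k, \quad \varepsilon_k \sim \mathcal{N}(0, \hat P_k).
\begin{align*}
d_e(\theta_1,\theta_2)^2
 &= E\big[(\theta_1-\theta_2)^\top(\theta_1-\theta_2)\big] \\
 &= \|\hat\theta_1-\hat\theta_2\|^2
    + 2\,(\hat\theta_1-\hat\theta_2)^\top E[\varepsilon_1-\varepsilon_2]
    + E\|\varepsilon_1-\varepsilon_2\|^2 \\
 &= (\hat\theta_1-\hat\theta_2)^\top(\hat\theta_1-\hat\theta_2)
    + \operatorname{Trace}(\hat P_1+\hat P_2), \\
% Comparing a model with an independent copy of itself:
d_e(\theta_1,\theta_1)^2 &= 2\operatorname{Trace}(\hat P_1) > 0
 \quad\text{whenever } \hat P_1 \neq 0 .
\end{align*}
```

The middle term vanishes because the perturbations have zero mean, and the last line shows why the identity axiom of a distance fails.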

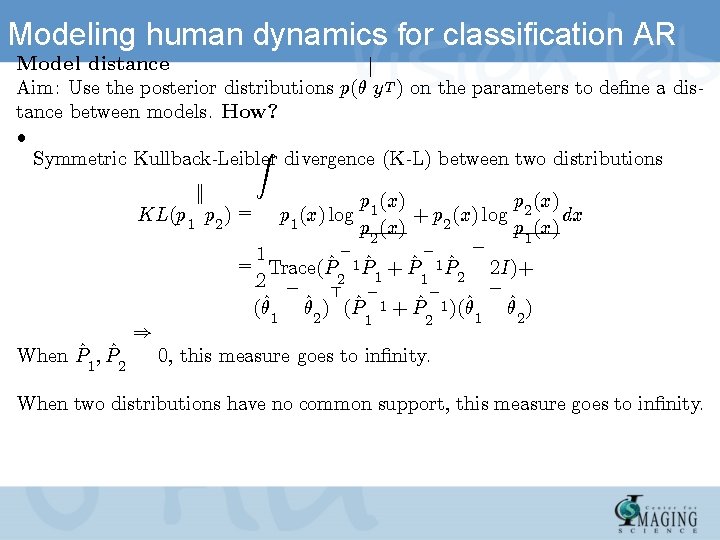

Modeling human dynamics for classification (AR): Model distance

Aim: Use the posterior distributions $p(\theta \mid y^T)$ on the parameters to define a distance between models. How?

• Symmetric Kullback-Leibler (K-L) divergence between two distributions:

$$KL(p_1 \,\|\, p_2) = \int p_1(x) \log \frac{p_1(x)}{p_2(x)} + p_2(x) \log \frac{p_2(x)}{p_1(x)} \, dx$$

$$= \tfrac{1}{2} \operatorname{Trace}\!\left(\hat P_1^{-1} \hat P_2 + \hat P_2^{-1} \hat P_1 - 2I\right) + \tfrac{1}{2} (\hat\theta_1 - \hat\theta_2)^\top \left(\hat P_1^{-1} + \hat P_2^{-1}\right) (\hat\theta_1 - \hat\theta_2)$$

When $\hat P_1, \hat P_2 \to 0$, this measure goes to infinity. When the two distributions have no common support, this measure goes to infinity.
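
The blow-up for shrinking covariances is easy to see numerically. Below is a small sketch of the closed-form symmetric K-L divergence for Gaussians; the function name `sym_kl` and the test values are assumptions made for illustration.

```python
import numpy as np

def sym_kl(m1, P1, m2, P2):
    """Symmetric K-L divergence between N(m1, P1) and N(m2, P2)."""
    P1_inv, P2_inv = np.linalg.inv(P1), np.linalg.inv(P2)
    d = m1 - m2
    k = len(m1)
    trace_term = 0.5 * np.trace(P1_inv @ P2 + P2_inv @ P1 - 2.0 * np.eye(k))
    mean_term = 0.5 * d @ (P1_inv + P2_inv) @ d
    return trace_term + mean_term

# Fixed means one unit apart, covariances s * I shrinking towards zero.
m1, m2 = np.zeros(2), np.array([1.0, 0.0])
values = [sym_kl(m1, s * np.eye(2), m2, s * np.eye(2)) for s in (1.0, 0.1, 0.01)]
print(values)
```

With equal isotropic covariances the trace term is zero and the divergence scales as the inverse of the variance, so sharper posteriors on nearby models look arbitrarily far apart.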

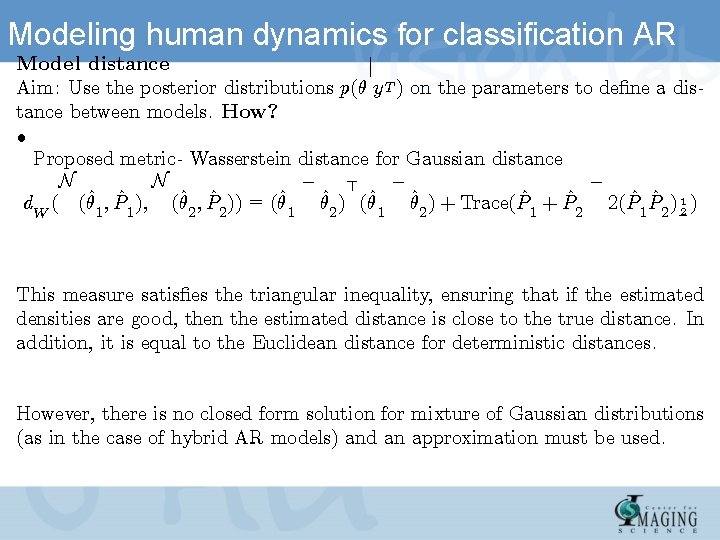

Modeling human dynamics for classification (AR): Model distance

Aim: Use the posterior distributions $p(\theta \mid y^T)$ on the parameters to define a distance between models. How?

• Proposed metric: Wasserstein distance for Gaussian distributions

$$d_W\!\left(\mathcal{N}(\hat\theta_1, \hat P_1), \mathcal{N}(\hat\theta_2, \hat P_2)\right)^2 = (\hat\theta_1 - \hat\theta_2)^\top (\hat\theta_1 - \hat\theta_2) + \operatorname{Trace}\!\left(\hat P_1 + \hat P_2 - 2 (\hat P_1 \hat P_2)^{1/2}\right)$$

This measure satisfies the triangle inequality, ensuring that if the estimated densities are good, then the estimated distance is close to the true distance. In addition, it reduces to the Euclidean distance for deterministic parameters. However, there is no closed-form solution for mixtures of Gaussian distributions (as in the case of hybrid AR models), and an approximation must be used.
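
A minimal NumPy sketch of the Gaussian Wasserstein formula follows. It evaluates the cross term through the symmetric form $(\hat P_1^{1/2} \hat P_2 \hat P_1^{1/2})^{1/2}$, which has the same trace as $(\hat P_1 \hat P_2)^{1/2}$; the helper names `psd_sqrt` and `wasserstein2_sq` are hypothetical.

```python
import numpy as np

def psd_sqrt(P):
    """Symmetric square root of a positive semidefinite matrix."""
    w, V = np.linalg.eigh(P)
    return (V * np.sqrt(np.clip(w, 0.0, None))) @ V.T

def wasserstein2_sq(m1, P1, m2, P2):
    """Squared Wasserstein distance between N(m1, P1) and N(m2, P2)."""
    S = psd_sqrt(P1)
    cross = psd_sqrt(S @ P2 @ S)  # same trace as (P1 P2)^{1/2}
    d = m1 - m2
    return d @ d + np.trace(P1 + P2 - 2.0 * cross)

m1, m2 = np.array([0.0, 0.0]), np.array([3.0, 4.0])
zero = np.zeros((2, 2))
d_point = wasserstein2_sq(m1, zero, m2, zero)                   # deterministic parameters
d_self = wasserstein2_sq(m1, np.eye(2), m1, np.eye(2))          # identical Gaussians
d_scale = wasserstein2_sq(m1, np.eye(2), m1, 4.0 * np.eye(2))   # same mean, different spread
print(d_point, d_self, d_scale)
```

Unlike the expected Euclidean distance, the value for two identical Gaussians is zero, and unlike the K-L divergence it stays finite as the covariances shrink.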

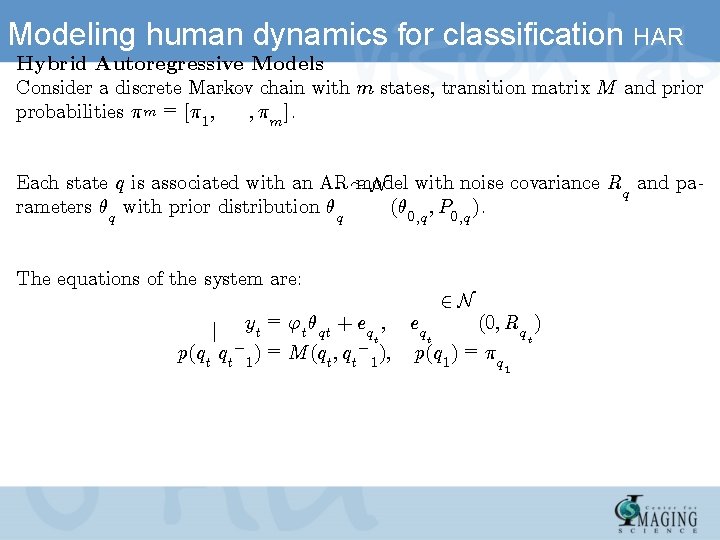

Modeling human dynamics for classification (HAR): Hybrid Autoregressive Models

Consider a discrete Markov chain with $m$ states, transition matrix $M$ and prior probabilities $\pi^m = [\pi_1, \dots, \pi_m]$.

Each state $q$ is associated with an AR model with noise covariance $R_q$ and parameters $\theta_q$ with prior distribution $\theta_q \sim \mathcal{N}(\theta_{0,q}, P_{0,q})$.

The equations of the system are:

$$y_t = \varphi_t\, \theta_{q_t} + e_{q_t}, \qquad e_{q_t} \sim \mathcal{N}(0, R_{q_t})$$

$$p(q_t \mid q_{t-1}) = M(q_t, q_{t-1}), \qquad p(q_1) = \pi_{q_1}$$
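
To make the generative model concrete, here is a small simulation sketch for a scalar hybrid AR(1) process with two modes. The function `simulate_har`, the scalar setting, the zero initial condition and all numeric values are assumptions for illustration; the transition matrix follows the convention $M(q_t, q_{t-1})$ used above, so its columns sum to one.

```python
import numpy as np

def simulate_har(a, r, M, pi, T, rng):
    """Scalar hybrid AR(1): y_t = a[q_t] * y_{t-1} + e_t, e_t ~ N(0, r[q_t]).

    M[i, j] = p(q_t = i | q_{t-1} = j), so each column of M sums to one.
    """
    m = len(pi)
    q = np.empty(T, dtype=int)
    y = np.zeros(T)
    q[0] = rng.choice(m, p=pi)
    y[0] = np.sqrt(r[q[0]]) * rng.standard_normal()  # assumes a zero initial condition
    for t in range(1, T):
        q[t] = rng.choice(m, p=M[:, q[t - 1]])       # draw the next hidden state
        y[t] = a[q[t]] * y[t - 1] + np.sqrt(r[q[t]]) * rng.standard_normal()
    return q, y

rng = np.random.default_rng(0)
a = np.array([0.9, -0.5])            # one AR coefficient per mode
r = np.array([0.01, 0.04])           # one noise variance per mode
M = np.array([[0.95, 0.10],
              [0.05, 0.90]])
pi = np.array([0.5, 0.5])
T = 300
q, y = simulate_har(a, r, M, pi, T, rng)
print(q[:20], y[:5])
```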

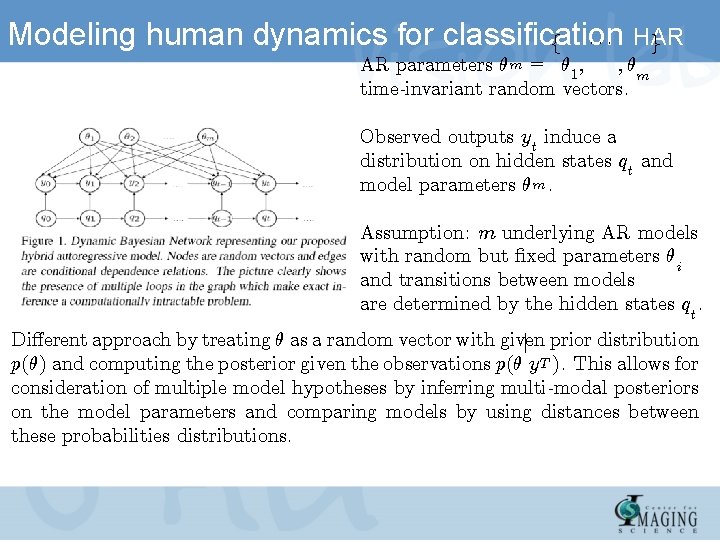

Modeling human dynamics for classification (HAR)

The AR parameters $\theta^m = \{\theta_1, \dots, \theta_m\}$ are time-invariant random vectors. The observed outputs $y_t$ induce a distribution on the hidden states $q_t$ and on the model parameters $\theta^m$.

Assumption: there are $m$ underlying AR models with random but fixed parameters $\theta_i$, and transitions between models are determined by the hidden states $q_t$.

A different approach: treat $\theta$ as a random vector with a given prior distribution $p(\theta)$ and compute the posterior given the observations, $p(\theta \mid y^T)$. This allows multiple model hypotheses to be considered by inferring multi-modal posteriors on the model parameters, and models to be compared using distances between these probability distributions.

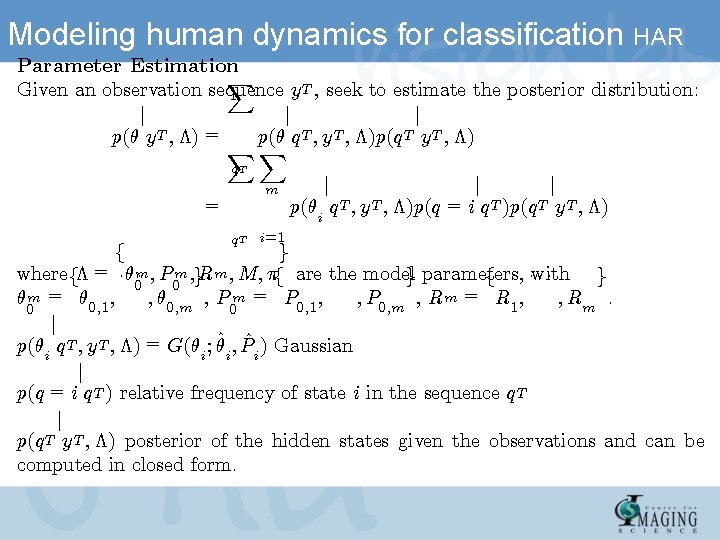

Modeling human dynamics for classification (HAR): Parameter Estimation

Given an observation sequence $y^T$, seek to estimate the posterior distribution:

$$p(\theta \mid y^T, \Lambda) = \sum_{q^T} p(\theta \mid q^T, y^T, \Lambda)\, p(q^T \mid y^T, \Lambda) = \sum_{q^T} \sum_{i=1}^{m} p(\theta_i \mid q^T, y^T, \Lambda)\, p(q = i \mid q^T)\, p(q^T \mid y^T, \Lambda)$$

where $\Lambda = \{\theta_0^m, P_0^m, R^m, M, \pi\}$ are the model parameters, with $\theta_0^m = \{\theta_{0,1}, \dots, \theta_{0,m}\}$, $P_0^m = \{P_{0,1}, \dots, P_{0,m}\}$, $R^m = \{R_1, \dots, R_m\}$.

• $p(\theta_i \mid q^T, y^T, \Lambda) = G(\theta_i; \hat\theta_i, \hat P_i)$ is Gaussian.
• $p(q = i \mid q^T)$ is the relative frequency of state $i$ in the sequence $q^T$.
• $p(q^T \mid y^T, \Lambda)$ is the posterior of the hidden states given the observations and can be computed in closed form.

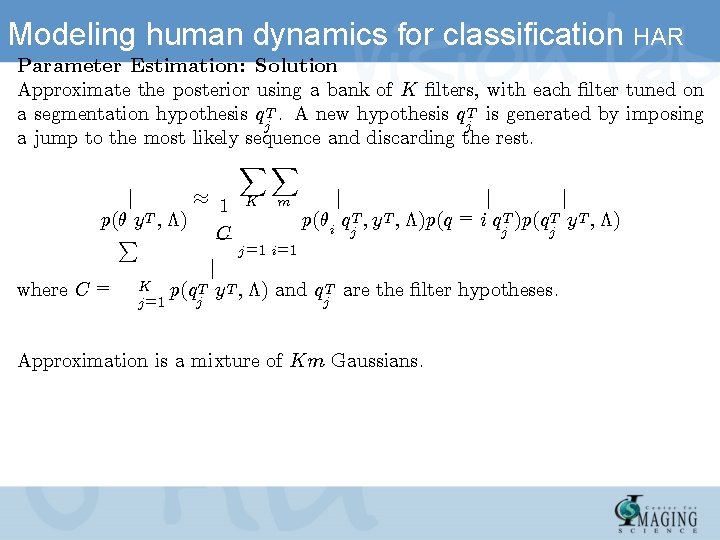

Modeling human dynamics for classification (HAR): Parameter Estimation, Solution

Approximate the posterior using a bank of $K$ filters, with each filter tuned on a segmentation hypothesis $q_j^T$. A new hypothesis $q_j^T$ is generated by imposing a jump on the most likely sequence; the least likely hypotheses are discarded.

$$p(\theta \mid y^T, \Lambda) \approx \frac{1}{C} \sum_{j=1}^{K} \sum_{i=1}^{m} p(\theta_i \mid q_j^T, y^T, \Lambda)\, p(q = i \mid q_j^T)\, p(q_j^T \mid y^T, \Lambda)$$

where $C = \sum_{j=1}^{K} p(q_j^T \mid y^T, \Lambda)$ and $q_j^T$ are the filter hypotheses.

The approximation is a mixture of $Km$ Gaussians.

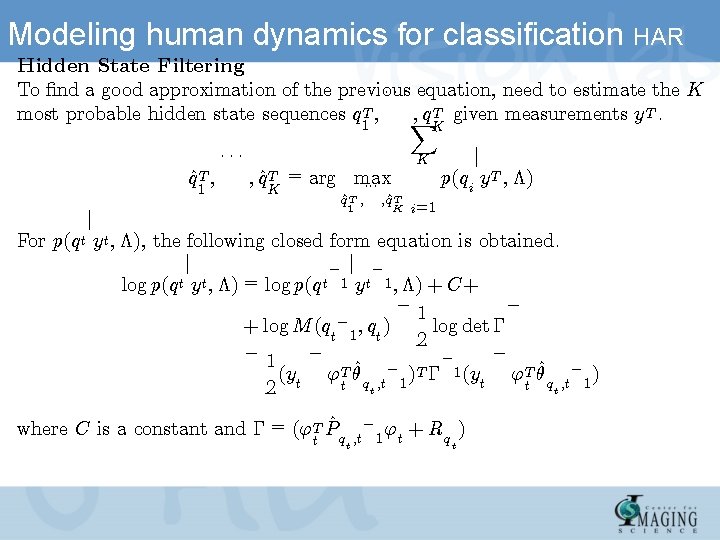

Modeling human dynamics for classification (HAR): Hidden State Filtering

To find a good approximation of the previous equation, we need to estimate the $K$ most probable hidden state sequences $q_1^T, \dots, q_K^T$ given the measurements $y^T$:

$$\hat q_1^T, \dots, \hat q_K^T = \arg\max_{\hat q_1^T, \dots, \hat q_K^T} \sum_{i=1}^{K} p(q_i^T \mid y^T, \Lambda)$$

For $p(q^t \mid y^t, \Lambda)$, the following closed-form equation is obtained:

$$\log p(q^t \mid y^t, \Lambda) = \log p(q^{t-1} \mid y^{t-1}, \Lambda) + C + \log M(q_{t-1}, q_t) - \tfrac{1}{2} \log \det \Gamma_t - \tfrac{1}{2} (y_t - \varphi_t \hat\theta_{q_t, t-1})^\top \Gamma_t^{-1} (y_t - \varphi_t \hat\theta_{q_t, t-1})$$

where $C$ is a constant and $\Gamma_t = \varphi_t \hat P_{q_t, t-1} \varphi_t^\top + R_{q_t}$.
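
One step of this recursion can be sketched as below. The function name `log_posterior_step` is hypothetical, the constant $C$ is dropped because it is shared by all hypotheses at a given time, and the transition probability is passed in directly so that the sketch does not commit to an index convention for $M$.

```python
import numpy as np

def log_posterior_step(log_prev, y, phi, theta_hat, P_hat, R, trans_prob):
    """Update the log-posterior of one hypothesis with observation y_t.

    theta_hat, P_hat: posterior of the active mode's parameters at time t-1.
    trans_prob: probability of the transition taken by this hypothesis.
    """
    innov = y - phi @ theta_hat              # prediction error
    Gamma = phi @ P_hat @ phi.T + R          # innovation covariance
    _, logdet = np.linalg.slogdet(Gamma)
    quad = innov @ np.linalg.solve(Gamma, innov)
    return log_prev + np.log(trans_prob) - 0.5 * logdet - 0.5 * quad

# Scalar demo with assumed values: prediction 1.0, observation 2.0, unit variance.
phi = np.array([[2.0]])
theta_hat = np.array([0.5])
P_hat = np.zeros((1, 1))
R = np.eye(1)
score = log_posterior_step(0.0, np.array([2.0]), phi, theta_hat, P_hat, R, 1.0)
print(score)
```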

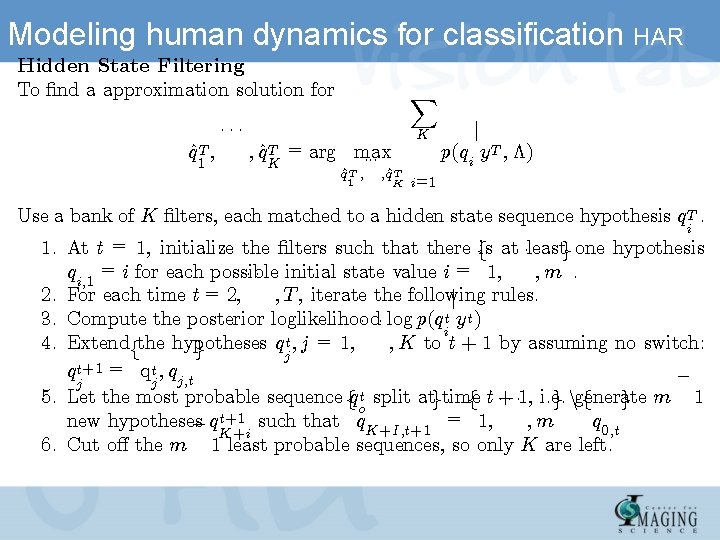

Modeling human dynamics for classification (HAR): Hidden State Filtering

To find an approximate solution for

$$\hat q_1^T, \dots, \hat q_K^T = \arg\max_{\hat q_1^T, \dots, \hat q_K^T} \sum_{i=1}^{K} p(q_i^T \mid y^T, \Lambda)$$

use a bank of $K$ filters, each matched to a hidden state sequence hypothesis $q_i^T$.

1. At $t = 1$, initialize the filters such that there is at least one hypothesis $q_{i,1} = i$ for each possible initial state value $i \in \{1, \dots, m\}$.
2. For each time $t = 2, \dots, T$, iterate the following rules.
3. Compute the posterior log-likelihood $\log p(q_i^t \mid y^t)$.
4. Extend the hypotheses $q_j^t$, $j = 1, \dots, K$, to $t + 1$ by assuming no switch: $q_j^{t+1} = \{q_j^t, q_{j,t}\}$.
5. Let the most probable sequence $q_0^t$ split at time $t + 1$, i.e. generate $m - 1$ new hypotheses $q_{K+i}^{t+1}$ such that $q_{K+i,t+1} \in \{1, \dots, m\} \setminus \{q_{0,t}\}$.
6. Cut off the $m - 1$ least probable sequences, so only $K$ are left.
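
The steps above can be sketched for a scalar hybrid AR(1) signal as follows. This is a simplified illustration, not the authors' implementation: the scalar setting, the names `extend` and `filter_bank`, the recursive Gaussian update of each mode's parameters, the treatment of the first sample as a given initial condition, and all numeric values are assumptions. Scores are log-posteriors up to a constant shared by all hypotheses.

```python
import numpy as np

def extend(h, q, y_prev, y_now, r, M):
    """Score and update hypothesis h when it takes state q at the new time step."""
    seq, logp, th, P = h
    th, P = th.copy(), P.copy()
    gamma = y_prev * P[q] * y_prev + r[q]      # innovation variance
    innov = y_now - y_prev * th[q]             # prediction error
    logp = logp + np.log(M[q, seq[-1]]) - 0.5 * np.log(gamma) - 0.5 * innov**2 / gamma
    gain = P[q] * y_prev / gamma               # recursive update of mode q's posterior
    th[q] += gain * innov
    P[q] -= gain * y_prev * P[q]
    return (seq + [q], logp, th, P)

def filter_bank(y, r, M, pi, theta0, P0, K):
    """Keep the K best state-sequence hypotheses (requires K >= number of modes)."""
    m = len(pi)
    # One hypothesis per possible initial state.
    hyps = [([i], np.log(pi[i]), theta0.copy(), P0.copy()) for i in range(m)]
    for t in range(1, len(y)):
        best = max(hyps, key=lambda h: h[1])
        # Extend every hypothesis assuming no switch.
        cands = [extend(h, h[0][-1], y[t - 1], y[t], r, M) for h in hyps]
        # Split the most probable hypothesis into the m - 1 other states.
        cands += [extend(best, q, y[t - 1], y[t], r, M)
                  for q in range(m) if q != best[0][-1]]
        # Cut off the least probable candidates so that at most K remain.
        hyps = sorted(cands, key=lambda h: h[1], reverse=True)[:K]
    return hyps

# Demo: the mode changes once, halfway through the sequence (assumed values).
rng = np.random.default_rng(0)
a_true = [0.9, -0.9]
true_q = [0] * 100 + [1] * 100
y = np.zeros(200)
y[0] = 0.1
for t in range(1, 200):
    y[t] = a_true[true_q[t]] * y[t - 1] + 0.1 * rng.standard_normal()

r = np.array([0.01, 0.01])
M = np.array([[0.95, 0.05], [0.05, 0.95]])     # M[i, j] = p(q_t = i | q_{t-1} = j)
pi = np.array([0.5, 0.5])
K = 4
hyps = filter_bank(y, r, M, pi, np.array([0.5, -0.5]), np.ones(2), K)
best_seq = hyps[0][0]
print(best_seq[:5], best_seq[-5:])
```

Each surviving hypothesis carries its own per-mode Gaussian posterior, which is what yields the mixture approximation of the parameter posterior once the sequence has been processed.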

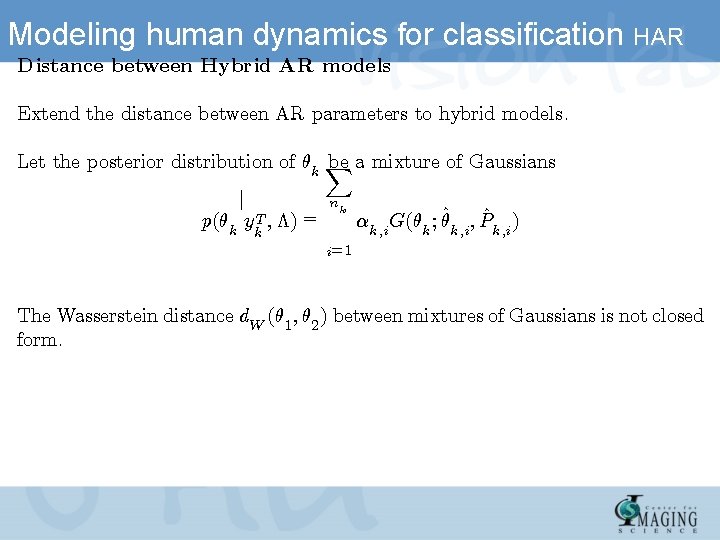

Modeling human dynamics for classification (HAR): Distance between Hybrid AR models

Extend the distance between AR parameters to hybrid models. Let the posterior distribution of $\theta_k$ be a mixture of Gaussians:

$$p(\theta_k \mid y_k^T, \Lambda) = \sum_{i=1}^{n_k} \alpha_{k,i}\, G(\theta_k; \hat\theta_{k,i}, \hat P_{k,i})$$

The Wasserstein distance $d_W(\theta_1, \theta_2)$ between mixtures of Gaussians has no closed form.

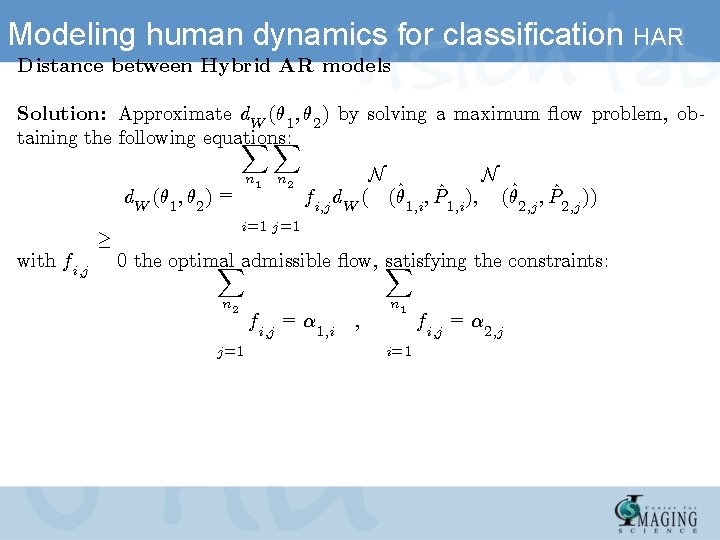

Modeling human dynamics for classification (HAR): Distance between Hybrid AR models

Solution: Approximate $d_W(\theta_1, \theta_2)$ by solving a maximum flow problem, obtaining the following equation:

$$d_W(\theta_1, \theta_2) = \sum_{i=1}^{n_1} \sum_{j=1}^{n_2} f_{i,j}\, d_W\!\left(\mathcal{N}(\hat\theta_{1,i}, \hat P_{1,i}), \mathcal{N}(\hat\theta_{2,j}, \hat P_{2,j})\right)$$

with $f_{i,j} \geq 0$ the optimal admissible flow, satisfying the constraints:

$$\sum_{j=1}^{n_2} f_{i,j} = \alpha_{1,i}, \qquad \sum_{i=1}^{n_1} f_{i,j} = \alpha_{2,j}$$
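
One way to compute such a flow is to pose it as a small linear program. The sketch below assumes that "optimal admissible flow" means the flow minimizing the total cost, i.e. a transportation problem, and solves it with `scipy.optimize.linprog`; the function name `mixture_distance` and the example weights are hypothetical, and the pairwise component distances are supplied as a precomputed matrix.

```python
import numpy as np
from scipy.optimize import linprog

def mixture_distance(alpha1, alpha2, D):
    """Minimum-cost flow between mixture weights alpha1 and alpha2.

    D[i, j] is the distance between component i of mixture 1
    and component j of mixture 2. Returns (cost, flow matrix).
    """
    n1, n2 = D.shape
    A_eq = np.zeros((n1 + n2, n1 * n2))
    for i in range(n1):
        A_eq[i, i * n2:(i + 1) * n2] = 1.0   # sum_j f_ij = alpha1_i
    for j in range(n2):
        A_eq[n1 + j, j::n2] = 1.0            # sum_i f_ij = alpha2_j
    b_eq = np.concatenate([alpha1, alpha2])
    res = linprog(D.ravel(), A_eq=A_eq, b_eq=b_eq, bounds=(0, None), method="highs")
    return res.fun, res.x.reshape(n1, n2)

# Two mixtures with matching components: all mass stays on the diagonal.
cost_same, flow_same = mixture_distance(
    np.array([0.5, 0.5]), np.array([0.5, 0.5]), np.array([[0.0, 1.0], [1.0, 0.0]]))

# A single component split across two: the flow is forced by the constraints.
cost_split, flow_split = mixture_distance(
    np.array([1.0]), np.array([0.3, 0.7]), np.array([[2.0, 4.0]]))
print(cost_same, cost_split)
```

The marginal constraints make the weights of each mixture act as supplies and demands, so the result depends only on the pairwise Gaussian distances and the mixture weights.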

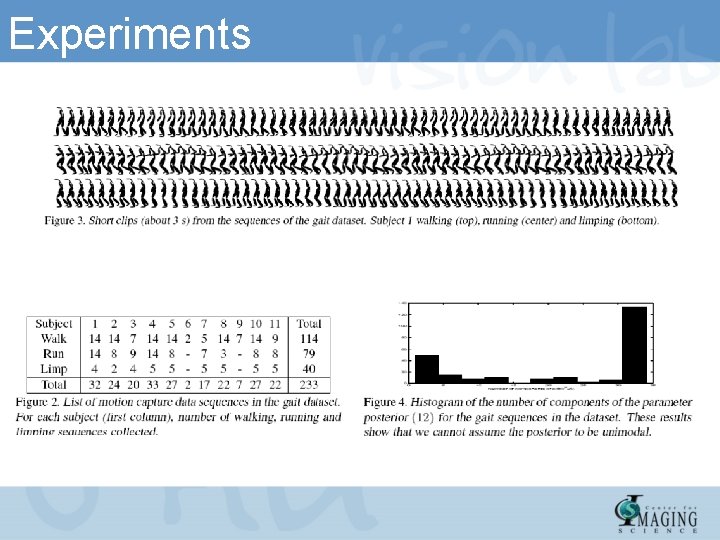

Experiments

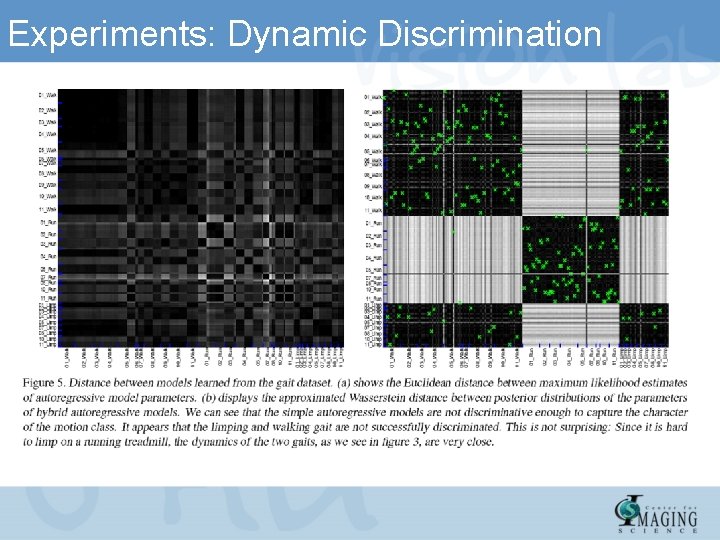

Experiments: Dynamic Discrimination
