Burke's Theorem, Reversibility, and Jackson Networks of Queues


Reverse CTMC
• Basic idea: run time in reverse
  – Departures become arrivals and vice versa
  – The reverse chain is also a CTMC
• Some basic properties of the reverse chain
  – The fraction of time spent in state $i$ in the forward chain equals the fraction of time spent in state $i$ in the reverse chain: $\pi^*_i = \pi_i$
  – The rate of transitions from $i$ to $j$ in the reverse chain equals the rate of transitions from $j$ to $i$ in the forward chain: $\pi^*_i q^*_{ij} = \pi_j q_{ji}$
• If a CTMC is time-reversible, i.e., $\pi_i q_{ij} = \pi_j q_{ji}$ for all $i, j$ with $\sum_i \pi_i = 1$, then the forward and reverse chains are statistically identical and $q^*_{ij} = q_{ij}$
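As a small exact check of these properties, the Python sketch below builds the generator of an M/M/1 queue truncated at `cap` jobs (a birth-death chain, used here only as a convenient reversible example), takes its stationary distribution, and forms the reverse-chain rates $q^*_{ij} = \pi_j q_{ji}/\pi_i$. The helper names are hypothetical; `Fraction` arithmetic keeps every comparison exact.

```python
from fractions import Fraction

def birth_death_generator(lam, mu, cap):
    """Generator Q of an M/M/1 queue truncated at `cap` jobs (a birth-death CTMC)."""
    n = cap + 1
    Q = [[Fraction(0)] * n for _ in range(n)]
    for i in range(n):
        if i < cap:
            Q[i][i + 1] = Fraction(lam)   # arrival: i -> i+1
        if i > 0:
            Q[i][i - 1] = Fraction(mu)    # departure: i -> i-1
        Q[i][i] = -sum(Q[i][j] for j in range(n) if j != i)
    return Q

def stationary_birth_death(lam, mu, cap):
    """pi_i proportional to (lam/mu)^i, normalized so that sum(pi) == 1."""
    rho = Fraction(lam) / Fraction(mu)
    weights = [rho ** i for i in range(cap + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def reverse_generator(Q, pi):
    """Reverse-chain rates q*_ij = pi_j q_ji / pi_i, using pi*_i = pi_i."""
    n = len(Q)
    R = [[Fraction(0)] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                R[i][j] = pi[j] * Q[j][i] / pi[i]
        R[i][i] = -sum(R[i][j] for j in range(n) if j != i)
    return R

def is_reversible(Q, pi):
    """Detailed balance: pi_i q_ij == pi_j q_ji for every pair of states."""
    n = len(Q)
    return all(pi[i] * Q[i][j] == pi[j] * Q[j][i] for i in range(n) for j in range(n))

Q = birth_death_generator(1, 2, cap=4)
pi = stationary_birth_death(1, 2, cap=4)
print(is_reversible(Q, pi), reverse_generator(Q, pi) == Q)  # True True
```

For a chain that violates detailed balance, `reverse_generator` still returns a valid generator, just not one equal to `Q`.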

Burke's Theorem
• Consider an M/M/k system with arrival rate $\lambda$. At steady state, the following hold:
  1. The departure process is Poisson($\lambda$)
  2. At all times $t$, the number of jobs in the system at time $t$, $N(t)$, is independent of the sequence of departure times prior to time $t$
• Implications
  – Tandem M/M/k queues can be analyzed as independent M/M/k queues
  – Acyclic networks of M/M/k queues with probabilistic routing can be analyzed as networks of independent M/M/k queues
  – The arrival process to each queue is Poisson
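A rough Monte Carlo sketch of statement 1 for the k = 1 case: simulate a FCFS M/M/1 queue with the recursion $D_n = \max(A_n, D_{n-1}) + S_n$ and examine the gaps between successive departures. If the departure process is Poisson($\lambda$), the gaps should have mean $1/\lambda$ (not $1/\mu$) and a squared coefficient of variation (SCV) near 1. The rates, seed, and sample size are arbitrary choices, and matching two moments is only consistent with Poisson output, not a proof of it.

```python
import random

def mm1_departure_times(lam, mu, n_jobs, seed=0):
    """Departure times of the first n_jobs jobs in a FCFS M/M/1 queue that starts empty."""
    rng = random.Random(seed)
    arrival, last_departure = 0.0, 0.0
    departures = []
    for _ in range(n_jobs):
        arrival += rng.expovariate(lam)                  # Poisson arrivals at rate lam
        start = max(arrival, last_departure)             # wait while the server is busy
        last_departure = start + rng.expovariate(mu)     # exponential service at rate mu
        departures.append(last_departure)
    return departures

def mean_and_scv(xs):
    """Sample mean and squared coefficient of variation (variance / mean^2)."""
    m = sum(xs) / len(xs)
    var = sum((x - m) ** 2 for x in xs) / len(xs)
    return m, var / m ** 2

lam, mu = 0.8, 1.0
deps = mm1_departure_times(lam, mu, n_jobs=200_000)
gaps = [b - a for a, b in zip(deps, deps[1:])]
mean_gap, scv = mean_and_scv(gaps)
print(f"mean gap {mean_gap:.3f} (1/lam = {1 / lam:.3f}), SCV {scv:.3f}")
```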

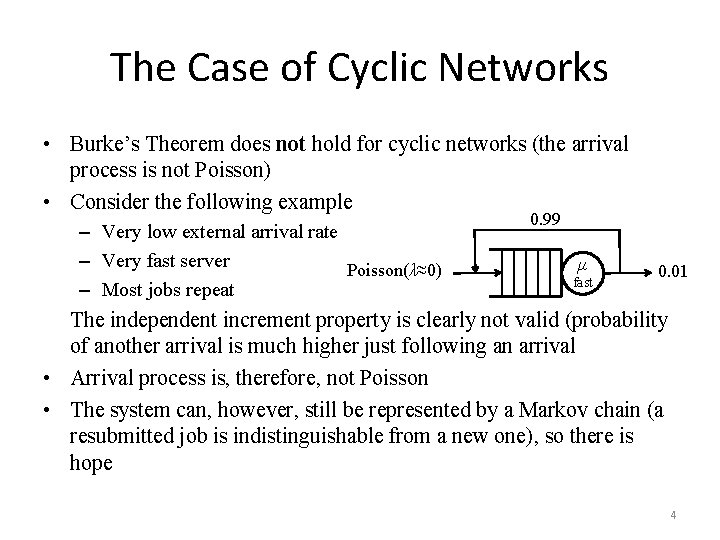

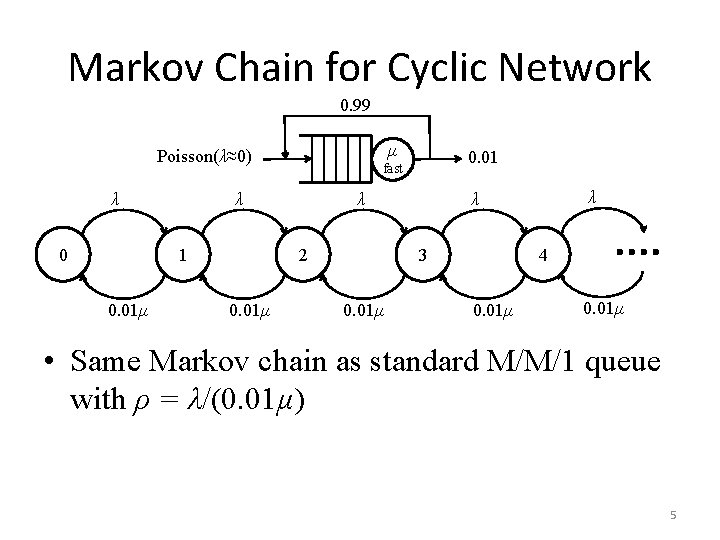

The Case of Cyclic Networks
• Burke's Theorem does not hold for cyclic networks (the arrival process to a queue inside a feedback loop is not Poisson)
• Consider the following example
  [Figure: Poisson($\lambda \approx 0$) external arrivals feed a fast server with rate $\mu$; after service, a job returns to the queue with probability 0.99 and leaves with probability 0.01]
  – Very low external arrival rate
  – Very fast server
  – Most jobs repeat
• The independent increment property clearly does not hold: the probability of another arrival is much higher just after an arrival
• The arrival process is, therefore, not Poisson
• The system can, however, still be represented by a Markov chain (a resubmitted job is indistinguishable from a new one), so there is hope
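The loss of the Poisson property can be seen numerically. The sketch below simulates the example with assumed values $\lambda = 0.01$, $\mu = 100$, and feedback probability 0.99, records every arrival to the server (external arrivals plus fed-back jobs), and measures the gaps. The total arrival rate is $\lambda/0.01 = 1$, so a Poisson stream would have mean gap 1, SCV 1, and only about 5% of gaps below 0.05; bursts of feedback make the SCV far larger and short gaps far more common. The function name and parameters are illustrative.

```python
import random

def cyclic_arrival_times(lam, mu, p_repeat, n_external, seed=1):
    """Arrival times at the single server of the feedback network (external + fed-back jobs).

    Only the number of jobs n matters, so the network is simulated as a CTMC:
    external arrivals at rate lam, service completions at rate mu when n > 0.
    """
    rng = random.Random(seed)
    t, n, externals = 0.0, 0, 0
    arrivals = []
    while externals < n_external:
        rate = lam + (mu if n > 0 else 0.0)
        t += rng.expovariate(rate)
        if rng.random() < lam / rate:      # external arrival
            n += 1
            externals += 1
            arrivals.append(t)
        elif rng.random() < p_repeat:      # completion, job fed back: an arrival, n unchanged
            arrivals.append(t)
        else:                              # completion, job leaves the network
            n -= 1
    return arrivals

def mean_and_scv(xs):
    """Sample mean and squared coefficient of variation (variance / mean^2)."""
    m = sum(xs) / len(xs)
    var = sum((x - m) ** 2 for x in xs) / len(xs)
    return m, var / m ** 2

arrivals = cyclic_arrival_times(lam=0.01, mu=100.0, p_repeat=0.99, n_external=3000)
gaps = [b - a for a, b in zip(arrivals, arrivals[1:])]
mean_gap, scv = mean_and_scv(gaps)
short = sum(g < 0.05 for g in gaps) / len(gaps)
print(f"mean gap {mean_gap:.2f}, SCV {scv:.1f}, fraction of gaps < 0.05: {short:.2f}")
```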

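Markov Chain for Cyclic Network
[Figure: the cyclic network (Poisson($\lambda \approx 0$) arrivals, fast server $\mu$, feedback probability 0.99, exit probability 0.01) and its Markov chain on states 0, 1, 2, 3, 4, …, with rate $\lambda$ from each state $n$ to $n+1$ and rate $0.01\mu$ from each state $n \ge 1$ to $n-1$]
• A service completion changes the state only if the job leaves (probability 0.01); a completion followed by feedback leaves the number of jobs unchanged, so jobs effectively depart at rate $0.01\mu$
• This is the same Markov chain as a standard M/M/1 queue with $\rho = \lambda/(0.01\mu)$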
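A sketch that checks the M/M/1 equivalence by simulation. To keep the run short it assumes $\mu = 10$ and feedback probability 0.9 (rather than a very fast server and 0.99), which still gives an effective departure rate $(1-0.9)\mu = 1$; with $\lambda = 0.5$, the M/M/1 prediction is $\rho = 0.5$ and $E[N] = \rho/(1-\rho) = 1$. The parameter values and seed are arbitrary.

```python
import random

def cyclic_time_avg_jobs(lam, mu, p_repeat, horizon, seed=2):
    """Time-average number of jobs in the one-server feedback network, by CTMC simulation."""
    rng = random.Random(seed)
    t, n, area = 0.0, 0, 0.0
    while t < horizon:
        rate = lam + (mu if n > 0 else 0.0)
        dt = rng.expovariate(rate)
        area += n * min(dt, horizon - t)   # accumulate n over the holding time (clipped at horizon)
        t += dt
        if t >= horizon:
            break
        if rng.random() < lam / rate:      # external arrival
            n += 1
        elif rng.random() >= p_repeat:     # service completion and the job leaves
            n -= 1
        # otherwise: completion followed by feedback, so n is unchanged
    return area / horizon

lam, mu, p_repeat = 0.5, 10.0, 0.9
rho = lam / ((1 - p_repeat) * mu)          # effective M/M/1 load
sim = cyclic_time_avg_jobs(lam, mu, p_repeat, horizon=50_000)
print(f"simulated E[N] {sim:.3f} vs M/M/1 rho/(1-rho) {rho / (1 - rho):.3f}")
```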

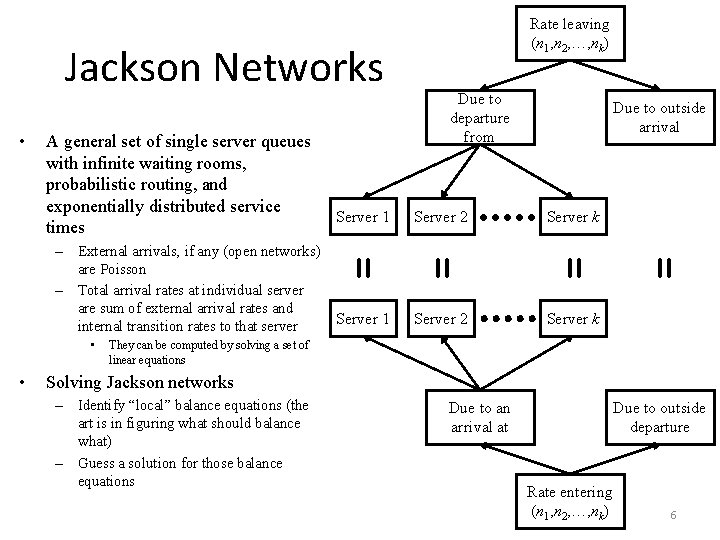

Jackson Networks
• A general set of single-server queues with infinite waiting rooms, probabilistic routing, and exponentially distributed service times
  – External arrivals, if any (open networks), are Poisson
  – The total arrival rate at an individual server is the sum of the external arrival rate and the internal transition rates to that server; these rates can be computed by solving a set of linear equations
• Solving Jackson networks
  – Identify "local" balance equations (the art is in figuring out what should balance what)
  – Guess a solution for those balance equations
[Figure: servers 1, 2, …, k. The global balance equation, rate leaving $(n_1, n_2, \ldots, n_k)$ = rate entering $(n_1, n_2, \ldots, n_k)$, is split into local balance equations: rate leaving due to an outside arrival = rate entering due to an outside departure, and, for each server $i$, rate leaving due to a departure from server $i$ = rate entering due to an arrival at server $i$]
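Computing the total arrival rates means solving $\lambda_i = r_i + \sum_j \lambda_j P_{ji}$, i.e., the linear system $(I - P^T)\lambda = r$. The sketch below solves it exactly with a small Gauss-Jordan routine over `Fraction`s. The function names and the three-server routing matrix are hypothetical, and routing probabilities are given as strings so that `Fraction` parses them exactly (`Fraction(0.1)`, for instance, would capture the binary float error).

```python
from fractions import Fraction

def solve_linear(A, b):
    """Solve A x = b exactly with Gauss-Jordan elimination (A assumed non-singular)."""
    n = len(A)
    M = [[Fraction(v) for v in row] + [Fraction(b[i])] for i, row in enumerate(A)]
    for col in range(n):
        piv = next(r for r in range(col, n) if M[r][col] != 0)  # first usable pivot row
        M[col], M[piv] = M[piv], M[col]
        for r in range(n):
            if r != col and M[r][col] != 0:
                f = M[r][col] / M[col][col]
                M[r] = [a - f * c for a, c in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]

def traffic_rates(r, P):
    """Total arrival rates of an open network: lambda_i = r_i + sum_j lambda_j P[j][i].

    r[i] is the external rate to server i; P[j][i] is the routing probability j -> i.
    """
    k = len(r)
    A = [[(1 if i == j else 0) - Fraction(P[j][i]) for j in range(k)] for i in range(k)]
    return solve_linear(A, r)

# Hypothetical 3-server example: external arrivals only at server 1.
r = [1, 0, 0]
P = [["0", "1/2", "1/4"],   # from server 1: half to server 2, a quarter to 3, rest leave
     ["1", "0", "0"],       # server 2 always returns to server 1
     ["1/2", "0", "0"]]     # server 3 returns to server 1 half the time, else leaves
print(traffic_rates(r, P))
```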

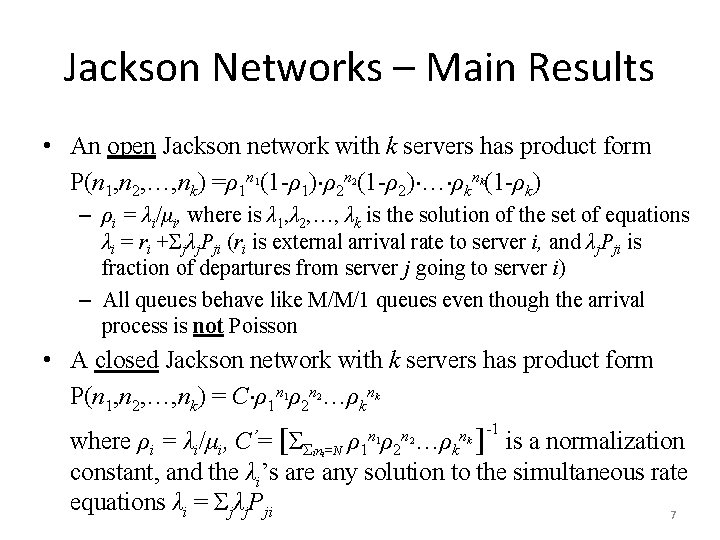

Jackson Networks – Main Results
• An open Jackson network with $k$ servers has product form
  $P(n_1, n_2, \ldots, n_k) = \rho_1^{n_1}(1-\rho_1)\,\rho_2^{n_2}(1-\rho_2)\cdots\rho_k^{n_k}(1-\rho_k)$
  – $\rho_i = \lambda_i/\mu_i$, where $\lambda_1, \lambda_2, \ldots, \lambda_k$ is the solution of the set of equations $\lambda_i = r_i + \sum_j \lambda_j P_{ji}$ ($r_i$ is the external arrival rate to server $i$, and $\lambda_j P_{ji}$ is the rate at which departures from server $j$ go to server $i$); the result requires $\rho_i < 1$ for every $i$
  – All queues behave like M/M/1 queues even though the arrival process to a server need not be Poisson
• A closed Jackson network with $k$ servers and $N$ jobs has product form
  $P(n_1, n_2, \ldots, n_k) = C\,\rho_1^{n_1}\rho_2^{n_2}\cdots\rho_k^{n_k}$, where $C^{-1} = \sum_{n_1 + \cdots + n_k = N}\rho_1^{n_1}\rho_2^{n_2}\cdots\rho_k^{n_k}$
  – $\rho_i = \lambda_i/\mu_i$, $C$ is a normalization constant, and the $\lambda_i$'s are any solution to the simultaneous rate equations $\lambda_i = \sum_j \lambda_j P_{ji}$
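Once the $\lambda_i$ are known, the open-network product form is just a product of M/M/1 geometric terms. A minimal sketch with hypothetical rates $\lambda = (2, 1)$ and $\mu = (4, 2)$, so $\rho_1 = \rho_2 = 1/2$; it rejects unstable servers because the product form needs every $\rho_i < 1$.

```python
from fractions import Fraction

def open_jackson_prob(ns, lams, mus):
    """P(n_1, ..., n_k) = prod_i rho_i^{n_i} (1 - rho_i) for an open Jackson network."""
    prob = Fraction(1)
    for n, lam, mu in zip(ns, lams, mus):
        rho = Fraction(lam) / Fraction(mu)
        if rho >= 1:
            raise ValueError("unstable server: rho_i must be < 1")
        prob *= rho ** n * (1 - rho)
    return prob

def open_jackson_mean_jobs(lams, mus):
    """E[N_i] = rho_i / (1 - rho_i), the M/M/1 mean at each server."""
    rhos = [Fraction(lam) / Fraction(mu) for lam, mu in zip(lams, mus)]
    return [rho / (1 - rho) for rho in rhos]

lams, mus = [2, 1], [4, 2]   # hypothetical rates, already solved from the rate equations
print(open_jackson_prob((1, 2), lams, mus))   # 1/32
print(open_jackson_mean_jobs(lams, mus))      # [Fraction(1, 1), Fraction(1, 1)]
```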

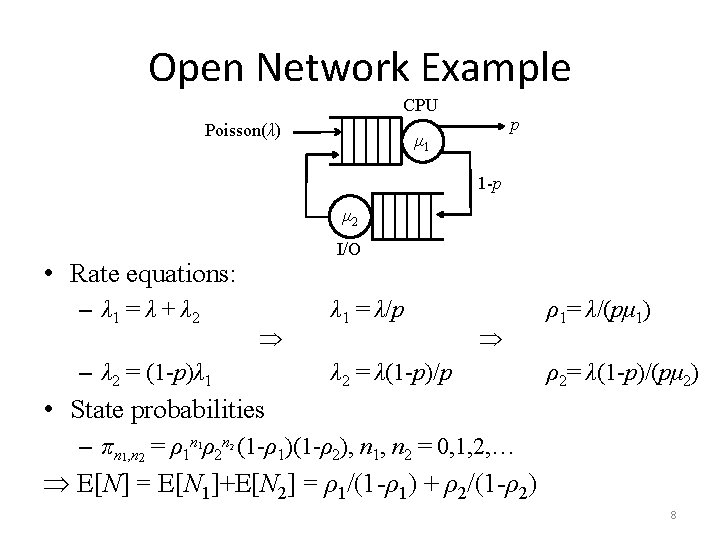

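Open Network Example
[Figure: Poisson($\lambda$) arrivals enter the CPU (rate $\mu_1$); after CPU service a job leaves with probability $p$ or goes to the I/O device (rate $\mu_2$) with probability $1-p$; jobs leaving the I/O device return to the CPU]
• Rate equations:
  – $\lambda_1 = \lambda + \lambda_2$
  – $\lambda_2 = (1-p)\lambda_1$
  – Hence $\lambda_1 = \lambda/p$, $\lambda_2 = \lambda(1-p)/p$, $\rho_1 = \lambda/(p\mu_1)$, $\rho_2 = \lambda(1-p)/(p\mu_2)$
• State probabilities
  – $\pi_{n_1,n_2} = \rho_1^{n_1}\rho_2^{n_2}(1-\rho_1)(1-\rho_2)$, $n_1, n_2 = 0, 1, 2, \ldots$
  – $E[N] = E[N_1] + E[N_2] = \rho_1/(1-\rho_1) + \rho_2/(1-\rho_2)$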
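The product form can be checked against the global balance equations of this two-dimensional chain. In state $(n_1, n_2)$ the transitions are an external arrival (rate $\lambda$), a CPU completion followed by exit (rate $\mu_1 p$) or by a move to I/O (rate $\mu_1(1-p)$), and an I/O completion (rate $\mu_2$). The sketch, with assumed values $\lambda = 1$, $p = 1/2$, $\mu_1 = 4$, $\mu_2 = 2$, verifies rate out = rate in exactly on a small grid of states.

```python
from fractions import Fraction

def cpu_io_pi(n1, n2, lam, p, mu1, mu2):
    """Product-form probability pi_{n1,n2}; zero for out-of-range (negative) states."""
    if n1 < 0 or n2 < 0:
        return Fraction(0)
    rho1 = lam / (p * mu1)
    rho2 = lam * (1 - p) / (p * mu2)
    return rho1 ** n1 * rho2 ** n2 * (1 - rho1) * (1 - rho2)

def balance_holds(n1, n2, lam, p, mu1, mu2):
    """Global balance at (n1, n2): total rate out equals total rate in."""
    def pi(a, b):
        return cpu_io_pi(a, b, lam, p, mu1, mu2)
    rate_out = pi(n1, n2) * (lam + (mu1 if n1 > 0 else 0) + (mu2 if n2 > 0 else 0))
    rate_in = (pi(n1 - 1, n2) * lam                  # external arrival at the CPU
               + pi(n1 + 1, n2) * mu1 * p            # CPU completion, job leaves
               + pi(n1 + 1, n2 - 1) * mu1 * (1 - p)  # CPU completion, job moves to I/O
               + pi(n1 - 1, n2 + 1) * mu2)           # I/O completion, job returns to CPU
    return rate_out == rate_in

def expected_jobs(lam, p, mu1, mu2):
    """E[N] = rho1/(1 - rho1) + rho2/(1 - rho2)."""
    rho1 = lam / (p * mu1)
    rho2 = lam * (1 - p) / (p * mu2)
    return rho1 / (1 - rho1) + rho2 / (1 - rho2)

LAM, P_EXIT, MU1, MU2 = Fraction(1), Fraction(1, 2), Fraction(4), Fraction(2)  # assumed values
print(all(balance_holds(a, b, LAM, P_EXIT, MU1, MU2) for a in range(6) for b in range(6)))  # True
print(expected_jobs(LAM, P_EXIT, MU1, MU2), expected_jobs(LAM, P_EXIT, MU1, MU2) / LAM)  # E[N], E[T] by Little's Law
```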

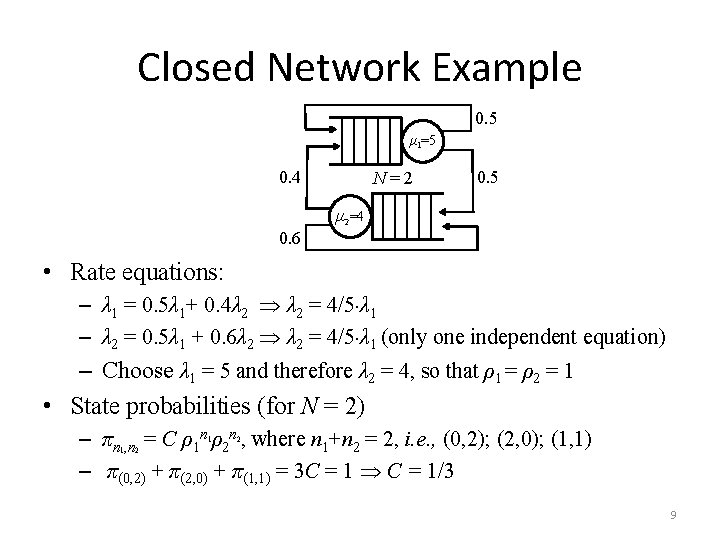

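Closed Network Example
[Figure: two servers with $\mu_1 = 5$ and $\mu_2 = 4$ and $N = 2$ jobs; after service at server 1 a job returns to server 1 with probability 0.5 or moves to server 2 with probability 0.5; after service at server 2 it moves to server 1 with probability 0.4 or returns to server 2 with probability 0.6]
• Rate equations:
  – $\lambda_1 = 0.5\lambda_1 + 0.4\lambda_2$, i.e., $\lambda_2 = \frac{5}{4}\lambda_1$
  – $\lambda_2 = 0.5\lambda_1 + 0.6\lambda_2$, i.e., again $\lambda_2 = \frac{5}{4}\lambda_1$ (only one independent equation)
  – Choose $\lambda_1 = 4$ and therefore $\lambda_2 = 5$, so that $\rho_1 = 4/5$ and $\rho_2 = 5/4$
• State probabilities (for $N = 2$)
  – $\pi_{n_1,n_2} = C\rho_1^{n_1}\rho_2^{n_2}$, where $n_1 + n_2 = 2$, i.e., $(0,2)$, $(2,0)$, $(1,1)$
  – $\pi(0,2) + \pi(2,0) + \pi(1,1) = C\left(\frac{25}{16} + \frac{16}{25} + 1\right) = \frac{1281}{400}C = 1$, so $C = 400/1281$
  – Hence $\pi(0,2) = 625/1281 \approx 0.488$, $\pi(1,1) = 400/1281 \approx 0.312$, $\pi(2,0) = 256/1281 \approx 0.200$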
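A brute-force sketch of the closed product form: enumerate every state with $n_1 + n_2 = N$, weight it by $\rho_1^{n_1}\rho_2^{n_2}$, and normalize. Since the $\lambda_i$ must solve the rate equations, and $\lambda_1 = 0.5\lambda_1 + 0.4\lambda_2$ forces $\lambda_2/\lambda_1 = 5/4$, the sketch takes $\lambda = (4, 5)$ and asserts that it satisfies both equations before normalizing. Enumeration like this is only practical for tiny networks; the helper names are illustrative.

```python
from fractions import Fraction
from itertools import product

def satisfies_rate_equations(lams, P):
    """Check lambda_i = sum_j lambda_j P[j][i] for every server i."""
    k = len(lams)
    return all(lams[i] == sum(lams[j] * P[j][i] for j in range(k)) for i in range(k))

def closed_jackson(lams, mus, N):
    """Return C and the probabilities C * prod_i rho_i^{n_i} over all states with sum N."""
    rhos = [Fraction(l) / Fraction(m) for l, m in zip(lams, mus)]
    weights = {}
    for ns in product(range(N + 1), repeat=len(rhos)):
        if sum(ns) == N:
            w = Fraction(1)
            for rho, n in zip(rhos, ns):
                w *= rho ** n
            weights[ns] = w
    C = 1 / sum(weights.values())
    return C, {ns: C * w for ns, w in weights.items()}

P = [[Fraction(1, 2), Fraction(1, 2)], [Fraction(2, 5), Fraction(3, 5)]]
lams, mus = [4, 5], [5, 4]
assert satisfies_rate_equations(lams, P)
C, pi = closed_jackson(lams, mus, N=2)
print(C, pi)
```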

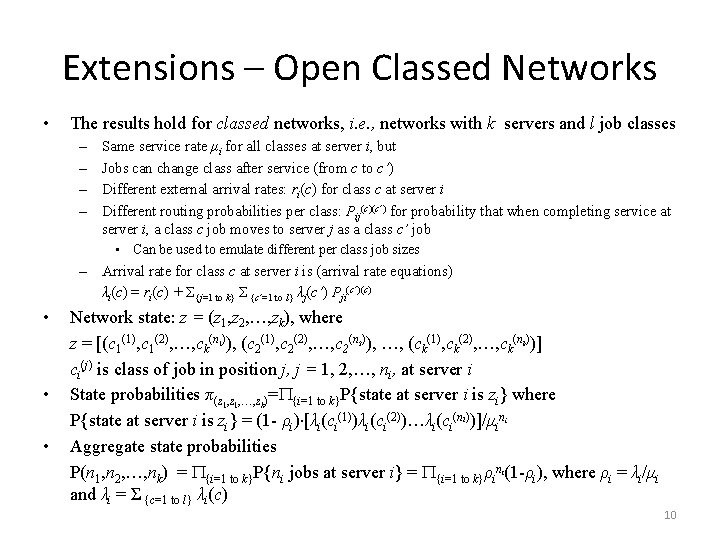

Extensions – Open Classed Networks
• The results hold for classed networks, i.e., networks with $k$ servers and $l$ job classes
  – Same service rate $\mu_i$ for all classes at server $i$, but
  – Jobs can change class after service (from $c$ to $c'$)
  – Different external arrival rates: $r_i(c)$ for class $c$ at server $i$
  – Different routing probabilities per class: $P_{ij}^{(c)(c')}$ is the probability that, when completing service at server $i$, a class $c$ job moves to server $j$ as a class $c'$ job
• Class changes can be used to emulate different per-class job sizes
• The arrival rate for class $c$ at server $i$ is given by the arrival rate equations
  $\lambda_i(c) = r_i(c) + \sum_{j=1}^{k}\sum_{c'=1}^{l}\lambda_j(c')\,P_{ji}^{(c')(c)}$
• Network state: $z = (z_1, z_2, \ldots, z_k)$, where $z_i = (c_i(1), c_i(2), \ldots, c_i(n_i))$ and $c_i(j)$ is the class of the job in position $j$, $j = 1, 2, \ldots, n_i$, at server $i$
• State probabilities:
  $\pi(z_1, \ldots, z_k) = \prod_{i=1}^{k} P\{\text{state at server } i \text{ is } z_i\}$, where $P\{\text{state at server } i \text{ is } z_i\} = (1-\rho_i)\,\frac{\lambda_i(c_i(1))\,\lambda_i(c_i(2))\cdots\lambda_i(c_i(n_i))}{\mu_i^{n_i}}$
• Aggregate state probabilities:
  $P(n_1, n_2, \ldots, n_k) = \prod_{i=1}^{k} P\{n_i \text{ jobs at server } i\} = \prod_{i=1}^{k}\rho_i^{n_i}(1-\rho_i)$, where $\rho_i = \lambda_i/\mu_i$ and $\lambda_i = \sum_{c=1}^{l}\lambda_i(c)$
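The aggregate formula follows because summing $\prod_j \lambda_i(c_i(j))$ over all $l^{n}$ class sequences of length $n$ gives $\lambda_i^{n}$. The sketch below checks this identity exactly at one server, with hypothetical per-class rates $\lambda_i(1) = 1/4$, $\lambda_i(2) = 1/6$ and $\mu_i = 1$; classes are indexed from 0 in the code.

```python
from fractions import Fraction
from itertools import product

def server_state_prob(seq, lam_by_class, mu):
    """P{state at server i is z_i} = (1 - rho_i) * prod_j lambda_i(c_i(j)) / mu_i^{n_i}.

    seq lists the class of each job in queue order; classes are indexed 0..l-1.
    """
    rho = sum(lam_by_class) / mu
    prob = 1 - rho
    for c in seq:
        prob *= lam_by_class[c] / mu
    return prob

def prob_n_jobs(n, lam_by_class, mu):
    """Sum the detailed probability over all l^n class sequences of length n."""
    seqs = product(range(len(lam_by_class)), repeat=n)
    return sum((server_state_prob(s, lam_by_class, mu) for s in seqs), Fraction(0))

lam_by_class = [Fraction(1, 4), Fraction(1, 6)]   # hypothetical per-class rates at one server
mu = Fraction(1)
rho = sum(lam_by_class) / mu
for n in range(5):
    assert prob_n_jobs(n, lam_by_class, mu) == rho ** n * (1 - rho)
print("aggregate matches rho^n (1 - rho) for n = 0..4")
```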

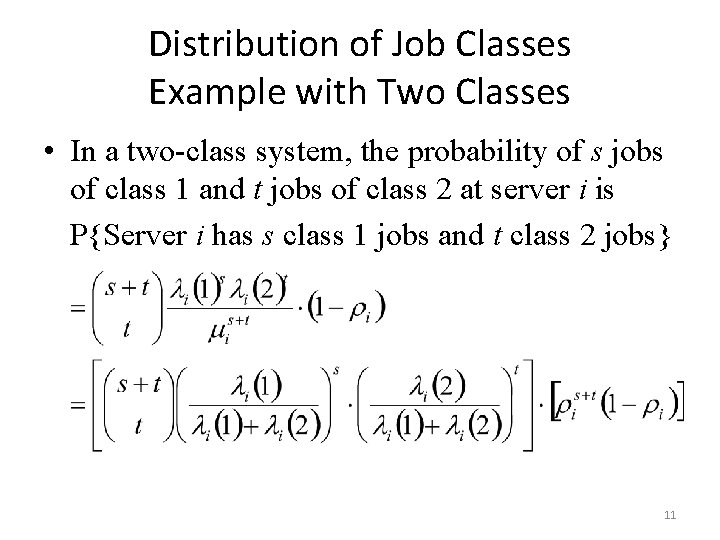

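Distribution of Job Classes – Example with Two Classes
• In a two-class system, the probability of $s$ jobs of class 1 and $t$ jobs of class 2 at server $i$ is
  $P\{\text{server } i \text{ has } s \text{ class 1 jobs and } t \text{ class 2 jobs}\} = \binom{s+t}{s}\left(\frac{\lambda_i(1)}{\lambda_i}\right)^{s}\left(\frac{\lambda_i(2)}{\lambda_i}\right)^{t}\rho_i^{s+t}(1-\rho_i)$
  – This follows by summing the per-server state probability over the $\binom{s+t}{s}$ orderings of the jobs at server $i$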
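A sketch of the two-class distribution $\binom{s+t}{s}(\lambda_i(1)/\lambda_i)^s(\lambda_i(2)/\lambda_i)^t\rho_i^{s+t}(1-\rho_i)$, which follows from summing the per-server state probability over the orderings of the jobs in the queue. It uses hypothetical rates $\lambda_i(1) = 1/4$, $\lambda_i(2) = 1/6$, $\mu_i = 1$; summing over $s + t = n$ should, by the binomial theorem, give back the aggregate probability $\rho_i^n(1-\rho_i)$.

```python
from fractions import Fraction
from math import comb

def two_class_prob(s, t, lam1, lam2, mu):
    """P{s class-1 and t class-2 jobs at a server} in an open two-class Jackson network."""
    lam = lam1 + lam2
    rho = lam / mu
    return comb(s + t, s) * (lam1 / lam) ** s * (lam2 / lam) ** t * rho ** (s + t) * (1 - rho)

lam1, lam2, mu = Fraction(1, 4), Fraction(1, 6), Fraction(1)
print(two_class_prob(1, 1, lam1, lam2, mu))  # 7/144
rho = (lam1 + lam2) / mu
for n in range(6):
    # Summing over s + t = n recovers the aggregate M/M/1 probability rho^n (1 - rho).
    assert sum(two_class_prob(s, n - s, lam1, lam2, mu) for s in range(n + 1)) == rho ** n * (1 - rho)
```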

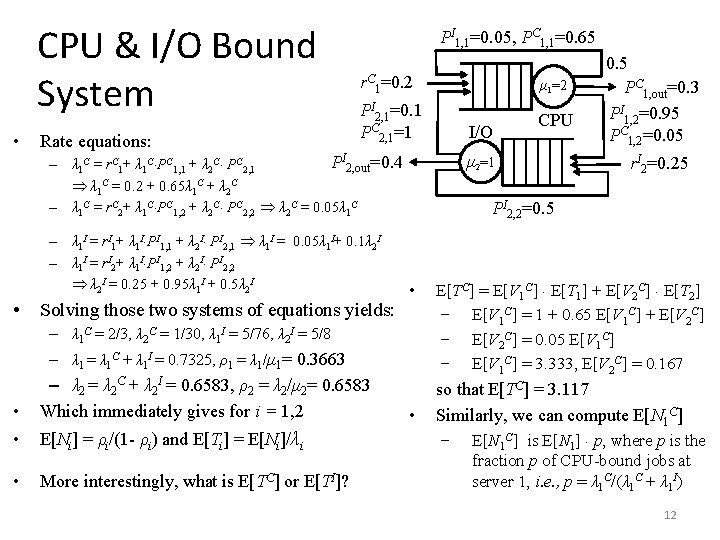

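CPU & I/O Bound System
[Figure: CPU (server 1, $\mu_1 = 2$) and I/O device (server 2, $\mu_2 = 1$). CPU-bound jobs (class C) arrive at the CPU with $r^C_1 = 0.2$; I/O-bound jobs (class I) arrive at the I/O device with $r^I_2 = 0.25$. Class C routing: $P^C_{1,1} = 0.65$, $P^C_{1,2} = 0.05$, $P^C_{1,\text{out}} = 0.3$, $P^C_{2,1} = 1$. Class I routing: $P^I_{1,1} = 0.05$, $P^I_{1,2} = 0.95$, $P^I_{2,1} = 0.1$, $P^I_{2,2} = 0.5$, $P^I_{2,\text{out}} = 0.4$]
• Rate equations:
  – $\lambda^C_1 = r^C_1 + \lambda^C_1 P^C_{1,1} + \lambda^C_2 P^C_{2,1}$, i.e., $\lambda^C_1 = 0.2 + 0.65\lambda^C_1 + \lambda^C_2$
  – $\lambda^C_2 = r^C_2 + \lambda^C_1 P^C_{1,2} + \lambda^C_2 P^C_{2,2}$, i.e., $\lambda^C_2 = 0.05\lambda^C_1$
  – $\lambda^I_1 = r^I_1 + \lambda^I_1 P^I_{1,1} + \lambda^I_2 P^I_{2,1}$, i.e., $\lambda^I_1 = 0.05\lambda^I_1 + 0.1\lambda^I_2$
  – $\lambda^I_2 = r^I_2 + \lambda^I_1 P^I_{1,2} + \lambda^I_2 P^I_{2,2}$, i.e., $\lambda^I_2 = 0.25 + 0.95\lambda^I_1 + 0.5\lambda^I_2$
• Solving those two systems of equations yields:
  – $\lambda^C_1 = 2/3$, $\lambda^C_2 = 1/30$, $\lambda^I_1 = 5/76$, $\lambda^I_2 = 5/8$
  – $\lambda_1 = \lambda^C_1 + \lambda^I_1 \approx 0.7325$, $\rho_1 = \lambda_1/\mu_1 \approx 0.3662$
  – $\lambda_2 = \lambda^C_2 + \lambda^I_2 \approx 0.6583$, $\rho_2 = \lambda_2/\mu_2 \approx 0.6583$
• This immediately gives, for $i = 1, 2$: $E[N_i] = \rho_i/(1-\rho_i)$ and $E[T_i] = E[N_i]/\lambda_i$
• More interestingly, what is $E[T^C]$ or $E[T^I]$?
  – $E[T^C] = E[V^C_1]E[T_1] + E[V^C_2]E[T_2]$, where $E[V^C_1] = 1 + 0.65E[V^C_1] + E[V^C_2]$ and $E[V^C_2] = 0.05E[V^C_1]$
  – Hence $E[V^C_1] = 10/3 \approx 3.333$ and $E[V^C_2] = 1/6 \approx 0.167$, so that $E[T^C] \approx 3.118$
  – Similarly, $E[N^C_1] = E[N_1]\,p$, where $p = \lambda^C_1/(\lambda^C_1 + \lambda^I_1)$ is the fraction of CPU-bound jobs at server 1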
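The numbers on this slide can be reproduced exactly. The sketch solves each class's rate equations with `Fraction`s (no class switching occurs in this example, so the classes decouple), then uses $E[T_i] = E[N_i]/\lambda_i = 1/(\mu_i - \lambda_i)$ and $E[V^C_i] = \lambda^C_i / r^C$, where $r^C = 0.2$ is the total external rate of CPU-bound jobs. The data layout and helper names are assumptions of the sketch.

```python
from fractions import Fraction as F

def solve_linear(A, b):
    """Solve A x = b exactly with Gauss-Jordan elimination (A assumed non-singular)."""
    n = len(A)
    M = [[F(v) for v in row] + [F(b[i])] for i, row in enumerate(A)]
    for col in range(n):
        piv = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[piv] = M[piv], M[col]
        for r in range(n):
            if r != col and M[r][col] != 0:
                f = M[r][col] / M[col][col]
                M[r] = [a - f * c for a, c in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]

def class_rates(r, P):
    """Rate equations for one class: lambda_i = r_i + sum_j lambda_j P[j][i]."""
    k = len(r)
    A = [[(1 if i == j else 0) - P[j][i] for j in range(k)] for i in range(k)]
    return solve_linear(A, r)

MU = [F(2), F(1)]                                       # server 1 = CPU, server 2 = I/O
R_C, P_C = [F("0.2"), F(0)], [[F("0.65"), F("0.05")], [F(1), F(0)]]
R_I, P_I = [F(0), F("0.25")], [[F("0.05"), F("0.95")], [F("0.1"), F("0.5")]]

lam_C = class_rates(R_C, P_C)                           # class C rates at servers 1, 2
lam_I = class_rates(R_I, P_I)                           # class I rates at servers 1, 2
lam = [a + b for a, b in zip(lam_C, lam_I)]             # total rate lambda_i per server
ET = [1 / (mu - l) for mu, l in zip(MU, lam)]           # E[T_i] = 1/(mu_i - lambda_i)
visits_C = [l / sum(R_C) for l in lam_C]                # E[V_i^C] = lambda_i^C / r^C
ET_C = sum(v * t for v, t in zip(visits_C, ET))         # E[T^C] = sum_i E[V_i^C] E[T_i]
rho1 = lam[0] / MU[0]
EN1_C = rho1 / (1 - rho1) * lam_C[0] / lam[0]           # E[N_1^C] = E[N_1] * p

print(lam_C, lam_I, [float(x) for x in lam])
print(float(ET_C), float(EN1_C))
```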

Back to Closed Networks
• Recall that in a closed Jackson network with $k$ servers and $N$ jobs, the state probabilities are of the form
  $P(n_1, n_2, \ldots, n_k) = C\,\rho_1^{n_1}\rho_2^{n_2}\cdots\rho_k^{n_k}$, where $\rho_i = \lambda_i/\mu_i$ and $C^{-1} = \sum_{n_1 + \cdots + n_k = N}\rho_1^{n_1}\rho_2^{n_2}\cdots\rho_k^{n_k}$ is a normalization constant
• Solving for the $\lambda_i$'s calls for solving a system of $k$ simultaneous rate equations $\lambda_i = \sum_j \lambda_j P_{ji}$
• Computing $C$ calls for adding up a total of $\binom{N+k-1}{k-1}$ terms, one per state
  – This grows combinatorially with $N$ and $k$ (roughly as $N^{k-1}$ for fixed $k$)
• We need a better approach
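The number of terms in $C^{-1}$ is the number of ways to place $N$ indistinguishable jobs at $k$ servers, $\binom{N+k-1}{k-1}$. The sketch compares that closed form with brute-force enumeration on tiny cases and prints a few larger counts; the function names are illustrative.

```python
from itertools import product
from math import comb

def num_terms(N, k):
    """Number of states (n_1, ..., n_k) with n_i >= 0 and sum N: C(N+k-1, k-1)."""
    return comb(N + k - 1, k - 1)

def num_terms_brute(N, k):
    """Same count by enumeration (only feasible for tiny N and k)."""
    return sum(1 for ns in product(range(N + 1), repeat=k) if sum(ns) == N)

for N, k in [(2, 2), (3, 4), (5, 3)]:
    assert num_terms(N, k) == num_terms_brute(N, k)

for N, k in [(10, 5), (50, 10), (100, 20)]:
    print(N, k, num_terms(N, k))
```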

Arrival Theorem
• In a closed Jackson network with $M > 1$ jobs, an arrival to (any) server $j$ sees a distribution of the number of jobs at each server equal to the steady-state distribution of the number of jobs at each server in the same network with only $M - 1$ jobs
  – In particular, the mean number of jobs the arrival sees at server $j$ is $E[N_j(M-1)]$
• We can use the Arrival Theorem to derive a recursion for the mean response time at server $j$
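The Arrival Theorem can be checked exactly on small networks. An arrival at server $j$ is a departure from some server $i$ with $n_i > 0$ routed to $j$, which happens at rate $\pi(n)\mu_i P_{ij}$; excluding itself, the arriving job sees $n - e_i$. The sketch builds that "seen" distribution with $M$ jobs and compares it with the product-form distribution for $M - 1$ jobs. It assumes the $\rho_i$ passed in come from a solution of the rate equations; the test network (a two-server cycle with $\mu = (1, 2)$) is an illustrative choice.

```python
from fractions import Fraction
from itertools import product

def closed_pi(rhos, M):
    """Product-form distribution C * prod_i rho_i^{n_i} over states with n_1 + ... + n_k = M."""
    w = {}
    for ns in product(range(M + 1), repeat=len(rhos)):
        if sum(ns) == M:
            x = Fraction(1)
            for rho, n in zip(rhos, ns):
                x *= rho ** n
            w[ns] = x
    total = sum(w.values())
    return {ns: x / total for ns, x in w.items()}

def seen_by_arrival(rhos, mus, P, M, j):
    """Distribution of the other jobs' positions seen by a job arriving at server j (M jobs)."""
    seen = {}
    for ns, p in closed_pi(rhos, M).items():
        for i, n_i in enumerate(ns):
            if n_i > 0 and P[i][j] != 0:
                # departure from i routed to j at rate pi(n) * mu_i * P[i][j]; the job sees n - e_i
                before = tuple(n - (1 if idx == i else 0) for idx, n in enumerate(ns))
                seen[before] = seen.get(before, Fraction(0)) + p * mus[i] * P[i][j]
    total = sum(seen.values())
    return {ns: x / total for ns, x in seen.items()}

# Two-server cycle with mu = (1, 2): visit ratios (1, 1), so rho = (1, 1/2).
mus, P, rhos = [1, 2], [[0, 1], [1, 0]], [Fraction(1), Fraction(1, 2)]
print(seen_by_arrival(rhos, mus, P, 3, j=1) == closed_pi(rhos, 2))  # True
```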


Mean Value Analysis
• A simple recursive approach to computing $E[T_i(M)]$ (and $E[N_i(M)]$) in a system with $M > 1$ jobs and $k$ servers:
  $E[T_i(M)] = \frac{1}{\mu_i}\left(1 + p_i\,\lambda(M-1)\,E[T_i(M-1)]\right)$, where
  – $\lambda(M-1)$ is the total arrival rate to all servers
  – $p_i = \lambda_i(M-1)/\lambda(M-1)$ is the fraction of those arrivals headed for server $i$ ($p_i$ is independent of $M$)
  – $p_i = V_i/\sum_{j=1}^{k} V_j$, where $V_i$ is the number of visits to server $i$ per job completion
• In a system with $k$ servers, $\lambda(M)$ is given by
  $\lambda(M) = \sum_{i=1}^{k}\lambda_i(M) = \frac{M}{\sum_{i=1}^{k} p_i E[T_i(M)]}$   (*)
  – Based on Little's Law and the fact that $M = \sum_{i=1}^{k} E[N_i(M)]$
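The fractions $p_i$ come from the visit ratios, which solve $V = VP$ only up to a constant. A sketch that fixes $V_1 = 1$, solves the remaining equations exactly, and normalizes; it assumes the routing matrix is irreducible so that the reduced system is non-singular. The helper names are hypothetical.

```python
from fractions import Fraction

def solve_linear(A, b):
    """Solve A x = b exactly with Gauss-Jordan elimination (A assumed non-singular)."""
    n = len(A)
    M = [[Fraction(v) for v in row] + [Fraction(b[i])] for i, row in enumerate(A)]
    for col in range(n):
        piv = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[piv] = M[piv], M[col]
        for r in range(n):
            if r != col and M[r][col] != 0:
                f = M[r][col] / M[col][col]
                M[r] = [a - f * c for a, c in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]

def visit_fractions(P):
    """p_i = V_i / sum_j V_j, where V = V P and the first server has V = 1.

    Assumes the routing matrix P (rows sum to 1) is irreducible.
    """
    P = [[Fraction(x) for x in row] for row in P]
    k = len(P)
    # Unknowns V[1..k-1] (0-based): V[i] - sum_{j>=1} V[j] P[j][i] = V[0] P[0][i] = P[0][i]
    A = [[(1 if i == j else 0) - P[j][i] for j in range(1, k)] for i in range(1, k)]
    b = [P[0][i] for i in range(1, k)]
    V = [Fraction(1)] + solve_linear(A, b)
    return [v / sum(V) for v in V]

print(visit_fractions([[0, 1], [1, 0]]))                  # two-server cycle
print(visit_fractions([["1/2", "1/2"], ["2/5", "3/5"]]))  # two servers with feedback
```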


Mean Value Analysis – Recursion
• Initial condition of recursion: $E[T_j(1)] = 1/\mu_j$
• Recursive step:
  $E[T_j(M)] = 1/\mu_j + E[\text{number at server } j \text{ seen by an arrival at } j]/\mu_j$
  $\quad = 1/\mu_j + E[N_j(M-1)]/\mu_j$   (by the Arrival Theorem)
  $\quad = 1/\mu_j + \lambda_j(M-1)E[T_j(M-1)]/\mu_j$   (by Little's Law)
  $\quad = 1/\mu_j + p_j\lambda(M-1)E[T_j(M-1)]/\mu_j$   (since $p_j = \lambda_j(M-1)/\lambda(M-1)$)
• The next step is to compute $\lambda(M-1)$ using Little's Law and the fact that
  $M-1 = \sum_{j=1}^{k} E[N_j(M-1)] = \sum_{j=1}^{k}\lambda_j(M-1)E[T_j(M-1)] = \sum_{j=1}^{k} p_j\lambda(M-1)E[T_j(M-1)] = \lambda(M-1)\sum_{j=1}^{k} p_j E[T_j(M-1)]$
  so that $\lambda(M-1) = \frac{M-1}{\sum_{j=1}^{k} p_j E[T_j(M-1)]}$
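The recursion translates almost line for line into code. A minimal sketch of exact MVA for single-server exponential stations, taking the service rates $\mu_i$, the fractions $p_i$, and $M$ as inputs; `Fraction` keeps the results exact so they can be compared with hand calculations. The function name is illustrative.

```python
from fractions import Fraction

def mva(mus, ps, M):
    """Exact MVA for a closed network of single-server exponential stations.

    mus[i] is the service rate mu_i and ps[i] the visit fraction p_i.
    Returns (E[T_i(M)] list, lambda(M), E[N_i(M)] list).
    """
    T = [Fraction(1) / mu for mu in mus]              # E[T_i(1)] = 1/mu_i
    lam = 1 / sum(p * t for p, t in zip(ps, T))       # lambda(1) from (*)
    for m in range(2, M + 1):
        # E[T_i(m)] = (1 + p_i lambda(m-1) E[T_i(m-1)]) / mu_i, using the previous lam and T
        T = [(1 + p * lam * t) / mu for mu, p, t in zip(mus, ps, T)]
        lam = m / sum(p * t for p, t in zip(ps, T))   # lambda(m) from (*)
    N = [p * lam * t for p, t in zip(ps, T)]          # E[N_i(M)] = p_i lambda(M) E[T_i(M)] (Little)
    return T, lam, N

T, lam, N = mva([1, 2], [Fraction(1, 2), Fraction(1, 2)], M=3)
print(T, lam, N)
```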


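MVA Example
(Recall: $E[T_i(M)] = \frac{1}{\mu_i}\left(1 + p_i\lambda(M-1)E[T_i(M-1)]\right)$ and $\lambda(M) = M/\sum_{i=1}^{k} p_i E[T_i(M)]$ (*))
[Figure: closed cycle of two servers with $M = 3$ jobs; server 1 has rate $\mu = 1$ and server 2 has rate $2\mu = 2$]
• What are $E[N_1(3)]$ and $E[N_2(3)]$ for $M = 3$?
  – Note that in this system $p_1 = p_2 = 1/2$ (each job visits each server once per cycle, so each server sees half of all job visits to servers in the system)
• Recursion for $E[T_1(i)]$ and $E[T_2(i)]$
  – $E[T_1(1)] = 1/\mu_1 = 1$, $E[T_2(1)] = 1/\mu_2 = 1/2$, $\lambda(1) = 4/3$ (by (*))
  – $E[T_1(2)] = 1 + (1/2 \cdot 4/3 \cdot 1)/1 = 5/3$, $E[T_2(2)] = 1/2 + (1/2 \cdot 4/3 \cdot 1/2)/2 = 2/3$, $\lambda(2) = 12/7$
  – $E[T_1(3)] = 1 + (1/2 \cdot 12/7 \cdot 5/3)/1 = 17/7$, $E[T_2(3)] = 1/2 + (1/2 \cdot 12/7 \cdot 2/3)/2 = 11/14$, $\lambda(3) = 28/15$
• This gives $E[N_1(3)] = E[T_1(3)]\lambda_1(3) = E[T_1(3)]p_1\lambda(3) = 17/7 \cdot 1/2 \cdot 28/15 = 34/15$
  – Similarly, $E[N_2(3)] = 11/14 \cdot 1/2 \cdot 28/15 = 11/15$, and $E[N_1(3)] + E[N_2(3)] = 3 = M$, as expected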
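As an independent cross-check, the same means follow from the closed product form: with visit ratios $V = (1, 1)$, the sketch takes $\rho_i = V_i/\mu_i = (1, 1/2)$ and averages $n_i$ over the four states with $n_1 + n_2 = 3$. Enumeration like this is exactly what MVA avoids for larger networks; the helper name is illustrative.

```python
from fractions import Fraction
from itertools import product

def closed_mean_jobs(rhos, M):
    """E[N_i] under the closed product form, by enumerating all states with sum M."""
    states = [ns for ns in product(range(M + 1), repeat=len(rhos)) if sum(ns) == M]
    weights = []
    for ns in states:
        w = Fraction(1)
        for rho, n in zip(rhos, ns):
            w *= rho ** n
        weights.append(w)
    total = sum(weights)
    return [sum(w * ns[i] for w, ns in zip(weights, states)) / total for i in range(len(rhos))]

# Visit ratios V = (1, 1) for the two-server cycle, so rho_i = V_i / mu_i.
rhos = [Fraction(1, 1), Fraction(1, 2)]
print(closed_mean_jobs(rhos, 3))  # [Fraction(34, 15), Fraction(11, 15)]
```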

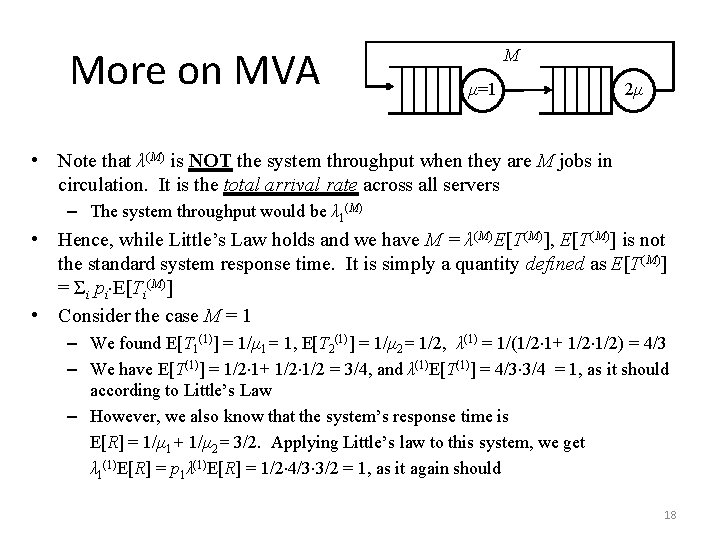

More on MVA
[Figure: the same two-server cycle with $M$ jobs, $\mu_1 = \mu = 1$ and $\mu_2 = 2\mu$]
• Note that $\lambda(M)$ is NOT the system throughput when there are $M$ jobs in circulation. It is the total arrival rate across all servers
  – The system throughput would be $\lambda_1(M)$ (every job visits server 1 exactly once per cycle)
• Hence, while Little's Law holds and we have $M = \lambda(M)E[T(M)]$, $E[T(M)]$ is not the standard system response time. It is simply a quantity defined as $E[T(M)] = \sum_i p_i E[T_i(M)]$
• Consider the case $M = 1$
  – We found $E[T_1(1)] = 1/\mu_1 = 1$, $E[T_2(1)] = 1/\mu_2 = 1/2$, $\lambda(1) = 1/(1/2 \cdot 1 + 1/2 \cdot 1/2) = 4/3$
  – We have $E[T(1)] = 1/2 \cdot 1 + 1/2 \cdot 1/2 = 3/4$, and $\lambda(1)E[T(1)] = 4/3 \cdot 3/4 = 1$, as it should be according to Little's Law
  – However, we also know that the system's response time is $E[R] = 1/\mu_1 + 1/\mu_2 = 3/2$. Applying Little's Law to this system, we get $\lambda_1(1)E[R] = p_1\lambda(1)E[R] = 1/2 \cdot 4/3 \cdot 3/2 = 1$, as it again should
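The distinction between $\lambda(M)$ and the throughput can be checked numerically. The sketch reruns the MVA recursion for the two-server cycle and, for several $M$, compares Little's Law in both forms: $\lambda(M)E[T(M)]$ and $\lambda_1(M)E[R(M)]$, where $E[R(M)] = \sum_i (V_i/V_1)E[T_i(M)] = E[T(M)]/p_1$ is the time for one full cycle. The helper is a compact MVA loop written for this illustration.

```python
from fractions import Fraction

def mva(mus, ps, M):
    """Compact exact MVA loop: returns (E[T_i(M)] list, lambda(M))."""
    T = [Fraction(1) / mu for mu in mus]
    lam = 1 / sum(p * t for p, t in zip(ps, T))
    for m in range(2, M + 1):
        T = [(1 + p * lam * t) / mu for mu, p, t in zip(mus, ps, T)]
        lam = m / sum(p * t for p, t in zip(ps, T))
    return T, lam

mus, ps = [1, 2], [Fraction(1, 2), Fraction(1, 2)]   # two-server cycle, mu = 1 and 2*mu = 2
results = {}
for M in range(1, 5):
    T, lam = mva(mus, ps, M)
    ET = sum(p * t for p, t in zip(ps, T))   # E[T(M)] = sum_i p_i E[T_i(M)]
    X = ps[0] * lam                           # system throughput lambda_1(M)
    R = ET / ps[0]                            # E[R(M)] = sum_i (V_i/V_1) E[T_i(M)]
    results[M] = (lam, ET, X, R)
    print(M, lam, X, R, lam * ET, X * R)      # the last two both equal M
```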
