Brno University of Technology Speech@FIT

Lukáš Burget, Michal Fapšo, Valiantsina Hubeika, Ondřej Glembek, Martin Karafiát, Marcel Kockmann, Pavel Matějka, Petr Schwarz and Honza Černocký

NIST Speaker Recognition Workshop 2008

MOBIO NIST SRE 2008

Outline
• Submitted systems
• Factor Analysis systems
• SVM-MLLR system
• Side information based calibration and fusion
• Contribution of subsystems in fusion
• Analysis of FA system
  – Gender dependent vs. independent
  – Flavors of FA system
  – Sensitivity to number of eigenchannels
  – Importance of ZT-norm
  – Optimization for microphone data
• Techniques that did not make it to the submission
• Conclusion

Submitted systems
• BUT 01 - primary (3 systems)
  – Channel and language side information in fusion
  – FA-MFCC 13 39
  – FA-MFCC 20 60
  – SVM-MLLR
• BUT 02 (3 systems)
  – The same as BUT 01, but no side information in fusion
• BUT 03 (2 systems)
  – Channel and language side information in fusion
  – FA-MFCC 13 39
  – FA-MFCC 20 60

FA-MFCC 13 39 system
• MAP adapted UBM with 2048 Gaussian components
  – Single UBM trained on Switchboard and NIST 2004 and 2005 data
• 12 MFCC + C0 (20 ms window, 10 ms shift)
• Short time Gaussianization
  – Rank of the current frame coefficient in a 3 sec window, transformed by the inverse Gaussian cumulative distribution function
• Delta + double delta + triple delta coefficients
  – Together 52 coefficients, 12 frames context
• HLDA (dimensionality reduction from 52 to 39)
• Factor Analysis model, gender independent
  – 300 eigenvoices (Switchboards, NIST 2004 and 2005)
  – 100 eigenchannels for telephone speech (NIST 2004 and 2005 tel data)
  – 100 eigenchannels for microphone speech (NIST 2005 mic data)
• ZT-norm, gender dependent
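The short time Gaussianization step above can be sketched in a few lines. This is only an illustration under stated assumptions, not the BUT implementation: the function name `short_time_gaussianize` is hypothetical, the window is taken as centred on the current frame, 3 sec is taken as 300 frames at the 10 ms shift, and the rank is mapped to a probability with a simple `(rank - 0.5) / N` rule.

```python
from statistics import NormalDist


def short_time_gaussianize(track, win=300):
    """Warp one coefficient track (one float per frame).

    Assumption: a window of about `win` frames centred on the current frame
    (300 frames is roughly 3 sec at a 10 ms frame shift).
    """
    std_normal = NormalDist()  # zero mean, unit variance
    half = win // 2
    warped = []
    for t, value in enumerate(track):
        lo = max(0, t - half)
        hi = min(len(track), t + half + 1)
        window = track[lo:hi]
        # 1-based rank of the current value among the values in the window
        rank = 1 + sum(1 for v in window if v < value)
        # Map the rank into the open interval (0, 1) so inv_cdf stays finite
        p = (rank - 0.5) / len(window)
        # Inverse Gaussian CDF turns the rank into a normally distributed value
        warped.append(std_normal.inv_cdf(p))
    return warped


if __name__ == "__main__":
    # The middle-ranked value maps to (about) zero, the extremes to +/- tails
    print(short_time_gaussianize([3.0, 1.0, 2.0]))
```

Each of the 13 coefficient tracks would be warped independently in this way before the delta coefficients are appended.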

FA-MFCC 20 60 system
• The same as FA-MFCC 13 39 with the following differences:
  – 60 dimensional features: 19 MFCC + energy + deltas + double deltas (no HLDA)
  – Two gender dependent Factor Analysis models

![SVM – MLLR system Linear kernels Rank normalization Lib. SVM C++ library [Chang 2001] SVM – MLLR system Linear kernels Rank normalization Lib. SVM C++ library [Chang 2001]](http://slidetodoc.com/presentation_image_h2/bdb8586c75c4a721a719992fe0a7ce91/image-6.jpg)

SVM-MLLR system
• Linear kernels
• Rank normalization
• LIBSVM C++ library [Chang 2001]
• Pre-computed Gram matrices
• Features are MLLR transformations adapting an LVCSR system (developed for the AMI project) to the speaker of the given speech segment
• Estimation of MLLR transformations makes use of the ASR transcripts provided by NIST

SVM-MLLR system
• Cascade of CMLLR and MLLR
  – 2 CMLLR transformations (silence and speech)
  – 3 MLLR transformations (silence and 2 phoneme clusters)
• Silence transformations are discarded for SRE
• Supervector = 1 CMLLR + 2 MLLR, with dimension $3 \cdot 39^2 + 3 \cdot 39 = 4680$
• Impostors: NIST 2004 + mic data from NIST 2005
• ZT-norm: speakers from NIST 2004
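The supervector size quoted on this slide can be checked with a short calculation. The sketch assumes, as the formula suggests, that every retained transformation is an affine transform of 39-dimensional features (a 39 × 39 matrix plus a 39-dimensional bias); the function names are illustrative only.

```python
FEATURE_DIM = 39  # assumed feature dimensionality of the adapted system


def transform_size(dim):
    # One affine transform: a dim x dim matrix plus a dim-dimensional bias
    return dim * dim + dim


def supervector_dim(num_transforms, dim=FEATURE_DIM):
    # Stack the parameters of all retained transforms into one vector
    return num_transforms * transform_size(dim)


if __name__ == "__main__":
    # 1 CMLLR (speech) + 2 MLLR (phoneme clusters); silence transforms dropped
    print(supervector_dim(1 + 2))  # 4680
```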

Side info based calibration and fusion
• Side information for each trial is given by its hard assignment to classes:
  – Trial channel condition provided by NIST: tel-tel, tel-mic, mic-tel, mic-mic
  – English/non-English decision given by our LID system
• Side information is used as follows:
  – For each system:
    • Split trials by channel condition and calibrate scores using linear logistic regression (LLR) in each split separately
    • Split trials according to the English/non-English decision and calibrate scores using LLR in each split separately
  – Fuse the calibrated scores of all subsystems using LLR without making use of any side information
• For convenience, the FoCal Bilinear toolkit by Niko Brümmer was used, although we did not make use of its extensions over standard LLR.
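A minimal sketch of the calibrate-per-class, then fuse procedure described above. It is not FoCal: it uses plain gradient descent on an unweighted logistic loss, splits only by channel condition (the language split would be handled the same way), and all function names and toy scores are invented for illustration.

```python
import math
from statistics import mean, pstdev


def sigmoid(x):
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_llr(rows, labels, steps=500, lr=0.5):
    """Fit an affine map from score vectors to log-odds-like scores.

    rows: list of score vectors; labels: 1 = target trial, 0 = non-target.
    """
    dim = len(rows[0])
    # Standardise inputs only to keep plain gradient descent well behaved
    mu = [mean([r[i] for r in rows]) for i in range(dim)]
    sd = [pstdev([r[i] for r in rows]) or 1.0 for i in range(dim)]
    z = [[(r[i] - mu[i]) / sd[i] for i in range(dim)] for r in rows]
    w, b = [0.0] * dim, 0.0
    for _ in range(steps):
        gw, gb = [0.0] * dim, 0.0
        for x, y in zip(z, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            gw = [g + err * xi for g, xi in zip(gw, x)]
            gb += err
        w = [wi - lr * g / len(z) for wi, g in zip(w, gw)]
        b -= lr * gb / len(z)
    # Fold the standardisation back into weights on the raw scores
    w_raw = [wi / s for wi, s in zip(w, sd)]
    b_raw = b - sum(wi * m for wi, m in zip(w_raw, mu))
    return w_raw, b_raw


def apply_llr(model, row):
    w, b = model
    return sum(wi * xi for wi, xi in zip(w, row)) + b


def calibrate_per_class(scores, labels, classes):
    """Train a separate scale and offset for each side-information class."""
    out = [0.0] * len(scores)
    for c in set(classes):
        idx = [i for i, ci in enumerate(classes) if ci == c]
        model = train_llr([[scores[i]] for i in idx], [labels[i] for i in idx])
        for i in idx:
            out[i] = apply_llr(model, [scores[i]])
    return out


# Toy trials: the mic-mic scores of both subsystems are shifted, so a single
# global threshold on the raw scores would fail.
labels = [1, 1, 1, 0, 0, 0] * 2
channel = ["tel-tel"] * 6 + ["mic-mic"] * 6
sys_a = [1.0, 2.0, 3.0, -1.0, -2.0, -3.0, 11.0, 12.0, 13.0, 9.0, 8.0, 7.0]
sys_b = [0.5, 1.5, 1.0, -0.5, -1.5, -1.0, -4.5, -3.5, -4.0, -5.5, -6.5, -6.0]

cal_a = calibrate_per_class(sys_a, labels, channel)
cal_b = calibrate_per_class(sys_b, labels, channel)

# Fusion: one LLR over the calibrated scores, with no side information
fusion = train_llr(list(zip(cal_a, cal_b)), labels)
fused = [apply_llr(fusion, row) for row in zip(cal_a, cal_b)]
```

After the per-class calibration the scores from different channel conditions share a common scale, which is what lets the fusion stage ignore the side information.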

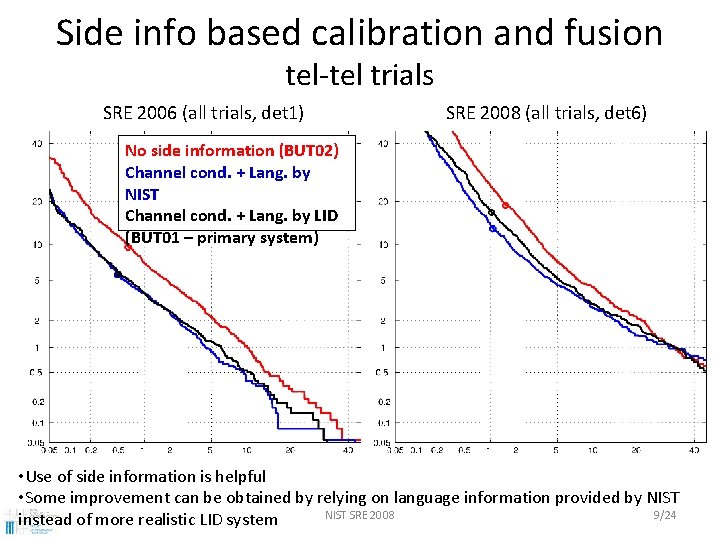

Side info based calibration and fusion: tel-tel trials
Plots: SRE 2006 (all trials, det 1); SRE 2008 (all trials, det 6)
Systems compared: No side information (BUT 02); Channel cond. + Lang. by NIST; Channel cond. + Lang. by LID (BUT 01, primary system)
• Use of side information is helpful
• Some improvement can be obtained by relying on the language information provided by NIST instead of the more realistic LID system

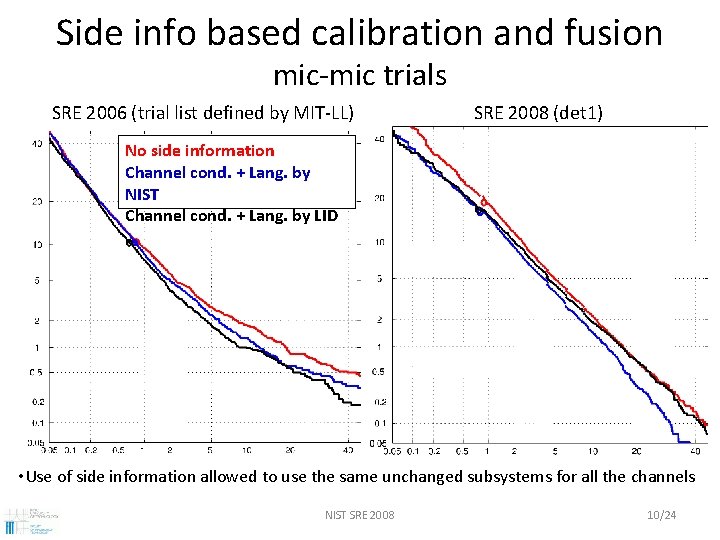

Side info based calibration and fusion: mic-mic trials
Plots: SRE 2006 (trial list defined by MIT-LL); SRE 2008 (det 1)
Systems compared: No side information; Channel cond. + Lang. by NIST; Channel cond. + Lang. by LID
• Use of side information allowed us to use the same unchanged subsystems for all channels

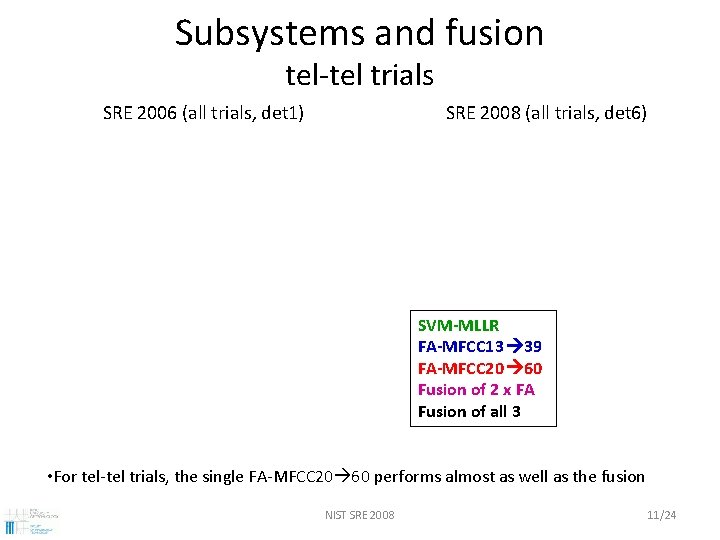

Subsystems and fusion: tel-tel trials
Plots: SRE 2006 (all trials, det 1); SRE 2008 (all trials, det 6)
Systems compared: SVM-MLLR; FA-MFCC 13 39; FA-MFCC 20 60; Fusion of 2 x FA; Fusion of all 3
• For tel-tel trials, the single FA-MFCC 20 60 system performs almost as well as the fusion

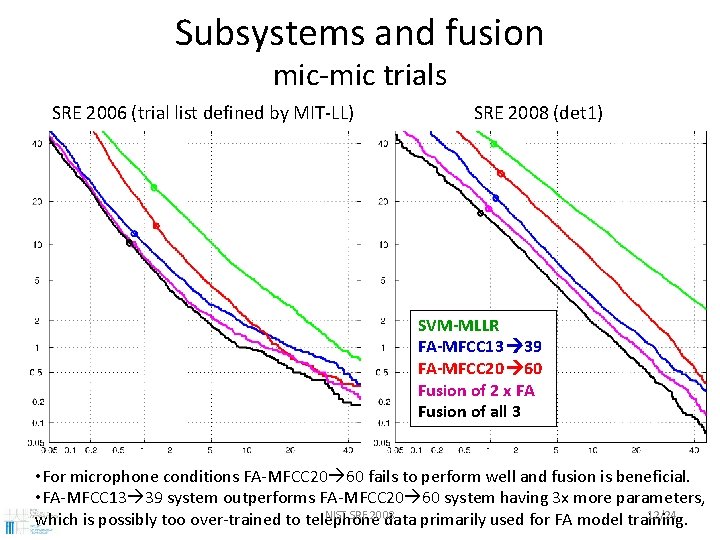

Subsystems and fusion: mic-mic trials
Plots: SRE 2006 (trial list defined by MIT-LL); SRE 2008 (det 1)
Systems compared: SVM-MLLR; FA-MFCC 13 39; FA-MFCC 20 60; Fusion of 2 x FA; Fusion of all 3
• For microphone conditions, FA-MFCC 20 60 fails to perform well and fusion is beneficial.
• The FA-MFCC 13 39 system outperforms the FA-MFCC 20 60 system, which has 3 x more parameters and is possibly over-trained on the telephone data primarily used for FA model training.

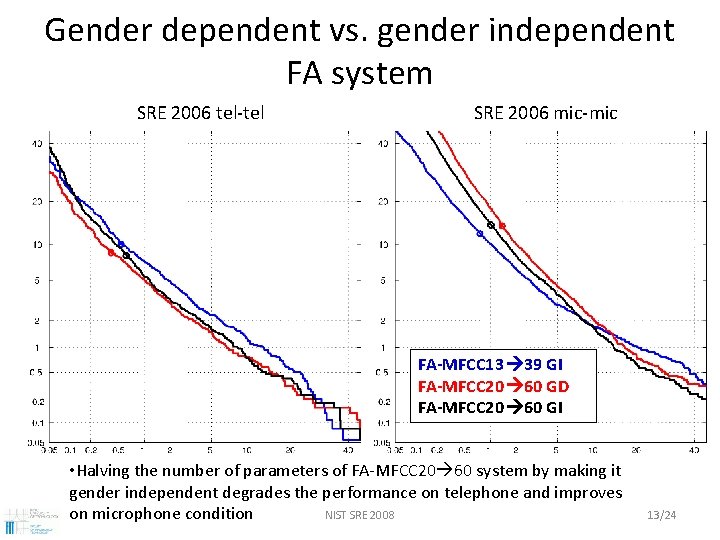

Gender dependent vs. gender independent FA system
Plots: SRE 2006 tel-tel; SRE 2006 mic-mic
Systems compared: FA-MFCC 13 39 GI; FA-MFCC 20 60 GD; FA-MFCC 20 60 GI
• Halving the number of parameters of the FA-MFCC 20 60 system by making it gender independent degrades the performance on the telephone condition and improves it on the microphone condition

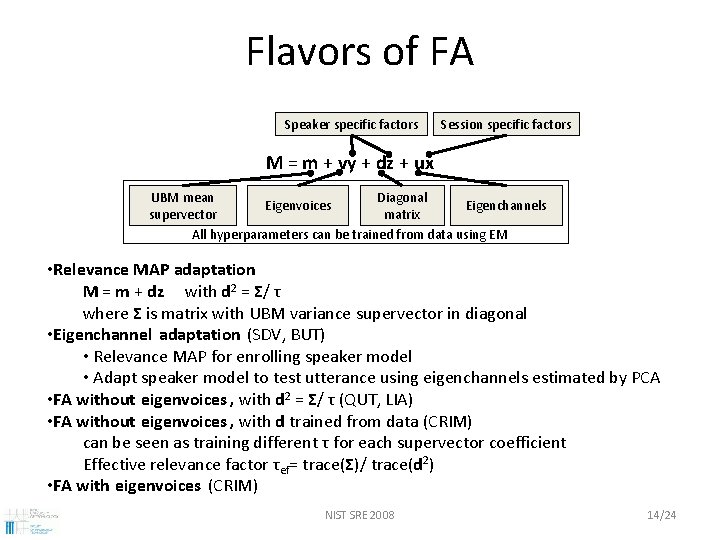

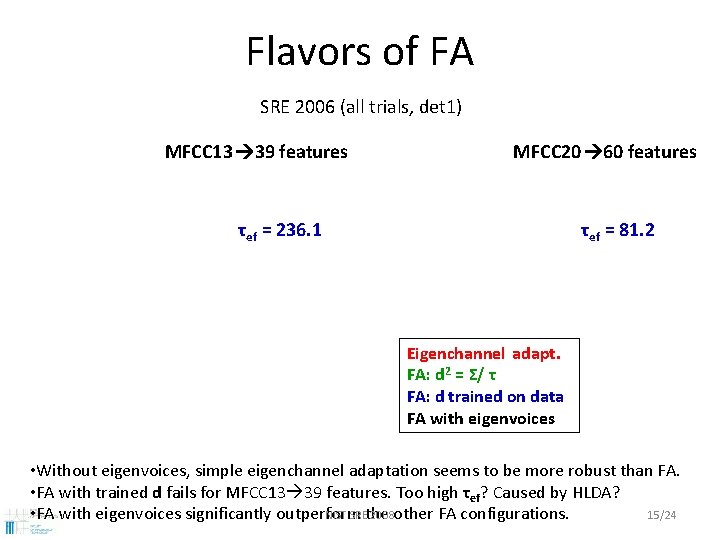

Flavors of FA

$M = m + vy + dz + ux$

where $m$ is the UBM mean supervector, $v$ the eigenvoices, $d$ a diagonal matrix and $u$ the eigenchannels; $y$ and $z$ are speaker specific factors and $x$ are session specific factors. All hyperparameters can be trained from data using EM.
• Relevance MAP adaptation
  – $M = m + dz$ with $d^2 = \Sigma / \tau$, where $\Sigma$ is the matrix with the UBM variance supervector on its diagonal
• Eigenchannel adaptation (SDV, BUT)
  – Relevance MAP for enrolling the speaker model
  – Adapt the speaker model to the test utterance using eigenchannels estimated by PCA
• FA without eigenvoices, with $d^2 = \Sigma / \tau$ (QUT, LIA)
• FA without eigenvoices, with $d$ trained from data (CRIM)
  – Can be seen as training a different $\tau$ for each supervector coefficient
  – Effective relevance factor $\tau_{ef} = \mathrm{trace}(\Sigma) / \mathrm{trace}(d^2)$
• FA with eigenvoices (CRIM)
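The decomposition M = m + vy + dz + ux and the effective relevance factor τef = trace(Σ)/trace(d²) can be made concrete with a toy example. This is a sketch on tiny hand-made matrices, not a trained model; the function names are illustrative, and the diagonal matrices d and Σ are stored as plain lists of their diagonal entries.

```python
def matvec(matrix, vector):
    # Multiply a matrix (list of rows) by a vector
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def synthesize_supervector(m, v, y, d_diag, z, u, x):
    """M = m + v*y + d*z + u*x, with d given by its diagonal."""
    vy = matvec(v, y)                          # speaker shift in eigenvoice space
    dz = [di * zi for di, zi in zip(d_diag, z)]  # residual speaker shift
    ux = matvec(u, x)                          # session (channel) shift
    return [a + b + c + e for a, b, c, e in zip(m, vy, dz, ux)]


def relevance_map_d2(variances, tau):
    # Relevance MAP corresponds to d^2 = Sigma / tau
    return [var / tau for var in variances]


def effective_relevance_factor(variances, d2_diag):
    # trace(Sigma) / trace(d^2); for diagonal matrices the trace is a sum
    return sum(variances) / sum(d2_diag)


if __name__ == "__main__":
    sigma = [1.0, 2.0, 4.0]  # toy UBM variance supervector
    d2 = relevance_map_d2(sigma, tau=16.0)
    # With d^2 = Sigma / tau the effective relevance factor recovers tau
    print(effective_relevance_factor(sigma, d2))
    # A d trained from data acts like a different tau per coefficient
    print(effective_relevance_factor(sigma, [1.0 / 10, 2.0 / 20, 4.0 / 40]))
```

For a d trained from data, τef summarizes the per-coefficient relevance factors in a single number, which is how the two feature sets are compared on the following slide.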

Flavors of FA
Plots: SRE 2006 (all trials, det 1)
Features: MFCC 13 39 ($\tau_{ef} = 236.1$); MFCC 20 60 ($\tau_{ef} = 81.2$)
Systems compared: Eigenchannel adapt.; FA: $d^2 = \Sigma / \tau$; FA: $d$ trained on data; FA with eigenvoices
• Without eigenvoices, simple eigenchannel adaptation seems to be more robust than FA.
• FA with trained $d$ fails for MFCC 13 39 features. Too high $\tau_{ef}$? Caused by HLDA?
• FA with eigenvoices significantly outperforms the other FA configurations.

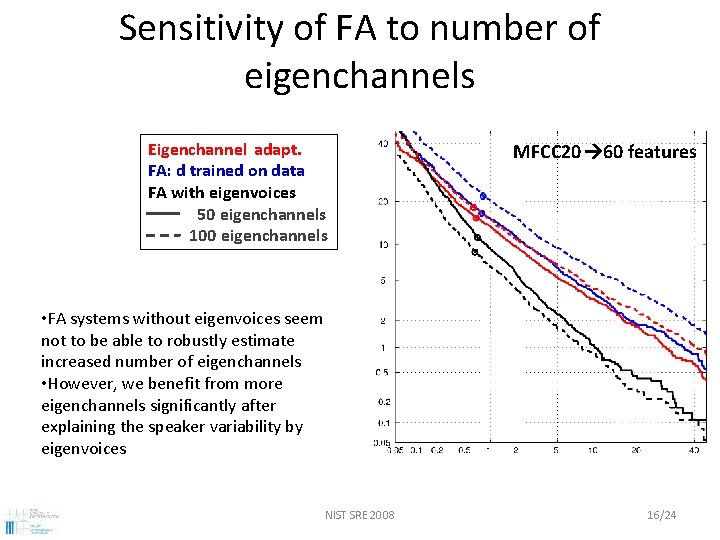

Sensitivity of FA to number of eigenchannels
Features: MFCC 20 60
Systems compared: Eigenchannel adapt.; FA: d trained on data; FA with eigenvoices (each with 50 and 100 eigenchannels)
• FA systems without eigenvoices do not seem able to robustly estimate an increased number of eigenchannels
• However, we benefit significantly from more eigenchannels once the speaker variability is explained by eigenvoices

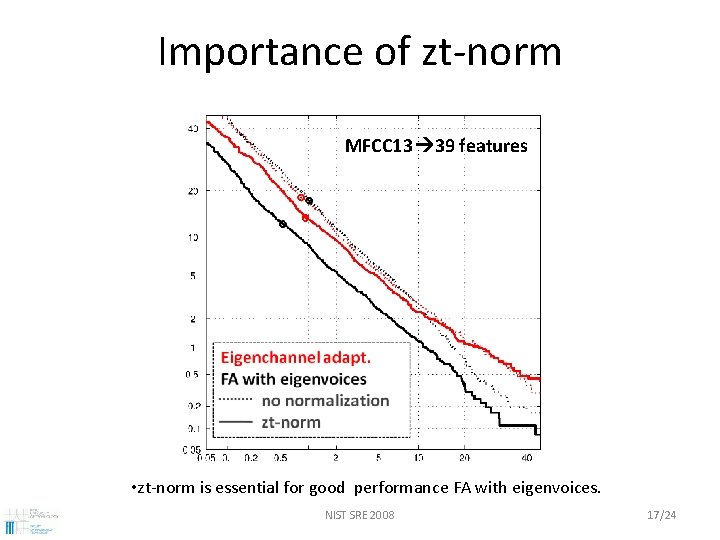

Importance of ZT-norm
Features: MFCC 13 39
• ZT-norm is essential for good performance of FA with eigenvoices.
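The slides do not spell out the normalization itself, so the following is a sketch of the usual reading of ZT-norm: z-norm (normalize by the model's scores on impostor utterances) followed by t-norm (normalize by the z-normed scores of cohort models on the test utterance). The `score` callback, the cohort lists and the toy scorer are all hypothetical.

```python
from statistics import mean, pstdev


def z_norm(raw, model, z_utts, score):
    # Normalise by the statistics of this model's scores on impostor utterances
    impostor = [score(model, utt) for utt in z_utts]
    return (raw - mean(impostor)) / pstdev(impostor)


def zt_norm(model, utt, z_utts, t_models, score):
    """Z-norm followed by T-norm for one trial (model, utt)."""
    s_z = z_norm(score(model, utt), model, z_utts, score)
    # Score the test utterance against cohort models, z-norming each score
    cohort = [z_norm(score(t, utt), t, z_utts, score) for t in t_models]
    return (s_z - mean(cohort)) / pstdev(cohort)


def toy_score(model, utt):
    # Hypothetical scorer: models and utterances are points on a line
    return -(model - utt) ** 2


def shifted_score(model, utt):
    # The same scorer with a different (arbitrary) scale and offset
    return 3.0 * toy_score(model, utt) + 7.0


if __name__ == "__main__":
    z_utts = [0.0, 1.0, 2.0, 3.0]
    t_models = [5.0, 6.0, 7.0]
    a = zt_norm(10.0, 10.0, z_utts, t_models, toy_score)
    b = zt_norm(10.0, 10.0, z_utts, t_models, shifted_score)
    # The normalised score does not depend on the raw score scale or offset
    print(abs(a - b) < 1e-9)
```

The invariance shown at the end illustrates why the normalization makes scores from different models and test utterances comparable against a single threshold.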

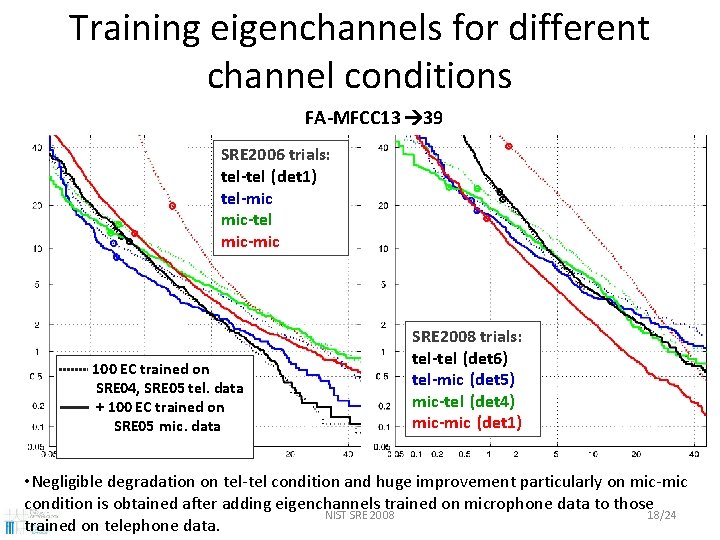

Training eigenchannels for different channel conditions
System: FA-MFCC 13 39
Plots: SRE 2006 trials: tel-tel (det 1), tel-mic, mic-tel, mic-mic; SRE 2008 trials: tel-tel (det 6), tel-mic (det 5), mic-tel (det 4), mic-mic (det 1)
Systems compared: 100 EC trained on SRE 04, SRE 05 tel. data; + 100 EC trained on SRE 05 mic. data
• Negligible degradation on the tel-tel condition and a huge improvement, particularly on the mic-mic condition, is obtained after adding eigenchannels trained on microphone data to those trained on telephone data.

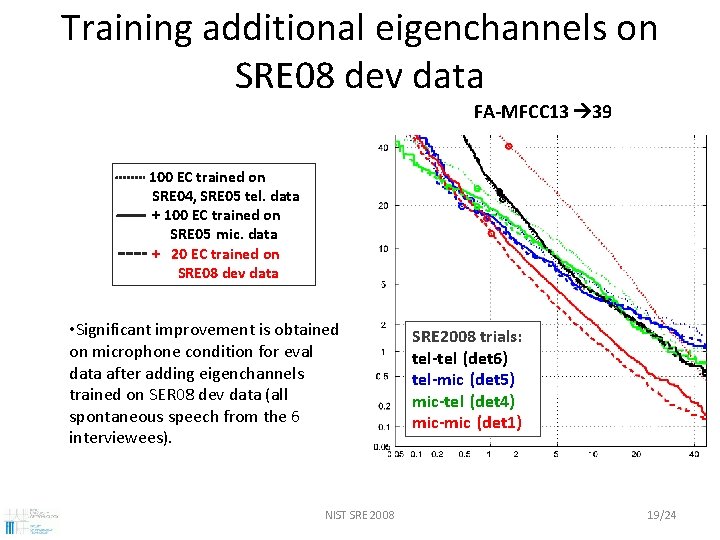

Training additional eigenchannels on SRE 08 dev data
System: FA-MFCC 13 39
Plots: SRE 2008 trials: tel-tel (det 6), tel-mic (det 5), mic-tel (det 4), mic-mic (det 1)
Systems compared: 100 EC trained on SRE 04, SRE 05 tel. data; + 100 EC trained on SRE 05 mic. data; + 20 EC trained on SRE 08 dev data
• Significant improvement is obtained on the microphone condition for eval data after adding eigenchannels trained on SRE 08 dev data (all spontaneous speech from the 6 interviewees).

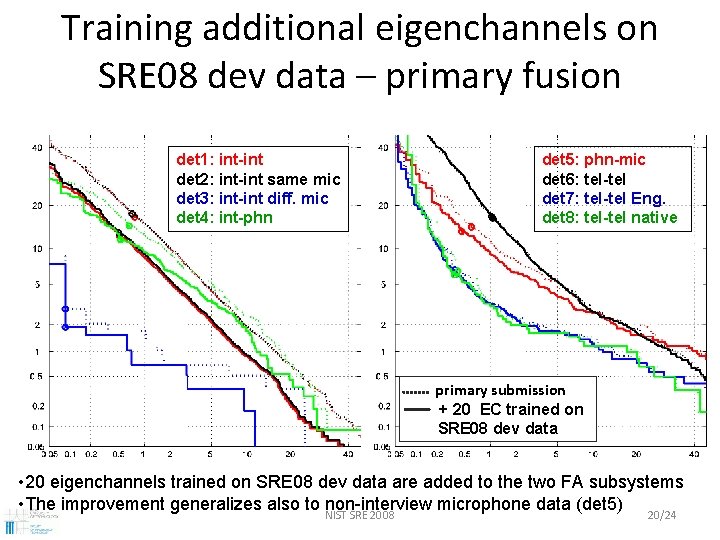

Training additional eigenchannels on SRE 08 dev data: primary fusion
Conditions: det 1: int-int; det 2: int-int same mic; det 3: int-int diff. mic; det 4: int-phn; det 5: phn-mic; det 6: tel-tel; det 7: tel-tel Eng.; det 8: tel-tel native
Systems compared: primary submission; + 20 EC trained on SRE 08 dev data
• 20 eigenchannels trained on SRE 08 dev data are added to the two FA subsystems
• The improvement also generalizes to non-interview microphone data (det 5)

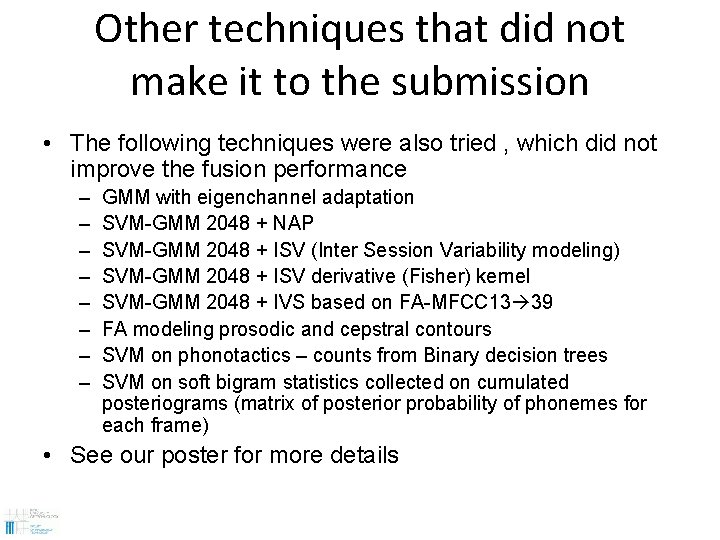

Other techniques that did not make it to the submission
• The following techniques were also tried, but did not improve the fusion performance:
  – GMM with eigenchannel adaptation
  – SVM-GMM 2048 + NAP
  – SVM-GMM 2048 + ISV (Inter Session Variability modeling)
  – SVM-GMM 2048 + ISV derivative (Fisher) kernel
  – SVM-GMM 2048 + ISV based on FA-MFCC 13 39
  – FA modeling prosodic and cepstral contours
  – SVM on phonotactics: counts from binary decision trees
  – SVM on soft bigram statistics collected on cumulated posteriograms (matrix of posterior probabilities of phonemes for each frame)
• See our poster for more details

![Conclusions • FA systems build according to recipe from [Kenny 2008] performs excellently, though Conclusions • FA systems build according to recipe from [Kenny 2008] performs excellently, though](http://slidetodoc.com/presentation_image_h2/bdb8586c75c4a721a719992fe0a7ce91/image-22.jpg)

Conclusions
• FA systems built according to the recipe from [Kenny 2008] perform excellently, though there is still some mystery to be solved.
• It was hard to find another complementary system that would contribute to the fusion of our two FA systems.
• Although our system was primarily trained on and tuned for telephone data, the FA subsystems can simply be augmented with eigenchannels trained on microphone data (as also proposed in [Kenny 2008]), which makes the system perform well on microphone conditions too.
• Another significant improvement was obtained by training additional eigenchannels on data with matching channel conditions, even though only a very limited amount of such data was provided by NIST.

![Thanks • To Patrick Kenny for [Kenny 2008] recipe to building FA system that Thanks • To Patrick Kenny for [Kenny 2008] recipe to building FA system that](http://slidetodoc.com/presentation_image_h2/bdb8586c75c4a721a719992fe0a7ce91/image-23.jpg)

Thanks
• To Patrick Kenny for the [Kenny 2008] recipe for building an FA system that really works, and for providing the list of files for training the FA system
• To MIT-LL for creating and sharing the trial lists based on SRE 06 data, which we used for the system development
• To Niko Brümmer for FoCal Bilinear, which allowed us to start playing with the fusion just the last day before the submission deadline

![References [Kenny 2008] P. Kenny et al. : A Study of Inter-Speaker Variability in References [Kenny 2008] P. Kenny et al. : A Study of Inter-Speaker Variability in](http://slidetodoc.com/presentation_image_h2/bdb8586c75c4a721a719992fe0a7ce91/image-24.jpg)

References
[Kenny 2008] P. Kenny et al.: A Study of Inter-Speaker Variability in Speaker Verification, IEEE TASLP, July 2008.
[Brummer 2008] N. Brümmer: FoCal Bilinear: Tools for detector fusion and calibration, with use of side-information, http://niko.brummer.googlepages.com/focalbilinear
[Chang 2001] C. Chang et al.: LIBSVM: a library for Support Vector Machines, http://www.csie.ntu.edu.tw/~cjlin/libsvm
[Stolcke 2005/6] A. Stolcke: MLLR Transforms as Features in Spk. ID, Eurospeech 2005, Odyssey 2006.
[Hain 2005] T. Hain et al.: The 2005 AMI system for RTS, Meeting Recognition Evaluation Workshop, Edinburgh, July 2005.

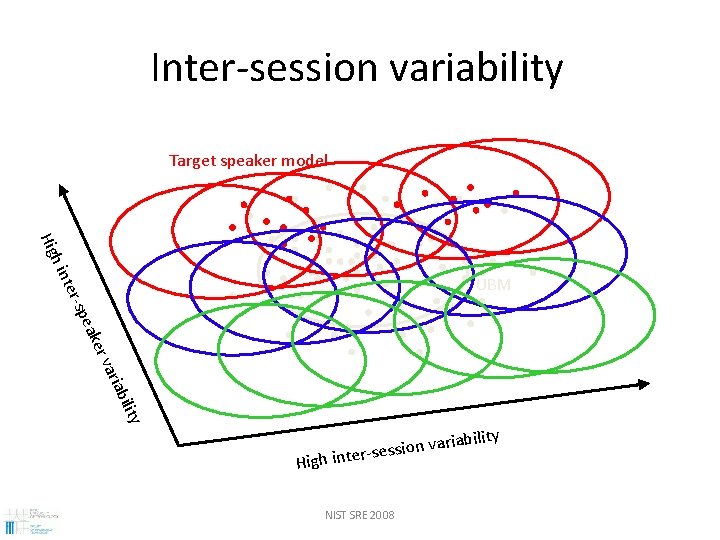

Inter-session variability
Figure labels: Target speaker model; UBM; High inter-speaker variability; High inter-session variability

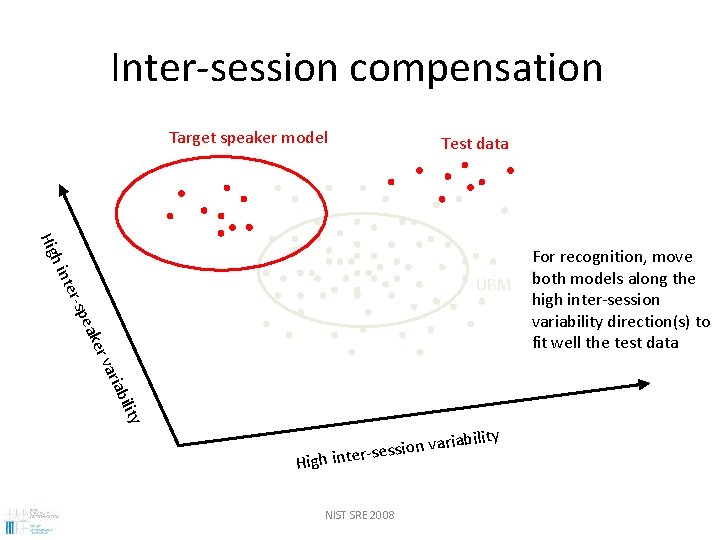

Inter-session compensation
Figure labels: Target speaker model; Test data; UBM; High inter-speaker variability; High inter-session variability
• For recognition, move both models along the high inter-session variability direction(s) to fit the test data well

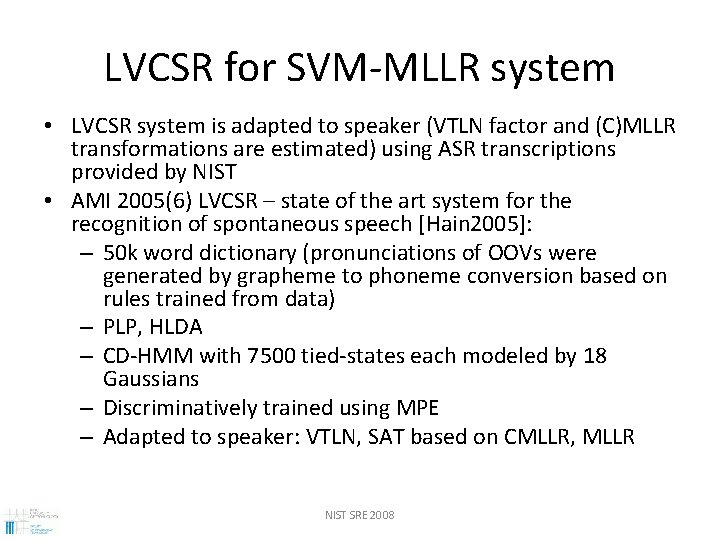

LVCSR for SVM-MLLR system
• The LVCSR system is adapted to the speaker (VTLN factor and (C)MLLR transformations are estimated) using ASR transcriptions provided by NIST
• AMI 2005(6) LVCSR: state of the art system for the recognition of spontaneous speech [Hain 2005]:
  – 50 k word dictionary (pronunciations of OOVs were generated by grapheme to phoneme conversion based on rules trained from data)
  – PLP, HLDA
  – CD-HMM with 7500 tied states, each modeled by 18 Gaussians
  – Discriminatively trained using MPE
  – Adapted to speaker: VTLN, SAT based on CMLLR, MLLR
