BM-308 Introduction to Parallel Programming, Summer 2020 (Presentation 7) (Asst. Prof. Dr. Deniz Dal)

Computer Memory

Computer hardware does not directly support the concept of multi-dimensional arrays. Computer memory is one-dimensional, providing memory addresses that start at zero and increase serially to the highest available location. Multi-dimensional arrays are therefore a software concept: software maps the elements of a multi-dimensional array into a contiguous linear span of memory addresses. There are two ways that such an array can be represented in one-dimensional linear memory. These two options are commonly called row major and column major. All programming languages that support multi-dimensional arrays must choose one of these two possibilities. This choice is a fundamental property of the language, and it affects how programs written in different languages share data with each other.

Source: http://www.physics.nyu.edu/grierlab/idl_html_help/arrays10.html

![Row-major Order Memory Allocation (C/C++)](http://slidetodoc.com/presentation_image_h2/0901557945b5e3d02be30c99781e3ab0/image-3.jpg)

Row-major Order Memory Allocation (C/C++)

double A[3][3];

Matrix A, indexed as A(row, column):
• Row 0: A(0,0) A(0,1) A(0,2)
• Row 1: A(1,0) A(1,1) A(1,2)
• Row 2: A(2,0) A(2,1) A(2,2)

Linear Memory (LM): A(0,0), A(0,1), A(0,2), A(1,0), A(1,1), A(1,2), A(2,0), A(2,1), A(2,2)

offset = row*NUMBEROFCOLUMNS + column

A[row][column] = LM[offset]

Accessing array elements that are contiguous in memory is usually faster than accessing elements that are not, due to caching.
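The offset formula above can be checked directly in C++. The following is a minimal sketch, not part of the original slides: the function names `row_major_offset` and `builtin_array_is_row_major` are made up for illustration, and the 3 x 3 size is taken from the slide.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>

constexpr std::size_t NUMBEROFROWS = 3;
constexpr std::size_t NUMBEROFCOLUMNS = 3;

// Row-major offset of A[row][column] inside the linear memory LM.
std::size_t row_major_offset(std::size_t row, std::size_t column) {
    assert(row < NUMBEROFROWS && column < NUMBEROFCOLUMNS);
    return row * NUMBEROFCOLUMNS + column;
}

// Copies a built-in 2D array byte for byte into a flat buffer and checks
// that A[row][column] == LM[offset] holds for every element.
bool builtin_array_is_row_major() {
    double A[NUMBEROFROWS][NUMBEROFCOLUMNS];
    for (std::size_t row = 0; row < NUMBEROFROWS; ++row)
        for (std::size_t column = 0; column < NUMBEROFCOLUMNS; ++column)
            A[row][column] = 10.0 * row + column;  // distinct value per element

    double LM[NUMBEROFROWS * NUMBEROFCOLUMNS];
    std::memcpy(LM, A, sizeof A);  // same bytes, viewed as linear memory

    for (std::size_t row = 0; row < NUMBEROFROWS; ++row)
        for (std::size_t column = 0; column < NUMBEROFCOLUMNS; ++column)
            if (LM[row_major_offset(row, column)] != A[row][column])
                return false;
    return true;
}
```

For example, `row_major_offset(1, 0)` evaluates to 3, which is the position of A(1,0) in the linear memory listing.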

![Column-major Order Memory Allocation (Fortran/MATLAB)](http://slidetodoc.com/presentation_image_h2/0901557945b5e3d02be30c99781e3ab0/image-4.jpg)

Column-major Order Memory Allocation (Fortran/MATLAB)

double A[3][3];

Matrix A, indexed as A(row, column):
• Row 0: A(0,0) A(0,1) A(0,2)
• Row 1: A(1,0) A(1,1) A(1,2)
• Row 2: A(2,0) A(2,1) A(2,2)

Linear Memory (LM): A(0,0), A(1,0), A(2,0), A(0,1), A(1,1), A(2,1), A(0,2), A(1,2), A(2,2)

offset = column*NUMBEROFROWS + row

A[row][column] = LM[offset]
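Because the two layouts order the same elements differently, a buffer handed from a row-major program to a column-major one has to be reordered (or interpreted as the transpose). The sketch below illustrates the column-major formula; `column_major_offset` and `to_column_major` are hypothetical helper names, not routines from the slides or from any library.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Column-major offset of element (row, column) in a matrix
// that has `number_of_rows` rows.
std::size_t column_major_offset(std::size_t row, std::size_t column,
                                std::size_t number_of_rows) {
    assert(row < number_of_rows);
    return column * number_of_rows + row;
}

// Copies a row-major buffer into a new buffer laid out in column-major order.
std::vector<double> to_column_major(const std::vector<double>& row_major,
                                    std::size_t rows, std::size_t cols) {
    assert(row_major.size() == rows * cols);
    std::vector<double> column_major(rows * cols);
    for (std::size_t r = 0; r < rows; ++r)
        for (std::size_t c = 0; c < cols; ++c)
            column_major[column_major_offset(r, c, rows)] = row_major[r * cols + c];
    return column_major;
}
```

With the 3 x 3 matrix of the slide, `column_major_offset(0, 1, 3)` is 3: A(0,1) comes right after the three elements of column 0.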

MPI_Type_commit
• Commits the new data type to the system.
• Required for all user-constructed (derived) data types.

int MPI_Type_commit(MPI_Datatype *datatype)

MPI_Type_free
• Deallocates the specified data type object.
• Use of this routine is especially important to prevent memory exhaustion if many data type objects are created, as in a loop.

int MPI_Type_free(MPI_Datatype *datatype)

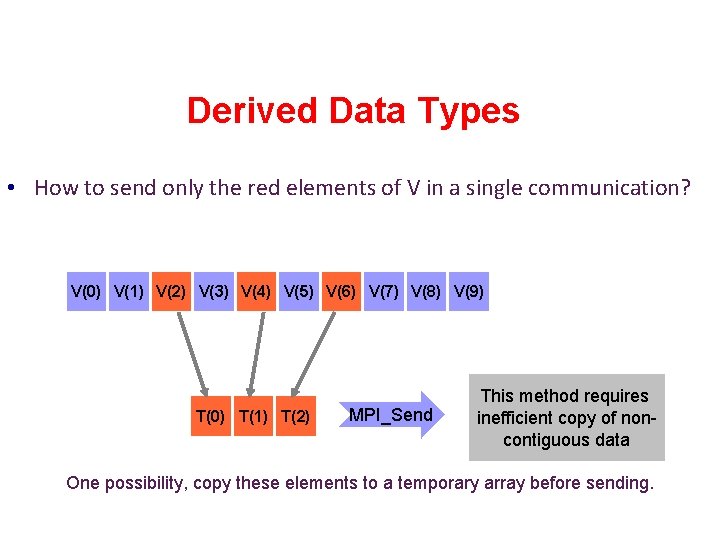

Derived Data Types
• How to send only the red elements of V in a single communication? (The colors are lost in this transcript; the later MPI_Send(&V[2], ...) call with stride 2 and count 3 indicates V(2), V(4) and V(6).)

V(0) V(1) V(2) V(3) V(4) V(5) V(6) V(7) V(8) V(9)

• One possibility: copy these elements to a temporary array T(0) T(1) T(2) and send T with MPI_Send.
• This method requires an inefficient copy of noncontiguous data.
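The copy-then-send approach can be sketched as follows. This is only an illustration of the packing step: `pack_strided` is a hypothetical helper, the choice of V(2), V(4), V(6) as the selected elements is an assumption based on the later `MPI_Send(&V[2], ...)` slide, and the MPI call itself is left out so the block compiles without an MPI installation.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Copies `count` elements of V into a temporary array T, starting at index
// `first` and then taking every `stride`-th element.
std::vector<double> pack_strided(const std::vector<double>& V, std::size_t first,
                                 std::size_t stride, std::size_t count) {
    assert(count == 0 || first + (count - 1) * stride < V.size());
    std::vector<double> T;
    T.reserve(count);
    for (std::size_t k = 0; k < count; ++k)
        T.push_back(V[first + k * stride]);  // one extra copy per selected element
    return T;
}
```

With `pack_strided(V, 2, 2, 3)` the temporary array holds V(2), V(4), V(6), and it could then be sent as three contiguous MPI_DOUBLE values. The extra copy in the loop is the cost that derived data types are meant to avoid.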

Derived Data Types
• The MPI library provides routines that are more suitable for array or vector-like data structures:
• MPI_Type_contiguous
• MPI_Type_vector
• MPI_Type_indexed

MPI_Type_contiguous

Constructs a type consisting of the replication of a data type into contiguous locations.

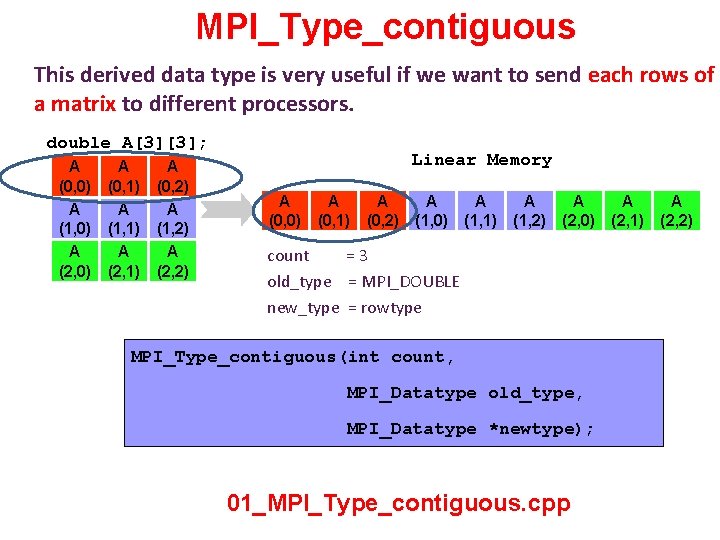

MPI_Type_contiguous

This derived data type is very useful if we want to send each row of a matrix to a different processor.

double A[3][3];

Matrix A, indexed as A(row, column):
• Row 0: A(0,0) A(0,1) A(0,2)
• Row 1: A(1,0) A(1,1) A(1,2)
• Row 2: A(2,0) A(2,1) A(2,2)

Linear Memory: A(0,0), A(0,1), A(0,2), A(1,0), A(1,1), A(1,2), A(2,0), A(2,1), A(2,2)

count = 3, old_type = MPI_DOUBLE, new_type = rowtype

MPI_Type_contiguous(int count, MPI_Datatype old_type, MPI_Datatype *newtype);

Example file: 01_MPI_Type_contiguous.cpp
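To see why a contiguous type fits matrix rows, it helps to look at which linear offsets one `rowtype` element covers. The sketch below is an MPI-free model under the slide's assumptions (3 x 3 matrix, row-major storage); `Span`, `row_span` and `row_payload` are illustrative names, not MPI routines.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

constexpr std::size_t N = 3;  // matrix is N x N, as on the slide

// Start offset and length (in doubles) of the block covered by one
// contiguous row type placed at the beginning of `row`.
struct Span {
    std::size_t first;
    std::size_t count;
};

Span row_span(std::size_t row) {
    assert(row < N);
    return Span{row * N, N};  // count = 3 doubles with no gaps in between
}

// Values that would travel in the message for `row`
// (for instance, the row sent to the process with that rank).
std::array<double, N> row_payload(const std::array<double, N * N>& LM,
                                  std::size_t row) {
    const Span s = row_span(row);
    std::array<double, N> out{};
    for (std::size_t k = 0; k < s.count; ++k)
        out[k] = LM[s.first + k];
    return out;
}
```

Row 1, for example, starts at linear offset 3 and covers offsets 3, 4 and 5, which is why passing the address of A[1][0] with one `rowtype` element is enough to describe the whole row.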

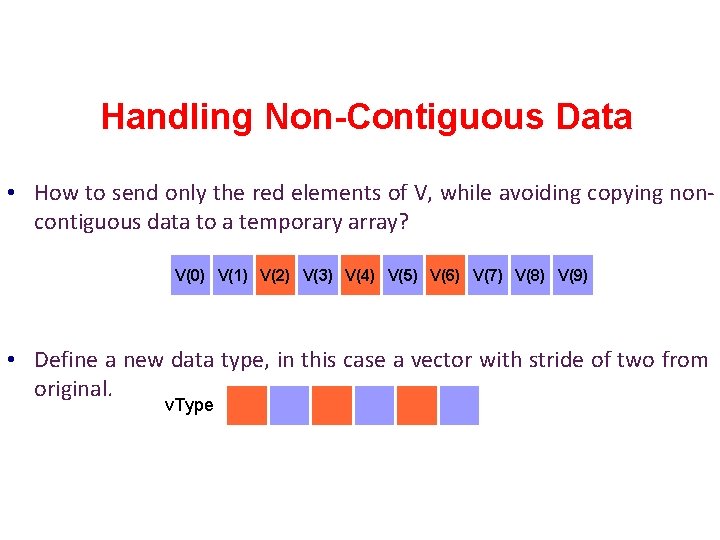

Handling Non-Contiguous Data
• How to send only the red elements of V, while avoiding copying noncontiguous data to a temporary array?

V(0) V(1) V(2) V(3) V(4) V(5) V(6) V(7) V(8) V(9)

• Define a new data type, in this case a vector type (vType) with a stride of two elements of the original type.

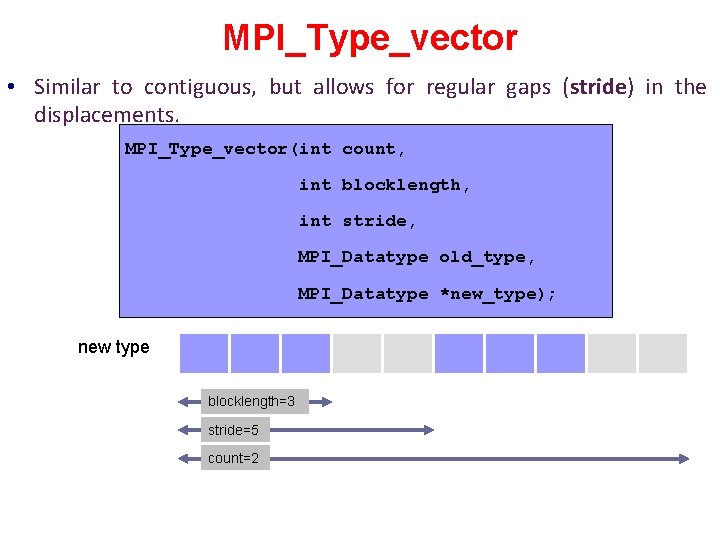

MPI_Type_vector
• Similar to contiguous, but allows for regular gaps (stride) in the displacements.

MPI_Type_vector(int count, int blocklength, int stride, MPI_Datatype old_type, MPI_Datatype *new_type);

Figure example (new type): blocklength = 3, stride = 5, count = 2
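The meaning of the three parameters becomes concrete when the selected element offsets are listed. The following sketch models the layout only and does not call MPI; `vector_type_offsets` is a hypothetical helper whose argument order mirrors MPI_Type_vector, and it assumes offsets are counted in elements of the old type from the start of the buffer.

```cpp
#include <cassert>
#include <vector>

// Element offsets covered by a vector type: `count` blocks of `blocklength`
// elements each, with block starts `stride` elements apart.
std::vector<int> vector_type_offsets(int count, int blocklength, int stride) {
    assert(count >= 0 && blocklength >= 0);
    std::vector<int> offsets;
    for (int block = 0; block < count; ++block)
        for (int k = 0; k < blocklength; ++k)
            offsets.push_back(block * stride + k);
    return offsets;
}
```

For the figure values, `vector_type_offsets(2, 3, 5)` yields 0, 1, 2, 5, 6, 7: two blocks of three elements, with elements 3 and 4 skipped.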

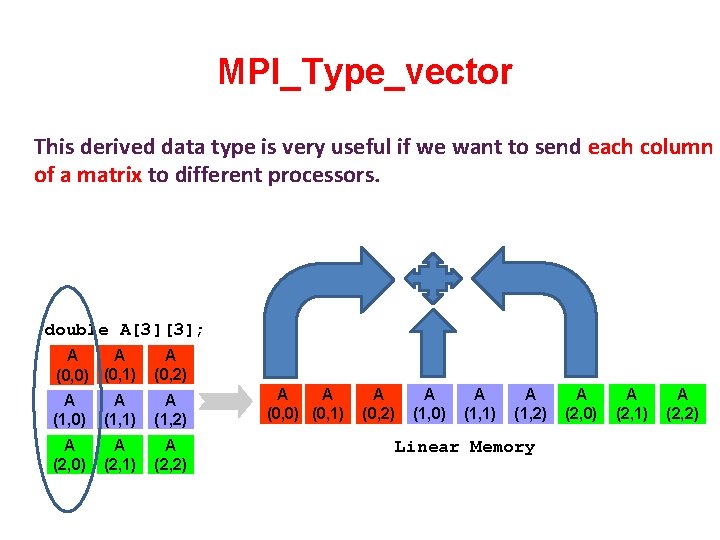

MPI_Type_vector

This derived data type is very useful if we want to send each column of a matrix to a different processor.

double A[3][3];

Matrix A, indexed as A(row, column):
• Row 0: A(0,0) A(0,1) A(0,2)
• Row 1: A(1,0) A(1,1) A(1,2)
• Row 2: A(2,0) A(2,1) A(2,2)

Linear Memory: A(0,0), A(0,1), A(0,2), A(1,0), A(1,1), A(1,2), A(2,0), A(2,1), A(2,2)

![MPI_Type_vector: column of a 3 x 3 matrix](http://slidetodoc.com/presentation_image_h2/0901557945b5e3d02be30c99781e3ab0/image-14.jpg)

MPI_Type_vector

double A[3][3];

Matrix A, indexed as A(row, column):
• Row 0: A(0,0) A(0,1) A(0,2)
• Row 1: A(1,0) A(1,1) A(1,2)
• Row 2: A(2,0) A(2,1) A(2,2)

Linear Memory: A(0,0), A(0,1), A(0,2), A(1,0), A(1,1), A(1,2), A(2,0), A(2,1), A(2,2)

blocklength = 1, stride = 3, count = 3

Example file: 02_MPI_Type_vector.cpp
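With blocklength = 1, stride = 3 and count = 3, the type picks one element per row, which is exactly a column of the row-major matrix. The sketch below walks the linear memory with those values, starting at the first element of the chosen column. It is an MPI-free illustration, and `gather_column` is a made-up name.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

constexpr std::size_t N = 3;  // matrix is N x N, stored row-major

// Collects column `column` by visiting the linear memory the way a vector
// type with blocklength = 1, stride = N, count = N would, starting at
// the address of A[0][column].
std::array<double, N> gather_column(const std::array<double, N * N>& LM,
                                    std::size_t column) {
    assert(column < N);
    const std::size_t blocklength = 1, stride = N, count = N;
    std::array<double, N> out{};
    std::size_t next = 0;
    for (std::size_t block = 0; block < count; ++block)
        for (std::size_t k = 0; k < blocklength; ++k)
            out[next++] = LM[column + block * stride + k];
    return out;
}
```

Changing only the start position selects a different column, so one committed type can describe every column of the matrix.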

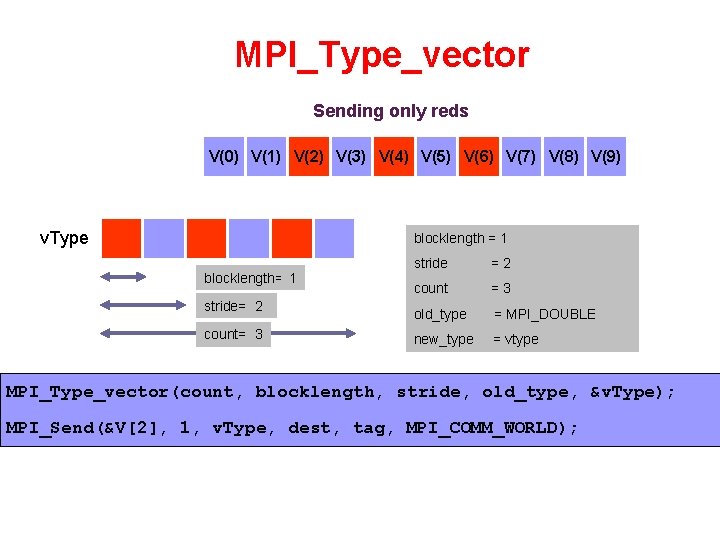

MPI_Type_vector

Sending only the red elements:

V(0) V(1) V(2) V(3) V(4) V(5) V(6) V(7) V(8) V(9)

blocklength = 1, stride = 2, count = 3, old_type = MPI_DOUBLE, new_type = vType

MPI_Type_vector(count, blocklength, stride, old_type, &vType);

MPI_Type_commit(&vType);

MPI_Send(&V[2], 1, vType, dest, tag, MPI_COMM_WORLD);

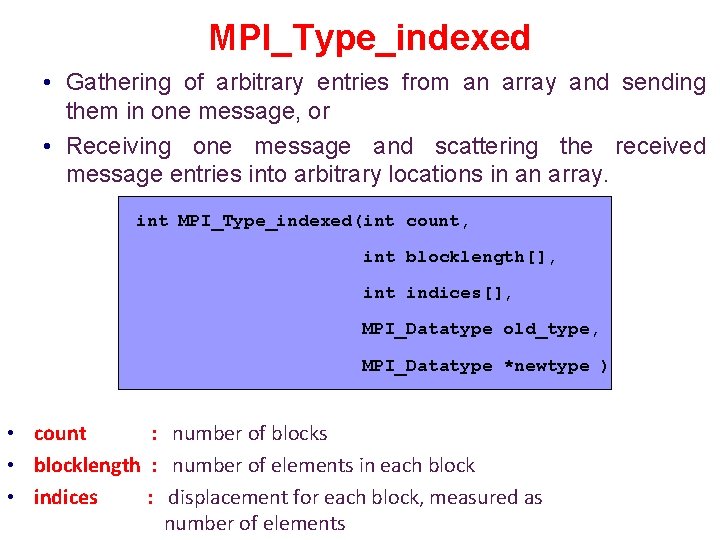

MPI_Type_indexed
• The indexed constructor allows one to specify a non-contiguous data layout where displacements between successive blocks need not be equal.

MPI_Type_indexed
• Gathering arbitrary entries from an array and sending them in one message, or
• Receiving one message and scattering the received entries into arbitrary locations in an array.

int MPI_Type_indexed(int count, int blocklength[], int indices[], MPI_Datatype old_type, MPI_Datatype *newtype)

• count : number of blocks
• blocklength : number of elements in each block
• indices : displacement of each block, measured in number of elements

![MPI_Type_indexed example with three blocks](http://slidetodoc.com/presentation_image_h2/0901557945b5e3d02be30c99781e3ab0/image-18.jpg)

MPI_Type_indexed

count = 3
blen[0] = 2, indices[0] = 0
blen[1] = 3, indices[1] = 3
blen[2] = 1, indices[2] = 8

The goal is to build an array of 6 elements in total.
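The block lengths and displacements above determine which elements end up in the message. As a rough model that does not call MPI, the hypothetical helper `indexed_type_offsets` below lists the element offsets an indexed type with these arrays would cover.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Element offsets covered by an indexed type: block i starts at
// indices[i] and contains blocklength[i] consecutive elements.
std::vector<int> indexed_type_offsets(const std::vector<int>& blocklength,
                                      const std::vector<int>& indices) {
    assert(blocklength.size() == indices.size());
    std::vector<int> offsets;
    for (std::size_t block = 0; block < blocklength.size(); ++block)
        for (int k = 0; k < blocklength[block]; ++k)
            offsets.push_back(indices[block] + k);
    return offsets;
}
```

For blen = (2, 3, 1) and indices = (0, 3, 8) the offsets are 0, 1, 3, 4, 5, 8, which gives the 6 elements mentioned on the slide (2 + 3 + 1).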

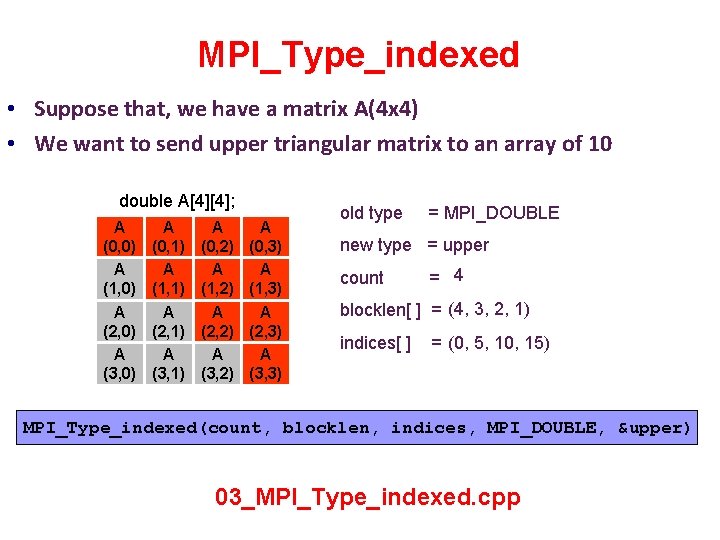

MPI_Type_indexed
• Suppose that we have a 4 x 4 matrix A.
• We want to send its upper triangular part to an array of 10 elements.

double A[4][4];

Matrix A, indexed as A(row, column):
• Row 0: A(0,0) A(0,1) A(0,2) A(0,3)
• Row 1: A(1,0) A(1,1) A(1,2) A(1,3)
• Row 2: A(2,0) A(2,1) A(2,2) A(2,3)
• Row 3: A(3,0) A(3,1) A(3,2) A(3,3)

old type = MPI_DOUBLE, new type = upper, count = 4

blocklen[] = (4, 3, 2, 1)

indices[] = (0, 5, 10, 15)

MPI_Type_indexed(count, blocklen, indices, MPI_DOUBLE, &upper)

Example file: 03_MPI_Type_indexed.cpp
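The blocklen and indices arrays follow a simple pattern: in row-major storage, row i of the upper triangle starts at the diagonal element, offset i*n + i, and runs for n - i elements. The sketch below derives both arrays for any n and gathers the selected values. It is an MPI-free illustration, not the content of 03_MPI_Type_indexed.cpp, and `IndexedLayout`, `upper_triangle_layout` and `gather_blocks` are made-up names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct IndexedLayout {
    std::vector<int> blocklen;  // number of elements in each block
    std::vector<int> indices;   // start of each block, in elements
};

// Layout of the upper triangle (diagonal included) of an n x n row-major matrix.
IndexedLayout upper_triangle_layout(int n) {
    assert(n > 0);
    IndexedLayout layout;
    for (int row = 0; row < n; ++row) {
        layout.blocklen.push_back(n - row);       // from the diagonal to the row end
        layout.indices.push_back(row * n + row);  // offset of A(row, row)
    }
    return layout;
}

// Collects the elements described by `layout` from the linear memory LM,
// in the order they would appear in the message.
std::vector<double> gather_blocks(const std::vector<double>& LM,
                                  const IndexedLayout& layout) {
    std::vector<double> packed;
    for (std::size_t block = 0; block < layout.blocklen.size(); ++block)
        for (int k = 0; k < layout.blocklen[block]; ++k)
            packed.push_back(LM[layout.indices[block] + k]);
    return packed;
}
```

For n = 4 this reproduces blocklen = (4, 3, 2, 1) and indices = (0, 5, 10, 15), and the gathered result has 4 + 3 + 2 + 1 = 10 elements.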
