Binary Dependent Variable: Week 3 (Chapter 11, S&W)

Discrete Variables
• So far we have worked with the following cases:
  – Y and X both continuous
    • Interpretation of b
  – Y continuous, X discrete
    • Interpretation
• Now we turn to the case where Y is binary.
  – X can be discrete or continuous.

Examples of binary Y
• Employment status
• Attending school or not
• Smoking or not
• Having malaria or not
• etc.

The Linear Probability Model
• Can we just run OLS as usual?
• Example: mosquito nets and malaria.
• How do we interpret b?
• What does the straight line mean?
• How do we interpret the predicted value of Y?

Example: Mosquito nets and malaria
• X: indicator for whether a village received free mosquito nets (the “treatment”).
• Y: indicator for whether an individual in one of the villages has malaria.

![How to interpret the regression line?](http://slidetodoc.com/presentation_image_h/03d4a8de01bd4c92bc28a8fd2d31ab2b/image-6.jpg)

How to interpret the regression line?
• Given OLS Assumption #1, E[Yi | Xi] = a + bXi
• When Y is binary, E(Y) = 1 × Pr(Y=1) + 0 × Pr(Y=0) = Pr(Y=1)
• Then, E(Y|X) = Pr(Y=1|X) = a + bX
• The regression line tells us the probability of Y=1, given a value of X.
  – How X affects the probability of Y=1

The Linear Probability Model (ii)
• When Y is binary, the linear regression model is called the “linear probability model”.
• How to interpret Yhat?
  – The predicted value of Y is a probability.
  – It is the predicted probability of Y=1, given X.
• How to interpret bhat?
  – The change in the probability of Y=1 associated with a one-unit change in X.
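The interpretation of Yhat and bhat can be checked on a small example. The sketch below uses made-up data for the mosquito-net setting (the numbers are hypothetical, not from the slides) and the usual one-regressor OLS formulas. With a binary X, the intercept should equal the share with malaria in the control group and the slope should equal the difference in shares between treated and control.

```python
# Hypothetical data (not from the slides): X = 1 if the village received nets,
# Y = 1 if the individual has malaria.
x = [0] * 10 + [1] * 10
y = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0,   # control: 4 of 10 have malaria
     1, 1, 0, 0, 0, 0, 0, 0, 0, 0]   # treated: 2 of 10 have malaria

n = len(x)
x_bar = sum(x) / n
y_bar = sum(y) / n

# OLS slope and intercept from the one-regressor formulas.
s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
s_xx = sum((xi - x_bar) ** 2 for xi in x)
b_hat = s_xy / s_xx
a_hat = y_bar - b_hat * x_bar

# Sample shares of Y = 1 in each group.
share_control = sum(yi for xi, yi in zip(x, y) if xi == 0) / x.count(0)
share_treated = sum(yi for xi, yi in zip(x, y) if xi == 1) / x.count(1)

print(a_hat, share_control)                  # intercept vs. control share
print(b_hat, share_treated - share_control)  # slope vs. difference in shares
```

In this toy sample the nets are associated with a 20 percentage point lower probability of malaria, which is exactly what the slope reports.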

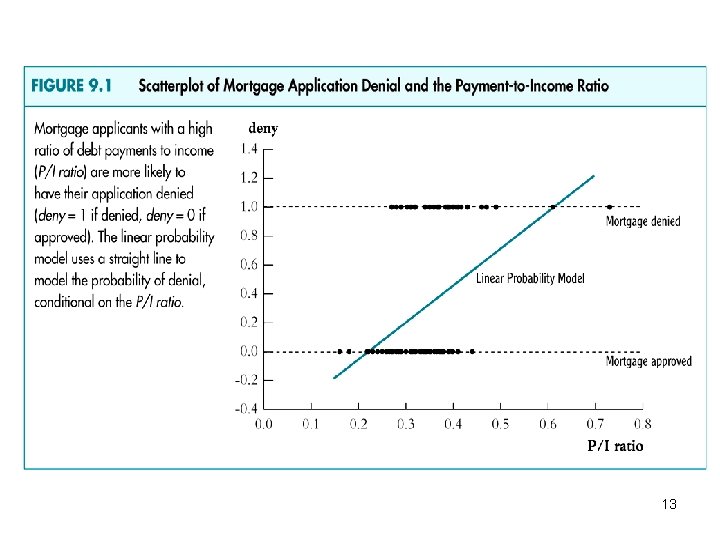

Continuous X
• What if X is continuous?
• Example: effect of household income on the probability of getting a loan.
  – Y: getting a loan or not.
  – X: household income
  – Also race, etc.
• It seems strange to approximate this relationship with a straight line!

Example: Binary Dependent Variable
The effect of income and race on mortgage application denial

Mortgage application denial
• Data from Boston, 1990.
• 2,380 observations of mortgage applications.
• Dependent variable: 1 if the mortgage is denied (0 if granted)

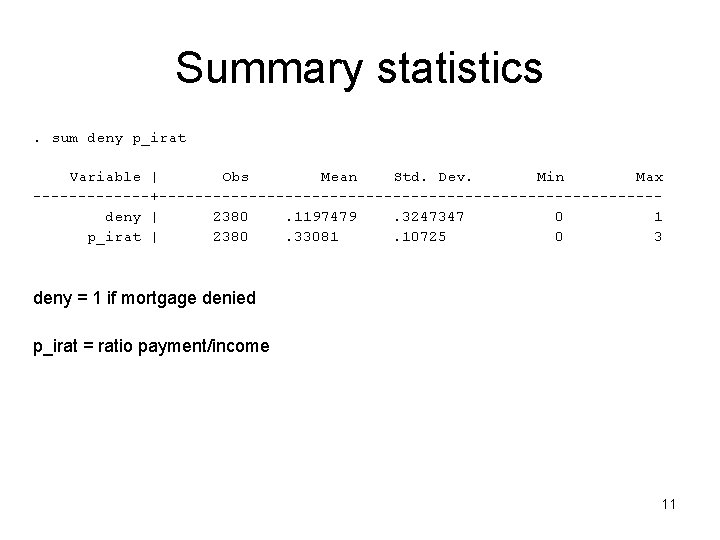

Summary statistics

. sum deny p_irat

| Variable | Obs | Mean | Std. Dev. | Min | Max |
|---|---|---|---|---|---|
| deny | 2380 | .1197479 | .3247347 | 0 | 1 |
| p_irat | 2380 | .33081 | .10725 | 0 | 3 |

deny = 1 if mortgage denied
p_irat = ratio payment/income

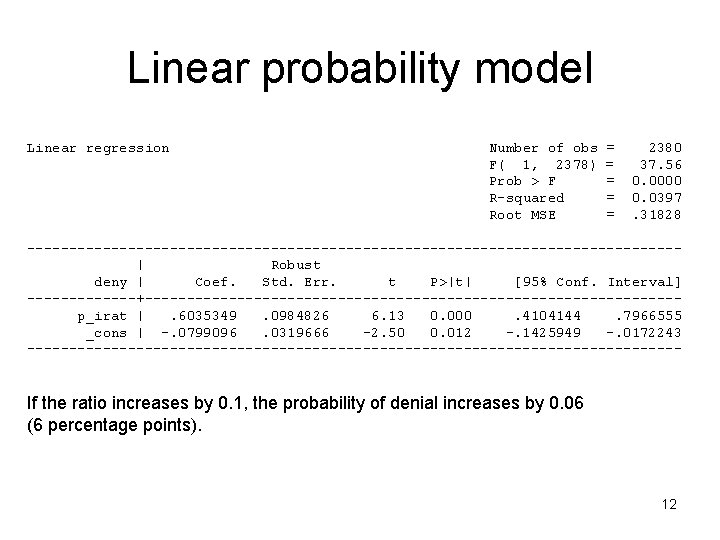

Linear probability model

Linear regression
Number of obs = 2380
F(1, 2378) = 37.56
Prob > F = 0.0000
R-squared = 0.0397
Root MSE = .31828

| deny | Coef. | Robust Std. Err. | t | P>\|t\| | 95% CI lower | 95% CI upper |
|---|---|---|---|---|---|---|
| p_irat | .6035349 | .0984826 | 6.13 | 0.000 | .4104144 | .7966555 |
| _cons | -.0799096 | .0319666 | -2.50 | 0.012 | -.1425949 | -.0172243 |

If the ratio increases by 0.1, the probability of denial increases by 0.06 (6 percentage points).

Summary of the LPM
• Advantages
  – Easily estimated and interpreted
  – Same inference as in multiple regression
• Disadvantages
  – Does it make sense for the probability of Y=1 to be linear in X?
  – Predicted probabilities can be >1 or <0!
• These can be overcome by using a non-linear probability model:
  – Probit or logit
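Both the easy interpretation and the out-of-range problem can be seen by plugging values into the fitted line. The sketch below copies the two coefficients from the linear probability model output for deny on p_irat; the evaluation points (0.1 and the sample maximum of 3) are chosen here for illustration.

```python
# Coefficients copied from the linear probability model output (deny on p_irat).
a_hat = -0.0799096
b_hat = 0.6035349

def lpm_prob(p_irat):
    """Predicted Pr(deny = 1) from the linear probability model."""
    return a_hat + b_hat * p_irat

effect_of_01 = b_hat * 0.1   # change in probability for a 0.1 rise in p_irat
at_low = lpm_prob(0.1)       # a low payment/income ratio (illustrative value)
at_max = lpm_prob(3.0)       # the sample maximum of p_irat

print(round(effect_of_01, 4))                 # about 6 percentage points
print(round(at_low, 4), round(at_max, 4))     # below 0 and above 1
```

The slope gives the same 6 percentage point effect everywhere, but the fitted "probabilities" at the two evaluation points fall outside the [0, 1] interval.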

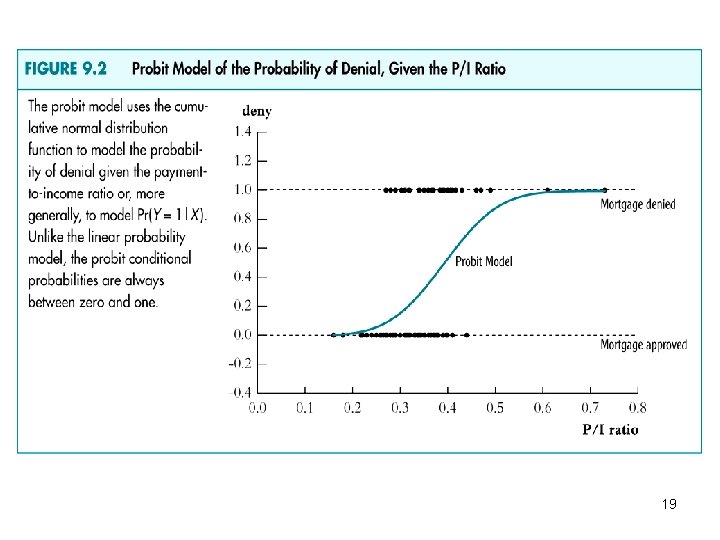

Probit and Logit Regression
• We would like 0 ≤ Pr(Y=1|X) ≤ 1.
• For b>0, Pr(Y=1|X) should be increasing in X.
• This requires a non-linear functional form for the probability.
• Could it be S-shaped?
• Do you know a function that fulfills those requirements?
  – How about any cumulative distribution function (cdf)?

Probit
• Probit regression models Pr(Y=1) with the standard normal cumulative distribution function (cdf), evaluated at z = a + bX:
• Pr(Y=1|X) = F(a + bX)
  – F is the standard normal cdf
  – z = a + bX is the z-value of the probit model

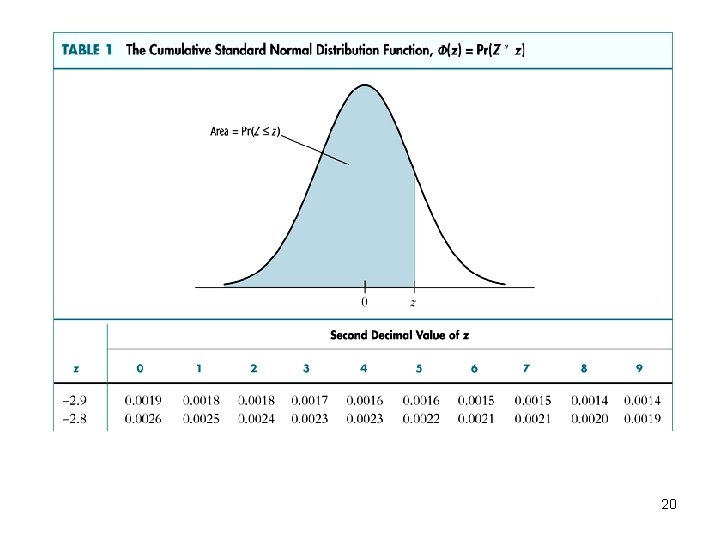

Example
• Suppose a = -2.2, b = 3, X = 0.5
• Predicted probability of mortgage denial?
• Pr(Y=1|X) = F(a + bX) = F(-2.2 + 3 × 0.5) = F(-0.70) = 0.24 (see table!)
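The table lookup in this example can be reproduced numerically. The sketch below is one way to do it in plain Python, using the identity F(z) = 0.5 × (1 + erf(z / √2)) for the standard normal cdf; the function name `std_normal_cdf` is chosen here for illustration.

```python
import math

def std_normal_cdf(z):
    """Standard normal cdf (F in the slides), computed via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

a, b, x = -2.2, 3.0, 0.5   # values from the example
z = a + b * x              # z-value of the probit model
p = std_normal_cdf(z)      # predicted probability of denial

print(round(z, 2), round(p, 2))
```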

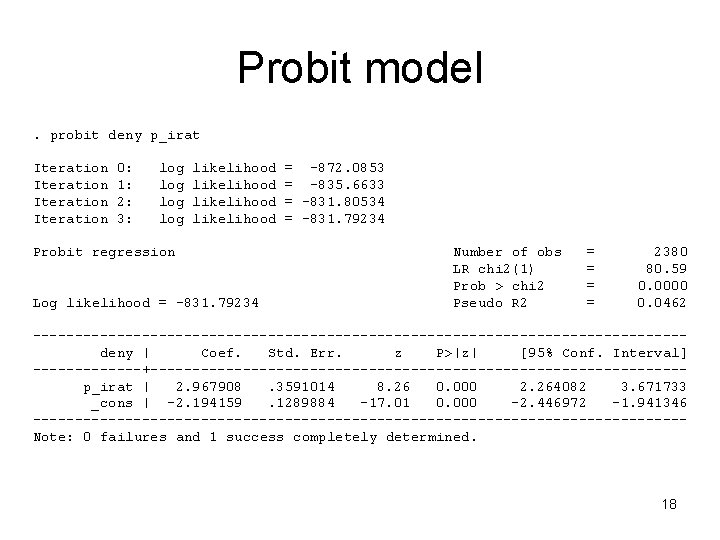

Probit model

. probit deny p_irat

Iteration 0: log likelihood = -872.0853
Iteration 1: log likelihood = -835.6633
Iteration 2: log likelihood = -831.80534
Iteration 3: log likelihood = -831.79234

Probit regression
Number of obs = 2380
LR chi2(1) = 80.59
Prob > chi2 = 0.0000
Pseudo R2 = 0.0462
Log likelihood = -831.79234

| deny | Coef. | Std. Err. | z | P>\|z\| | 95% CI lower | 95% CI upper |
|---|---|---|---|---|---|---|
| p_irat | 2.967908 | .3591014 | 8.26 | 0.000 | 2.264082 | 3.671733 |
| _cons | -2.194159 | .1289884 | -17.01 | 0.000 | -2.446972 | -1.941346 |

Note: 0 failures and 1 success completely determined.

The Probit model
• Why do we use the standard normal cdf?
• It has the properties that we were looking for.
• It’s easy to use (tables available).
• BUT: coefficient interpretation is hard!

Stata example
• Can we interpret the coefficient?
• Sign and significance (std error), yes!
• Magnitude, no!
• Estimated “effects”? Predicted probabilities?
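One way to get at the magnitude is to compare predicted probabilities at different values of X. The sketch below copies the two coefficients from the probit output for deny on p_irat; the evaluation points 0.3, 0.4 and 0.5 are chosen here for illustration.

```python
import math

def std_normal_cdf(z):
    """Standard normal cdf (F in the slides), computed via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

# Coefficients copied from the probit output for deny on p_irat.
a_hat = -2.194159
b_hat = 2.967908

def probit_prob(p_irat):
    """Predicted Pr(deny = 1 | p_irat) from the fitted probit."""
    return std_normal_cdf(a_hat + b_hat * p_irat)

p_03 = probit_prob(0.3)
p_04 = probit_prob(0.4)
p_05 = probit_prob(0.5)

# The same 0.1 increase in p_irat has a different effect at different starting points.
print(round(p_03, 3), round(p_04, 3), round(p_04 - p_03, 3))
print(round(p_04, 3), round(p_05, 3), round(p_05 - p_04, 3))
```

Unlike in the linear probability model, the estimated effect of a 0.1 increase depends on where it starts, which is why the coefficient alone does not give the magnitude.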

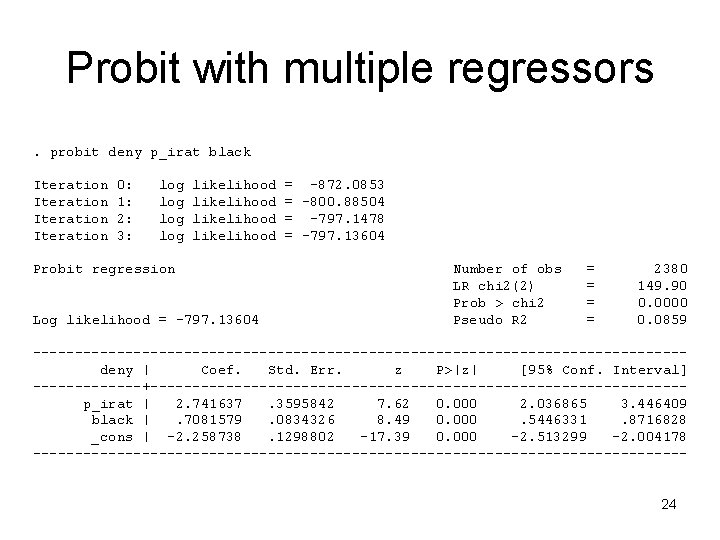

Probit with multiple regressors
• As in OLS, excluding relevant variables will bias our coefficients.
• Example: adding “race” to the regression.

Probit with multiple regressors

. probit deny p_irat black

Iteration 0: log likelihood = -872.0853
Iteration 1: log likelihood = -800.88504
Iteration 2: log likelihood = -797.1478
Iteration 3: log likelihood = -797.13604

Probit regression
Number of obs = 2380
LR chi2(2) = 149.90
Prob > chi2 = 0.0000
Pseudo R2 = 0.0859
Log likelihood = -797.13604

| deny | Coef. | Std. Err. | z | P>\|z\| | 95% CI lower | 95% CI upper |
|---|---|---|---|---|---|---|
| p_irat | 2.741637 | .3595842 | 7.62 | 0.000 | 2.036865 | 3.446409 |
| black | .7081579 | .0834326 | 8.49 | 0.000 | .5446331 | .8716828 |
| _cons | -2.258738 | .1298802 | -17.39 | 0.000 | -2.513299 | -2.004178 |
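The coefficient on black can be turned into a difference in predicted probabilities by holding the payment/income ratio fixed. The sketch below copies the three coefficients from the output for deny on p_irat and black; fixing p_irat at 0.3 is an assumption made here for illustration.

```python
import math

def std_normal_cdf(z):
    """Standard normal cdf (F in the slides), computed via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

# Coefficients copied from the probit output for deny on p_irat and black.
b_cons = -2.258738
b_pirat = 2.741637
b_black = 0.7081579

def probit_prob(p_irat, black):
    """Predicted Pr(deny = 1 | p_irat, black) from the fitted probit."""
    return std_normal_cdf(b_cons + b_pirat * p_irat + b_black * black)

p_irat_fixed = 0.3                      # illustrative value, held constant
p_white = probit_prob(p_irat_fixed, 0)
p_black = probit_prob(p_irat_fixed, 1)

print(round(p_white, 3), round(p_black, 3), round(p_black - p_white, 3))
```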

The Logit model
• Instead of using the standard normal cdf, we could use other distributions.
• The logit uses the cdf of the logistic distribution.
• Example.
• Why logit versus probit?
  – Historical reasons
  – In practice, very similar to probit.

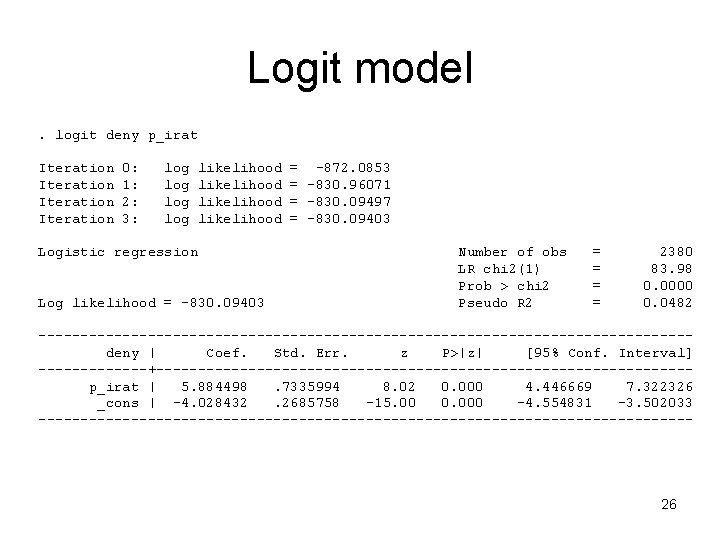

Logit model

. logit deny p_irat

Iteration 0: log likelihood = -872.0853
Iteration 1: log likelihood = -830.96071
Iteration 2: log likelihood = -830.09497
Iteration 3: log likelihood = -830.09403

Logistic regression
Number of obs = 2380
LR chi2(1) = 83.98
Prob > chi2 = 0.0000
Pseudo R2 = 0.0482
Log likelihood = -830.09403

| deny | Coef. | Std. Err. | z | P>\|z\| | 95% CI lower | 95% CI upper |
|---|---|---|---|---|---|---|
| p_irat | 5.884498 | .7335994 | 8.02 | 0.000 | 4.446669 | 7.322326 |
| _cons | -4.028432 | .2685758 | -15.00 | 0.000 | -4.554831 | -3.502033 |
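The logit coefficients are much larger than the probit ones, yet the two models imply similar probabilities. The sketch below compares predicted denial probabilities using the coefficients copied from the probit and logit outputs for deny on p_irat; the grid of p_irat values is chosen here for illustration.

```python
import math

def std_normal_cdf(z):
    """Standard normal cdf, used by the probit model."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def logistic_cdf(z):
    """Logistic cdf, used by the logit model."""
    return 1.0 / (1.0 + math.exp(-z))

# Coefficients copied from the two Stata outputs for deny on p_irat.
probit_a, probit_b = -2.194159, 2.967908
logit_a, logit_b = -4.028432, 5.884498

grid = [0.2, 0.3, 0.4, 0.5]   # illustrative payment/income ratios
probit_probs = [std_normal_cdf(probit_a + probit_b * x) for x in grid]
logit_probs = [logistic_cdf(logit_a + logit_b * x) for x in grid]

for x, pp, lp in zip(grid, probit_probs, logit_probs):
    print(x, round(pp, 3), round(lp, 3))
```

The coefficients differ in scale because the two cdfs have different variances, so they should not be compared directly across models.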

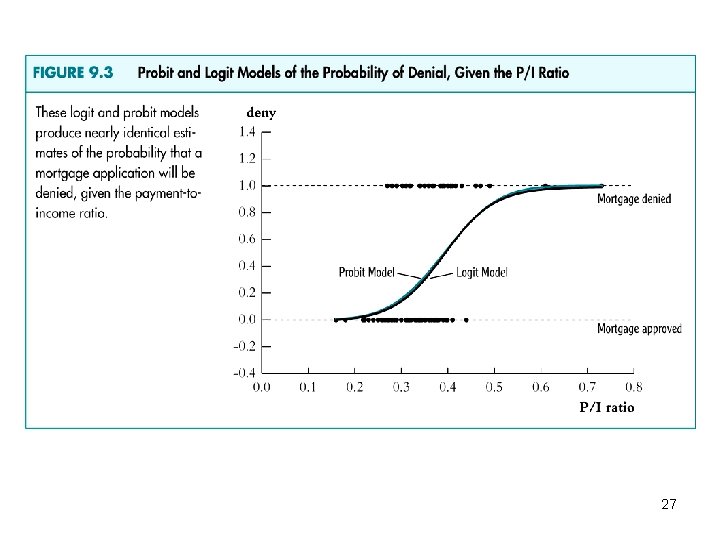

Probit and Logit models
• The predicted probabilities and the estimated marginal effects are non-linear functions of the parameter estimates.

Marginal effects
1. Linear probability model (LPM): ME =
2. Probit: ME =
3. Logit: ME =
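The expressions for the three marginal effects did not survive in the text of the slide. For reference, the standard textbook expressions for a continuous X, obtained by differentiating Pr(Y=1|X) with respect to X, are given below. The notation is an assumption made here: \Phi and \phi are the standard normal cdf (F in the slides) and density, and \Lambda is the logistic cdf.

```latex
\begin{align*}
\text{LPM:}    \quad & \Pr(Y=1 \mid X) = a + bX
               & \text{ME} &= b \\
\text{Probit:} \quad & \Pr(Y=1 \mid X) = \Phi(a + bX)
               & \text{ME} &= \phi(a + bX)\, b \\
\text{Logit:}  \quad & \Pr(Y=1 \mid X) = \Lambda(a + bX) = \frac{1}{1 + e^{-(a + bX)}}
               & \text{ME} &= \Lambda(a + bX)\,\bigl[1 - \Lambda(a + bX)\bigr]\, b
\end{align*}
```

Only the LPM effect is constant. In probit and logit the effect depends on the value of X at which it is evaluated, but it always has the sign of b.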

Estimation and inference in probit and logit models
• How do we estimate a and b?
• What’s their sampling distribution?
• Why can we follow our usual methods for inference?

Non-linear least squares
• We could use non-linear least squares to estimate a and b.
• But the minimization problem cannot be solved explicitly.
• We could solve it numerically.
• In practice it is not used because it is not efficient.
• A more efficient (lower-variance) estimator is maximum likelihood.

Maximum Likelihood
• The likelihood function is the conditional density of Y1, …, Yn given X1, …, Xn, as a function of the unknown parameters a and b.
• The ML estimator is the pair of values of a and b that maximizes the likelihood function.
• These are the values of a and b that best describe the full distribution of the data.

Properties of ML
• In large samples, ML is:
  – Consistent
  – Normally distributed
  – Efficient (the estimator with lowest variance).

ML in a Probit with one X
1. Density of Y1, given X1:
   - Then Y1, Y2 given X1, X2, etc.
2. The likelihood function is the joint density of Y1, …, Yn given X1, …, Xn, as a function of a and b:
3. Find the a and b that maximize f.
   - It cannot be maximized explicitly
   - We have to use numerical methods
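The formulas for these steps did not survive in the text of the slide. The standard expressions for a probit with one regressor and independent observations are given below; writing F for the standard normal cdf follows the slides, while the subscript notation is an assumption made here.

```latex
\begin{align*}
\text{Step 1:} \quad & \Pr(Y_1 = y_1 \mid X_1)
   = F(a + bX_1)^{y_1}\,\bigl[1 - F(a + bX_1)\bigr]^{1 - y_1} \\
\text{Step 2:} \quad & f(a, b)
   = \prod_{i=1}^{n} F(a + bX_i)^{y_i}\,\bigl[1 - F(a + bX_i)\bigr]^{1 - y_i} \\
\text{Step 3:} \quad & \ln f(a, b)
   = \sum_{i=1}^{n} \Bigl\{ y_i \ln F(a + bX_i) + (1 - y_i) \ln\bigl[1 - F(a + bX_i)\bigr] \Bigr\}
\end{align*}
```

Maximizing f and maximizing ln f give the same a and b, and software typically works with the log likelihood, which is the quantity reported in the Stata iteration log.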

Properties of ML Probit
• In large samples, the ML estimators of a and b are:
  – Consistent
  – Normally distributed
  – Their standard errors can be calculated, so we can do inference
  – We can calculate confidence intervals in the usual way.
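The numerical maximization and the resulting standard errors can be illustrated on simulated data. The sketch below is one possible approach, not the routine Stata uses: it maximizes the probit log likelihood by Fisher scoring (a Newton-type method) and takes standard errors from the inverse of the expected information matrix. The data are simulated with assumed true values a = -2.2 and b = 3, and all function names are chosen here for illustration.

```python
import math
import random

def pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def cdf(z):
    """Standard normal cdf (F in the slides)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def log_lik(a, b, xs, ys):
    """Probit log likelihood: sum of y*ln F(z) + (1 - y)*ln(1 - F(z))."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = cdf(a + b * x)
        total += math.log(p) if y == 1 else math.log(1.0 - p)
    return total

def fit_probit(xs, ys, max_iter=50, tol=1e-10):
    """Maximize the probit log likelihood by Fisher scoring."""
    a, b = 0.0, 0.0
    for _ in range(max_iter):
        g_a = g_b = i_aa = i_ab = i_bb = 0.0
        for x, y in zip(xs, ys):
            z = a + b * x
            p, d = cdf(z), pdf(z)
            w = d / (p * (1.0 - p))
            g_a += (y - p) * w        # score with respect to a
            g_b += (y - p) * w * x    # score with respect to b
            i_aa += d * w             # expected information matrix entries
            i_ab += d * w * x
            i_bb += d * w * x * x
        det = i_aa * i_bb - i_ab * i_ab
        v_aa, v_ab, v_bb = i_bb / det, -i_ab / det, i_aa / det  # inverse information
        step_a = v_aa * g_a + v_ab * g_b
        step_b = v_ab * g_a + v_bb * g_b
        a, b = a + step_a, b + step_b
        if abs(step_a) + abs(step_b) < tol:
            break
    # Standard errors from the inverse information computed in the final iteration.
    return a, b, math.sqrt(v_aa), math.sqrt(v_bb)

# Simulated data under assumed true parameters (not the mortgage data).
random.seed(0)
a_true, b_true = -2.2, 3.0
xs = [random.random() for _ in range(2000)]
ys = [1 if random.random() < cdf(a_true + b_true * x) else 0 for x in xs]

a_hat, b_hat, se_a, se_b = fit_probit(xs, ys)
print(a_hat, b_hat)                                   # ML estimates
print(se_a, se_b)                                     # standard errors
print(b_hat - 1.96 * se_b, b_hat + 1.96 * se_b)       # 95% confidence interval for b
```

With a large simulated sample the estimates should land close to the assumed true values, and the confidence interval is built in the usual way as estimate ± 1.96 standard errors.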

Maximum Likelihood Logit
• The only difference is that the standard normal cdf is replaced by the logistic cdf.
• The likelihood function is similar.
• The properties of the estimators are the same.
  – Consistency
  – Normality
  – Can calculate SE

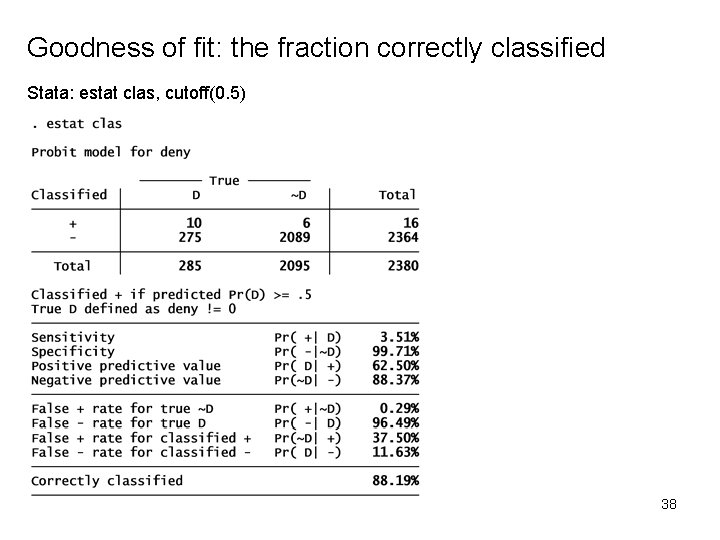

Goodness of fit
• The R-squared does not make sense now.
• We can use two other measures:
• The fraction correctly predicted.
• The pseudo-R-squared.

Goodness of fit: the fraction correctly classified
Stata: estat clas, cutoff(0.5)
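Both measures are simple to compute by hand. The sketch below assumes the pseudo-R-squared is 1 minus the ratio of the model's log likelihood to that of a constant-only model, and that the iteration 0 log likelihood in the Stata logs is the constant-only value; under those assumptions it reproduces the Pseudo R2 and LR chi2 figures reported in the outputs. The predicted probabilities used for the fraction correctly classified are hypothetical, and classifying an observation as 1 when its predicted probability is at or above the cutoff is a choice made in this sketch.

```python
def pseudo_r2(ll_model, ll_null):
    """Pseudo-R-squared: 1 - lnL(model) / lnL(constant-only model)."""
    return 1.0 - ll_model / ll_null

def lr_chi2(ll_model, ll_null):
    """Likelihood-ratio statistic: 2 * (lnL(model) - lnL(constant-only model))."""
    return 2.0 * (ll_model - ll_null)

def fraction_correct(probs, ys, cutoff=0.5):
    """Share of observations whose predicted class (prob >= cutoff) equals y."""
    hits = sum(1 for p, y in zip(probs, ys) if (1 if p >= cutoff else 0) == y)
    return hits / len(ys)

# Log likelihoods copied from the Stata outputs.
ll_null = -872.0853                      # iteration 0 (assumed constant-only model)
r2_probit = pseudo_r2(-831.79234, ll_null)
r2_probit_black = pseudo_r2(-797.13604, ll_null)
r2_logit = pseudo_r2(-830.09403, ll_null)
lr_probit = lr_chi2(-831.79234, ll_null)

print(round(r2_probit, 4), round(r2_probit_black, 4), round(r2_logit, 4))
print(round(lr_probit, 2))

# Hypothetical predicted probabilities and outcomes (not from the slides).
probs = [0.10, 0.30, 0.70, 0.55, 0.20]
ys = [0, 0, 1, 0, 1]
frac = fraction_correct(probs, ys, cutoff=0.5)
print(frac)
```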
