Bayes' Theorem: An extension of conditional probability


When to Apply Bayes' Theorem

Part of the challenge in applying Bayes' theorem involves recognizing the types of problems that warrant its use. You should consider Bayes' theorem when the following conditions exist.

• The sample space is partitioned into a set of mutually exclusive events { A1, A2, ..., An }.
• Within the sample space, there exists an event B, for which P(B) > 0.
• The analytical goal is to compute a conditional probability of the form P( Ak | B ).
• You know at least one of the two sets of probabilities described below.
  • P( Ak ∩ B ) for each Ak
  • P( Ak ) and P( B | Ak ) for each Ak
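The conditions above translate fairly directly into a small helper. The following is a minimal sketch, assuming the second set of inputs is known, P( Ak ) and P( B | Ak ) for each Ak. The function name `bayes_posteriors` and the example numbers are illustrative assumptions, not part of the original slides.

```python
def bayes_posteriors(priors, likelihoods):
    """Return P(A_k | B) for every event A_k of a partition.

    priors[k]      = P(A_k), which must sum to 1 over the partition
    likelihoods[k] = P(B | A_k)
    """
    if len(priors) != len(likelihoods):
        raise ValueError("need one likelihood per prior")
    if abs(sum(priors) - 1.0) > 1e-9:
        raise ValueError("priors must sum to 1 over the partition")
    # Joint probabilities: P(A_k ∩ B) = P(A_k) * P(B | A_k)
    joints = [p * l for p, l in zip(priors, likelihoods)]
    # Law of total probability: P(B) is the sum of the joint probabilities
    p_b = sum(joints)
    if p_b <= 0:
        raise ValueError("P(B) must be greater than 0")
    return [j / p_b for j in joints]


# Hypothetical numbers, chosen only to exercise the function
posteriors = bayes_posteriors([0.5, 0.5], [0.2, 0.6])
print(posteriors)
```

The checks mirror the listed conditions: the priors must cover the whole sample space, and P(B) must be positive for the conditional probability to be defined.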

Bayes’ Theorem

Suppose we have estimated prior probabilities for the events we are concerned with, and we then obtain new information. We would like a method for computing the revised, or posterior, probabilities. Bayes’ theorem gives us a way to do this.

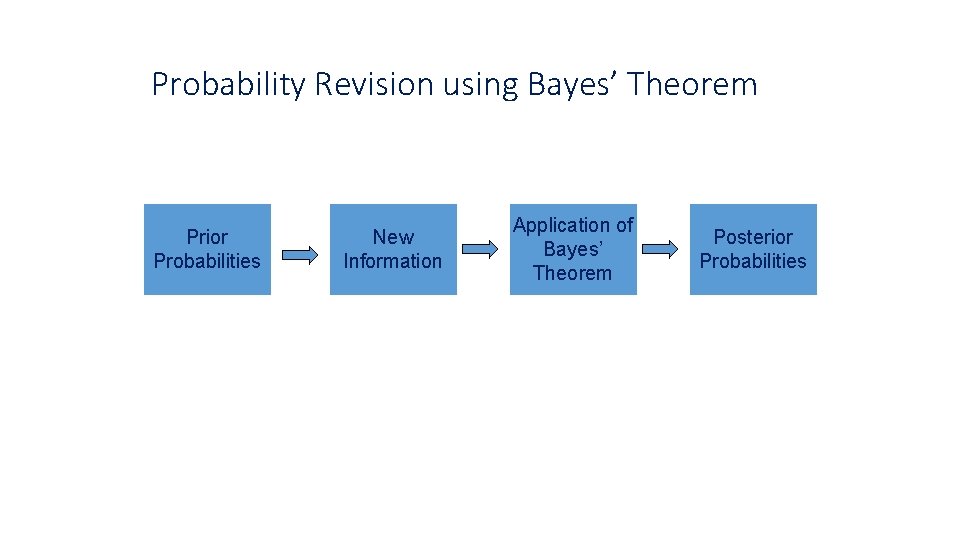

Probability Revision using Bayes’ Theorem: Prior Probabilities → New Information → Application of Bayes’ Theorem → Posterior Probabilities

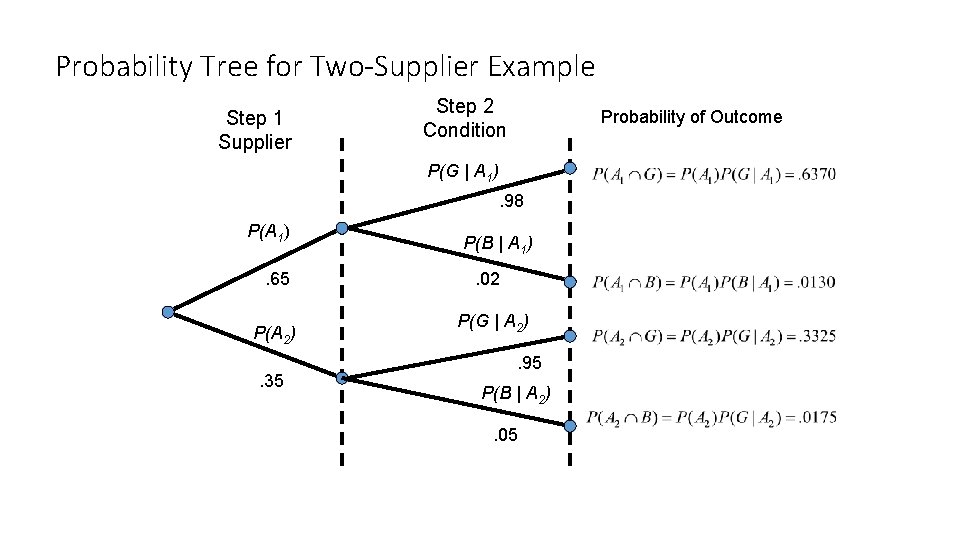

Application of Bayes’ Theorem

• Consider a manufacturing firm that receives shipments of parts from two suppliers.
• Let A1 denote the event that a part is received from supplier 1, and A2 the event that a part is received from supplier 2.

We get 65 percent of our parts from supplier 1 and 35 percent from supplier 2. Thus: P(A1) = 0.65 and P(A2) = 0.35

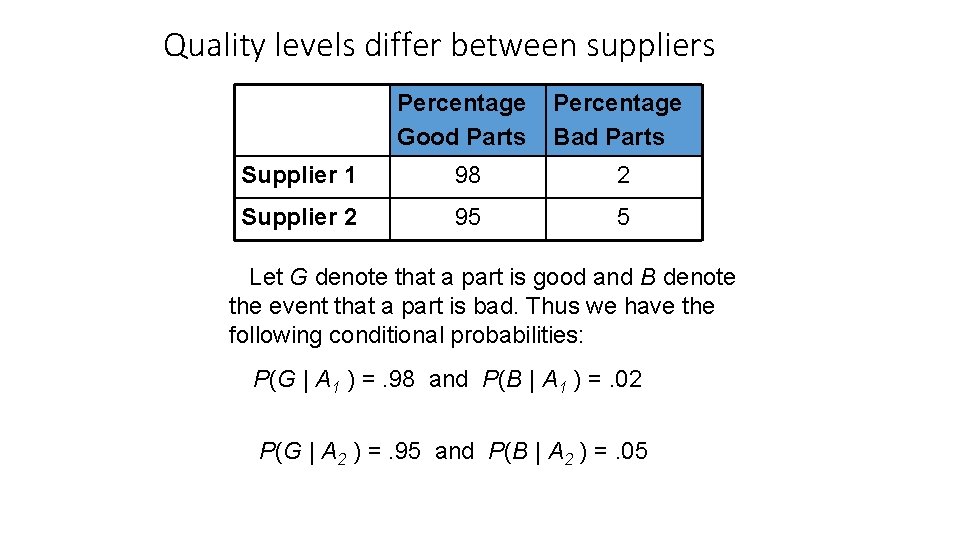

Quality levels differ between suppliers

| Supplier | Percentage Good Parts | Percentage Bad Parts |
|---|---|---|
| Supplier 1 | 98 | 2 |
| Supplier 2 | 95 | 5 |

Let G denote the event that a part is good and B denote the event that a part is bad. Thus we have the following conditional probabilities:

• P(G | A1) = 0.98 and P(B | A1) = 0.02
• P(G | A2) = 0.95 and P(B | A2) = 0.05

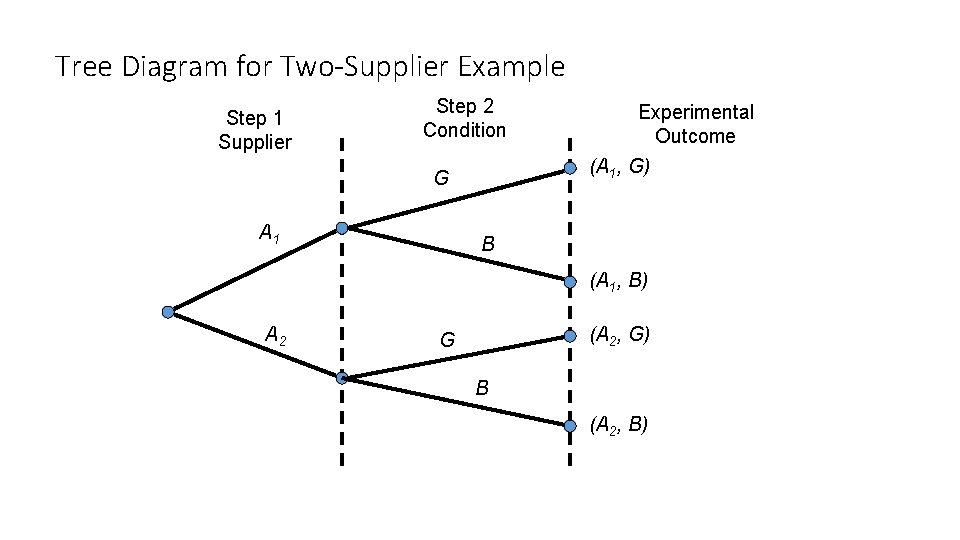

Tree Diagram for Two-Supplier Example

• Step 1 (Supplier): A1 or A2
• Step 2 (Condition): G or B
• Experimental outcomes: (A1, G), (A1, B), (A2, G), (A2, B)

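Each of the experimental outcomes is the intersection of 2 events. For example, the probability of selecting a part from supplier 1 that is good is given by the multiplication law:

$$P(A_1, G) = P(A_1 \cap G) = P(A_1)\,P(G \mid A_1) = (0.65)(0.98) = 0.6370$$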

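Probability Tree for Two-Supplier Example

Each path multiplies the Step 1 (Supplier) probability by the Step 2 (Condition) probability to give the probability of the outcome:

• P(A1) = 0.65, P(G | A1) = 0.98: P(A1 ∩ G) = 0.6370
• P(A1) = 0.65, P(B | A1) = 0.02: P(A1 ∩ B) = 0.0130
• P(A2) = 0.35, P(G | A2) = 0.95: P(A2 ∩ G) = 0.3325
• P(A2) = 0.35, P(B | A2) = 0.05: P(A2 ∩ B) = 0.0175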
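The outcome probabilities of the tree can be checked with a few lines of Python. This is only a sketch of the multiplication law applied to the numbers given for the two suppliers; the dictionary names are assumptions made for illustration.

```python
# Priors and conditional probabilities from the two-supplier example
p_supplier = {"A1": 0.65, "A2": 0.35}
p_condition = {
    "A1": {"G": 0.98, "B": 0.02},
    "A2": {"G": 0.95, "B": 0.05},
}

# Multiplication law: P(A_i ∩ C) = P(A_i) * P(C | A_i)
joint = {
    (supplier, cond): p_supplier[supplier] * p_cond
    for supplier, conds in p_condition.items()
    for cond, p_cond in conds.items()
}

for (supplier, cond), p in joint.items():
    print(f"P({supplier} ∩ {cond}) = {p:.4f}")

# The four outcomes cover the whole sample space, so they should sum to 1
total = sum(joint.values())
print(f"sum of all outcomes = {total:.4f}")
```

Summing the four branches is a quick sanity check that no path of the tree has been left out.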

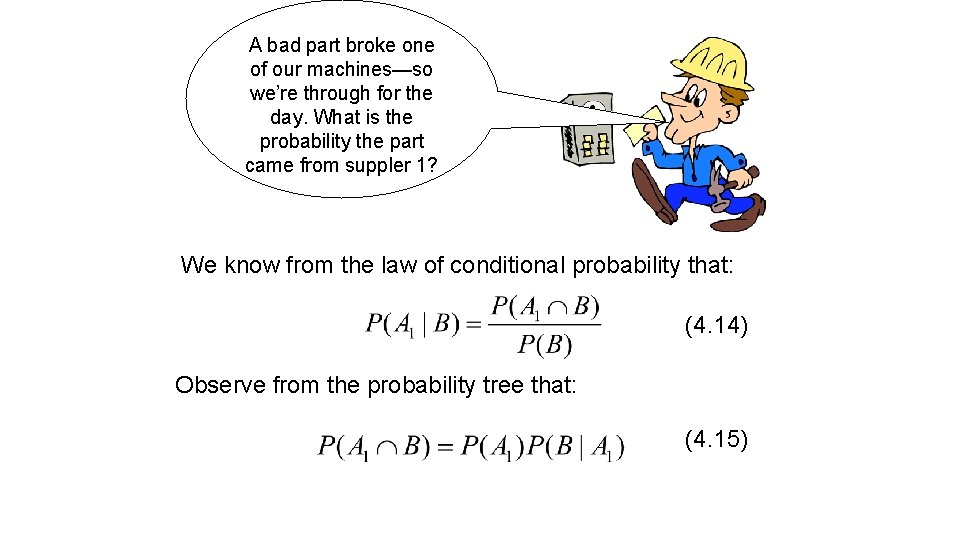

A bad part broke one of our machines, so we’re through for the day. What is the probability the part came from supplier 1? We know from the law of conditional probability that:

$$P(A_1 \mid B) = \frac{P(A_1 \cap B)}{P(B)} \tag{4.14}$$

Observe from the probability tree that:

$$P(A_1 \cap B) = P(A_1)\,P(B \mid A_1) \tag{4.15}$$

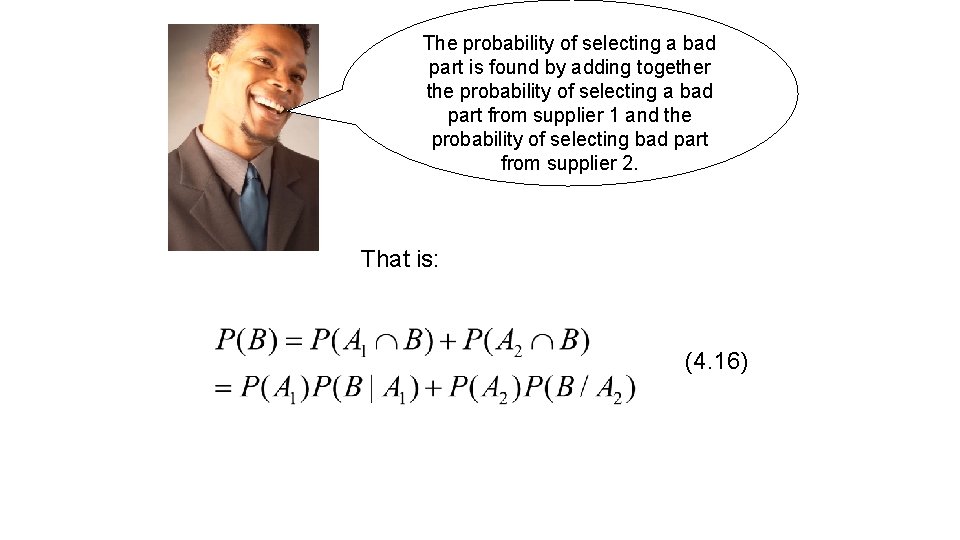

The probability of selecting a bad part is found by adding together the probability of selecting a bad part from supplier 1 and the probability of selecting a bad part from supplier 2. That is:

$$P(B) = P(A_1 \cap B) + P(A_2 \cap B) = P(A_1)\,P(B \mid A_1) + P(A_2)\,P(B \mid A_2) \tag{4.16}$$

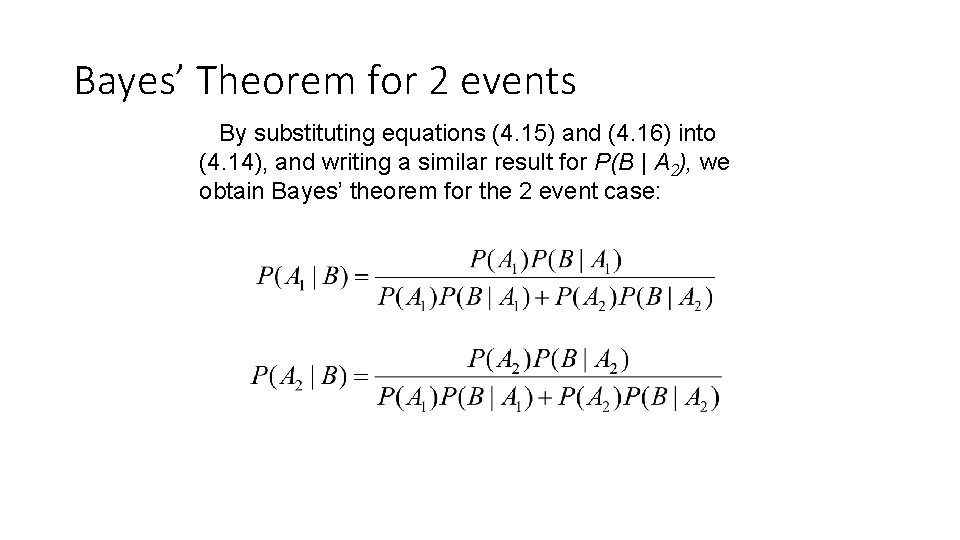

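Bayes’ Theorem for 2 events

By substituting equations (4.15) and (4.16) into (4.14), and writing a similar result for P(A2 | B), we obtain Bayes’ theorem for the 2-event case:

$$P(A_1 \mid B) = \frac{P(A_1)\,P(B \mid A_1)}{P(A_1)\,P(B \mid A_1) + P(A_2)\,P(B \mid A_2)}$$

$$P(A_2 \mid B) = \frac{P(A_2)\,P(B \mid A_2)}{P(A_1)\,P(B \mid A_1) + P(A_2)\,P(B \mid A_2)}$$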

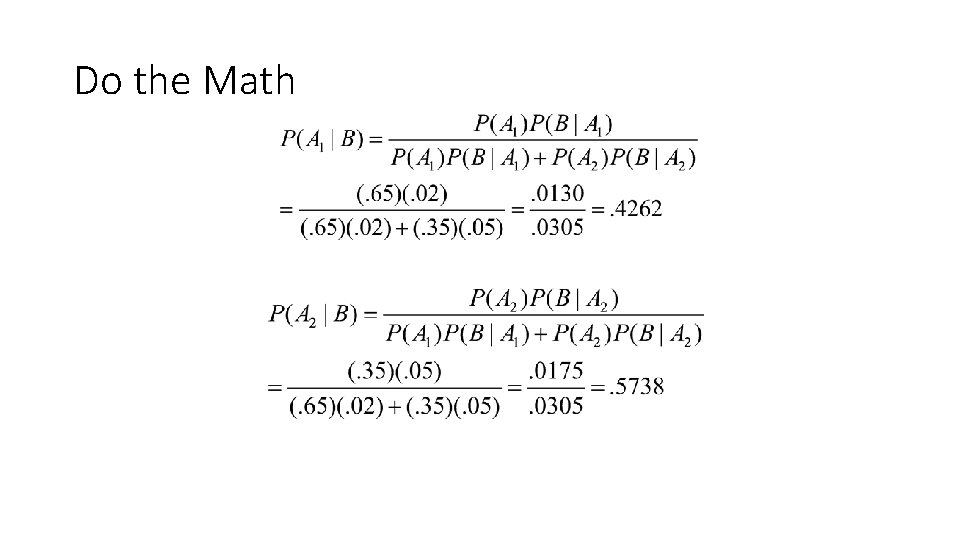

Do the Math

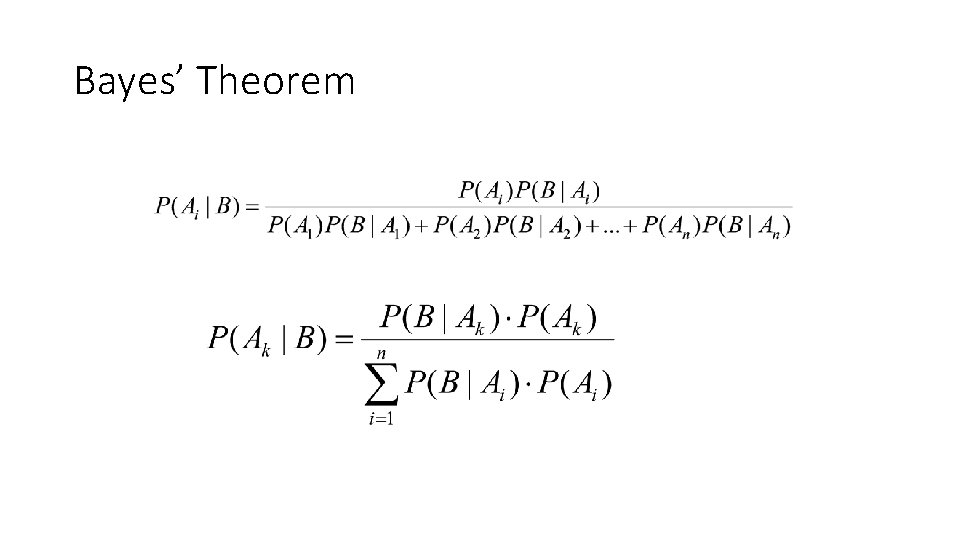
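The arithmetic for the two-supplier example can be reproduced as follows. This is a short sketch that plugs the stated probabilities into the two-event form of Bayes' theorem; the variable names are chosen here for illustration.

```python
# Prior probabilities of each supplier
p_a1, p_a2 = 0.65, 0.35
# Probability of a bad part, given the supplier
p_b_given_a1, p_b_given_a2 = 0.02, 0.05

# P(B): total probability of a bad part
p_b = p_a1 * p_b_given_a1 + p_a2 * p_b_given_a2

# Posterior probabilities of each supplier, given that the part is bad
p_a1_given_b = p_a1 * p_b_given_a1 / p_b
p_a2_given_b = p_a2 * p_b_given_a2 / p_b

print(f"P(B)      = {p_b:.4f}")           # 0.0305
print(f"P(A1 | B) = {p_a1_given_b:.4f}")  # 0.4262
print(f"P(A2 | B) = {p_a2_given_b:.4f}")  # 0.5738
```

Although supplier 1 provides 65 percent of the parts, a bad part is more likely to have come from supplier 2, because supplier 2's defect rate is higher.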

Bayes’ Theorem

Bayes' Theorem

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

• P(A) – the PRIOR PROBABILITY – represents your knowledge about A before you have gathered data.
• e.g. if 0.01 of a population has schizophrenia, then the probability that a person drawn at random would have schizophrenia is 0.01.

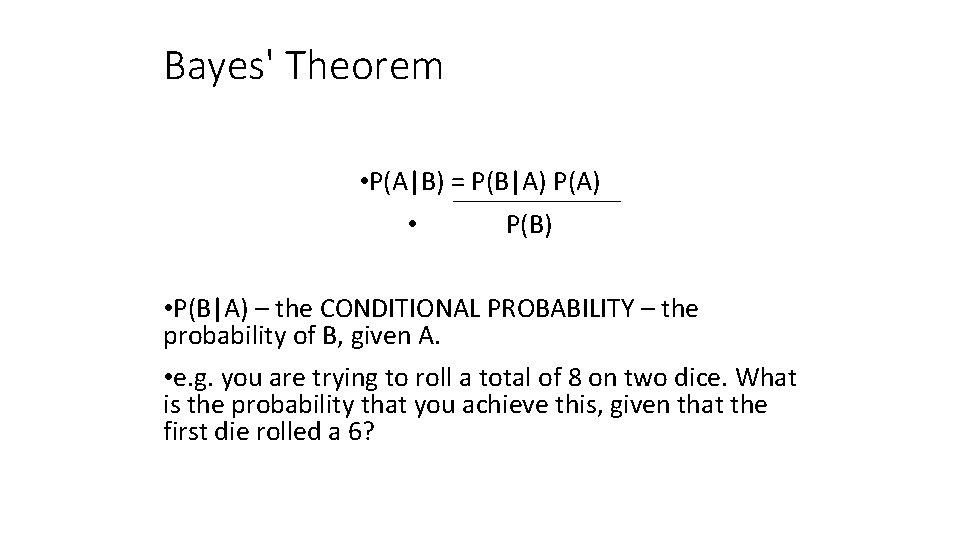

Bayes' Theorem

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

• P(B|A) – the CONDITIONAL PROBABILITY – the probability of B, given A.
• e.g. you are trying to roll a total of 8 on two dice. What is the probability that you achieve this, given that the first die rolled a 6?
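The dice question can be answered by enumerating the sample space. The sketch below assumes two fair six-sided dice and counts outcomes directly.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely (first die, second die) outcomes
outcomes = list(product(range(1, 7), repeat=2))

# Condition on the first die showing a 6
first_is_6 = [o for o in outcomes if o[0] == 6]
total_8_given_6 = [o for o in first_is_6 if sum(o) == 8]

p_given = Fraction(len(total_8_given_6), len(first_is_6))
p_uncond = Fraction(sum(1 for o in outcomes if sum(o) == 8), len(outcomes))

print(p_given)   # 1/6
print(p_uncond)  # 5/36
```

Knowing that the first die is a 6 raises the probability of a total of 8 from 5/36 to 1/6, since only a 2 on the second die will do.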

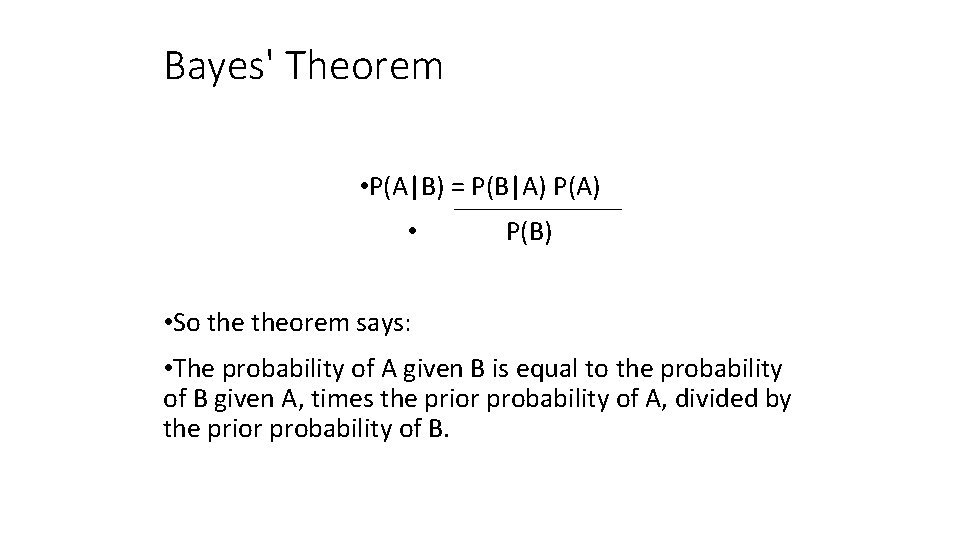

Bayes' Theorem

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

So the theorem says:

• The probability of A given B is equal to the probability of B given A, times the prior probability of A, divided by the prior probability of B.

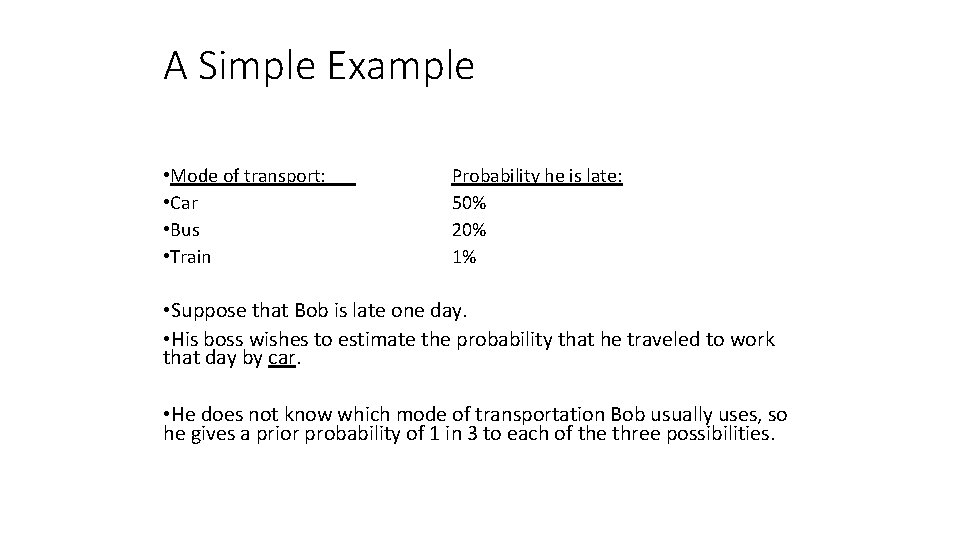

A Simple Example

| Mode of transport | Probability he is late |
|---|---|
| Car | 50% |
| Bus | 20% |
| Train | 1% |

• Suppose that Bob is late one day.
• His boss wishes to estimate the probability that he traveled to work that day by car.
• He does not know which mode of transportation Bob usually uses, so he gives a prior probability of 1 in 3 to each of the three possibilities.

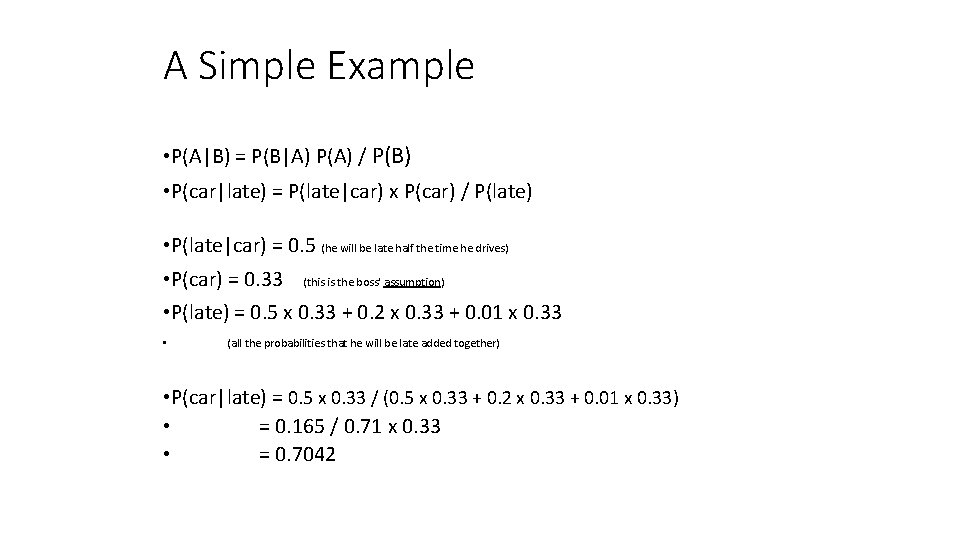

A Simple Example

• P(A|B) = P(B|A) P(A) / P(B)
• P(car|late) = P(late|car) x P(car) / P(late)
• P(late|car) = 0.5 (he will be late half the time he drives)
• P(car) = 0.33 (this is the boss' assumption)
• P(late) = 0.5 x 0.33 + 0.2 x 0.33 + 0.01 x 0.33 (the probabilities of being late by each mode, each weighted by its prior and added together)
• P(car|late) = 0.5 x 0.33 / (0.5 x 0.33 + 0.2 x 0.33 + 0.01 x 0.33)
• = 0.165 / (0.71 x 0.33)
• = 0.7042
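The same calculation can be carried out for all three modes of transport at once. The sketch below uses exact fractions, with the prior taken as exactly 1/3 rather than the rounded 0.33; the dictionary names are illustrative assumptions.

```python
from fractions import Fraction

# Boss's prior: each mode of transport is equally likely
prior = {mode: Fraction(1, 3) for mode in ("car", "bus", "train")}
# Probability that Bob is late, given the mode of transport
p_late_given = {
    "car": Fraction(50, 100),
    "bus": Fraction(20, 100),
    "train": Fraction(1, 100),
}

# P(late): total probability of being late over all modes
p_late = sum(prior[m] * p_late_given[m] for m in prior)

# Posterior probability of each mode, given that Bob was late
posterior = {m: prior[m] * p_late_given[m] / p_late for m in prior}

for mode, p in posterior.items():
    print(f"P({mode} | late) = {p} = {float(p):.4f}")
# P(car | late) = 50/71 = 0.7042
# P(bus | late) = 20/71 = 0.2817
# P(train | late) = 1/71 = 0.0141
```

Because the three priors are equal, they cancel, and each posterior is just that mode's lateness probability divided by 0.71.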

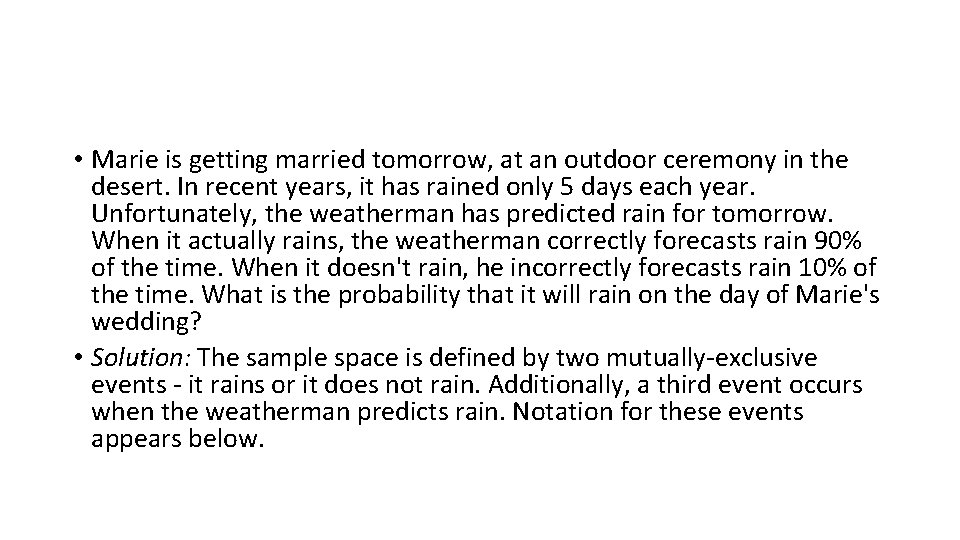

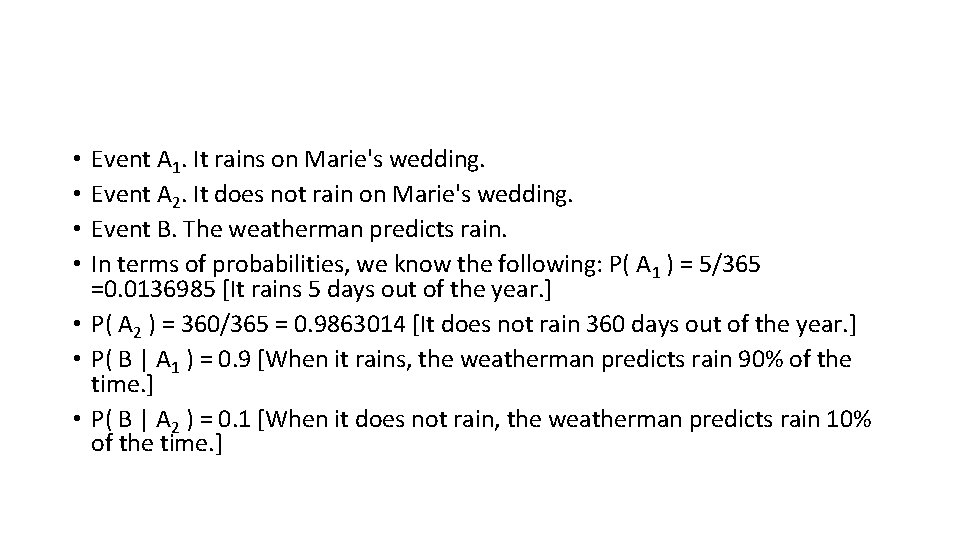

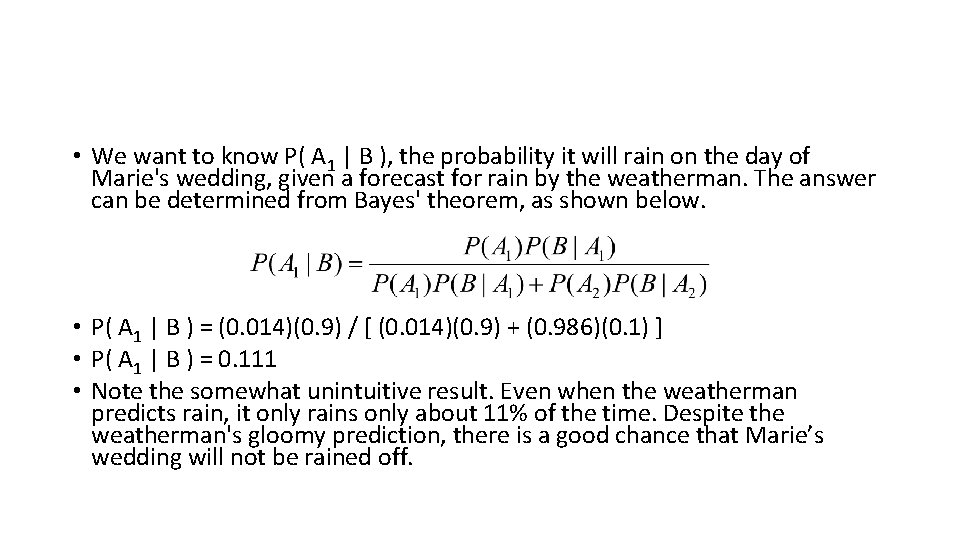

Marie is getting married tomorrow, at an outdoor ceremony in the desert. In recent years, it has rained only 5 days each year. Unfortunately, the weatherman has predicted rain for tomorrow. When it actually rains, the weatherman correctly forecasts rain 90% of the time. When it doesn't rain, he incorrectly forecasts rain 10% of the time. What is the probability that it will rain on the day of Marie's wedding?

Solution: The sample space is defined by two mutually exclusive events: it rains or it does not rain. Additionally, a third event occurs when the weatherman predicts rain. Notation for these events appears below.

• Event A1. It rains on Marie's wedding.
• Event A2. It does not rain on Marie's wedding.
• Event B. The weatherman predicts rain.

In terms of probabilities, we know the following:

• P( A1 ) = 5/365 = 0.0136986 [It rains 5 days out of the year.]
• P( A2 ) = 360/365 = 0.9863014 [It does not rain 360 days out of the year.]
• P( B | A1 ) = 0.9 [When it rains, the weatherman predicts rain 90% of the time.]
• P( B | A2 ) = 0.1 [When it does not rain, the weatherman predicts rain 10% of the time.]

We want to know P( A1 | B ), the probability it will rain on the day of Marie's wedding, given a forecast for rain by the weatherman. The answer can be determined from Bayes' theorem, as shown below.

• P( A1 | B ) = P( A1 ) P( B | A1 ) / [ P( A1 ) P( B | A1 ) + P( A2 ) P( B | A2 ) ]
• P( A1 | B ) = (0.0137)(0.9) / [ (0.0137)(0.9) + (0.9863)(0.1) ]
• P( A1 | B ) = 0.111

Note the somewhat unintuitive result. Even when the weatherman predicts rain, it rains only about 11% of the time. Despite the weatherman's gloomy prediction, there is a good chance that Marie’s wedding will not be rained off.
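Working with exact fractions shows where the 0.111 comes from without any rounding of the priors. This is a small sketch using the probabilities stated in the problem; the variable names are assumptions made for illustration.

```python
from fractions import Fraction

p_rain = Fraction(5, 365)                    # P(A1): it rains
p_dry = Fraction(360, 365)                   # P(A2): it does not rain
p_forecast_given_rain = Fraction(9, 10)      # P(B | A1)
p_forecast_given_dry = Fraction(1, 10)       # P(B | A2)

# P(B): total probability that rain is forecast
p_forecast = p_rain * p_forecast_given_rain + p_dry * p_forecast_given_dry

# P(A1 | B): probability of rain, given a forecast of rain
p_rain_given_forecast = p_rain * p_forecast_given_rain / p_forecast

print(p_rain_given_forecast)                  # 1/9
print(f"{float(p_rain_given_forecast):.4f}")  # 0.1111
```

The exact answer is 1/9: for every rainy day that is correctly forecast, there are eight dry days with a mistaken rain forecast.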

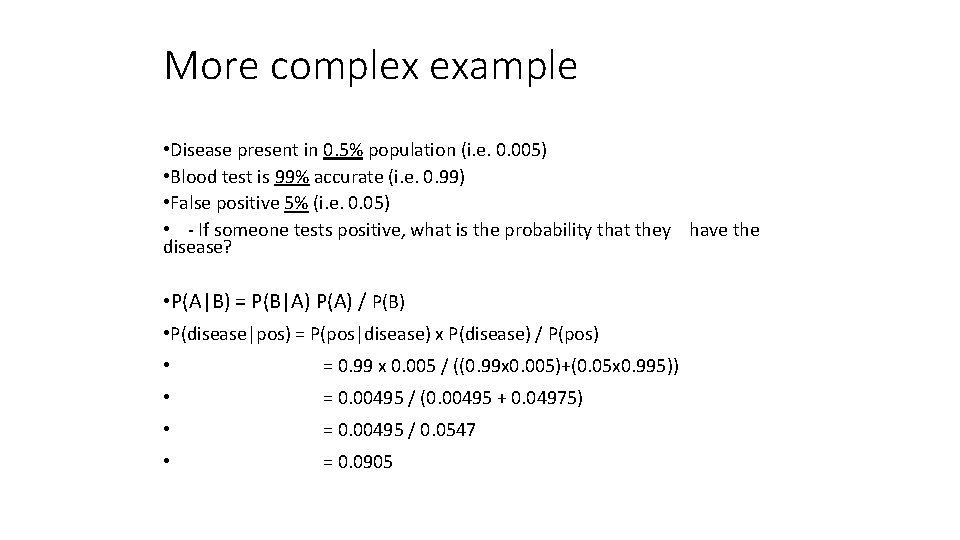

More complex example

• Disease present in 0.5% of the population, i.e. P(disease) = 0.005
• Blood test is 99% accurate: it is positive for 99% of people who have the disease, i.e. P(pos | disease) = 0.99
• False positive rate is 5%, i.e. P(pos | no disease) = 0.05
• If someone tests positive, what is the probability that they have the disease?
• P(A|B) = P(B|A) P(A) / P(B)
• P(disease|pos) = P(pos|disease) x P(disease) / P(pos)
• = 0.99 x 0.005 / ((0.99 x 0.005) + (0.05 x 0.995))
• = 0.00495 / (0.00495 + 0.04975)
• = 0.00495 / 0.0547
• = 0.0905

What does this mean?

• If someone tests positive for the disease, they have a 0.0905 chance of having the disease.
• i.e. there is just a 9% chance that they have it.
• Even though the test is very accurate, the condition is so rare that most positive results are false positives, so the test on its own may not be useful.
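One way to make the 9% figure less surprising is to count people instead of multiplying probabilities. The sketch below assumes a hypothetical population of 100,000 (a size chosen only so that every count is a whole number) and applies the rates from the example.

```python
population = 100_000  # hypothetical population size, chosen for round numbers

# 0.5% of the population has the disease
diseased = population * 5 // 1000   # 500 people
healthy = population - diseased     # 99,500 people

# The test is positive for 99% of diseased people and 5% of healthy people
true_pos = diseased * 99 // 100     # 495 people
false_pos = healthy * 5 // 100      # 4,975 people

# Among everyone who tests positive, the share who actually have the disease
p_disease_given_pos = true_pos / (true_pos + false_pos)

print(true_pos, false_pos)
print(f"{p_disease_given_pos:.4f}")  # 0.0905
```

The healthy group is so much larger that its 5% of false positives outnumbers the true positives by roughly ten to one.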

So why is Bayesian probability useful?

• It allows us to put probability values on unknowns. We can make logical inferences even regarding uncertain statements.
• This can show counterintuitive results, e.g. that the disease test may not be useful.

Definitions of some more statistics terms

• A parameter is a numerical measurement describing some characteristic of a population.
• A statistic is a numerical measurement describing some characteristic of a sample.
• Quantitative data consist of numbers representing counts or measurements.
• Qualitative (or categorical or attribute) data can be separated into different categories that are distinguished by some nonnumeric characteristic.
