Anomaly detection through Bayesian Support Vector Machines

Vasilis A. Sotiris, Michael Pecht

AMSC 663 Project Proposal

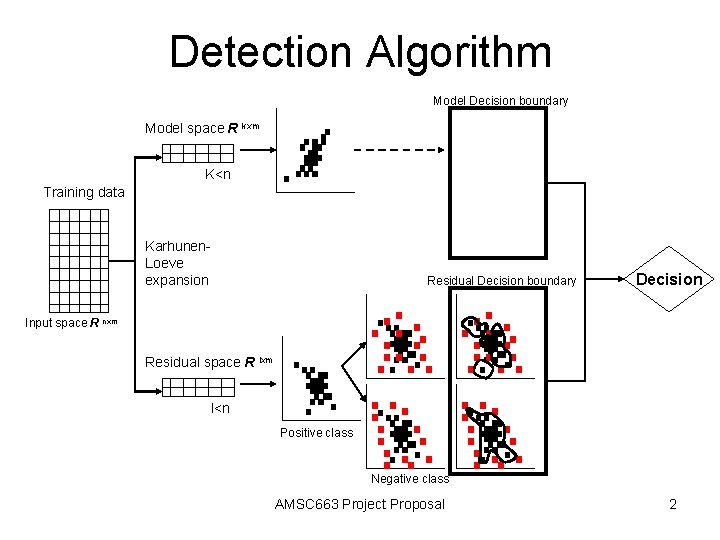

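Detection Algorithm

[Figure: flow diagram of the detection algorithm]
• Training data in the input space R^(n×m)
• Karhunen-Loève expansion splits the data into:
  – Model space R^(k×m), k < n
  – Residual space R^(l×m), l < n
• A model decision boundary and a residual decision boundary are formed in the respective spaces
• Decision: positive class or negative class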
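
One common way to write the split into model and residual spaces is sketched below. It assumes that P_k holds the first k Karhunen-Loève basis vectors (eigenvectors of the training covariance) as orthonormal columns and that the residual space is the orthogonal complement, so that l = n - k. Neither the symbol P_k nor that relation between l and k is stated on the slide.

```latex
x \;=\; \underbrace{P_k P_k^{\top} x}_{\text{model part}}
\;+\; \underbrace{\left(I - P_k P_k^{\top}\right) x}_{\text{residual part}},
\qquad P_k \in \mathbb{R}^{n \times k},\quad P_k^{\top} P_k = I_k
```

Under these assumptions each observation vector x is decomposed exactly, and a separate decision boundary can be trained on each of the two parts.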

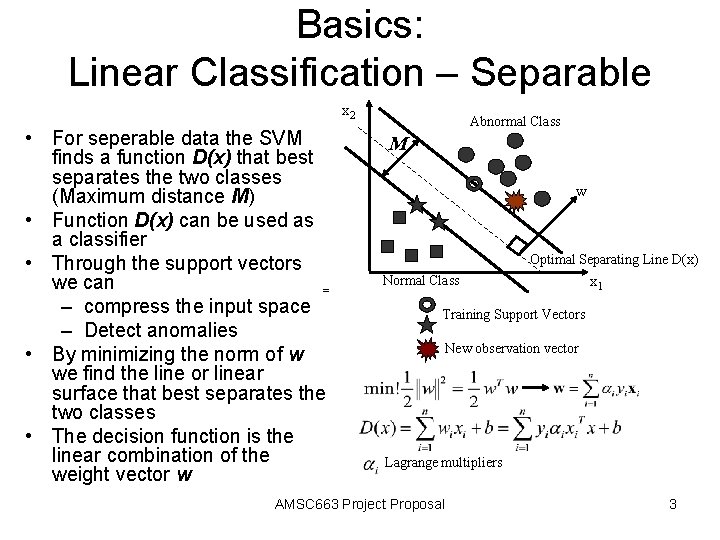

Basics: Linear Classification – Separable

• For separable data the SVM finds a function D(x) that best separates the two classes (maximum margin M)
• Function D(x) can be used as a classifier
• Through the support vectors we can
  – compress the input space
  – detect anomalies
• By minimizing the norm of w we find the line or linear surface that best separates the two classes
• The decision function D(x) is linear in x and is defined by the weight vector w, which is a linear combination of the training support vectors

[Figure: normal and abnormal classes in the (x1, x2) plane, with weight vector w, margin M, and the optimal separating line D(x). Equation annotations: training support vectors, new observation vector, Lagrange multipliers]
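
The role of the support vectors and Lagrange multipliers in D(x) can be illustrated with a minimal sketch. The two support vectors, their multipliers, and the offset below are a hand-solved toy problem, not data from the proposal, and the helper names (`decision`, `classify`, `weight_vector`) are hypothetical.

```python
# Toy hard-margin SVM with two support vectors. All values are worked out
# by hand for illustration and are not taken from the proposal.
support_vectors = [(1.0, 1.0), (-1.0, -1.0)]   # training support vectors x_i
labels = [+1, -1]                              # class labels y_i
alphas = [0.25, 0.25]                          # Lagrange multipliers alpha_i
bias = 0.0                                     # offset b


def dot(u, v):
    """Euclidean inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def decision(x):
    """D(x) = sum_i alpha_i * y_i * <x_i, x> + b for a new observation x."""
    return sum(a * y * dot(sv, x)
               for a, y, sv in zip(alphas, labels, support_vectors)) + bias


def classify(x):
    """Class label taken from the sign of D(x)."""
    return 1 if decision(x) >= 0 else -1


def weight_vector():
    """w = sum_i alpha_i * y_i * x_i, the explicit linear weight vector."""
    dim = len(support_vectors[0])
    return [sum(a * y * sv[j]
                for a, y, sv in zip(alphas, labels, support_vectors))
            for j in range(dim)]
```

In this toy case D(x) equals exactly +1 and -1 at the two support vectors, and only those two points are needed to evaluate the classifier, which is the sense in which the support vectors compress the training set.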

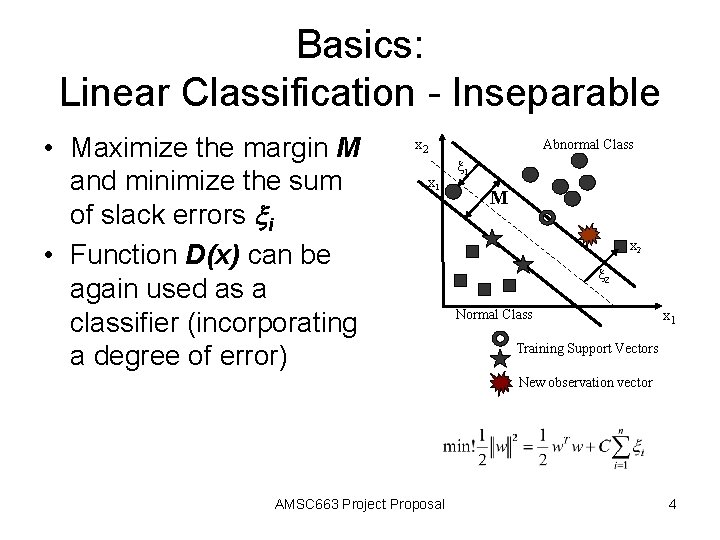

Basics: Linear Classification - Inseparable

• Maximize the margin M and minimize the sum of slack errors ξi
• Function D(x) can again be used as a classifier (incorporating a degree of error)

[Figure: normal and abnormal classes in the (x1, x2) plane, with margin M and slack errors ξ1 and ξ2 for points on the wrong side of the margin. Equation annotations: training support vectors, new observation vector]
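
The trade-off between margin and slack is usually written as the standard soft-margin problem below. This is the textbook formulation rather than an equation recovered from the slide; the penalty weight C is the user-defined parameter the deck refers to, and N (the number of training points) is notation assumed here.

```latex
\min_{w,\,b,\,\xi}\ \frac{1}{2}\lVert w\rVert^{2} + C\sum_{i=1}^{N}\xi_i
\quad\text{subject to}\quad
y_i\left(w^{\top}x_i + b\right) \ge 1 - \xi_i,\qquad \xi_i \ge 0,\qquad i = 1,\dots,N
```

Since the margin is M = 2/‖w‖, minimizing ‖w‖ maximizes M, while the second term penalizes the total slack.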

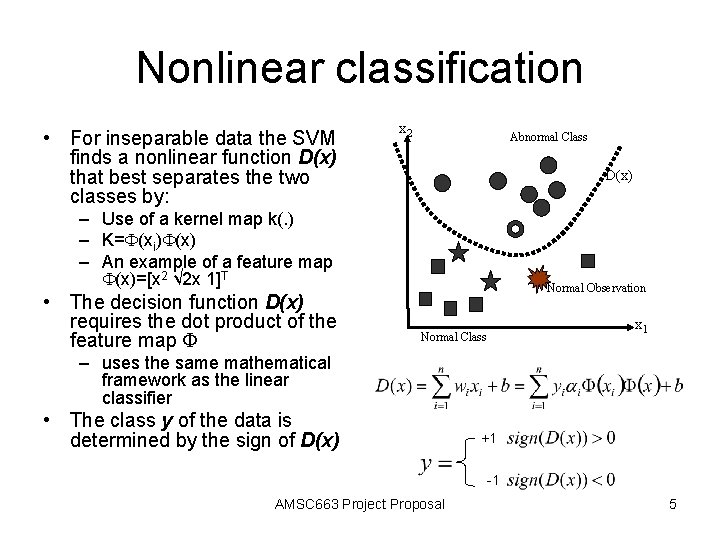

Nonlinear classification

• For inseparable data the SVM finds a nonlinear function D(x) that best separates the two classes by:
  – Use of a kernel map k(·)
  – K(xi, x) = Φ(xi)ᵀΦ(x)
  – An example of a feature map: Φ(x) = [x², √2·x, 1]ᵀ
• The decision function D(x) requires the dot product of the feature map Φ
  – uses the same mathematical framework as the linear classifier
• The class y of the data is determined by the sign of D(x): +1 or -1

[Figure: nonlinear boundary D(x) in the (x1, x2) plane separating the normal class from the abnormal class, with a normal observation marked]
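
The kernel identity can be checked numerically with a short sketch. It assumes the example feature map reads [x², √2·x, 1] for a scalar input and pairs it with the degree-2 polynomial kernel (x·z + 1)², which is the kernel that this map induces; the slide itself does not name the kernel.

```python
import math


def feature_map(x):
    """Quadratic feature map for a scalar input: [x^2, sqrt(2)*x, 1]."""
    return [x * x, math.sqrt(2.0) * x, 1.0]


def kernel(x, z):
    """Degree-2 polynomial kernel matching feature_map (assumed pairing)."""
    return (x * z + 1.0) ** 2


def dot(u, v):
    """Euclidean inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


# Dot product taken explicitly in the 3-dimensional feature space ...
explicit = dot(feature_map(2.0), feature_map(3.0))
# ... versus the same quantity computed directly in the input space.
implicit = kernel(2.0, 3.0)
```

Both values agree up to floating-point rounding, which is why D(x) never needs the feature map explicitly: the kernel supplies the dot product.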

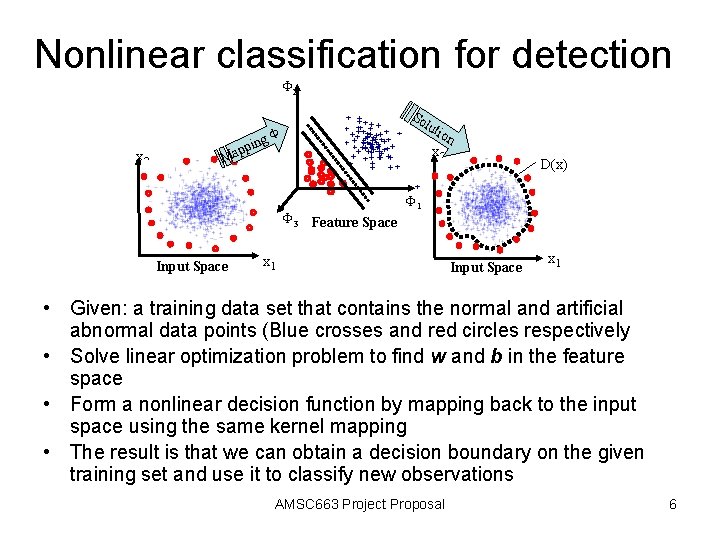

Nonlinear classification for detection

[Figure: mapping Φ from the input space (x1, x2) to the feature space (Φ1, Φ2, Φ3), and the solution D(x) mapped back to the input space]

• Given: a training data set that contains the normal and artificial abnormal data points (blue crosses and red circles, respectively)
• Solve a linear optimization problem to find w and b in the feature space
• Form a nonlinear decision function by mapping back to the input space using the same kernel mapping
• The result is a decision boundary on the given training set that can be used to classify new observations
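
A minimal sketch of the resulting nonlinear decision function follows. The one-dimensional training points, multipliers, and offset are a hand-solved toy problem for the degree-2 polynomial kernel, not results from the proposal, and labeling the normal class +1 is an assumption made here.

```python
def kernel(x, z):
    """Degree-2 polynomial kernel for scalar inputs (assumed choice)."""
    return (x * z + 1.0) ** 2


# Toy data: one normal point at 0 and two artificial abnormal points at +/-2.
train_x = [0.0, 2.0, -2.0]
train_y = [+1, -1, -1]               # +1 = normal (assumed), -1 = abnormal
alphas = [0.125, 0.0625, 0.0625]     # hand-solved Lagrange multipliers
bias = 1.0                           # hand-solved offset b


def decision(x):
    """D(x) = sum_i alpha_i * y_i * k(x_i, x) + b, evaluated in input space."""
    return sum(a * y * kernel(xi, x)
               for a, y, xi in zip(alphas, train_y, train_x)) + bias


def classify(x):
    """Class label taken from the sign of D(x)."""
    return 1 if decision(x) >= 0 else -1
```

For these values D(x) simplifies to 1 - x²/2, so the problem that is linear in the feature space yields a boundary at x = ±√2 that encloses the normal point in the input space.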

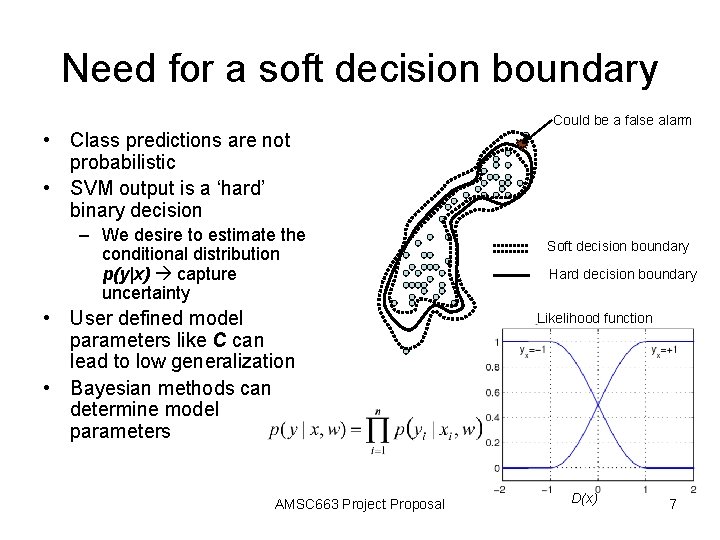

Need for a soft decision boundary

• Class predictions are not probabilistic
• SVM output is a 'hard' binary decision
  – We desire to estimate the conditional distribution p(y|x) to capture uncertainty
• User-defined model parameters like C can lead to poor generalization
• Bayesian methods can determine model parameters

[Figure: hard decision boundary versus soft decision boundary, with the likelihood function plotted against D(x); a point near the hard boundary is annotated "Could be a false alarm"]
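
One way to turn D(x) into an estimate of p(y|x) is a logistic link, sketched below. The proposal does not state which likelihood it uses, so the logistic form and the `scale` parameter are assumptions made for illustration.

```python
import math


def prob_positive(d, scale=1.0):
    """Estimate p(y = +1 | x) from the decision value d = D(x).

    Uses a logistic link (an assumed choice), written so that math.exp is
    only ever called with a non-positive argument to avoid overflow.
    """
    z = scale * d
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)
```

A point on the hard boundary (D(x) = 0) gets probability 0.5 rather than a definite label, so an observation that barely crosses the boundary can be treated as uncertain instead of raising an alarm outright.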

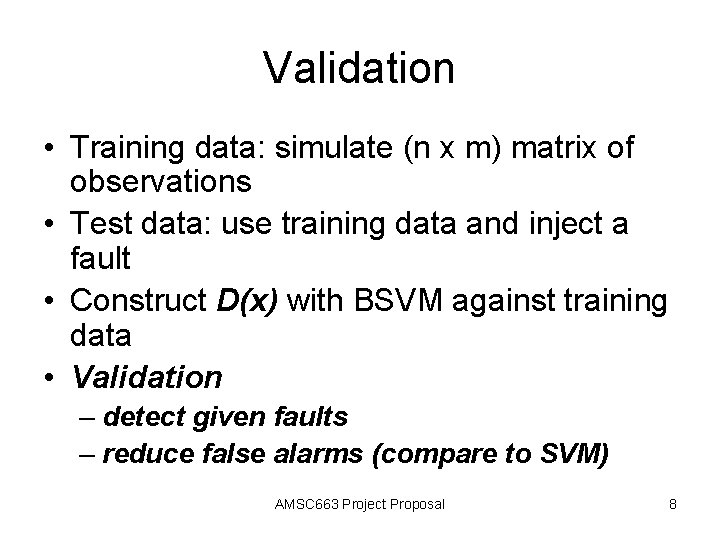

Validation

• Training data: simulate an (n x m) matrix of observations
• Test data: use the training data and inject a fault
• Construct D(x) with the BSVM against the training data
• Validation
  – detect the given faults
  – reduce false alarms (compared to the SVM)
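
The two validation criteria can be scored as a detection rate and a false-alarm rate. The sketch below assumes faults are labeled -1 and normal observations +1; the function name and the example labels are hypothetical and are not results from the proposal.

```python
def detection_metrics(true_labels, predicted_labels, fault_label=-1):
    """Return (detection_rate, false_alarm_rate) for a labeled test set.

    detection_rate   = fraction of true faults that were flagged as faults
    false_alarm_rate = fraction of normal observations flagged as faults
    """
    pairs = list(zip(true_labels, predicted_labels))
    on_faults = [p for t, p in pairs if t == fault_label]
    on_normals = [p for t, p in pairs if t != fault_label]
    detection_rate = (
        sum(p == fault_label for p in on_faults) / len(on_faults)
        if on_faults else 0.0
    )
    false_alarm_rate = (
        sum(p == fault_label for p in on_normals) / len(on_normals)
        if on_normals else 0.0
    )
    return detection_rate, false_alarm_rate


# Made-up labels: four normal observations followed by two injected faults.
truth = [+1, +1, +1, +1, -1, -1]
predicted = [+1, +1, -1, +1, -1, +1]
rates = detection_metrics(truth, predicted)
```

Running the same function on the SVM and BSVM predictions for one test set gives a like-for-like comparison of false alarms at a given detection rate.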

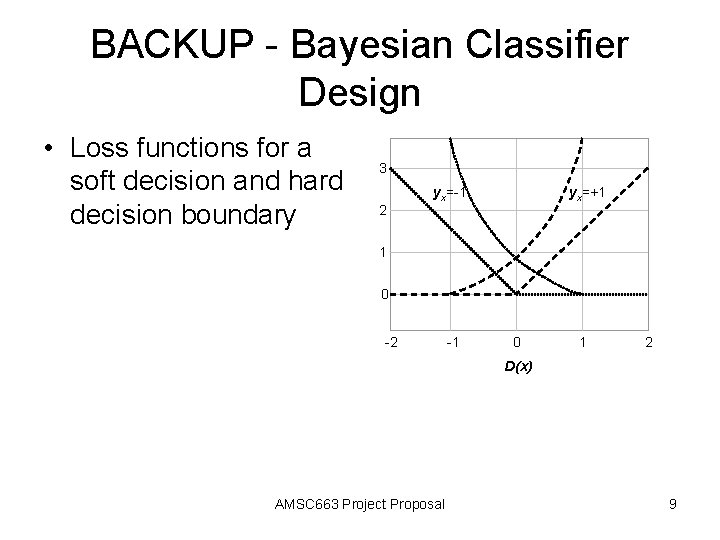

BACKUP - Bayesian Classifier Design

• Loss functions for a soft decision and hard decision boundary

[Figure: loss (vertical axis, 0 to 3) versus D(x) (horizontal axis, -2 to 2), with curves labeled yx = -1 and yx = +1]
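
The slide does not say which loss functions are plotted. As a sketch under that caveat, the code below pairs the hinge loss (the usual SVM loss, standing in for the hard boundary) with the logistic negative log-likelihood (a common probabilistic loss, standing in for the soft boundary). Both choices are assumptions.

```python
import math


def hinge_loss(y, d):
    """SVM hinge loss max(0, 1 - y*D(x)) for label y in {-1, +1}."""
    return max(0.0, 1.0 - y * d)


def logistic_loss(y, d):
    """Negative log-likelihood log(1 + exp(-y*D(x))) under a logistic link.

    Written as max(z, 0) + log1p(exp(-|z|)) with z = -y*d so that the
    exponential never overflows.
    """
    z = -y * d
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))
```

The hinge loss is exactly zero once y·D(x) ≥ 1, whereas the logistic loss stays positive everywhere and decays smoothly, which is what makes the resulting boundary soft.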
