An overview of InfiniBand, Reykjavik, June 24th

An overview of InfiniBand
Reykjavik, June 24th 2008
Eric Nivel, eric@ru.is
Reykjavik University, Dept. of Computer Science
Center for Analysis and Design of Intelligent Agents, cadia.ru.is

Outline: Motivation, Technologies, Hardware, Software, Conclusion

Motivation

- HPC: large data sets, large numbers of messages, n-to-n connectivity
- Lowest possible latencies: physical layer, HCA, switch, protocol overhead (CPU load, memory operations)
- High bandwidth
- Scalability
- Low cost

Available technologies

Latency and cost are given as multiples of the InfiniBand figures (1x).

- Quadrics Elan 4: very low latencies (0.75x), 10 Gbps, high cost (2x); MPI, RDMA
- Myrinet 10G: very low latencies (2x), 10 Gbps, low cost (1x); MPI, TCP/UDP/IP
- InfiniBand: very low latencies (1x), 10 to 60 Gbps, low cost (1x); MPI, RDMA, uDAPL, SDP, SRP, TCP/UDP/IP
- Ethernet 10 Gbps: high latencies (10x), 10 Gbps, high cost (2x and more); MPI, TCP/UDP/IP
- Ethernet 1 Gbps: very high latencies (100x), 1 Gbps, very low cost (0.1x); TCP/UDP/IP

Quadrics and IB are used for HPC; Myrinet and 10G Ethernet for data centers.
Quadrics performs slightly better than IB for about twice the cost.

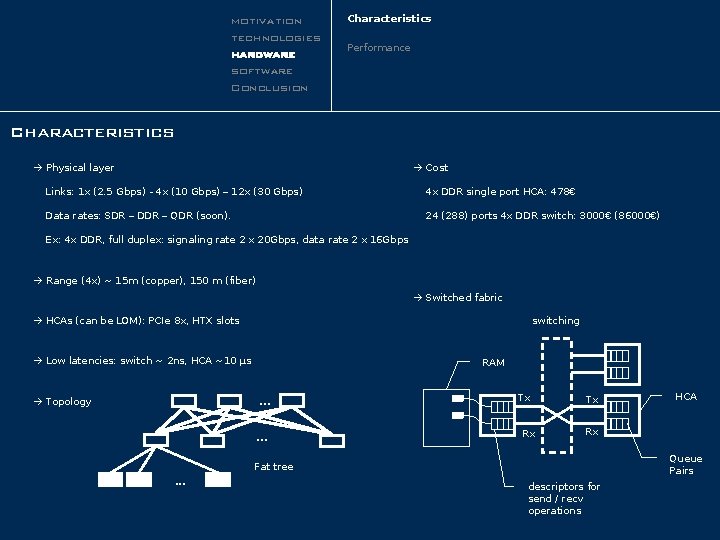

Characteristics

Physical layer
- Links: 1x (2.5 Gbps), 4x (10 Gbps), 12x (30 Gbps)
- Data rates: SDR, DDR, QDR (soon)
- Ex: 4x DDR, full duplex: signaling rate 2 x 20 Gbps, data rate 2 x 16 Gbps
- Range (4x): ~15 m (copper), 150 m (fiber)

Cost
- 4x DDR single-port HCA: 478€
- 24-port (288-port) 4x DDR switch: 3000€ (86000€)

Switched fabric
- HCAs (can be LOM): PCIe 8x, HTX slots
- Low latencies: switch ~ 2 ns, HCA ~10 µs
- Topology: fat tree...
- Queue Pairs: HCA descriptors for send / recv operations

(Diagram labels: switching, RAM, Tx, Rx)
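
The 4x DDR example above (20 Gbps signaling, 16 Gbps data per direction) follows from the 8b/10b line encoding used by SDR, DDR and QDR links: every 10 bits on the wire carry 8 bits of data. The sketch below reproduces that arithmetic. The function name and table are illustrative, and the QDR per-lane rate (10 Gbps) comes from the InfiniBand specification rather than from the slides.

```python
# Per-lane signaling rate in Gbps for each data rate.
# SDR matches the slide (1x = 2.5 Gbps); DDR doubles it;
# QDR (10 Gbps per lane) is taken from the InfiniBand spec, not the slides.
LANE_SIGNALING_GBPS = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0}


def link_rates(width, speed):
    """Return (signaling_gbps, data_gbps) for one direction of a link.

    width: number of lanes (1, 4 or 12)
    speed: "SDR", "DDR" or "QDR"
    """
    signaling = width * LANE_SIGNALING_GBPS[speed]
    # 8b/10b encoding: 8 data bits are carried by 10 signaled bits.
    data = signaling * 8 / 10
    return signaling, data


if __name__ == "__main__":
    for speed in ("SDR", "DDR", "QDR"):
        for width in (1, 4, 12):
            signaling, data = link_rates(width, speed)
            print(f"{width}x {speed}: signaling {signaling} Gbps, data {data} Gbps")
```

Full duplex doubles both figures, which gives the "2 x 20 Gbps" and "2 x 16 Gbps" quoted for 4x DDR.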

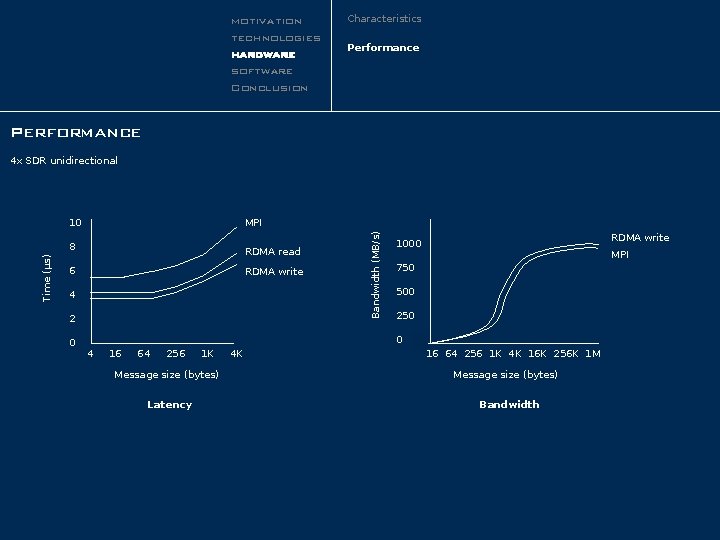

Performance

(Figure: 4x SDR, unidirectional. Latency panel: time (µs) versus message size (bytes) for MPI, RDMA read and RDMA write. Bandwidth panel: bandwidth (MB/s) versus message size (bytes) for RDMA write and MPI.)

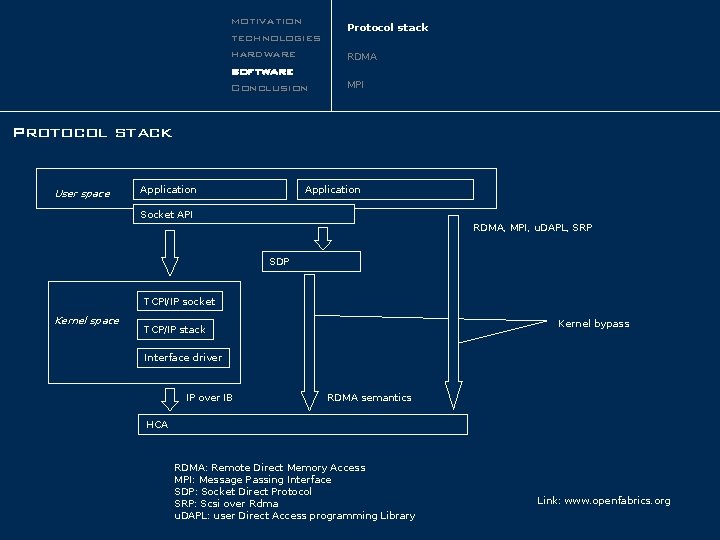

Protocol stack

(Diagram. User space: Application; Socket API; RDMA, MPI, uDAPL, SRP; SDP; TCP/IP socket. Kernel space: kernel bypass; TCP/IP stack; interface driver; IP over IB; RDMA semantics; HCA.)

- RDMA: Remote Direct Memory Access
- MPI: Message Passing Interface
- SDP: Sockets Direct Protocol
- SRP: SCSI RDMA Protocol (SCSI over RDMA)
- uDAPL: user Direct Access Programming Library

Link: www.openfabrics.org

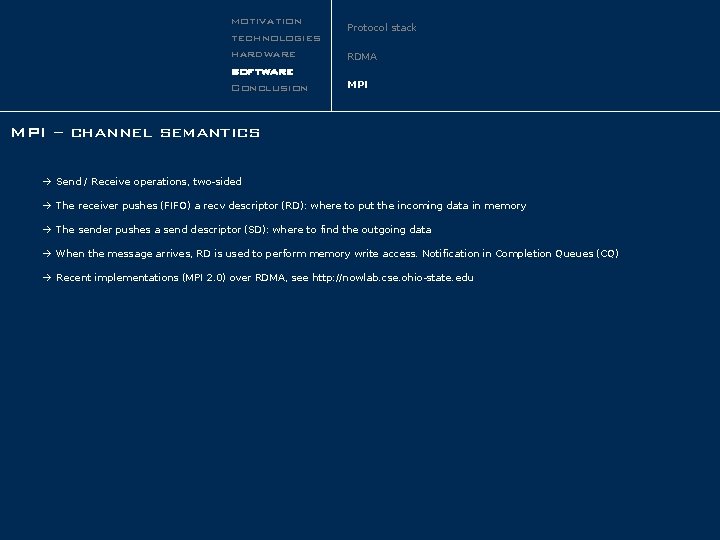

MPI: channel semantics

- Send / Receive operations, two-sided
- The receiver pushes (FIFO) a recv descriptor (RD): where to put the incoming data in memory
- The sender pushes a send descriptor (SD): where to find the outgoing data
- When the message arrives, the RD is used to perform the memory write access
- Notification in Completion Queues (CQ)
- Recent implementations (MPI 2.0) run over RDMA, see http://nowlab.cse.ohio-state.edu
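
The two-sided exchange described above can be sketched as a toy in-process model. This is not the verbs API or any MPI implementation: `QueuePair`, `post_recv`, `post_send` and `_deliver` are hypothetical names chosen for illustration, and the "network" is a direct method call.

```python
from collections import deque


class QueuePair:
    """Toy endpoint: a FIFO of receive descriptors plus a completion queue."""

    def __init__(self):
        self.recv_queue = deque()   # posted receive descriptors (RD), FIFO order
        self.completions = deque()  # completion queue (CQ) entries

    def post_recv(self, buffer):
        # Receive descriptor: where to put the incoming data.
        self.recv_queue.append(buffer)

    def post_send(self, peer, memory, offset, length):
        # Send descriptor: where to find the outgoing data.
        payload = bytes(memory[offset:offset + length])
        peer._deliver(payload)
        self.completions.append(("send", length))

    def _deliver(self, payload):
        # On arrival, the oldest posted RD decides where the data is written.
        if not self.recv_queue:
            raise RuntimeError("no receive descriptor posted")
        buffer = self.recv_queue.popleft()
        if len(payload) > len(buffer):
            raise ValueError("message larger than the posted receive buffer")
        buffer[:len(payload)] = payload
        self.completions.append(("recv", len(payload)))


if __name__ == "__main__":
    sender, receiver = QueuePair(), QueuePair()
    inbox = bytearray(16)
    receiver.post_recv(inbox)                       # receiver posts its RD first
    sender.post_send(receiver, bytearray(b"hello"), 0, 5)
    print(bytes(inbox[:5]), list(receiver.completions))
```

The point of the model is the ordering constraint: the receiver must have posted a descriptor before the message arrives, and both sides learn about completion by polling their own queue.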

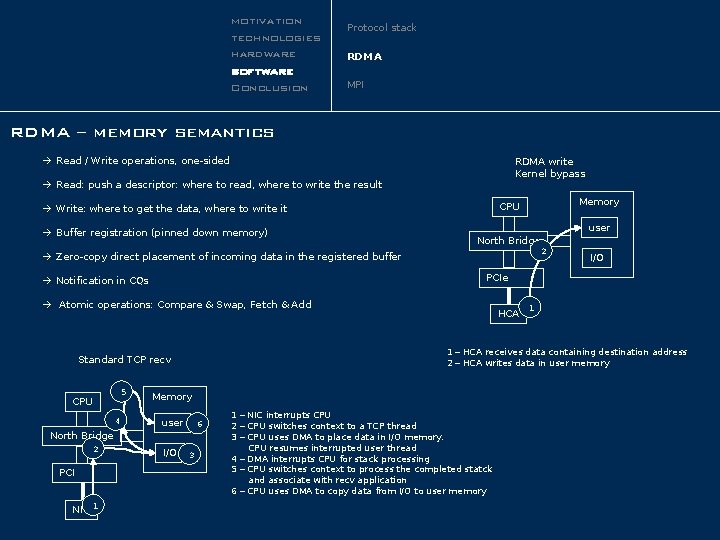

RDMA: memory semantics

- Read / Write operations, one-sided
- Kernel bypass
- Read: push a descriptor saying where to read and where to write the result
- Write: push a descriptor saying where to get the data and where to write it
- Buffer registration (pinned-down memory)
- Zero-copy: direct placement of incoming data in the registered buffer
- Notification in CQs
- Atomic operations: Compare & Swap, Fetch & Add

RDMA write (diagram: HCA, PCIe, North Bridge, user memory, CPU)
1. HCA receives data containing the destination address
2. HCA writes data in user memory

Standard TCP recv (diagram: NIC, PCI, North Bridge, I/O memory, user memory, CPU)
1. NIC interrupts CPU
2. CPU switches context to a TCP thread
3. CPU uses DMA to place data in I/O memory. CPU resumes the interrupted user thread
4. DMA interrupts CPU for stack processing
5. CPU switches context to process the completed stack and associate it with the recv application
6. CPU uses DMA to copy data from I/O to user memory
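
The one-sided operations listed above can be illustrated with a small in-process model, in which the "remote" side runs no code at all while its memory is read or written. Everything here is an assumption made for illustration: `RegisteredMemory` and the four functions are hypothetical names, not the verbs API. The atomics act on 64-bit values as InfiniBand atomics do, and the little-endian layout is an arbitrary choice for the toy.

```python
class RegisteredMemory:
    """Toy stand-in for a registered (pinned) buffer exposed to a peer."""

    def __init__(self, size):
        self.buf = bytearray(size)

    def check(self, addr, length):
        # Accesses outside the registered region are rejected.
        if addr < 0 or addr + length > len(self.buf):
            raise IndexError("access outside the registered region")


def rdma_write(local, local_addr, remote, remote_addr, length):
    """Descriptor: where to get the data (local), where to write it (remote)."""
    local.check(local_addr, length)
    remote.check(remote_addr, length)
    remote.buf[remote_addr:remote_addr + length] = \
        local.buf[local_addr:local_addr + length]


def rdma_read(local, local_addr, remote, remote_addr, length):
    """Descriptor: where to read (remote), where to write the result (local)."""
    local.check(local_addr, length)
    remote.check(remote_addr, length)
    local.buf[local_addr:local_addr + length] = \
        remote.buf[remote_addr:remote_addr + length]


def _load64(mem, addr):
    return int.from_bytes(mem.buf[addr:addr + 8], "little")


def _store64(mem, addr, value):
    mem.buf[addr:addr + 8] = (value % 2**64).to_bytes(8, "little")


def fetch_and_add(remote, addr, increment):
    """Add to a remote 64-bit value and return the value it held before."""
    remote.check(addr, 8)
    old = _load64(remote, addr)
    _store64(remote, addr, old + increment)
    return old


def compare_and_swap(remote, addr, expected, new):
    """Store `new` only if the remote value equals `expected`; return the old value."""
    remote.check(addr, 8)
    old = _load64(remote, addr)
    if old == expected:
        _store64(remote, addr, new)
    return old
```

In real hardware the HCA performs these accesses and guarantees atomicity; the model only shows the addressing and return-value conventions, for instance using `fetch_and_add` on a shared counter or `compare_and_swap` to take a lock word.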

Conclusion

- IB/RDMA is well suited for HPC at reasonable cost
- MPI implementations (MVAPICH) have now reached good performance levels
- Compatibility with Berkeley-style sockets: don't expect too much on InfiniHost III
- ConnectX: TCP/IP protocol CPU offload in hardware
