Using Vector Capabilities of GPUs to Accelerate FFT

Vasily Volkov and Brian Kazian, CS 258, Spring 2008

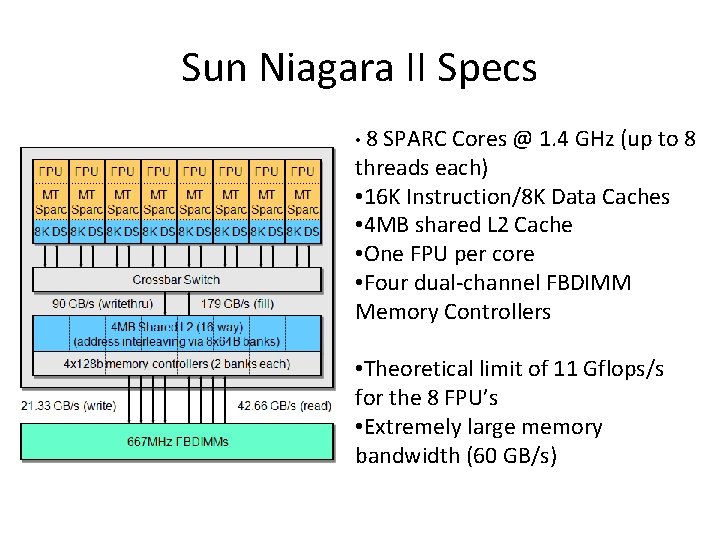

Sun Niagara II Specs
• 8 SPARC cores @ 1.4 GHz (up to 8 threads each)
• 16 KB instruction / 8 KB data caches
• 4 MB shared L2 cache
• One FPU per core
• Four dual-channel FBDIMM memory controllers
• Theoretical limit of 11 GFlops/s for the 8 FPUs
• Extremely large memory bandwidth (60 GB/s)
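The 11 GFlops/s limit follows from the core count and clock rate listed above. A minimal sketch of that arithmetic, assuming each FPU retires one floating-point operation per cycle (the slides do not state this; it is the value that reproduces their figure). The function names are illustrative only.

```c
#include <assert.h>

/* Peak rate in GFlops/s: number of FPUs x clock (GHz) x flops per cycle.
   flops_per_cycle = 1 is an assumption, not a figure from the slides. */
static double peak_gflops(int fpus, double clock_ghz, double flops_per_cycle)
{
    assert(fpus > 0 && clock_ghz > 0.0 && flops_per_cycle > 0.0);
    return fpus * clock_ghz * flops_per_cycle;
}

/* Memory bandwidth available per floating-point operation, in bytes. */
static double bytes_per_flop(double bandwidth_gbs, double gflops)
{
    assert(gflops > 0.0);
    return bandwidth_gbs / gflops;
}
```

With the slide's numbers, `peak_gflops(8, 1.4, 1.0)` gives 11.2, and `bytes_per_flop(60.0, 11.2)` gives roughly 5.4 bytes of bandwidth per flop, which is why the bandwidth is described as extremely large.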

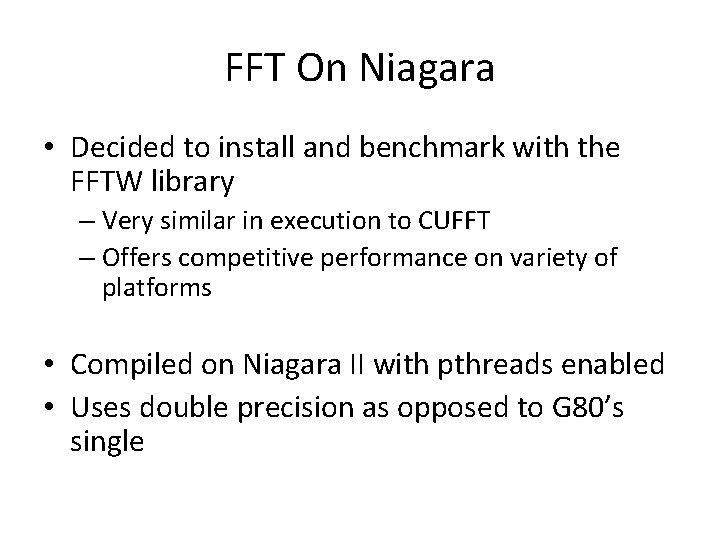

FFT on Niagara
• Decided to install and benchmark with the FFTW library
  – Very similar in execution to CUFFT
  – Offers competitive performance on a variety of platforms
• Compiled on Niagara II with pthreads enabled
• Uses double precision, as opposed to the G80's single precision

[Figure: Single FFT Comparison, CUDA vs Niagara II, single 1D FFT. x-axis: FFT size; y-axis: GFlops/s (0 to 30); series: CUDA, Niagara II.]
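The plots report throughput in GFlops/s, but the slides do not say how operations were counted. A common convention, used by FFTW's own benchmark, charges 5 N log2 N floating-point operations to a complex transform of size N. The sketch below assumes that convention and a power-of-two size; the function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Integer log2 for a power-of-two size. */
static unsigned ilog2_pow2(size_t n)
{
    assert(n > 0 && (n & (n - 1)) == 0);
    unsigned k = 0;
    while (n > 1) {
        n >>= 1;
        k++;
    }
    return k;
}

/* Nominal GFlops/s of one complex FFT of size n that took `seconds`,
   using the assumed 5 * N * log2(N) operation count. */
static double fft_gflops(size_t n, double seconds)
{
    assert(seconds > 0.0);
    double flops = 5.0 * (double)n * (double)ilog2_pow2(n);
    return flops / seconds / 1e9;
}
```

For example, a 1024-point transform that takes 51.2 microseconds counts as 51,200 operations, so `fft_gflops(1024, 51.2e-6)` is about 1 GFlops/s.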

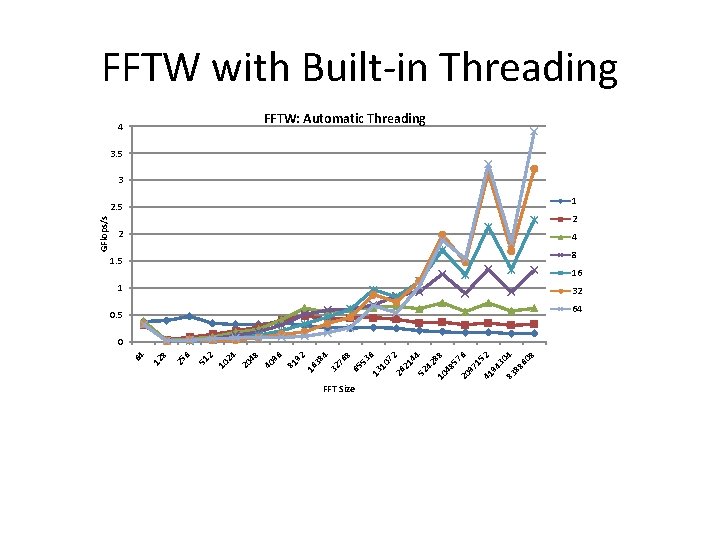

[Figure: FFTW with Built-in Threading (FFTW: Automatic Threading). x-axis: FFT size; y-axis: GFlops/s (0 to 3.5); legend: 1, 2, 4, 8, 16, 32, 64.]

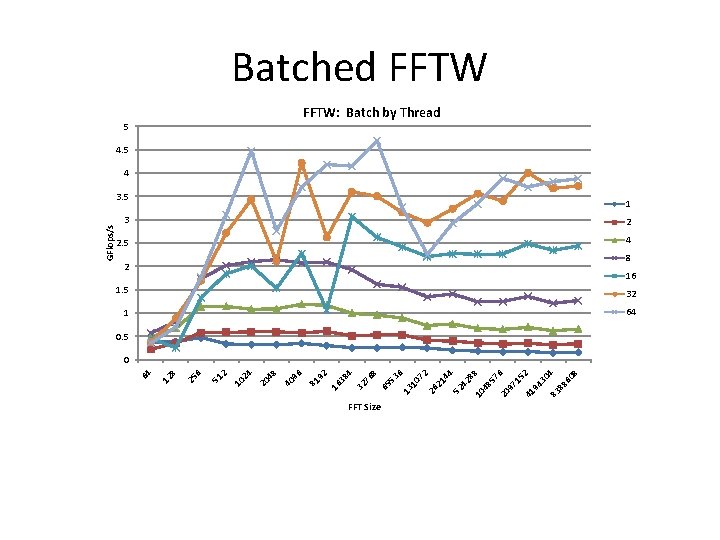

[Figure: Batched FFTW (Batch by Thread). x-axis: FFT size; y-axis: GFlops/s (0 to 4.5); legend: 1, 2, 4, 8, 16, 32, 64.]
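Batching by thread means each thread runs whole, independent transforms instead of cooperating on one. The slides do not show how the batch was divided, so the following is only a sketch of one plausible scheme, a static block partition; `struct range` and `batch_slice` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>

/* Half-open range [begin, end) of batch indices owned by one worker. */
struct range {
    size_t begin;
    size_t end;
};

/* Split n_batches independent FFTs across n_workers threads as evenly as
   possible; the first (n_batches % n_workers) workers get one extra. */
static struct range batch_slice(size_t n_batches, size_t n_workers,
                                size_t worker)
{
    assert(n_workers > 0 && worker < n_workers);
    size_t base = n_batches / n_workers;
    size_t extra = n_batches % n_workers;
    struct range r;
    r.begin = worker * base + (worker < extra ? worker : extra);
    r.end = r.begin + base + (worker < extra ? 1 : 0);
    return r;
}
```

Each worker thread would then execute the transforms in its own range with no synchronization until the final join, since the transforms share no data.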

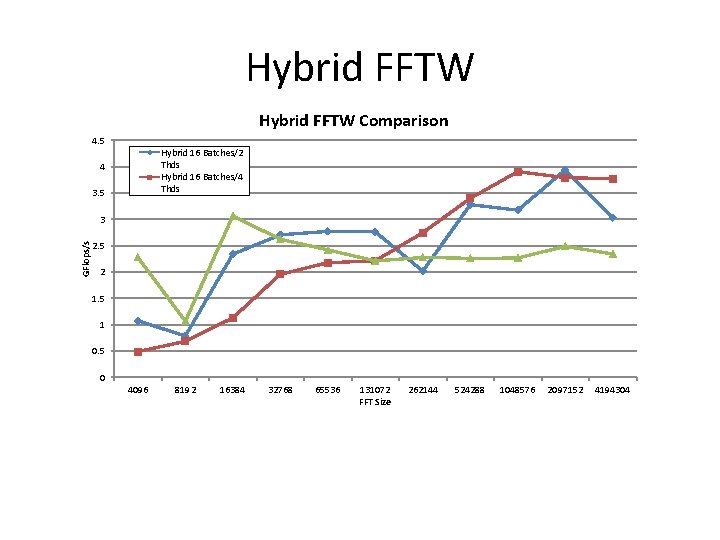

[Figure: Hybrid FFTW Comparison. x-axis: FFT size (4096 to 4194304); y-axis: GFlops/s (0 to 4.5); series: Hybrid 16 Batches/2 Thds, Hybrid 16 Batches/4 Thds.]
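The hybrid runs combine the two earlier approaches. Reading "16 Batches/4 Thds" as 16 transforms in flight with 4 threads cooperating on each is an assumption; the slides do not spell it out. Under that reading, the sketch below relates a configuration to the 8 x 8 hardware thread contexts from the spec slide. All names are hypothetical.

```c
#include <assert.h>

/* Hardware thread contexts from the spec slide: 8 cores x 8 threads. */
enum { NIAGARA2_HW_THREADS = 8 * 8 };

/* Assumed meaning of a hybrid configuration. */
struct hybrid_cfg {
    int batches;         /* independent FFTs in flight */
    int threads_per_fft; /* threads cooperating on each FFT */
};

/* Total software threads the configuration asks for. */
static int hybrid_total_threads(struct hybrid_cfg c)
{
    assert(c.batches > 0 && c.threads_per_fft > 0);
    return c.batches * c.threads_per_fft;
}

/* Map a flat thread id to the index of the FFT it works on. */
static int hybrid_batch_of(struct hybrid_cfg c, int tid)
{
    assert(tid >= 0 && tid < hybrid_total_threads(c));
    return tid / c.threads_per_fft;
}
```

Under this interpretation, 16 batches with 2 threads each occupy 32 of the 64 hardware contexts, while 16 batches with 4 threads each occupy all 64.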

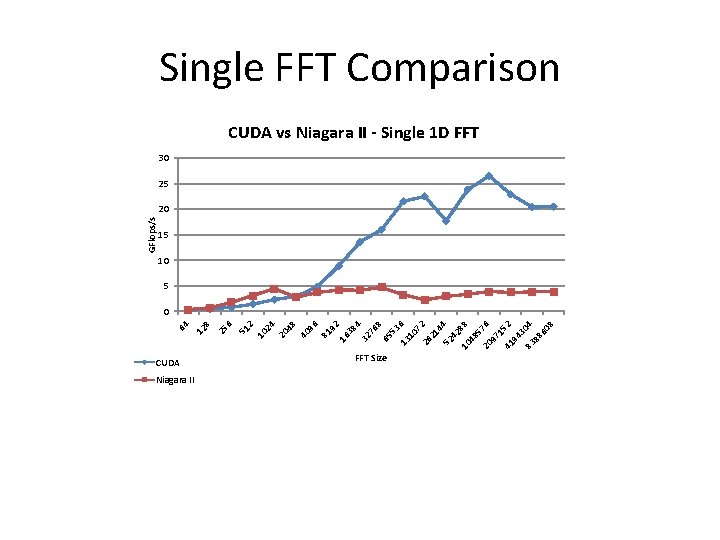

Results
• Found that the hybrid approach gave the best results
  – Tune thread count for problem size
• Limited by the number of threads in comparison to CUDA
• Issues with data alignment in cache
• Not stellar performance out of the box with FFTW
