
Studies in Parallel & Distributed Systems (159.735) – Parallel Computing Using FPGAs (Field Programmable Gate Arrays)

Sohaib Ahmed, 15th May 2009

Outline

- FPGAs and their internal structures
- Why use FPGAs for parallel computing?
- Types of FPGAs
- Application Examples and Processing in Applications
- FPGAs in Parallel Computing
- FPGA Limitations
- Design Methods for FPGAs
- Conclusion

FPGAs - Introduction

- Ross Freeman, one of the founders of Xilinx (www.xilinx.com), invented the FPGA in the mid-1980s
- Other vendors include Altera, Actel, Lattice Semiconductor and Atmel
- FPGAs support the notion of reconfigurable computing
- Reconfigurable computing
  - Uses multiple reconfigurable devices (such as FPGAs) together with multiple microprocessors
  - The processor(s) execute sequential and non-critical code, while the reconfigurable fabric (FPGAs) executes the code that can be mapped efficiently to hardware

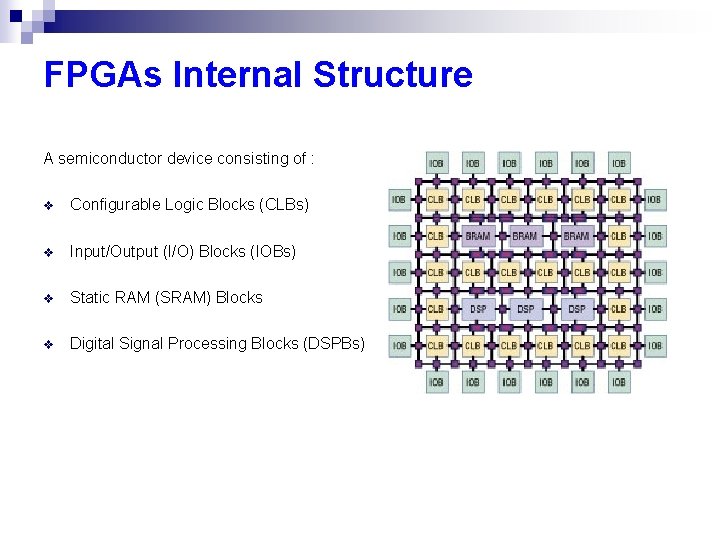

FPGAs Internal Structure

An FPGA is a semiconductor device consisting of:

- Configurable Logic Blocks (CLBs)
- Input/Output (I/O) Blocks (IOBs)
- Static RAM (SRAM) Blocks
- Digital Signal Processing Blocks (DSPBs)

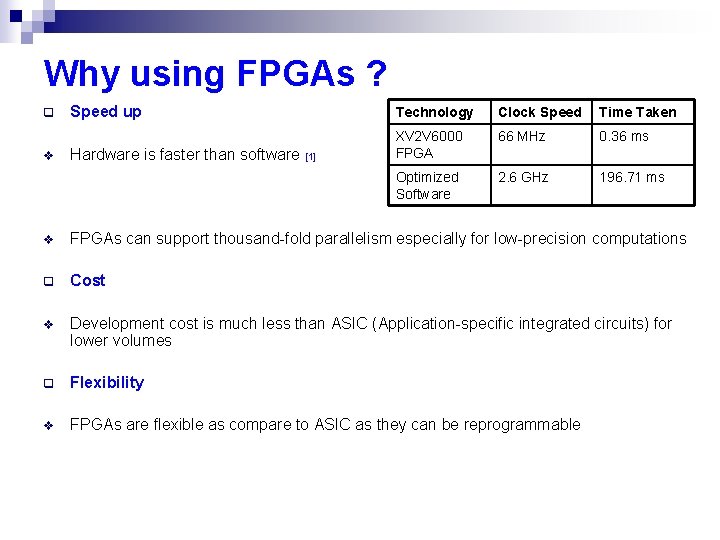

Why use FPGAs?

- Speed-up: hardware is faster than software [1]

| Technology | Clock Speed | Time Taken |
|---|---|---|
| XC2V6000 FPGA | 66 MHz | 0.36 ms |
| Optimized Software | 2.6 GHz | 196.71 ms |

- FPGAs can support thousand-fold parallelism, especially for low-precision computations
- Cost: development cost is much lower than for ASICs (application-specific integrated circuits) at lower volumes
- Flexibility: FPGAs are more flexible than ASICs because they can be reprogrammed
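As a rough worked example, the timing figures quoted above from [1] can be turned into a speed-up factor. The sketch below uses only the four numbers on the slide; the variable and function names are illustrative, and the "work per cycle" ratio is a back-of-the-envelope indicator of parallelism, not a measured quantity.

```python
# Figures quoted on the slide (attributed there to reference [1]).
fpga_clock_hz = 66e6      # FPGA clock: 66 MHz
fpga_time_ms = 0.36       # FPGA time taken
cpu_clock_hz = 2.6e9      # optimized software on a 2.6 GHz processor
cpu_time_ms = 196.71      # software time taken


def speedup(slow_ms: float, fast_ms: float) -> float:
    """Return how many times faster the fast implementation is."""
    return slow_ms / fast_ms


# Wall-clock speed-up of the FPGA over the optimized software.
wall_clock_speedup = speedup(cpu_time_ms, fpga_time_ms)

# The CPU clock is much faster, so the FPGA must do far more work per cycle.
clock_ratio = cpu_clock_hz / fpga_clock_hz
work_per_cycle_ratio = wall_clock_speedup * clock_ratio

print(f"wall-clock speed-up: {wall_clock_speedup:.1f}x")
print(f"CPU/FPGA clock ratio: {clock_ratio:.1f}x")
print(f"implied work per cycle ratio: {work_per_cycle_ratio:.0f}x")
```

The wall-clock speed-up comes out at roughly 546 times even though the FPGA clock is about 39 times slower, which is only possible because the hardware performs many operations in parallel on every cycle.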

Types of FPGAs

- CPLDs (Complex Programmable Logic Devices)
  - Require voltage levels that are not usually present on computer systems
- Anti-fuse-based devices
  - Can be programmed only once
- Static-RAM-based devices
  - Can be programmed while the device is running

Application Examples

- Xilinx devices
  - Virtex-II Pro
  - Virtex-4
- Recent success of FPGAs in the Tsubame cluster in Tokyo
  - Improved performance by an additional 25%

![Processing in Applications [2]](http://slidetodoc.com/presentation_image_h/22a36d723c8af7e6f1611272aa470667/image-8.jpg)

Processing in Applications [2]

FPGAs in Parallel Computing

- Dynamic matching of a node to the computational requirements of an application
- Application-specific computers become more flexible
- Enables support for multiple modes of parallel computing: MIMD, SIMD, etc.
- Partial reconfiguration can allow better utilization of hardware resources
- Dynamic task allocation schemes can be extended to allow dynamic hardware allocation
- Support for variable grain size

FPGA Limitations

- Capacity
  - Logic blocks are a less dense representation of a computation than instructions
  - A conventional processor runs the 90% of the code that takes 10% of the execution time
  - Reconfigurable logic takes the 10% of the code that takes 90% of the execution time
- Tools
  - Compilers for reconfigurable logic are not very good
  - Some operations are hard to implement on FPGAs, such as random access and pointer-based data structures

![Design Methods for FPGA [3]](http://slidetodoc.com/presentation_image_h/22a36d723c8af7e6f1611272aa470667/image-11.jpg)

Design Methods for FPGA [3]

- Use an algorithm optimal for FPGAs
  - Systolic arrays for correlation are efficient
- Use a computing mode appropriate for FPGAs
  - Streaming, systolic, and arrays of fine-grained automata are preferable
  - Searching biomedical databases for similar sequences
- Use appropriate FPGA structures
  - Analyzing DNA or protein sequences
  - A straightforward systolic array
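To make the systolic idea above concrete, here is a minimal software sketch of a linear array that scores a streamed sequence against a fixed pattern. It is an illustration under stated assumptions, not the design from [3]: each cell is assumed to hold one pattern symbol, the stream shifts one cell per clock tick, and the per-cell comparisons that an FPGA would perform simultaneously are simulated by a loop. The function name `systolic_match_scores` is hypothetical.

```python
def systolic_match_scores(pattern: str, stream: str) -> list[int]:
    """Simulate a linear array of len(pattern) cells.

    On each tick the stream shifts one cell to the right and every cell
    compares its stored pattern symbol with the stream symbol it currently
    holds. Returns the number of matching cells for each full alignment.
    """
    k = len(pattern)
    cells = [None] * k          # stream symbol currently held by each cell
    scores = []
    for tick, symbol in enumerate(stream):
        # Shift register: the new symbol enters cell 0, the oldest falls off.
        cells = [symbol] + cells[:-1]
        if tick >= k - 1:       # the array is full from this tick onward
            # In hardware all k comparisons happen in the same cycle.
            # cells[k-1] is the oldest symbol, so it lines up with pattern[0].
            score = sum(
                1 for i in range(k) if cells[k - 1 - i] == pattern[i]
            )
            scores.append(score)
    return scores


scores = systolic_match_scores("ACG", "TACGA")
print(scores)  # [0, 3, 0]: the pattern matches fully at the second alignment
```

Once the array is full, one alignment score is produced per tick regardless of the pattern length, which is why this structure suits streaming searches over long sequences.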

![Design Methods for FPGA [3]](http://slidetodoc.com/presentation_image_h/22a36d723c8af7e6f1611272aa470667/image-12.jpg)

Design Methods for FPGA [3]

- Living with Amdahl's Law
  - Speeding up an application significantly through an enhancement requires most of the application to be enhanced
  - NAMD & ProtoMol: framework designed for computational experimentation
- Hide latency of independent functions
  - Latency hiding is a basic technique for achieving high performance in parallel applications
  - Functions on the same chip can operate in parallel
- Use rate-matching to remove bottlenecks
  - Function-level parallelism is built in
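The Amdahl's Law point above can be checked numerically. The sketch below uses the standard formula, speed-up = 1 / ((1 - f) + f / s), where f is the fraction of run time that is accelerated and s is the speed-up of that fraction; the function name and the sample values are illustrative assumptions, not figures from the slides.

```python
def amdahl_speedup(accelerated_fraction: float, kernel_speedup: float) -> float:
    """Overall speed-up when only part of the run time is accelerated."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if kernel_speedup <= 0.0:
        raise ValueError("kernel_speedup must be positive")
    serial_part = 1.0 - accelerated_fraction
    accelerated_part = accelerated_fraction / kernel_speedup
    return 1.0 / (serial_part + accelerated_part)


# Hypothetical case: the FPGA kernel itself runs 100x faster.
for fraction in (0.5, 0.9, 0.99):
    overall = amdahl_speedup(fraction, 100.0)
    print(f"{fraction:.0%} of run time accelerated -> {overall:.2f}x overall")
```

Even with a 100-fold kernel speed-up, accelerating 90% of the run time yields only a little over 9 times overall, which is why most of the application has to be moved to the FPGA before the gain becomes large.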

![Design Methods for FPGA [3]](http://slidetodoc.com/presentation_image_h/22a36d723c8af7e6f1611272aa470667/image-13.jpg)

Design Methods for FPGA [3]

- Take advantage of FPGA-specific hardware
  - Hard-wired components such as integer multipliers and independently accessible BRAMs (Block RAMs)
  - The Xilinx VP100 has 400 independently accessible, 32-bit, quad-ported BRAMs, which can help achieve 20 terabytes per second at capacity
- Use appropriate arithmetic precision
- Use appropriate arithmetic mode
- Minimize use of high-cost arithmetic operations
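The guideline on arithmetic precision above can be illustrated with a small fixed-point sketch. This is an assumption-laden example rather than anything taken from [3]: it assumes values are stored as integers with a chosen number of fractional bits, and the helper names `to_fixed`, `from_fixed` and `quantization_error` are hypothetical.

```python
def to_fixed(value: float, frac_bits: int) -> int:
    """Quantize a real value to an integer with frac_bits fractional bits."""
    return int(round(value * (1 << frac_bits)))


def from_fixed(raw: int, frac_bits: int) -> float:
    """Convert a fixed-point integer back to a real value."""
    return raw / (1 << frac_bits)


def quantization_error(value: float, frac_bits: int) -> float:
    """Absolute error introduced by the round trip through fixed point."""
    return abs(value - from_fixed(to_fixed(value, frac_bits), frac_bits))


# Fewer fractional bits mean narrower datapaths but a larger rounding error.
for bits in (4, 8, 12):
    err = quantization_error(0.7, bits)
    bound = 2.0 ** -(bits + 1)   # rounding to nearest is at most half a step off
    print(f"{bits:2d} fractional bits: error {err:.6f} (bound {bound:.6f})")
```

Choosing the smallest width whose error the application can tolerate leaves more logic free for additional parallel units.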

Current Progress in Hardware & Software

- SRC-6 and SRC-7 are parallel architectures built around a crossbar switch that can be stacked for scalability
- High-performance computing vendors such as Silicon Graphics Inc. (SGI), Cray and Linux Networx have incorporated FPGAs into their parallel architectures [4]
- VHDL and Verilog are used to create hardware kernels
- Other languages such as Carte C, Carte Fortran, Impulse C, Mitrion C and Handel-C are also used to describe hardware
- Annapolis Micro Systems' CoreFire, Starbridge Systems' Viva, Xilinx System Generator and DSPlogic's Reconfigurable Computing Toolbox are high-level graphical programming development tools [5]

Conclusion

Using FPGAs in parallel computing offers the following benefits:

- Application acceleration
- Flexibility in terms of application domain
- Potential cost benefits over ASICs
- The ability to exploit variable levels and modes of parallelism
- More effective use of hardware resources

![References](http://slidetodoc.com/presentation_image_h/22a36d723c8af7e6f1611272aa470667/image-16.jpg)

References

[1] Todman, T. J., Constantinides, G. A., Wilton, S. J. E., Mencer, O., Luk, W. & Cheung, P. Y. K. (2005). Reconfigurable computing: architectures and design methods.

[2] Altera Corporation White Paper (2007). Accelerating high performance computing with FPGAs. October 2007.

[3] Herbordt, M. C., VanCourt, T., Gu, Y., Sukhwani, B., Conti, A., Model, J. & DiSabello, D. (2007). Achieving high performance with FPGA-based computing.

[4] Buell, D., El-Ghazawi, T., Gaj, K. & Kindratenko, V. (2007). High-performance reconfigurable computing. IEEE Computer Society, March 2007.

[5] El-Ghazawi, T., El-Araby, E., Huang, M., Gaj, K., Kindratenko, V. & Buell, D. (2008). The promise of high-performance reconfigurable computing. IEEE Computer Society, February 2008, pp. 69-76.

Any Questions? Thank You
