Semantic Analysis: Syntax-Driven Semantics CS 4705

Semantics and Syntax
• Some representations of meaning for "The cat howls at the moon."
  – Logic: howl(cat, moon)
  – Frames: Event: howling; Agent: cat; Patient: moon
• How do we decide what we want to represent?
  – Entities, categories, events, time, aspect
  – Predicates, arguments (constants, variables)
  – And… quantifiers, operators (e.g. temporal)
• Today: How can we compute meaning about these categories from these representations?

Compositional Semantics
• Assumption: The meaning of the whole is composed of the meanings of its parts
  – George cooks. Dan eats. Dan is sick.
  – Cook(George) Eat(Dan) Sick(Dan)
  – If George cooks and Dan eats, Dan will get sick.
    (Cook(George) ^ Eat(Dan)) → Sick(Dan)
• Meaning derives from
  – The people and activities represented (predicates and arguments, or, nouns and verbs)
  – The way they are ordered and related: the syntax of the representation, which may also reflect the syntax of the sentence

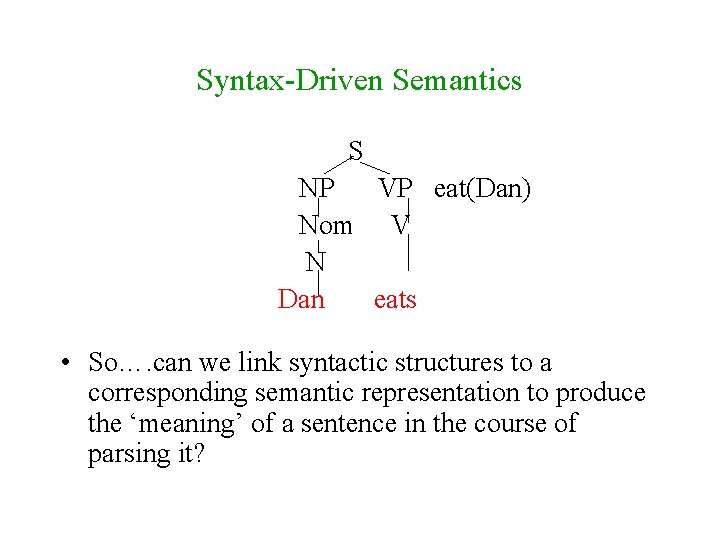

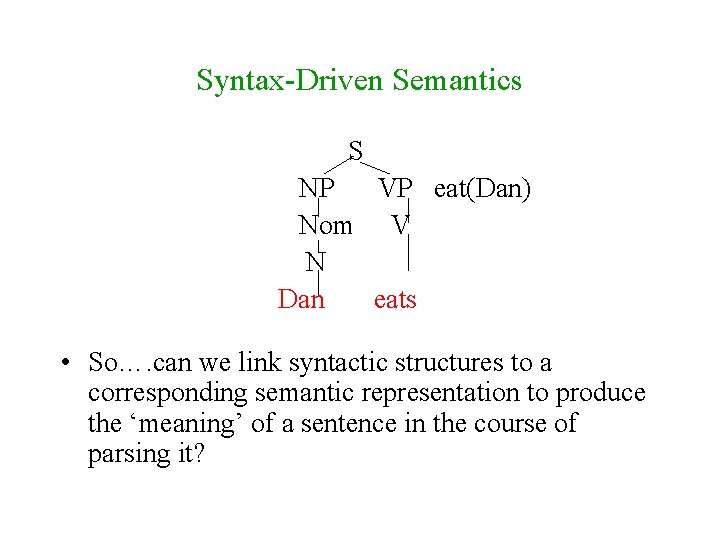

Syntax-Driven Semantics
[Parse tree: S → NP VP, NP → Nom → N → Dan, VP → V → eats; the S node is annotated with eat(Dan)]
• So… can we link syntactic structures to a corresponding semantic representation to produce the ‘meaning’ of a sentence in the course of parsing it?

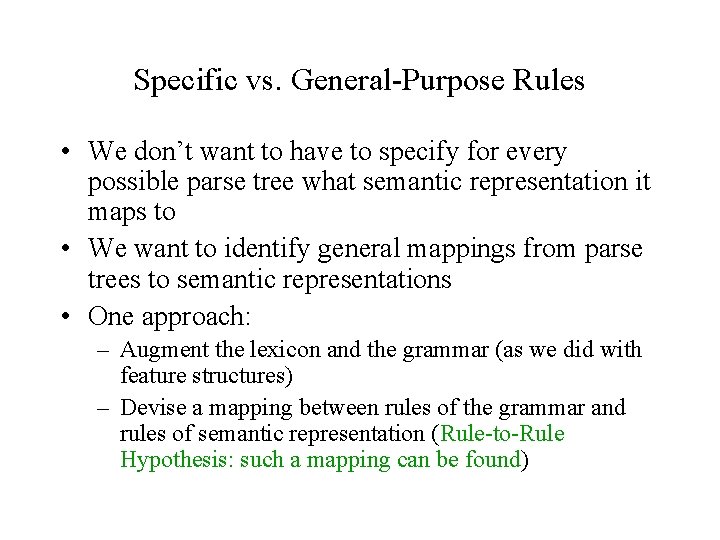

Specific vs. General-Purpose Rules
• We don’t want to have to specify for every possible parse tree what semantic representation it maps to
• We want to identify general mappings from parse trees to semantic representations
• One approach:
  – Augment the lexicon and the grammar (as we did with feature structures)
  – Devise a mapping between rules of the grammar and rules of semantic representation (Rule-to-Rule Hypothesis: such a mapping can be found)

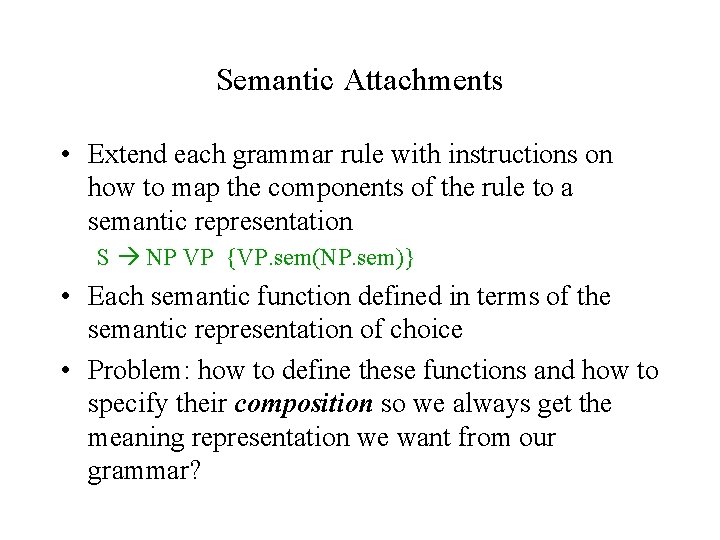

Semantic Attachments
• Extend each grammar rule with instructions on how to map the components of the rule to a semantic representation
  S → NP VP {VP.sem(NP.sem)}
• Each semantic function is defined in terms of the semantic representation of choice
• Problem: how to define these functions and how to specify their composition so we always get the meaning representation we want from our grammar?

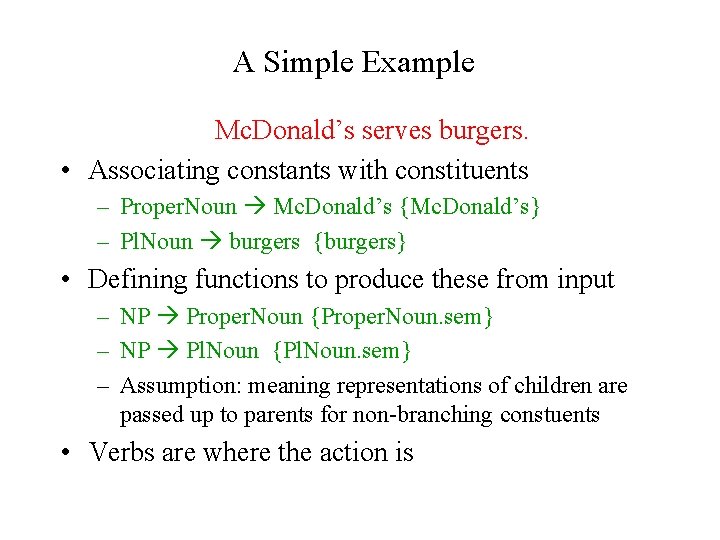

A Simple Example
McDonald’s serves burgers.
• Associating constants with constituents
  – ProperNoun → McDonald’s {McDonald’s}
  – PlNoun → burgers {burgers}
• Defining functions to produce these from input
  – NP → ProperNoun {ProperNoun.sem}
  – NP → PlNoun {PlNoun.sem}
  – Assumption: meaning representations of children are passed up to parents for non-branching constituents
• Verbs are where the action is

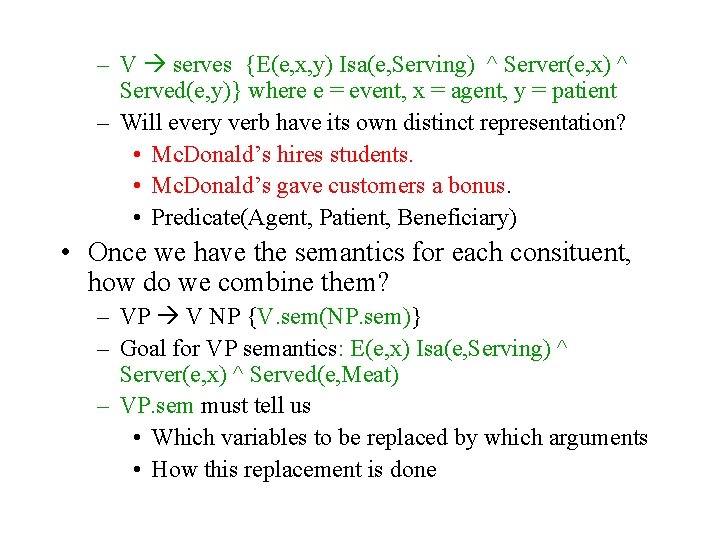

  – V → serves {∃e,x,y Isa(e, Serving) ^ Server(e, x) ^ Served(e, y)} where e = event, x = agent, y = patient
  – Will every verb have its own distinct representation?
    • McDonald’s hires students.
    • McDonald’s gave customers a bonus.
    • Predicate(Agent, Patient, Beneficiary)
• Once we have the semantics for each constituent, how do we combine them?
  – VP → V NP {V.sem(NP.sem)}
  – Goal for VP semantics: ∃e,x Isa(e, Serving) ^ Server(e, x) ^ Served(e, burgers)
  – VP.sem must tell us
    • Which variables are to be replaced by which arguments
    • How this replacement is done

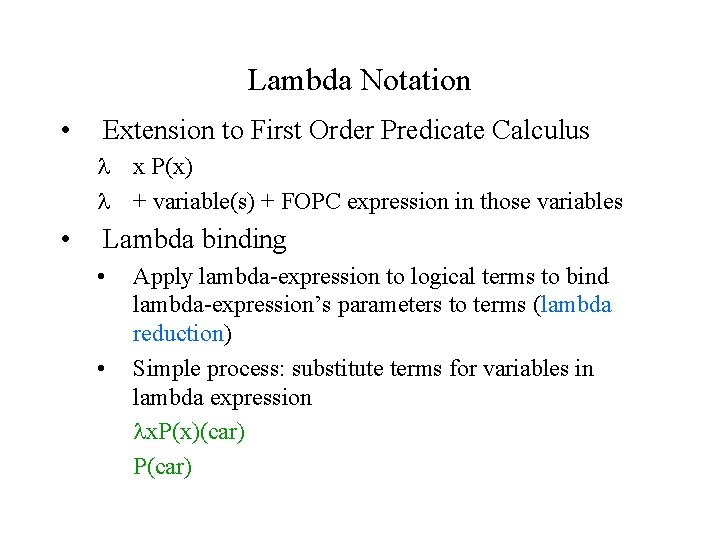

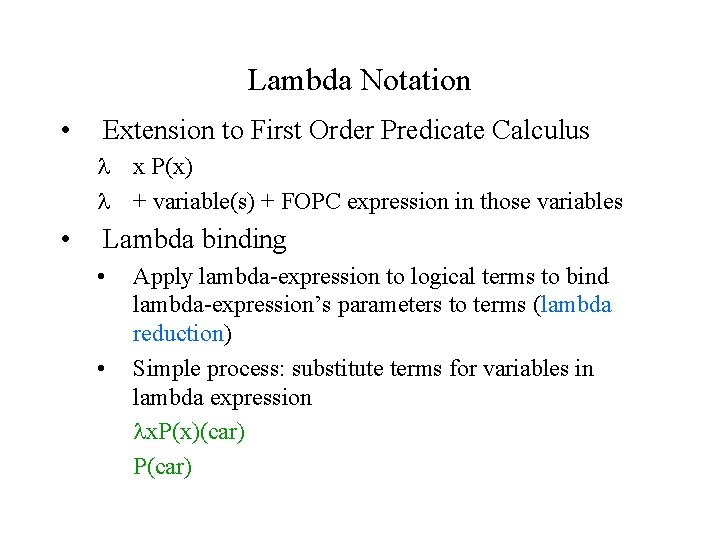

Lambda Notation
• Extension to First Order Predicate Calculus: λx P(x)
  – λ + variable(s) + FOPC expression in those variables
• Lambda binding
  – Apply the lambda-expression to logical terms to bind the lambda-expression’s parameters to those terms (lambda reduction)
  – Simple process: substitute terms for variables in the lambda expression
    λx P(x)(car) ⇒ P(car)
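
Lambda reduction maps closely onto function application in a programming language. A minimal Python sketch, assuming formulas are represented as plain strings (the helper `P` is a hypothetical stand-in for an arbitrary one-place predicate):

```python
def P(term):
    """Hypothetical one-place predicate: builds the formula P(term) as a string."""
    return f"P({term})"

# λx P(x): a function whose parameter x is waiting to be bound
lambda_expr = lambda x: P(x)

# λx P(x)(car): applying the function substitutes 'car' for x (lambda reduction)
reduced = lambda_expr("car")
print(reduced)  # P(car)
```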

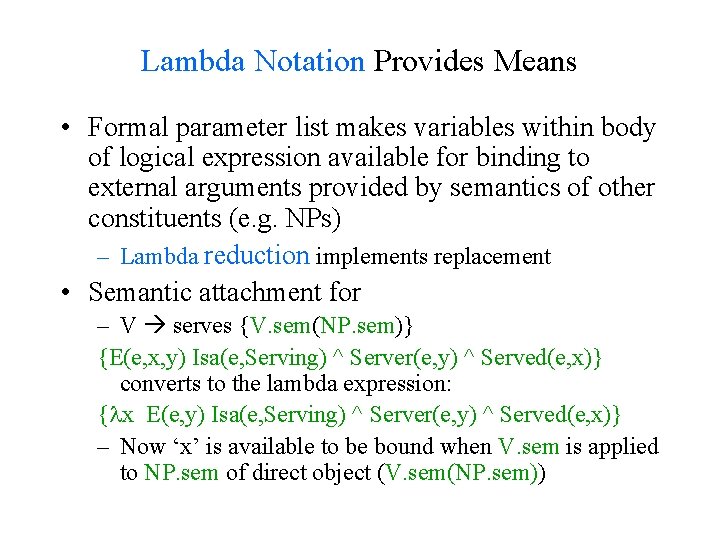

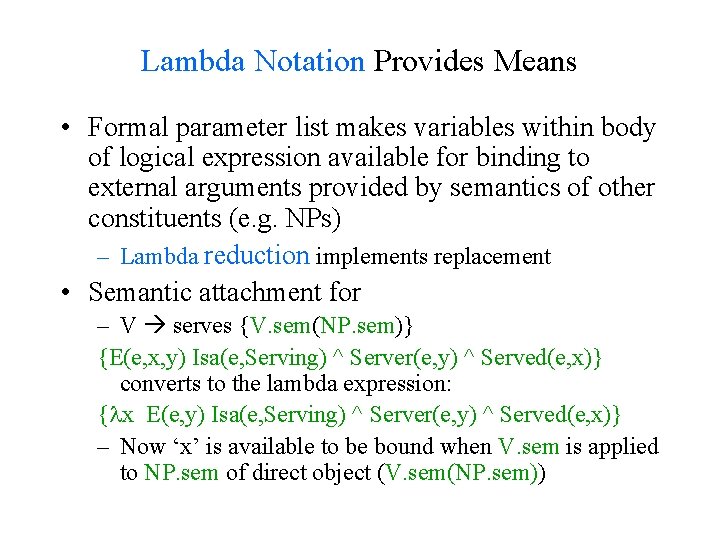

Lambda Notation Provides Means
• The formal parameter list makes variables within the body of the logical expression available for binding to external arguments provided by the semantics of other constituents (e.g. NPs)
  – Lambda reduction implements the replacement
• Semantic attachment for V → serves, as used in {V.sem(NP.sem)}:
  {∃e,x,y Isa(e, Serving) ^ Server(e, y) ^ Served(e, x)}
  converts to the lambda expression:
  {λx ∃e,y Isa(e, Serving) ^ Server(e, y) ^ Served(e, x)}
  – Now ‘x’ is available to be bound when V.sem is applied to the NP.sem of the direct object (V.sem(NP.sem))

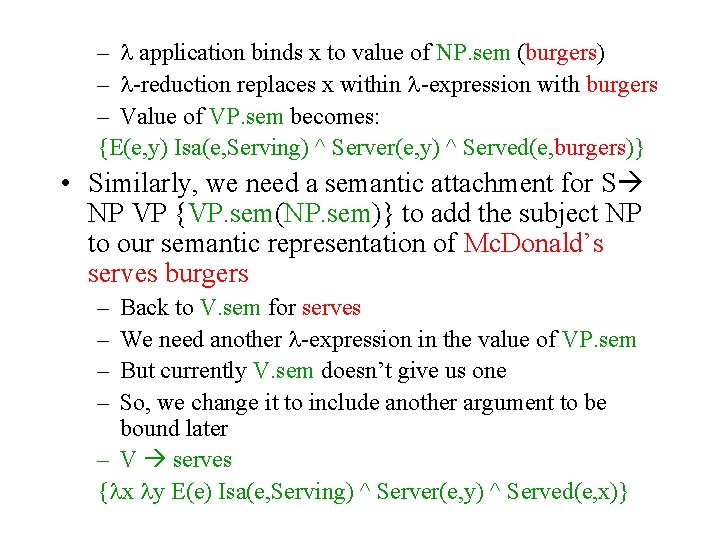

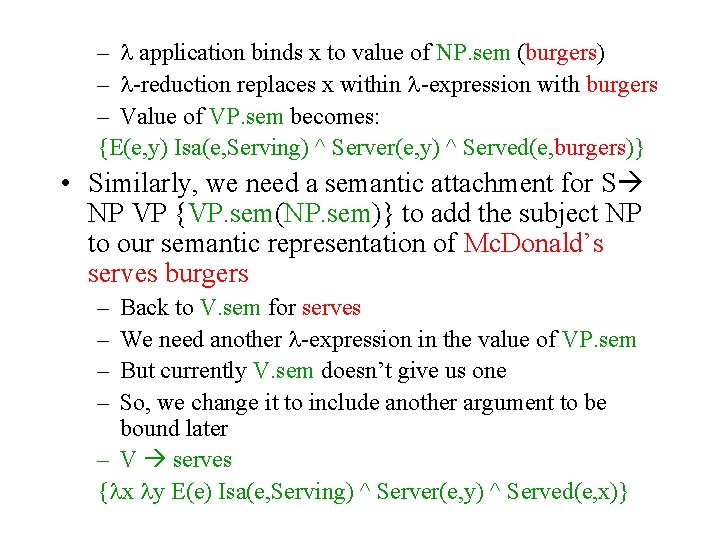

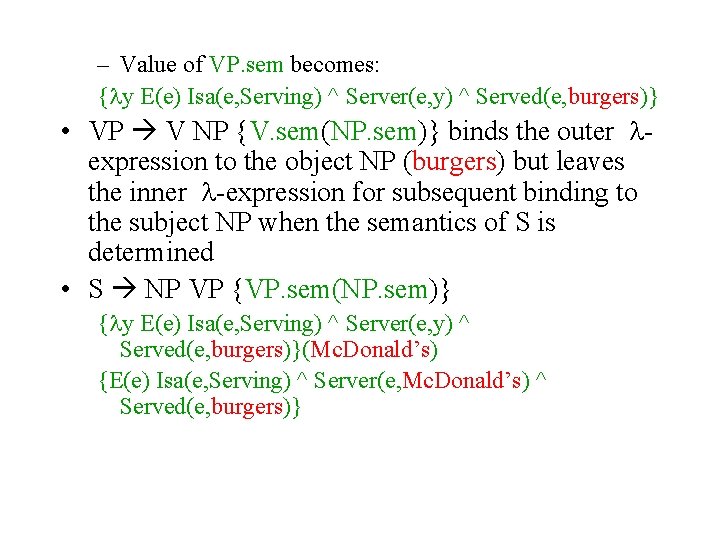

  – λ-application binds x to the value of NP.sem (burgers)
  – λ-reduction replaces x within the λ-expression with burgers
  – Value of VP.sem becomes: {∃e,y Isa(e, Serving) ^ Server(e, y) ^ Served(e, burgers)}
• Similarly, we need a semantic attachment for S → NP VP {VP.sem(NP.sem)} to add the subject NP to our semantic representation of McDonald’s serves burgers
  – Back to V.sem for serves
  – We need another λ-expression in the value of VP.sem
  – But currently V.sem doesn’t give us one
  – So, we change it to include another argument to be bound later
  – V → serves {λx λy ∃e Isa(e, Serving) ^ Server(e, y) ^ Served(e, x)}

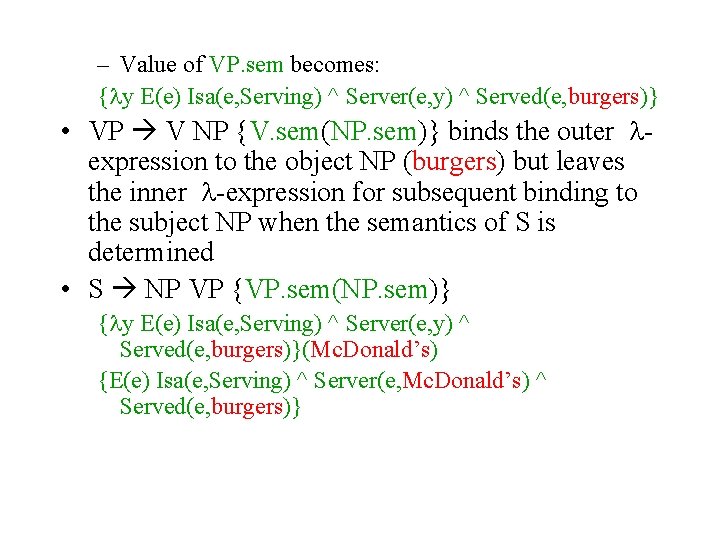

  – Value of VP.sem becomes: {λy ∃e Isa(e, Serving) ^ Server(e, y) ^ Served(e, burgers)}
• VP → V NP {V.sem(NP.sem)} binds the outer λ-expression to the object NP (burgers) but leaves the inner λ-expression for subsequent binding to the subject NP when the semantics of S is determined
• S → NP VP {VP.sem(NP.sem)}
  {λy ∃e Isa(e, Serving) ^ Server(e, y) ^ Served(e, burgers)}(McDonald’s)
  ⇒ {∃e Isa(e, Serving) ^ Server(e, McDonald’s) ^ Served(e, burgers)}
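
The two-step binding can be sketched with a curried Python function. This is only an illustration, assuming formulas are plain strings; the names `serves_sem`, `vp_sem` and `s_sem` are made up for the example:

```python
def serves_sem(x):
    """V.sem for 'serves': λx λy ∃e Isa(e, Serving) ^ Server(e, y) ^ Served(e, x)."""
    def awaiting_subject(y):
        # Inner λy: bound later, when the subject NP becomes available
        return f"∃e Isa(e, Serving) ^ Server(e, {y}) ^ Served(e, {x})"
    return awaiting_subject

object_np_sem = "burgers"
subject_np_sem = "McDonald's"

# VP → V NP {V.sem(NP.sem)}: binds the outer λx to the object
vp_sem = serves_sem(object_np_sem)

# S → NP VP {VP.sem(NP.sem)}: binds the remaining λy to the subject
s_sem = vp_sem(subject_np_sem)
print(s_sem)
# ∃e Isa(e, Serving) ^ Server(e, McDonald's) ^ Served(e, burgers)
```

Note that `vp_sem` is itself still a function, which mirrors the point that VP.sem must remain a λ-expression until the subject arrives.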

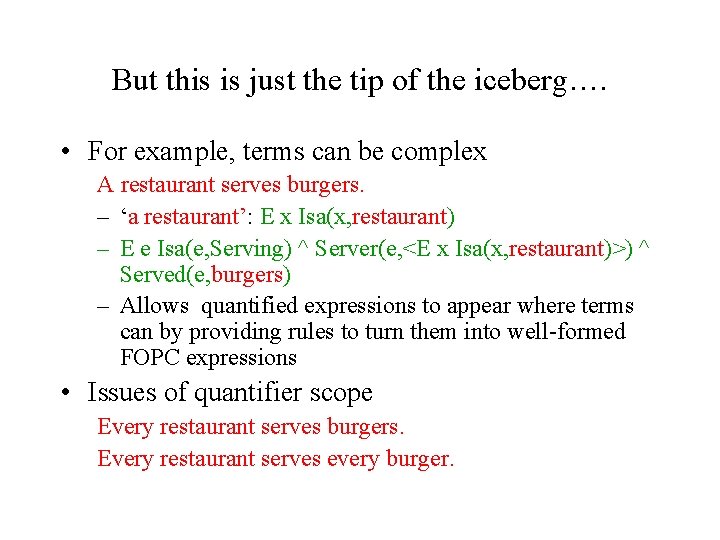

But this is just the tip of the iceberg….
• For example, terms can be complex: A restaurant serves burgers.
  – ‘a restaurant’: ∃x Isa(x, restaurant)
  – ∃e Isa(e, Serving) ^ Server(e, <∃x Isa(x, restaurant)>) ^ Served(e, burgers)
  – Allows quantified expressions to appear where terms can, by providing rules to turn them into well-formed FOPC expressions
• Issues of quantifier scope
  – Every restaurant serves burgers.
  – Every restaurant serves every burger.
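
A hedged worked example of what such rules might produce. It assumes the common textbook scheme in which a complex term <Quantifier variable body> is pulled out in front of the predicate containing it, joined with ^ for ∃ and with → for ∀. The last two formulas are illustrative scopings for "Every restaurant serves burgers" and are not taken from the slides.

```latex
\begin{align*}
% "A restaurant serves burgers": pull the complex term out of Server(e, <...>)
&\exists e\; \mathit{Isa}(e, \mathit{Serving}) \land \mathit{Server}(e, \langle \exists x\; \mathit{Isa}(x, \mathit{Restaurant}) \rangle) \land \mathit{Served}(e, \mathit{Burgers}) \\
&\quad\Rightarrow\; \exists x\; \mathit{Isa}(x, \mathit{Restaurant}) \land \exists e\; \mathit{Isa}(e, \mathit{Serving}) \land \mathit{Server}(e, x) \land \mathit{Served}(e, \mathit{Burgers}) \\[1ex]
% "Every restaurant serves burgers", reading 1: each restaurant has its own serving event
&\forall x\; \mathit{Isa}(x, \mathit{Restaurant}) \rightarrow \exists e\; \big(\mathit{Isa}(e, \mathit{Serving}) \land \mathit{Server}(e, x) \land \mathit{Served}(e, \mathit{Burgers})\big) \\
% Reading 2: one serving event shared by every restaurant
&\exists e\; \big(\mathit{Isa}(e, \mathit{Serving}) \land \forall x\, (\mathit{Isa}(x, \mathit{Restaurant}) \rightarrow \mathit{Server}(e, x)) \land \mathit{Served}(e, \mathit{Burgers})\big)
\end{align*}
```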

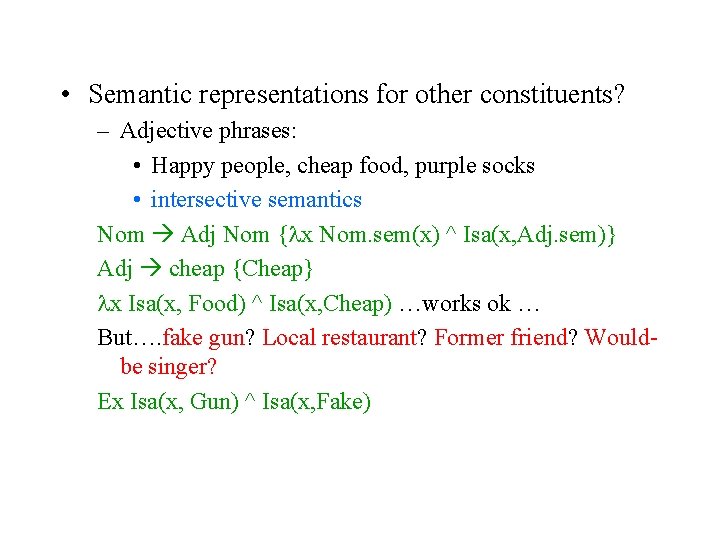

• Semantic representations for other constituents?
  – Adjective phrases:
    • Happy people, cheap food, purple socks
    • Intersective semantics
      Nom → Adj Nom {λx Nom.sem(x) ^ Isa(x, Adj.sem)}
      Adj → cheap {Cheap}
      λx Isa(x, Food) ^ Isa(x, Cheap) … works OK …
    • But… fake gun? Local restaurant? Former friend? Would-be singer?
      ∃x Isa(x, Gun) ^ Isa(x, Fake)
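
A small Python sketch of the intersective rule, assuming string-valued formulas; `isa` and `adj_nom` are hypothetical helper names. It also shows why the rule goes wrong for non-intersective adjectives.

```python
def isa(category):
    """Build the one-place predicate λx Isa(x, category) as a Python function."""
    return lambda x: f"Isa({x}, {category})"

def adj_nom(adj_sem, nom_sem):
    """Nom → Adj Nom {λx Nom.sem(x) ^ Isa(x, Adj.sem)}"""
    return lambda x: f"{nom_sem(x)} ^ Isa({x}, {adj_sem})"

cheap_food = adj_nom("Cheap", isa("Food"))
print(cheap_food("x"))  # Isa(x, Food) ^ Isa(x, Cheap)

# The same rule applied to a non-intersective adjective still asserts
# Isa(x, Gun), although a fake gun is not a gun.
fake_gun = adj_nom("Fake", isa("Gun"))
print(fake_gun("x"))    # Isa(x, Gun) ^ Isa(x, Fake)
```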

Doing Compositional Semantics
• To incorporate semantics into grammar we must
  – Figure out the right representation for each constituent based on the parts of that constituent (e.g. Adj)
  – Figure out the right representation for a category of constituents based on other grammar rules, making use of that constituent (e.g. V.sem)
• This gives us a set of function-like semantic attachments incorporated into our CFG
  – E.g. Nom → Adj Nom {λx Nom.sem(x) ^ Isa(x, Adj.sem)}

What do we do with them?
• As we did with feature structures:
  – Alter, e.g., an Earley-style parser so that when constituents are completed (dot at the end of the rule), the attached semantic function is applied and a meaning representation is created and stored with the state
• Or, let the parser run to completion and then walk through the resulting tree, running semantic attachments bottom-up

Option 1 (Integrated Semantic Analysis)
S → NP VP {VP.sem(NP.sem)}
  – VP.sem has been stored in the state representing VP
  – NP.sem is stored with the state for NP
  – When the rule is completed, retrieve the values of VP.sem and NP.sem, and apply VP.sem to NP.sem
  – Store the result in S.sem
• As fragments of input are parsed, semantic fragments are created
• Can be used to block ambiguous representations
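
A rough sketch of the completion step only, not of a full Earley parser. The `State` class and `complete` function are hypothetical names, and the example reuses the earlier "Dan eats" sentence.

```python
from dataclasses import dataclass
from typing import Any

@dataclass
class State:
    """A completed chart entry: a category plus the meaning stored with it."""
    category: str
    sem: Any = None

def complete(lhs, children, attachment):
    """Build the parent state and run the rule's semantic attachment right away."""
    child_sems = [child.sem for child in children]
    return State(lhs, attachment(*child_sems))

# Meanings already stored with the completed NP and VP states
np_state = State("NP", "Dan")
vp_state = State("VP", lambda subject: f"Eat({subject})")

# S → NP VP {VP.sem(NP.sem)}: apply VP.sem to NP.sem and store the result in S.sem
s_state = complete("S", [np_state, vp_state], lambda np_sem, vp_sem: vp_sem(np_sem))
print(s_state.sem)  # Eat(Dan)
```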

Drawback
• You also perform semantic analysis on orphaned constituents that play no role in the final parse
• Case for pipelined approach: Do semantics after syntactic parse
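
The pipelined alternative can be sketched as a bottom-up walk over a finished parse tree. This is an illustration under assumptions: the tree is hand-built rather than produced by a parser, and the `LEXICON` and `RULES` tables and the `interpret` function are hypothetical names.

```python
# Word meanings: constants for nouns, a curried function for the verb
LEXICON = {
    "McDonald's": "McDonald's",
    "burgers": "burgers",
    "serves": lambda x: lambda y: f"∃e Isa(e, Serving) ^ Server(e, {y}) ^ Served(e, {x})",
}

# Semantic attachments, keyed by (parent category, child categories)
RULES = {
    ("S", ("NP", "VP")): lambda np, vp: vp(np),   # {VP.sem(NP.sem)}
    ("VP", ("V", "NP")): lambda v, np: v(np),     # {V.sem(NP.sem)}
    ("NP", ("ProperNoun",)): lambda n: n,         # {ProperNoun.sem}
    ("NP", ("PlNoun",)): lambda n: n,             # {PlNoun.sem}
}

def interpret(tree):
    """Compute the meaning of a (category, child, ...) tuple bottom-up."""
    category, *children = tree
    if len(children) == 1 and isinstance(children[0], str):
        return LEXICON[children[0]]               # preterminal: look up the word
    child_sems = [interpret(child) for child in children]
    child_cats = tuple(child[0] for child in children)
    return RULES[(category, child_cats)](*child_sems)

tree = ("S",
        ("NP", ("ProperNoun", "McDonald's")),
        ("VP", ("V", "serves"), ("NP", ("PlNoun", "burgers"))))
meaning = interpret(tree)
print(meaning)
# ∃e Isa(e, Serving) ^ Server(e, McDonald's) ^ Served(e, burgers)
```

Because the attachments only run on the final tree, no work is spent on constituents that the parser built but later discarded.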

Non-Compositional Language
• What do we do with language whose meaning isn’t derived from the meanings of its parts?
  – Non-compositional modifiers: fake, former, local
  – Metaphor:
    • You’re the cream in my coffee. She’s the cream in George’s coffee.
    • The break-in was just the tip of the iceberg. This was only the tip of Shirley’s iceberg.
  – Idioms:
    • The old man finally kicked the bucket. The old man finally kicked the proverbial bucket.
  – Deferred reference: The ham sandwich wants his check.
• Solutions? Mix lexical items with special grammar rules? Or???

Summing Up
• Hypothesis: Principle of Compositionality
  – Semantics of NL sentences and phrases can be composed from the semantics of their subparts
• Rules can be derived which map syntactic analysis to semantic representation (Rule-to-Rule Hypothesis)
  – Lambda notation provides a way to extend FOPC to this end
  – But coming up with rule-to-rule mappings is hard
• Idioms, metaphors perplex the process

Next
• Read Ch. 16
• Homework 2 assigned
  – START NOW!!