Scaling the Bandwidth Wall: Challenges in and Avenues for CMP Scalability

36th International Symposium on Computer Architecture

Brian Rogers†‡, Anil Krishna†‡, Gordon Bell‡, Ken Vu‡, Xiaowei Jiang†, Yan Solihin†

† NC STATE UNIVERSITY ‡

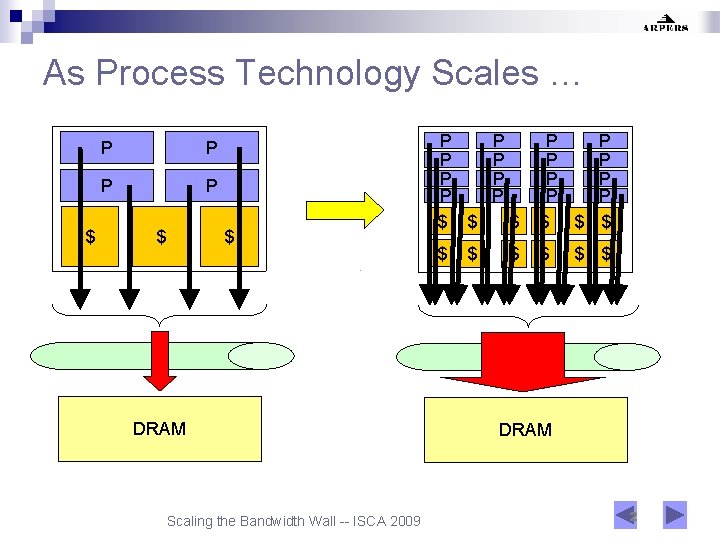

As Process Technology Scales …

[Figure: two chip diagrams built from cores (P), caches ($), and off-chip DRAM; the second diagram has more cores and caches]

Scaling the Bandwidth Wall -- ISCA 2009

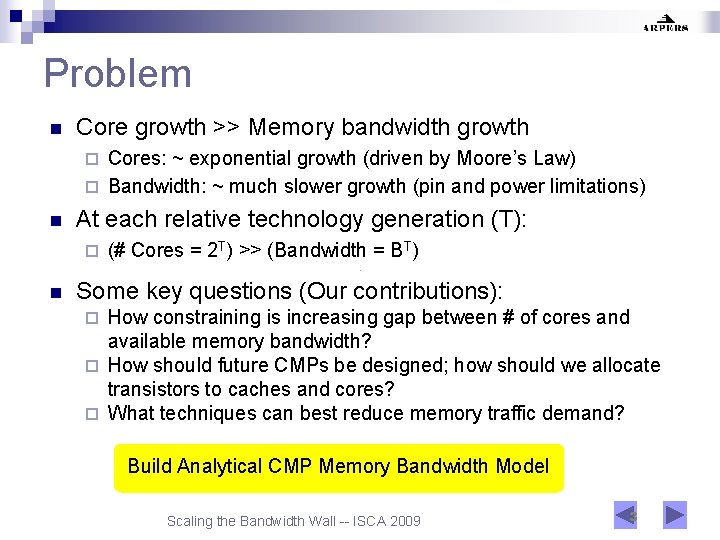

Problem

• Core growth >> Memory bandwidth growth
  - Cores: ~ exponential growth (driven by Moore's Law)
  - Bandwidth: ~ much slower growth (pin and power limitations)
• At each relative technology generation (T):
  - (# Cores = $2^T$) >> (Bandwidth = $B^T$)
• Some key questions (Our contributions):
  - How constraining is the increasing gap between # of cores and available memory bandwidth?
  - How should future CMPs be designed; how should we allocate transistors to caches and cores?
  - What techniques can best reduce memory traffic demand?
• Build Analytical CMP Memory Bandwidth Model
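To make the gap concrete, the short sketch below compares the two growth terms from the slide, $2^T$ for cores and $B^T$ for bandwidth. The function name and the example value B = 1.1 are illustrative assumptions, not figures from the talk.

```python
def core_to_bandwidth_gap(generations: int, bandwidth_growth: float) -> float:
    """Return (2^T) / (B^T): how far core count outpaces bandwidth after T generations."""
    cores = 2.0 ** generations                       # cores double each generation
    bandwidth = bandwidth_growth ** generations      # bandwidth grows by factor B each generation
    return cores / bandwidth


if __name__ == "__main__":
    # B = 1.1 is a hypothetical per-generation bandwidth growth factor.
    for t in range(5):
        print(t, round(core_to_bandwidth_gap(t, bandwidth_growth=1.1), 2))
```

Any B below 2 makes the ratio grow exponentially with T, which is the mismatch the slide points at.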

Agenda

• Background / Motivation
• Assumptions / Scope
• CMP Memory Traffic Model
• Alternate Views of Model
• Memory Traffic Reduction Techniques
  - Indirect
  - Dual
• Conclusions

Assumptions / Scope

• Homogeneous cores
• Single-threaded cores (multi-threading adds to problem)
• Co-scheduled sequential applications
  - Multi-threaded apps with data sharing evaluated separately
• Enough work to keep all cores busy
• Workloads static across technology generations
• Equal amount of cache per core
• Power/Energy constraints outside scope of this study

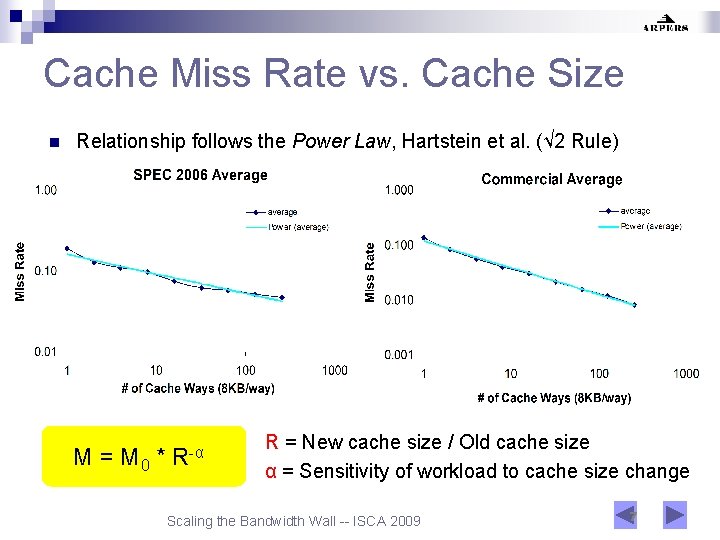

Cache Miss Rate vs. Cache Size

• Relationship follows the Power Law, Hartstein et al. (√2 Rule)

$$M = M_0 \cdot R^{-\alpha}$$

• R = New cache size / Old cache size
• α = Sensitivity of workload to cache size change
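As a quick numeric check of the power law, the sketch below evaluates $M = M_0 \cdot R^{-\alpha}$ directly. The function name and the 10% starting miss rate are illustrative assumptions; with α = 0.5, doubling the cache divides the miss rate by √2, which is where the "√2 Rule" name comes from.

```python
def scaled_miss_rate(base_miss_rate: float, size_ratio: float, alpha: float = 0.5) -> float:
    """Apply M = M0 * R^(-alpha), where R = new cache size / old cache size."""
    if size_ratio <= 0:
        raise ValueError("size_ratio must be positive")
    return base_miss_rate * size_ratio ** (-alpha)


if __name__ == "__main__":
    m0 = 0.10  # hypothetical miss rate of the original cache
    for r in (1.0, 2.0, 4.0):
        print(f"R={r}: miss rate = {scaled_miss_rate(m0, r):.4f}")
```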

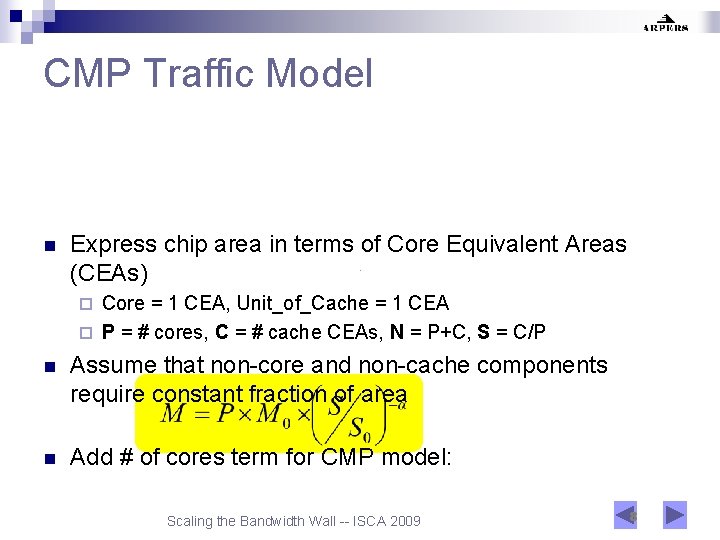

CMP Traffic Model

• Express chip area in terms of Core Equivalent Areas (CEAs)
  - Core = 1 CEA, Unit_of_Cache = 1 CEA
  - P = # cores, C = # cache CEAs, N = P+C, S = C/P
• Assume that non-core and non-cache components require constant fraction of area
• Add # of cores term for CMP model:

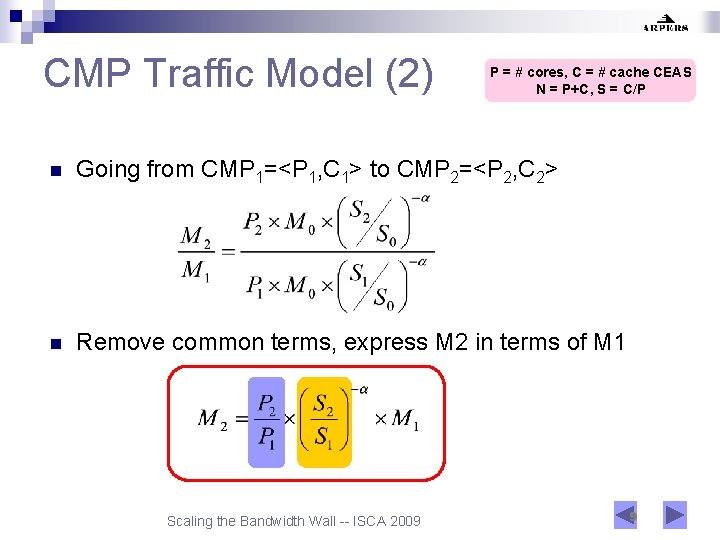

CMP Traffic Model (2)

P = # cores, C = # cache CEAs, N = P+C, S = C/P

• Going from $\mathrm{CMP}_1 = \langle P_1, C_1 \rangle$ to $\mathrm{CMP}_2 = \langle P_2, C_2 \rangle$
• Remove common terms, express $M_2$ in terms of $M_1$
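The equations for this step live in the slide image. One derivation consistent with the definitions above is sketched here, assuming that total memory traffic is the per-core power-law term multiplied by the number of cores. The symbols $M_0$ (per-core traffic at a reference cache size) and $S_0$ (that reference cache size per core) are introduced only for illustration.

```latex
\begin{align*}
M_1 &= P_1 \, M_0 \left(\frac{S_1}{S_0}\right)^{-\alpha}, &
M_2 &= P_2 \, M_0 \left(\frac{S_2}{S_0}\right)^{-\alpha} \\[4pt]
\frac{M_2}{M_1} &= \frac{P_2}{P_1} \left(\frac{S_2}{S_1}\right)^{-\alpha}
&\Longrightarrow\quad
M_2 &= \frac{P_2}{P_1} \left(\frac{S_2}{S_1}\right)^{-\alpha} M_1
\end{align*}
```

The common terms $M_0$ and $S_0$ cancel in the ratio, so only the change in core count and in cache per core remains.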

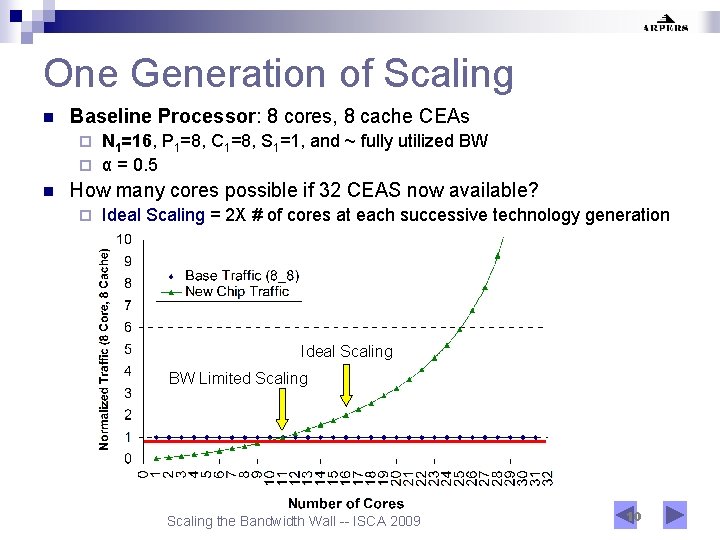

One Generation of Scaling

• Baseline Processor: 8 cores, 8 cache CEAs
  - $N_1 = 16$, $P_1 = 8$, $C_1 = 8$, $S_1 = 1$, and ~ fully utilized BW
  - α = 0.5
• How many cores possible if 32 CEAs now available?
  - Ideal Scaling = 2X # of cores at each successive technology generation

[Figure labels: Ideal Scaling, BW Limited]
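The baseline above can be plugged into a small sketch of the traffic model, assuming the relative-traffic form $M_2/M_1 = (P_2/P_1)(S_2/S_1)^{-\alpha}$ with $S = C/P$. The function name and the 11-core trial configuration are illustrative choices, not values read from the slide's figure.

```python
def traffic_ratio(p1: float, c1: float, p2: float, c2: float, alpha: float = 0.5) -> float:
    """Relative memory traffic M2/M1 when moving from <P1, C1> to <P2, C2>."""
    s1 = c1 / p1  # cache CEAs per core, baseline
    s2 = c2 / p2  # cache CEAs per core, new design
    return (p2 / p1) * (s2 / s1) ** (-alpha)


if __name__ == "__main__":
    # Ideal scaling: 32 CEAs split as 16 cores + 16 cache CEAs.
    print("16 cores:", traffic_ratio(8, 8, 16, 16))
    # Trial configuration: 11 cores + 21 cache CEAs out of the same 32 CEAs.
    print("11 cores:", traffic_ratio(8, 8, 11, 21))
```

Under these assumptions, doubling the cores doubles the traffic, while the 11-core split keeps traffic just under the baseline level.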

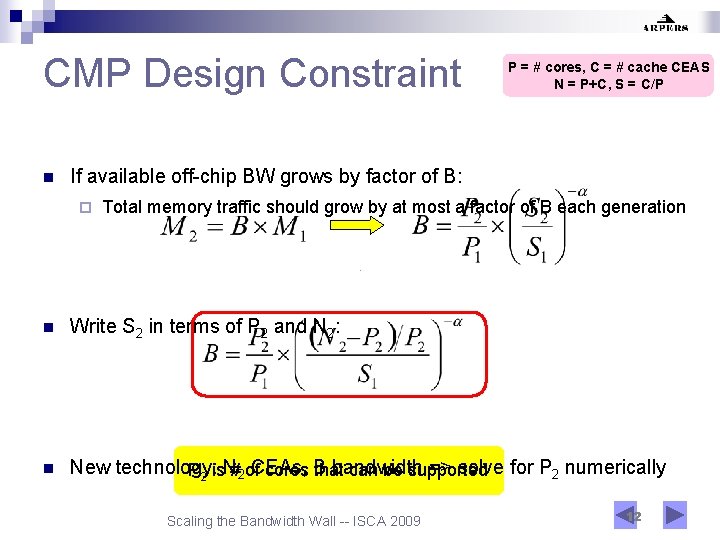

CMP Design Constraint

P = # cores, C = # cache CEAs, N = P+C, S = C/P

• If available off-chip BW grows by factor of B:
  - Total memory traffic should grow by at most a factor of B each generation
• Write $S_2$ in terms of $P_2$ and $N_2$: since $N = P + C$ and $S = C/P$, $S_2 = (N_2 - P_2)/P_2$
• New technology: $N_2$ CEAs, bandwidth growth B => solve for $P_2$ numerically
  - $P_2$ is the # of cores that can be supported
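One way to carry out the numerical solve is bisection, sketched below. It assumes the traffic constraint takes the form $(P_2/P_1)(S_2/S_1)^{-\alpha} \le B$ with $S_2 = (N_2 - P_2)/P_2$, and treats $P_2$ as a continuous quantity. The function names, tolerance, and choice of bisection are illustrative; the slide says only that $P_2$ is found numerically.

```python
def traffic_ratio(p1: float, n1: float, p2: float, n2: float, alpha: float) -> float:
    """Relative traffic M2/M1, with cache per core S = (N - P) / P."""
    s1 = (n1 - p1) / p1
    s2 = (n2 - p2) / p2
    return (p2 / p1) * (s2 / s1) ** (-alpha)


def max_supported_cores(p1: float, n1: float, n2: float,
                        bandwidth_growth: float = 1.0,
                        alpha: float = 0.5, tol: float = 1e-9) -> float:
    """Largest (fractional) P2 whose traffic stays within B times the baseline."""
    if not 0 < p1 < n1:
        raise ValueError("baseline needs at least some core area and some cache area")
    lo, hi = tol, n2 - tol  # keep S2 finite and positive at both ends
    # Traffic rises monotonically with P2, so bisection finds the crossing point.
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if traffic_ratio(p1, n1, mid, n2, alpha) <= bandwidth_growth:
            lo = mid
        else:
            hi = mid
    return lo


if __name__ == "__main__":
    # Baseline of 8 cores on 16 CEAs, scaled to 32 CEAs at constant bandwidth.
    print(max_supported_cores(p1=8, n1=16, n2=32, bandwidth_growth=1.0))
```

Calling the solver with larger `n2` values sweeps die area, and raising `bandwidth_growth` shows how much extra off-chip bandwidth relaxes the limit.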

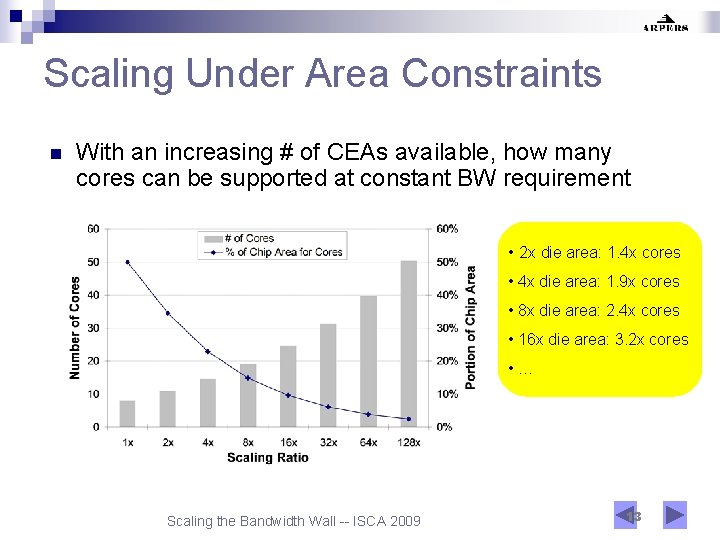

Scaling Under Area Constraints

• With an increasing # of CEAs available, how many cores can be supported at constant BW requirement?
  • 2x die area: 1.4x cores
  • 4x die area: 1.9x cores
  • 8x die area: 2.4x cores
  • 16x die area: 3.2x cores
  • …

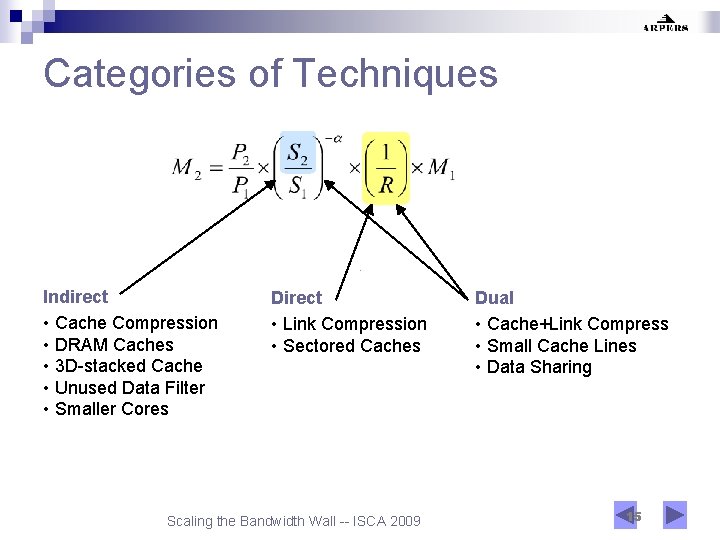

Categories of Techniques

Indirect
• Cache Compression
• DRAM Caches
• 3D-stacked Cache
• Unused Data Filter
• Smaller Cores

Direct
• Link Compression
• Sectored Caches

Dual
• Cache+Link Compress
• Small Cache Lines
• Data Sharing

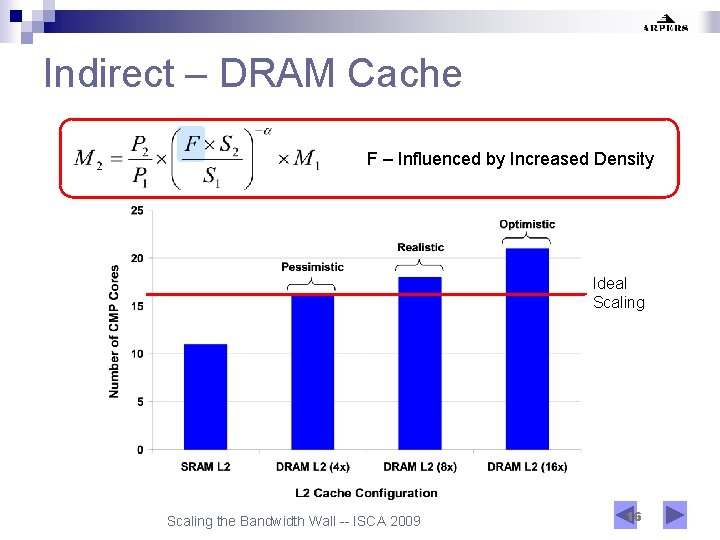

Indirect – DRAM Cache

F – Influenced by Increased Density

[Figure label: Ideal]

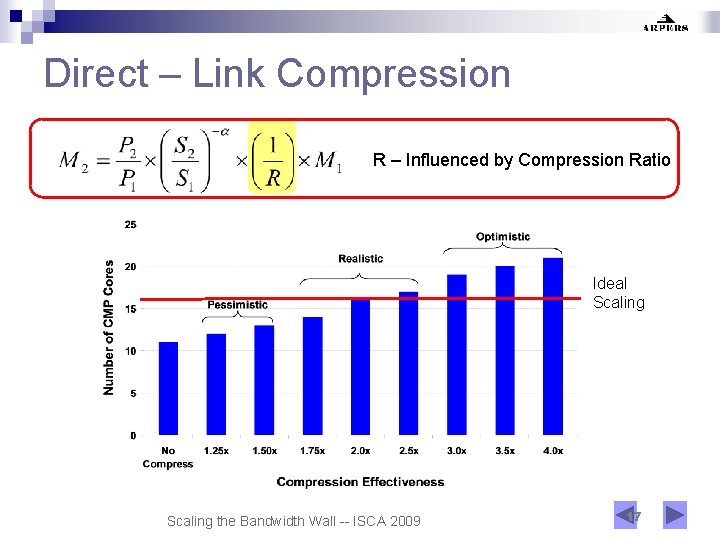

Direct – Link Compression

R – Influenced by Compression Ratio

[Figure label: Ideal]

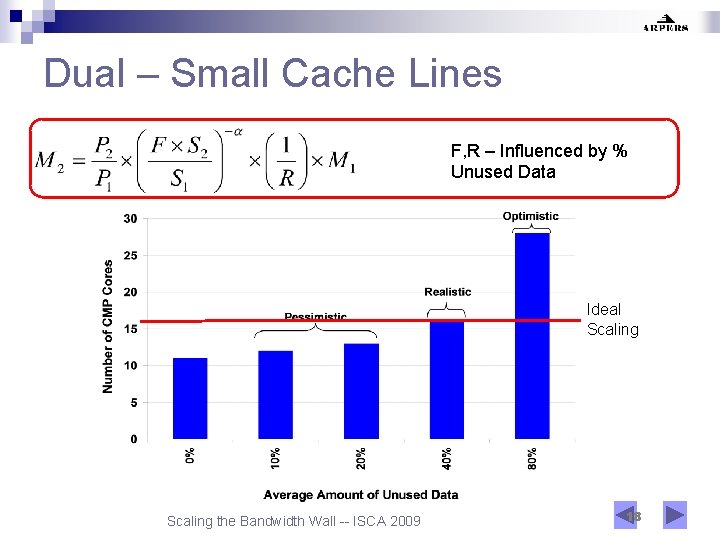

Dual – Small Cache Lines

F, R – Influenced by % Unused Data

[Figure label: Ideal]
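The three categories can be folded into the traffic model with two extra factors, as sketched below. This is an interpretation of the slide labels rather than a formula shown in the deck: F is assumed to multiply the effective cache capacity per core (indirect techniques), and R is assumed to divide the off-chip traffic (direct techniques). Dual techniques set both. The function and parameter names, and the example values of F and R, are hypothetical.

```python
def traffic_ratio_with_techniques(p1: float, c1: float, p2: float, c2: float,
                                  alpha: float = 0.5,
                                  capacity_factor: float = 1.0,    # F (assumed role)
                                  traffic_reduction: float = 1.0   # R (assumed role)
                                  ) -> float:
    """Relative traffic M2/M1 with indirect (F) and direct (R) techniques applied."""
    s1 = c1 / p1
    s2 = c2 / p2
    effective_s2 = capacity_factor * s2  # indirect: the cache behaves as if F times larger
    return (p2 / p1) * (effective_s2 / s1) ** (-alpha) / traffic_reduction


if __name__ == "__main__":
    base = dict(p1=8, c1=8, p2=16, c2=16)  # ideal scaling from the 8-core baseline
    print("no technique:", traffic_ratio_with_techniques(**base))
    print("indirect, F=8:", traffic_ratio_with_techniques(**base, capacity_factor=8.0))
    print("direct, R=2:", traffic_ratio_with_techniques(**base, traffic_reduction=2.0))
```

In this form a direct technique pays off linearly in R, while an indirect technique pays off only as $F^{-\alpha}$.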

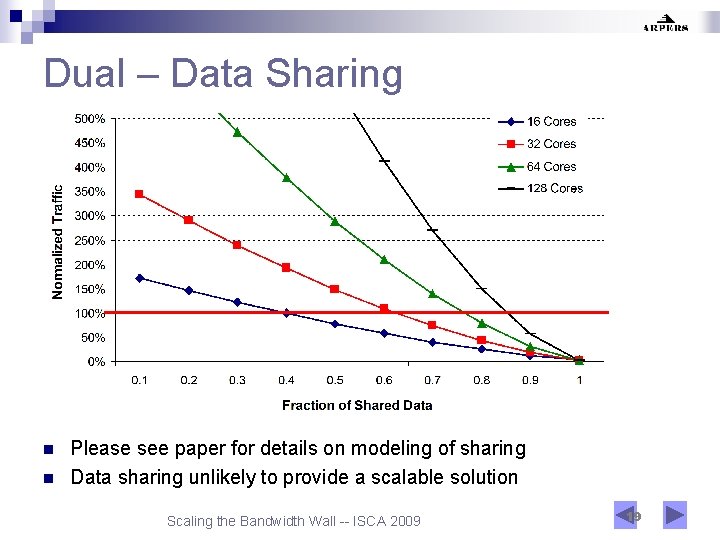

Dual – Data Sharing

• Please see paper for details on modeling of sharing
• Data sharing unlikely to provide a scalable solution

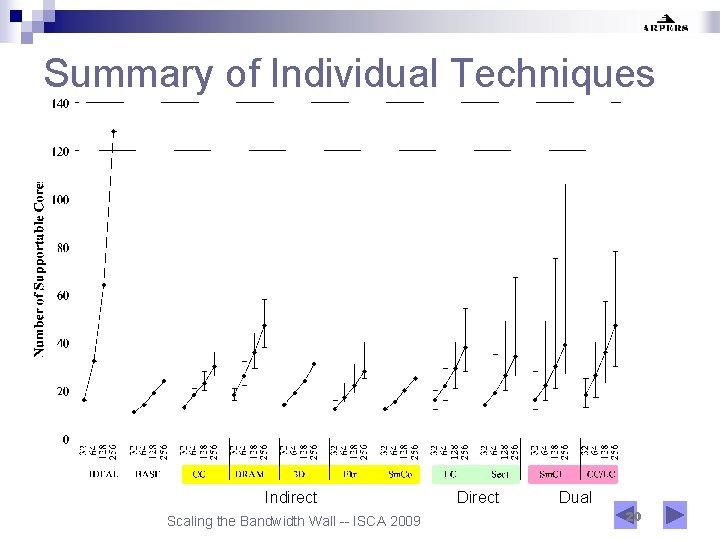

Summary of Individual Techniques

[Figure panels: Indirect, Direct, Dual]

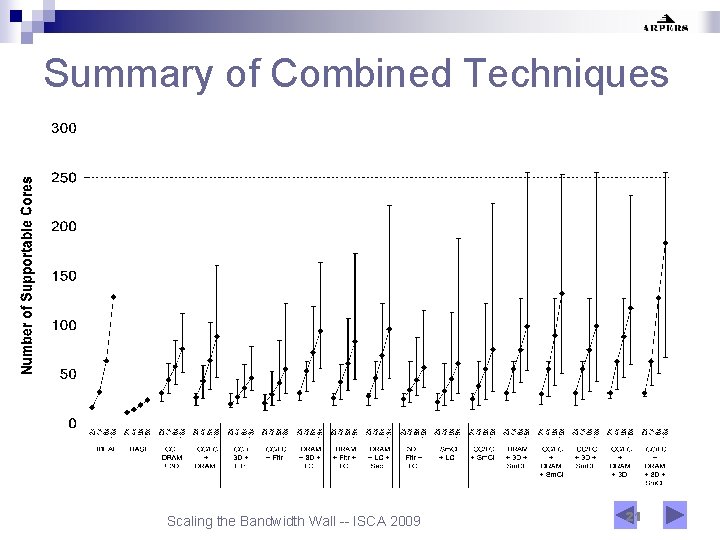

Summary of Combined Techniques

Conclusions

• Contributions
  - Simple, powerful analytical CMP memory traffic model
  - Quantify significance of memory BW wall problem
    • 10% chip area for cores in 4 generations if constant traffic req.
  - Guide design (cores vs. cache) of future CMPs
    • Given fixed chip area and BW scaling, how many cores?
  - Evaluate memory traffic reduction techniques
    • Combinations can enable ideal scaling for several generations
• Need bandwidth-efficient computing:
  - Hardware/Architecture level: DRAM caches, cache/link compression, prefetching, smarter memory controllers, etc.
  - Technology level: 3D chips, optical interconnects, etc.
  - Application level: working set reduction, locality enhancement, data vs. pipelined parallelism, computation vs. communication, etc.

Thank You

Questions?

Brian Rogers
bmrogers@ece.ncsu.edu
