
CS 1652

Bandwidth Delay Product
• The amount of data/packets needed to fill the “pipe”
• Example: 1 Gbps x 10 ms = 10 Mbit
• Amount of data that needs to be in flight to saturate a link
  – i.e., achieve maximum throughput
  – Generally speaking: delay == RTT
• Basis for the window size on the sender
  – Congestion control estimates the current BDP between itself and the receiver
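The arithmetic in the example can be checked with a few lines of Python. This is a minimal sketch: the function names and the 1460-byte segment size are assumptions made for illustration, not values from the slides.

```python
import math


def bdp_bits(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product in bits: link rate times round-trip time."""
    return bandwidth_bps * rtt_s


def window_in_packets(bandwidth_bps: float, rtt_s: float, mss_bytes: int = 1460) -> int:
    """Full-size segments that must be in flight to fill the pipe (rounded up).

    mss_bytes is an assumed segment size used only for illustration.
    """
    return math.ceil(bdp_bits(bandwidth_bps, rtt_s) / (mss_bytes * 8))


if __name__ == "__main__":
    bits = bdp_bits(1e9, 0.010)  # 1 Gbps link, 10 ms RTT
    print(f"BDP: {bits / 1e6:.0f} Mbit")
    print(f"Window: {window_in_packets(1e9, 0.010)} segments")
```

A sender whose window is smaller than this leaves the link idle for part of every RTT.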

Buffer Bloat
• Congestion control estimates current BDP between itself and receiver
  – Estimate is based on what the sender “sees”
    • ACKs and timeouts
• How current is the information that the sender sees?
• How accurate is the information that the sender sees?
• Depends on the queueing delay experienced by packets

Example 1
• Congested network with large router/switch queues
  – Packets are delayed but not dropped
• What happens?
  – Sender estimates current RTT based on ACK arrivals
    • But ACKs are delayed, causing the sender to see an artificially large RTT
  – Large queues mean no packet loss
    • So apparent link bandwidth capacity does not change
  – Constant bandwidth and larger RTT
    • Leads to: larger BDP estimate on the sender
    • Leads to: sender increasing window size
    • Leads to: increased transmission rate
  – Sender is increasing the rate at which it sends traffic into a congested network!
• Eventually router queues will overflow, resulting in loss
• But larger queues delayed the senders' reaction to congestion
  – Result is more retransmissions to handle losses/delays (more wasted work)
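The feedback loop in Example 1 can be illustrated numerically. The sketch below assumes the sender's measured RTT is simply the base RTT plus queueing delay; the link rate, RTT, and queue delays are made-up illustrative values.

```python
def estimated_bdp_bits(bandwidth_bps: float, base_rtt_s: float, queue_delay_s: float) -> float:
    """BDP as the sender sees it: the measured RTT includes queueing delay."""
    measured_rtt_s = base_rtt_s + queue_delay_s
    return bandwidth_bps * measured_rtt_s


def excess_bits(bandwidth_bps: float, base_rtt_s: float, queue_delay_s: float) -> float:
    """Bits the inflated estimate adds beyond what the path itself can hold."""
    true_bdp = bandwidth_bps * base_rtt_s
    return estimated_bdp_bits(bandwidth_bps, base_rtt_s, queue_delay_s) - true_bdp


if __name__ == "__main__":
    LINK_BPS = 1e9    # illustrative link rate
    BASE_RTT = 0.010  # illustrative RTT with empty queues
    for queue_delay in (0.0, 0.010, 0.040):
        est = estimated_bdp_bits(LINK_BPS, BASE_RTT, queue_delay)
        extra = excess_bits(LINK_BPS, BASE_RTT, queue_delay)
        print(f"queue delay {queue_delay * 1e3:4.0f} ms -> "
              f"estimated BDP {est / 1e6:5.1f} Mbit, excess {extra / 1e6:5.1f} Mbit")
```

Every excess bit has nowhere to go except a router queue, which inflates the next RTT sample further.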

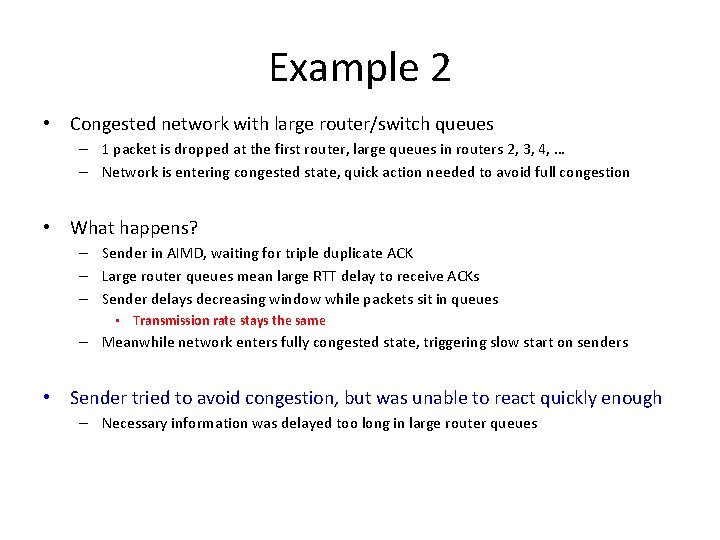

Example 2
• Congested network with large router/switch queues
  – 1 packet is dropped at the first router; large queues in routers 2, 3, 4, …
  – Network is entering a congested state; quick action is needed to avoid full congestion
• What happens?
  – Sender in AIMD, waiting for triple duplicate ACK
  – Large router queues mean large RTT delay to receive ACKs
  – Sender delays decreasing window while packets sit in queues
    • Transmission rate stays the same
  – Meanwhile network enters fully congested state, triggering slow start on senders
• Sender tried to avoid congestion, but was unable to react quickly enough
  – Necessary information was delayed too long in large router queues

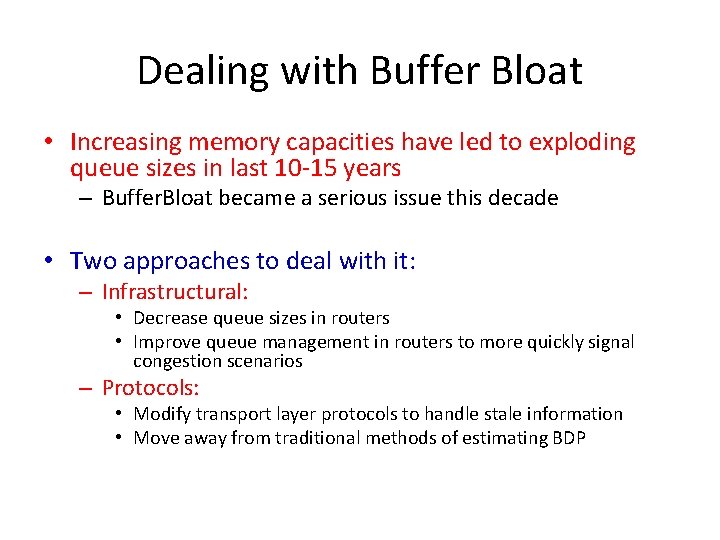

Dealing with Buffer Bloat
• Increasing memory capacities have led to exploding queue sizes in the last 10-15 years
  – BufferBloat became a serious issue this decade
• Two approaches to deal with it:
  – Infrastructural:
    • Decrease queue sizes in routers
    • Improve queue management in routers to signal congestion more quickly
  – Protocols:
    • Modify transport layer protocols to handle stale information
    • Move away from traditional methods of estimating BDP

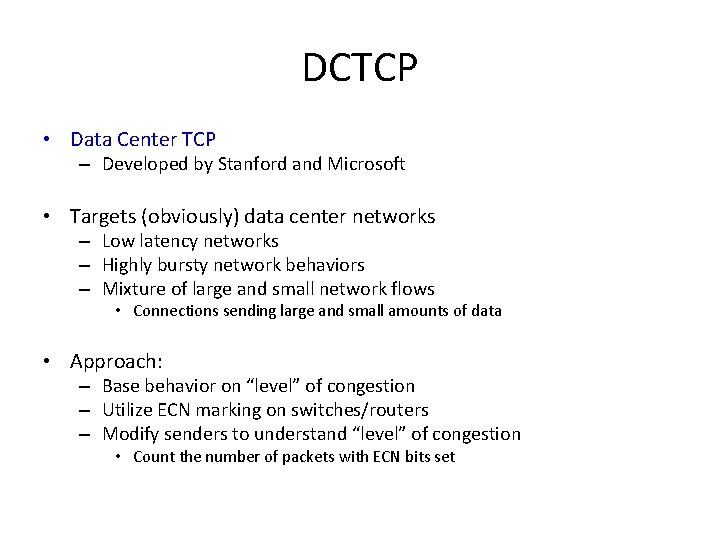

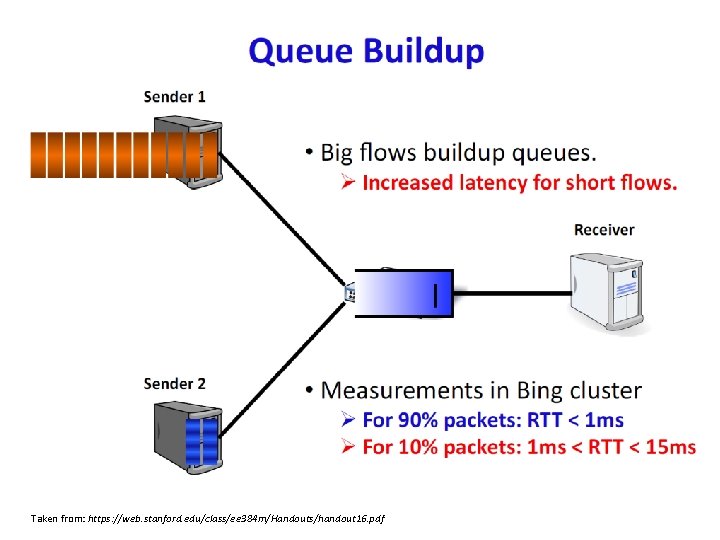

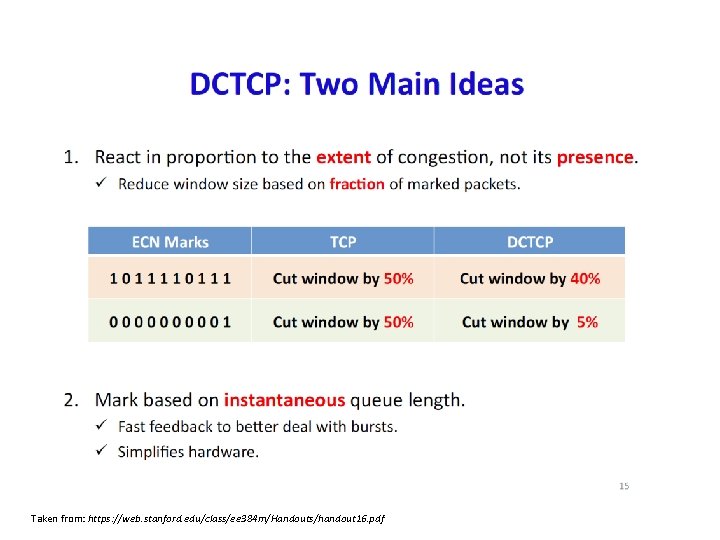

DCTCP
• Data Center TCP
  – Developed by Stanford and Microsoft
• Targets (obviously) data center networks
  – Low latency networks
  – Highly bursty network behaviors
  – Mixture of large and small network flows
    • Connections sending large and small amounts of data
• Approach:
  – Base behavior on “level” of congestion
  – Utilize ECN marking on switches/routers
  – Modify senders to understand “level” of congestion
    • Count the number of packets with ECN bits set
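How a sender might turn the count of ECN-marked packets into a “level” of congestion can be sketched as follows. The slide gives no formulas; the update rule here (a smoothed marked fraction `alpha` and a window cut of `alpha / 2`) follows the published DCTCP description, and the weight `g = 1/16` and the function names are assumptions for illustration.

```python
G = 1 / 16  # smoothing weight; an assumed, commonly cited value


def update_alpha(alpha: float, marked: int, total: int, g: float = G) -> float:
    """Smooth the fraction of ECN-marked packets seen over the last window."""
    fraction = marked / total if total else 0.0
    return (1 - g) * alpha + g * fraction


def reduce_window(cwnd: float, alpha: float) -> float:
    """Cut the window in proportion to the congestion level.

    alpha == 1 (every packet marked) halves the window, like loss-based TCP;
    a small alpha gives only a gentle reduction.
    """
    return max(1.0, cwnd * (1 - alpha / 2))


if __name__ == "__main__":
    alpha, cwnd = 0.0, 100.0
    # (marked, total) packets per window of ACKs; illustrative numbers
    for marked, total in [(0, 100), (10, 100), (50, 100), (100, 100)]:
        alpha = update_alpha(alpha, marked, total)
        if marked:
            cwnd = reduce_window(cwnd, alpha)
        print(f"marked {marked:3d}/{total} -> alpha {alpha:.4f}, cwnd {cwnd:.1f}")
```

The design point is that the reaction scales with the extent of congestion, rather than halving the window on any single signal.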

Taken from: https://web.stanford.edu/class/ee384m/Handouts/handout16.pdf

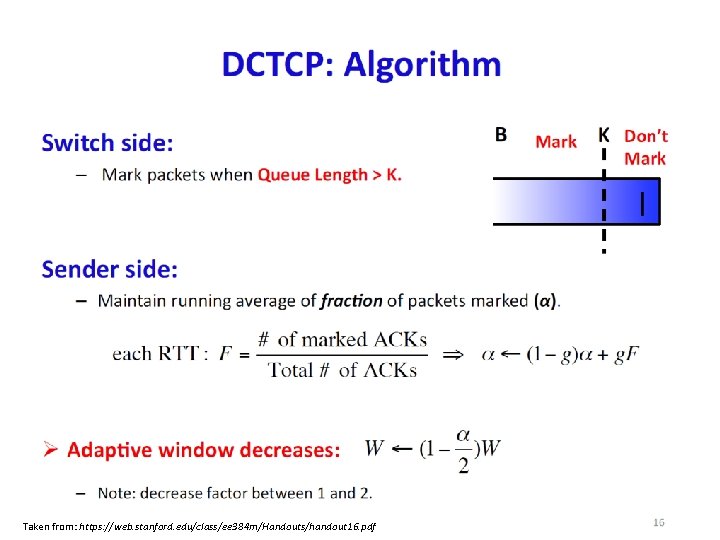


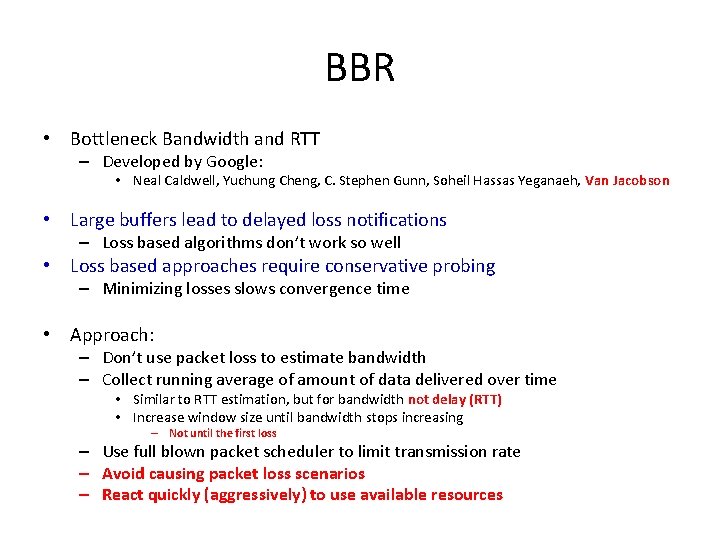

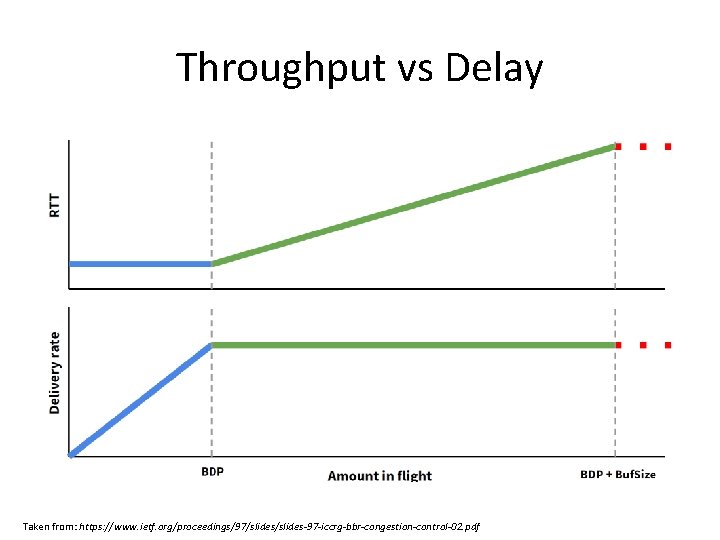

BBR
• Bottleneck Bandwidth and RTT
  – Developed by Google:
    • Neal Cardwell, Yuchung Cheng, C. Stephen Gunn, Soheil Hassas Yeganeh, Van Jacobson
• Large buffers lead to delayed loss notifications
  – Loss-based algorithms don’t work so well
• Loss-based approaches require conservative probing
  – Minimizing losses slows convergence time
• Approach:
  – Don’t use packet loss to estimate bandwidth
  – Collect running average of amount of data delivered over time
    • Similar to RTT estimation, but for bandwidth not delay (RTT)
    • Increase window size until bandwidth stops increasing
      – Not until the first loss
  – Use full-blown packet scheduler to limit transmission rate
  – Avoid causing packet loss scenarios
  – React quickly (aggressively) to use available resources
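The idea of estimating bandwidth from delivered data instead of from loss can be sketched as below. This is a simplified model, not the real implementation: the class name, window lengths, and sample values are assumptions. It keeps the maximum recent delivery rate and the minimum recent RTT, which is how the published BBR design filters its samples (rather than a plain average).

```python
from collections import deque


class PathModel:
    """Toy model of a path: bottleneck bandwidth and RTT without queueing."""

    def __init__(self, bw_window: int = 10, rtt_window: int = 10):
        # Only the most recent samples are kept; window sizes are illustrative.
        self.bw_samples = deque(maxlen=bw_window)
        self.rtt_samples = deque(maxlen=rtt_window)

    def on_ack(self, delivered_bytes: int, interval_s: float, rtt_s: float) -> None:
        """Record one delivery-rate sample and one RTT sample."""
        self.bw_samples.append(delivered_bytes * 8 / interval_s)
        self.rtt_samples.append(rtt_s)

    @property
    def btl_bw_bps(self) -> float:
        """Highest delivery rate seen recently: the bottleneck estimate."""
        return max(self.bw_samples)

    @property
    def min_rtt_s(self) -> float:
        """Lowest RTT seen recently: closest to the RTT with empty queues."""
        return min(self.rtt_samples)

    def bdp_bits(self) -> float:
        """Target amount of data in flight."""
        return self.btl_bw_bps * self.min_rtt_s


if __name__ == "__main__":
    model = PathModel()
    model.on_ack(delivered_bytes=125_000, interval_s=0.010, rtt_s=0.012)
    model.on_ack(delivered_bytes=125_000, interval_s=0.020, rtt_s=0.030)  # queue building
    print(f"bandwidth {model.btl_bw_bps / 1e6:.0f} Mbps, "
          f"min RTT {model.min_rtt_s * 1e3:.0f} ms, "
          f"BDP {model.bdp_bits() / 1e6:.1f} Mbit")
```

Because the RTT estimate takes the minimum, a growing queue does not inflate the BDP target the way it does in Example 1.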

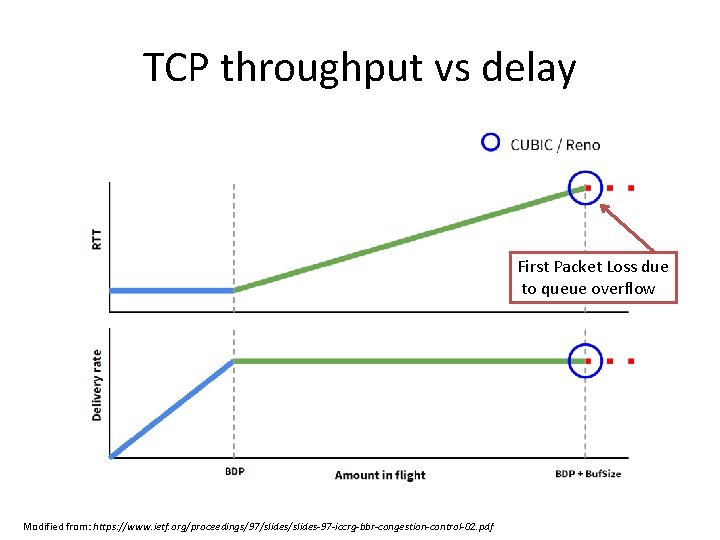

Throughput vs Delay
Taken from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

TCP throughput vs delay
First packet loss due to queue overflow
Modified from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

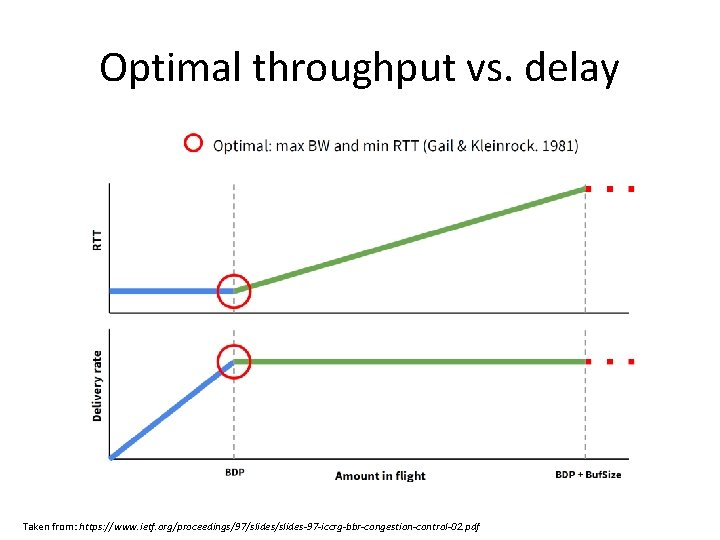

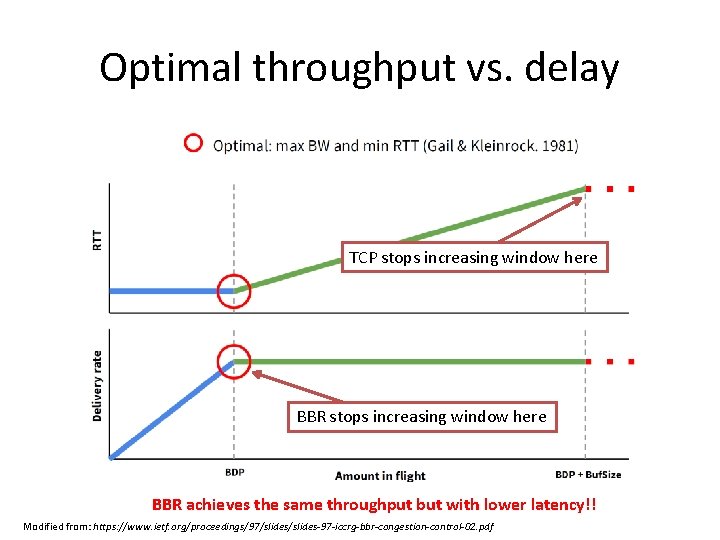

Optimal throughput vs. delay
Taken from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

Optimal throughput vs. delay
TCP stops increasing window here
BBR achieves the same throughput but with lower latency!
Modified from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

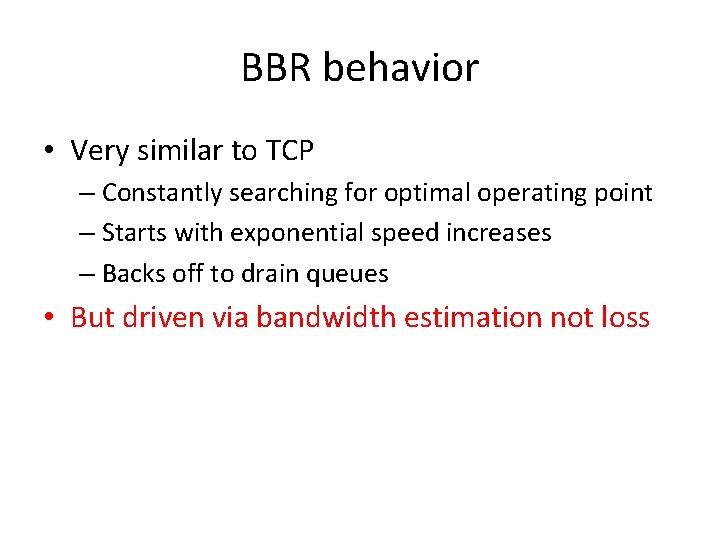

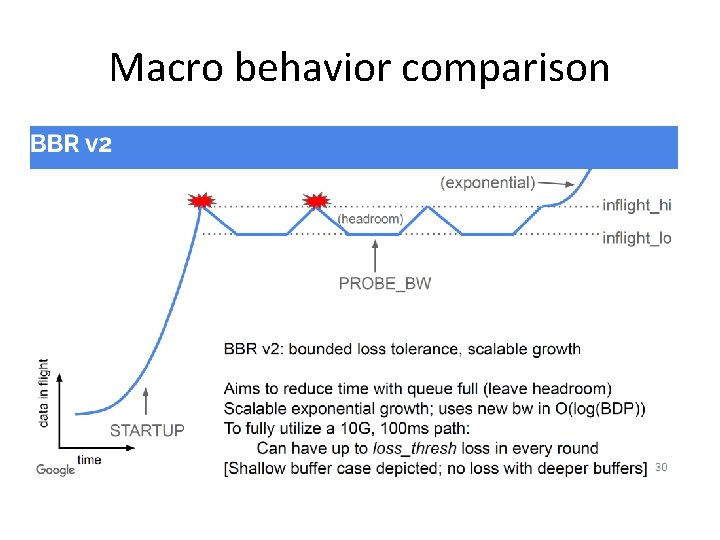

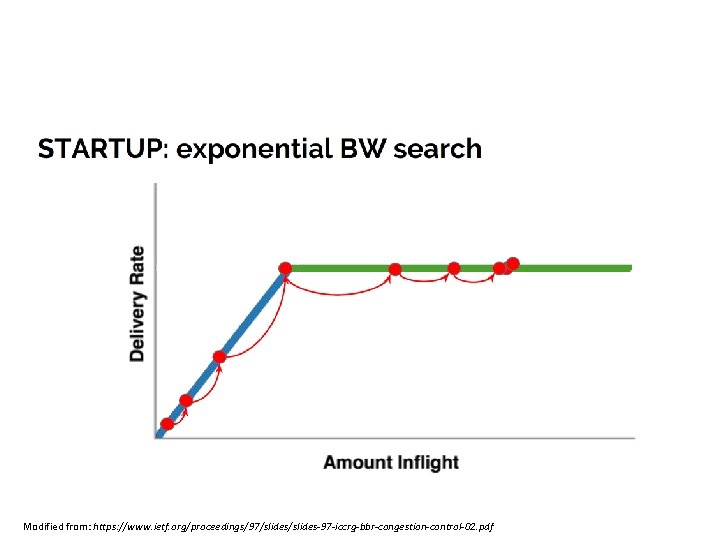

BBR behavior
• Very similar to TCP
  – Constantly searching for optimal operating point
  – Starts with exponential speed increases
  – Backs off to drain queues
• But driven via bandwidth estimation not loss

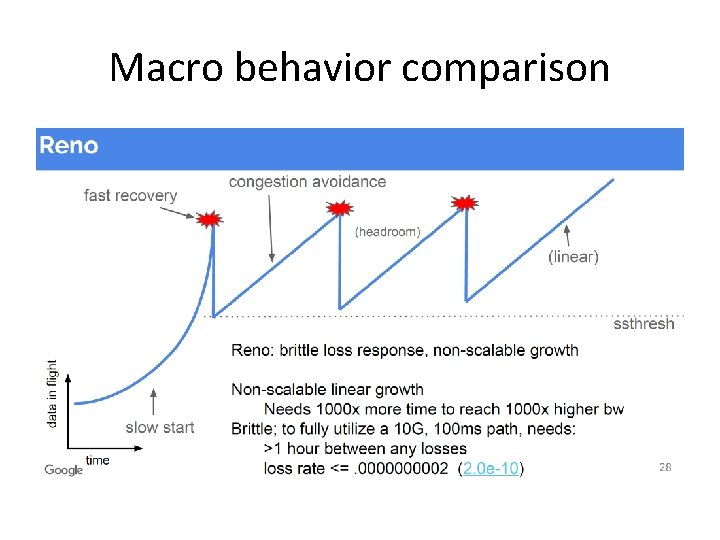

Macro behavior comparison

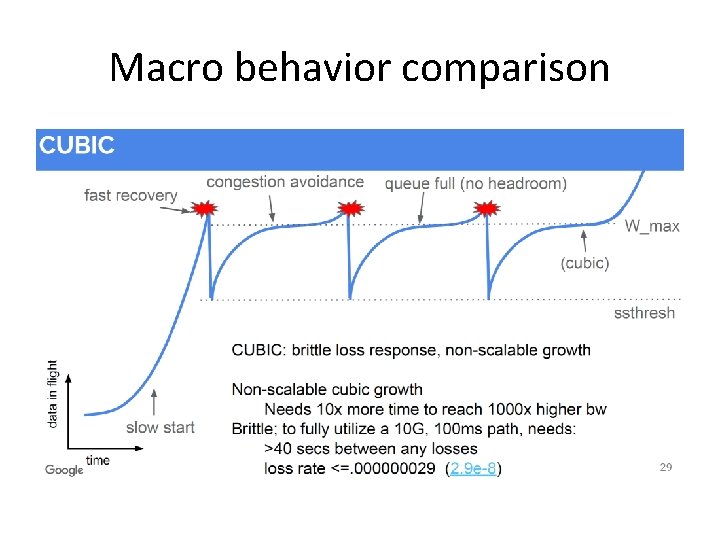


Modified from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

We overshot during startup, so we need to drain queues to minimize queueing delay
Modified from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

Occasionally increase transmission rate by 25%, in case network performance has changed
Modified from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf
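The periodic 25% probe can be sketched as a repeating cycle of pacing gains. The slide states only the 25% increase; the eight-phase cycle below (probe at 1.25, drain at 0.75, then cruise at 1.0) follows the published BBR v1 description, and the function name and 100 Mbps estimate are assumptions for illustration.

```python
from itertools import cycle, islice

# One probe phase, one drain phase, six cruise phases (roughly one RTT each).
PACING_GAINS = (1.25, 0.75, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0)


def pacing_rates(btl_bw_bps: float, phases: int) -> list[float]:
    """Pacing rate for each successive phase, given a bandwidth estimate."""
    return [gain * btl_bw_bps for gain in islice(cycle(PACING_GAINS), phases)]


if __name__ == "__main__":
    for phase, rate in enumerate(pacing_rates(100e6, 8)):
        print(f"phase {phase}: {rate / 1e6:.0f} Mbps")
```

The drain phase undoes any queue the probe created, so the gains average to 1.0 over a full cycle.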

Still need accurate RTT measurements (w/out queueing delay), so we need to fully drain network queues before we measure
Modified from: https://www.ietf.org/proceedings/97/slides-97-iccrg-bbr-congestion-control-02.pdf

QUIC
• Quick UDP Internet Connections
  – Developed initially by Google
  – Modified version going through IETF
  – Combination Transport/HTTP/TLS protocol
• Features:
  – Multiplexed streams
  – Application layer protocol over UDP
  – Integrated security/encryption
  – Pluggable congestion control
  – Forward Error Correction
    • Recover from (some) loss without retransmission
  – Connection Migration
    • Connections can survive IP address changes

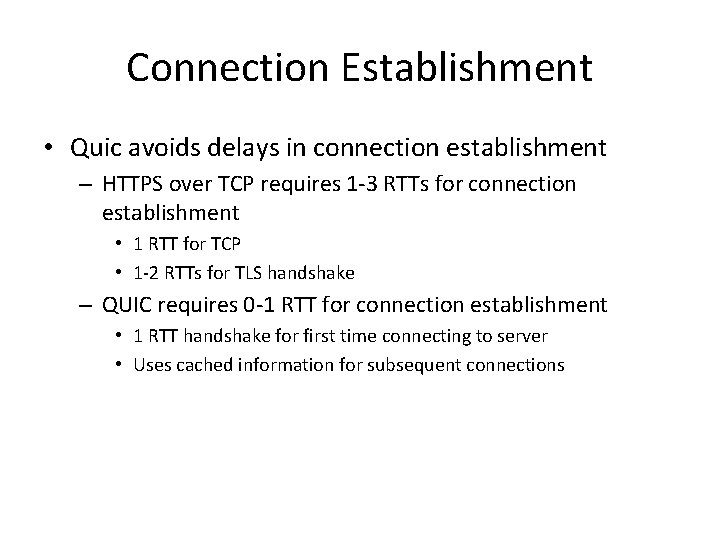

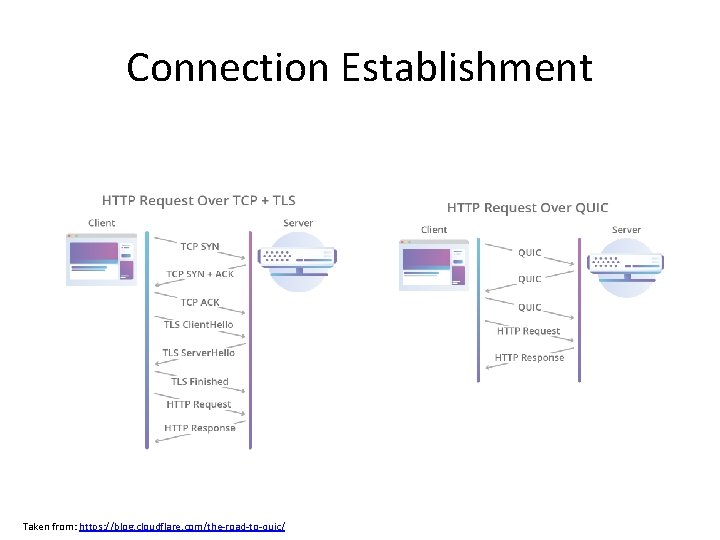

Connection Establishment
• QUIC avoids delays in connection establishment
  – HTTPS over TCP requires 2-3 RTTs for connection establishment
    • 1 RTT for TCP
    • 1-2 RTTs for TLS handshake
  – QUIC requires 0-1 RTT for connection establishment
    • 1 RTT handshake when connecting to a server for the first time
    • Uses cached information for subsequent connections
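The cost of these handshakes in wall-clock time depends only on the RTT. A minimal sketch, with scenario labels and the 50 ms RTT chosen purely for illustration:

```python
def setup_delay_ms(rtt_ms: float, handshake_rtts: int) -> float:
    """Time spent on handshakes before the first request can be sent."""
    return rtt_ms * handshake_rtts


# Round trips before application data can flow, per the breakdown above.
SCENARIOS = {
    "TCP + TLS (2-RTT TLS handshake)": 1 + 2,
    "TCP + TLS (1-RTT TLS handshake)": 1 + 1,
    "QUIC, first connection": 1,
    "QUIC, repeat connection": 0,
}

if __name__ == "__main__":
    RTT_MS = 50.0  # illustrative RTT
    for name, rtts in SCENARIOS.items():
        print(f"{name:34s} {setup_delay_ms(RTT_MS, rtts):6.1f} ms")
```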

Connection Establishment
Taken from: https://blog.cloudflare.com/the-road-to-quic/

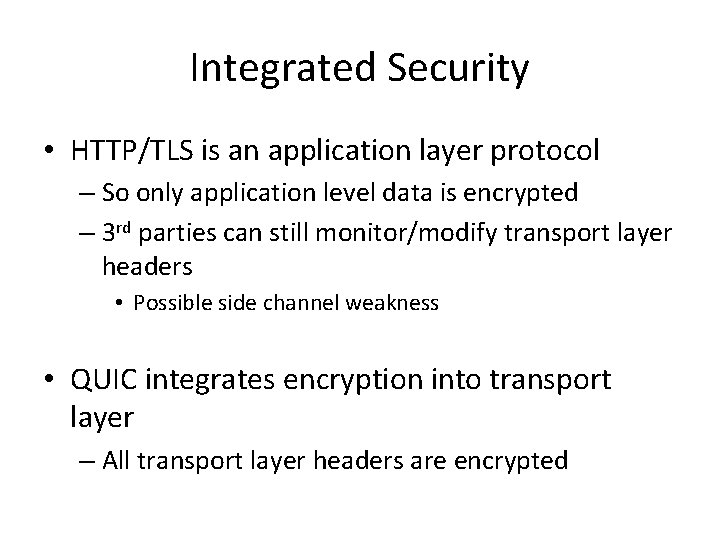

Integrated Security
• HTTP/TLS is an application layer protocol
  – So only application level data is encrypted
  – Third parties can still monitor/modify transport layer headers
    • Possible side channel weakness
• QUIC integrates encryption into transport layer
  – Nearly all transport layer header information is encrypted

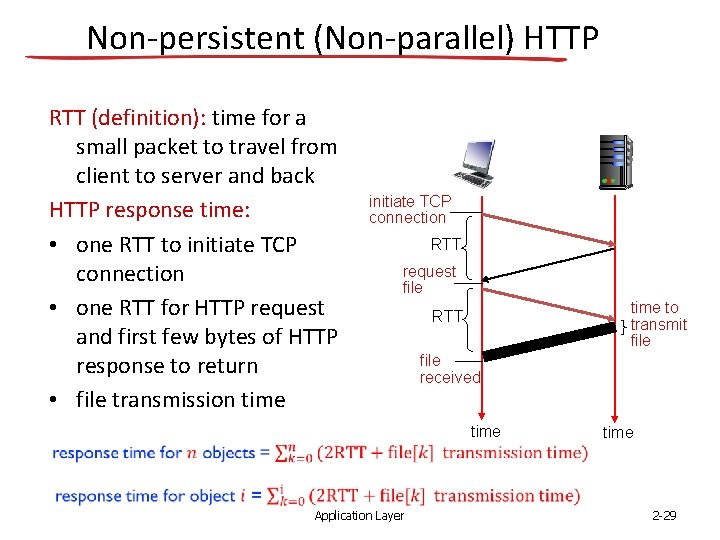

Non-persistent (Non-parallel) HTTP
RTT (definition): time for a small packet to travel from client to server and back
HTTP response time:
• one RTT to initiate TCP connection
• one RTT for HTTP request and first few bytes of HTTP response to return
• file transmission time
[Timeline diagram: initiate TCP connection (RTT); request file (RTT); time to transmit file; file received]

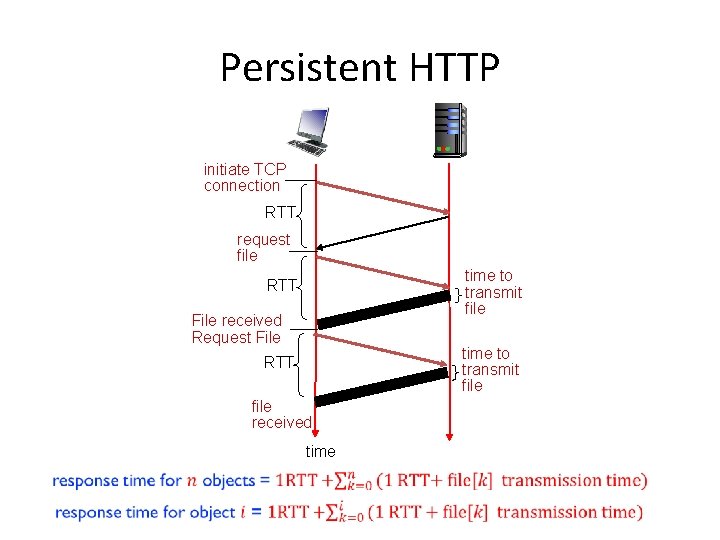

Persistent HTTP
[Timeline diagram: initiate TCP connection (RTT); request file (RTT); time to transmit file; file received; request file (RTT); time to transmit file; file received]

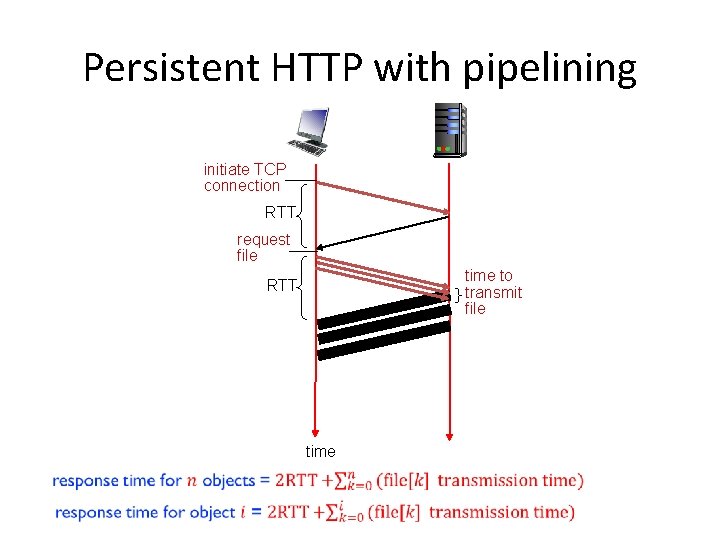

Persistent HTTP with pipelining
[Timeline diagram: initiate TCP connection (RTT); request file (RTT); time to transmit file]
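The three timelines (non-persistent, persistent, persistent with pipelining) can be compared with a simple model. This is an idealized sketch: it assumes equal-size objects, no slow start, no server processing time, and that all pipelined requests fit in one round trip. The function names and numbers are illustrative.

```python
def non_persistent_time(rtt_s: float, file_bits: float, rate_bps: float, objects: int = 1) -> float:
    """New TCP connection per object: 2 RTTs plus transmission time each."""
    return objects * (2 * rtt_s + file_bits / rate_bps)


def persistent_time(rtt_s: float, file_bits: float, rate_bps: float, objects: int = 1) -> float:
    """One TCP handshake, then 1 RTT plus transmission time per object."""
    return rtt_s + objects * (rtt_s + file_bits / rate_bps)


def pipelined_time(rtt_s: float, file_bits: float, rate_bps: float, objects: int = 1) -> float:
    """One TCP handshake, one RTT for all requests, then back-to-back transfers."""
    return rtt_s + rtt_s + objects * (file_bits / rate_bps)


if __name__ == "__main__":
    args = dict(rtt_s=0.1, file_bits=1e6, rate_bps=1e7, objects=3)
    for fn in (non_persistent_time, persistent_time, pipelined_time):
        print(f"{fn.__name__:20s} {fn(**args):.2f} s")
```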

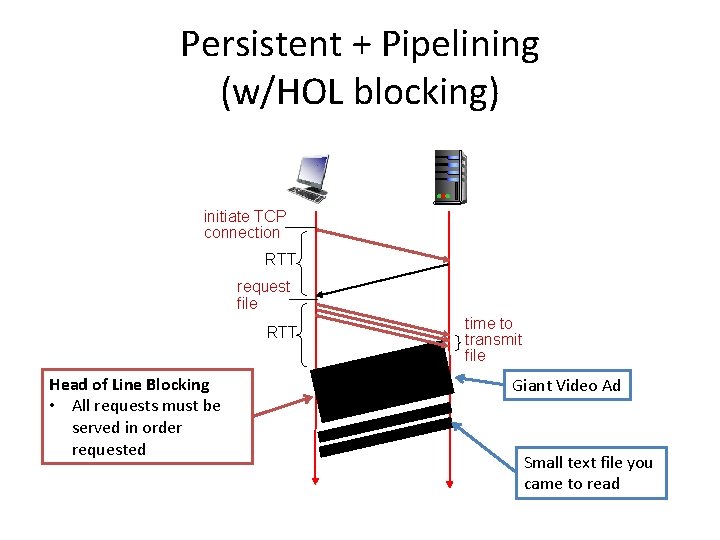

Persistent + Pipelining (w/HOL blocking)
Head of Line Blocking
• All requests must be served in the order requested
[Timeline diagram: initiate TCP connection (RTT); request file (RTT); time to transmit file: “Giant Video Ad” first, then “Small text file you came to read”]
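The cost of in-order delivery can be made concrete by computing when each response finishes. A minimal sketch; the file sizes and link rate are made-up values standing in for the video ad and the text file.

```python
def in_order_finish_times(sizes_bits: list[float], rate_bps: float) -> list[float]:
    """Finish time of each response when served strictly in request order."""
    finish_times = []
    elapsed = 0.0
    for size in sizes_bits:
        elapsed += size / rate_bps  # each response waits for all earlier ones
        finish_times.append(elapsed)
    return finish_times


if __name__ == "__main__":
    VIDEO_AD_BITS = 80e6   # illustrative: 10 MB
    TEXT_FILE_BITS = 80e3  # illustrative: 10 KB
    video_done, text_done = in_order_finish_times([VIDEO_AD_BITS, TEXT_FILE_BITS], 10e6)
    alone = TEXT_FILE_BITS / 10e6
    print(f"text file alone: {alone * 1e3:.0f} ms; behind the video ad: {text_done:.3f} s")
```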

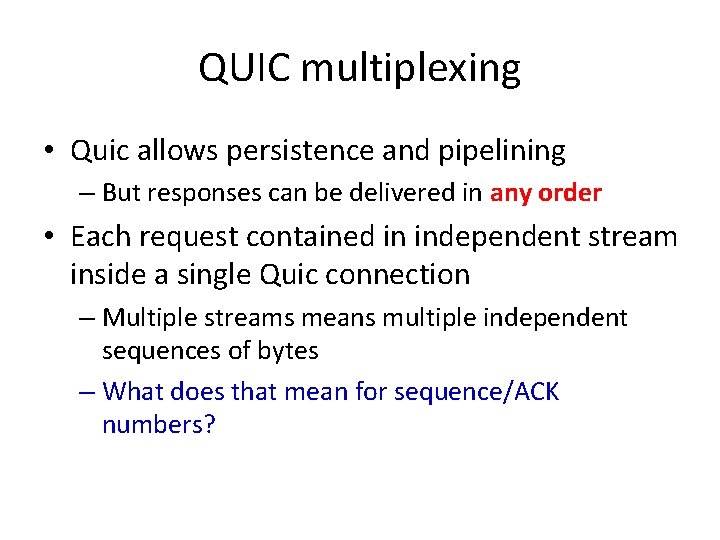

QUIC multiplexing
• QUIC allows persistence and pipelining
  – But responses can be delivered in any order
• Each request is contained in an independent stream inside a single QUIC connection
  – Multiple streams means multiple independent sequences of bytes
  – What does that mean for sequence/ACK numbers?
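One way to think about the sequence-number question: each stream needs its own byte offsets, so data can be reassembled per stream regardless of arrival order. The sketch below is a simplification (the class name is hypothetical, and frames are assumed never to overlap or arrive twice); it is not the QUIC wire format, only the per-stream bookkeeping idea.

```python
from collections import defaultdict


class StreamReassembler:
    """Reassembles each stream independently from (stream_id, offset, data) frames."""

    def __init__(self):
        self.pending = defaultdict(dict)     # stream_id -> {offset: data}
        self.next_offset = defaultdict(int)  # stream_id -> next byte expected
        self.delivered = defaultdict(bytes)  # stream_id -> in-order bytes so far

    def on_frame(self, stream_id: int, offset: int, data: bytes) -> None:
        """Buffer a frame, then deliver whatever is now contiguous on that stream."""
        self.pending[stream_id][offset] = data
        while self.next_offset[stream_id] in self.pending[stream_id]:
            chunk = self.pending[stream_id].pop(self.next_offset[stream_id])
            self.delivered[stream_id] += chunk
            self.next_offset[stream_id] += len(chunk)


if __name__ == "__main__":
    r = StreamReassembler()
    r.on_frame(1, 5, b"world")  # stream 1 is missing its first 5 bytes
    r.on_frame(2, 0, b"text")   # stream 2 is not blocked by stream 1's gap
    print(r.delivered[1], r.delivered[2])
    r.on_frame(1, 0, b"hello")  # gap filled; stream 1 catches up
    print(r.delivered[1])
```

A gap on one stream delays only that stream, which is the contrast with the single in-order byte sequence of a TCP connection.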
