Qualitative Induction Dorian Šuc and Ivan Bratko Artificial Intelligence Laboratory Faculty of Computer and Information Science University of Ljubljana, Slovenia

Overview • Learning of qualitative models • Our learning problem: qualitative trees and qualitatively constrained functions • Learning of qualitative trees (ep-QUIN, QUIN) • QUIN in skill reconstruction (container crane) • Conclusions and further work

Learning of qualitative models Motivation: • Building a qualitative model is a time-consuming process that requires significant knowledge • Learning from examples of a system's behaviour: – GENMODEL (Coiera, 89); KARDIO (Mozetič, 87); Bratko et al., 89 – MISQ (Kraan et al., 91; Richards et al., 92) – ILP approaches (Bratko et al., 91; Džeroski & Todorovski, 93) • Learning of QDE or logical models

Our approach • Inductive learning of qualitative trees from numerical examples; qualitatively constrained functions based on qualitative proportionality predicates (Forbus, 84) • Motivation for learning of qualitative trees: experiments with reconstruction of human control skill and qualitative control strategies (crane, acrobot-Šuc and Bratko 99, 00)

Learning problem • Usual classification learning problem, but learning of qualitative trees: – in leaves are qualitatively constrained functions (QCFs); QCFs give constraints on the class change in response to a change in attributes – internal nodes (splits) define a partition of the attribute space into areas with common qualitative behavior of the class variable

A qualitative tree example • A qualitative tree for the function $z = x^2 - y^2$ • For x > 0 and y > 0: z is monotonically increasing in its dependence on x and monotonically decreasing in its dependence on y; that is, z is positively related to x and negatively related to y
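The regions of such a tree can be checked by looking at the signs of the partial derivatives of z. The sketch below is illustrative only: the helper names `sign` and `qcf_label` are hypothetical, and the labels are derived from the function itself, not read from the figure.

```python
def sign(v):
    """Return +1, 0 or -1 according to the sign of v."""
    return (v > 0) - (v < 0)


def qcf_label(x, y):
    """Qualitative dependence of z = x**2 - y**2 on (x, y) at a given point."""
    dz_dx = 2 * x    # partial derivative of z with respect to x
    dz_dy = -2 * y   # partial derivative of z with respect to y
    marks = {1: "+", -1: "-", 0: "0"}
    return f"M{marks[sign(dz_dx)]},{marks[sign(dz_dy)]}(x, y)"


# One sample point per quadrant.
for point in [(1, 1), (1, -1), (-1, 1), (-1, -1)]:
    print(point, qcf_label(*point))
```

Each quadrant gets a different label, which is why a tree for this function needs splits on both x and y before a single QCF can describe a leaf.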

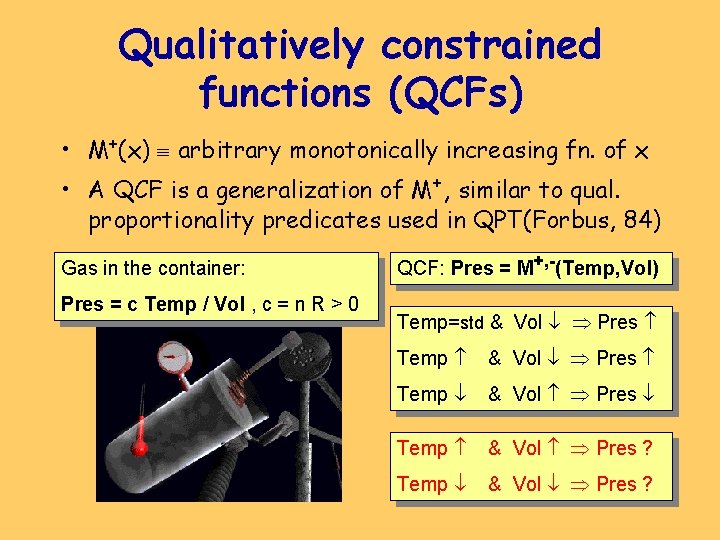

Qualitatively constrained functions (QCFs) • M+(x): an arbitrary monotonically increasing function of x • A QCF is a generalization of M+, similar to the qualitative proportionality predicates used in QPT (Forbus, 84) • Gas in the container: Pres = c Temp / Vol, c = n R > 0 • QCF: Pres = M+,-(Temp, Vol) • With Temp steady (Temp=std), a change in Vol gives the opposite change in Pres • When Temp and Vol change in the same direction, the change in Pres is ambiguous (?)
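The way a QCF constrains the class change can be sketched as a small sign calculation. This is a minimal illustration, not QUIN's implementation: the function name `qcf_predict` and the encoding of changes as +1, 0, -1 are assumptions made for the example.

```python
def qcf_predict(monotonicity, changes):
    """Predict the qualitative class change under a QCF.

    monotonicity: +1 or -1 per attribute, e.g. (+1, -1) for M+,-(Temp, Vol).
    changes: sign of each attribute's change (+1 increase, 0 steady, -1 decrease).
    Returns "pos", "neg" or "ambiguous".
    """
    # Each changing attribute pushes the class up (+1) or down (-1).
    effects = {m * c for m, c in zip(monotonicity, changes) if c != 0}
    if len(effects) != 1:
        # No attribute changed, or the attributes pull in opposite directions.
        return "ambiguous"
    return "pos" if effects.pop() > 0 else "neg"


GAS_QCF = (+1, -1)  # Pres = M+,-(Temp, Vol)
print(qcf_predict(GAS_QCF, (0, +1)))   # Temp steady, Vol increases
print(qcf_predict(GAS_QCF, (+1, +1)))  # Temp and Vol both increase
```

The first call yields a definite prediction because only Vol changes; the second is ambiguous because the two attributes have opposing effects on Pres.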

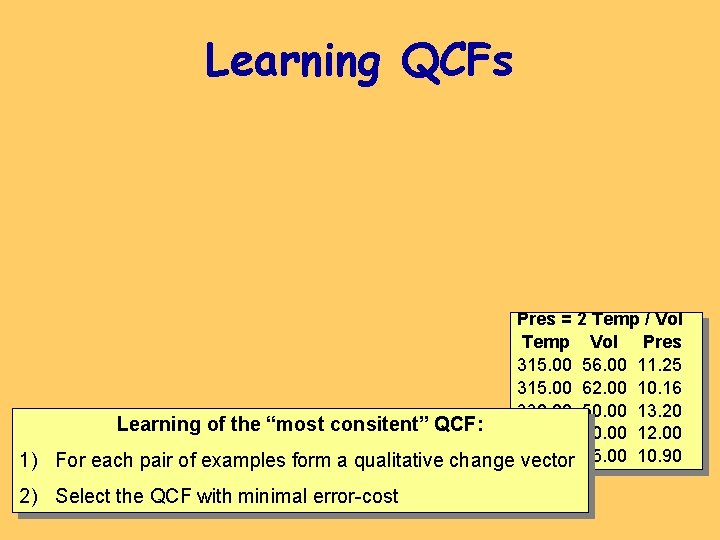

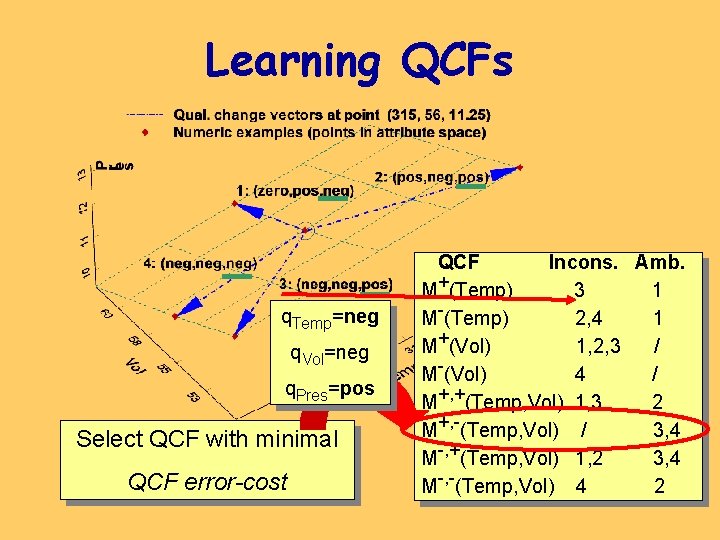

Learning QCFs • Pres = 2 Temp / Vol

| Temp | Vol | Pres |
|---|---|---|
| 315.00 | 56.00 | 11.25 |
| 315.00 | 62.00 | 10.16 |
| 330.00 | 50.00 | 13.20 |
| 300.00 | 50.00 | 12.00 |
| 300.00 | 55.00 | 10.90 |

Learning of the “most consistent” QCF: 1) For each pair of examples form a qualitative change vector 2) Select the QCF with minimal error-cost
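Step 1 can be sketched as follows. The names `qsign` and `qualitative_change`, the label "zero" for an unchanged value, and the use of unordered pairs are assumptions made for illustration; the five examples are the ones listed on the slide.

```python
from itertools import combinations

# (Temp, Vol, Pres) examples from the slide.
EXAMPLES = [
    (315.00, 56.00, 11.25),
    (315.00, 62.00, 10.16),
    (330.00, 50.00, 13.20),
    (300.00, 50.00, 12.00),
    (300.00, 55.00, 10.90),
]


def qsign(delta):
    """Map a numeric difference to a qualitative change."""
    if delta > 0:
        return "pos"
    if delta < 0:
        return "neg"
    return "zero"


def qualitative_change(e, f):
    """Qualitative change vector (qTemp, qVol, qPres) going from example e to f."""
    return tuple(qsign(b - a) for a, b in zip(e, f))


# One vector per unordered pair of examples, keyed by the pair of indices.
vectors = {
    (i, j): qualitative_change(EXAMPLES[i], EXAMPLES[j])
    for i, j in combinations(range(len(EXAMPLES)), 2)
}
for pair, vector in vectors.items():
    print(pair, vector)
```

Five examples give ten pairs, so the number of vectors grows quadratically with the number of examples.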

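Learning QCFs • Example qualitative change vector: qTemp=neg, qVol=neg, qPres=pos • Select the QCF with minimal QCF error-cost • Entries list the numbers of the qualitative change vectors that are inconsistent or ambiguous for each QCF

| QCF | Incons. | Amb. |
|---|---|---|
| M+(Temp) | 3 | 1 |
| M-(Temp) | 2, 4 | 1 |
| M+(Vol) | 1, 2, 3 | / |
| M-(Vol) | 4 | / |
| M+,+(Temp, Vol) | 1, 3 | 2 |
| M+,-(Temp, Vol) | / | 3, 4 |
| M-,+(Temp, Vol) | 1, 2 | 3, 4 |
| M-,-(Temp, Vol) | 4 | 2 |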
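The inconsistent and ambiguous entries can be recomputed with a short sketch. The numbering of the vectors is an assumption: vectors 1 to 4 are taken as the changes from the example (315, 56, 11.25) to each of the other four examples, which is the reading under which the counts match the slide. The helper names are hypothetical, and the error-cost itself is not reproduced here.

```python
from itertools import product

# Assumed numbering: (qTemp, qVol, qPres) as signs, relative to (315, 56, 11.25).
VECTORS = {
    1: (0, +1, -1),   # to (315, 62, 10.16)
    2: (+1, -1, +1),  # to (330, 50, 13.20)
    3: (-1, -1, +1),  # to (300, 50, 12.00)
    4: (-1, -1, -1),  # to (300, 55, 10.90)
}
ATTRS = ("Temp", "Vol")


def classify(qcf, vector):
    """qcf maps attribute index -> +1/-1; the last vector entry is the class change."""
    effects = {m * vector[i] for i, m in qcf.items() if vector[i] != 0}
    if len(effects) != 1:
        return "ambiguous"  # no relevant attribute changed, or opposing effects
    return "consistent" if effects.pop() == vector[-1] else "inconsistent"


def candidate_qcfs():
    """Yield (name, qcf) for all QCFs over Temp and Vol."""
    for i, name in enumerate(ATTRS):
        for m in (+1, -1):
            yield f"M{'+' if m > 0 else '-'}({name})", {i: m}
    for m1, m2 in product((+1, -1), repeat=2):
        signs = ",".join("+" if m > 0 else "-" for m in (m1, m2))
        yield f"M{signs}(Temp,Vol)", {0: m1, 1: m2}


def tabulate():
    """Map each QCF name to (inconsistent vector numbers, ambiguous vector numbers)."""
    table = {}
    for name, qcf in candidate_qcfs():
        kinds = {k: classify(qcf, v) for k, v in VECTORS.items()}
        table[name] = (
            [k for k, kind in kinds.items() if kind == "inconsistent"],
            [k for k, kind in kinds.items() if kind == "ambiguous"],
        )
    return table


for name, (incons, amb) in tabulate().items():
    print(f"{name:16} incons={incons} amb={amb}")
```

M+,-(Temp, Vol) is the only candidate with no inconsistent vectors, which agrees with the gas law the examples were generated from.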

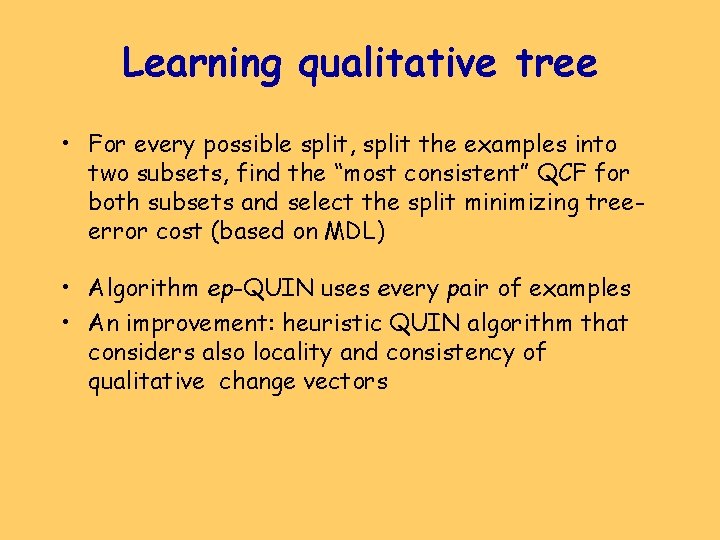

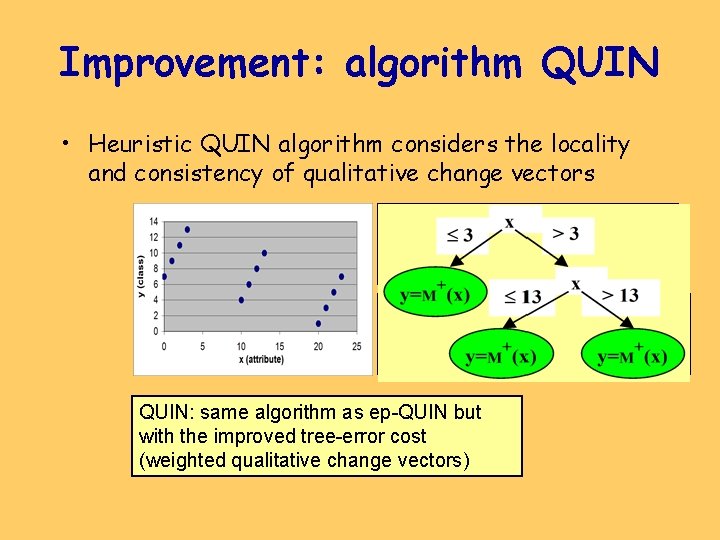

Learning qualitative tree • For every possible split, split the examples into two subsets, find the “most consistent” QCF for both subsets and select the split minimizing the tree-error cost (based on MDL) • Algorithm ep-QUIN uses every pair of examples • An improvement: the heuristic QUIN algorithm, which also considers the locality and consistency of qualitative change vectors
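The split search can be sketched for a single attribute. This is a simplified stand-in, not ep-QUIN itself: the cost used here is just the number of inconsistent example pairs rather than the MDL-based tree-error cost, only M+(x) and M-(x) are considered, and all function names and the sample data are hypothetical.

```python
from itertools import combinations


def sign(v):
    return (v > 0) - (v < 0)


def inconsistencies(examples, direction):
    """Count pairs whose class change contradicts M+(x) (direction=+1) or M-(x) (-1)."""
    bad = 0
    for (x1, z1), (x2, z2) in combinations(examples, 2):
        dx, dz = sign(x2 - x1), sign(z2 - z1)
        if dx != 0 and dz != direction * dx:
            bad += 1
    return bad


def best_qcf(examples):
    """Return (name, cost) of the QCF with the fewest inconsistent pairs."""
    costs = {
        "M+(x)": inconsistencies(examples, +1),
        "M-(x)": inconsistencies(examples, -1),
    }
    name = min(costs, key=costs.get)
    return name, costs[name]


def best_split(examples):
    """Try a threshold between each pair of adjacent x values; keep the cheapest."""
    xs = sorted({x for x, _ in examples})
    candidates = []
    for lo, hi in zip(xs, xs[1:]):
        threshold = (lo + hi) / 2
        left = [e for e in examples if e[0] < threshold]
        right = [e for e in examples if e[0] >= threshold]
        (left_qcf, left_cost), (right_qcf, right_cost) = best_qcf(left), best_qcf(right)
        candidates.append((left_cost + right_cost, threshold, left_qcf, right_qcf))
    return min(candidates)


# Hypothetical data sampled from z = x**2.
data = [(x, x * x) for x in (-3, -2, -1, 1, 2, 3)]
print(best_split(data))
```

On this data the cheapest split separates negative from positive x, with a decreasing QCF on one side and an increasing one on the other.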

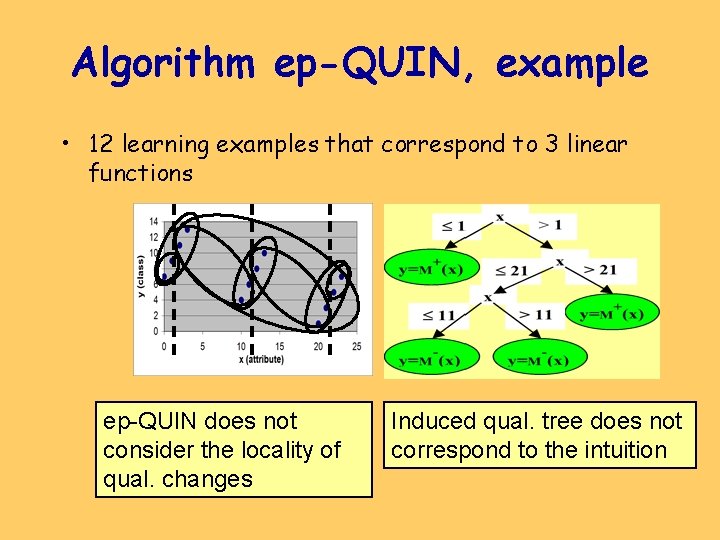

Algorithm ep-QUIN, example • 12 learning examples that correspond to 3 linear functions • ep-QUIN does not consider the locality of qualitative changes • The induced qualitative tree does not correspond to intuition

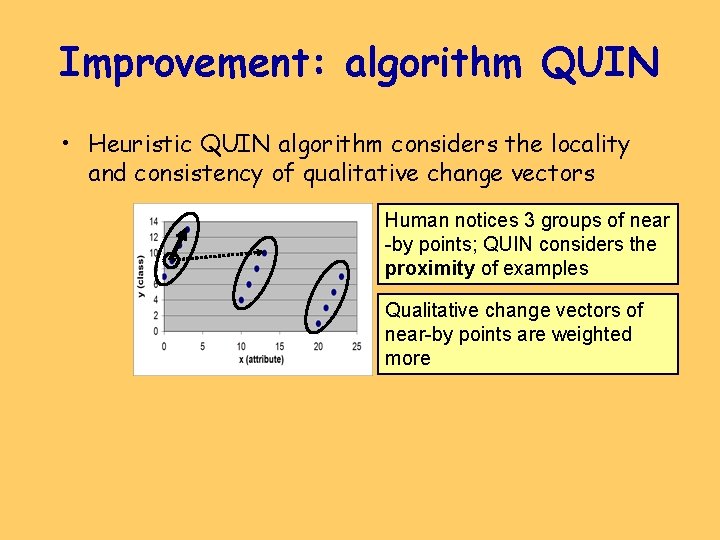

Improvement: algorithm QUIN • The heuristic QUIN algorithm considers the locality and consistency of qualitative change vectors • A human notices 3 groups of nearby points; QUIN considers the proximity of examples • Qualitative change vectors of nearby points are weighted more • QUIN considers the consistency of the class's qualitative change at the k nearest neighbors of the point • QUIN: same algorithm as ep-QUIN but with the improved tree-error cost (weighted qualitative change vectors)
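The effect of weighting nearby pairs more heavily can be illustrated with a small sketch. The Gaussian weight, the parameter `sigma`, the function name and the data are all assumptions chosen for the example. The slides do not give QUIN's actual weighting formula, and the k-nearest-neighbor consistency check is not modeled here.

```python
import math
from itertools import combinations


def sign(v):
    return (v > 0) - (v < 0)


def qcf_errors(examples, sigma=None):
    """Inconsistency of M+(x) and M-(x) over all pairs of examples.

    With sigma=None every pair counts 1 (all pairs treated equally).
    Otherwise a pair counts exp(-d**2 / (2 * sigma**2)), d being the distance in x.
    """
    errors = {"M+(x)": 0.0, "M-(x)": 0.0}
    for (x1, z1), (x2, z2) in combinations(examples, 2):
        dx, dz = sign(x2 - x1), sign(z2 - z1)
        if dx == 0:
            continue
        distance = abs(x2 - x1)
        weight = 1.0 if sigma is None else math.exp(-distance ** 2 / (2 * sigma ** 2))
        if dz != dx:
            errors["M+(x)"] += weight
        if dz != -dx:
            errors["M-(x)"] += weight
    return errors


# Two hypothetical groups of nearby points: z rises with x inside each group,
# but the second group lies lower than the first.
points = [(0, 10), (1, 11), (2, 12), (10, 0), (11, 1), (12, 2)]

unweighted = qcf_errors(points)
weighted = qcf_errors(points, sigma=2.0)
print(min(unweighted, key=unweighted.get), unweighted)
print(min(weighted, key=weighted.get), weighted)
```

Without weights the nine cross-group pairs dominate and the decreasing QCF wins; with proximity weights the within-group pairs dominate and the locally correct increasing QCF is chosen.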

Experimental evaluation • On a set of artificial domains: – Results by QUIN better than ep-QUIN – QUIN can handle noisy data – In simple domains QUIN finds qualitative relations corresponding to our intuition • QUIN in skill reconstruction: – QUIN used to induce qual. control strategies from examples of the human control performance – Experiments in the crane domain

Skill reconstruction and behavioural cloning • Motivation: – understanding of the human skill – development of an automatic controller • ML approach to skill reconstruction: learn a control strategy from the logged data of skilled human operators (execution trace). Later called behavioural cloning (Michie, 93). • Used in domains such as: – pole balancing (Michie et al., 90) – piloting (Sammut et al., 92; Camacho, 95) – container cranes (Urbančič & Bratko, 94)

Learning problem for skill reconstruction • Execution traces used as examples for ML to induce: – a control strategy (comprehensible, symbolic) – automatic controller (criterion of success) • Operator’s execution trace: – a sequence of system states and corresponding operator’s actions, logged to a file at a certain frequency

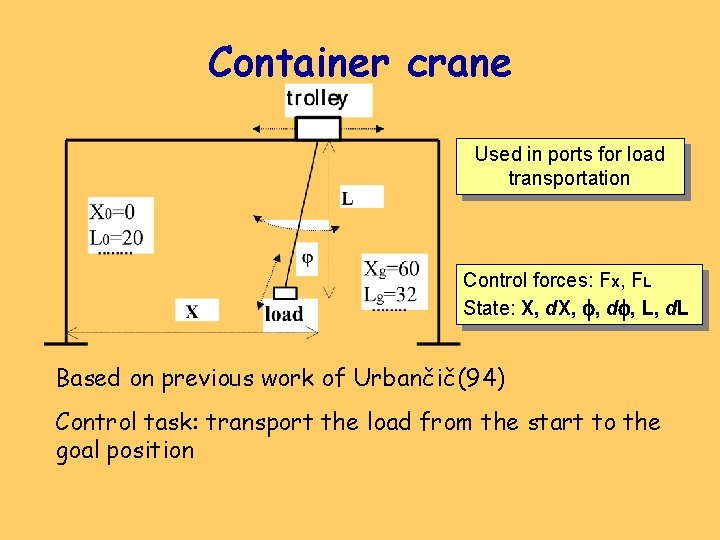

Container crane • Used in ports for load transportation • Control forces: Fx, FL • State: X, dX, Φ, dΦ, L, dL • Based on previous work of Urbančič (94) • Control task: transport the load from the start to the goal position

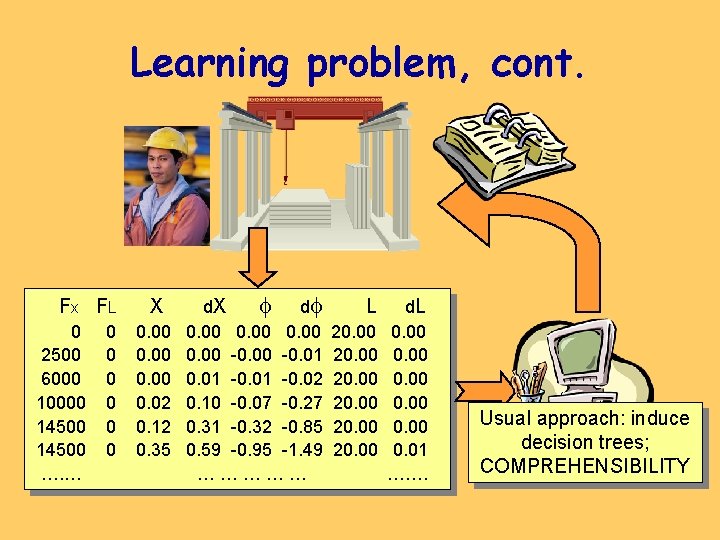

Learning problem, cont.
Execution trace excerpt (columns Fx, FL, X, dX, Φ, dΦ, L, dL):
• Fx: 0, 2500, 6000, 10000, 14500, …
• FL: …, 0, 0, 0
• X: 0.00, 0.02, 0.12, 0.35
• dX, Φ, dΦ: 0.00, -0.01, -0.02, 0.10, -0.07, -0.27, 0.31, -0.32, -0.85, 0.59, -0.95, -1.49, …
• L: 20.00; dL: 0.00, 0.01, …
Usual approach: induce decision trees; COMPREHENSIBILITY

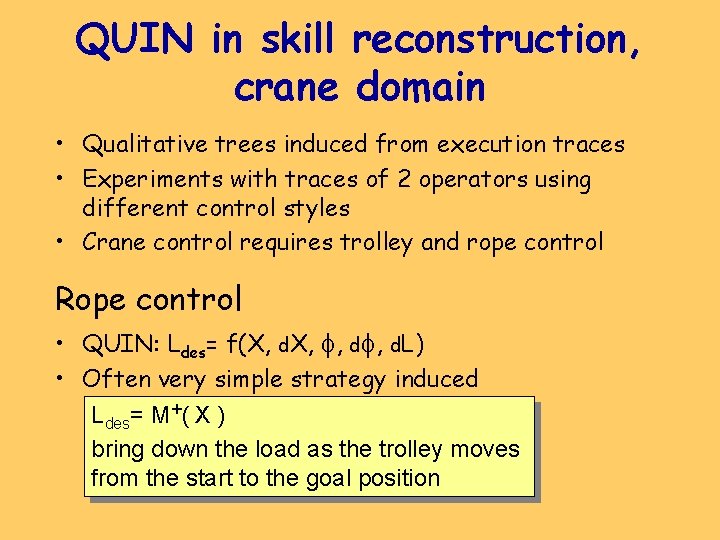

QUIN in skill reconstruction, crane domain • Qualitative trees induced from execution traces • Experiments with traces of 2 operators using different control styles • Crane control requires trolley and rope control
Rope control • QUIN: Ldes = f(X, dX, Φ, dL) • Often a very simple strategy is induced: Ldes = M+(X), i.e. bring down the load as the trolley moves from the start to the goal position

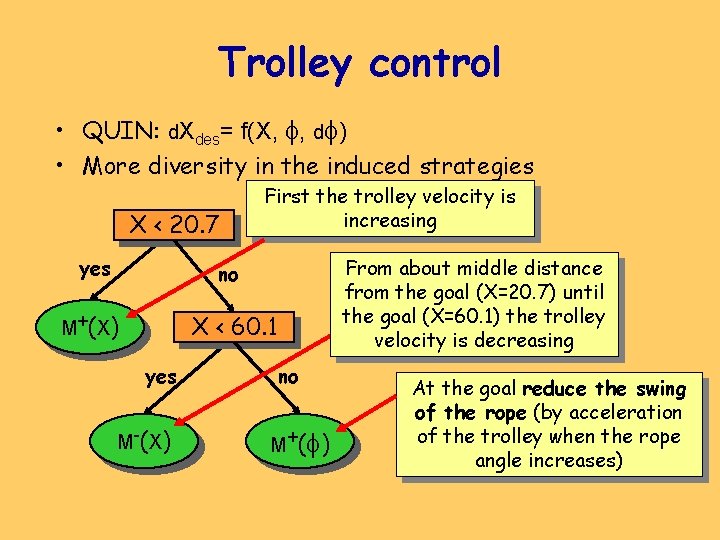

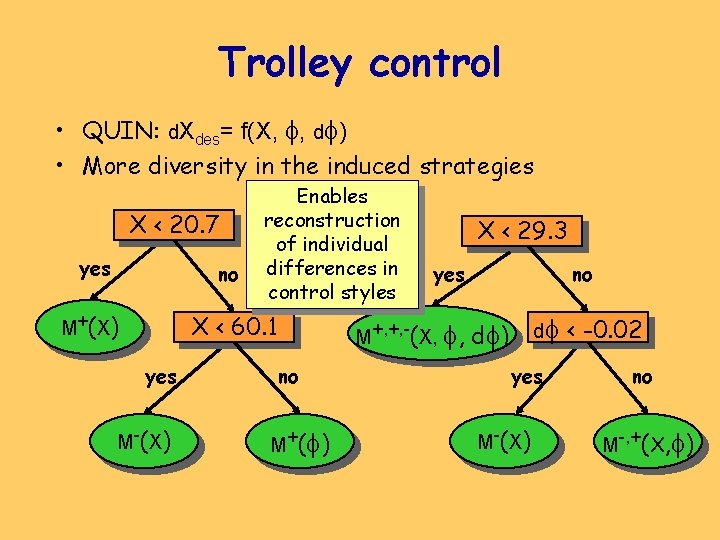

Trolley control • QUIN: dXdes = f(X, Φ, dΦ) • More diversity in the induced strategies
X < 20.7?
– yes: M+(X). First the trolley velocity is increasing
– no: X < 60.1?
  – yes: M-(X). From about middle distance from the goal (X=20.7) until the goal (X=60.1) the trolley velocity is decreasing
  – no: M+(Φ). At the goal reduce the swing of the rope (by acceleration of the trolley when the rope angle increases)
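A qualitative tree like this one can be held in a small nested structure and queried for the QCF that applies to a given state. The tuple encoding and the function name `leaf_for` are assumptions made for illustration; `Phi` stands for the rope angle.

```python
# Internal node: (attribute, threshold, yes_branch, no_branch); leaf: QCF as a string.
TROLLEY_TREE = (
    "X", 20.7,
    "M+(X)",
    ("X", 60.1, "M-(X)", "M+(Phi)"),
)


def leaf_for(tree, state):
    """Follow the splits for a state (dict of attribute values) down to a leaf QCF."""
    while not isinstance(tree, str):
        attribute, threshold, yes_branch, no_branch = tree
        tree = yes_branch if state[attribute] < threshold else no_branch
    return tree


for x in (10.0, 40.0, 61.0):
    print(x, leaf_for(TROLLEY_TREE, {"X": x}))
```

The leaf only constrains the direction in which the desired trolley velocity changes; it does not give a numeric value.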

Trolley control • QUIN: dXdes = f(X, Φ, dΦ) • More diversity in the induced strategies • Enables reconstruction of individual differences in control styles
First tree:
X < 20.7?
– yes: M+(X)
– no: X < 60.1?
  – yes: M-(X)
  – no: M+(Φ)
Second tree:
X < 29.3?
– yes: M+,+,-(X, Φ, dΦ)
– no: dΦ < -0.02?
  – yes: M-(X)
  – no: M-,+(X, Φ)

QUIN in skill reconstruction • Qualitative control strategies: – Comprehensible – Enable the reconstruction of individual differences in control styles of different operators – Define sets of quantitative strategies and can be used as spaces for controller optimization • QUIN is able to detect very subtle and important aspects of human control strategies

Further work • Qualitative simulation to generate possible explanations of a qualitative strategy • (Semi-)Qualitative reasoning to find the necessary conditions for the success of the qual. strategy • Reducing the space of admissible controllers by qualitative reasoning • QUIN is a general tool for qualitative system identification; applying QUIN in different domains
