Predicting Phrasing and Accent
Julia Hirschberg, CS 4706
9/15/2020

Why worry about accent and phrasing?
A car bomb attack on a police station in the northern Iraqi city of Kirkuk early Monday killed four civilians and wounded 10 others U.S. military officials said. A leading Shiite member of Iraq's Governing Council on Sunday demanded no more "stalling" on arranging for elections to rule this country once the U.S.-led occupation ends June 30. Abdel Aziz al-Hakim a Shiite cleric and Governing Council member said the U.S.-run coalition should have begun planning for elections months ago.
-- Loquendo

Why predict phrasing and accent?
• TTS and CTS
  – Naturalness
  – Intelligibility
• Recognition
  – Decrease perplexity
  – Modify durational predictions for words at phrase boundaries
  – Identify most ‘salient’ words
• Summarization, information extraction

How do we predict phrasing and accent?
• Default prosodic assignment from simple text analysis
  The president went to Brussels to make up with Europe.
  – Doesn’t work all that well, e.g. particles
  – Hand-built rule-based systems hard to modify or adapt to new domains
• Corpus-based approaches (Sproat et al. ’92)
  – Train prosodic variation on large hand-labeled corpora using machine learning techniques

  – Accent and phrasing decisions trained separately
  – E.g. Feat 1, Feat 2, … Acc
  – Associate prosodic labels with simple features of transcripts
    • distance from beginning or end of phrase
    • orthography: punctuation, paragraphing
    • part of speech, constituent information
  – Apply automatically learned rules when processing text
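As a rough illustration of "simple features of transcripts", the sketch below builds one feature row per word juncture. The function name `boundary_features`, the feature names, and the Penn-Treebank-style tags are assumptions made for this example, not part of any system described in these slides.

```python
from typing import Dict, List, Tuple

PUNCTUATION = {",", ".", ";", ":", "?", "!"}


def boundary_features(tokens: List[Tuple[str, str]]) -> List[Dict[str, object]]:
    """Return one feature dict per word juncture (the site after word i).

    `tokens` is a list of (token, part-of-speech) pairs. Punctuation marks
    are expected as separate tokens and are not counted as words.
    """
    words = [(w, p) for w, p in tokens if w not in PUNCTUATION]

    # For every real word, note whether punctuation follows it directly.
    followed_by_punct = []
    for i, (w, _) in enumerate(tokens):
        if w in PUNCTUATION:
            continue
        nxt = tokens[i + 1][0] if i + 1 < len(tokens) else ""
        followed_by_punct.append(nxt in PUNCTUATION)

    rows = []
    for i in range(len(words) - 1):
        rows.append({
            "left_word": words[i][0],
            "left_pos": words[i][1],          # POS before the candidate site
            "right_pos": words[i + 1][1],     # POS after the candidate site
            "dist_from_start": i + 1,         # words since sentence start
            "dist_to_end": len(words) - (i + 1),
            "punct_after": followed_by_punct[i],
        })
    return rows


# Hand-tagged example sentence (tags are an assumption for illustration).
sentence = [("They", "PRP"), ("live", "VBP"), ("in", "IN"),
            ("Butte", "NNP"), (",", ","), ("Montana", "NNP"), (".", ".")]
rows = boundary_features(sentence)
```

Each row would then be paired with a hand-assigned label (boundary or no boundary) to form one training instance.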

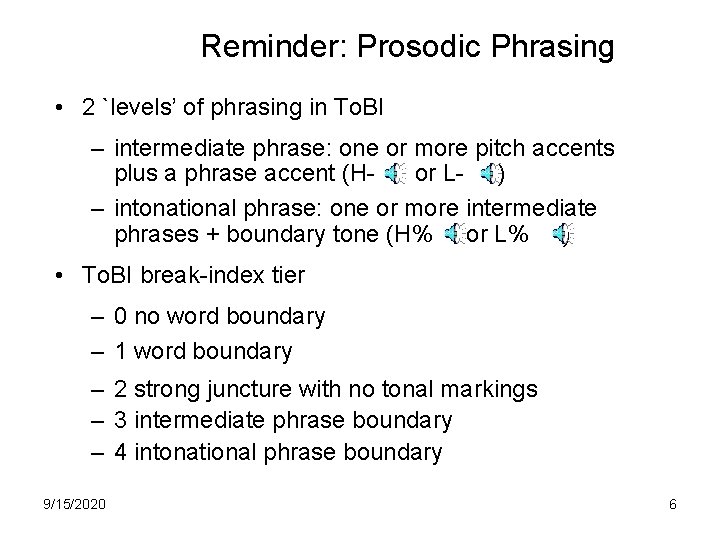

Reminder: Prosodic Phrasing
• 2 ‘levels’ of phrasing in ToBI
  – intermediate phrase: one or more pitch accents plus a phrase accent (H- or L-)
  – intonational phrase: one or more intermediate phrases + boundary tone (H% or L%)
• ToBI break-index tier
  – 0 no word boundary
  – 1 word boundary
  – 2 strong juncture with no tonal markings
  – 3 intermediate phrase boundary
  – 4 intonational phrase boundary
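For a boundary classifier, the five break indices are often collapsed into a binary label. The sketch below encodes the tier as an enum and treats indices 3 and 4 as phrase boundaries. That threshold is an assumption for illustration, and the names `BreakIndex` and `is_phrase_boundary` are hypothetical.

```python
from enum import IntEnum


class BreakIndex(IntEnum):
    """ToBI break-index tier values as listed above."""
    NO_WORD_BOUNDARY = 0
    WORD_BOUNDARY = 1
    STRONG_JUNCTURE_NO_TONE = 2
    INTERMEDIATE_PHRASE = 3
    INTONATIONAL_PHRASE = 4


def is_phrase_boundary(index: int,
                       threshold: BreakIndex = BreakIndex.INTERMEDIATE_PHRASE) -> bool:
    """Collapse a break index to a binary label (assumed threshold: >= 3)."""
    # BreakIndex(index) raises ValueError for values outside 0..4.
    return BreakIndex(index) >= threshold


# Break indices after each word of a hypothetical five-word utterance.
breaks = [1, 1, 3, 1, 4]
labels = [is_phrase_boundary(b) for b in breaks]
```

Passing `threshold=BreakIndex.INTONATIONAL_PHRASE` would instead predict only full intonational phrase boundaries.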

What are the indicators of phrasing in speech?
• Timing
  – Pause
  – Lengthening
• F0 changes
• Vocal fry/glottalization

What linguistic and contextual features are linked to phrasing?
• Syntactic information
  – Abney ’91 chunking
  – Steedman ’90, Oehrle ’91 CCGs …
  – Which ‘chunks’ tend to stick together?
  – Which ‘chunks’ tend to be separated intonationally?
    • Largest constituent dominating w(i) but not w(j)
      [The man in the moon] |? [looks down on you]
    • Smallest constituent dominating w(i), w(j)
      The man [in |? moon]
  – Part-of-speech of words around potential boundary site
• Sentence-level information
  – Length of sentence
    This is a very long sentence which thus might have a lot of phrase boundaries in it don’t you think?

    This isn’t.
• Orthographic information
  – They live in Butte, Montana.
• Word co-occurrence information
  Vampire bat, …powerful but…
• Are words on each side accented or not?
  The cat in |? the
• Where is the last phrase boundary?
  He asked for pills | but |?
• What else?
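The two constituent-based features (largest constituent dominating w(i) but not w(j), and smallest constituent dominating both) can be computed from labeled spans. The sketch below assumes j = i + 1, represents a parse as `(label, start, end)` spans with an exclusive end, and uses a hand-made bracketing of "The man in the moon looks down on you". Both the representation and the bracketing are assumptions for illustration.

```python
from typing import List, Optional, Tuple

Span = Tuple[str, int, int]  # (label, start, end), end exclusive


def smallest_dominating_both(spans: List[Span], i: int) -> Optional[Span]:
    """Smallest constituent containing both word i and word i + 1."""
    covering = [s for s in spans if s[1] <= i and i + 1 < s[2]]
    return min(covering, key=lambda s: s[2] - s[1], default=None)


def largest_left_only(spans: List[Span], i: int) -> Optional[Span]:
    """Largest constituent containing word i but not word i + 1."""
    # A span contains word i but not i + 1 exactly when it ends right after i.
    ending_here = [s for s in spans if s[1] <= i and s[2] == i + 1]
    return max(ending_here, key=lambda s: s[2] - s[1], default=None)


words = "The man in the moon looks down on you".split()
# Hand-made bracketing (an assumption, not parser output).
spans = [
    ("S", 0, 9), ("NP", 0, 5), ("NP", 0, 2), ("PP", 2, 5),
    ("NP", 3, 5), ("VP", 5, 9), ("PP", 7, 9),
]

after_moon = 4  # candidate site between "moon" and "looks"
after_in = 2    # candidate site between "in" and "the"
```

At the site after "moon" the largest left-only constituent is the five-word subject NP, which makes a boundary plausible. At the site after "in" the smallest shared constituent is the short PP, and no multi-word constituent ends there, which makes a boundary less plausible.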

Statistical learning methods
• Classification and regression trees (CART)
• Rule induction (Ripper)
• Support Vector Machines
• HMMs, Neural Nets
• All take vector of independent variables and one dependent (predicted) variable, e.g. ‘there’s a phrase boundary here’ or ‘there’s not’
• Input from hand-labeled dependent variable and automatically extracted independent variables
• Result can be integrated into TTS text processor
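To make the "vector of independent variables, one dependent variable" setup concrete, here is a minimal sketch of a one-rule learner: it picks the single feature whose value-to-majority-label table makes the fewest training errors. This is a deliberately tiny stand-in, not CART or Ripper, and the training rows are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Hashable, List, Tuple

Row = Dict[str, Hashable]
Model = Tuple[str, Dict[Hashable, bool], bool]


def train_one_rule(rows: List[Row], labels: List[bool]) -> Model:
    """Choose the feature whose majority-label table has the fewest errors."""
    default = Counter(labels).most_common(1)[0][0]  # fallback for unseen values
    best = None
    for feature in sorted(rows[0]):
        counts = defaultdict(Counter)
        for row, label in zip(rows, labels):
            counts[row[feature]][label] += 1
        table = {value: c.most_common(1)[0][0] for value, c in counts.items()}
        errors = sum(table[row[feature]] != label
                     for row, label in zip(rows, labels))
        if best is None or errors < best[0]:
            best = (errors, feature, table)
    _, feature, table = best
    return feature, table, default


def predict(model: Model, row: Row) -> bool:
    """Apply the learned rule: True means 'there's a phrase boundary here'."""
    feature, table, default = model
    return table.get(row[feature], default)


# Invented training data: independent variables per juncture, plus the
# hand-labeled dependent variable (boundary or not).
train_rows = [
    {"punct_after": False, "right_pos": "IN"},
    {"punct_after": False, "right_pos": "NN"},
    {"punct_after": True, "right_pos": "NN"},
    {"punct_after": True, "right_pos": "CC"},
    {"punct_after": False, "right_pos": "CC"},
    {"punct_after": False, "right_pos": "IN"},
]
train_labels = [False, False, True, True, False, False]

model = train_one_rule(train_rows, train_labels)
```

On this toy data the learner selects `punct_after`, so `predict(model, row)` reduces to "predict a boundary wherever punctuation follows". Real systems combine many features, which is where tree and rule learners earn their keep.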

How do we evaluate the result?
• How to define a Gold Standard?
  – Natural speech corpus
  – Multi-speaker/same text
  – Subjective judgments
• No simple mapping from text to prosody
  – Many variants can be acceptable
  The car was driven to the border last spring while its owner an elderly man was taking an extended vacation in the south of France.
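Once a gold standard is fixed, one common way to score predicted boundaries is accuracy together with precision, recall, and F1 over the boundary class. The sketch below assumes a single reference labeling per juncture, which sidesteps the "many acceptable variants" problem raised above. The function name and the example labels are invented for illustration.

```python
from typing import Dict, Sequence


def boundary_scores(gold: Sequence[bool], predicted: Sequence[bool]) -> Dict[str, float]:
    """Score predicted boundaries against one gold labeling, site by site."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must cover the same junctures")
    pairs = list(zip(gold, predicted))
    tp = sum(1 for g, p in pairs if g and p)        # boundary found
    fp = sum(1 for g, p in pairs if not g and p)    # spurious boundary
    fn = sum(1 for g, p in pairs if g and not p)    # missed boundary
    correct = sum(1 for g, p in pairs if g == p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    accuracy = correct / len(pairs) if pairs else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Invented labels for eight junctures.
gold = [False, False, True, False, True, False, False, True]
pred = [False, True, True, False, False, False, False, True]
scores = boundary_scores(gold, pred)
```

Because most junctures are not boundaries, accuracy alone can look high for a system that rarely predicts a boundary, so precision and recall on the boundary class are worth reporting alongside it.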

More Recent Results
• Incremental improvements continue:
  – Adding higher-accuracy parsing (Koehn et al. ’00)
    • Collins ’99 parser
• Different learning algorithms (Schapire & Singer ’00)
• Different syntactic representations: relational? Tree-based?
• Ranking vs. classification?
• Rules always impoverished
• Where to next?

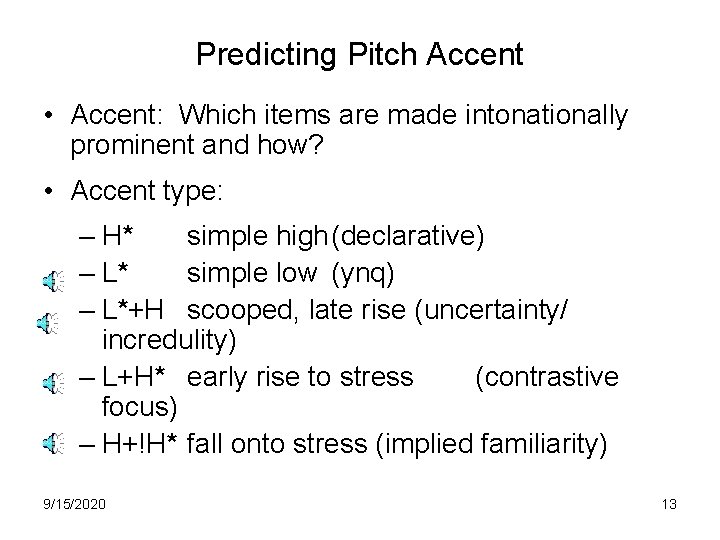

Predicting Pitch Accent
• Accent: Which items are made intonationally prominent and how?
• Accent type:
  – H* simple high (declarative)
  – L* simple low (ynq)
  – L*+H scooped, late rise (uncertainty/incredulity)
  – L+H* early rise to stress (contrastive focus)
  – H+!H* fall onto stress (implied familiarity)

What are the indicators of accent?
• F0 excursion
• Durational lengthening
• Voice quality
• Vowel quality
• Loudness

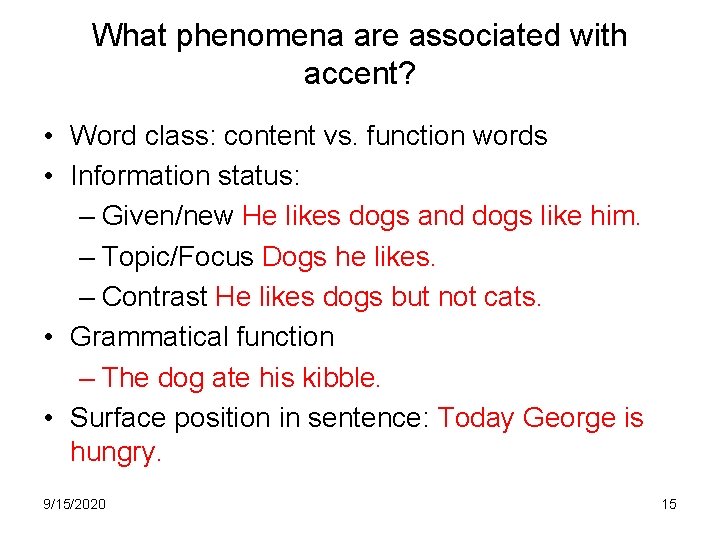

What phenomena are associated with accent?
• Word class: content vs. function words
• Information status:
  – Given/new: He likes dogs and dogs like him.
  – Topic/Focus: Dogs he likes.
  – Contrast: He likes dogs but not cats.
• Grammatical function
  – The dog ate his kibble.
• Surface position in sentence: Today George is hungry.

• Association with focus:
  – John only introduced Mary to Sue.
• Semantic parallelism
  – John likes beer but Mary prefers wine.

How can we capture such information simply?
• POS window
• Position of candidate word in sentence
• Location of prior phrase boundary
• Pseudo-given/new
• Location of word in complex nominal and stress prediction for that nominal
  City hall, parking lot, city hall parking lot
• Word co-occurrence
  Blood vessel, blood orange
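A few of these features are cheap to compute from tagged text. The sketch below extracts a POS window, the word's position, and a pseudo-given/new flag, where "given" is approximated as "the same lowercased word form has already occurred". The function name, the feature names, the exact-match definition of givenness, and the hand-assigned tags are all assumptions for illustration.

```python
from typing import Dict, List, Tuple

PAD = "<pad>"  # placeholder tag outside the sentence


def accent_features(tagged: List[Tuple[str, str]],
                    window: int = 1) -> List[Dict[str, object]]:
    """Return one feature dict per word for accent prediction."""
    seen = set()
    rows = []
    n = len(tagged)
    for i, (word, _pos) in enumerate(tagged):
        key = word.lower()
        # POS tags of the word and its neighbours, padded at the edges.
        pos_window = tuple(
            tagged[j][1] if 0 <= j < n else PAD
            for j in range(i - window, i + window + 1)
        )
        rows.append({
            "word": word,
            "pos_window": pos_window,
            "position": i + 1,
            "relative_position": (i + 1) / n,
            "pseudo_given": key in seen,  # exact repeat of an earlier word
        })
        seen.add(key)
    return rows


# Hand-tagged example (tags are an assumption for illustration).
tagged = [("He", "PRP"), ("likes", "VBZ"), ("dogs", "NNS"), ("and", "CC"),
          ("dogs", "NNS"), ("like", "VBP"), ("him", "PRP")]
rows = accent_features(tagged)
```

Exact matching marks the second "dogs" as given but misses the link between "likes" and "like". Matching on stems or lemmas would catch that, at the cost of some false matches.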

Current Research
• Concept-to-Speech (CTS)
  – Pan & McKeown ’99
  – systems should be able to specify “better” prosody: the system knows what it wants to say and can specify how
  – New features
• Newer machine learning methods:
  – Boosting and bagging (Sun ’02)
  – Combine text and acoustic cues for ASR
  – Co-training

MAGIC
• MM system for presenting cardiac patient data
  – Developed at Columbia by McKeown and colleagues in conjunction with Columbia Presbyterian Medical Center to automate postoperative status reporting for bypass patients
  – Uses mostly traditional NLG hand-developed components
  – Generate text, then annotate prosodically
  – Corpus-trained prosodic assignment component

• Corpus: written and oral patient reports
  – 50 min multi-speaker, spontaneous + 11 min single speaker, read
  – 1.24M word text corpus of discharge summaries
  – Transcribed, ToBI labeled
  – Generator features labeled/extracted:
    • syntactic function

    • p.o.s.
    • semantic category
    • semantic ‘informativeness’ (rarity in corpus)
    • semantic constituent boundary location and length
    • salience
    • given/new
    • focus
    • theme/rheme
    • ‘importance’
    • ‘unexpectedness’

  – Very hard to label features
• Results: new features to specify TTS prosody
  – Of CTS-specific features only semantic informativeness (likeliness of occurring in a corpus) useful so far
  – Looking at context, word collocation for accent placement helps predict accent
  – RED CELL (less predictable) vs. BLOOD cell (more)
  – Most predictable words are accented less frequently (40-46%) and least predictable more (73-80%)
  – Unigram+bigram model predicts accent status w/77% (+/- .51) accuracy
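One simple way to quantify "informativeness" as rarity in a corpus is the negative log of a word's smoothed relative frequency. Whether this matches the exact measure used in MAGIC is not stated here, so treat the sketch below as an assumption. The class name, the add-one smoothing, and the toy token list are invented for illustration.

```python
import math
from collections import Counter
from typing import Iterable


class UnigramInformativeness:
    """Score words by rarity: rarer words get higher scores."""

    def __init__(self, corpus_tokens: Iterable[str]):
        self.counts = Counter(t.lower() for t in corpus_tokens)
        self.total = sum(self.counts.values())
        self.vocab = len(self.counts)

    def score(self, word: str) -> float:
        # Add-one smoothing, with one extra slot reserved for unseen words.
        count = self.counts[word.lower()] + 1
        return -math.log(count / (self.total + self.vocab + 1))


# Invented toy corpus standing in for a large domain text collection.
toy_corpus = ("the patient received blood the patient received red blood "
              "cell transfusion the blood pressure").split()
model = UnigramInformativeness(toy_corpus)

red_score = model.score("red")
blood_score = model.score("blood")
unseen_score = model.score("stent")
```

In this toy corpus "red" is rarer than "blood" and so scores higher, in line with the RED CELL vs. BLOOD cell contrast above. A bigram extension would condition each word on its neighbor to capture collocation effects.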

Future Intonation Prediction: Beyond Phrasing and Accent
• Assigning affect (emotion)
• Personalizing TTS
• Conveying personality, charisma?

Next Class
• Look at another phenomenon we’d like to capture in TTS systems:
  – Information status
• Homework 3a is due on March 1!
