Neural Graph Collaborative Filtering 2019 24822 Xiang Wang

Neural Graph Collaborative Filtering
Presenter: 2019-24822 김정우
Xiang Wang¹, Xiangnan He², Meng Wang³, Fuli Feng¹, Tat-Seng Chua¹
¹ National University of Singapore, Singapore
² University of Science and Technology of China, China
³ Hefei University of Technology, China

Table of Contents
§ Introduction
§ Related work
§ Method
  § Overview
  § Embedding & Propagation Layer
  § Prediction
  § Optimization
§ Discussion
§ Experiments
  § Datasets & baseline methods
  § Evaluation metric
  § Study of NGCF
  § Effect of High-order Connectivity
§ Conclusion & Future work
§ Q&A

Introduction
§ Two key components of collaborative filtering (CF)
  1) Embedding: maps users and items to vector representations
  2) Interaction modeling: predicts interactions from those embeddings
  Example (MF): user and item IDs are embedded as vectors, and the interaction is modeled by their inner product.
§ Weakness
  § Existing methods lack an explicit encoding of the crucial collaborative signal.
  § They build the embedding function from descriptive features only (e.g., ID and attributes), without considering user-item interactions; the interactions are used only to define the objective function for model training.
§ Solution
  § Encode the collaborative signal through high-order connectivity in the user-item interaction graph.

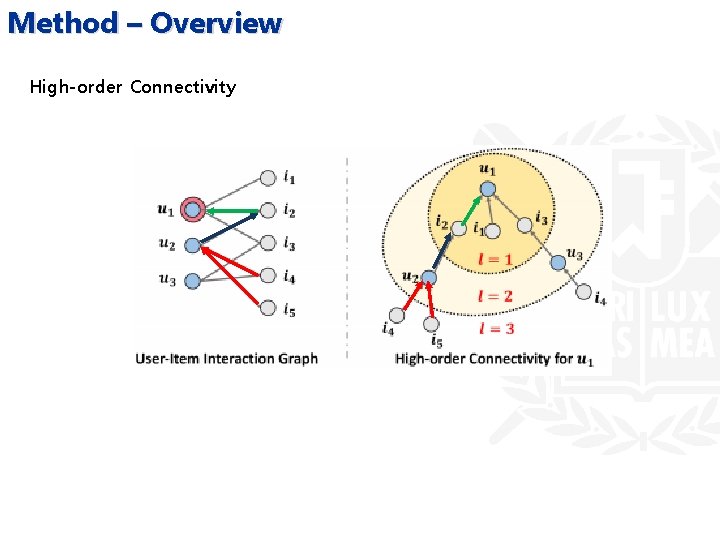
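To make the two CF components concrete, here is a minimal NumPy sketch of MF, assuming randomly initialized embedding tables and hypothetical toy sizes. Note that the embedding step is a pure ID lookup: interaction data would enter only through the training loss, which is exactly the weakness described in the Introduction.

```python
import numpy as np

# Hypothetical toy sizes, chosen only for illustration.
NUM_USERS, NUM_ITEMS, DIM = 3, 4, 8
rng = np.random.default_rng(0)

# 1) Embedding: one trainable vector per user ID and per item ID.
#    No interaction data is used here; it would only appear in the loss.
user_emb = rng.normal(scale=0.1, size=(NUM_USERS, DIM))
item_emb = rng.normal(scale=0.1, size=(NUM_ITEMS, DIM))


def mf_score(u: int, i: int) -> float:
    """2) Interaction modeling in MF: inner product of the two embeddings."""
    return float(user_emb[u] @ item_emb[i])


# The same scores for every user-item pair, as a NUM_USERS x NUM_ITEMS matrix.
all_scores = user_emb @ item_emb.T
print(all_scores.shape)  # (3, 4)
```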

Method – Overview: High-order Connectivity

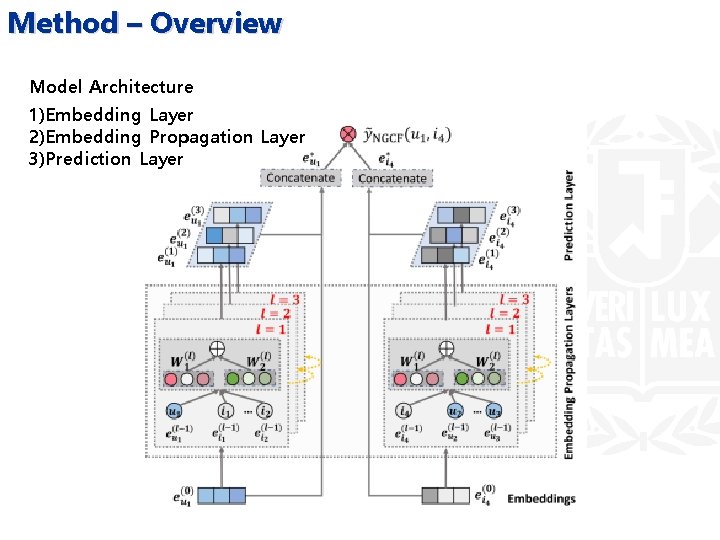
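High-order connectivity can be illustrated by counting paths in the bipartite interaction graph. The sketch below uses a small hypothetical interaction matrix R (not data from the paper). The entry (R Rᵀ R)[u, i] counts the length-3 walks u – i' – u' – i; for an item that u has not interacted with, these are exactly the distinct paths, so an unobserved item reached by more paths is, under this intuition, a stronger candidate for u.

```python
import numpy as np

# Hypothetical toy interaction matrix R (rows: users u1..u3, cols: items i1..i5).
R = np.array([
    [1, 1, 1, 0, 0],  # u1 interacted with i1, i2, i3
    [0, 1, 0, 1, 1],  # u2 interacted with i2, i4, i5
    [0, 0, 1, 1, 0],  # u3 interacted with i3, i4
])

# user -> item -> user -> item: number of length-3 walks in the bipartite graph.
walks3 = R @ R.T @ R

u1 = 0
unobserved = np.where(R[u1] == 0)[0]
for i in unobserved:
    print(f"u1 -> i{i + 1}: {walks3[u1, i]} length-3 path(s)")
# u1 -> i4: 2 length-3 path(s)
# u1 -> i5: 1 length-3 path(s)
```

Here i4 is reached through both u2 and u3, while i5 is reached only through u2.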

Method – Overview: Model Architecture
1) Embedding layer
2) Embedding propagation layers
3) Prediction layer

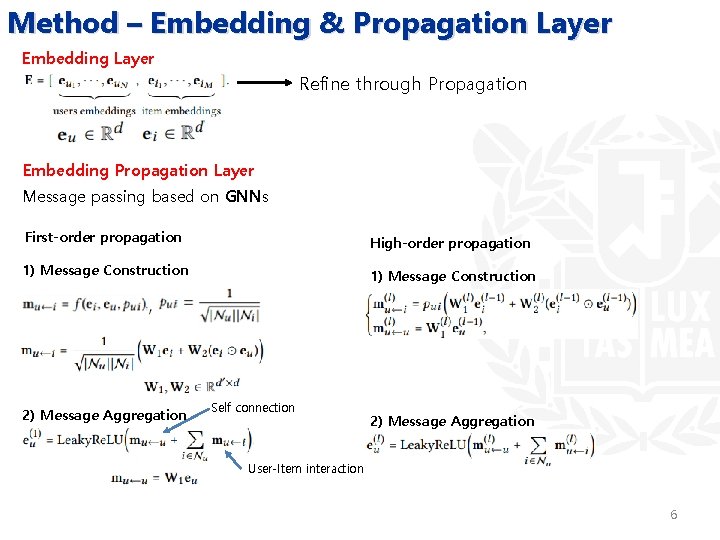

Method – Embedding & Propagation Layer
§ Embedding layer: initial user and item embeddings, which are then refined through propagation
§ Embedding propagation layer: message passing based on GNNs
  § First-order propagation
    1) Message construction
    2) Message aggregation: combines the self-connection message with the messages from user-item interactions
  § High-order propagation: stacking multiple propagation layers

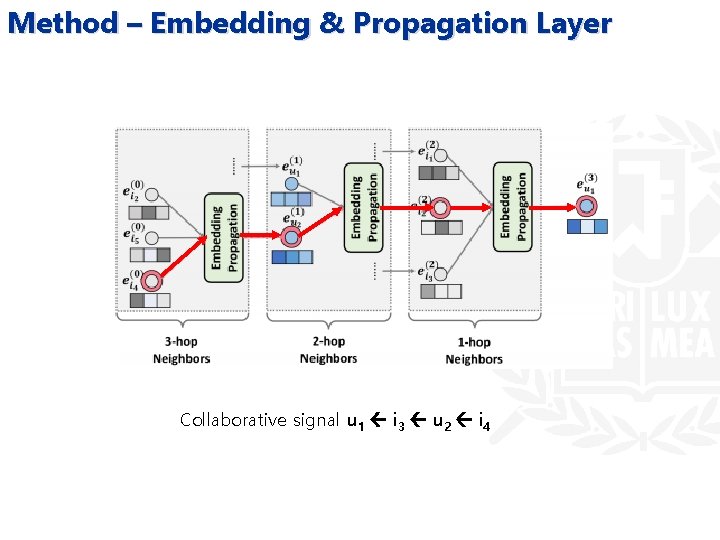
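The first-order step can be sketched for a single user u. In the NGCF formulation, the message from item i is m_{u←i} = p_ui (W1 e_i + W2 (e_i ⊙ e_u)) with p_ui = 1/sqrt(|N_u||N_i|), the self-connection message is m_{u←u} = W1 e_u, and aggregation applies LeakyReLU to the sum of all messages. The function and argument names are hypothetical, and the LeakyReLU slope of 0.2 is an assumption.

```python
import numpy as np


def leaky_relu(x: np.ndarray, slope: float = 0.2) -> np.ndarray:
    # Slope 0.2 is an assumed default, not a value taken from the slides.
    return np.where(x > 0, x, slope * x)


def propagate_user(e_u, item_embs, deg_u, item_degs, W1, W2):
    """One first-order propagation step for a single user u (hypothetical helper).

    e_u:       (d,) embedding of user u
    item_embs: list of (d,) embeddings of the items in N_u
    deg_u:     |N_u|;  item_degs: list of |N_i| for those items
    W1, W2:    (d', d) trainable weight matrices
    """
    # Self-connection message: m_{u<-u} = W1 e_u
    total = W1 @ e_u
    for e_i, deg_i in zip(item_embs, item_degs):
        # Graph Laplacian norm p_ui = 1 / sqrt(|N_u| |N_i|)
        p_ui = 1.0 / np.sqrt(deg_u * deg_i)
        # Message construction: m_{u<-i} = p_ui (W1 e_i + W2 (e_i * e_u))
        total = total + p_ui * (W1 @ e_i + W2 @ (e_i * e_u))
    # Message aggregation: LeakyReLU over the sum of all messages
    return leaky_relu(total)


# Toy check: W1 = I, W2 = 0, one neighbor with |N_u| = 1, |N_i| = 4 (p_ui = 0.5).
e_u = np.array([1.0, 1.0])
e_i = np.array([1.0, -3.0])
out = propagate_user(e_u, [e_i], 1, [4], np.eye(2), np.zeros((2, 2)))
print(out)  # [ 1.5 -0.1]
```

High-order propagation repeats this step: layer l uses its own W1^(l) and W2^(l) and takes the layer-(l−1) embeddings of u and its neighbors as input, so after l layers a node has received information from nodes up to l hops away.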

Method – Embedding & Propagation Layer: Collaborative Signal (nodes shown: u1, i3, u2, i4)

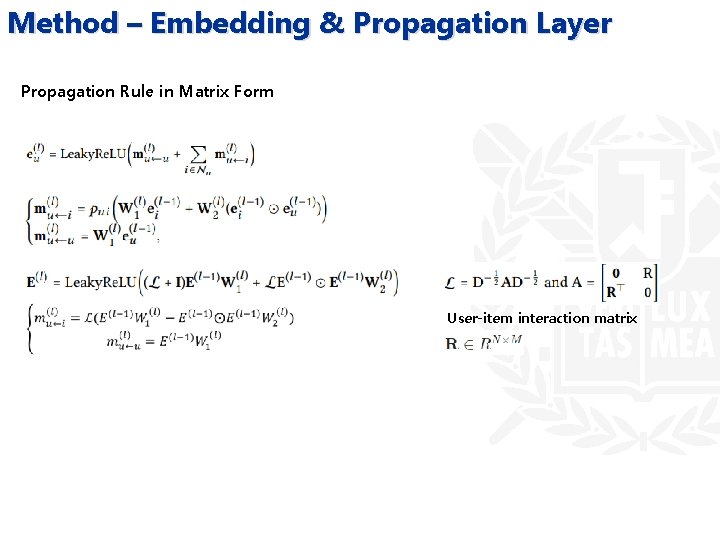

Method – Embedding & Propagation Layer: Propagation Rule in Matrix Form (built from the user-item interaction matrix)

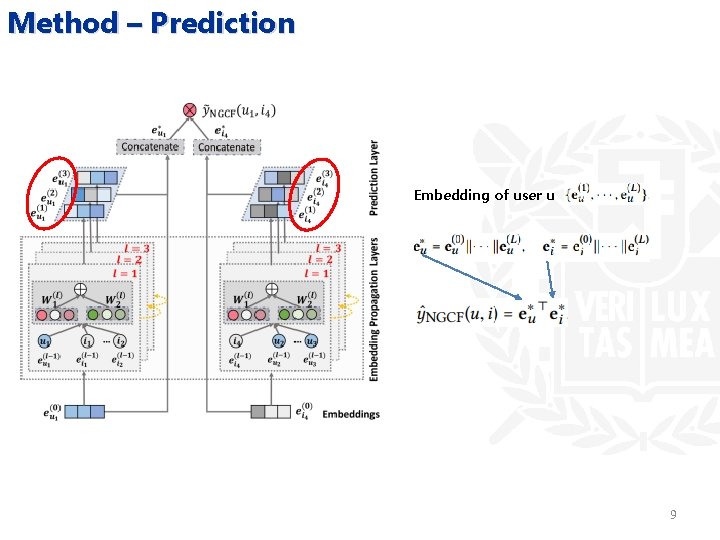
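The matrix form applies the same rule to all users and items at once: E^(l) = LeakyReLU((L + I) E^(l−1) W1^(l) + (L E^(l−1) ⊙ E^(l−1)) W2^(l)), where A = [[0, R], [Rᵀ, 0]] is the adjacency matrix built from the interaction matrix R, D is its diagonal degree matrix, and the Laplacian is L = D^{−1/2} A D^{−1/2}. The sketch below assumes dense NumPy arrays, row-vector embeddings (one row per node, users first, then items), and a hypothetical LeakyReLU slope of 0.2; a practical implementation would use sparse matrices.

```python
import numpy as np


def leaky_relu(x: np.ndarray, slope: float = 0.2) -> np.ndarray:
    # Slope 0.2 is an assumption.
    return np.where(x > 0, x, slope * x)


def build_laplacian(R: np.ndarray) -> np.ndarray:
    """L = D^{-1/2} A D^{-1/2} with A = [[0, R], [R^T, 0]] (dense, for illustration)."""
    n_users, n_items = R.shape
    n = n_users + n_items
    A = np.zeros((n, n))
    A[:n_users, n_users:] = R
    A[n_users:, :n_users] = R.T
    deg = A.sum(axis=1)
    # Isolated nodes (degree 0) get a zero row/column instead of dividing by zero.
    inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    return inv_sqrt[:, None] * A * inv_sqrt[None, :]


def propagation_layer(lap, E, W1, W2):
    """E_next = LeakyReLU((L + I) E W1 + (L E * E) W2); rows = users, then items."""
    LE = lap @ E
    return leaky_relu((LE + E) @ W1 + (LE * E) @ W2)


# Usage on a hypothetical 2-user x 3-item interaction matrix.
R = np.array([[1, 1, 0],
              [0, 1, 1]])
rng = np.random.default_rng(0)
d = 4
E0 = rng.normal(size=(R.shape[0] + R.shape[1], d))
W1 = rng.normal(size=(d, d))
W2 = rng.normal(size=(d, d))

lap = build_laplacian(R)
E1 = propagation_layer(lap, E0, W1, W2)
print(E1.shape)  # (5, 4)
```

Row u of the result equals the per-node rule: (L + I) E W1 contributes the self-connection plus the p_ui-weighted item messages, and (L E ⊙ E) W2 contributes the element-wise interaction terms.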

Method – Prediction: Embedding of user u

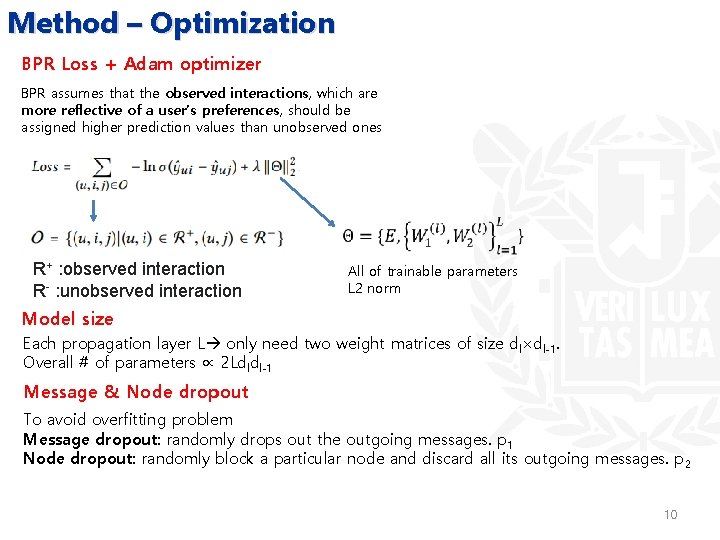
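In NGCF the representations from all layers are concatenated, e_u* = e_u^(0) ‖ … ‖ e_u^(L) (likewise for items), and the preference score is the inner product ŷ(u, i) = e_u*ᵀ e_i*. A minimal sketch, assuming each layer's output is a NumPy array with users in the first rows and items after them; the helper names are hypothetical.

```python
import numpy as np


def final_embeddings(layer_outputs):
    """Concatenate [E^(0), E^(1), ..., E^(L)] along the feature axis."""
    return np.concatenate(layer_outputs, axis=1)


def predict(E_star: np.ndarray, n_users: int, u: int, i: int) -> float:
    """y_hat(u, i) = <e_u*, e_i*>; item i is stored at row n_users + i."""
    return float(E_star[u] @ E_star[n_users + i])


# Toy example: 1 user + 2 items; E^(0) has 2 dims and E^(1) has 3 dims.
E0 = np.ones((3, 2))
E1 = np.array([[1.0, 1.0, 1.0],
               [0.0, 0.0, 0.0],
               [2.0, 2.0, 2.0]])
E_star = final_embeddings([E0, E1])
print(E_star.shape)              # (3, 5)
print(predict(E_star, 1, 0, 1))  # 8.0
```

Concatenation adds no parameters and keeps the contribution of each propagation depth separate, and it also works when layers have different output sizes d_l.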

Method – Optimization
§ Loss: BPR loss, optimized with Adam
  BPR assumes that observed interactions, which better reflect a user's preferences, should be assigned higher prediction values than unobserved ones.
  R+: observed interactions; R−: unobserved interactions; Θ: all trainable parameters, regularized with the L2 norm.
§ Model size
  Each propagation layer l needs only two weight matrices of size d_l × d_{l−1}, so the propagation layers add only 2·L·d_l·d_{l−1} parameters in total (L = number of layers).
§ Message & node dropout (to avoid overfitting)
  Message dropout: randomly drops outgoing messages with probability p1.
  Node dropout: randomly blocks a particular node and discards all its outgoing messages, with probability p2.
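The objective and the two dropout schemes can be sketched in NumPy as follows. The BPR term sums −ln σ(ŷ_ui − ŷ_uj) over triples with (u, i) ∈ R+ and (u, j) ∈ R−, plus λ‖Θ‖²₂. The function names, the default λ, and the inverted-dropout rescaling by 1/(1 − p1) are assumptions for illustration; node dropout is modeled here by zeroing the Laplacian columns of blocked nodes, which removes every message they send. The parameter-count helper follows the slide's 2·d_l·d_{l−1} per layer (weights only, no biases).

```python
import numpy as np


def bpr_loss(pos_scores, neg_scores, params, lam=1e-5):
    """sum over (u, i, j) of -ln sigmoid(y_ui - y_uj), plus lam * ||Theta||_2^2."""
    diff = np.asarray(pos_scores) - np.asarray(neg_scores)
    # -ln sigmoid(x) = ln(1 + exp(-x)); logaddexp keeps it numerically stable.
    data_term = np.sum(np.logaddexp(0.0, -diff))
    reg_term = lam * sum(np.sum(p ** 2) for p in params)
    return float(data_term + reg_term)


def message_dropout(messages, p1, rng):
    """Drop each outgoing message entry with probability p1 (assumes p1 < 1)."""
    keep = rng.random(messages.shape) >= p1
    # Inverted dropout: rescale survivors so the expected value is unchanged.
    return messages * keep / (1.0 - p1)


def node_dropout(lap, p2, rng):
    """Block each node with probability p2 by zeroing its column of L,
    so none of its outgoing messages reach any neighbor."""
    keep = rng.random(lap.shape[1]) >= p2
    return lap * keep[None, :]


def propagation_param_count(dims):
    """Weights added by the propagation layers: sum over l of 2 * d_l * d_{l-1}."""
    return sum(2 * d_prev * d_cur for d_prev, d_cur in zip(dims[:-1], dims[1:]))


print(propagation_param_count([64, 64, 64, 64]))  # 24576 (L = 3, all d_l = 64)
```

In a training loop, both dropout masks would be resampled at every step and disabled at test time.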

Discussion: NGCF generalizes SVD++

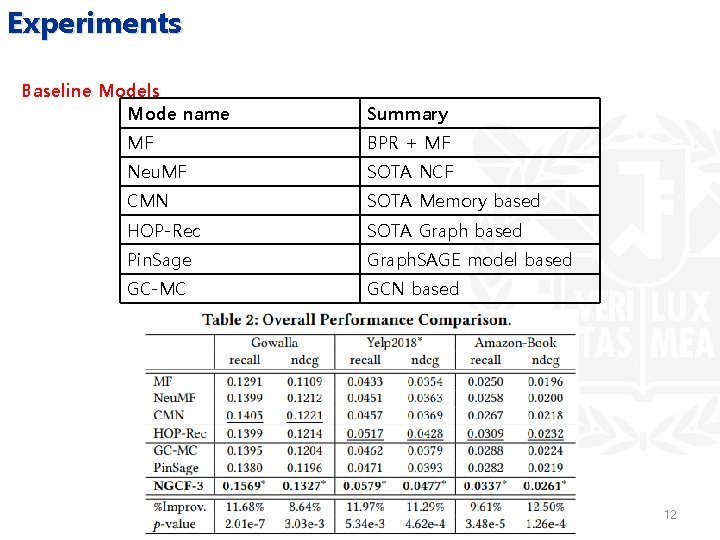
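A sketch of the argument, assuming NGCF is reduced to a single propagation layer (L = 1) with the transformation matrices and the nonlinear activation removed, and with e_u^(1) and e_i^(1) used directly as the final representations:

```latex
\mathbf{e}_u^{(1)} = \mathbf{e}_u + \sum_{i' \in \mathcal{N}_u} p_{ui'}\,\mathbf{e}_{i'},
\qquad
\mathbf{e}_i^{(1)} = \mathbf{e}_i + \sum_{u' \in \mathcal{N}_i} p_{iu'}\,\mathbf{e}_{u'}

\hat{y}_{\text{NGCF-SVD}}(u, i) = \big(\mathbf{e}_u^{(1)}\big)^{\top} \mathbf{e}_i^{(1)}

% Choosing p_{ui'} = 1/\sqrt{|\mathcal{N}_u|} and p_{iu'} = 0 gives
\hat{y}(u, i) = \Big(\mathbf{e}_u + \frac{1}{\sqrt{|\mathcal{N}_u|}}
  \sum_{i' \in \mathcal{N}_u} \mathbf{e}_{i'}\Big)^{\top} \mathbf{e}_i
```

The last line has the SVD++ form: the user vector is augmented with a normalized sum over the items the user has interacted with, and the result is scored against the item vector.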

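Experiments – Baseline Models

| Model | Summary |
|---|---|
| MF | MF trained with the BPR loss |
| NeuMF | State-of-the-art neural CF (NCF) model |
| CMN | State-of-the-art memory-based model |
| HOP-Rec | State-of-the-art graph-based model |
| PinSage | GraphSAGE-based model |
| GC-MC | GCN-based model |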

Experiments – Performance Comparison w.r.t. Interaction Sparsity Levels

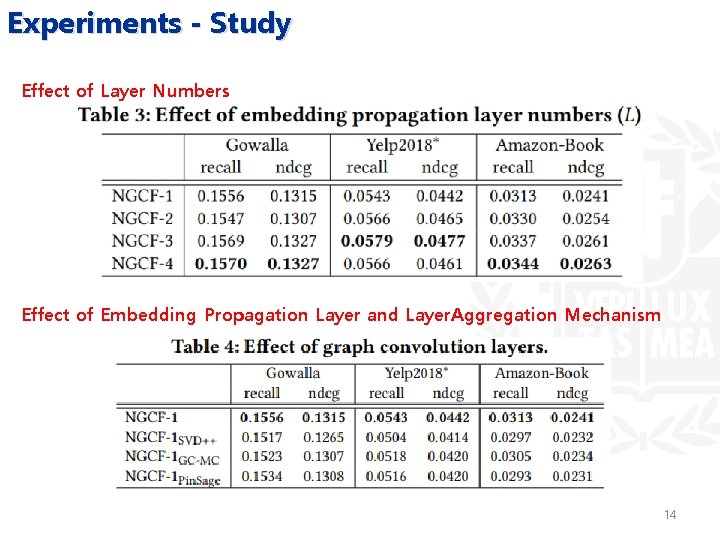

Experiments – Study of NGCF: Effect of Layer Numbers; Effect of Embedding Propagation Layer and Layer-Aggregation Mechanism

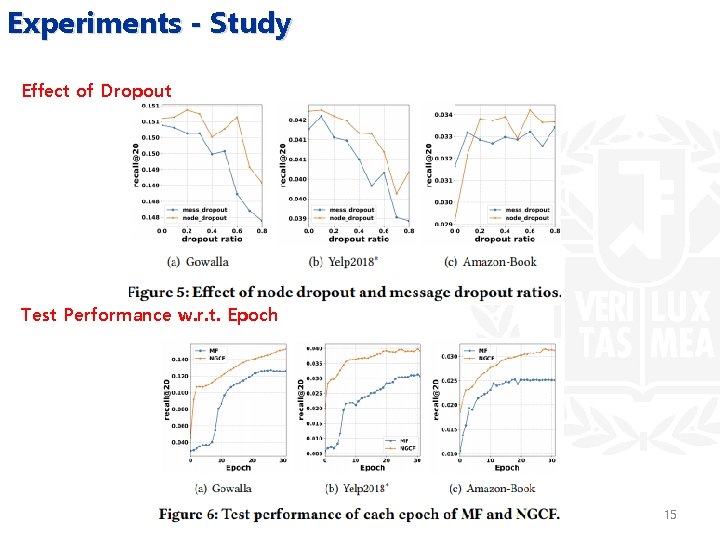

Experiments – Study of NGCF: Effect of Dropout; Test Performance w.r.t. Epoch

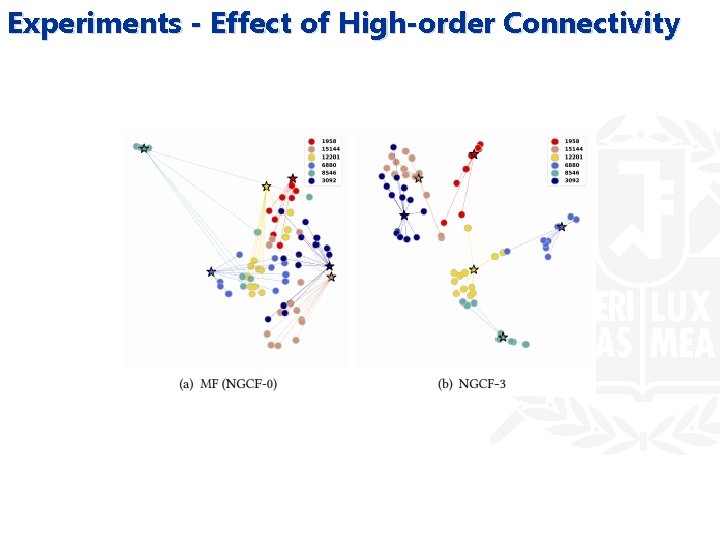

Experiments – Effect of High-order Connectivity

Conclusion & Future work
Contributions
• An initial attempt to exploit structural knowledge with the message-passing mechanism in model-based CF.
• Explicitly incorporates the collaborative signal into the embedding function of model-based CF.
Future work
1) Further improve NGCF by incorporating the attention mechanism.
2) Explore adversarial learning on user/item embeddings and the graph structure to enhance the robustness of NGCF.

Thank you
