NASA OSMA SAS '01: Software Reliability Through Hardware Reliability

NASA OSMA SAS '01
Software Reliability Through Hardware Reliability
Dolores R. Wallace
SRS Information Services
Software Assurance Technology Center
http://satc.gsfc.nasa.gov/
Reliability-Sept 2001

The Problem
• Critical NASA systems must execute successfully for a specified time under specified conditions (reliability)
• Most systems rely on software
• Hence, a means to measure software reliability is essential to determining readiness for operation
• Software reliability modeling provides one data point for reliability measurement

The Issues
• Identify mathematics of hardware reliability not used in software
• Identify differences between hardware and software that affect reliability measurement
• Develop improvements to software reliability modeling
  – Dr. Norman Schneidewind, Naval Postgraduate School
• Develop and implement a scenario for a typical application on GSFC project data
• Identify how software reliability modeling can be used at GSFC

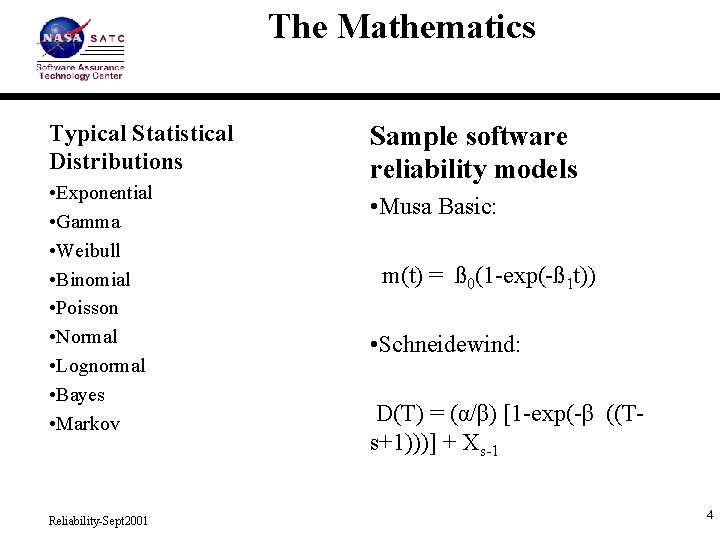

The Mathematics

Typical Statistical Distributions
• Exponential
• Gamma
• Weibull
• Binomial
• Poisson
• Normal
• Lognormal
• Bayes
• Markov

Sample software reliability models
• Musa Basic: $m(t) = \beta_0 \left(1 - e^{-\beta_1 t}\right)$
• Schneidewind: $D(T) = \frac{\alpha}{\beta}\left[1 - e^{-\beta (T - s + 1)}\right] + X_{s-1}$
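The two sample models can be evaluated directly once their parameters are known. The sketch below is illustrative only: it takes the Schneidewind exponent to be β(T − s + 1), the function and argument names are hypothetical, and the parameter values are made up rather than fitted to any project data.

```python
import math


def musa_basic_mean_failures(t, beta0, beta1):
    """Musa Basic: m(t) = beta0 * (1 - exp(-beta1 * t))."""
    return beta0 * (1.0 - math.exp(-beta1 * t))


def schneidewind_cumulative_failures(T, alpha, beta, s, x_prev):
    """Schneidewind: D(T) = (alpha / beta) * (1 - exp(-beta * (T - s + 1))) + x_prev.

    s is the first interval used for fitting, and x_prev stands for X_{s-1},
    the failure count observed before interval s (assumed reading of the slide).
    """
    return (alpha / beta) * (1.0 - math.exp(-beta * (T - s + 1))) + x_prev


if __name__ == "__main__":
    # Made-up parameters, chosen only to show the shape of each curve.
    for t in (0, 10, 50, 100):
        m = musa_basic_mean_failures(t, beta0=100.0, beta1=0.05)
        d = schneidewind_cumulative_failures(t, alpha=2.0, beta=0.1, s=1, x_prev=0)
        print(t, round(m, 2), round(d, 2))
```

Both curves level off as time grows: the Musa Basic curve approaches β0, and the Schneidewind curve approaches α/β + X_{s−1}.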

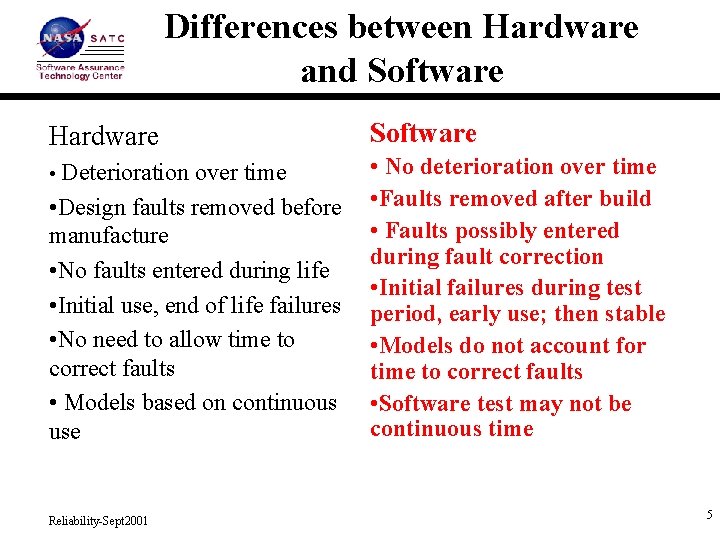

Differences between Hardware and Software

| Hardware | Software |
| --- | --- |
| Deterioration over time | No deterioration over time |
| Design faults removed before manufacture | Faults removed after build |
| No faults entered during life | Faults possibly entered during fault correction |
| Initial use, end of life failures | Initial failures during test period, early use; then stable |
| No need to allow time to correct faults | Models do not account for time to correct faults |
| Models based on continuous use | Software test may not be continuous time |

Fault Correction Adjustments
• Reliability growth occurs from fault correction
• Failure correction rate is proportional to the rate of failure detection
• Adjusted model with delay dT (based on queuing service), but with the same general form as the model for faults detected at time T
• Process: use the Schneidewind model to get parameters; apply them to the revised model via spreadsheet
• Results
  – Show reliability growth due to fault correction
  – Predict stopping rules for testing
• Optimal Selection of Failure Data
• Next: GSFC data; fault insertion
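The delay idea can be illustrated with a rough sketch. The slides do not give the revised model itself, so everything here is an assumption: cumulative detections follow a simplified exponential form (α/β)(1 − exp(−βT)), corrections are taken to be the same curve shifted by a fixed delay, and the stopping rule (stop when predicted uncorrected faults fall to a threshold) is a hypothetical example rather than the rule used in the study.

```python
import math


def detected(T, alpha, beta):
    """Assumed cumulative faults detected by time T (zero before testing starts)."""
    if T <= 0:
        return 0.0
    return (alpha / beta) * (1.0 - math.exp(-beta * T))


def corrected(T, alpha, beta, delay):
    """Assumed cumulative faults corrected by time T: detections shifted by `delay`."""
    return detected(T - delay, alpha, beta)


def first_stop_time(alpha, beta, delay, max_remaining, horizon):
    """First integer time T <= horizon at which predicted uncorrected faults
    (alpha / beta minus corrections so far) drop to max_remaining or below.
    Returns None if the threshold is not reached within the horizon."""
    total = alpha / beta
    for T in range(horizon + 1):
        if total - corrected(T, alpha, beta, delay) <= max_remaining:
            return T
    return None


if __name__ == "__main__":
    # Made-up parameters for illustration only.
    print(first_stop_time(alpha=2.0, beta=0.1, delay=3, max_remaining=1.0, horizon=200))
```

In this simplified picture, a longer correction delay pushes the stopping time later by the same amount, which is one way to see why ignoring correction time can make a model look optimistic.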

Applying Software Reliability Modeling
• The modeling process
  – Apply AIAA Recommended Practice
  – Learn about the system
• Data collection requirements
  – Dates of failure, fix
  – Activities/phase when failures occur
  – Preparation of the data (interval; time between failure)
• Available software tools
  – Public domain SMERFS^3
  – Loglet: commercial, but used for our validation
• Interpretation of results
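The data preparation step mentions two input formats: failure counts per interval and times between failures. A minimal sketch of deriving both from a list of failure dates follows. The function names, the seven-day interval, and the sample dates are hypothetical, and calendar days stand in for test time, which may not hold when testing is not continuous.

```python
from collections import Counter
from datetime import date


def times_between_failures(failure_dates):
    """Days elapsed between consecutive failures, in chronological order."""
    ordered = sorted(failure_dates)
    return [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]


def interval_counts(failure_dates, start, interval_days):
    """Number of failures in each fixed-length interval counted from `start`.

    Assumes no failure is dated before `start`.
    """
    counts = Counter((d - start).days // interval_days for d in failure_dates)
    n_intervals = max(counts) + 1 if counts else 0
    return [counts.get(i, 0) for i in range(n_intervals)]


if __name__ == "__main__":
    # Made-up failure dates for illustration only.
    failures = [date(2001, 1, 1), date(2001, 1, 3), date(2001, 1, 10), date(2001, 1, 24)]
    print(times_between_failures(failures))
    print(interval_counts(failures, start=date(2001, 1, 1), interval_days=7))
```

Which format is needed depends on the model being applied, so keeping the raw failure dates and deriving both views from them avoids committing to one too early.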

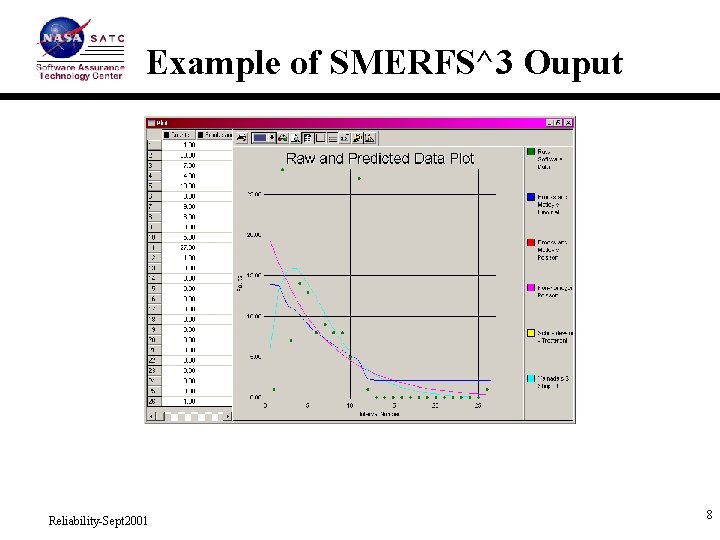

Example of SMERFS^3 Output

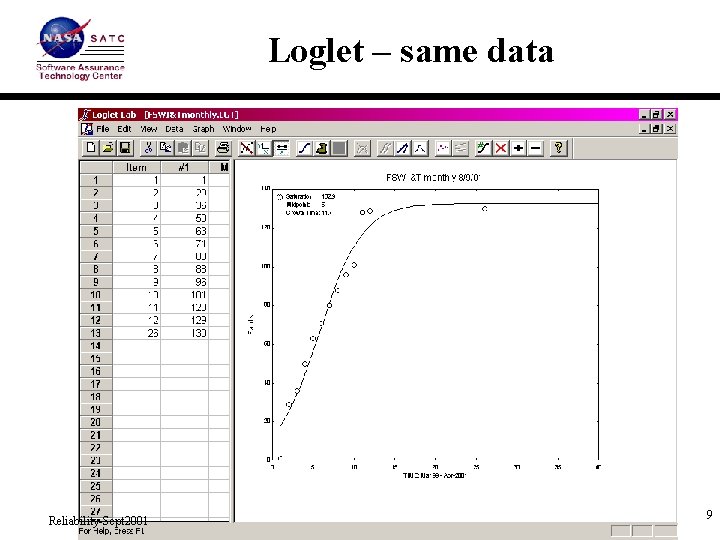

Loglet – same data

Options for SRM at NASA
• SATC Service
  – Projects submit failure data and project information
  – SATC executes models and prepares analysis
• Deployment to Project staff
  – SATC provides training and public domain tool
  – Project staff utilize the models
• Partnering
  – SATC provides tutorials and tools
  – Project staff may prepare the data and SATC the analysis
  – Project staff may exercise the models but discuss results with SATC

Proposed Next Steps
• Continue research on improvements to existing software reliability models
• Explore non-parametric methods
• Complete experiments with NASA data
• Provide technology transfer of knowledge
