Multiple patches for black hole and other evolutions

Manuel Tiglio, Hearne Institute for Theoretical Physics, Louisiana State University. Cactus retreat, April 2004.

- The work presented here has been done in collaboration with Luis Lehner, Dave Neilsen, Oscar Reula, and Olivier Sarbach.
- There is new work, not presented here, with Peter Diener, Nils Dorband, and several others.

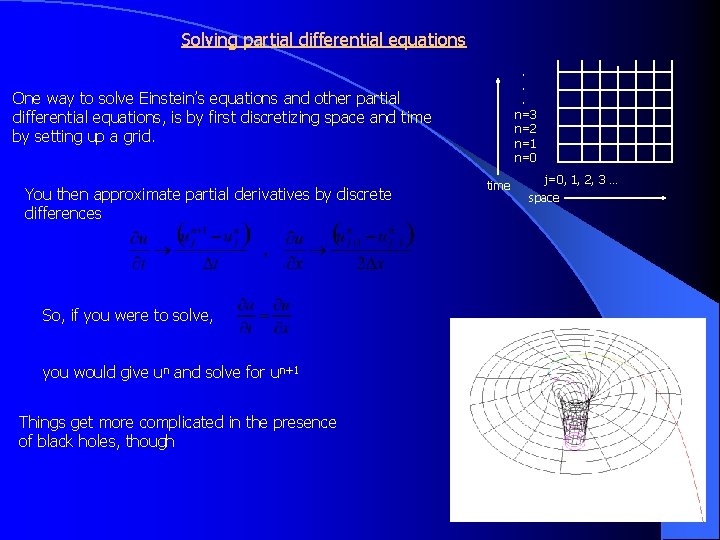

Solving partial differential equations

One way to solve Einstein’s equations, and other partial differential equations, is to first discretize space and time by setting up a grid. You then approximate partial derivatives by discrete differences. So, if you were to solve such an equation, you would give $u^n$ (the solution at time level $n$) and solve for $u^{n+1}$. Things get more complicated in the presence of black holes, though.

[Figure: space-time grid, with time levels n = 0, 1, 2, 3 along the time axis and grid points j = 0, 1, 2, 3, … along the space axis.]
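As a minimal sketch of what "give $u^n$ and solve for $u^{n+1}$" means in practice, the snippet below advances the one-dimensional advection equation $u_t + a u_x = 0$ with a first-order upwind difference on a periodic grid. The choice of equation, the scheme, and all names (`step_upwind`, `evolve`, `courant`) are assumptions made for illustration; the equation shown on the original slide is not reproduced here.

```python
import math


def step_upwind(u, courant):
    """Advance u_t + a u_x = 0 (a > 0) by one time step.

    u holds the values u^n_j at time level n; the result holds u^{n+1}_j.
    courant = a * dt / dx should satisfy 0 <= courant <= 1 for stability.
    The grid is periodic: point j = 0 uses the last point as its left neighbour.
    """
    return [u[j] - courant * (u[j] - u[j - 1]) for j in range(len(u))]


def evolve(u0, courant, n_steps):
    """Apply step_upwind n_steps times, starting from the initial data u0."""
    u = list(u0)
    for _ in range(n_steps):
        u = step_upwind(u, courant)
    return u


# Initial data: one period of a sine wave sampled on 50 grid points.
num_points = 50
dx = 1.0 / num_points
initial = [math.sin(2.0 * math.pi * j * dx) for j in range(num_points)]
final = evolve(initial, courant=0.5, n_steps=20)
```

Each call to `step_upwind` is one row of the space-time grid: it reads the values at level n and returns the values at level n+1.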

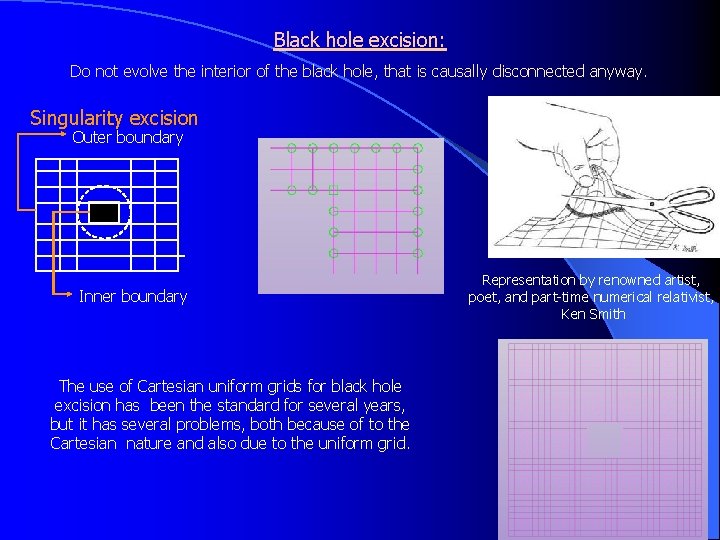

Black hole excision: do not evolve the interior of the black hole, which is causally disconnected anyway.

[Figure labels: singularity excision, outer boundary, inner boundary. Representation by renowned artist, poet, and part-time numerical relativist, Ken Smith.]

The use of uniform Cartesian grids for black hole excision has been the standard for several years, but it has several problems, both because of the Cartesian nature of the grid and because the grid is uniform.

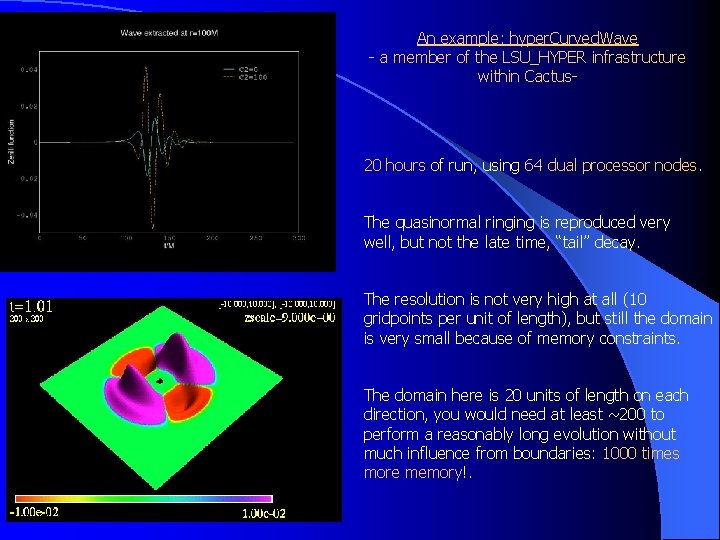

An example: hyperCurvedWave, a member of the LSU_HYPER infrastructure within Cactus

A 20-hour run, using 64 dual-processor nodes. The quasinormal ringing is reproduced very well, but not the late-time “tail” decay. The resolution is not high at all (10 gridpoints per unit of length), yet the domain is still very small because of memory constraints. The domain here is 20 units of length in each direction; you would need at least ~200 to perform a reasonably long evolution without much influence from the boundaries: 1000 times more memory!
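The factor of 1000 follows from the grid being three-dimensional: ten times more points in each of three directions. A short check of the arithmetic, where the function name `grid_points` is hypothetical and the extra endpoint in each direction is ignored:

```python
def grid_points(domain_length, points_per_unit, dimensions=3):
    """Number of points on a uniform grid with the same extent in every direction."""
    per_direction = round(domain_length * points_per_unit)
    return per_direction ** dimensions


small = grid_points(20, 10)    # domain used in the run described above
large = grid_points(200, 10)   # domain needed for a long evolution
ratio = large // small         # factor by which memory grows at fixed resolution
```

Here `small` is 200 points per direction cubed and `large` is 2000 points per direction cubed, so `ratio` is 10 cubed.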

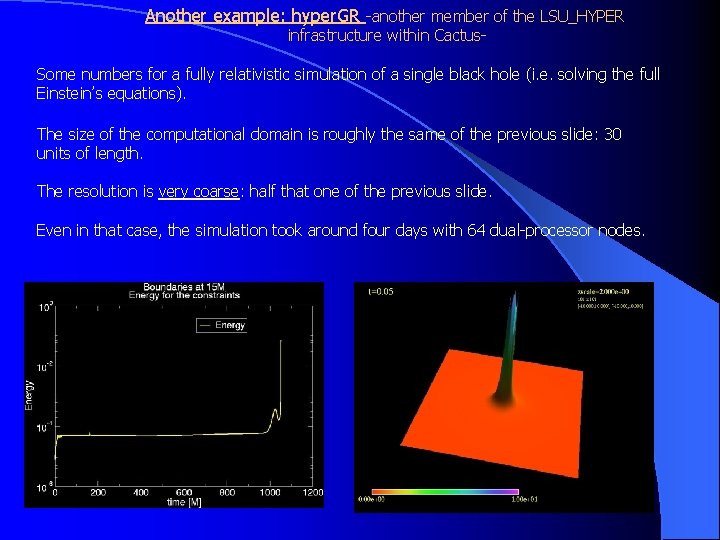

Another example: hyperGR, another member of the LSU_HYPER infrastructure within Cactus

Some numbers for a fully relativistic simulation of a single black hole (i.e. solving the full Einstein equations). The size of the computational domain is roughly the same as in the previous slide: 30 units of length. The resolution is very coarse: half that of the previous slide. Even so, the simulation took around four days with 64 dual-processor nodes.

Summary

Uniform Cartesian grids suffer from at least two problems:

- Lack of resolution.
- Technical problems, which I haven’t talked about here, caused by non-smooth, cubic boundaries (especially when there is rotation).

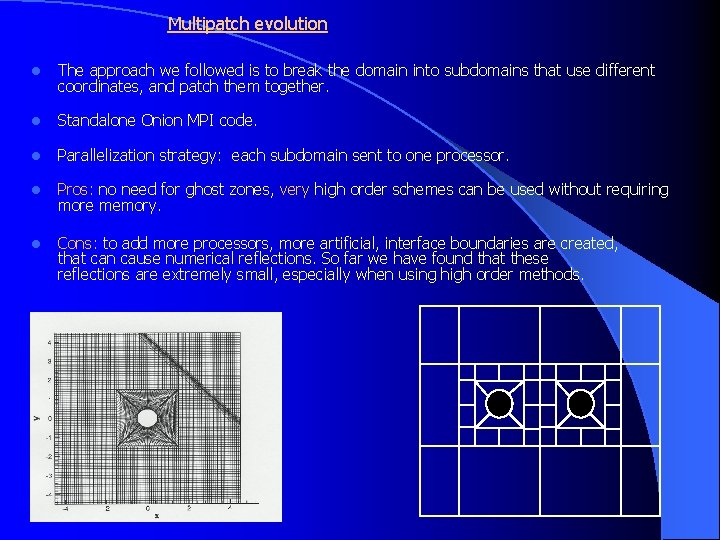

Multipatch evolution

- The approach we followed is to break the domain into subdomains that use different coordinates, and patch them together.
- Standalone Onion MPI code.
- Parallelization strategy: each subdomain is sent to one processor.
- Pros: no need for ghost zones; very high order schemes can be used without requiring more memory.
- Cons: adding more processors creates more artificial interface boundaries, which can cause numerical reflections. So far we have found that these reflections are extremely small, especially when using high order methods.
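To make the idea of patching subdomains together concrete, here is a toy one-dimensional sketch. It is not the Onion code's scheme: both patches use the same coordinate, the differencing is first-order upwind, and the interface treatment (copying the outgoing value of the left patch into the inflow point of the right patch) is an assumption chosen for simplicity. All function names are hypothetical. The two patches share a single interface point and exchange only that one value, with no ghost zones.

```python
import math


def upwind_interior(u, courant):
    """Updated values for points 1..end of a patch (point 0 is its inflow boundary)."""
    return [u[j] - courant * (u[j] - u[j - 1]) for j in range(1, len(u))]


def step_single(u, courant, inflow):
    """One upwind step of u_t + a u_x = 0 (a > 0) on a single grid."""
    return [inflow] + upwind_interior(u, courant)


def step_two_patches(left, right, courant, inflow):
    """One upwind step on two patches that share one interface point."""
    new_left = [inflow] + upwind_interior(left, courant)
    # Interface condition: the right patch's inflow point takes the value
    # that leaves the left patch through its last point.
    new_right = [new_left[-1]] + upwind_interior(right, courant)
    return new_left, new_right


# A Gaussian pulse that starts in the left patch and crosses the interface.
n, m, courant = 40, 20, 0.8
u = [math.exp(-((j - 10) / 3.0) ** 2) for j in range(n + 1)]
left, right = u[: m + 1], u[m:]   # the point with index m belongs to both patches

for _ in range(30):
    u = step_single(u, courant, inflow=0.0)
    left, right = step_two_patches(left, right, courant, inflow=0.0)

glued = left + right[1:]          # drop the duplicated interface point
max_difference = max(abs(a - b) for a, b in zip(glued, u))
```

In this toy case the two-patch evolution reproduces the single-grid evolution, so the interface adds no reflection at all. With different coordinates on each patch and higher-order operators, the interface treatment is where the small reflections mentioned above can arise.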

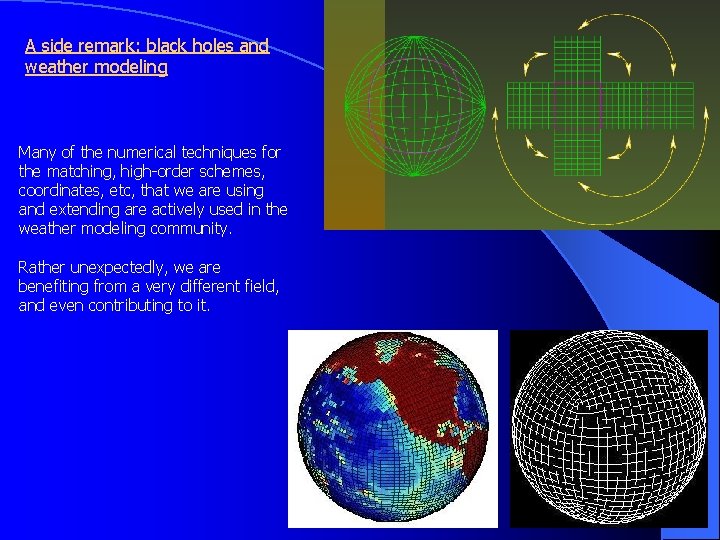

A side remark: black holes and weather modeling

Many of the numerical techniques that we are using and extending (matching, high-order schemes, coordinates, etc.) are actively used in the weather modeling community. Rather unexpectedly, we are benefiting from a very different field, and even contributing to it.

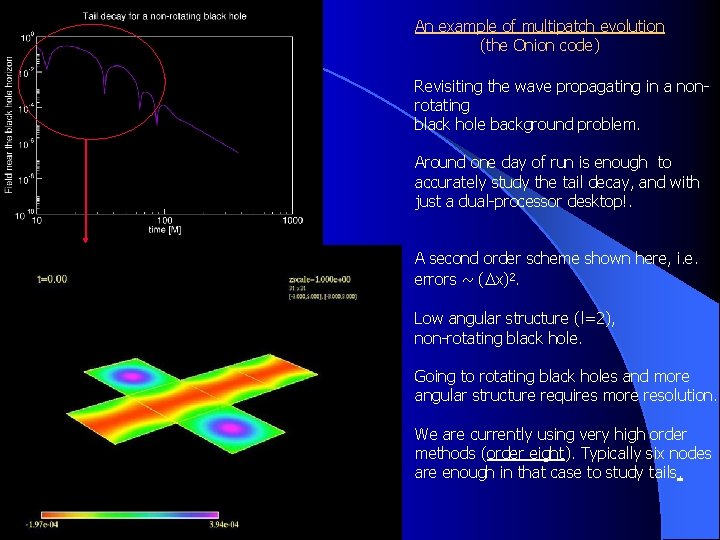

An example of multipatch evolution (the Onion code)

Revisiting the problem of a wave propagating on a non-rotating black hole background. About one day of running is enough to accurately study the tail decay, and with just a dual-processor desktop! A second-order scheme is shown here, i.e. errors ~ $(\Delta x)^2$. Low angular structure (l = 2), non-rotating black hole. Going to rotating black holes and more angular structure requires more resolution. We are currently using very high order methods (order eight). Typically six nodes are enough in that case to study tails.
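The statement that a second-order scheme has errors proportional to the square of the grid spacing can be checked numerically by halving the spacing and measuring how much the error drops. The sketch below does this for standard centered difference stencils applied to sin(x). The fourth-order stencil stands in for the higher-order methods mentioned above (the eighth-order operators themselves are not reproduced here), and the function names are hypothetical.

```python
import math


def d_second_order(f, x, h):
    """Centered difference with error proportional to h**2."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def d_fourth_order(f, x, h):
    """Centered difference with error proportional to h**4."""
    return (f(x - 2 * h) - 8 * f(x - h) + 8 * f(x + h) - f(x + 2 * h)) / (12.0 * h)


def observed_order(stencil, h):
    """Estimate the convergence order from the errors at spacings h and h/2."""
    exact = math.cos(1.0)  # exact derivative of sin at x = 1
    err_coarse = abs(stencil(math.sin, 1.0, h) - exact)
    err_fine = abs(stencil(math.sin, 1.0, h / 2.0) - exact)
    return math.log2(err_coarse / err_fine)


order_2 = observed_order(d_second_order, 0.1)  # close to 2
order_4 = observed_order(d_fourth_order, 0.1)  # close to 4
```

Halving the spacing reduces the error by about a factor of 4 at second order and about 16 at fourth order, which is why high-order methods can reach a given accuracy with far fewer grid points when the solution is smooth.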

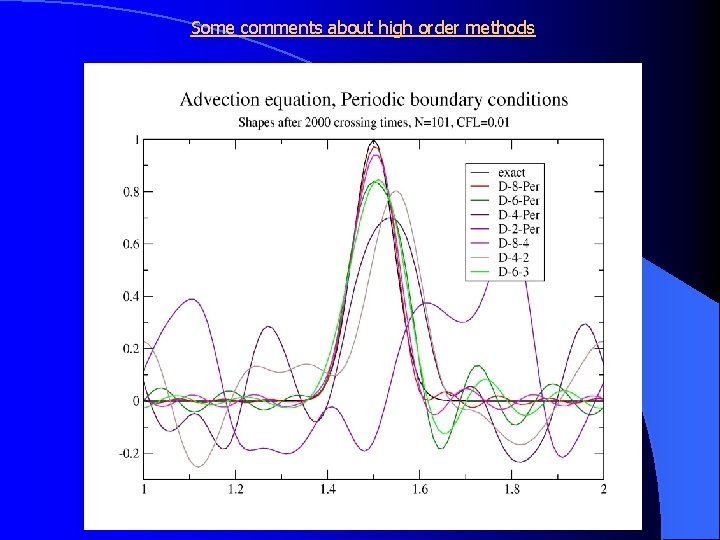

Some comments about high order methods

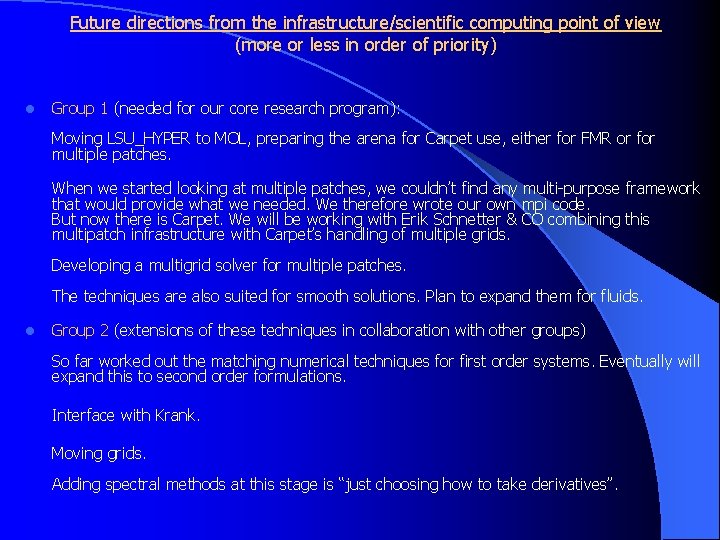

Future directions from the infrastructure/scientific computing point of view (more or less in order of priority)

- Group 1 (needed for our core research program):
  - Moving LSU_HYPER to MOL, preparing the arena for Carpet use, either for FMR or for multiple patches.
  - When we started looking at multiple patches, we couldn’t find any multi-purpose framework that would provide what we needed. We therefore wrote our own MPI code. But now there is Carpet. We will be working with Erik Schnetter and co. on combining this multipatch infrastructure with Carpet’s handling of multiple grids.
  - Developing a multigrid solver for multiple patches.
  - The techniques are also suited for smooth solutions. We plan to expand them for fluids.
- Group 2 (extensions of these techniques in collaboration with other groups):
  - So far we have worked out the matching numerical techniques for first-order systems. Eventually we will expand this to second-order formulations.
  - Interface with Krank.
  - Moving grids.
  - Adding spectral methods at this stage is “just choosing how to take derivatives”.

Who can benefit from this?

- Anyone solving partial differential equations who wants to move away from Cartesian grids but for whom a semi-structured approach with multiple coordinates is enough.
- Examples: Cauchy-perturbative or Cauchy-characteristic matching in numerical relativity. The conformal approach to Einstein’s equations. Black holes, whatever formulation you are using.
- People using spectral methods and looking for a framework like Cactus.
- People looking for high-order schemes.
- Weather modelers.

- Slides: 13