
- Slides: 21

Lexical Analysis

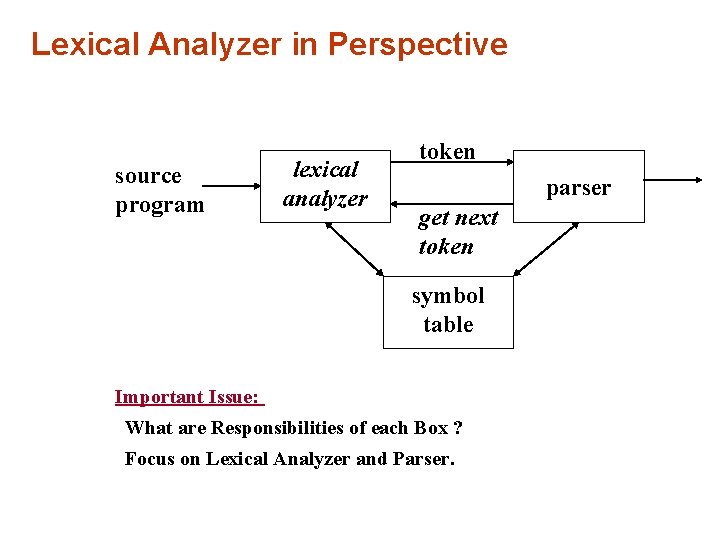

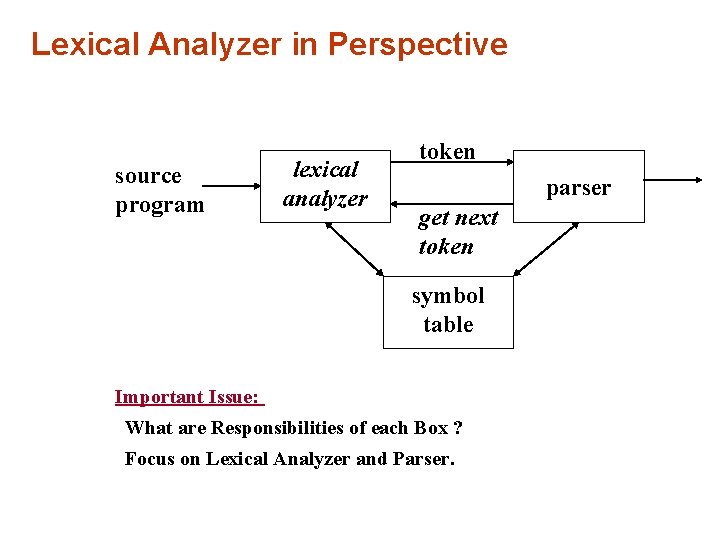

Lexical Analyzer in Perspective

Diagram labels: source program, lexical analyzer, token, get next token, parser, symbol table.

Important issue: what are the responsibilities of each box? The focus here is on the lexical analyzer and the parser.

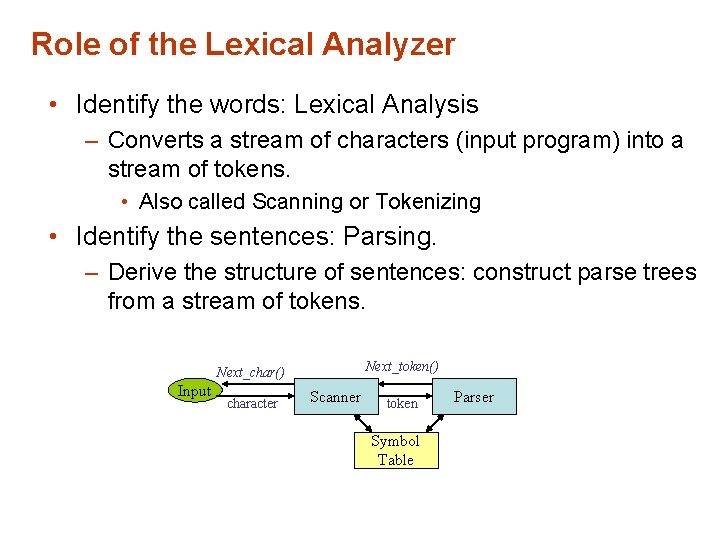

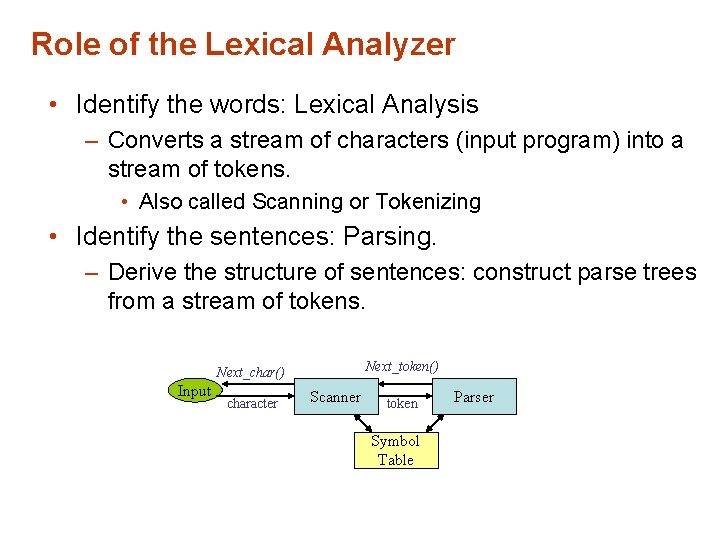

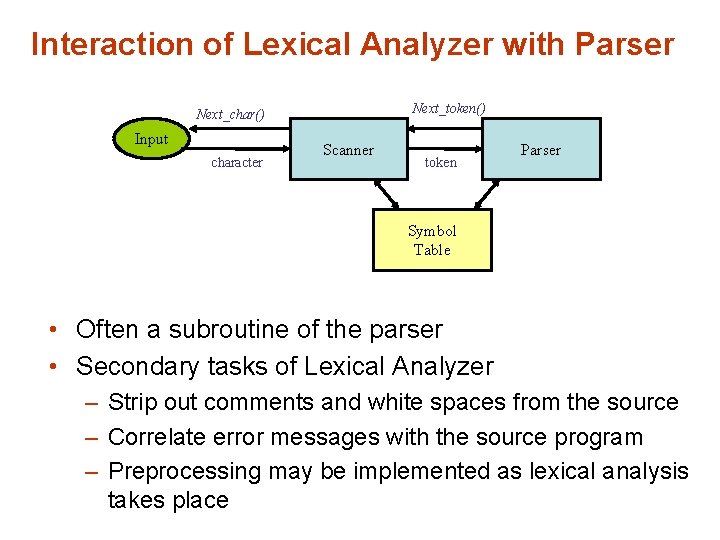

Role of the Lexical Analyzer

- Identify the words: lexical analysis
  - Converts a stream of characters (the input program) into a stream of tokens.
  - Also called scanning or tokenizing.
- Identify the sentences: parsing
  - Derives the structure of sentences: constructs parse trees from a stream of tokens.

Diagram labels: Input, Next_char(), character, Scanner, Next_token(), token, Parser, Symbol Table.

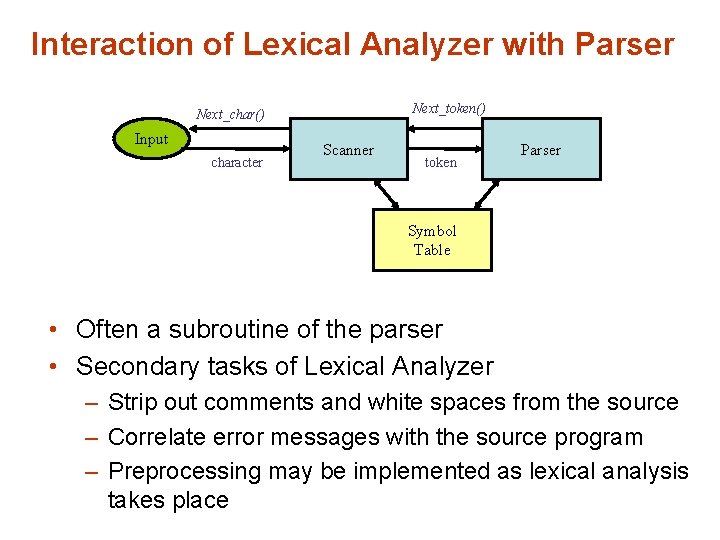

Interaction of Lexical Analyzer with Parser

Diagram labels: Input, Next_char(), character, Scanner, Next_token(), token, Parser, Symbol Table.

- The lexical analyzer is often a subroutine of the parser.
- Secondary tasks of the lexical analyzer:
  - Strip out comments and white space from the source.
  - Correlate error messages with the source program.
  - Preprocessing may be implemented as lexical analysis takes place.
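The interaction described above can be sketched as a small scanner whose `next_token()` is called repeatedly by the parser. This is only an illustrative sketch: the `Scanner` class, its token kinds (`ID`, `NUM`, `PUNCT`, `EOF`), the `/* ... */` comment syntax, and the `parse_all` driver are assumptions made for the example, not part of the slides.

```python
class Scanner:
    """Toy scanner: hands out one token per call to next_token()."""

    def __init__(self, text):
        self.text = text
        self.pos = 0
        self.line = 1  # tracked so error messages can cite a source line

    def next_char(self):
        """Consume and return the next character, or '' at end of input."""
        if self.pos >= len(self.text):
            return ""
        ch = self.text[self.pos]
        self.pos += 1
        if ch == "\n":
            self.line += 1
        return ch

    def peek(self, offset=0):
        """Look ahead without consuming; '' past the end of input."""
        i = self.pos + offset
        return self.text[i] if i < len(self.text) else ""

    def _skip_blanks_and_comments(self):
        while True:
            while self.peek().isspace():
                self.next_char()
            if self.peek() == "/" and self.peek(1) == "*":
                start_line = self.line
                self.next_char()
                self.next_char()
                while not (self.peek() == "*" and self.peek(1) == "/"):
                    if self.next_char() == "":
                        raise SyntaxError(f"line {start_line}: unterminated comment")
                self.next_char()
                self.next_char()
            else:
                return

    def next_token(self):
        """Return (kind, lexeme, line) for the next token."""
        self._skip_blanks_and_comments()
        line = self.line
        ch = self.next_char()
        if ch == "":
            return ("EOF", "", line)
        if ch.isalpha():
            lexeme = ch
            while self.peek().isalnum():
                lexeme += self.next_char()
            return ("ID", lexeme, line)
        if ch.isdigit():
            lexeme = ch
            while self.peek().isdigit():
                lexeme += self.next_char()
            return ("NUM", lexeme, line)
        return ("PUNCT", ch, line)


def parse_all(scanner):
    """Stand-in for a parser: pulls tokens until EOF and collects them."""
    tokens = []
    while True:
        tok = scanner.next_token()
        if tok[0] == "EOF":
            return tokens
        tokens.append(tok)
```

Carrying the line number in each token is one simple way to correlate later error messages with the source program.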

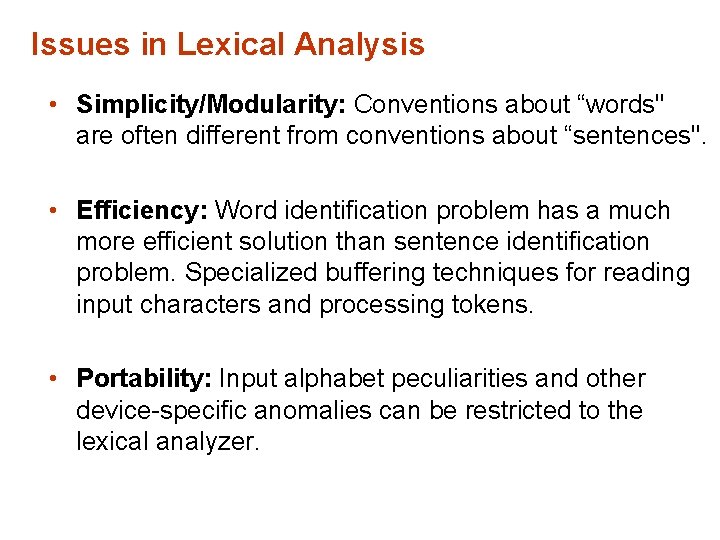

Issues in Lexical Analysis

- Simplicity/Modularity: conventions about "words" are often different from conventions about "sentences".
- Efficiency: the word identification problem has a much more efficient solution than the sentence identification problem. Specialized buffering techniques can be used for reading input characters and processing tokens.
- Portability: input alphabet peculiarities and other device-specific anomalies can be restricted to the lexical analyzer.

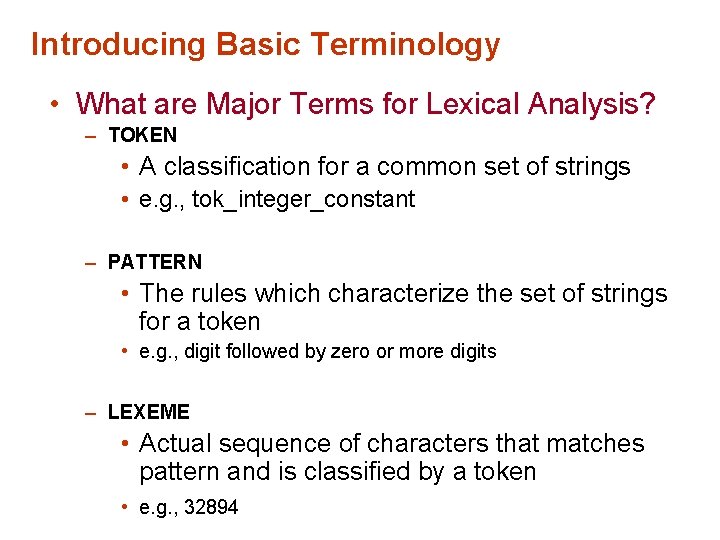

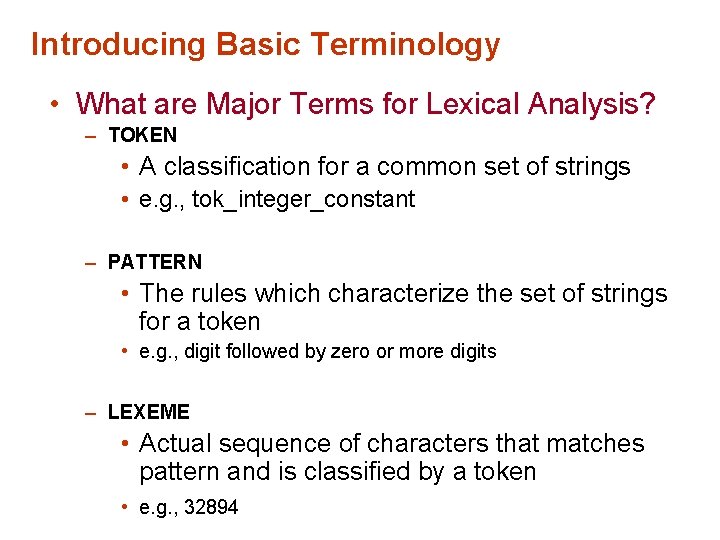

Introducing Basic Terminology

What are the major terms for lexical analysis?

- TOKEN
  - A classification for a common set of strings
  - e.g., tok_integer_constant
- PATTERN
  - The rules which characterize the set of strings for a token
  - e.g., a digit followed by zero or more digits
- LEXEME
  - The actual sequence of characters that matches a pattern and is classified by a token
  - e.g., 32894

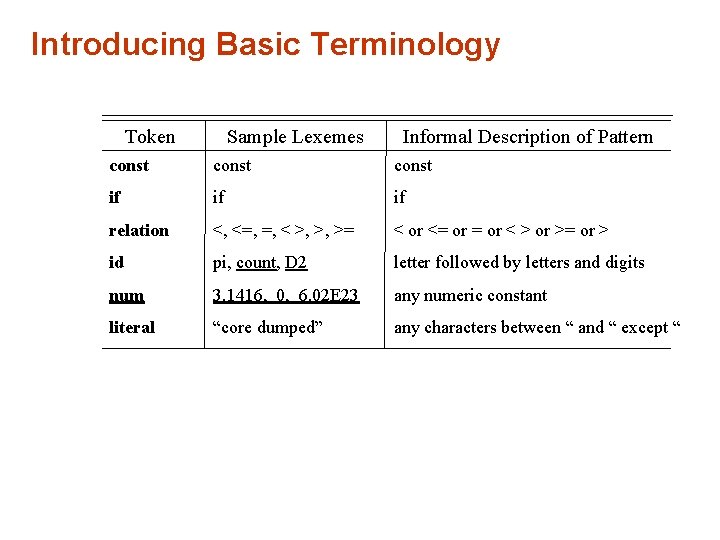

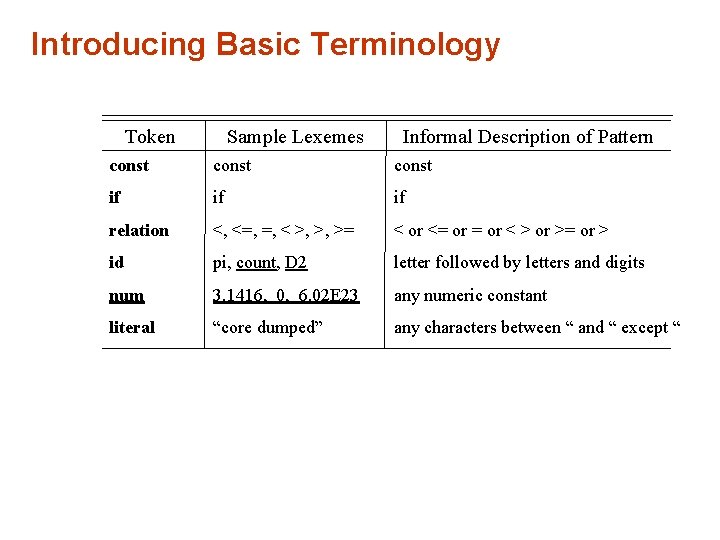

Introducing Basic Terminology

| Token | Sample Lexemes | Informal Description of Pattern |
| --- | --- | --- |
| const | const | const |
| if | if | if |
| relation | `<`, `<=`, `=`, `<>`, `>`, `>=` | `<` or `<=` or `=` or `<>` or `>=` or `>` |
| id | pi, count, D2 | letter followed by letters and digits |
| num | 3.1416, 0, 6.02E23 | any numeric constant |
| literal | "core dumped" | any characters between " and " except " |
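One way to make the token / pattern / lexeme distinction concrete is to write each informal pattern as a regular expression and ask which token a given lexeme belongs to. The sketch below is an assumption-laden illustration: the regular expressions are one possible reading of the informal descriptions (for instance, what counts as "any numeric constant"), and `TOKEN_PATTERNS` and `classify` are hypothetical names.

```python
import re

# (token, pattern) pairs. Order matters: keywords are listed before "id"
# because a keyword lexeme also matches the identifier pattern.
TOKEN_PATTERNS = [
    ("const",    r"const"),
    ("if",       r"if"),
    ("relation", r"<=|<>|>=|<|=|>"),
    ("num",      r"\d+(?:\.\d+)?(?:E[+-]?\d+)?"),
    ("id",       r"[A-Za-z][A-Za-z0-9]*"),
    ("literal",  r'"[^"]*"'),
]


def classify(lexeme):
    """Return the first token whose pattern matches the whole lexeme, else None."""
    for token, pattern in TOKEN_PATTERNS:
        if re.fullmatch(pattern, lexeme):
            return token
    return None
```

For example, `classify("D2")` yields `"id"` while `classify("2D")` yields `None`, because no pattern in this table describes a digit followed by a letter.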

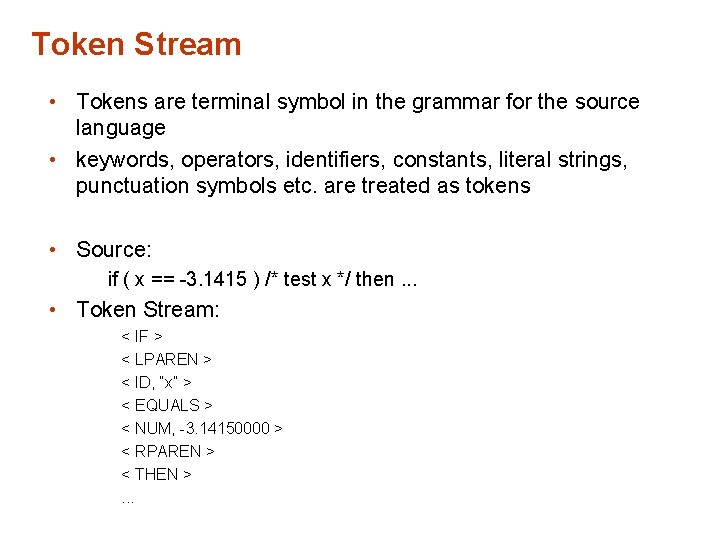

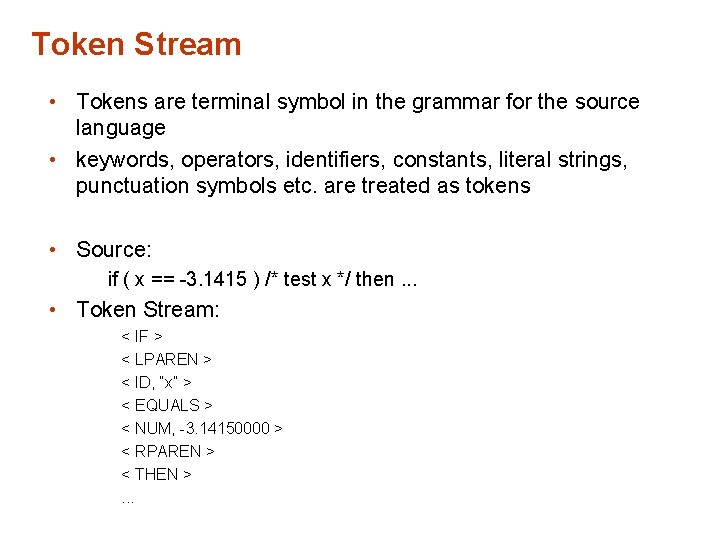

Token Stream

- Tokens are the terminal symbols in the grammar for the source language.
- Keywords, operators, identifiers, constants, literal strings, punctuation symbols, etc. are treated as tokens.
- Source: `if ( x == -3.1415 ) /* test x */ then ...`
- Token stream: `<IF> <LPAREN> <ID, "x"> <EQUALS> <NUM, -3.14150000> <RPAREN> <THEN> ...`
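A minimal sketch of how such a token stream could be produced with Python's `re` module follows. The token names mirror the slide; everything else (`TOKEN_SPEC`, `tokenize`, the exact patterns) is assumed for illustration. In particular, folding the minus sign into `NUM` is a simplification chosen to reproduce the slide's output; many scanners would instead emit the sign as a separate operator token.

```python
import re

# Order matters: earlier alternatives win, so keywords precede ID.
TOKEN_SPEC = [
    ("COMMENT", r"/\*.*?\*/"),
    ("SKIP",    r"\s+"),
    ("NUM",     r"-?\d+(?:\.\d+)?"),
    ("IF",      r"if\b"),
    ("THEN",    r"then\b"),
    ("ID",      r"[A-Za-z_]\w*"),
    ("EQUALS",  r"=="),
    ("LPAREN",  r"\("),
    ("RPAREN",  r"\)"),
]
MASTER = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC),
    re.DOTALL,  # lets a comment span several lines
)


def tokenize(source):
    """Return a list of (token, attribute) pairs; comments and blanks are dropped."""
    tokens = []
    pos = 0
    while pos < len(source):
        m = MASTER.match(source, pos)
        if m is None:
            raise SyntaxError(f"unexpected character {source[pos]!r} at offset {pos}")
        pos = m.end()
        kind = m.lastgroup
        if kind in ("COMMENT", "SKIP"):
            continue
        if kind == "NUM":
            tokens.append((kind, float(m.group())))
        elif kind == "ID":
            tokens.append((kind, m.group()))
        else:
            tokens.append((kind, None))
    return tokens
```

Calling `tokenize("if ( x == -3.1415 ) /* test x */ then")` gives seven tokens, with the comment and the white space removed before the parser ever sees them.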

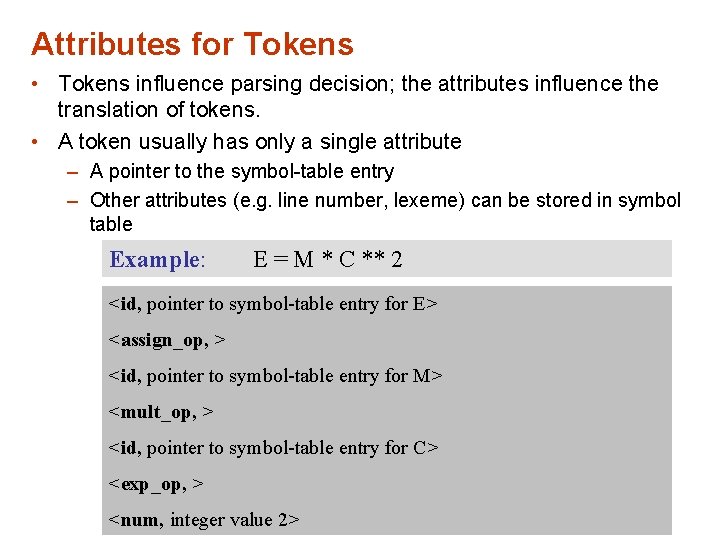

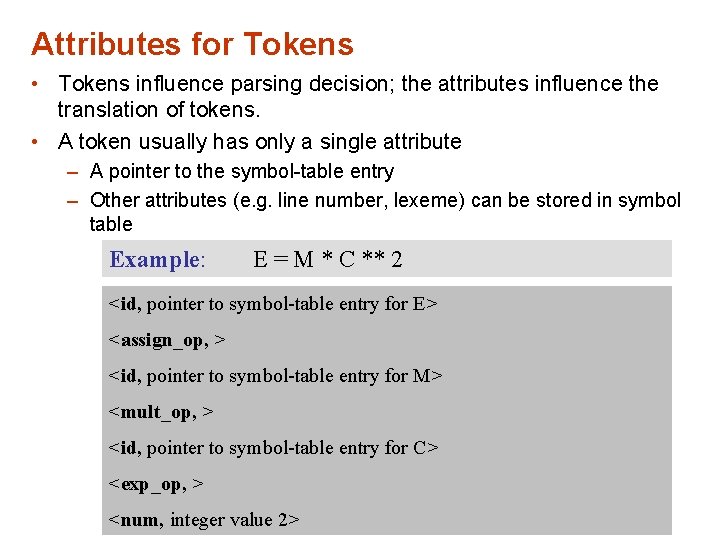

Attributes for Tokens

- Tokens influence parsing decisions; the attributes influence the translation of tokens.
- A token usually has only a single attribute:
  - A pointer to the symbol-table entry
  - Other attributes (e.g., line number, lexeme) can be stored in the symbol table

Example: `E = M * C ** 2`

- `<id, pointer to symbol-table entry for E>`
- `<assign_op, >`
- `<id, pointer to symbol-table entry for M>`
- `<mult_op, >`
- `<id, pointer to symbol-table entry for C>`
- `<exp_op, >`
- `<num, integer value 2>`
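The example above can be approximated in a few lines, with an index into a list standing in for the "pointer to symbol-table entry". The names `scan`, `OPERATORS`, and `LEXEME`, and the dictionary layout of a symbol-table entry, are assumptions made for this sketch.

```python
import re

OPERATORS = {"=": "assign_op", "*": "mult_op", "**": "exp_op"}
# "**" is tried before "*" so the longer operator wins.
LEXEME = re.compile(r"\s*(?:(?P<id>[A-Za-z]\w*)|(?P<num>\d+)|(?P<op>\*\*|\*|=))")


def scan(source):
    """Return (tokens, symbol_table) for a tiny expression language."""
    symbol_table = []   # one entry per distinct identifier
    index_of = {}       # lexeme -> index of its symbol-table entry
    tokens = []
    source = source.rstrip()
    pos = 0
    while pos < len(source):
        m = LEXEME.match(source, pos)
        if m is None:
            raise SyntaxError(f"unexpected input at offset {pos}")
        pos = m.end()
        kind = m.lastgroup
        text = m.group(kind)
        if kind == "id":
            if text not in index_of:
                index_of[text] = len(symbol_table)
                symbol_table.append({"lexeme": text})
            tokens.append(("id", index_of[text]))  # attribute: table index
        elif kind == "num":
            tokens.append(("num", int(text)))      # attribute: integer value
        else:
            tokens.append((OPERATORS[text], None)) # operators carry no attribute
    return tokens, symbol_table
```

Keeping the lexeme in the symbol-table entry rather than in the token itself matches the slide's point that a token usually needs only a single attribute.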

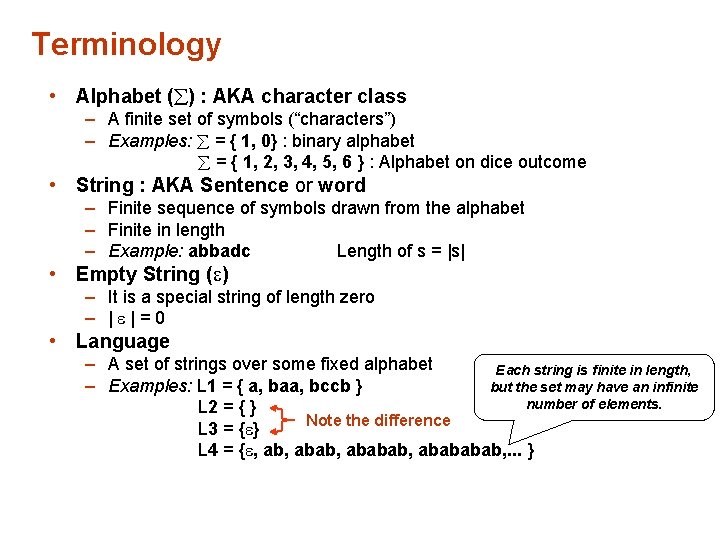

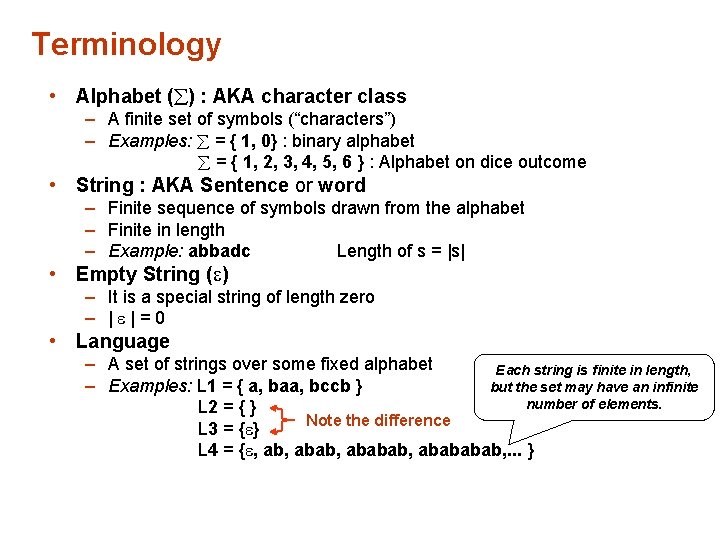

Terminology

- Alphabet (Σ), AKA character class
  - A finite set of symbols ("characters")
  - Examples: Σ = {1, 0} is the binary alphabet; Σ = {1, 2, 3, 4, 5, 6} is the alphabet of dice outcomes
- String, AKA sentence or word
  - A finite sequence of symbols drawn from the alphabet
  - Finite in length
  - Example: s = abbadc; the length of s is written |s| (here |s| = 6)
- Empty string (ε)
  - A special string of length zero
  - |ε| = 0
- Language
  - A set of strings over some fixed alphabet
  - Each string is finite in length, but the set may have an infinite number of elements.
  - Examples (note the difference between L2 and L3):
    - L1 = {a, baa, bccb}
    - L2 = { } (the empty language, which contains no strings)
    - L3 = {ε} (the language containing only the empty string)
    - L4 = {ε, abab, abababab, ...}

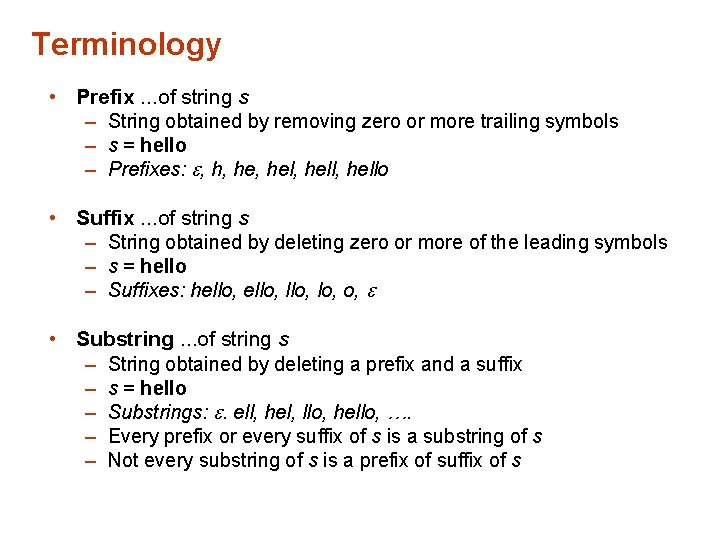

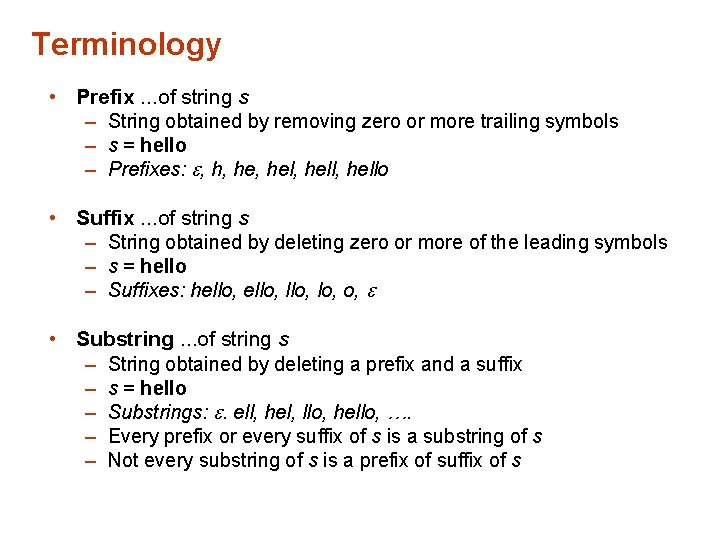

Terminology

- Prefix of string s
  - String obtained by removing zero or more trailing symbols
  - s = hello
  - Example prefixes: ε, h, hel, hello
- Suffix of string s
  - String obtained by deleting zero or more of the leading symbols
  - s = hello
  - Example suffixes: hello, o, ε
- Substring of string s
  - String obtained by deleting a prefix and a suffix
  - s = hello
  - Example substrings: ε, ell, hel, llo, hello, ...
  - Every prefix and every suffix of s is a substring of s
  - Not every substring of s is a prefix or suffix of s
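These three definitions translate directly into Python slices, with the empty Python string `""` playing the role of ε. The function names are chosen for this sketch only.

```python
def prefixes(s):
    """All strings obtained by removing zero or more trailing symbols."""
    return {s[:i] for i in range(len(s) + 1)}


def suffixes(s):
    """All strings obtained by removing zero or more leading symbols."""
    return {s[i:] for i in range(len(s) + 1)}


def substrings(s):
    """All strings obtained by removing a prefix and a suffix."""
    return {s[i:j] for i in range(len(s) + 1) for j in range(i, len(s) + 1)}
```

With `s = "hello"`, the string `"ell"` is in `substrings(s)` but in neither `prefixes(s)` nor `suffixes(s)`, which illustrates the last bullet.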

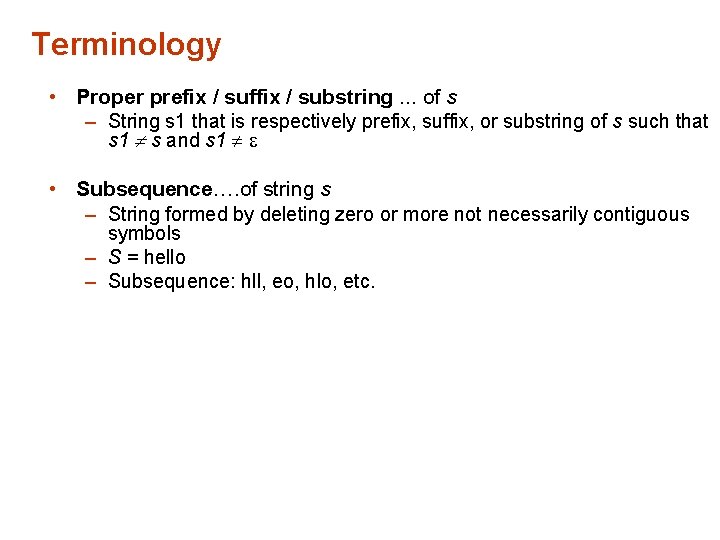

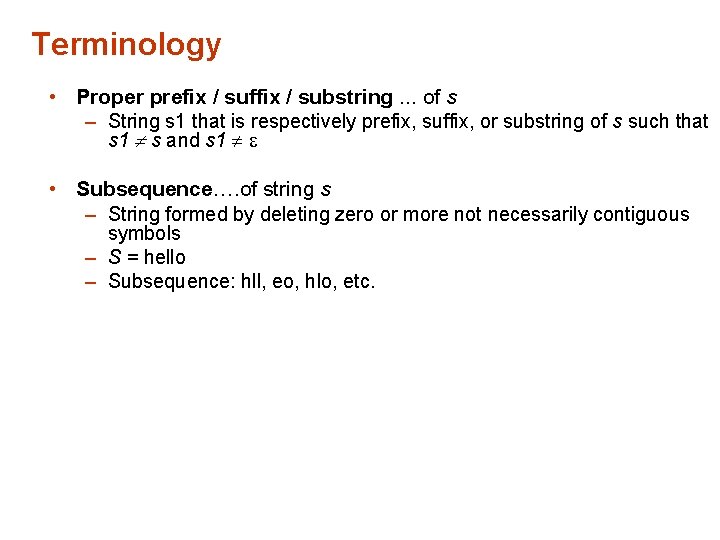

Terminology

- Proper prefix / suffix / substring of s
  - A string s1 that is, respectively, a prefix, suffix, or substring of s such that s1 ≠ s and s1 ≠ ε
- Subsequence of string s
  - String formed by deleting zero or more not necessarily contiguous symbols
  - s = hello
  - Subsequences: hll, eo, hlo, etc.
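A short sketch of both notions, again using `""` for the empty string. The helper names are hypothetical; `is_subsequence` relies on the standard idiom of searching a shared iterator so that symbols must appear in order.

```python
from itertools import combinations


def proper_prefixes(s):
    """Prefixes of s other than s itself and the empty string."""
    return {s[:i] for i in range(1, len(s))}


def subsequences(s):
    """All strings formed by deleting zero or more symbols of s, order kept."""
    return {"".join(c) for r in range(len(s) + 1) for c in combinations(s, r)}


def is_subsequence(t, s):
    """True if t can be obtained from s by deleting symbols."""
    remaining = iter(s)
    # Each `ch in remaining` consumes the iterator up to the first match.
    return all(ch in remaining for ch in t)
```

Note that `subsequences` grows exponentially with the length of `s`, so it is only suitable for short demonstration strings; `is_subsequence` runs in linear time.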

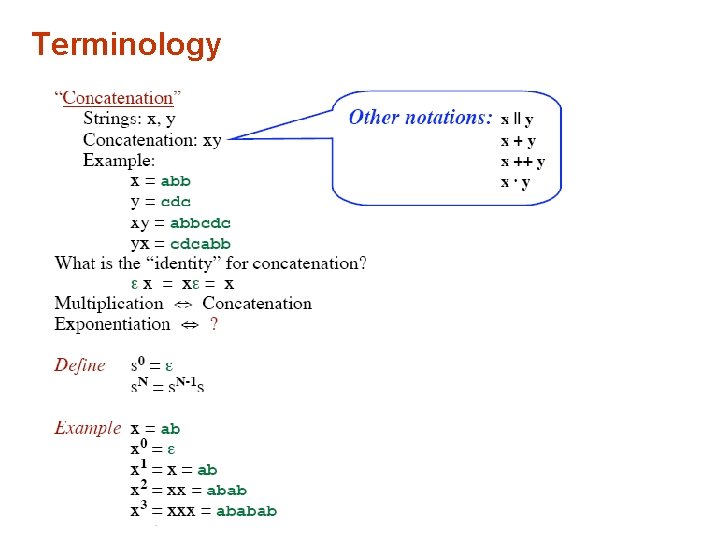

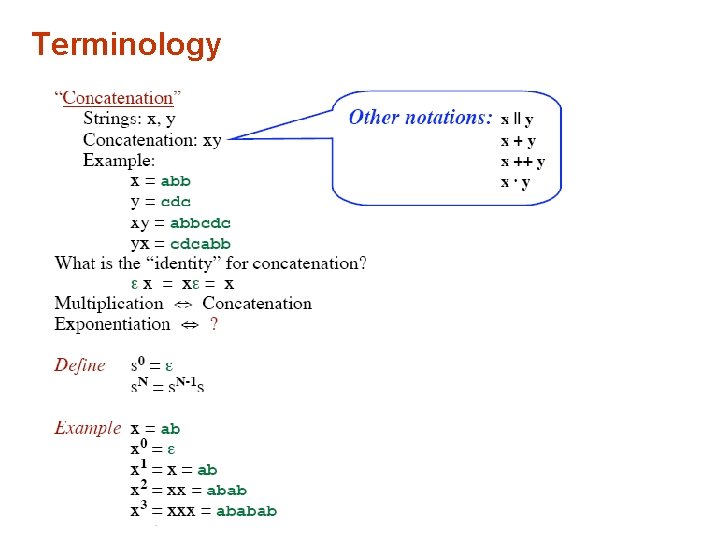

Terminology

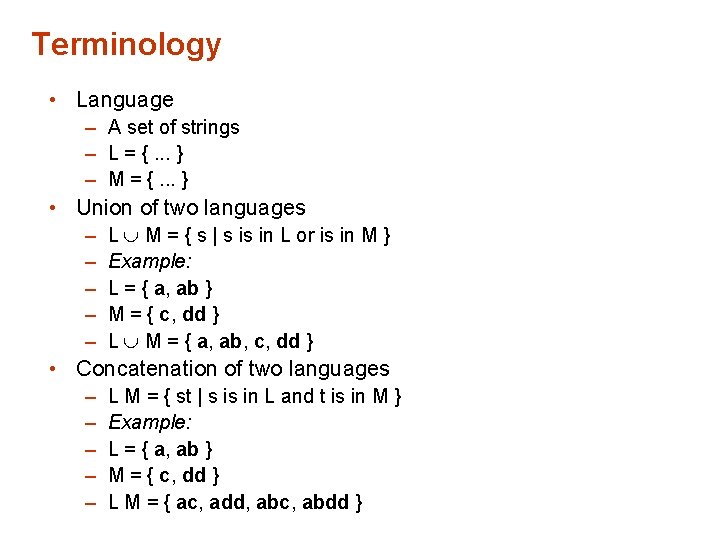

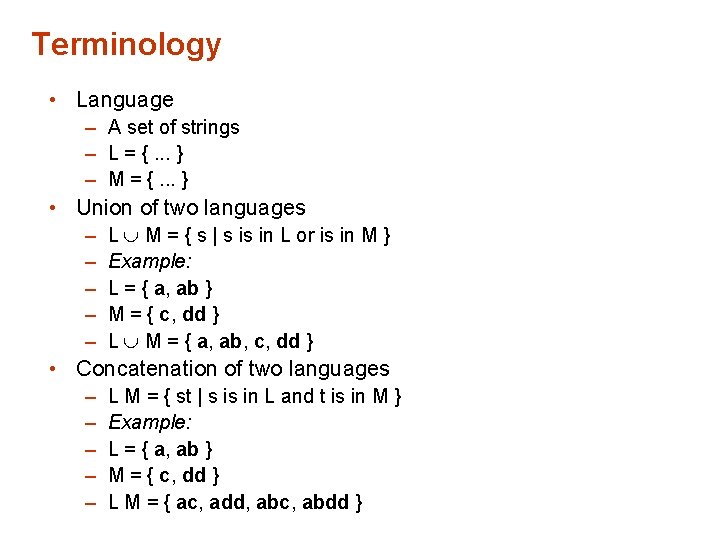

Terminology

- Language
  - A set of strings
  - L = {...}
  - M = {...}
- Union of two languages
  - L ∪ M = { s | s is in L or s is in M }
  - Example: L = {a, ab}, M = {c, dd}
  - L ∪ M = {a, ab, c, dd}
- Concatenation of two languages
  - LM = { st | s is in L and t is in M }
  - Example: L = {a, ab}, M = {c, dd}
  - LM = {ac, add, abc, abdd}
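For finite languages, both operations can be tried out with Python sets of strings. The function names `union` and `concat` are chosen for this sketch.

```python
def union(L, M):
    """Strings that are in L or in M."""
    return L | M


def concat(L, M):
    """Every string of L followed by every string of M."""
    return {s + t for s in L for t in M}


L = {"a", "ab"}
M = {"c", "dd"}
```

Concatenation is not commutative in general: `concat(L, M)` and `concat(M, L)` are different sets for the `L` and `M` shown here.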

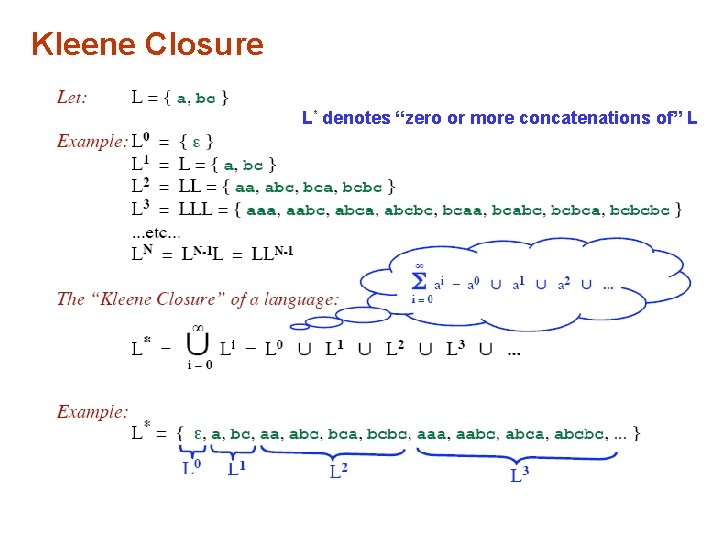

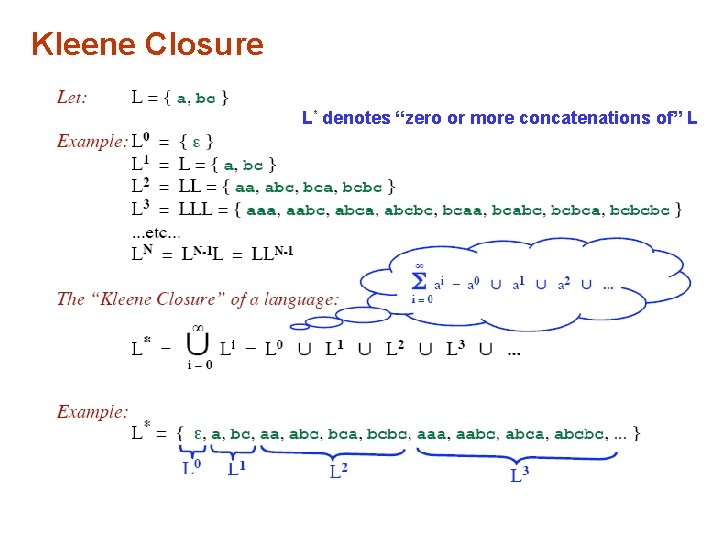

Kleene Closure

L* denotes "zero or more concatenations of" L, that is, L* = L^0 ∪ L^1 ∪ L^2 ∪ ..., where L^0 = {ε}.

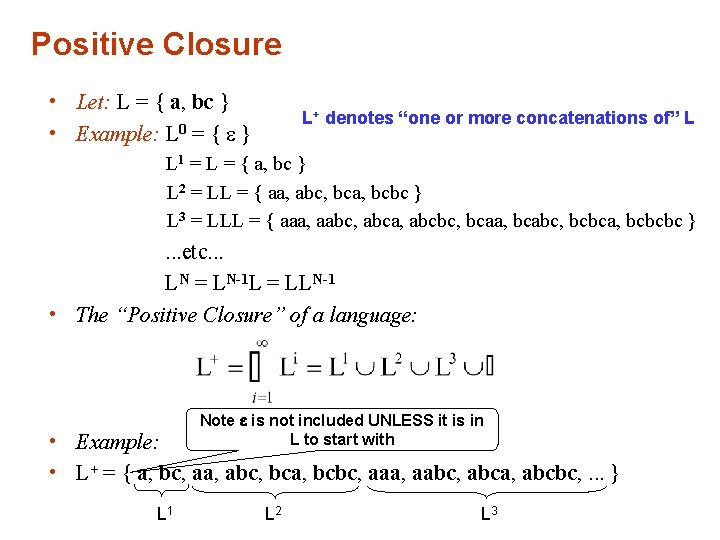

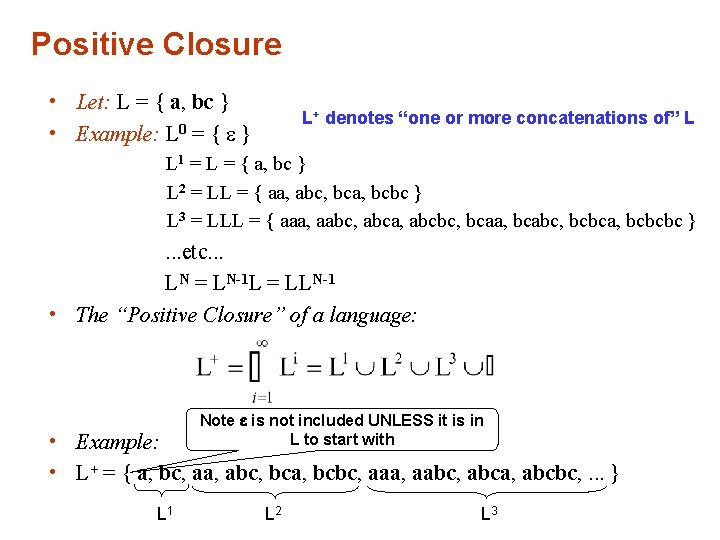

Positive Closure

L+ denotes "one or more concatenations of" L.

- Let: L = {a, bc}
- Example:
  - L^0 = {ε}
  - L^1 = L = {a, bc}
  - L^2 = LL = {aa, abc, bca, bcbc}
  - L^3 = LLL = {aaa, aabc, abca, abcbc, bcaa, bcabc, bcbca, bcbcbc}
  - ... etc. ...
  - L^N = L^(N-1) L = L L^(N-1)
- The "positive closure" of a language: L+ = L^1 ∪ L^2 ∪ L^3 ∪ ...
  - Note: ε is not included unless it is in L to start with.
- Example:
  - L+ = {a, bc, aa, abc, bca, bcbc, aaa, aabc, abca, abcbc, ...}, where a and bc come from L^1, the next four strings from L^2, and the following ones from L^3.
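Because both closures are infinite for any language containing a non-empty string, a program can only enumerate them up to some bound. The sketch below, using assumed helper names, builds the powers of L and takes the union of the first few of them.

```python
def concat(L, M):
    """Every string of L followed by every string of M."""
    return {s + t for s in L for t in M}


def power(L, n):
    """L^n: n-fold concatenation of L, with L^0 = {""} (the empty string)."""
    result = {""}
    for _ in range(n):
        result = concat(result, L)
    return result


def positive_closure_up_to(L, n):
    """L^1 ∪ L^2 ∪ ... ∪ L^n, a finite slice of L+."""
    out = set()
    for k in range(1, n + 1):
        out |= power(L, k)
    return out


def kleene_closure_up_to(L, n):
    """L^0 ∪ L^1 ∪ ... ∪ L^n, a finite slice of L*."""
    return power(L, 0) | positive_closure_up_to(L, n)


L = {"a", "bc"}
```

The only difference between the two slices is the contribution of `power(L, 0)`, which is why ε belongs to L+ only when it is already in L.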

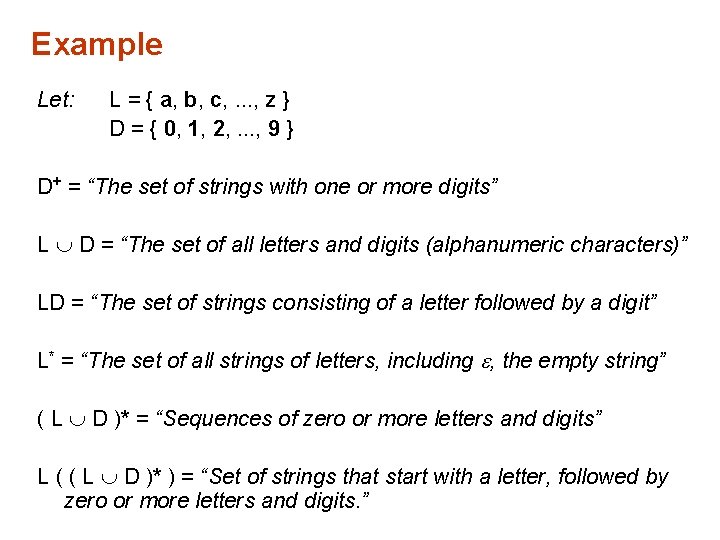

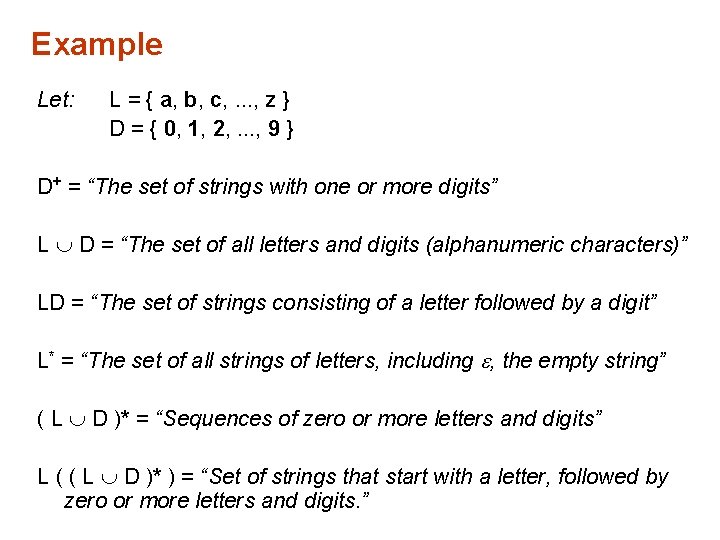

Example

Let L = {a, b, c, ..., z} and D = {0, 1, 2, ..., 9}.

- D+ = "The set of strings with one or more digits"
- L ∪ D = "The set of all letters and digits (alphanumeric characters)"
- LD = "The set of strings consisting of a letter followed by a digit"
- L* = "The set of all strings of letters, including ε, the empty string"
- (L ∪ D)* = "Sequences of zero or more letters and digits"
- L((L ∪ D)*) = "Set of strings that start with a letter, followed by zero or more letters and digits."
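Membership in each of these languages can be tested directly. The sketch below assumes lowercase ASCII letters for L and decimal digits for D; the function names are hypothetical, and the simple `all(...)` test for closure works only because every element of L and D is a single character.

```python
import string

L = set(string.ascii_lowercase)   # { a, b, ..., z }
D = set(string.digits)            # { 0, 1, ..., 9 }


def in_star(s, alphabet):
    """s is in alphabet*: zero or more symbols, all drawn from alphabet."""
    return all(ch in alphabet for ch in s)


def in_plus(s, alphabet):
    """s is in alphabet+: like in_star, but at least one symbol."""
    return len(s) >= 1 and in_star(s, alphabet)


def in_LD(s):
    """s is in LD: exactly one letter followed by exactly one digit."""
    return len(s) == 2 and s[0] in L and s[1] in D


def in_identifier_language(s):
    """s is in L((L ∪ D)*): a letter, then zero or more letters and digits."""
    return len(s) >= 1 and s[0] in L and in_star(s[1:], L | D)
```

The last language is the usual shape of identifiers in many programming languages, which is why `in_identifier_language("x25")` holds but `in_identifier_language("9lives")` does not.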

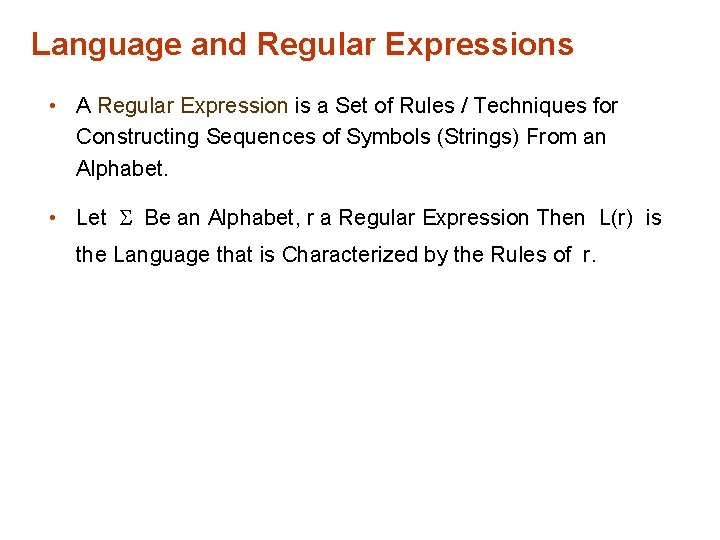

Language and Regular Expressions

- A regular expression is a set of rules / techniques for constructing sequences of symbols (strings) from an alphabet.
- Let Σ be an alphabet and r a regular expression. Then L(r) is the language that is characterized by the rules of r.

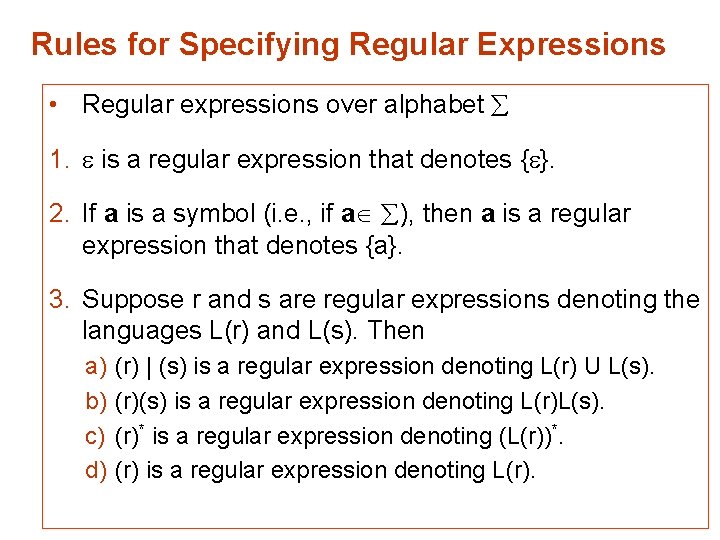

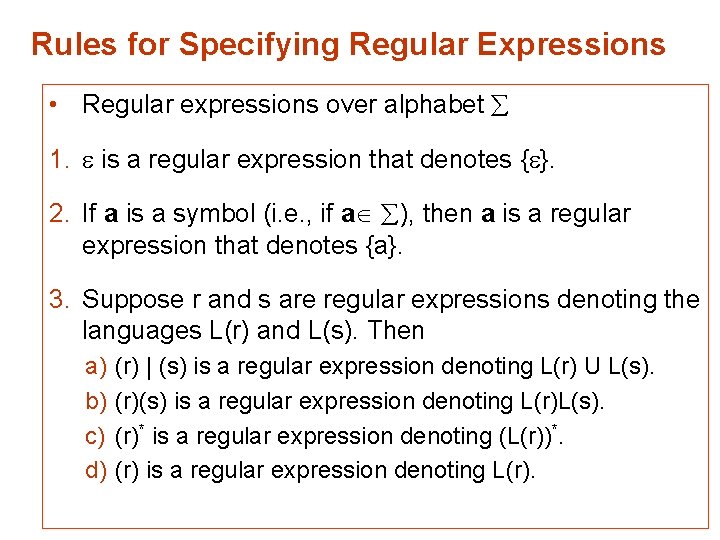

Rules for Specifying Regular Expressions

Regular expressions over alphabet Σ:

1. ε is a regular expression that denotes {ε}.
2. If a is a symbol (i.e., if a ∈ Σ), then a is a regular expression that denotes {a}.
3. Suppose r and s are regular expressions denoting the languages L(r) and L(s). Then
   - a) `(r) | (s)` is a regular expression denoting L(r) ∪ L(s).
   - b) `(r)(s)` is a regular expression denoting L(r)L(s).
   - c) `(r)*` is a regular expression denoting (L(r))*.
   - d) `(r)` is a regular expression denoting L(r).
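The rules above define L(r) by structural recursion, and that recursion can be written down almost literally. In this sketch a regular expression is represented as a nested tuple, an encoding assumed purely for illustration: `("eps",)`, `("sym", "a")`, `("alt", r, s)`, `("cat", r, s)`, and `("star", r)`. Since L(r) may be infinite, the hypothetical `language` function returns only the strings of length at most `max_len`. Rule 3(d) needs no case of its own because parentheses are just the nesting of the tuples.

```python
def language(r, max_len):
    """Strings of L(r) whose length is at most max_len."""
    kind = r[0]
    if kind == "eps":                      # rule 1: denotes {ε}
        return {""}
    if kind == "sym":                      # rule 2: denotes {a}
        return {r[1]} if max_len >= 1 else set()
    if kind == "alt":                      # rule 3a: L(r) ∪ L(s)
        return language(r[1], max_len) | language(r[2], max_len)
    if kind == "cat":                      # rule 3b: L(r)L(s)
        left = language(r[1], max_len)
        right = language(r[2], max_len)
        return {s + t for s in left for t in right if len(s + t) <= max_len}
    if kind == "star":                     # rule 3c: (L(r))*
        base = language(r[1], max_len) - {""}   # dropping ε guarantees progress
        result = {""}
        frontier = {""}
        while frontier:
            frontier = {
                s + t for s in frontier for t in base if len(s + t) <= max_len
            } - result
            result |= frontier
        return result
    raise ValueError(f"unknown node kind: {kind!r}")


a = ("sym", "a")
b = ("sym", "b")
a_or_a_star_b = ("alt", a, ("cat", ("star", a), b))   # a | a*b
```

Evaluating `language(a_or_a_star_b, 3)` lists the strings of L(a | a*b) that are at most three symbols long.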

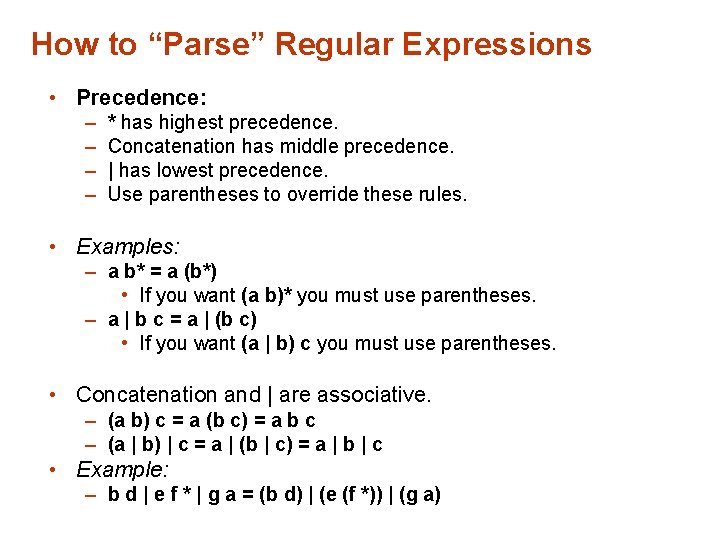

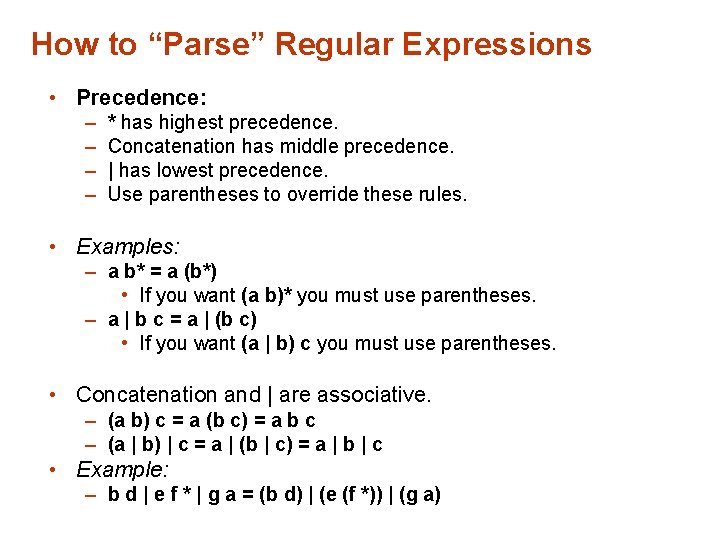

How to "Parse" Regular Expressions

- Precedence:
  - `*` has highest precedence.
  - Concatenation has middle precedence.
  - `|` has lowest precedence.
  - Use parentheses to override these rules.
- Examples:
  - `a b*` = `a (b*)`
    - If you want `(a b)*` you must use parentheses.
  - `a | b c` = `a | (b c)`
    - If you want `(a | b) c` you must use parentheses.
- Concatenation and `|` are associative.
  - `(a b) c` = `a (b c)` = `a b c`
  - `(a | b) | c` = `a | (b | c)` = `a | b | c`
- Example:
  - `b d | e f* | g a` = `(b d) | (e (f*)) | (g a)`
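Python's `re` module uses the same precedence for `*`, concatenation, and `|`, so these equalities can be spot-checked by comparing two patterns on every string up to a small length. This is a bounded check that gives evidence rather than proof; `strings_up_to` and `same_language` are names invented for the sketch, and the spaces used on the slide are dropped because a space would be a literal character in a Python pattern.

```python
import re
from itertools import product


def strings_up_to(alphabet, max_len):
    """Yield every string over alphabet with length 0..max_len."""
    for n in range(max_len + 1):
        for chars in product(alphabet, repeat=n):
            yield "".join(chars)


def same_language(p, q, alphabet, max_len=4):
    """True if patterns p and q accept exactly the same strings up to max_len."""
    return all(
        bool(re.fullmatch(p, s)) == bool(re.fullmatch(q, s))
        for s in strings_up_to(alphabet, max_len)
    )
```

For instance, `same_language("ab*", "a(b*)", "ab")` holds, while `same_language("ab*", "(ab)*", "ab")` does not, since `(ab)*` accepts the empty string and `ab*` does not.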

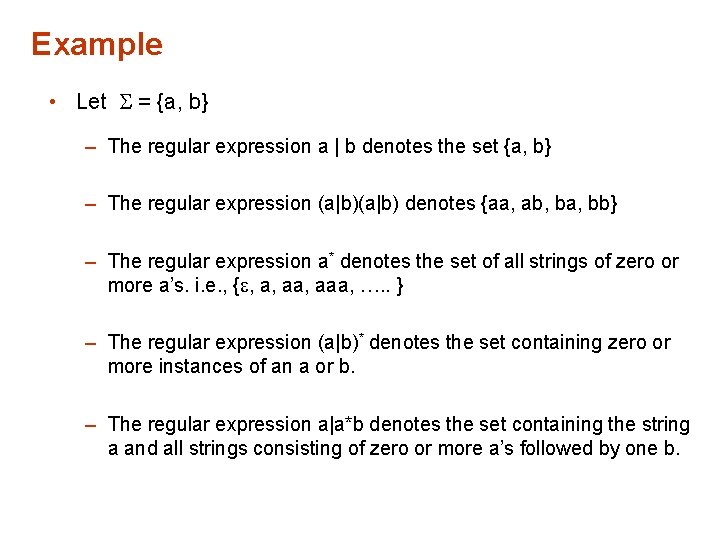

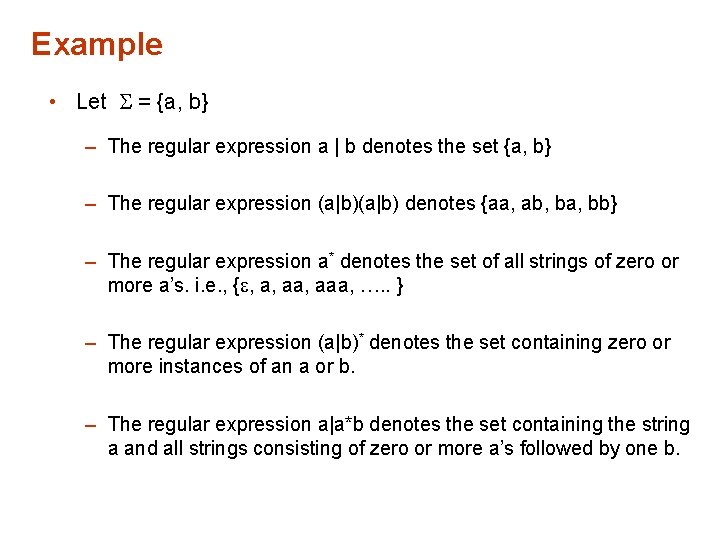

Example

Let Σ = {a, b}.

- The regular expression `a | b` denotes the set {a, b}.
- The regular expression `(a|b)(a|b)` denotes {aa, ab, ba, bb}.
- The regular expression `a*` denotes the set of all strings of zero or more a's, i.e., {ε, a, aa, aaa, ...}.
- The regular expression `(a|b)*` denotes the set of all strings containing zero or more instances of an a or b.
- The regular expression `a|a*b` denotes the set containing the string a and all strings consisting of zero or more a's followed by one b.
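As a quick sanity check, the denoted sets can be listed with Python's `re` module by filtering all strings over {a, b} up to a small length. The `denoted` helper and its length bound are assumptions of this sketch; infinite languages are therefore shown only as a finite slice, and the empty Python string stands for ε.

```python
import re
from itertools import product


def denoted(pattern, alphabet="ab", max_len=3):
    """Strings over alphabet, up to max_len long, that the pattern matches in full."""
    words = (
        "".join(chars)
        for n in range(max_len + 1)
        for chars in product(alphabet, repeat=n)
    )
    matching = (w for w in words if re.fullmatch(pattern, w))
    # Shortest first, then alphabetical, to mirror how the sets are usually listed.
    return sorted(matching, key=lambda w: (len(w), w))
```

Running `denoted("(a|b)(a|b)")` returns exactly the four two-symbol strings, while `denoted("(a|b)*")` returns all fifteen strings of length at most three.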