Learning from Data Streams CS 240 B Notes

by Carlo Zaniolo, UCLA CSD
With slides from an ICDE 2005 tutorial by Haixun Wang, Jian Pei & Philip Yu

What are the Challenges?
- Data volume
  - impossible to mine the entire data at one time
  - can only afford constant memory per data sample
- Concept drift
  - previously learned models become invalid
- Cost of learning
  - model updates can be costly
  - can only afford constant time per data sample

On-Line Learning
- Learning (training):
  - Input: a data set of pairs (a, b), where a is a vector of attribute values and b is a class label
  - Output: a model (e.g., a decision tree)
- Testing:
  - Input: a test sample (x, ?)
  - Output: a predicted class label for x
- When mining data streams, the two phases are often combined, since concept shift requires continuous training.

Mining Data Streams: Challenges
- On-line response (NB), limited memory, most recent windows only
- Fast & light algorithms are needed that
  - minimize usage of memory and CPU
  - require only one (or a few) passes through the data
- Concept shift/drift: changes in the statistics of the mining set
  - render previously learned models inaccurate or invalid
  - robustness and adaptability: quickly recover/adjust after concept changes
- Popular machine learning algorithms are no longer effective:
  - Neural nets: slow learners that require many passes
  - Support Vector Machines (SVM): computationally too expensive
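A count-based sliding window is one way to keep "most recent windows only" within constant memory and constant time per sample. It is used here as an illustrative assumption. `SlidingWindowCounts` is a hypothetical helper that tracks class-label frequencies over the last `size` samples. Data from an outdated concept therefore drops out automatically.

```python
from collections import Counter, deque
from typing import Deque, Hashable, Optional


class SlidingWindowCounts:
    """Class-label counts over only the most recent `size` samples.

    Memory is bounded by the window size, and each update is O(1):
    one append, at most one eviction, and two counter adjustments.
    """

    def __init__(self, size: int) -> None:
        if size <= 0:
            raise ValueError("window size must be positive")
        self.size = size
        self.window: Deque[Hashable] = deque()
        self.counts: Counter = Counter()

    def add(self, label: Hashable) -> None:
        self.window.append(label)
        self.counts[label] += 1
        if len(self.window) > self.size:
            # Evict the oldest label so stale data stops influencing the counts.
            old = self.window.popleft()
            self.counts[old] -= 1
            if self.counts[old] == 0:
                del self.counts[old]

    def majority(self) -> Optional[Hashable]:
        return self.counts.most_common(1)[0][0] if self.counts else None
```

With a window of 3, feeding the labels `A A A B` still yields majority `A`. After two more `B` labels, the window holds only `B`, so the prediction adapts to the new concept.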

Classifier Algorithms: From Databases to Data Streams
- New algorithms have emerged:
  - Bloom filters
  - ANNCAD
- Existing algorithms have been adapted:
  - NBC (naive Bayesian classifier) survives with only minor changes
  - Decision trees require significant adaptation
  - Classifier ensembles remain effective, after significant changes
- Popular algorithms are no longer effective:
  - Neural nets: slow learners that require many passes
  - Support Vector Machines (SVM): computationally too expensive
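NBC needs only minor changes because its model is just a table of counts, and counts can be updated one sample at a time. The following is a minimal sketch, assuming categorical attributes and add-one (Laplace) smoothing. The class name `IncrementalNaiveBayes` is hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, Hashable, Optional, Sequence, Set, Tuple


class IncrementalNaiveBayes:
    """Naive Bayes over categorical attributes, trained one sample at a time.

    The whole model is a set of counts, so each update costs
    O(number of attributes) and no past samples are stored.
    """

    def __init__(self) -> None:
        self.n = 0
        self.class_counts: Dict[Hashable, int] = defaultdict(int)
        # (attribute index, attribute value, class label) -> count
        self.value_counts: Dict[Tuple[int, Hashable, Hashable], int] = defaultdict(int)
        # attribute index -> distinct values seen so far (used for smoothing)
        self.values_seen: Dict[int, Set[Hashable]] = defaultdict(set)

    def learn(self, x: Sequence[Hashable], label: Hashable) -> None:
        self.n += 1
        self.class_counts[label] += 1
        for i, v in enumerate(x):
            self.value_counts[(i, v, label)] += 1
            self.values_seen[i].add(v)

    def predict(self, x: Sequence[Hashable]) -> Optional[Hashable]:
        best_label, best_score = None, -math.inf
        for label, c in self.class_counts.items():
            # log P(class) + sum_i log P(x_i | class), with add-one smoothing
            score = math.log(c / self.n)
            for i, v in enumerate(x):
                k = max(len(self.values_seen.get(i, ())), 1)
                count = self.value_counts.get((i, v, label), 0)
                score += math.log((count + 1) / (c + k))
            if score > best_score:
                best_label, best_score = label, score
        return best_label
```

Handling a stream only requires calling `learn` on each arriving sample. The classifier is usable for `predict` at any point, which is why it carries over from databases to streams almost unchanged.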

Decision Tree Classifiers
- A divide-and-conquer approach
  - Simple algorithm, intuitive model
- Typically a decision tree grows one level for each scan of the data
  - Multiple scans are required
  - But if we can use small samples, this problem disappears
- But the data structure is not 'stable'
  - Subtle changes in the data can cause global changes in the data structure

Challenge #1
How many samples do we need to build, in constant time, a tree that is nearly identical to the tree built by a batch learner (C4.5, SPRINT, ...)?
Nearly identical?
- Categorical attributes:
  - with high probability, the attribute we choose for a split is the same attribute that would be chosen by a batch learner
  - hence an identical decision tree
- Continuous attributes:
  - discretize them into categorical ones
(Forget concept shift/drift for now.)
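Attribute selection by information gain over categorical attributes makes "the attribute we choose for a split" concrete. The criterion is an assumption for illustration only: C4.5 itself uses the gain ratio, and SPRINT uses the Gini index. The function names are hypothetical.

```python
import math
from collections import Counter, defaultdict
from typing import Hashable, List, Sequence, Tuple

Sample = Tuple[Sequence[Hashable], Hashable]  # (attribute vector, class label)


def entropy(counts) -> float:
    """Entropy (in bits) of a class distribution given as counts."""
    total = sum(counts)
    return -sum(c / total * math.log2(c / total) for c in counts if c)


def info_gain(samples: List[Sample], attr: int) -> float:
    """Entropy reduction from splitting `samples` on categorical attribute `attr`."""
    base = entropy(Counter(y for _, y in samples).values())
    by_value = defaultdict(Counter)  # attribute value -> class counts
    for x, y in samples:
        by_value[x[attr]][y] += 1
    n = len(samples)
    remainder = sum(
        sum(cnt.values()) / n * entropy(cnt.values()) for cnt in by_value.values()
    )
    return base - remainder


def best_split(samples: List[Sample]) -> int:
    """Index of the attribute with the highest information gain."""
    n_attrs = len(samples[0][0])
    return max(range(n_attrs), key=lambda a: info_gain(samples, a))
```

The stream learner computes these gains from a sample at a node rather than from the full data. The question is then how large that sample must be before its best attribute matches the batch learner's choice with high probability.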

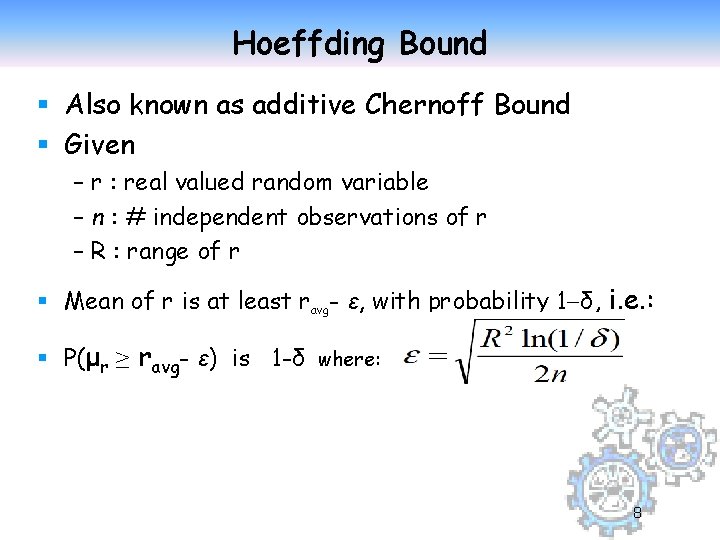

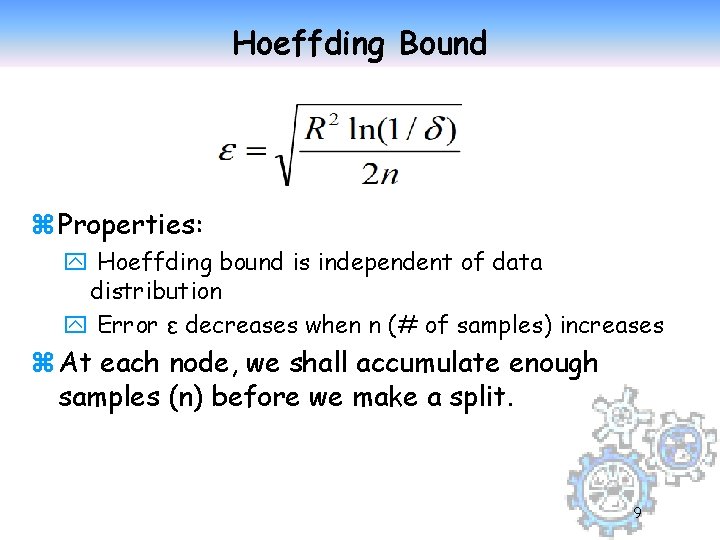

Hoeffding Bound
- Also known as the additive Chernoff bound
- Given:
  - r: a real-valued random variable
  - n: the number of independent observations of r
  - R: the range of r
- The mean of r is at least r_avg - ε with probability at least 1 - δ, i.e.:
  - $P(\mu_r \geq r_{avg} - \epsilon) \geq 1 - \delta$, where $\epsilon = \sqrt{\frac{R^2 \ln(1/\delta)}{2n}}$
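The bound can be evaluated directly. The following is a small sketch in which `hoeffding_epsilon` is a hypothetical helper name. Its parameters correspond to R, δ and n above.

```python
import math


def hoeffding_epsilon(value_range: float, delta: float, n: int) -> float:
    """epsilon = sqrt(R^2 * ln(1/delta) / (2n)).

    With probability at least 1 - delta, the true mean of r is at least
    (observed mean - epsilon) after n independent observations.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 < delta < 1:
        raise ValueError("delta must be in (0, 1)")
    return math.sqrt(value_range ** 2 * math.log(1 / delta) / (2 * n))


for n in (100, 1_000, 10_000):
    print(n, hoeffding_epsilon(1.0, 1e-6, n))
```

With R = 1 and δ = 10^-6, ε shrinks by a factor of √10 for every tenfold increase in n. The formula uses only R, δ and n, so nothing about the distribution of r enters.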

Hoeffding Bound
- Properties:
  - The Hoeffding bound is independent of the data distribution
  - The error ε decreases as n (the number of samples) increases
- At each node, we accumulate enough samples (n) before making a split.
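Inverting the bound gives the number of samples a node must accumulate to reach a target ε: n ≥ R² ln(1/δ) / (2ε²). The following sketch uses a hypothetical helper, `samples_needed`.

```python
import math


def samples_needed(value_range: float, delta: float, epsilon: float) -> int:
    """Smallest n with sqrt(R^2 ln(1/delta) / (2n)) <= epsilon,
    i.e. n >= R^2 ln(1/delta) / (2 epsilon^2)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if not 0 < delta < 1:
        raise ValueError("delta must be in (0, 1)")
    return math.ceil(value_range ** 2 * math.log(1 / delta) / (2 * epsilon ** 2))
```

Consider R = 1, which holds for information gain with two classes because its range is log2(2) = 1. With δ = 10^-6 and ε = 0.05, the function returns 2764. Halving ε roughly quadruples n.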

Nearly Identical?
- Categorical attributes:
  - with high probability, the attribute we choose for a split is the same attribute that would be chosen by a batch learner
  - thus we seek an identical decision tree
- Continuous attributes: discretize them into categorical ones.
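The slides do not say how to discretize continuous attributes. One simple option, assumed here purely for illustration, is equal-width binning over a range fixed in advance, because a stream cannot first be scanned to find the true minimum and maximum. `equal_width_bin` and `discretize` are hypothetical helpers.

```python
from typing import Sequence, Tuple


def equal_width_bin(value: float, low: float, high: float, n_bins: int) -> int:
    """Map a continuous value to a bin index in [0, n_bins - 1].

    Assumes the attribute's range [low, high] is known in advance;
    out-of-range values are clamped to the first or last bin.
    """
    if n_bins <= 0 or high <= low:
        raise ValueError("need n_bins > 0 and high > low")
    width = (high - low) / n_bins
    index = int((value - low) // width)
    return min(max(index, 0), n_bins - 1)


def discretize(
    x: Sequence[float], bounds: Sequence[Tuple[float, float]], n_bins: int
) -> Tuple[int, ...]:
    """Turn a vector of continuous attributes into categorical bin labels."""
    return tuple(equal_width_bin(v, lo, hi, n_bins) for v, (lo, hi) in zip(x, bounds))
```

Each call is constant time and needs no stored history, so the output can feed a categorical stream learner directly. The cost of this simplicity is that the bin boundaries cannot adapt if the attribute's range drifts.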
