KAGGLE CHALLENGE 1 Name: Sofia Saleem Baloch ERP: 14777

FIRST MODEL: RANDOM FOREST
• Started with Random Forest.
• My first few attempts involved a lot of experimentation with column filtering to find a good combination. I got a better result with ID_CLIENT and the date-time column filtered out.
• Other nodes: Number to String (client ID, branch ID, and target label were converted to string); Domain Calculator for datetime and age.
• Later added Partitioning, ROC Curve, and Statistics nodes to better analyze the data sets and the prediction output.

SECOND MODEL: DECISION TREE
• Used the same settings as the Random Forest model but did not get good scores, so I did not work on this for long.

THIRD MODEL: NAÏVE BAYES
• Added row-based filters to the training data set so that outliers and incorrectly entered data are filtered out before building the model (age below 18 or above 100, more than 30 dependents, marital status ‘V’).
• String replacer for incorrect data entries in marital status:
  words containing widower => Widower (e.g. ‘Widoweringle’ and ‘Widower. Married’ become ‘Widower’)
  words containing married => Married (entries containing Married become ‘Married’)
• This improved the Naïve Bayes prediction but did not improve my overall best score; Random Forest was still working better.
• The same filters, when applied to Random Forest, gave me a better overall score.
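The row filters and string replacer described above were built with KNIME nodes. As a rough illustration only, the same cleaning logic could be sketched in plain Python as follows. The dictionary keys `age`, `dependents`, and `marital_status` are hypothetical names chosen for this sketch, not the actual column names of the data set, and the function names are likewise invented here.

```python
def normalize_marital_status(value):
    """Collapse mistyped entries onto one label, similar to a string replacer."""
    lowered = value.lower()
    # Check "widower" first so an entry containing both words maps to "Widower".
    if "widower" in lowered:
        return "Widower"
    if "married" in lowered:
        return "Married"
    return value


def keep_training_row(row):
    """Row filter: reject rows with implausible values (thresholds from the slide)."""
    if row["age"] < 18 or row["age"] > 100:
        return False
    if row["dependents"] > 30:
        return False
    if row["marital_status"] == "V":
        return False
    return True


def clean_training_rows(rows):
    """Apply the row filter, then normalize marital status on the rows that remain."""
    cleaned = []
    for row in rows:
        if not keep_training_row(row):
            continue
        fixed = dict(row)  # copy so the input rows are left untouched
        fixed["marital_status"] = normalize_marital_status(row["marital_status"])
        cleaned.append(fixed)
    return cleaned
```

In this sketch the filter is applied only to training rows, matching the slide; how the test set was handled is not covered here.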

FOURTH MODEL: GRADIENT BOOSTING
• Score went up by 0.02 when I replaced the Random Forest learner and predictor with the Gradient Boosting ones.
• Previous rule-based filters and string replacer still used.
• Filtered out: client ID, date and time
• Learner settings: default

CHANGES TO GRADIENT BOOSTING MODEL

Things that improved score
• Creating a separate category called Flag_Parents.Name that said Y if either Flag_Mother.Name OR Flag_Father.Name was Y. However, if both flags were N, Flag_Parents.Name showed a question mark (missing value). I used Flag_Parents.Name in prediction and my score improved. This was done using a rule engine for both the testing and training data sets.
• Changing no. of models to 200
• Removing row filtering for age
• Fraction of data to learn single model: 0.8
  Without replacement
  Use mid point splits
  Use binary splits

Score dropped when I edited the rules so that Flag_Parents.Name said N when both flags said N. Decided not to question it and left those N’s as ?’s.

Things that did not improve score
• Filtering out credit analysis
• Backward elimination
• Changing tree depth only
• Changing learning rate only
• Removing row filtering for dependents
• For testing set: turning invalid ages into missing, then replacing those missing values using the average
• Creating 3 categories for age and an invalid category for invalid ages
• Filtering out flags for mother name, father name and both
• Salary row filtering
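The Flag_Parents.Name rule described above was implemented with a KNIME rule engine. A minimal Python sketch of the same rule, assuming each row is a dictionary keyed by column name and using `None` to stand in for KNIME's missing value ("?"), might look like this. The function names are invented for illustration.

```python
def flag_parents_name(flag_mother, flag_father):
    """Return "Y" if either parent flag is "Y"; otherwise None (missing value)."""
    if flag_mother == "Y" or flag_father == "Y":
        return "Y"
    # Both flags are "N": left as missing rather than "N", which scored better.
    return None


def add_flag_parents(rows):
    """Return copies of the rows with a derived Flag_Parents.Name column added."""
    result = []
    for row in rows:
        enriched = dict(row)  # copy so the original rows are not modified
        enriched["Flag_Parents.Name"] = flag_parents_name(
            row["Flag_Mother.Name"], row["Flag_Father.Name"]
        )
        result.append(enriched)
    return result
```

The same function would be applied to both the training and the testing rows, so the derived column is defined identically in each.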

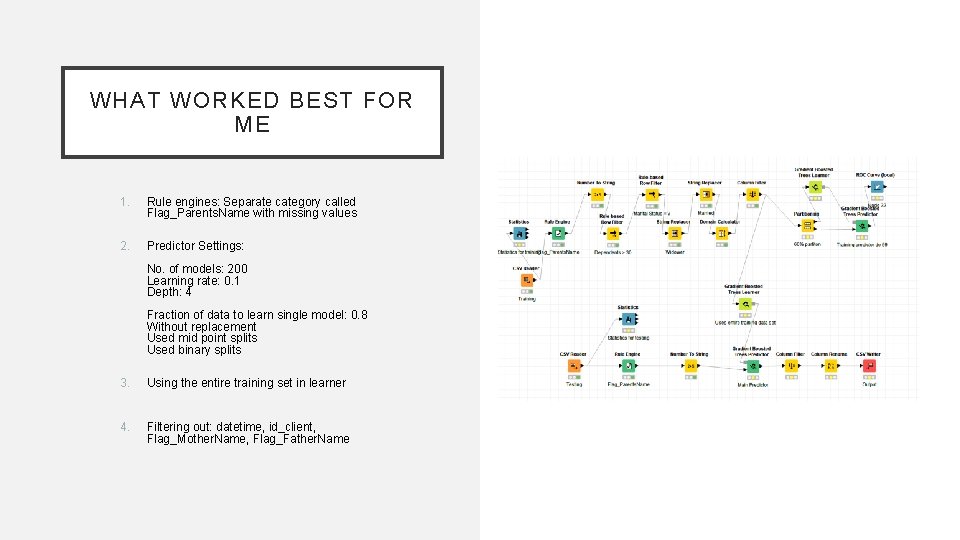

WHAT WORKED BEST FOR ME
1. Rule engines: separate category called Flag_Parents.Name with missing values
2. Predictor settings:
   No. of models: 200
   Learning rate: 0.1
   Depth: 4
   Fraction of data to learn single model: 0.8
   Without replacement
   Used mid point splits
   Used binary splits
3. Using the entire training set in learner
4. Filtering out: datetime, id_client, Flag_Mother.Name, Flag_Father.Name
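To make the "fraction of data to learn single model: 0.8, without replacement" setting concrete, the sketch below draws one row subset per model, each containing 80% of the rows with no row repeated inside a subset. This is only an illustration of the sampling idea, not KNIME's implementation; the function name, the `seed` parameter, and the rounding of the subset size are assumptions made for this example.

```python
import random


def draw_row_subsets(n_rows, n_models=200, fraction=0.8, seed=0):
    """Draw one subset of row indices per model, sampling without replacement."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    subset_size = round(n_rows * fraction)
    subsets = []
    for _ in range(n_models):
        # random.sample never picks the same index twice within one draw.
        subsets.append(sorted(rng.sample(range(n_rows), subset_size)))
    return subsets
```

Each model would then be trained only on the rows in its own subset, while different models can still share rows with one another.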

- Slides: 7