Modeling Imbalanced Data

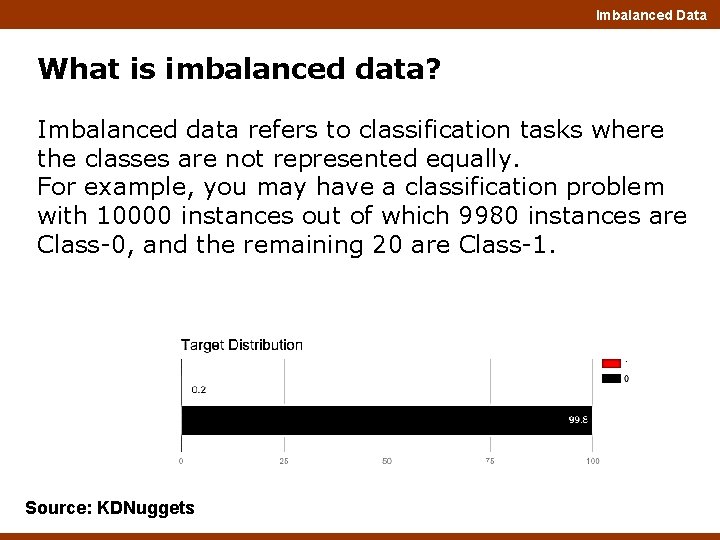

What is imbalanced data?

Imbalanced data refers to classification tasks in which the classes are not represented equally. For example, a classification problem may have 10,000 instances, of which 9,980 belong to Class-0 and only 20 to Class-1. Source: KDNuggets
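To make the 10,000-instance example concrete, the following minimal sketch builds a label vector with the same class counts and reports how skewed it is. The variable names are illustrative only and do not come from the original slides.

```python
import numpy as np

# Labels matching the example: 9,980 instances of class 0 and 20 of class 1.
y = np.array([0] * 9980 + [1] * 20)

# Count how many instances each class has.
classes, counts = np.unique(y, return_counts=True)
class_counts = {int(c): int(n) for c, n in zip(classes, counts)}

# Share of the minority class and the majority:minority ratio.
minority_share = class_counts[1] / len(y)
imbalance_ratio = class_counts[0] / class_counts[1]

print(class_counts)
print(f"minority share: {minority_share:.2%}")
print(f"majority:minority ratio: {imbalance_ratio:.0f}:1")
```

Checking the class counts like this is a sensible first step before choosing any of the re-sampling strategies discussed next.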

Why are imbalanced datasets a serious problem to tackle?

- When one class is underrepresented, the class distribution becomes skewed.
- Because of the inherently complex characteristics of such a dataset, learning from it requires new understanding and new approaches to transforming the data. Even then, an efficient solution to the business problem is not guaranteed.

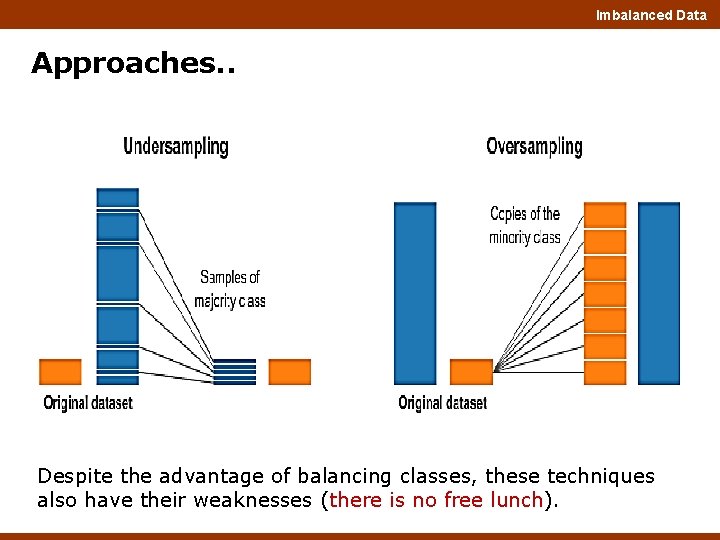

Approaches for handling imbalanced data

Re-sampling the dataset

Strategies for dealing with imbalanced datasets include:

- improving the classification algorithms, or
- balancing the classes in the training data (essentially a data preprocessing step).

The latter is usually preferred because it applies and adapts more broadly, and because enhancing an algorithm takes more time. For research purposes, both are used.

To balance the classes, either add copies of instances from the minority class, which is called over-sampling (or, more formally, sampling with replacement), or delete instances from the majority class, which is called under-sampling.

Despite the advantage of balancing the classes, these techniques also have weaknesses (there is no free lunch).

Random under-sampling

Random under-sampling randomly eliminates instances from the majority class of a dataset.

Advantages:
- It can improve runtime and ease memory problems by reducing the number of training samples when the training set is enormous.

Disadvantages:
- It can discard useful information that could be necessary for modeling.
- The sample chosen may be biased and therefore not an accurate representation of the population, which can cause the classifier to perform poorly on unseen data.

![Random under-sampling code](http://slidetodoc.com/presentation_image_h2/2899daeb3ed39d0cda3a337cdb7eb04a/image-7.jpg)

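    import pandas as pd

    # Assumes df_class_0 (majority rows), df_class_1 (minority rows) and
    # count_class_1 (number of minority rows) were prepared earlier.
    # Draw as many majority rows as there are minority rows.
    df_class_0_under = df_class_0.sample(count_class_1)
    df_test_under = pd.concat([df_class_0_under, df_class_1], axis=0)

    print('Random under-sampling:')
    print(df_test_under.target.value_counts())
    df_test_under.target.value_counts().plot(kind='bar', title='Count (target)');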

Random over-sampling

In this case, the number of minority-class instances is increased by replicating them; the number of majority-class instances is not decreased.

- Consider a dataset with 1,000 instances, 20 of which belong to the minority class. Over-sample the dataset by replicating the 20 instances so that each appears 20 times. As a result, the minority class will contain 400 instances.

Advantages:
- Unlike under-sampling, this method leads to no information loss.

Disadvantages:
- It increases the likelihood of overfitting because it replicates the minority-class events.
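The arithmetic of the 1,000-instance example can be checked with a small sketch. The toy DataFrame and its column names (`feature`, `target`) are assumptions made for illustration; here every minority row is repeated exactly 20 times rather than drawn at random.

```python
import pandas as pd

# Toy dataset from the example: 1,000 rows, 20 of which are the minority class.
df = pd.DataFrame({
    "feature": range(1000),
    "target": [1] * 20 + [0] * 980,
})
df_minority = df[df["target"] == 1]
df_majority = df[df["target"] == 0]

# Repeat every minority row 20 times (20 rows x 20 copies = 400 rows).
df_minority_over = pd.concat([df_minority] * 20, ignore_index=True)
df_over = pd.concat([df_majority, df_minority_over], ignore_index=True)

counts = df_over["target"].value_counts().to_dict()
print(counts)
```

The minority class grows to 400 rows, but it still contains only 20 distinct observations, which is why this approach raises the risk of overfitting.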

![Random over-sampling code](http://slidetodoc.com/presentation_image_h2/2899daeb3ed39d0cda3a337cdb7eb04a/image-9.jpg)

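    import pandas as pd

    # Assumes df_class_0 (majority rows), df_class_1 (minority rows) and
    # count_class_0 (number of majority rows) were prepared earlier.
    # Sample minority rows with replacement until they match the majority count.
    df_class_1_over = df_class_1.sample(count_class_0, replace=True)
    df_test_over = pd.concat([df_class_0, df_class_1_over], axis=0)

    print('Random over-sampling:')
    print(df_test_over.target.value_counts())
    df_test_over.target.value_counts().plot(kind='bar', title='Count (target)');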

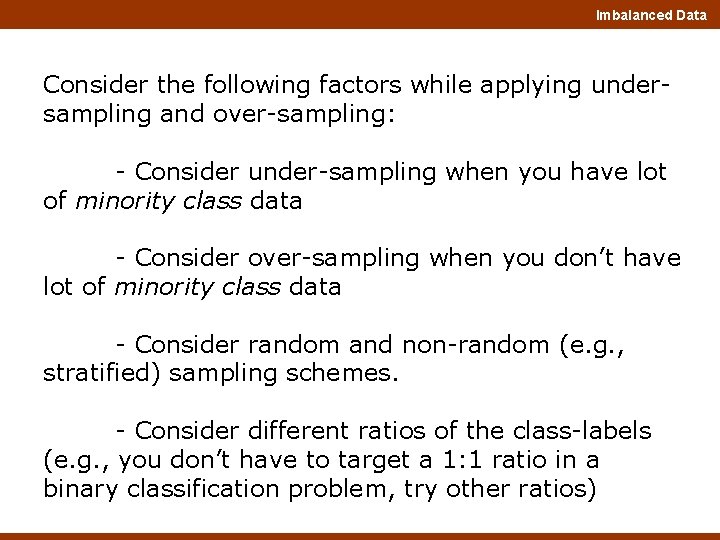

Consider the following factors when applying under-sampling and over-sampling:

- Consider under-sampling when you have a lot of minority-class data.
- Consider over-sampling when you don't have a lot of minority-class data.
- Consider random and non-random (e.g., stratified) sampling schemes.
- Consider different ratios of the class labels (e.g., you don't have to target a 1:1 ratio in a binary classification problem; try other ratios).
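The last point, trying ratios other than 1:1, might look like the sketch below. The helper `undersample_to_ratio` and its parameters are hypothetical names introduced for illustration, and the sketch assumes a binary target with one clearly larger class.

```python
import pandas as pd

def undersample_to_ratio(df, target_col, majority_per_minority, random_state=0):
    """Randomly under-sample the majority class to a chosen majority:minority ratio.

    Hypothetical helper for illustration; assumes a binary, imbalanced target.
    """
    counts = df[target_col].value_counts()
    minority_label, majority_label = counts.idxmin(), counts.idxmax()
    df_minority = df[df[target_col] == minority_label]
    df_majority = df[df[target_col] == majority_label]

    # Never ask for more majority rows than exist.
    n_keep = min(len(df_majority), int(majority_per_minority * len(df_minority)))
    df_majority_under = df_majority.sample(n=n_keep, random_state=random_state)

    # Shuffle so the two classes are not grouped in blocks.
    combined = pd.concat([df_majority_under, df_minority])
    return combined.sample(frac=1, random_state=random_state)

# Toy data: 980 majority rows and 20 minority rows.
df = pd.DataFrame({"feature": range(1000), "target": [0] * 980 + [1] * 20})

# Keep three majority rows for every minority row instead of forcing 1:1.
df_3_to_1 = undersample_to_ratio(df, "target", majority_per_minority=3)
counts = df_3_to_1["target"].value_counts().to_dict()
print(counts)
```

A milder ratio such as 3:1 discards less majority-class information than a strict 1:1 balance; which ratio works best is something to evaluate on held-out data.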

Under- and over-sampling: information-based approaches

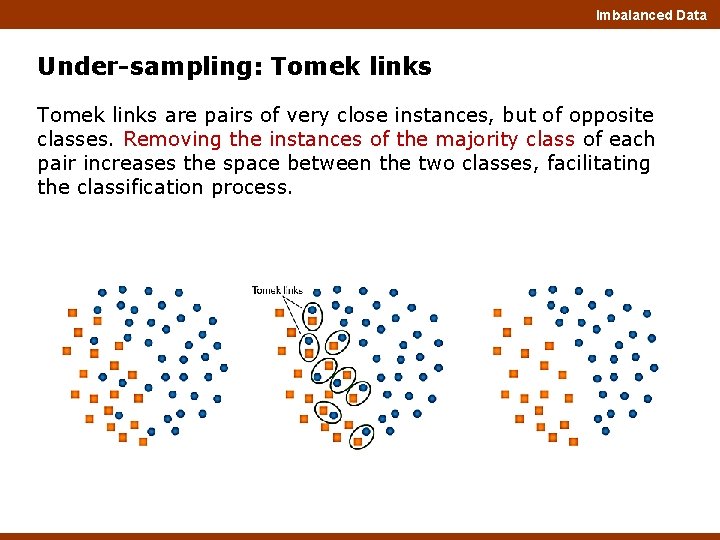

Under-sampling: Tomek links

Tomek links are pairs of very close instances that belong to opposite classes. Removing the majority-class instance of each pair increases the space between the two classes, which facilitates classification.

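    import numpy as np
    from imblearn.under_sampling import TomekLinks

    # X, y and the plotting helper plot_2d_space are assumed to be defined earlier.
    # Recent imbalanced-learn releases use sampling_strategy, fit_resample and
    # sample_indices_ in place of the older ratio, fit_sample and return_indices.
    tl = TomekLinks(sampling_strategy='majority')
    X_tl, y_tl = tl.fit_resample(X, y)

    # sample_indices_ lists the rows that were kept; the rest were removed.
    id_removed = np.setdiff1d(np.arange(len(X)), tl.sample_indices_)

    print('Removed indexes:', id_removed)
    plot_2d_space(X_tl, y_tl, 'Tomek links undersampling')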
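To see what a Tomek link is without relying on a library, the sketch below finds them directly with NumPy, taking a link to be a pair of mutual nearest neighbours with different labels. The function name `find_tomek_links` and the toy points are assumptions for illustration; the brute-force distance matrix makes this suitable for small data only.

```python
import numpy as np

def find_tomek_links(X, y):
    """Return index pairs (i, j), i < j, that form Tomek links."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    # Pairwise Euclidean distances; O(n^2) memory, so only for small data.
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    np.fill_diagonal(dists, np.inf)  # a point is not its own neighbour
    nearest = dists.argmin(axis=1)

    links = []
    for i, j in enumerate(nearest):
        # Mutual nearest neighbours that carry different labels.
        if i < j and nearest[j] == i and y[i] != y[j]:
            links.append((i, int(j)))
    return links

# Toy data: points 2 and 3 sit very close together but have opposite labels.
X = np.array([[0.0, 0.0], [0.1, 0.0], [1.0, 1.0], [1.05, 1.0],
              [3.0, 3.0], [3.2, 3.0], [-1.0, -1.0]])
y = np.array([0, 0, 0, 1, 1, 1, 0])

links = find_tomek_links(X, y)

# Drop only the majority-class member (label 0) of each link.
majority_label = 0
drop = [i for pair in links for i in pair if y[i] == majority_label]
keep = np.setdiff1d(np.arange(len(X)), drop)
X_tl, y_tl = X[keep], y[keep]

print(links)
print(keep)
```

Only the majority point of each link is removed, so the minority class is left untouched while the gap between the classes widens.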

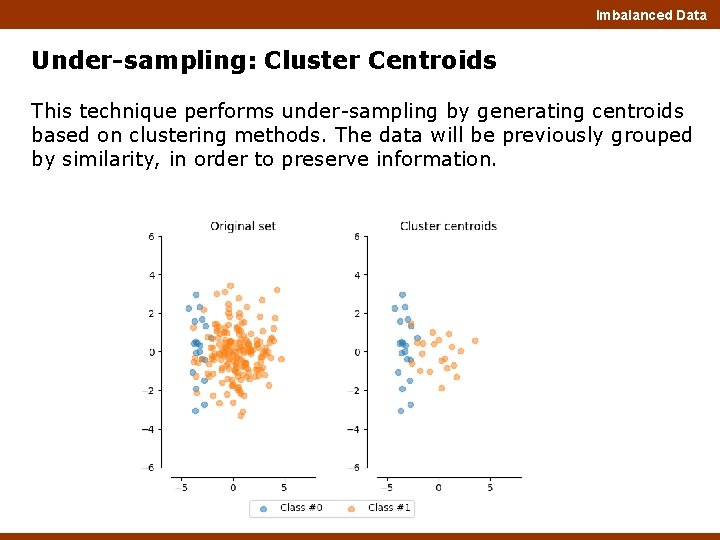

Under-sampling: Cluster Centroids

This technique under-samples by generating centroids with a clustering method. The data are first grouped by similarity in order to preserve information.

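    from imblearn.under_sampling import ClusterCentroids

    # X, y and the plotting helper plot_2d_space are assumed to be defined earlier.
    # Recent imbalanced-learn releases use sampling_strategy and fit_resample
    # in place of the older ratio and fit_sample.
    cc = ClusterCentroids(sampling_strategy={0: 10})
    X_cc, y_cc = cc.fit_resample(X, y)
    plot_2d_space(X_cc, y_cc, 'Cluster Centroids undersampling')

In this example, the dict {0: 10} is passed as the sampling_strategy parameter (named ratio in older releases) to keep 10 elements from the majority class (0) and all of the minority class (1).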
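The idea behind cluster-centroid under-sampling can be sketched with scikit-learn's `KMeans`: cluster the majority class and keep one centroid per cluster. This is an illustrative approximation, not the imbalanced-learn implementation; the function name `cluster_centroid_undersample` and the synthetic data are assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans

def cluster_centroid_undersample(X, y, majority_label, n_centroids, random_state=0):
    """Replace the majority class with n_centroids k-means centroids."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    is_majority = y == majority_label

    # Group the majority rows by similarity.
    kmeans = KMeans(n_clusters=n_centroids, n_init=10, random_state=random_state)
    kmeans.fit(X[is_majority])

    # Keep the centroids plus every minority row unchanged.
    X_res = np.vstack([kmeans.cluster_centers_, X[~is_majority]])
    y_res = np.concatenate([np.full(n_centroids, majority_label), y[~is_majority]])
    return X_res, y_res

# Synthetic data: 200 majority points around (0, 0), 15 minority points around (3, 3).
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, size=(200, 2)),
               rng.normal(3.0, 1.0, size=(15, 2))])
y = np.array([0] * 200 + [1] * 15)

X_cc, y_cc = cluster_centroid_undersample(X, y, majority_label=0, n_centroids=10)
print(X_cc.shape, np.bincount(y_cc))
```

In this sketch the 10 majority points that remain are cluster centres, that is, new points summarising groups of similar rows rather than rows taken from the original data.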

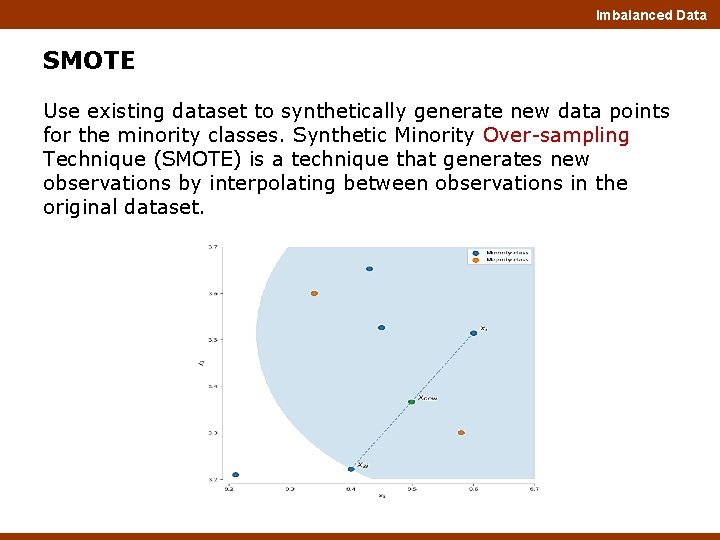

SMOTE

SMOTE uses the existing dataset to synthetically generate new data points for the minority classes. The Synthetic Minority Over-sampling Technique (SMOTE) generates new observations by interpolating between observations in the original dataset.

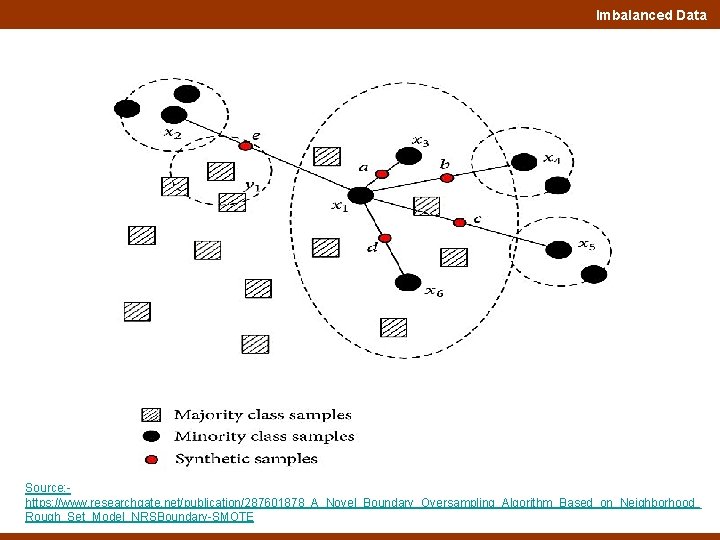

Source: https://www.researchgate.net/publication/287601878_A_Novel_Boundary_Oversampling_Algorithm_Based_on_Neighborhood_Rough_Set_Model_NRSBoundary-SMOTE

The intuition behind the construction algorithm is simple:

- Random over-sampling causes overfitting: because instances are repeated, the decision boundary tightens.
- SMOTE generates similar samples instead of repeating existing ones. The newly constructed instances are not exact copies, so they soften the decision boundary and help the algorithm approximate the hypothesis more accurately.
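The interpolation step can be sketched in a few lines of NumPy: pick a minority point, pick one of its k nearest minority neighbours, and place a new point somewhere on the segment between them. This is a simplified illustration under stated assumptions, not the imbalanced-learn implementation; the function name `smote_sketch` and its parameters are hypothetical, and at least two minority points are required.

```python
import numpy as np

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Generate n_new synthetic points by interpolating between minority neighbours."""
    X_min = np.asarray(X_min, dtype=float)
    rng = np.random.default_rng(seed)
    k = min(k, len(X_min) - 1)  # cannot have more neighbours than other points

    # Brute-force distances between minority points only.
    dists = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=-1)
    np.fill_diagonal(dists, np.inf)
    neighbours = np.argsort(dists, axis=1)[:, :k]  # k nearest minority neighbours

    base = rng.integers(0, len(X_min), size=n_new)             # random starting points
    picked = neighbours[base, rng.integers(0, k, size=n_new)]  # one neighbour for each
    lam = rng.random((n_new, 1))                               # position along the segment

    # New point = base + lam * (neighbour - base), with lam in [0, 1).
    return X_min[base] + lam * (X_min[picked] - X_min[base])

X_min = np.array([[1.0, 1.0], [1.5, 1.2], [2.0, 1.0],
                  [1.2, 1.8], [1.8, 1.9], [1.4, 1.5]])
X_new = smote_sketch(X_min, n_new=10, k=3)
print(X_new.round(2))
```

Because each synthetic point lies between two existing minority points, it stays inside the region the minority class already occupies. Note that the sketch never looks at the majority class, so it cannot tell whether a new point falls in a region where the classes overlap.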

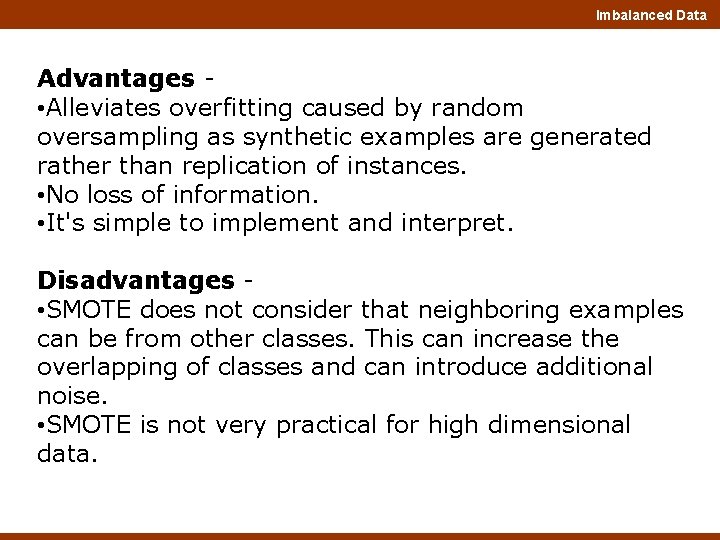

Advantages:
- It alleviates the overfitting caused by random over-sampling, because synthetic examples are generated instead of replicating instances.
- There is no loss of information.
- It is simple to implement and interpret.

Disadvantages:
- SMOTE does not consider that neighboring examples can belong to other classes. This can increase the overlap between classes and introduce additional noise.
- SMOTE is not very practical for high-dimensional data.

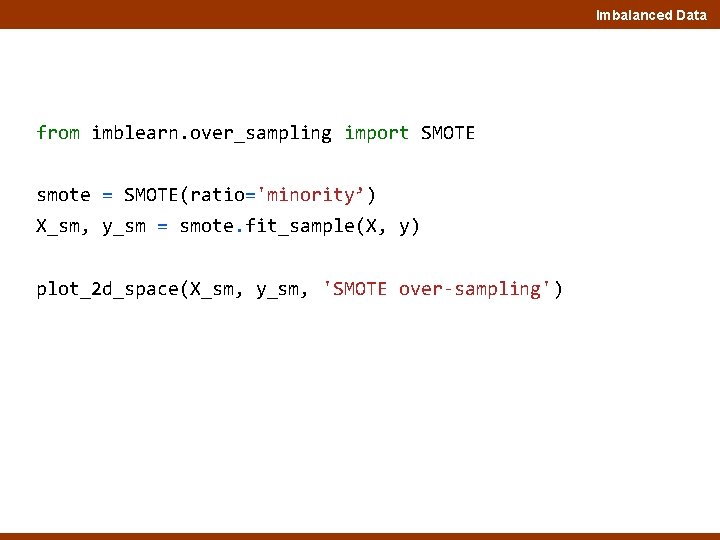

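    from imblearn.over_sampling import SMOTE

    # X, y and the plotting helper plot_2d_space are assumed to be defined earlier.
    # Recent imbalanced-learn releases use sampling_strategy and fit_resample
    # in place of the older ratio and fit_sample.
    smote = SMOTE(sampling_strategy='minority')
    X_sm, y_sm = smote.fit_resample(X, y)
    plot_2d_space(X_sm, y_sm, 'SMOTE over-sampling')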
