The Workload Management and Logging and Bookkeeping System
Di Qing, Grid Deployment Group, Academia Sinica & CERN
WLCG Collaboration Workshop, 25 January 2007
Enabling Grids for E-sciencE (EGEE-II), www.eu-egee.org, INFSO-RI-031688

Outline
• Introduction
• Installation and configuration
• Test your site
• Troubleshooting

Introduction (I)
• Focus on the gLite job management system, especially on troubleshooting
• Workload Management System (WMS)
  – Backward compatible with LCG-2
  – WMProxy
    § Web service interface to the WMS
    § Supports bulk submission and jobs with shared sandboxes
  – Support for shallow resubmission
  – Supports BDII, R-GMA and CEMon as resource information repositories
  – Support for data management interfaces (DLI and StorageIndex)
  – Support for DAG jobs
  – …

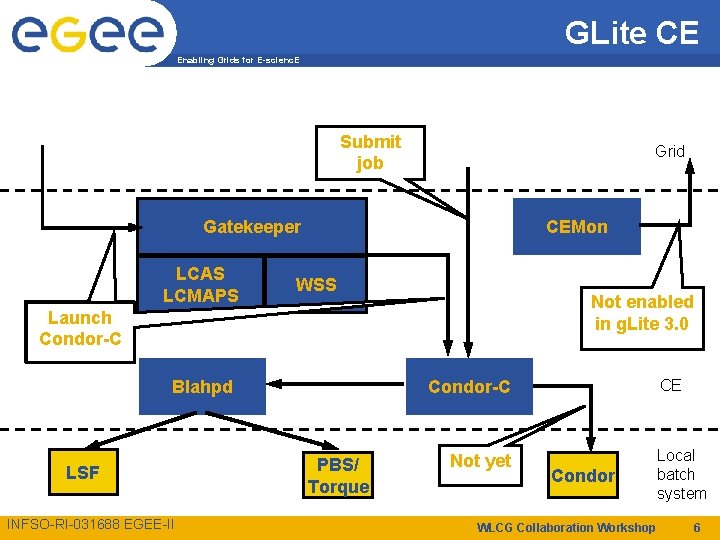

Introduction (II)
• Logging and Bookkeeping (L&B)
  – Tracks jobs during their lifetime (in terms of events)
  – L&B Proxy
    § Provides faster, synchronous and more efficient access to L&B services for the Workload Management Services
• Computing Element (CE)
  – Service representing a computing resource
  – CE moving towards a VO-based local scheduler
  – Batch Local ASCII Helper (BLAH)
    § More efficient parsing of log files (these can be left residing on a remote machine)
    § Support for hold and resume in BLAH, to be used e.g. to put a job on hold while waiting for the staging of its input data
  – Condor-C, GSI enabled
  – CE Monitor (not enabled in gLite 3.0)
    § Better support for the pull mode; more efficient handling of CEMon reporting
    § Security support

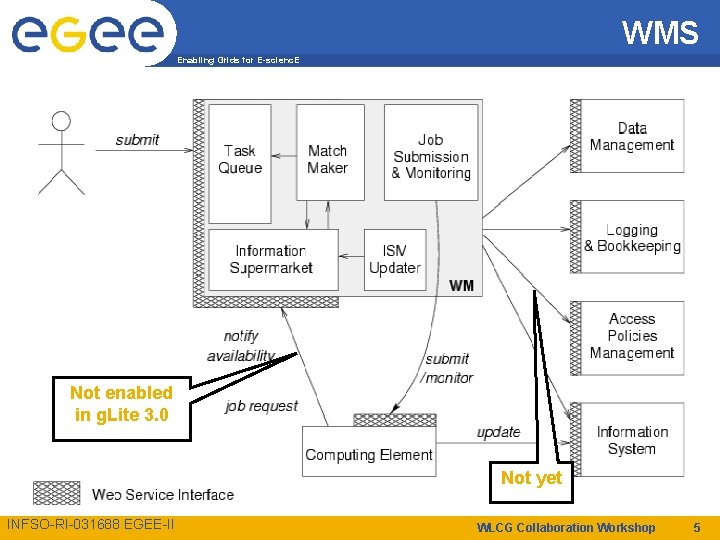

WMS (architecture diagram; annotations in the figure: "Not enabled in gLite 3.0", "Not yet")

gLite CE (architecture diagram; labels in the figure: Submit job, Grid, Gatekeeper, LCAS, LCMAPS, CEMon, WSS, Launch Condor-C, Condor-C, Blahpd, CE, local batch system: LSF, PBS/Torque, Condor; annotations: "Not enabled in gLite 3.0", "Not yet")

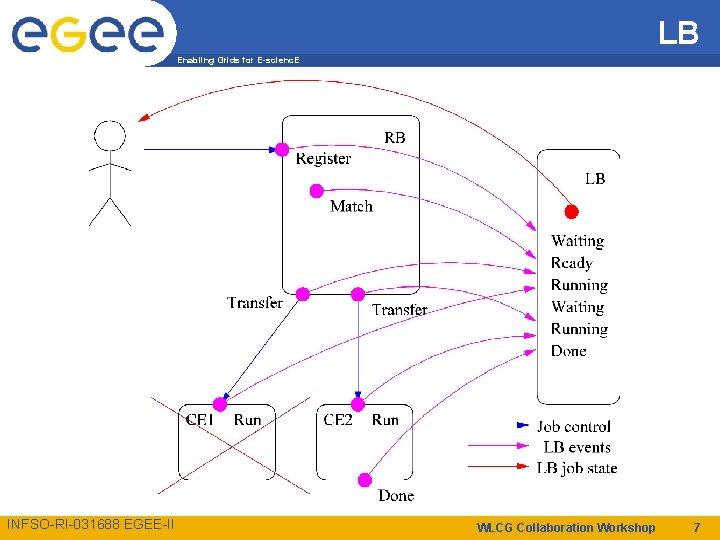

LB (architecture diagram)

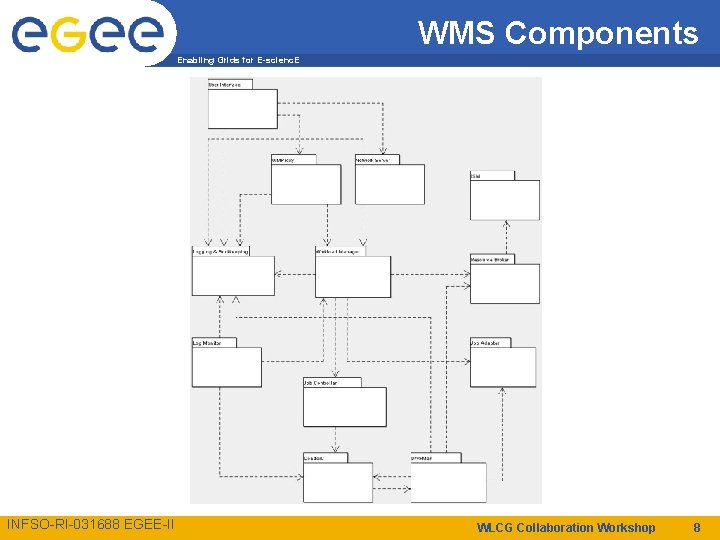

WMS Components (diagram)

Installation (I)
• apt-get, yum, manual installation, tarball
  – glite-WMS, glite-LB, glite-WMSLB
• CPU architecture
  – If you have a non-i386 architecture and need to install some i386 packages, DON'T use apt: it chooses by repository priority, not by architecture
  – yum does not have this problem and always gives priority to the CPU architecture
• Dependencies
  – These tools are designed to satisfy dependencies automatically
    § If foo depends on bar, which depends on tux, all three packages are installed with a single: apt-get install foo
  – Problems arise when tux is not available
    § The error message does not mention tux; it only reports that bar is not installable

Installation (II)
• Try to install bar to find out which package is really missing
  – apt-get install bar
  – Then obtain tux from elsewhere and install it by hand
• apt-get dist-upgrade may help
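The foo/bar/tux situation can be pictured with a toy resolver. This is only a sketch of the idea, not how apt or yum are implemented; the dependency table and the wording of the error message are made up for illustration.

```python
# Toy model of dependency resolution; NOT how apt or yum work internally.
# Package names follow the example above: foo -> bar -> tux.
DEPENDS = {"foo": ["bar"], "bar": ["tux"], "tux": []}


def resolve(package, available, depends=DEPENDS):
    """Return the install order for `package`, dependencies first.

    Raises LookupError when a package in the chain is not available.
    """
    order = []

    def visit(name, parent=None):
        if name in order:
            return
        if name not in available:
            # The tool complains about the parent ("bar is not installable"),
            # which hides the package that is actually missing ("tux").
            raise LookupError(
                f"{parent or name} is not installable (missing: {name})"
            )
        for dep in depends.get(name, []):
            visit(dep, parent=name)
        order.append(name)

    visit(package)
    return order


if __name__ == "__main__":
    # All three packages are in the repository.
    print(resolve("foo", available={"foo", "bar", "tux"}))
    # tux is absent: the error names bar first.
    try:
        resolve("foo", available={"foo", "bar"})
    except LookupError as err:
        print(err)
```

Asking for bar directly, as suggested above, is the step that reveals tux as the real culprit.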

YAIM configuration of gLite services
• gLite 3.0 is a merge of the LCG 2.7.0 and gLite 1.5.0 middleware stacks
• The two stacks use different configuration approaches (YAIM and the gLite Python configuration system)
• Unification of the configuration approaches
• YAIM2gLiteConvertor: tool that transforms YAIM configuration values into gLite XML configuration files
• Transparent configuration of gLite services
• No additional administrative overhead
• For example, configure_node <site-info.def> WMSLB
  – Function config-glite-wms
    § Calls YAIM2gLiteConvertor (parameter transformation)
    § Calls glite-wms-config.py --config (service configuration)
    § Calls glite-wms-config.py --start (service startup)
  – Function config-glite-lb
    § Calls YAIM2gLiteConvertor (parameter transformation)
    § Calls glite-lb-config.py --config (service configuration)
    § Calls glite-lb-config.py --start (service startup)

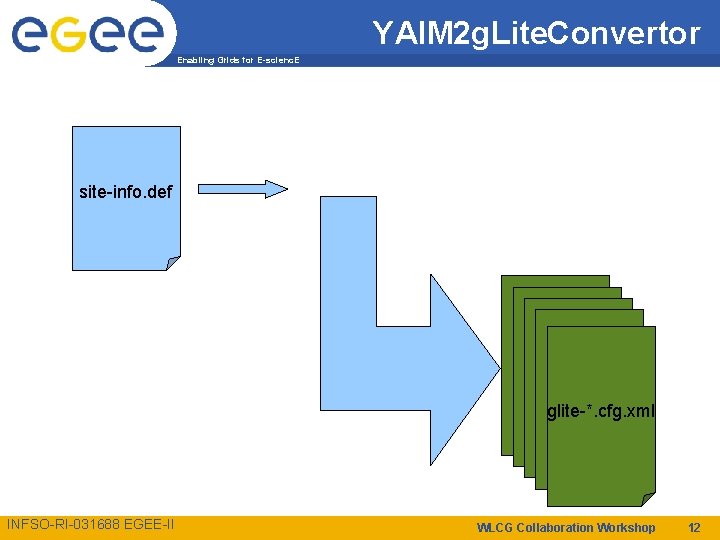

YAIM2gLiteConvertor (diagram: site-info.def is converted into glite-*.cfg.xml)

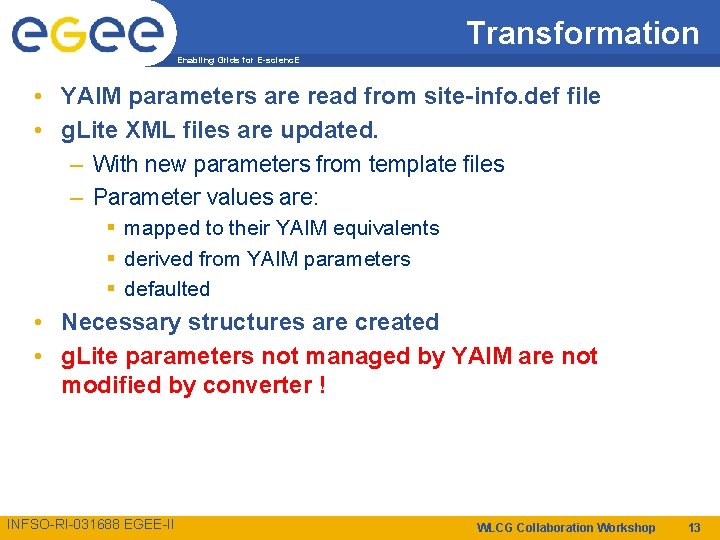

Transformation
• YAIM parameters are read from the site-info.def file
• gLite XML files are updated
  – With new parameters from template files
  – Parameter values are:
    § mapped to their YAIM equivalents
    § derived from YAIM parameters
    § defaulted
• Necessary structures are created
• gLite parameters not managed by YAIM are not modified by the converter!
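The three kinds of parameter handling (mapped, derived, defaulted), plus the rule that unmanaged parameters are left alone, can be sketched as follows. Every parameter name, the mapping tables and the URL format are hypothetical and chosen for illustration only; the real tables live with the converter code.

```python
# Illustrative sketch only: all parameter names and tables below are
# hypothetical, not the real YAIM2gLiteConvertor mapping files.
MAPPED = {"wms.lb.host": "LB_HOST"}  # gLite name -> YAIM name
DERIVED = {"wms.bdii.url": lambda y: f"ldap://{y['BDII_HOST']}:2170"}
DEFAULTS = {"wms.log.level": "5"}


def convert(yaim, existing):
    """Return updated gLite parameters from YAIM values.

    `existing` holds the current gLite parameters; anything not managed
    by the tables above is copied through unchanged.
    """
    updated = dict(existing)  # unmanaged parameters survive as they are
    for name, value in DEFAULTS.items():
        updated[name] = value  # defaulted
    for name, yaim_name in MAPPED.items():
        if yaim_name in yaim:
            updated[name] = yaim[yaim_name]  # mapped one-to-one
    for name, rule in DERIVED.items():
        updated[name] = rule(yaim)  # computed from YAIM values
    return updated


if __name__ == "__main__":
    site_info = {"LB_HOST": "lb.example.org", "BDII_HOST": "bdii.example.org"}
    current = {"site.custom": "keep-me"}
    for key, value in sorted(convert(site_info, current).items()):
        print(key, "=", value)
```

The point of the sketch is the last rule: a hand-edited parameter such as `site.custom` is carried over untouched, which is why manual changes to unmanaged gLite parameters are not lost on reconfiguration.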

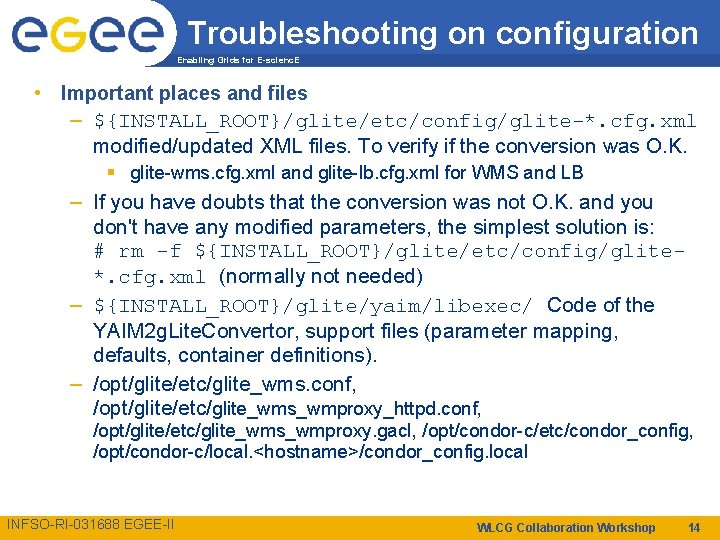

Troubleshooting on configuration
• Important places and files
  – ${INSTALL_ROOT}/glite/etc/config/glite-*.cfg.xml: the modified/updated XML files; use them to verify that the conversion was OK
    § glite-wms.cfg.xml and glite-lb.cfg.xml for WMS and LB
  – If you suspect that the conversion went wrong and you have not modified any parameters by hand, the simplest solution is to remove the files (normally not needed):
    # rm -f ${INSTALL_ROOT}/glite/etc/config/glite*.cfg.xml
  – ${INSTALL_ROOT}/glite/yaim/libexec/: code of the YAIM2gLiteConvertor and its support files (parameter mapping, defaults, container definitions)
  – /opt/glite/etc/glite_wms.conf, /opt/glite/etc/glite_wms_wmproxy_httpd.conf, /opt/glite/etc/glite_wms_wmproxy.gacl, /opt/condor-c/etc/condor_config, /opt/condor-c/local.<hostname>/condor_config.local

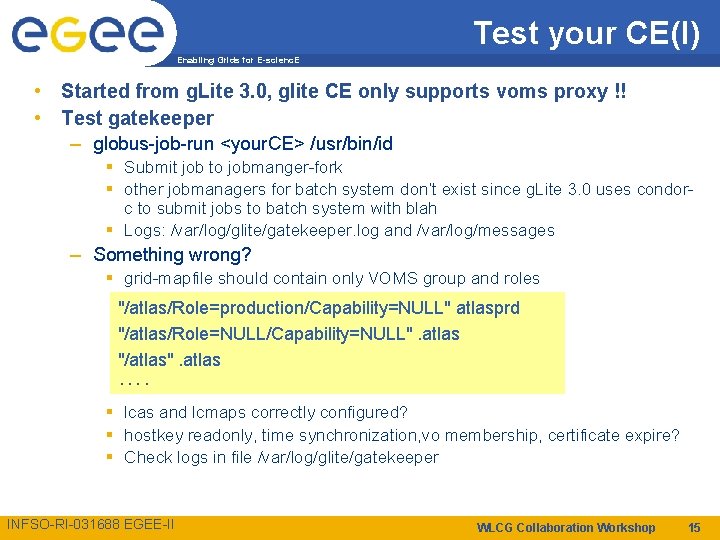

Test your CE (I)
• Starting from gLite 3.0, the gLite CE only supports VOMS proxies!
• Test the gatekeeper
  – globus-job-run <yourCE> /usr/bin/id
    § Submits a job to jobmanager-fork
    § Other job managers for the batch system do not exist, since gLite 3.0 uses Condor-C to submit jobs to the batch system through BLAH
    § Logs: /var/log/glite/gatekeeper.log and /var/log/messages
  – Something wrong?
    § grid-mapfile should contain only VOMS groups and roles:
      "/atlas/Role=production/Capability=NULL" atlasprd
      "/atlas/Role=NULL/Capability=NULL" .atlas
      "/atlas" .atlas
      …
    § Are LCAS and LCMAPS correctly configured?
    § Host key read-only? Time synchronized? VO membership valid? Certificate expired?
    § Check the logs in /var/log/glite/gatekeeper
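A quick way to spot entries that are not VOMS groups or roles is to scan the grid-mapfile for subjects that look like personal certificate DNs. The sketch below assumes the usual two-column layout (quoted subject, then account) and uses a simple heuristic, the presence of "/CN=", to flag DNs; both are assumptions of this example, and the sample DN is invented.

```python
import shlex


def parse_gridmap(text):
    """Yield (subject, account) pairs from grid-mapfile text."""
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        parts = shlex.split(line)  # handles the quoted subject
        if len(parts) == 2:
            yield parts[0], parts[1]


def non_voms_entries(text):
    """Return subjects that look like user DNs rather than VOMS FQANs.

    Heuristic (an assumption of this sketch): a certificate DN carries a
    /CN= field, while a VOMS group/role entry starts with /<vo>.
    """
    return [subject for subject, _ in parse_gridmap(text) if "/CN=" in subject]


if __name__ == "__main__":
    sample = '''
"/atlas/Role=production/Capability=NULL" atlasprd
"/atlas/Role=NULL/Capability=NULL" .atlas
"/atlas" .atlas
"/C=TW/O=AS/CN=Some User" .atlas
'''
    for subject in non_voms_entries(sample):
        print("not a VOMS entry:", subject)
```

On a real CE the text would be read from the grid-mapfile in use; the path is left out here on purpose.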

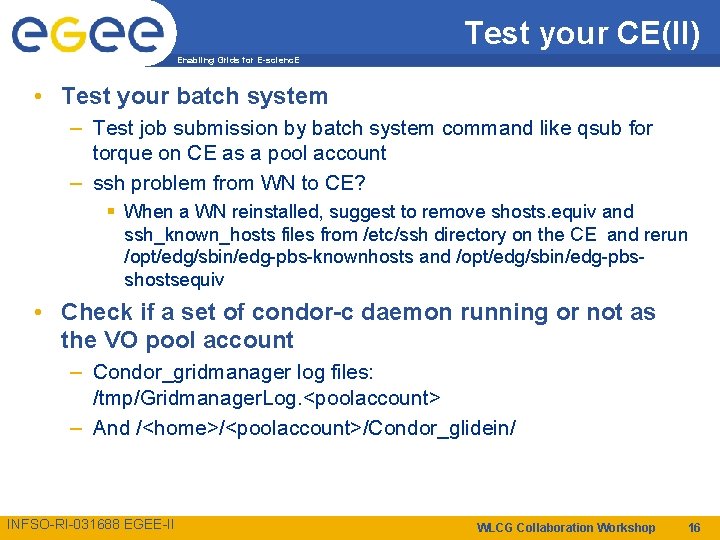

Test your CE (II)
• Test your batch system
  – Test job submission with the batch system command (e.g. qsub for Torque) on the CE as a pool account
  – ssh problem from WN to CE?
    § After a WN is reinstalled, remove the shosts.equiv and ssh_known_hosts files from /etc/ssh on the CE and rerun /opt/edg/sbin/edg-pbs-knownhosts and /opt/edg/sbin/edg-pbs-shostsequiv
• Check whether a set of Condor-C daemons is running as the VO pool account
  – condor_gridmanager log files: /tmp/GridmanagerLog.<poolaccount>
  – And /<home>/<poolaccount>/Condor_glidein/

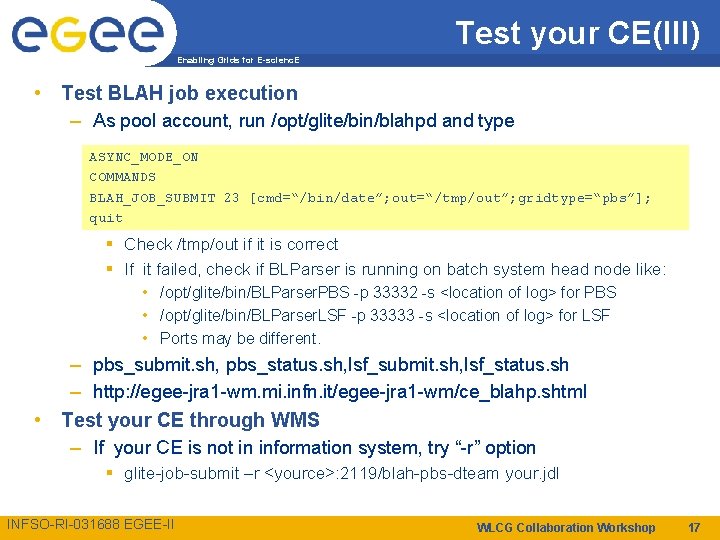

Test your CE (III)
• Test BLAH job execution
  – As a pool account, run /opt/glite/bin/blahpd and type:
    ASYNC_MODE_ON
    COMMANDS
    BLAH_JOB_SUBMIT 23 [cmd="/bin/date"; out="/tmp/out"; gridtype="pbs"];
    quit
    § Check that /tmp/out is correct
    § If it failed, check whether BLParser is running on the batch system head node, for example:
      • /opt/glite/bin/BLParserPBS -p 33332 -s <location of log> for PBS
      • /opt/glite/bin/BLParserLSF -p 33333 -s <location of log> for LSF
      • Ports may be different
  – pbs_submit.sh, pbs_status.sh, lsf_submit.sh, lsf_status.sh
  – http://egee-jra1-wm.mi.infn.it/egee-jra1-wm/ce_blahp.shtml
• Test your CE through WMS
  – If your CE is not in the information system, try the "-r" option
    § glite-job-submit -r <yource>:2119/blah-pbs-dteam your.jdl

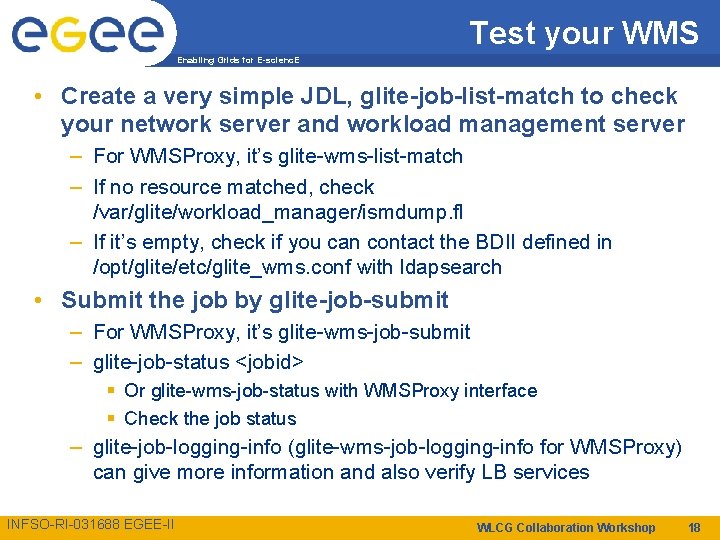

Test your WMS
• Create a very simple JDL and run glite-job-list-match to check your network server and workload management server
  – For WMProxy, it is glite-wms-job-list-match
  – If no resource matches, check /var/glite/workload_manager/ismdump.fl
  – If it is empty, check with ldapsearch whether you can contact the BDII defined in /opt/glite/etc/glite_wms.conf
• Submit the job with glite-job-submit
  – For WMProxy, it is glite-wms-job-submit
  – glite-job-status <jobid>
    § Or glite-wms-job-status with the WMProxy interface
    § Check the job status
  – glite-job-logging-info (glite-wms-job-logging-info for WMProxy) gives more information and also verifies the LB services
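A "very simple JDL" can be as small as the one generated below. The sketch only builds the JDL text and lists the first two commands of the test sequence as a dry run; it does not contact any service. The executable, the file names and the helper names are arbitrary choices for this example.

```python
def simple_jdl(executable="/bin/hostname", stdout="std.out", stderr="std.err"):
    """Return the text of a minimal JDL that runs one command."""
    lines = [
        f'Executable = "{executable}";',
        f'StdOutput = "{stdout}";',
        f'StdError = "{stderr}";',
        # Bring stdout and stderr back with the job output.
        f'OutputSandbox = {{"{stdout}", "{stderr}"}};',
    ]
    return "\n".join(lines) + "\n"


def first_checks(jdl_path):
    """Commands to try first, in order (network server interface).

    Returned as argument lists; nothing is executed here.
    """
    return [
        ["glite-job-list-match", jdl_path],
        ["glite-job-submit", jdl_path],
    ]


if __name__ == "__main__":
    print(simple_jdl())
    for command in first_checks("test.jdl"):
        print(" ".join(command))
```

Once the submit command returns a job identifier, glite-job-status and glite-job-logging-info take over as described above.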

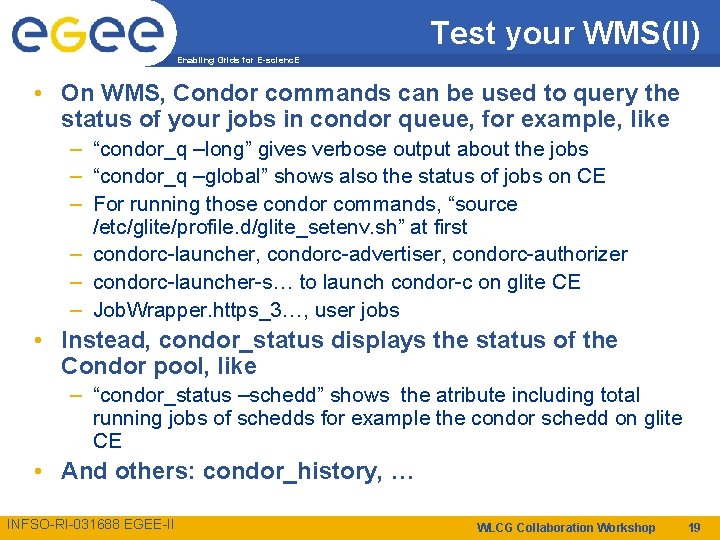

Test your WMS (II)
• On the WMS, Condor commands can be used to query the status of your jobs in the Condor queue, for example:
  – "condor_q -long" gives verbose output about the jobs
  – "condor_q -global" also shows the status of jobs on the CE
  – Before running these Condor commands, first run "source /etc/glite/profile.d/glite_setenv.sh"
  – condorc-launcher, condorc-advertiser, condorc-authorizer
  – condorc-launcher-s… to launch Condor-C on the gLite CE
  – JobWrapper.https_3…, user jobs
• condor_status, instead, displays the status of the Condor pool, for example:
  – "condor_status -schedd" shows the attributes of the schedds, including the total number of running jobs, for example for the Condor schedd on the gLite CE
• And others: condor_history, …

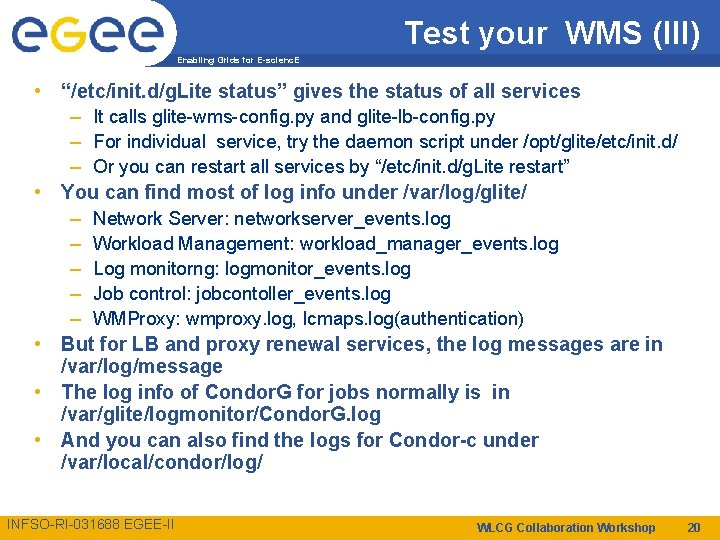

Test your WMS (III)
• "/etc/init.d/gLite status" gives the status of all services
  – It calls glite-wms-config.py and glite-lb-config.py
  – For an individual service, try the daemon script under /opt/glite/etc/init.d/
  – Or you can restart all services with "/etc/init.d/gLite restart"
• Most log information is under /var/log/glite/
  – Network Server: networkserver_events.log
  – Workload Management: workload_manager_events.log
  – Log monitoring: logmonitor_events.log
  – Job control: jobcontroller_events.log
  – WMProxy: wmproxy.log, lcmaps.log (authentication)
• For the LB and proxy renewal services, however, the log messages are in /var/log/messages
• The CondorG log for jobs is normally in /var/glite/logmonitor/CondorG.log
• The Condor-C logs are under /var/local/condor/log/

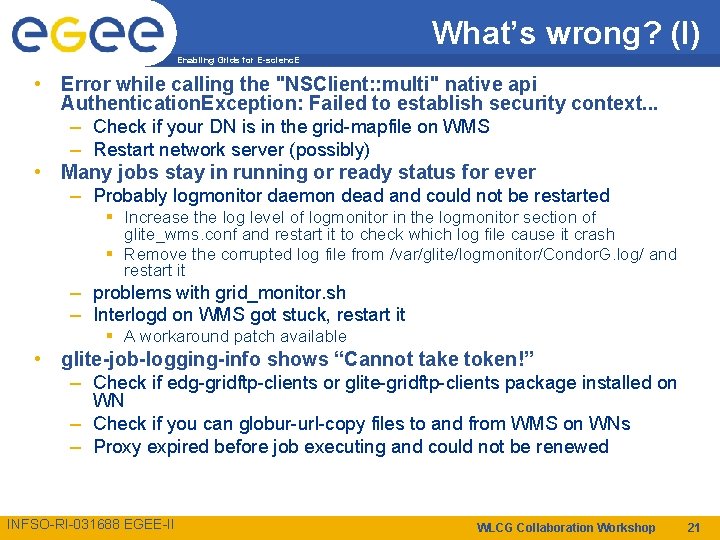

What's wrong? (I)
• Error while calling the "NSClient::multi" native api AuthenticationException: Failed to establish security context...
  – Check whether your DN is in the grid-mapfile on the WMS
  – Restart the network server (possibly)
• Many jobs stay in Running or Ready status forever
  – Probably the logmonitor daemon died and could not be restarted
    § Increase the log level of logmonitor in the logmonitor section of glite_wms.conf and restart it to find out which log file causes the crash
    § Remove the corrupted log file from /var/glite/logmonitor/CondorG.log/ and restart it
  – Problems with grid_monitor.sh
  – Interlogd on the WMS got stuck; restart it
    § A workaround patch is available
• glite-job-logging-info shows "Cannot take token!"
  – Check whether the edg-gridftp-clients or glite-gridftp-clients package is installed on the WN
  – Check whether you can globus-url-copy files to and from the WMS on the WNs
  – The proxy expired before the job executed and could not be renewed

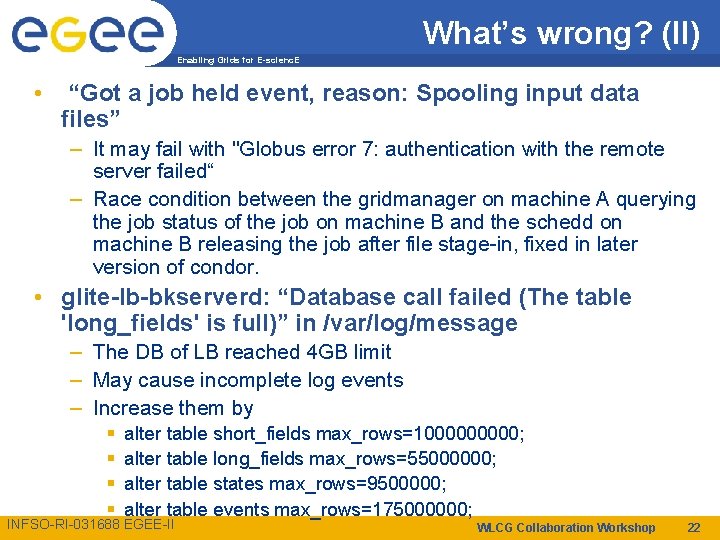

What's wrong? (II)
• "Got a job held event, reason: Spooling input data files"
  – It may fail with "Globus error 7: authentication with the remote server failed"
  – Race condition between the gridmanager on machine A querying the status of the job on machine B and the schedd on machine B releasing the job after file stage-in; fixed in a later version of Condor
• glite-lb-bkserverd: "Database call failed (The table 'long_fields' is full)" in /var/log/messages
  – The LB database reached the 4 GB limit
  – May cause incomplete log events
  – Increase the limits with:
    alter table short_fields max_rows=100000;
    alter table long_fields max_rows=55000000;
    alter table states max_rows=9500000;
    alter table events max_rows=175000000;
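If the limits need to be reviewed or adapted before being applied, the statements can be generated from a small table. This is just a convenience sketch: the values are the ones quoted above, the helper name is made up, and the output is printed rather than sent to the database.

```python
# max_rows values as quoted above; adjust to the size of your installation.
MAX_ROWS = {
    "short_fields": 100000,
    "long_fields": 55000000,
    "states": 9500000,
    "events": 175000000,
}


def alter_statements(max_rows=MAX_ROWS):
    """Return one 'alter table' statement per LB table."""
    return [
        f"alter table {table} max_rows={rows};"
        for table, rows in max_rows.items()
    ]


if __name__ == "__main__":
    # Print for review; feed to the MySQL client only after checking.
    for statement in alter_statements():
        print(statement)
```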

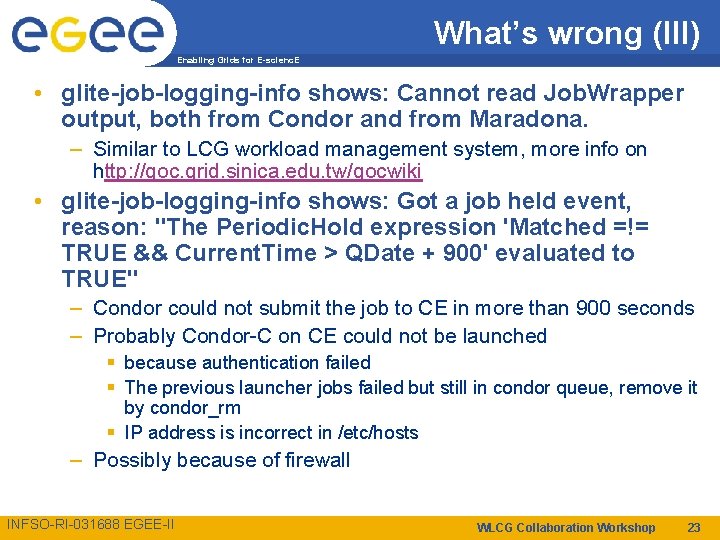

What's wrong? (III)
• glite-job-logging-info shows: Cannot read JobWrapper output, both from Condor and from Maradona.
  – Similar to the LCG workload management system; more information at http://goc.grid.sinica.edu.tw/gocwiki
• glite-job-logging-info shows: Got a job held event, reason: "The PeriodicHold expression 'Matched =!= TRUE && CurrentTime > QDate + 900' evaluated to TRUE"
  – Condor could not submit the job to the CE within 900 seconds
  – Probably Condor-C on the CE could not be launched
    § because authentication failed
    § because previous launcher jobs failed but are still in the Condor queue; remove them with condor_rm
    § because the IP address in /etc/hosts is incorrect
  – Possibly because of a firewall
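The PeriodicHold expression reads more easily once the "=!=" operator is spelled out: it means "is not identical to", so it is also true when Matched is undefined. The sketch below mirrors that logic in Python, with None standing in for the ClassAd UNDEFINED value; it is an illustration of the expression, not Condor code.

```python
UNDEFINED = None  # stand-in for the ClassAd UNDEFINED value


def periodic_hold(matched, current_time, qdate, limit=900):
    """Mirror of: Matched =!= TRUE && CurrentTime > QDate + 900.

    `current_time` and `qdate` are in seconds; `qdate` is the time the
    job entered the queue.
    """
    # "=!=" is true for FALSE and for UNDEFINED, false only for TRUE.
    not_matched = matched is not True
    return not_matched and current_time > qdate + limit


if __name__ == "__main__":
    queued_at = 1000
    # Still unmatched 901 seconds after queuing: the job is put on hold.
    print(periodic_hold(UNDEFINED, queued_at + 901, queued_at))
    # Matched in time: no hold, however long ago it was queued.
    print(periodic_hold(True, queued_at + 5000, queued_at))
```

In other words, the hold fires for any job that has sat in the queue for more than 900 seconds without being matched to the CE, which is why the causes listed above all concern launching Condor-C or reaching the CE.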

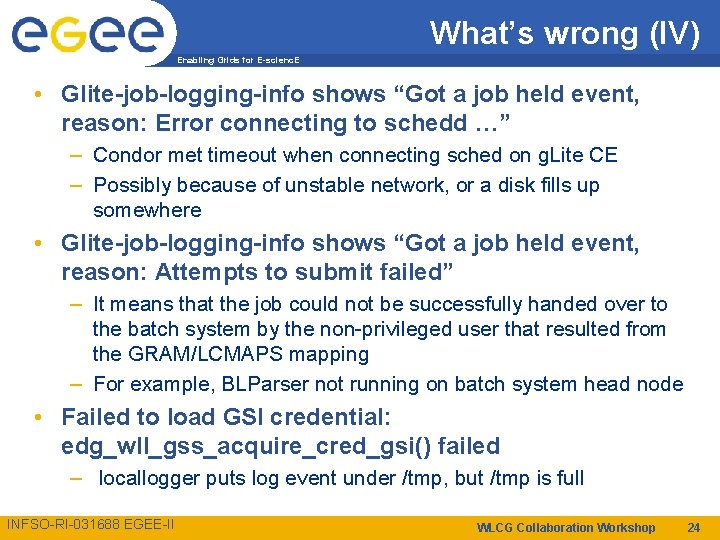

What's wrong? (IV)
• glite-job-logging-info shows "Got a job held event, reason: Error connecting to schedd …"
  – Condor timed out when connecting to the schedd on the gLite CE
  – Possibly because of an unstable network, or because a disk filled up somewhere
• glite-job-logging-info shows "Got a job held event, reason: Attempts to submit failed"
  – The job could not be handed over to the batch system by the non-privileged user that resulted from the GRAM/LCMAPS mapping
  – For example, BLParser is not running on the batch system head node
• Failed to load GSI credential: edg_wll_gss_acquire_cred_gsi() failed
  – locallogger puts log events under /tmp, but /tmp is full

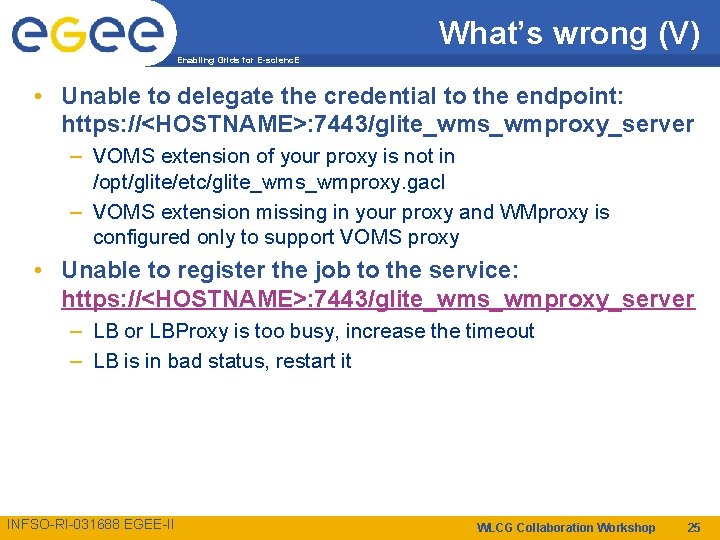

What's wrong? (V)
• Unable to delegate the credential to the endpoint: https://<HOSTNAME>:7443/glite_wms_wmproxy_server
  – The VOMS extension of your proxy is not in /opt/glite/etc/glite_wms_wmproxy.gacl
  – The VOMS extension is missing from your proxy and WMProxy is configured to support VOMS proxies only
• Unable to register the job to the service: https://<HOSTNAME>:7443/glite_wms_wmproxy_server
  – LB or LBProxy is too busy; increase the timeout
  – LB is in a bad state; restart it

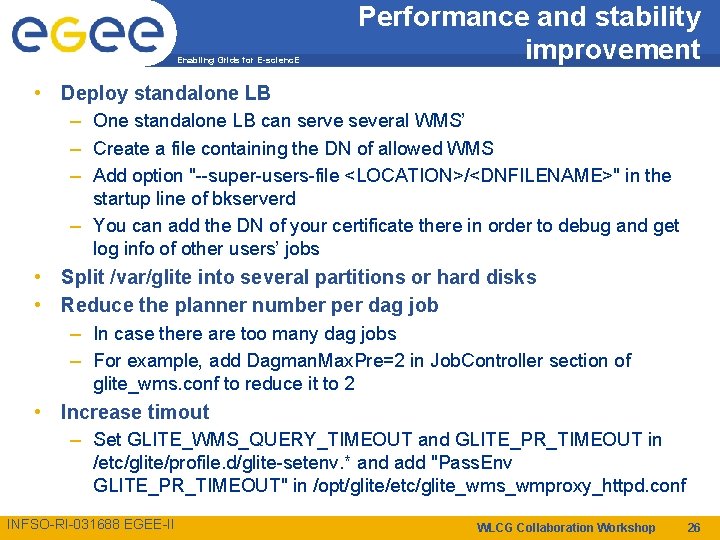

Performance and stability improvement
• Deploy a standalone LB
  – One standalone LB can serve several WMSs
  – Create a file containing the DNs of the allowed WMSs
  – Add the option "--super-users-file <LOCATION>/<DNFILENAME>" to the startup line of bkserverd
  – You can add the DN of your own certificate there in order to debug and get log information of other users' jobs
• Split /var/glite across several partitions or hard disks
• Reduce the number of planners per DAG job
  – In case there are too many DAG jobs
  – For example, add DagmanMaxPre=2 to the JobController section of glite_wms.conf to reduce it to 2
• Increase timeouts
  – Set GLITE_WMS_QUERY_TIMEOUT and GLITE_PR_TIMEOUT in /etc/glite/profile.d/glite-setenv.* and add "PassEnv GLITE_PR_TIMEOUT" to /opt/glite/etc/glite_wms_wmproxy_httpd.conf
