Distributed storage, work status
Giacinto Donvito, INFN-Bari
On behalf of the Distributed Storage Working Group
SuperB Collaboration Meeting, 02 June 2012

Outline
- Testing storage solutions:
  - Hadoop testing
  - Fault tolerance solutions
  - Patch for a distributed computing infrastructure
  - Napoli activities
- Data access library
  - Why do we need it?
  - How should it work?
  - Work status
  - Future plans
- People involved
- Conclusions

Only updates are reported in this talk: other activities are planned or ongoing, but they have no update to report since the last meeting.

Testing Storage Solutions
- The goal is to test available storage solutions that:
  - Fulfill SuperB requirements
    - good performance, also on pseudo-random access
  - Meet the needs of computing centers
    - scalability in the order of PB and hundreds of client nodes
    - a small TCO
    - good support over the long term
      - Open Source could be preferred over proprietary solutions
      - A wide community would be a guarantee of long-term sustainability
  - Provide POSIX-like access APIs by means of ROOT-supported protocols (the most interesting seem to be XRootD and HTTP)
    - The copy functionality should also be supported

Testing Storage Solutions
- Several software solutions could fulfill all those requirements
  - a few of them are already known and used within the HEP community
  - we will also test solutions that are not yet well known
  - focusing our attention on those that can provide fault tolerance against hardware or software malfunctions
- At the moment we are focusing on two solutions:
  - HADOOP (at INFN-Bari)
  - GlusterFS (at INFN-Napoli)

Hadoop testing
- Use cases:
  - Scientific data analysis on clusters with CPU and storage on the same boxes
  - High availability of data access without expensive hardware infrastructure
  - High availability of the data access service also in the event of a complete site failure
- Tests executed:
  - Metadata failures
  - Data failures
  - "Rack" awareness

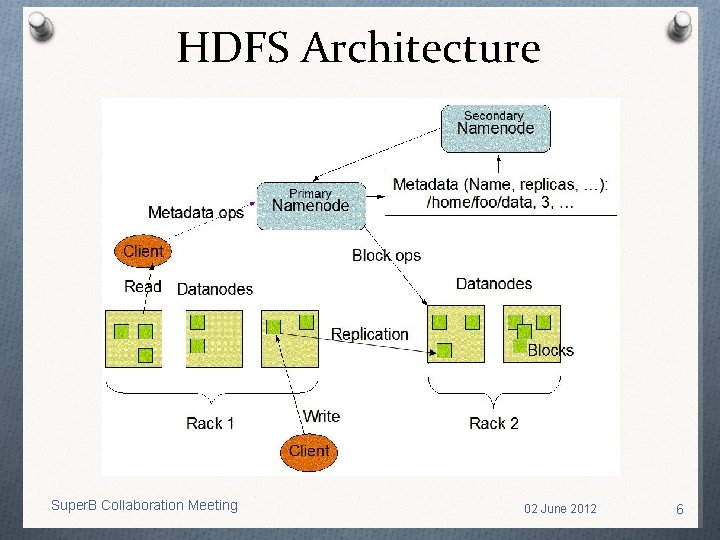

HDFS Architecture

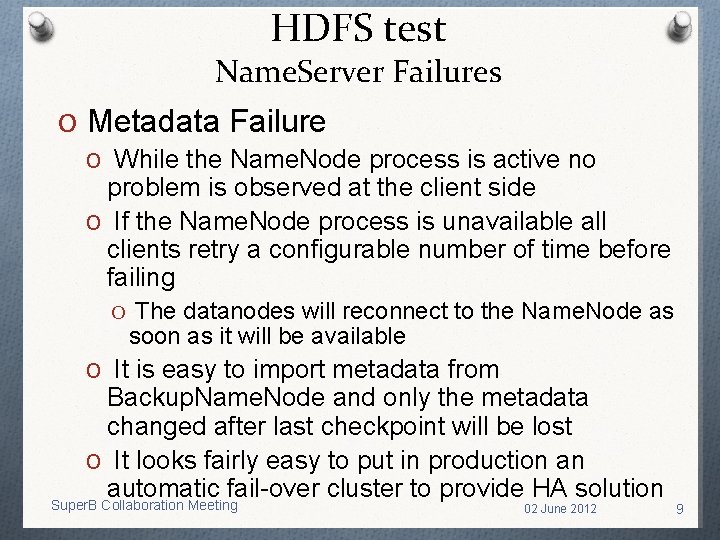

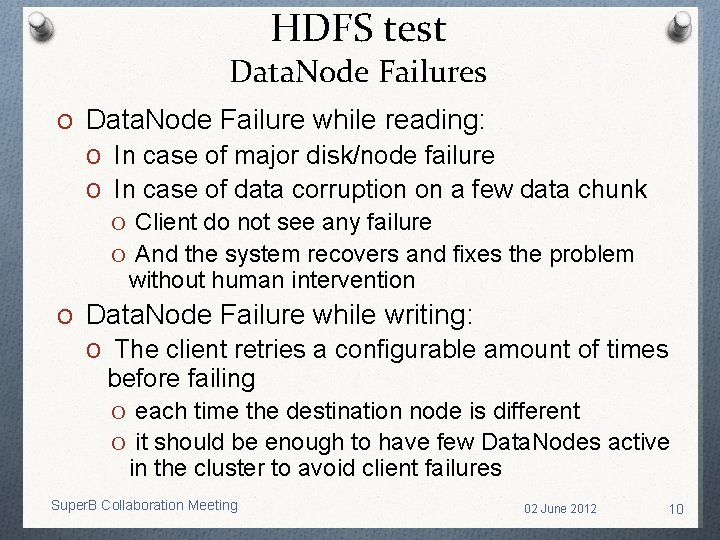

HDFS test
- Metadata failure
  - The NameServer is a single point of failure
  - It is possible to have a back-up snapshot
- DataNode failure
  - During read/write operations
- Testing FUSE access to the HDFS file system
- Testing HTTP access to the HDFS file system
- Rack failure resilience
- Farm failure resilience

HDFS test: NameServer Failures
- Metadata failure
  - It is possible to make a back-up of the metadata
    - the time between two dumps is configurable
  - It is easy and fast to recover from a major failure of the PrimaryNamenode

[Figure label: HTTP REQUEST]

HDFS test: NameServer Failures
- Metadata failure
  - While the NameNode process is active, no problem is observed on the client side
  - If the NameNode process is unavailable, all clients retry a configurable number of times before failing
  - The DataNodes reconnect to the NameNode as soon as it is available again
  - It is easy to import the metadata from the BackupNameNode; only the metadata changed after the last checkpoint is lost
  - It looks fairly easy to put an automatic fail-over cluster into production to provide an HA solution

HDFS test: DataNode Failures
- DataNode failure while reading:
  - In case of a major disk/node failure
  - In case of data corruption on a few data chunks
  - Clients do not see any failure
  - The system recovers and fixes the problem without human intervention
- DataNode failure while writing:
  - The client retries a configurable number of times before failing
    - each time the destination node is different
  - A few active DataNodes in the cluster should be enough to avoid client failures
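The write-retry behaviour described above can be illustrated with a minimal Python sketch. It is not HDFS client code: the function `write_block`, the `send` callback and the `max_attempts` parameter are hypothetical names chosen for illustration, and the sketch only assumes that each retry targets a DataNode that has not been tried yet.

```python
def write_block(block, datanodes, send, max_attempts=3):
    """Try to write `block`, using a different DataNode on every attempt.

    `send(node, block)` is a hypothetical transport callback that raises
    OSError (for example ConnectionError) when the node is unreachable.
    Returns the name of the node that accepted the block.
    """
    tried = []
    for node in datanodes:
        if len(tried) == max_attempts:
            break  # configurable retry budget exhausted
        tried.append(node)
        try:
            send(node, block)
            return node
        except OSError:
            continue  # move on to the next, not yet tried, DataNode
    raise OSError(f"write failed after trying {tried}")
```

With two failed nodes and one healthy node, the third attempt succeeds, which matches the observation that a few active DataNodes are enough to hide the failure from the client.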

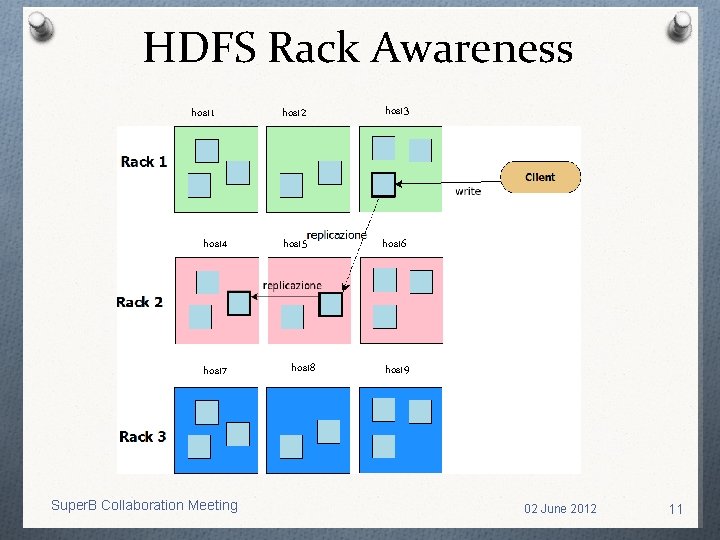

HDFS Rack Awareness
[Figure: diagram of hosts 1 to 9]

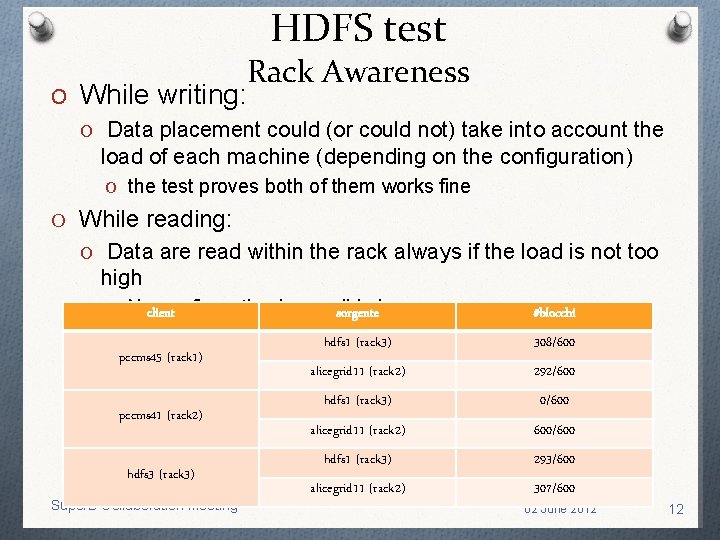

HDFS test: Rack Awareness
- While writing:
  - Data placement may or may not take into account the load of each machine (depending on the configuration)
  - the tests show that both configurations work fine
- While reading:
  - Data are always read within the rack if the load is not too high
  - No client configuration is possible here

| Source | DataNode | # blocks |
|---|---|---|
| pccms45 (rack 1) | hdfs1 (rack 3) | 308/600 |
| pccms45 (rack 1) | alicegrid11 (rack 2) | 292/600 |
| pccms41 (rack 2) | hdfs1 (rack 3) | 0/600 |
| pccms41 (rack 2) | alicegrid11 (rack 2) | 600/600 |
| hdfs3 (rack 3) | hdfs1 (rack 3) | 293/600 |
| hdfs3 (rack 3) | alicegrid11 (rack 2) | 307/600 |
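Hadoop learns the rack of each node from an administrator-supplied topology script: it is called with one or more host names or IP addresses as arguments and prints one rack path per argument. The sketch below shows what such a script could look like for the hosts in the table above. The rack paths (`/rack1` and so on) and the short-name matching are assumptions made for illustration, not the configuration used in the test, and the property that points to the script (`topology.script.file.name` in Hadoop 1.x, `net.topology.script.file.name` in later releases) should be checked against the installed version.

```python
import sys

# Host-to-rack mapping taken from the table above; the path names are assumed.
RACK_OF_HOST = {
    "pccms45": "/rack1",
    "pccms41": "/rack2",
    "alicegrid11": "/rack2",
    "hdfs1": "/rack3",
    "hdfs3": "/rack3",
}
DEFAULT_RACK = "/default-rack"  # Hadoop's conventional fallback rack


def resolve(hosts):
    """Return one rack path per argument, in the same order."""
    # Only the short host name is matched; IP addresses fall back to the
    # default rack in this sketch and would need their own entries.
    return [RACK_OF_HOST.get(h.split(".")[0], DEFAULT_RACK) for h in hosts]


if __name__ == "__main__":
    print(" ".join(resolve(sys.argv[1:])))
```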

HDFS test: Miscellaneous
- FUSE testing:
  - It works fine for reading
  - We use an OSG version in order to avoid some bugs when writing files through FUSE
- HTTP testing:
  - HDFS provides a native WebDav interface
    - It works quite well for reading
  - Both reading and writing work well with the standard Apache module and HDFS mounted via FUSE

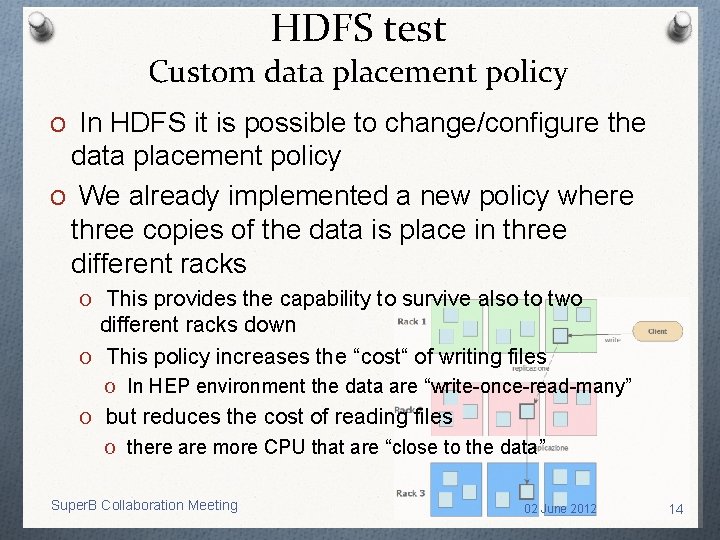

HDFS test: Custom data placement policy
- In HDFS it is possible to change/configure the data placement policy
- We have already implemented a new policy where three copies of the data are placed in three different racks
  - This makes it possible to survive even the loss of two different racks
- This policy increases the "cost" of writing files
  - In the HEP environment the data are "write-once-read-many"
- but it reduces the cost of reading files
  - there are more CPUs that are "close to the data"
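The selection logic of the three-rack policy can be sketched in a few lines of Python. This is only an illustration of the idea: the real policy lives inside HDFS and is written in Java, and the function name `choose_three_racks` and the `nodes_by_rack` structure are hypothetical.

```python
import random


def choose_three_racks(nodes_by_rack, rng=random):
    """Pick three DataNodes, each from a different rack.

    `nodes_by_rack` maps a rack name to the list of DataNodes it hosts.
    Returns a list of (rack, node) pairs, one per replica.
    """
    racks = [rack for rack, nodes in nodes_by_rack.items() if nodes]
    if len(racks) < 3:
        raise ValueError("need at least three non-empty racks")
    chosen = rng.sample(racks, 3)  # three distinct racks
    return [(rack, rng.choice(nodes_by_rack[rack])) for rack in chosen]
```

Because every replica sits in its own rack, any two racks can be offline and one copy is still readable, at the price of more inter-rack traffic at write time.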

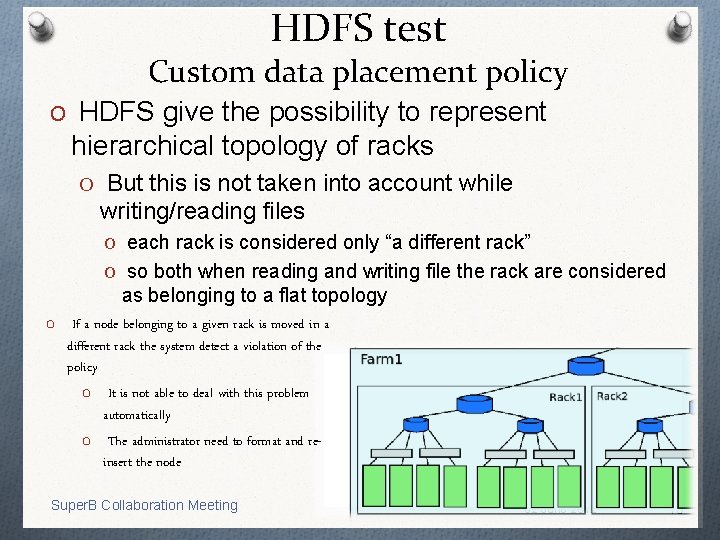

HDFS test: Custom data placement policy
- HDFS makes it possible to represent a hierarchical topology of racks
- But this is not taken into account while writing/reading files
  - each rack is considered only "a different rack"
  - so, both when reading and when writing files, the racks are treated as belonging to a flat topology
- If a node belonging to a given rack is moved to a different rack, the system detects a violation of the policy
  - It is not able to deal with this problem automatically
  - The administrator needs to format and reinsert the node

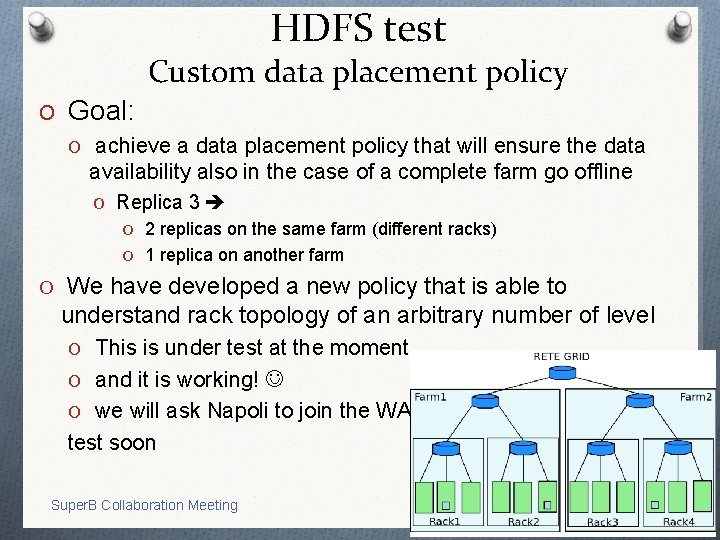

HDFS test: Custom data placement policy
- Goal:
  - achieve a data placement policy that ensures data availability even when a complete farm goes offline
- Replica 3
  - 2 replicas on the same farm (different racks)
  - 1 replica on another farm
- We have developed a new policy that is able to understand a rack topology with an arbitrary number of levels
  - This is under test at the moment…
  - and it is working!
  - we will ask Napoli to join the WAN test soon
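The farm-aware rule above (two replicas on different racks of one farm, one replica on another farm) can be illustrated with the following Python sketch. It is not the policy developed for HDFS: the function `place_replicas`, the node names and the `/farm/rack` topology paths are assumptions, and the first path component is simply taken to be the farm.

```python
import random


def place_replicas(topology, local_farm, rng=random):
    """Return three nodes: two on different racks of `local_farm`, one elsewhere.

    `topology` maps a node name to a hierarchical path such as "/bari/rack1";
    deeper paths are allowed, the first component is treated as the farm.
    """
    local_racks = {}   # rack path -> nodes, for the local farm only
    remote_nodes = []  # nodes belonging to any other farm
    for node, path in topology.items():
        farm = path.strip("/").split("/")[0]
        if farm == local_farm:
            local_racks.setdefault(path, []).append(node)
        else:
            remote_nodes.append(node)
    if len(local_racks) < 2 or not remote_nodes:
        raise ValueError("need two local racks and one remote farm")
    rack_a, rack_b = rng.sample(sorted(local_racks), 2)
    return [
        rng.choice(local_racks[rack_a]),
        rng.choice(local_racks[rack_b]),
        rng.choice(remote_nodes),
    ]
```

If the whole local farm goes offline, the third replica on the remote farm is still available.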

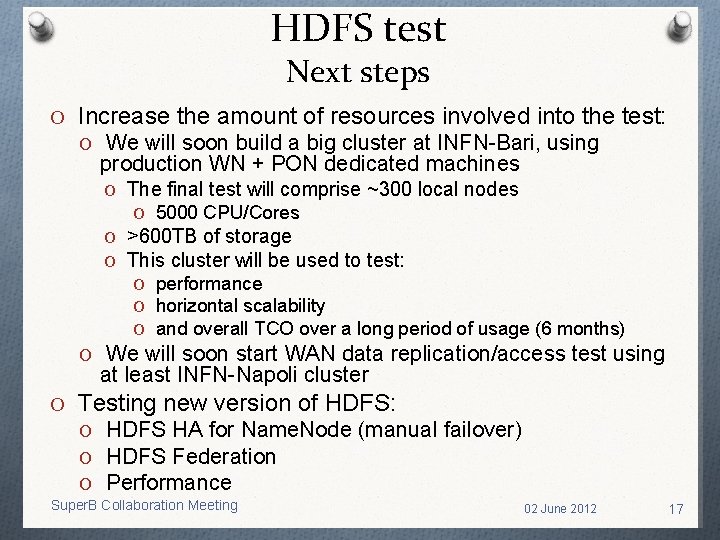

HDFS test: Next steps
- Increase the amount of resources involved in the test:
  - We will soon build a big cluster at INFN-Bari, using production WNs + PON dedicated machines
  - The final test will comprise ~300 local nodes
    - 5000 CPU/cores
    - >600 TB of storage
  - This cluster will be used to test:
    - performance
    - horizontal scalability
    - overall TCO over a long period of usage (6 months)
- We will soon start WAN data replication/access tests using at least the INFN-Napoli cluster
- Testing the new version of HDFS:
  - HDFS HA for the NameNode (manual failover)
  - HDFS Federation
  - Performance

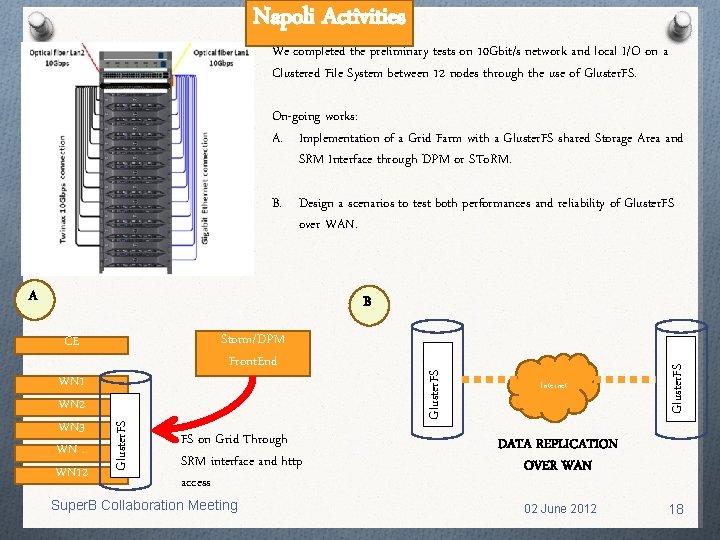

Napoli Activities
We completed the preliminary tests on a 10 Gbit/s network and on local I/O on a clustered file system across 12 nodes using GlusterFS.

On-going work:
- A. Implementation of a Grid farm with a GlusterFS shared storage area and an SRM interface through DPM or StoRM.
- B. Design of scenarios to test both the performance and the reliability of GlusterFS over WAN.

[Figure A: GlusterFS on Grid through SRM interface and HTTP access (WN 1 to WN 12, StoRM/DPM FrontEnd, CE, Internet)]
[Figure B: data replication over WAN]

Data Access Library: Why do we need it?
- It can be painful to access data within an analysis application if no POSIX access is provided by the computing centers
  - there are a number of libraries providing POSIX-like access in the HEP community
- We need an abstraction layer to use the SuperB Logical File Name transparently, without knowledge of the mapping to the Physical File Name at a given site
- We need a single place/layer where we can implement read optimizations that are used transparently by end users
- We need an easy way to read files using protocols that are not already supported by ROOT
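As an illustration of the abstraction layer described above, the following Python sketch maps a Logical File Name to a site-specific Physical File Name through a per-site prefix. Everything in it is hypothetical: the site names, the storage URLs, the example LFN and the function `lfn_to_pfn` are placeholders, and the real mapping may well be richer than a prefix substitution.

```python
# Hypothetical per-site configuration: each site prepends its own prefix.
SITE_PREFIXES = {
    "site-a": "http://storage.site-a.example/superb",
    "site-b": "root://storage.site-b.example//superb",
}


def lfn_to_pfn(lfn, site, prefixes=SITE_PREFIXES):
    """Translate a Logical File Name into the Physical File Name at `site`."""
    if not lfn.startswith("/"):
        raise ValueError("a logical file name must be an absolute path")
    if site not in prefixes:
        raise KeyError(f"no storage configuration for site {site!r}")
    return prefixes[site] + lfn
```

The analysis code would only ever see the logical name; the site configuration decides which protocol and storage endpoint are actually used.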

Data Access Library: How can we use it?
- This work aims to provide a simple set of APIs:
  - Superb_open, Superb_seek, Superb_read, Superb_close, …
  - it will be necessary to add a dedicated header file and the related library
  - by building the code with this library it will be possible to open local or remote files seamlessly
    - exploiting several protocols: http(s), xrootd, etc.
- Different levels of caching will be provided automatically by the library in order to improve the performance of the analysis code
- Each site will be able to configure the library as needed to fulfill the requirements of its computing center
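One way to open local and remote files through a single entry point is to select the access backend from the URL scheme. The Python sketch below only illustrates that dispatch step; the function `pick_backend`, the backend labels and the accepted schemes are assumptions, not the behaviour of the Superb library.

```python
from urllib.parse import urlparse


def pick_backend(path):
    """Return the name of the access backend suited to `path`."""
    scheme = urlparse(path).scheme
    if scheme in ("", "file"):
        return "local"   # plain POSIX path or file:// URL
    if scheme in ("http", "https"):
        return "http"
    if scheme in ("root", "xroot"):
        return "xrootd"
    raise ValueError(f"unsupported protocol: {scheme!r}")
```

An open call in the spirit of Superb_open could use such a function to choose the protocol handler, so that the calling code stays identical for local and remote files.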

Data Access Library: Work status
- At the moment we have a test implementation with HTTP-based access
  - Exploiting the curl libraries
- It is possible to write an application that links this library and opens and reads a list of files
  - With a list of read operations
- It is possible to configure the size of the read buffer
  - To optimize the performance when reading files over a remote network
- The results of the first tests will be available in a few weeks from now…
- We already presented a WAN data access test at CHEP using curl:
  - https://indico.cern.ch/getFile.py/access?contribId=294&sessionId=4&resId=0&materialId=slides&confId=149557
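The effect of a configurable read buffer can be shown with a small Python sketch. It is not the library's implementation: the class `BufferedRemoteFile` and the `fetch_range(offset, size)` callback are hypothetical, and the callback stands in for whatever remote request (for example an HTTP range request) the real code issues.

```python
class BufferedRemoteFile:
    """Serve small reads from a larger buffer filled by few remote requests."""

    def __init__(self, fetch_range, size, buffer_size=1024 * 1024):
        self._fetch = fetch_range        # callable(offset, size) -> bytes
        self._size = size                # total file size in bytes
        self._buffer_size = buffer_size  # configurable read buffer
        self._buf = b""
        self._buf_start = 0
        self._pos = 0
        self.requests = 0                # number of remote requests issued

    def seek(self, offset):
        if not 0 <= offset <= self._size:
            raise ValueError("offset outside the file")
        self._pos = offset

    def read(self, n):
        out = bytearray()
        while n > 0 and self._pos < self._size:
            buf_end = self._buf_start + len(self._buf)
            if not (self._buf_start <= self._pos < buf_end):
                # Buffer miss: refill starting at the current position.
                want = min(self._buffer_size, self._size - self._pos)
                self._buf = self._fetch(self._pos, want)
                self._buf_start = self._pos
                self.requests += 1
                if not self._buf:
                    break  # the remote side returned no data
            lo = self._pos - self._buf_start
            chunk = self._buf[lo:lo + n]
            out += chunk
            self._pos += len(chunk)
            n -= len(chunk)
        return bytes(out)
```

A larger `buffer_size` means fewer round trips for sequential reads over a WAN, at the cost of transferring bytes that a sparse access pattern may never use.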

Data Access Library: Future work
- We are already in contact with Philippe Canal to implement new features of ROOT I/O
- We will follow the development of the Distributed Computing Working Group to provide a seamless mapping of the Logical File Name to the local Physical File Name
- We will soon implement a few example configurations for local storage systems
- We need to know which ROOT version is of most interest to SuperB analysis users
- We will start implementing a memory-based prefetch and caching mechanism within the Superb library

People involved
- Giacinto Donvito (INFN-Bari):
  - Data Model
  - HADOOP testing
  - http & xrootd remote access
  - Distributed Tier 1 testing
- Silvio Pardi, Domenico del Prete, Guido Russo (INFN-Napoli):
  - Cluster set-up
  - Distributed Tier 1 testing
  - GlusterFS testing
  - SRM testing
- Gianni Marzulli (INFN-Bari):
  - Cluster set-up
  - HADOOP testing
- Armando Fella (INFN-Pisa):
  - http remote access
  - NFSv4.1 testing
  - Data Model
- Elisa Manoni (INFN-Perugia):
  - Developing application code for testing remote data access
- Paolo Franchini (INFN-CNAF):
  - http remote access
- Claudio Grandi (INFN-Bologna):
  - Data Model
- Stefano Bagnasco (INFN-Torino):
  - GlusterFS testing
- Domenico Diacono (INFN-Bari):
  - Developing data access library

Conclusions
- Collaborations and synergies with other (mainly LHC) experiments are increasing and improving
- First results from testing storage solutions are quite promising
- We will soon need to increase the effort and the amount of (hardware and human) resources involved
  - Both locally and geographically distributed
  - This will be of great importance in realizing a distributed Tier 0 center among Italian computing centers
- We need to increase the development effort in order to provide a code base for efficient data access
  - This will be one of the key elements in providing good performance to analysis applications
