Data-Dependent Hashing for Nearest Neighbor Search Alex Andoni (Columbia University) Ilya Razenshteyn (MIT)

Nearest Neighbor Search (NNS)

Motivation

Example bit strings shown on the slide: 000000, 011100, 010100, 000100, 011111 and 000000, 001100, 000100, 110100, 111111

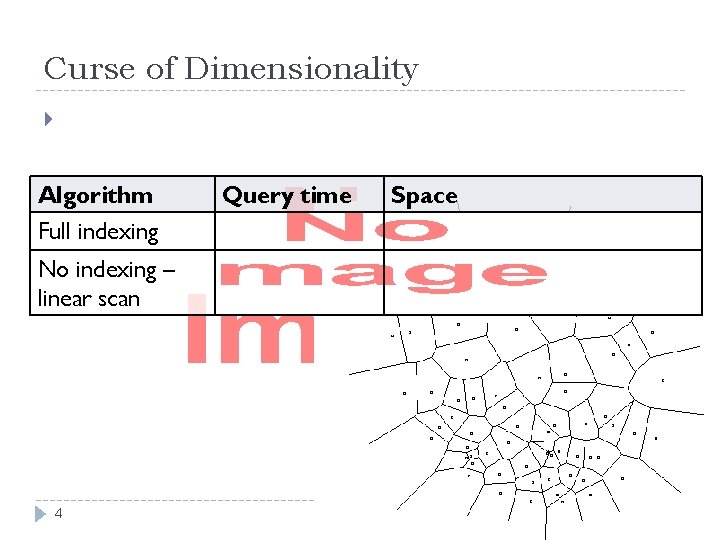

Curse of Dimensionality

| Algorithm | Query time | Space |
|---|---|---|
| Full indexing | | |
| No indexing (linear scan) | | |
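
The "no indexing" baseline named here can be sketched as a plain linear scan. This is a minimal illustration, assuming points are equal-length bit strings compared by Hamming distance; the function names `hamming` and `linear_scan` are hypothetical and not from the talk.

```python
def hamming(a, b):
    """Number of positions where two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))

def linear_scan(points, q):
    """Compare the query against every point: no preprocessing, no extra space."""
    return min(points, key=lambda p: hamming(p, q))

points = ["000000", "011100", "010100", "000100", "011111"]
print(linear_scan(points, "110100"))
```

Each query touches all points and all coordinates, which is the cost that indexing schemes try to avoid.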

Approximate NNS

![NNS algorithms](http://slidetodoc.com/presentation_image_h2/996567e21b55d9427f21e9b9d50b1125/image-6.jpg)

NNS algorithms

- Exponential dependence on dimension: [Arya-Mount’93], [Clarkson’94], [Arya-Mount-Netanyahu-Silverman-Wu’98], [Kleinberg’97], [Har-Peled’02], [Arya-Fonseca-Mount’11], …
- Linear/poly dependence on dimension: [Kushilevitz-Ostrovsky-Rabani’98], [Indyk-Motwani’98], [Indyk’98, ’01], [Gionis-Indyk-Motwani’99], [Charikar’02], [Datar-Immorlica-Indyk-Mirrokni’04], [Chakrabarti-Regev’04], [Panigrahy’06], [Ailon-Chazelle’06], [A.-Indyk-Nguyen-Razenshteyn’14], [A.-Razenshteyn’15], [Pagh’16], [Becker-Ducas-Gama-Laarhoven’16], …

![Locality-Sensitive Hashing](http://slidetodoc.com/presentation_image_h2/996567e21b55d9427f21e9b9d50b1125/image-7.jpg)

Locality-Sensitive Hashing [Indyk-Motwani’98]

“not-so-small”
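
For reference, the standard definition behind this slide can be written out as follows. This is a sketch using the usual notation from the LSH literature, not a transcription of the slide: the symbols $r$ (near radius), $c > 1$ (approximation factor), $P_1$, $P_2$, and $\rho$ are assumptions here, and reading “not-so-small” as a description of $P_1$ is also an assumption.

```latex
\begin{aligned}
\|p - q\| \le r \;&\Longrightarrow\; \Pr_h[h(p) = h(q)] \ge P_1 \quad (\text{not-so-small}),\\
\|p - q\| > cr \;&\Longrightarrow\; \Pr_h[h(p) = h(q)] \le P_2 \quad (\text{small}),\\
\rho &= \frac{\log(1/P_1)}{\log(1/P_2)} < 1 .
\end{aligned}
```

With such a family, the Indyk-Motwani scheme uses about $n^{\rho}$ hash tables for a dataset of $n$ points, giving query time roughly $n^{\rho}$ and space roughly $n^{1+\rho}$.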

![LSH Algorithms](http://slidetodoc.com/presentation_image_h2/996567e21b55d9427f21e9b9d50b1125/image-8.jpg)

LSH Algorithms

Table columns: Space, Time, Exponent, Reference.

- Hamming space: [IM’98] (sample random bits!), [MNP’06, OWZ’11]
- Euclidean space: [IM’98, DIIM’04], [AI’06], [MNP’06, OWZ’11]
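
The “sample random bits” idea for Hamming space can be sketched as below. This is a minimal illustration, assuming points are equal-length bit strings; the parameter names `k` (bits per hash) and `num_tables`, the seed, and all function names are hypothetical choices for the example, not values from the talk.

```python
import random

def hamming(a, b):
    """Number of positions where two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))

def make_hash(dim, k, rng):
    """One LSH function: read k coordinates sampled uniformly at random."""
    coords = [rng.randrange(dim) for _ in range(k)]
    return lambda s: "".join(s[i] for i in coords)

def build_tables(points, k, num_tables, seed=0):
    """Hash every point into num_tables independent hash tables."""
    rng = random.Random(seed)
    dim = len(points[0])
    tables = []
    for _ in range(num_tables):
        h = make_hash(dim, k, rng)
        buckets = {}
        for p in points:
            buckets.setdefault(h(p), []).append(p)
        tables.append((h, buckets))
    return tables

def query(tables, q):
    """Look only at the buckets q falls into; return the closest candidate."""
    candidates = set()
    for h, buckets in tables:
        candidates.update(buckets.get(h(q), []))
    return min(candidates, key=lambda p: hamming(p, q)) if candidates else None

points = ["000000", "011100", "010100", "000100", "011111"]
tables = build_tables(points, k=3, num_tables=4)
print(query(tables, "000100"))  # a stored point always collides with itself
```

Two strings at Hamming distance t agree on a random coordinate with probability 1 - t/dim, so close strings share a bucket more often than far ones. Raising `k` makes buckets more selective, and raising `num_tables` recovers the chance of finding a close point.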

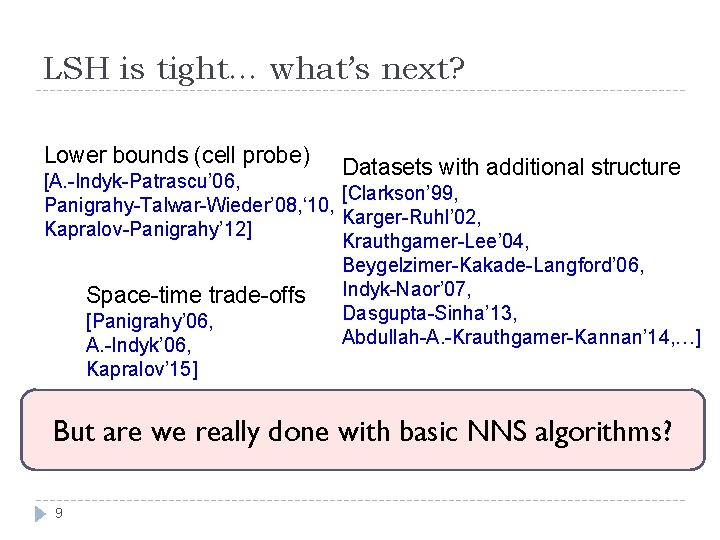

LSH is tight… what’s next?

- Lower bounds (cell probe): [A.-Indyk-Patrascu’06, Panigrahy-Talwar-Wieder’08, ’10, Kapralov-Panigrahy’12]
- Datasets with additional structure: [Clarkson’99, Karger-Ruhl’02, Krauthgamer-Lee’04, Beygelzimer-Kakade-Langford’06, Indyk-Naor’07, Dasgupta-Sinha’13, Abdullah-A.-Krauthgamer-Kannan’14, …]
- Space-time trade-offs: [Panigrahy’06, A.-Indyk’06, Kapralov’15]

But are we really done with basic NNS algorithms?

![Beyond Locality Sensitive Hashing](http://slidetodoc.com/presentation_image_h2/996567e21b55d9427f21e9b9d50b1125/image-10.jpg)

Beyond Locality Sensitive Hashing

Table columns: Space, Time, Exponent, Reference.

- Hamming space
  - LSH: [IM’98], [MNP’06, OWZ’11]
  - [AINR’14] (exponent listed as “complicated”)
  - [AR’15]
- Euclidean space
  - LSH: [AI’06], [MNP’06, OWZ’11]
  - [AINR’14] (exponent listed as “complicated”)
  - [AR’15]
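
As a reference point for this comparison, the exponents below are the bounds as commonly stated for the cited papers. The formulas do not survive in the extracted slide text, so treat this as an added sketch rather than a transcription. Here $c$ is the approximation factor and $\rho$ is the exponent in query time $n^{\rho}$ and space $n^{1+\rho}$; both symbols are assumptions of this note.

```latex
\begin{array}{lll}
\text{Hamming space:}   & \rho_{\mathrm{LSH}} = \tfrac{1}{c} \ \text{[IM'98]}, & \rho = \tfrac{1}{2c-1} + o(1) \ \text{[AR'15]} \\[4pt]
\text{Euclidean space:} & \rho_{\mathrm{LSH}} \approx \tfrac{1}{c^{2}} \ \text{[AI'06]}, & \rho = \tfrac{1}{2c^{2}-1} + o(1) \ \text{[AR'15]}
\end{array}
```

For $c = 2$ this gives $1/2$ versus $1/3$ in Hamming space and $1/4$ versus $1/7$ in Euclidean space.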

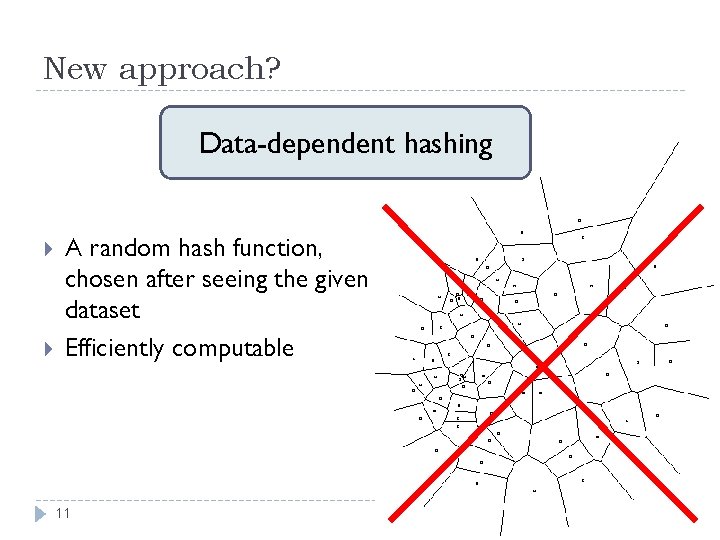

New approach? Data-dependent hashing

- A random hash function, chosen after seeing the given dataset
- Efficiently computable

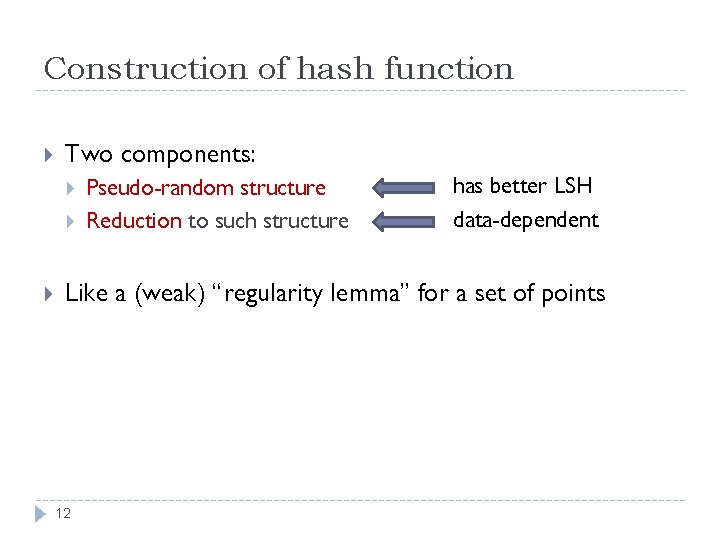

Construction of hash function

Two components:

- Pseudo-random structure: has better LSH
- Reduction to such structure: data-dependent

Like a (weak) “regularity lemma” for a set of points

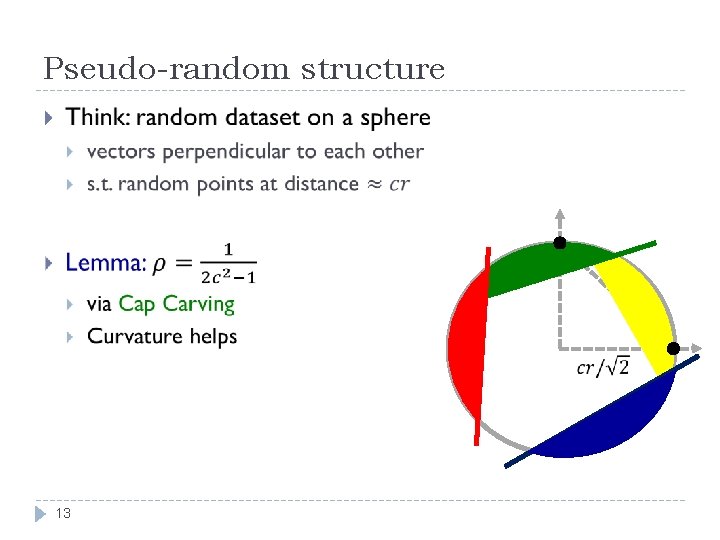

Pseudo-random structure

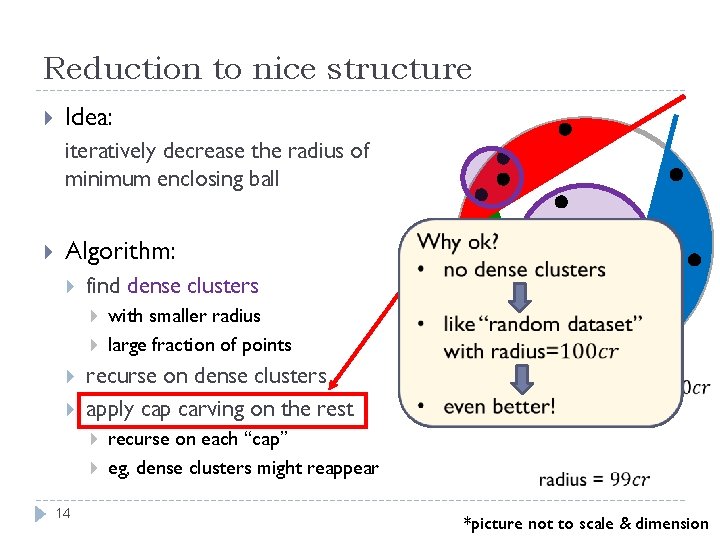

Reduction to nice structure

- Idea: iteratively decrease the radius of the minimum enclosing ball
- Algorithm:
  - find dense clusters (with smaller radius, containing a large fraction of points)
  - recurse on dense clusters
  - apply cap carving on the rest
  - recurse on each “cap” (e.g., dense clusters might reappear)
- Why ok?

*picture not to scale & dimension

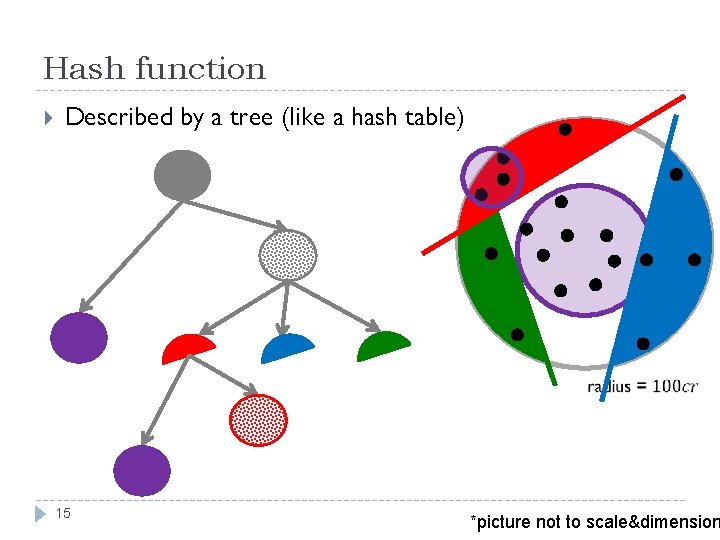

Hash function

Described by a tree (like a hash table)

*picture not to scale & dimension
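
The reduction steps (dense clusters, recursion, cap carving) and the resulting tree can be sketched as below. This is a heavily simplified illustration, not the construction from the talk: it carves half-spaces through the centroid instead of spherical caps with a tuned threshold, searches for clusters by brute force around data points, and uses made-up constants (`SHRINK`, `FRAC`, `LEAF`, `MAX_CAPS`, `MAX_DEPTH`). All names are hypothetical.

```python
import math
import random

SHRINK, FRAC, LEAF, MAX_CAPS, MAX_DEPTH = 0.7, 0.3, 4, 8, 10  # illustrative only

def dist(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def side(p, origin, g):
    """Inner product of (p - origin) with direction g; >= 0 means p is in the cap."""
    return sum((x - o) * d for x, o, d in zip(p, origin, g))

def build(points, radius, rng, depth=0):
    """Build one tree node over a list of points (tuples of floats)."""
    if len(points) <= LEAF or depth >= MAX_DEPTH:
        return {"leaf": list(points)}
    dim = len(points[0])
    small = SHRINK * radius
    need = max(2, math.ceil(FRAC * len(points)))
    rest, clusters = list(points), []
    # Step 1: peel off dense clusters (balls of smaller radius holding a large
    # fraction of the points) and recurse on each with the smaller radius.
    found = True
    while found:
        found = False
        for c in rest:
            ball = [p for p in rest if dist(c, p) <= small]
            if len(ball) >= need:
                clusters.append((c, small, build(ball, small, rng, depth + 1)))
                rest = [p for p in rest if dist(c, p) > small]
                found = True
                break
    # Step 2: carve the remaining points with random directions and recurse on
    # each piece, where dense clusters may reappear.
    caps, origin = [], None
    if rest:
        origin = tuple(sum(p[i] for p in rest) / len(rest) for i in range(dim))
    for _ in range(MAX_CAPS):
        if not rest:
            break
        g = tuple(rng.gauss(0.0, 1.0) for _ in range(dim))
        inside = [p for p in rest if side(p, origin, g) >= 0]
        if inside:
            caps.append((g, build(inside, radius, rng, depth + 1)))
            rest = [p for p in rest if side(p, origin, g) < 0]
    return {"clusters": clusters, "origin": origin, "caps": caps, "spill": rest}

def candidates(node, q, near):
    """Walk the tree like a hash lookup and collect the points q collides with."""
    if "leaf" in node:
        return list(node["leaf"])
    out = list(node["spill"])
    for c, r, child in node["clusters"]:
        if dist(q, c) <= r + near:  # q may have a near neighbor in this cluster
            out += candidates(child, q, near)
    for g, child in node["caps"]:
        if side(q, node["origin"], g) >= 0:  # first cap that contains q
            out += candidates(child, q, near)
            break
    return out

def nearest(tree, q, near):
    found = candidates(tree, q, near)
    return min(found, key=lambda p: dist(p, q)) if found else None

rng = random.Random(0)
data = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(40)]
data += [(5 + rng.gauss(0, 0.1), 5 + rng.gauss(0, 0.1)) for _ in range(40)]
tree = build(data, radius=10.0, rng=rng)
print(nearest(tree, data[0], near=0.5) == data[0])
```

A query follows the same decisions that placed the data points, so a stored point always reaches its own leaf. As with ordinary LSH, a single tree can miss a nearby point that falls in a different cap, which is why several independent trees would be used in practice.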

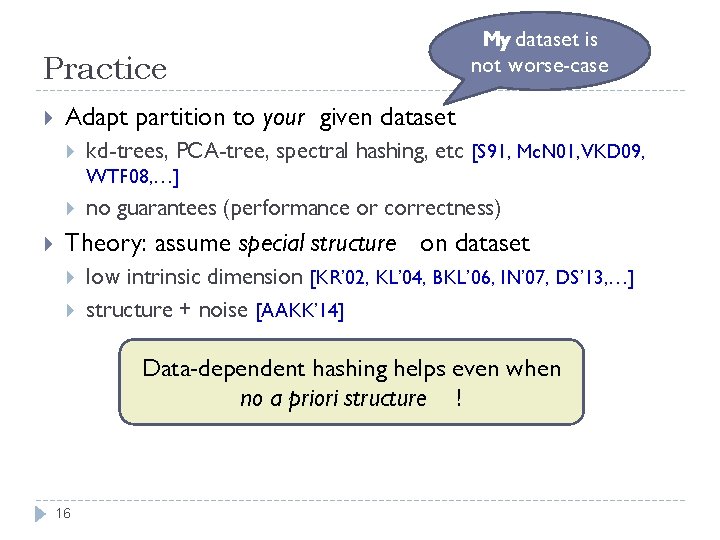

Practice

- My dataset is not worst-case: adapt the partition to your given dataset
  - kd-trees, PCA-tree, spectral hashing, etc. [S’91, McN’01, VKD’09, WTF’08, …]
  - no guarantees (performance or correctness)
- Theory: assume special structure on the dataset
  - low intrinsic dimension [KR’02, KL’04, BKL’06, IN’07, DS’13, …]
  - structure + noise [AAKK’14]

Data-dependent hashing helps even when there is no a priori structure!

![Follow-ups](http://slidetodoc.com/presentation_image_h2/996567e21b55d9427f21e9b9d50b1125/image-17.jpg)

Follow-ups [Laarhoven’15]

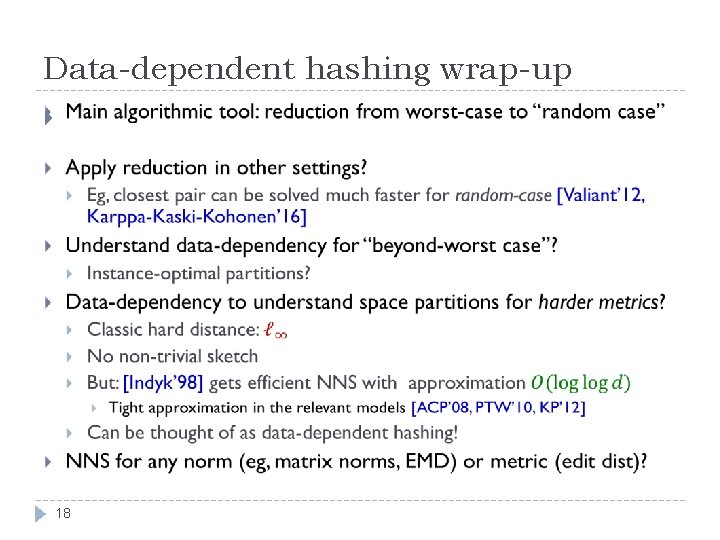

Data-dependent hashing wrap-up
