Author Name Disambiguation Based on Network Embedding Presenter: 李明杰 517030910344

CONTENTS

1. Introduction
2. One Classical Solution
3. Proposed Framework
4. Experimental Results
5. Discussion and Drawbacks

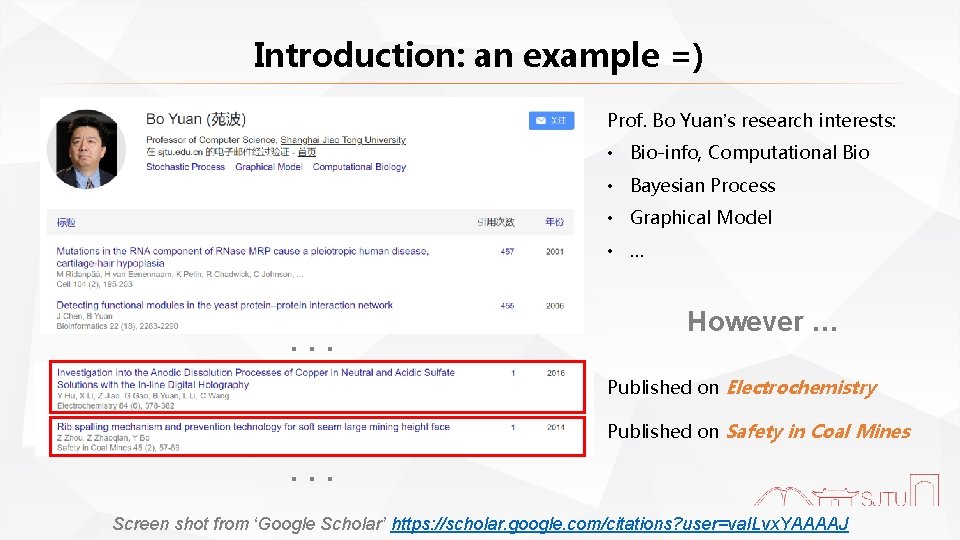

Introduction: an example

Prof. Bo Yuan's research interests:

• Bio-info, Computational Bio
• Bayesian Process
• Graphical Model
• …

However, the profile also lists papers published in Electrochemistry and in Safety in Coal Mines.

Screenshot from Google Scholar: https://scholar.google.com/citations?user=vaILvxYAAAAJ

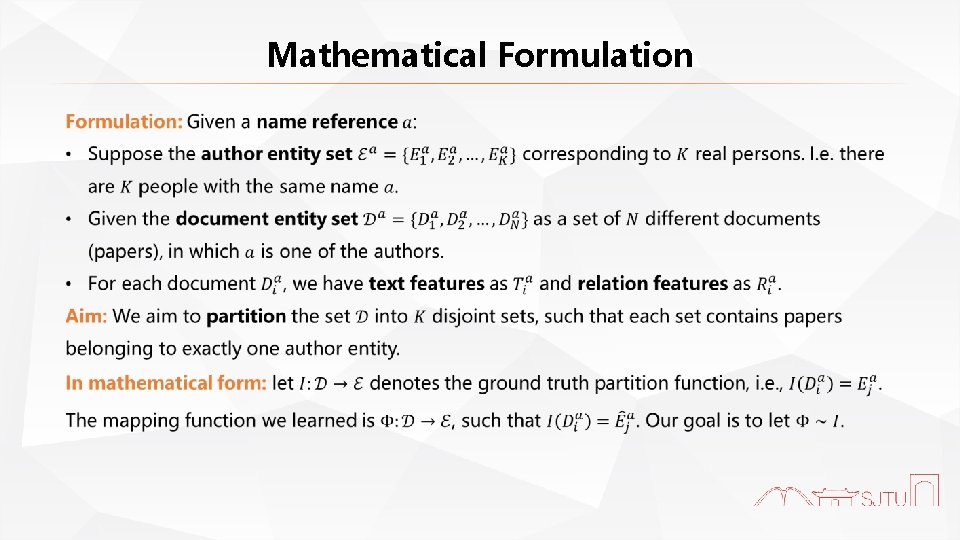

Mathematical Formulation

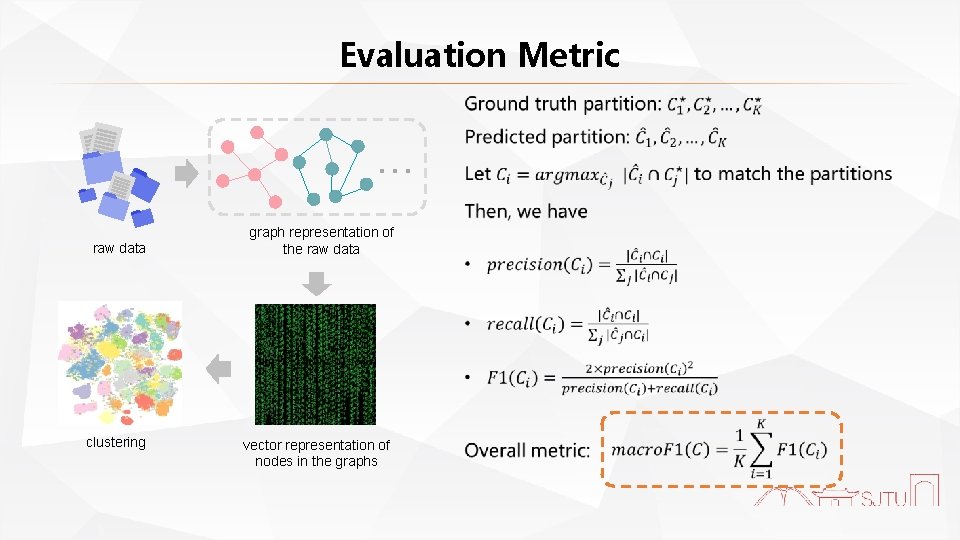

Evaluation Metric

[Figure labels: raw data; graph representation of the raw data; vector representation of nodes in the graphs; clustering]

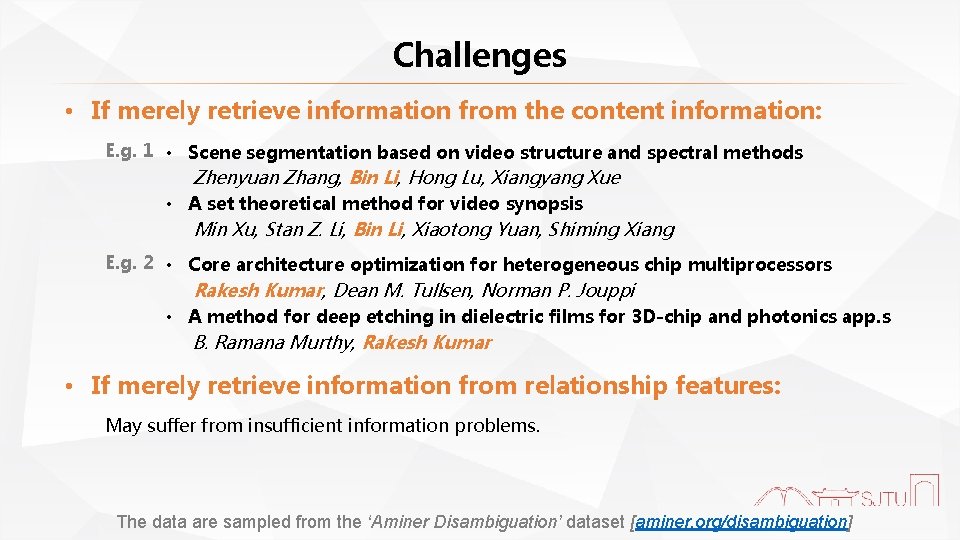
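The metric itself appears only in the figure and is not recoverable from the text. As a hedged illustration, the sketch below computes pairwise precision, recall, and F1, a metric commonly used for author name disambiguation. It is an assumption that this matches the slide. The function name `pairwise_f1` and the toy labels are hypothetical.

```python
from itertools import combinations


def pairwise_f1(true_labels, pred_labels):
    """Pairwise precision, recall and F1 over all pairs of papers.

    true_labels[k] is the real author of paper k, pred_labels[k] is the
    cluster assigned to paper k. (Assumed metric, not taken from the slide.)
    """
    tp = fp = fn = 0
    for a, b in combinations(range(len(true_labels)), 2):
        same_true = true_labels[a] == true_labels[b]
        same_pred = pred_labels[a] == pred_labels[b]
        if same_pred and same_true:
            tp += 1  # pair correctly placed in the same cluster
        elif same_pred:
            fp += 1  # pair wrongly merged
        elif same_true:
            fn += 1  # pair wrongly split
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Toy example: four papers, two real authors, one paper merged by mistake.
precision, recall, f1 = pairwise_f1([0, 0, 1, 1], [0, 0, 0, 1])
```

A pairwise metric depends only on which papers are grouped together, so it is unaffected by how cluster ids are numbered.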

Challenges

• If information is retrieved only from the content:

E.g. 1
• Scene segmentation based on video structure and spectral methods. Zhenyuan Zhang, Bin Li, Hong Lu, Xiangyang Xue
• A set theoretical method for video synopsis. Min Xu, Stan Z. Li, Bin Li, Xiaotong Yuan, Shiming Xiang

E.g. 2
• Core architecture optimization for heterogeneous chip multiprocessors. Rakesh Kumar, Dean M. Tullsen, Norman P. Jouppi
• A method for deep etching in dielectric films for 3D-chip and photonics apps. B. Ramana Murthy, Rakesh Kumar

• If information is retrieved only from relationship features: the method may suffer from insufficient information.

The data are sampled from the 'AMiner Disambiguation' dataset [aminer.org/disambiguation]

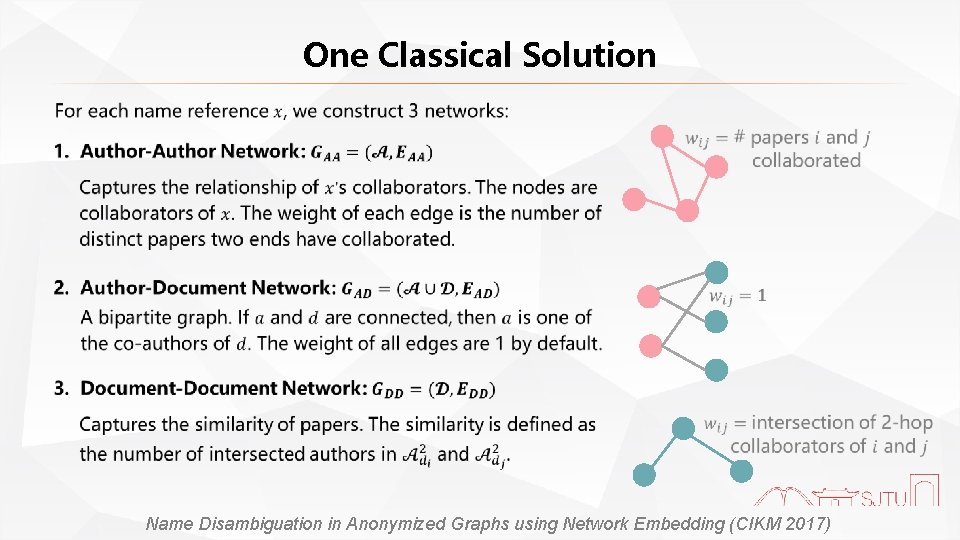

One Classical Solution

Name Disambiguation in Anonymized Graphs using Network Embedding (CIKM 2017)

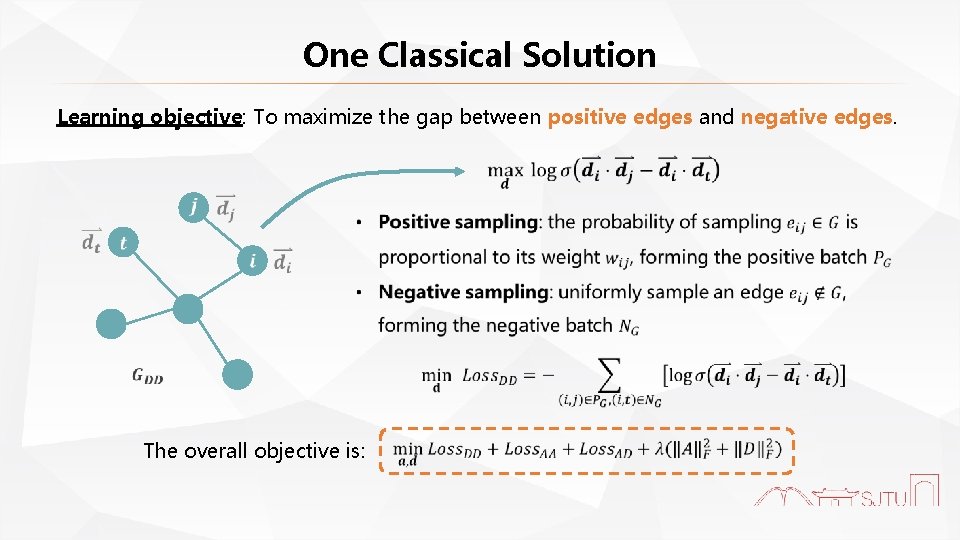

One Classical Solution

Learning objective: maximize the gap between positive edges and negative edges. The overall objective is:

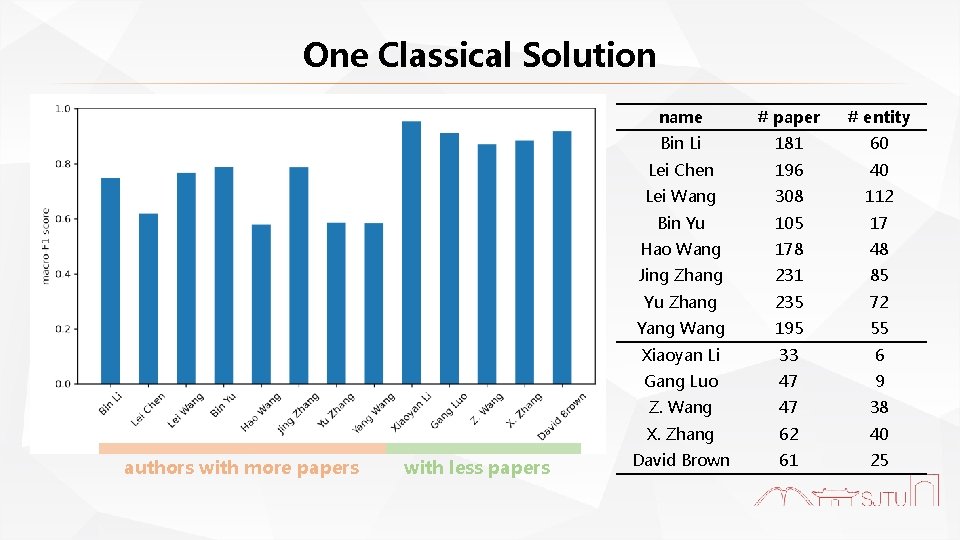
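The exact formula is only in the figure. As a rough sketch of what "maximizing the gap" can look like, the code below uses a pairwise ranking loss, -ln σ(S_ij - S_it), where S is a dot-product score, (i, j) is a positive edge, and (i, t) is a negative edge. The dot-product score, the function names, the learning rate, and the toy embeddings are all assumptions for illustration. Regularization and the combination over several graphs are omitted.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def pairwise_ranking_loss(emb, i, j, t):
    """-log(sigmoid(S_ij - S_it)): small when the positive edge (i, j)
    scores higher than the negative edge (i, t)."""
    gap = dot(emb[i], emb[j]) - dot(emb[i], emb[t])
    # Numerically stable form of log(1 + exp(-gap)).
    if gap >= 0:
        return math.log1p(math.exp(-gap))
    return -gap + math.log1p(math.exp(gap))


def sgd_step(emb, i, j, t, lr=0.1):
    """One gradient-descent step on the loss above (hypothetical helper)."""
    loss = pairwise_ranking_loss(emb, i, j, t)
    coeff = 1.0 - math.exp(-loss)  # equals sigmoid(-gap)
    ei, ej, et = emb[i][:], emb[j][:], emb[t][:]
    emb[i] = [a + lr * coeff * (b - c) for a, b, c in zip(ei, ej, et)]
    emb[j] = [b + lr * coeff * a for a, b in zip(ei, ej)]
    emb[t] = [c - lr * coeff * a for a, c in zip(ei, et)]
    return loss


# Toy embeddings: d1-d2 is a positive edge, d1-d3 a sampled negative edge.
emb = {"d1": [0.1, 0.2], "d2": [0.2, 0.1], "d3": [-0.1, 0.3]}
loss_before = pairwise_ranking_loss(emb, "d1", "d2", "d3")
for _ in range(20):
    sgd_step(emb, "d1", "d2", "d3")
loss_after = pairwise_ranking_loss(emb, "d1", "d2", "d3")
```

Each step pulls the positive pair together and pushes the negative pair apart in score, so the loss on the sampled triple decreases.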

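One Classical Solution

Authors with more papers:

| Name | # papers | # entities |
|---|---|---|
| Bin Li | 181 | 60 |
| Lei Chen | 196 | 40 |
| Lei Wang | 308 | 112 |
| Bin Yu | 105 | 17 |
| Hao Wang | 178 | 48 |
| Jing Zhang | 231 | 85 |
| Yu Zhang | 235 | 72 |
| Yang Wang | 195 | 55 |

Authors with fewer papers:

| Name | # papers | # entities |
|---|---|---|
| Xiaoyan Li | 33 | 6 |
| Gang Luo | 47 | 9 |
| Z. Wang | 47 | 38 |
| X. Zhang | 62 | 40 |
| David Brown | 61 | 25 |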

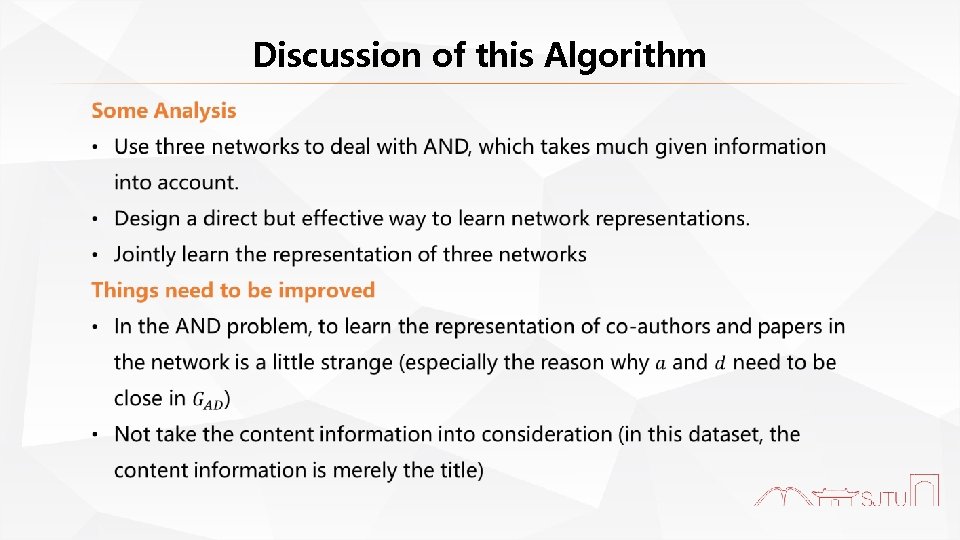

Discussion of this Algorithm

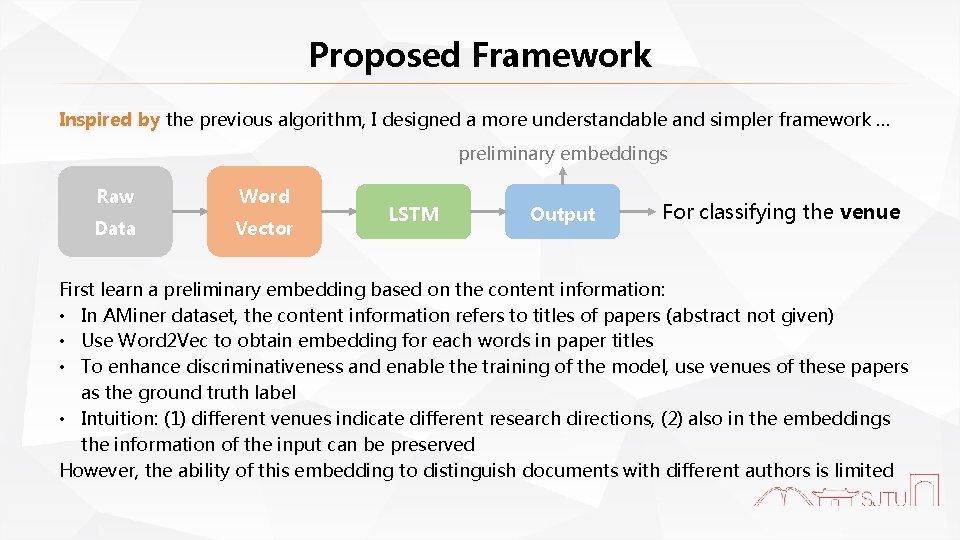

Proposed Framework

Inspired by the previous algorithm, I designed a simpler and more understandable framework …

[Figure labels: Raw Data; Word Vector; LSTM; Output (for classifying the venue); preliminary embeddings]

First, learn a preliminary embedding from the content information:

• In the AMiner dataset, the content information refers to paper titles (abstracts are not given).
• Use Word2Vec to obtain an embedding for each word in the paper titles.
• To make the embeddings more discriminative and to enable training of the model, use the venues of these papers as the ground-truth labels.
• Intuition: (1) different venues indicate different research directions; (2) the embeddings can also preserve information about the input.

However, the ability of this embedding to distinguish documents with different authors is limited.

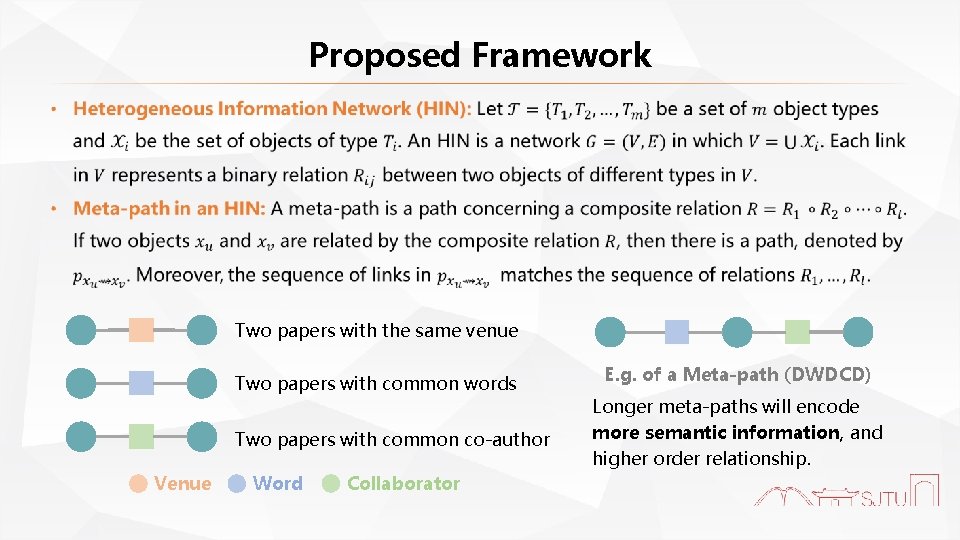
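The preliminary-embedding step can be sketched as a forward pass: word vectors of a title go through an LSTM, and a softmax layer on top predicts the venue. The sketch below is a minimal pure-Python illustration with untrained random weights. Using the final hidden state as the paper embedding is an assumption, and the class name `TinyLSTM`, the dimensions, and the toy inputs are hypothetical. Training with the venue labels is omitted.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


class TinyLSTM:
    """Single-layer LSTM with a softmax venue classifier on top (untrained)."""

    def __init__(self, input_dim, hidden_dim, num_venues, seed=0):
        rng = random.Random(seed)
        self.hidden_dim = hidden_dim
        # One weight matrix per gate, applied to the concatenation [x_t ; h_{t-1}].
        self.W = {g: rand_matrix(hidden_dim, input_dim + hidden_dim, rng) for g in "ifog"}
        self.b = {g: [0.0] * hidden_dim for g in "ifog"}
        self.W_out = rand_matrix(num_venues, hidden_dim, rng)

    def embed(self, word_vectors):
        """Run the title through the LSTM; return the final hidden state."""
        h = [0.0] * self.hidden_dim
        c = [0.0] * self.hidden_dim
        for x in word_vectors:
            z = list(x) + h
            pre = {k: [a + b for a, b in zip(matvec(self.W[k], z), self.b[k])] for k in "ifog"}
            i = [sigmoid(a) for a in pre["i"]]    # input gate
            f = [sigmoid(a) for a in pre["f"]]    # forget gate
            o = [sigmoid(a) for a in pre["o"]]    # output gate
            g = [math.tanh(a) for a in pre["g"]]  # candidate cell state
            c = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
            h = [oj * math.tanh(cj) for oj, cj in zip(o, c)]
        return h

    def venue_probs(self, word_vectors):
        """Softmax over venues, computed from the title embedding."""
        logits = matvec(self.W_out, self.embed(word_vectors))
        m = max(logits)
        exps = [math.exp(l - m) for l in logits]
        total = sum(exps)
        return [e / total for e in exps]


# Toy title of two words, each a 4-dimensional vector standing in for Word2Vec output.
model = TinyLSTM(input_dim=4, hidden_dim=3, num_venues=5)
title = [[0.1, 0.2, 0.3, 0.4], [0.0, -0.1, 0.2, 0.1]]
embedding = model.embed(title)
probs = model.venue_probs(title)
```

In a trained model the cross-entropy between `probs` and the true venue would drive the weight updates, and `embedding` would then serve as the preliminary representation of the paper.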

Proposed Framework

Relation types between papers:

• Venue: two papers with the same venue
• Word: two papers with common words
• Collaborator: two papers with a common co-author

Example of a meta-path: DWDCD

Longer meta-paths encode more semantic information and higher-order relationships.

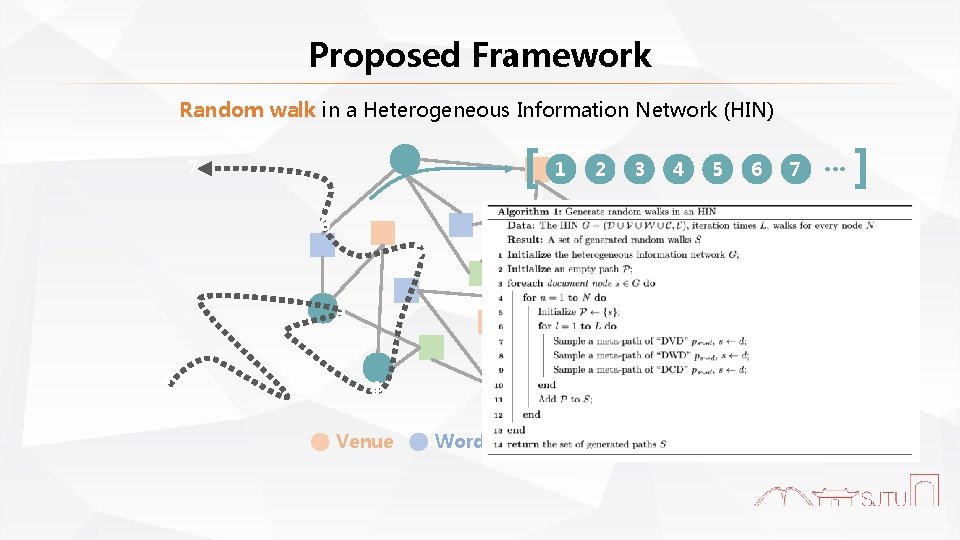

Proposed Framework

Random walk in a Heterogeneous Information Network (HIN)

[Figure: a HIN with numbered nodes connected through Venue, Word, and Collaborator relations, with an example walk sequence over the numbered nodes]

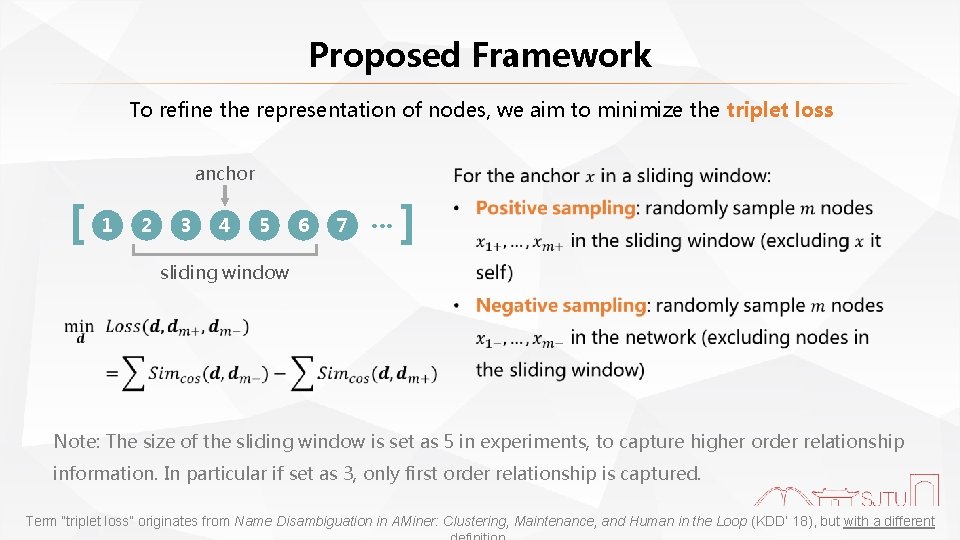
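A random walk over a HIN can be sketched as follows. The sketch assumes the walk is guided by a meta-path of node types such as DWDCD (D for document, W for word, C for collaborator, V for venue). Whether the original implementation constrains walks this way is an assumption. The node names, the helper functions, and the toy graph are hypothetical.

```python
import random
from collections import defaultdict


def build_neighbors(edges):
    """Undirected adjacency sets from an edge list."""
    neighbors = defaultdict(set)
    for u, v in edges:
        neighbors[u].add(v)
        neighbors[v].add(u)
    return neighbors


def node_type(node):
    """Toy convention: the first letter of the node id gives its type."""
    return node[0].upper()


def metapath_walk(neighbors, start, metapath, rng):
    """Follow the node types in `metapath` from `start`; stop at a dead end."""
    if node_type(start) != metapath[0]:
        raise ValueError("start node does not match the first meta-path type")
    walk = [start]
    for wanted in metapath[1:]:
        candidates = sorted(n for n in neighbors[walk[-1]] if node_type(n) == wanted)
        if not candidates:
            break
        walk.append(rng.choice(candidates))
    return walk


# Toy HIN: documents d1..d3 linked through a word, a collaborator and a venue.
EDGES = [
    ("d1", "w:video"), ("d2", "w:video"),
    ("d2", "c:A"), ("d3", "c:A"),
    ("d1", "v:X"), ("d3", "v:X"),
]
neighbors = build_neighbors(EDGES)
walk = metapath_walk(neighbors, "d1", "DWDCD", random.Random(0))
documents_on_walk = [n for n in walk if node_type(n) == "D"]
```

Keeping only the document nodes of each walk yields sequences of papers that are related through shared words, collaborators, or venues.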

Proposed Framework

To refine the node representations, we minimize the triplet loss.

[Figure: a sliding window around an anchor in the walk sequence [ 1 2 3 4 5 6 7 … ]]

Note: The sliding-window size is set to 5 in the experiments to capture higher-order relationship information. In particular, if it is set to 3, only first-order relationships are captured.

The term "triplet loss" originates from Name Disambiguation in AMiner: Clustering, Maintenance, and Human in the Loop (KDD'18), but with a different

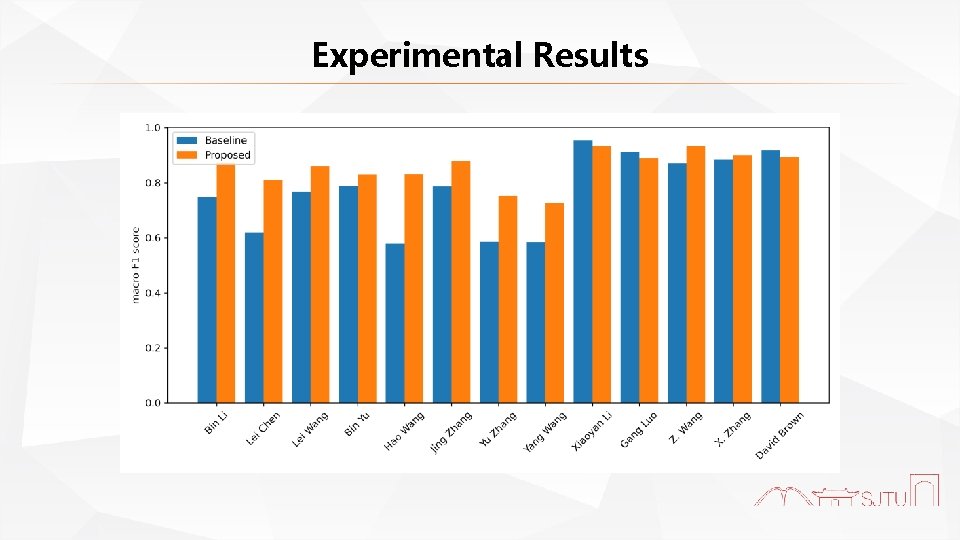
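The sliding-window idea can be sketched as below: every node inside the window around an anchor is treated as a positive, and the triplet loss pushes a positive closer to the anchor than a negative. The squared Euclidean distance, the margin of 1.0, and the way negatives are chosen are assumptions for illustration, and the function names are hypothetical.

```python
def window_pairs(sequence, window_size=5):
    """(anchor, positive) pairs: positives lie inside a window centred on the anchor."""
    half = window_size // 2
    pairs = []
    for i, anchor in enumerate(sequence):
        lo, hi = max(0, i - half), min(len(sequence), i + half + 1)
        for j in range(lo, hi):
            if j != i and sequence[j] != anchor:
                pairs.append((anchor, sequence[j]))
    return pairs


def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """max(0, d(a, p) - d(a, n) + margin) with squared Euclidean distance."""
    return max(0.0, sq_dist(anchor, positive) - sq_dist(anchor, negative) + margin)


walk = [1, 2, 3, 4, 5, 6, 7]
# Window of 3: only the immediate neighbours of the anchor are positives.
first_order = sorted(p for a, p in window_pairs(walk, 3) if a == 4)
# Window of 5: nodes two steps away are positives as well.
higher_order = sorted(p for a, p in window_pairs(walk, 5) if a == 4)

satisfied = triplet_loss([0.0, 0.0], [0.0, 1.0], [3.0, 4.0])  # positive already closer
violated = triplet_loss([0.0, 0.0], [3.0, 4.0], [0.0, 1.0])   # negative closer than positive
```

With a window of 3 the anchor 4 only sees nodes 3 and 5, while a window of 5 also includes nodes 2 and 6, which is how the larger window brings in higher-order relationships.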

Experimental Results

Summary of this Project

In this project, I have done the following:

• Re-implemented the baseline algorithm
• Inspired by this algorithm, proposed a simple yet effective way to tackle the AND problem

The proposed framework has the following advantages:

• It is straightforward, easier to understand, and simpler to implement
• It fuses more information, including the title, the venue of the paper, etc.

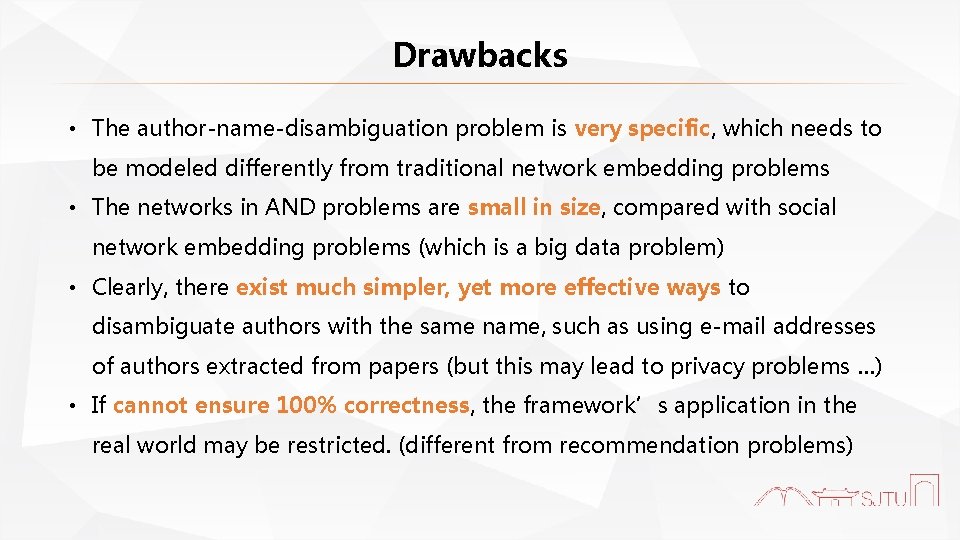

Drawbacks

• The author-name-disambiguation problem is very specific and needs to be modeled differently from traditional network embedding problems
• The networks in AND problems are small compared with those in social network embedding problems (which are big-data problems)
• Clearly, there exist much simpler yet more effective ways to disambiguate authors with the same name, such as using the e-mail addresses of authors extracted from papers (but this may lead to privacy problems …)
• If 100% correctness cannot be ensured, the framework's application in the real world may be restricted (unlike recommendation problems)

Thanks for Listening!

2020.05.27

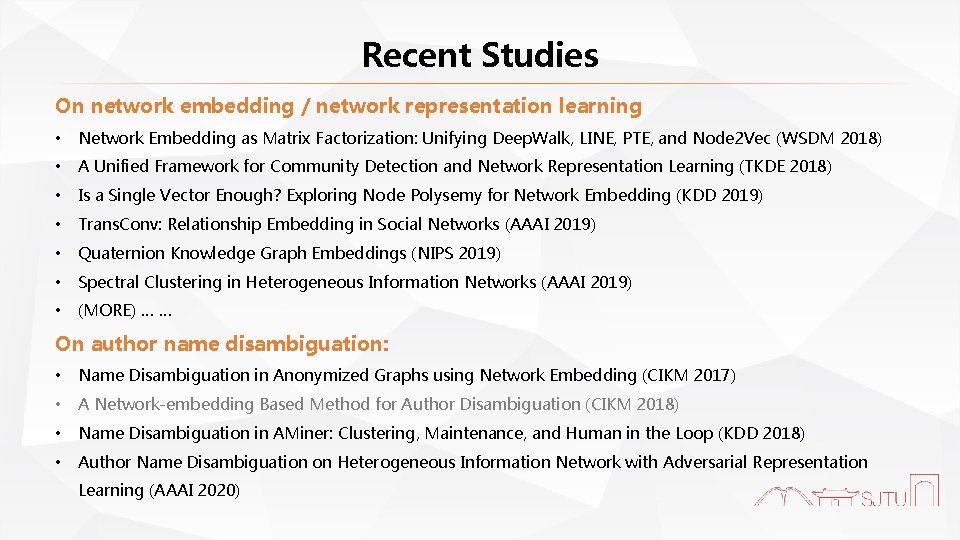

Recent Studies

On network embedding / network representation learning:

• Network Embedding as Matrix Factorization: Unifying DeepWalk, LINE, PTE, and Node2Vec (WSDM 2018)
• A Unified Framework for Community Detection and Network Representation Learning (TKDE 2018)
• Is a Single Vector Enough? Exploring Node Polysemy for Network Embedding (KDD 2019)
• TransConv: Relationship Embedding in Social Networks (AAAI 2019)
• Quaternion Knowledge Graph Embeddings (NIPS 2019)
• Spectral Clustering in Heterogeneous Information Networks (AAAI 2019)
• (MORE) …

On author name disambiguation:

• Name Disambiguation in Anonymized Graphs using Network Embedding (CIKM 2017)
• A Network-embedding Based Method for Author Disambiguation (CIKM 2018)
• Name Disambiguation in AMiner: Clustering, Maintenance, and Human in the Loop (KDD 2018)
• Author Name Disambiguation on Heterogeneous Information Network with Adversarial Representation Learning (AAAI 2020)
